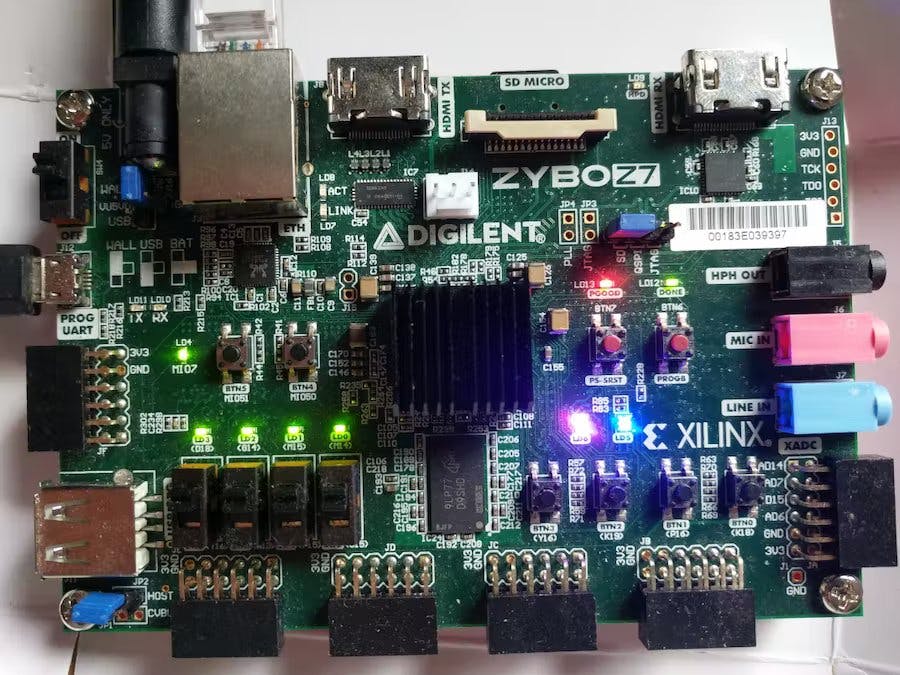

In the first part, I enabled control of the on-board buttons, switches and LEDs via the GPIO controller. In this part, I am going to implement a custom IP core to control the colour and brightness of the two RGB LEDs on the Zybo Z7-20 (the Zybo Z7-10 has only one).

At the end of this story, you'll find quick-setup instructions to reproduce the full demo from the source code repositories.

Concept

The RGB LEDs are actually composed of three single LEDs: a red, a green and a blue one. Each colour can be switched on and off via a distinct pin. Thus, by changing the brightness of each LED, we can mix basically any colour. But how do we adjust the brightness if we only have an on/off switch? The answer is PWM (pulse-width modulation). When an LED is switched on and off faster than approximately 50 Hz, the human eye is not able to detect any flickering. Hence, by changing the ratio between the on time and the off time, we can trick the human eye into perceiving different brightness levels.

Although a modern CPU is certainly fast enough to perform this job in isolation, it can become tricky to ensure correct timing under high CPU load. Even if we managed to meet the tight timing requirements for the PWM, other applications would need to be preempted frequently. Fortunately, we also have the programmable logic (PL), which we can equip with a custom IP core that performs the PWM with cycle-accurate timing, leaving all CPU resources for more interesting computations.

Before starting with the actual implementation, let me illustrate what I'd like to achieve:

The figure shows the basic idea of my IP core for controlling a single RGB LED. The IP core holds a cycle counter that increments from zero up to a certain maximum (maxcycles) before being reset to zero. At the beginning of each period, the LEDs for all individual colours are switched on. Depending on the configured RGB value, each colour is turned off when the cycle counter reaches a certain threshold. Since RGB values are typically encoded with one byte per colour (i.e. 0-255), I directly use the colour values as turn-off thresholds. Furthermore, by increasing or decreasing the maxcycles value, I am able to dim the overall brightness: a longer period with the same on time results in a lower duty cycle. The maximum brightness is therefore achieved with maxcycles=255.

Implementing a custom IP core

Since I last used the Xilinx tools for hardware design over a decade ago, I found this video and this tutorial helpful as a starting point. A more detailed guide is provided by Xilinx in UG1118.

With this background information, I created my custom IP core via Tools → Create and Package New IP. In the dialogue, I selected Create a new AXI4 peripheral because I want to implement an MMIO interface. In the final dialogue, I chose Edit IP as the next step.

Vivado’s IP packaging tool already takes care of generating all the boilerplate code. By default, it implements an MMIO interface with four 32-bit registers. That is exactly what I need to control the colour and brightness of two RGB LEDs: one colour register and one brightness register per LED.

In order to implement the RGB module, I added a Verilog source file and started implementing a module for driving the pins of a single RGB LED:

module rgbled(
    output reg  [2:0] rgb,
    input  wire [6:0] brightness,
    input  wire [7:0] red,
    input  wire [7:0] green,
    input  wire [7:0] blue,
    input  wire       clk
);

// [...] see below

endmodule

The rgbled module has a three-bit output signal rgb (one bit for each colour). It also has four input signals for the brightness, red, green and blue values, as well as a clock input. If you are new to Verilog and wondering about the difference between reg and wire, I recommend this tutorial. In short, a reg is assigned in procedural (always) blocks and holds its value between assignments, whereas a wire is merely a connection.

As a first part of the module’s implementation, I added a cycle counter that resets itself to 0 when it hits a certain maximum:

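module rgbled(

// [...] see above

reg  [14:0] counter;
wire [14:0] max_cycles;

always @(posedge clk) begin
    if (counter < max_cycles) begin
        counter <= counter + 1;
    end
    else begin
        counter <= 0;
    end
end

// [...] see below

endmodule

I decided to accept brightness values between 0 and 100. The duty cycle of each colour is its colour value divided by the period length (max_cycles + 1). I therefore scale max_cycles inversely with the brightness value, from 255 (brightness 100) to 25500 (brightness 1), so that the duty cycle scales linearly with the brightness value. This range is also why counter and max_cycles are 15 bits wide (25500 < 2^15 = 32768). Brightness values above 100 are clamped to the maximum, and brightness 0 is handled as a special case: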

module rgbled(

// [...] see above

assign max_cycles =
      brightness > 100 ? 255
    : (brightness == 0 ? 0
    : 255 * 100 / brightness);

// [...] see below

endmodule

This made it pretty straightforward to implement the on/off switching logic:

module rgbled(

// [...] see above

always @(posedge clk) begin
    if (max_cycles > 0) begin
        rgb[0] <= red   > counter ? 1 : 0;
        rgb[1] <= blue  > counter ? 1 : 0;
        rgb[2] <= green > counter ? 1 : 0;
    end
    else begin
        rgb <= 3'b000;
    end
end

endmodule

This completed the rgbled module, which still needed to be instantiated in the custom IP core. When creating the IP core, Vivado generated two Verilog files. I identified the file with the suffix S00_AXI.v as the MMIO module and the other file as the top-level module. In the MMIO module, I noticed a comment "Users to add ports here" at the top of the file, below which I added these two lines for the output signals:

// Users to add ports here
output wire [2:0] led0,
output wire [2:0] led1,
// User ports ends

// Do not modify the ports beyond this line

At the bottom of the file, I found the comment "Add user logic here" and inserted two instantiations of my rgbled module there. In the auto-generated implementation, I spotted that the MMIO registers are named slv_reg0, slv_reg1, slv_reg2 and slv_reg3. Thus, I merely needed to decide which bits of which register to use for the RGB and brightness values. I ended up with these instantiations:

// Add user logic here

rgbled led0_control (
    .clk        (S_AXI_ACLK),
    .red        (slv_reg0[23:16]),
    .blue       (slv_reg0[15:8]),
    .green      (slv_reg0[7:0]),
    .brightness (slv_reg1[6:0]),
    .rgb        (led0)
);

rgbled led1_control (
    .clk        (S_AXI_ACLK),
    .red        (slv_reg2[23:16]),
    .blue       (slv_reg2[15:8]),
    .green      (slv_reg2[7:0]),
    .brightness (slv_reg3[6:0]),
    .rgb        (led1)
);

// User logic ends

Since I modified the MMIO module’s ports, I also added two similar output ports to the top-level file and passed them to the MMIO module’s instantiation.

After these modifications, I noticed a couple of changes in the Package IP view. First, the File Groups section no longer had a green tick mark. Following Vivado’s suggestion in this section to merge the changes solved this issue. A similar merge wizard was provided in the Ports and Interfaces section, where Vivado had automatically detected the led0 and led1 ports I added previously. No manual adjustments are required in this section. Still, I learned that I am able to define interfaces of certain types and map ports to them. This groups related signals together and assists Vivado’s auto-connection wizardry.

I skipped the interface definition, entered the Review and Package section and packaged my IP core. Back in my block design, I added the newly packaged IP core, ran the auto-connection wizardry and made the led0 and led1 ports external (as previously done with the GPIO_0 interface, see part 1). Vivado assigns the address of the MMIO device automatically, but you can change it in the Address Editor after opening the block design. Before re-generating the bitstream, I uncommented the lines for the RGB LEDs in the constraints file and inserted the corresponding signal names (led0_0[0] to led0_0[2] and led1_0[0] to led1_0[2]).

Note that if you want to edit an IP core later, you can right-click on the core in your block design and select Edit in IP Packager.

Eventually, I generated the bitstream for this new design and exported the result with File → Export → Export Bitstream File.

Using the custom IP core

With the new bitstream at hand, I implemented a zybo_gpio_demo_rgb component for testing the MMIO interface. This component uses buttons 0 to 3 to cycle through some colours and brightness values of the two RGB LEDs. You can find the code in the genode-zynq repository. Most of it is self-explanatory if you have followed part 1. Yet, let me briefly show how the MMIO interface is accessed:

#include <util/register.h>

/* [...] */

class Demo::Rgb_leds : public Platform::Device::Mmio
{
    private:

        struct Led : Genode::Mmio
        {
            struct Color      : Register<0x0, 32> { };
            struct Brightness : Register<0x4, 32> { };

            void color(uint32_t c)      { write<Color>(c); }
            void brightness(uint32_t b) { write<Brightness>(b); }
        };

        Led led0 { (addr_t)local_addr<void>() };
        Led led1 { (addr_t)local_addr<void>() + 0x8 };

    public:

        Rgb_leds(Platform::Device &device)
        : Platform::Device::Mmio(device)
        { }

        void led0_color(uint32_t c)      { led0.color(c); }
        void led0_brightness(uint32_t b) { led0.brightness(b); }
        void led1_color(uint32_t c)      { led1.color(c); }
        void led1_brightness(uint32_t b) { led1.brightness(b); }
};

In Genode, MMIO interfaces are easily accessed via the register framework. We only need to inherit from Platform::Device::Mmio (or Genode::Mmio) and define a few registers. Platform::Device::Mmio gets the mapped device address from the platform driver, whereas Genode::Mmio expects the address as a constructor argument. Here, I used the latter to define the Led struct, which comprises the Color and Brightness registers of a single LED. The template arguments of the Register class specify its offset (in bytes) and access width (in bits). The registers can be read and written with the template methods read() and write(), as seen in Led::color() and Led::brightness(). I then instantiate the Led struct twice, using the local_addr() method of Platform::Device::Mmio to get the mapped device address. The second instance starts at offset 0x8, so its Color and Brightness registers correspond to slv_reg2 and slv_reg3.

In order to add the newly implemented component to the GPIO demo, I added the following start node to the run/zybo_gpio_demo.run script:

<start name="zybo_gpio_demo_rgb">
    <resource name="RAM" quantum="2M"/>
    <route>
        <service name="Pin_control"> <child name="zynq_pin_drv"/> </service>
        <service name="Pin_state">   <child name="zynq_pin_drv"/> </service>
        <service name="IRQ">         <child name="zynq_pin_drv"/> </service>
        <service name="Platform">    <child name="platform_drv"/> </service>
        <service name="ROM"> <parent/> </service>
        <service name="CPU"> <parent/> </service>
        <service name="PD">  <parent/> </service>
        <service name="LOG"> <parent/> </service>
    </route>
</start>

Furthermore, I modified the pin-driver configuration as follows:

<config>
    <!-- zybo_gpio_demo_mio pins -->
    <in name="Btn4" bank="1" index="18" irq="rising"/>
    <in name="Btn5" bank="1" index="19" irq="rising"/>
    <out name="Led4" bank="0" index="7" default="on"/>
    <policy label="zybo_gpio_demo_mio -> Btn4" pin="Btn4"/>
    <policy label="zybo_gpio_demo_mio -> Btn5" pin="Btn5"/>
    <policy label="zybo_gpio_demo_mio -> Led4" pin="Led4"/>

    <!-- zybo_gpio_demo_sw pins -->
    <in name="Sw0" bank="2" index="0" irq="edges"/>
    <in name="Sw1" bank="2" index="1" irq="edges"/>
    <in name="Sw2" bank="2" index="2" irq="edges"/>
    <in name="Sw3" bank="2" index="3" irq="edges"/>
    <out name="Led0" bank="2" index="8" default="off"/>
    <out name="Led1" bank="2" index="9" default="off"/>
    <out name="Led2" bank="2" index="10" default="off"/>
    <out name="Led3" bank="2" index="11" default="off"/>
    <policy label="zybo_gpio_demo_sw -> Sw0" pin="Sw0"/>
    <policy label="zybo_gpio_demo_sw -> Sw1" pin="Sw1"/>
    <policy label="zybo_gpio_demo_sw -> Sw2" pin="Sw2"/>
    <policy label="zybo_gpio_demo_sw -> Sw3" pin="Sw3"/>
    <policy label="zybo_gpio_demo_sw -> Led0" pin="Led0"/>
    <policy label="zybo_gpio_demo_sw -> Led1" pin="Led1"/>
    <policy label="zybo_gpio_demo_sw -> Led2" pin="Led2"/>
    <policy label="zybo_gpio_demo_sw -> Led3" pin="Led3"/>

    <!-- zybo_gpio_demo_rgb pins -->
    <in name="Btn0" bank="2" index="4" irq="edges"/>
    <in name="Btn1" bank="2" index="5" irq="edges"/>
    <in name="Btn2" bank="2" index="6" irq="edges"/>
    <in name="Btn3" bank="2" index="7" irq="edges"/>
    <policy label_suffix="zybo_gpio_demo_rgb -> Btn0" pin="Btn0"/>
    <policy label_suffix="zybo_gpio_demo_rgb -> Btn1" pin="Btn1"/>
    <policy label_suffix="zybo_gpio_demo_rgb -> Btn2" pin="Btn2"/>
    <policy label_suffix="zybo_gpio_demo_rgb -> Btn3" pin="Btn3"/>
</config>

For a brief test run, I let the platform driver know about the new MMIO device by (temporarily) adding the following lines to the board/zynq_zybo_z7/devices file:

<device name="rgbleds" type="my_rgbleds">
    <io_mem address="0x43c00000" size="0x1000"/>
</device>

The io_mem address must match the address that Vivado assigned to the IP core (see the Address Editor). To enable access to this MMIO device, I also added the corresponding policy to the platform-driver configuration:

<policy label="zybo_gpio_demo_rgb -> ">
    <device name="rgbleds"/>
</policy>

After copying the bitstream file to my SD card, I was able to change the colours and brightness of both RGB LEDs by using the four push-buttons below the LEDs. However, zybo_gpio_demo_rgb uses an MMIO device that is only available if my custom bitstream is loaded. I would therefore prefer to load the bitstream at run time with Genode, so that the corresponding software component can be started automatically once the bitstream has been loaded.

Switching bitstreams at run time

In this section, let’s look into how we can load bitstreams at run time in Genode. The mere reconfiguration of the FPGA is pretty straightforward, but it also affects the availability of custom platform devices. To accommodate this use case, I created the depot archive pkg/drivers_fpga-zynq.

As illustrated by the figure above, the pkg/drivers_fpga-zynq subsystem requires two ROM sessions (devices_manager.config and policy) as well as a File System session. In return, it acts as a platform driver by providing a Platform service. The policy ROM contains the platform-driver configuration for this GPIO demo:

<report devices="yes"/>

<policy label_suffix="zynq_pin_drv -> ">
    <device name="gpio0"/>
</policy>

<policy label_suffix="zybo_gpio_demo_rgb -> ">
    <device name="rgbleds"/>
</policy>

The <report> node instructs the internal platform driver to generate a devices report whenever its state changes. The Zynq Driver Manager uses this report to determine when a certain device becomes available. In consequence, the Zynq Driver Manager may generate a new init.config to start the corresponding driver component. The driver components and their device dependencies are specified in the manager’s configuration. To start zybo_gpio_demo_rgb once the my_rgbleds device becomes available, I am using the following configuration:

<config>
    <driver name="zybo_gpio_demo_rgb" device="my_rgbleds">
        <binary name="zybo_gpio_demo_rgb"/>
        <resource name="RAM" quantum="2M"/>
    </driver>
</config>

The devices_manager.config specifies the bitstreams and the devices they implement. Instead of adding the rgbleds device to the static devices ROM, as I did in the previous section, we can thereby let the pkg/drivers_fpga-zynq subsystem know which devices become available with which bitstream. For the two bitstreams I generated previously, I’m using the following devices_manager.config:

<config>
    <bitstream name="zybo_z720_rgb-bitstream.bit">
        <devices>
            <device name="rgbleds" type="my_rgbleds">
                <io_mem address="0x43c00000" size="0x1000"/>
            </device>
        </devices>
    </bitstream>
    <bitstream name="zybo_z720_gpio-bitstream.bit"/>
</config>

Note that I packaged both ROMs (devices_manager.config and policy) into a raw/zybo_gpio_demo archive.

The pkg/drivers_fpga-zynq subsystem eventually reads the user-provided bitstreams from its File System session. Moreover, the internal fpga_drv component, which is responsible for bitstream loading, gets its configuration from the /config file. This file thus specifies what bitstream shall be loaded into the FPGA, e.g.:

<config>
    <bitstream name="zybo_z720_rgb-bitstream.bit"/>
</config>

By simply changing the content of this file, we are able to switch between bitstreams. At this point, it becomes clear why the zybo_gpio_demo_mio component generates a state report. By adding a ROM filter component to the run/zybo_gpio_demo.run script, I can easily realize bitstream switching:

<start name="rom_filter">
    <resource name="RAM" quantum="1M"/>
    <provides>
        <service name="ROM"/>
    </provides>
    <route>
        <service name="ROM" label="state"> <child name="report_rom"/> </service>
        <service name="ROM"> <parent/> </service>
        <service name="CPU"> <parent/> </service>
        <service name="PD">  <parent/> </service>
        <service name="LOG"> <parent/> </service>
    </route>
    <config>
        <input name="state">
            <attribute name="value"/>
        </input>
        <output node="config">
            <node type="bitstream">
                <if>
                    <has_value input="state" value="yes"/>
                    <then>
                        <attribute name="name"
                                   value="zybo_z720_rgb-bitstream.bit"/>
                    </then>
                    <else>
                        <attribute name="name"
                                   value="zybo_z720_gpio-bitstream.bit"/>
                    </else>
                </if>
            </node>
        </output>
    </config>
</start>

Of course, I also need to instantiate all the components shown in the above figure. Let’s start with replacing the platform_drv with the pkg/drivers_fpga-zynq subsystem:

<start name="platform_drv" caps="1000" managing_system="yes">
    <binary name="init"/>
    <resource name="RAM" quantum="24M"/>
    <provides> <service name="Platform"/> </provides>
    <route>
        <service name="ROM" label="config">
            <parent label="drivers.config"/>
        </service>
        <any-service> <parent/> <any-child/> </any-service>
    </route>
</start>

Next, I added the Zynq Driver Manager, a subsystem for the dynamic drivers, and a VFS server:

<start name="zynq_driver_manager">
    <resource name="RAM" quantum="2M"/>
    <route>
        <service name="ROM" label="devices">
            <child name="report_rom"/>
        </service>
        <any-service> <parent/> <any-child/> </any-service>
    </route>
    <config>
        <driver name="zybo_gpio_demo_rgb" device="my_rgbleds">
            <binary name="zybo_gpio_demo_rgb"/>
            <resource name="RAM" quantum="2M"/>
        </driver>
    </config>
</start>

<start name="dynamic_drivers" caps="500">
    <binary name="init"/>
    <resource name="RAM" quantum="5M"/>
    <route>
        <service name="ROM" label="config">
            <child name="report_rom"/>
        </service>
        <service name="IRQ"> <child name="zynq_pin_drv"/> </service>
        <any-service> <parent/> <any-child/> </any-service>
    </route>
</start>

<start name="vfs">
    <resource name="RAM" quantum="8M"/>
    <provides><service name="File_system"/></provides>
    <route>
        <service name="ROM" label="fpga.config">
            <child name="rom_filter"/>
        </service>
        <any-service> <parent/> </any-service>
    </route>
    <config>
        <vfs>
            <rom name="config" label="fpga.config"/>
            <rom name="zybo_z720_gpio-bitstream.bit"/>
            <rom name="zybo_z720_rgb-bitstream.bit"/>
        </vfs>
        <default-policy root="/" writeable="no"/>
    </config>
</start>

The VFS component delivers the bitstreams and the fpga.config ROM generated by the ROM filter component via a File System session.

Last, I updated the import_from_depot line as follows:

import_from_depot [depot_user]/src/[base_src] \
                  [depot_user]/src/init \
                  [depot_user]/pkg/drivers_fpga-zynq \
                  [depot_user]/src/driver_manager-zynq \
                  [depot_user]/src/report_rom \
                  [depot_user]/src/rom_filter \
                  [depot_user]/src/vfs \
                  [depot_user]/raw/zybo_gpio_demo \
                  [depot_user]/src/zynq_pin_drv \
                  [depot_user]/raw/[board]-devices

Giving the modified run script a spin, I got this output:

build/arm_v7a #> make run/zybo_gpio_demo BOARD=zynq_zybo_z7 KERNEL=hw
...
[init -> report_rom] Warning: no policy defined for
  label 'zynq_driver_manager -> devices'
[init -> report_rom] Warning: no valid policy for ROM request
  'zynq_driver_manager -> devices'
[init -> report_rom] Warning: no policy defined for
  label 'dynamic_drivers -> config'
[init -> report_rom] Warning: no valid policy for ROM request
  'dynamic_drivers -> config'
[init -> report_rom] Warning: no policy defined for
  label 'rom_filter -> state'
[init -> report_rom] Warning: no valid policy for ROM request
  'rom_filter -> state'
...

Of course, I had forgotten to add the policy definitions to the report_rom configuration, so I added a policy node for each of the logged labels:

<config verbose="no">
    <policy label="rom_filter -> state"
            report="zybo_gpio_demo_mio -> state"/>
    <policy report="platform_drv -> platform_drv -> devices"
            label="zynq_driver_manager -> devices"/>
    <policy report="zynq_driver_manager -> init.config"
            label="dynamic_drivers -> config"/>
</config>

Another spin of the run script produced the following output:

[init -> vfs] Error: ROM-session creation failed (ram_quota=6144,
  cap_quota=3, label="zybo_z720_gpio-bitstream.bit")
[init -> vfs] Error: Could not open ROM session for
  "zybo_z720_gpio-bitstream.bit"
[init -> vfs] Error: failed to create <rom> VFS node
[init -> vfs] Error: name="zybo_z720_gpio-bitstream.bit"
[init -> vfs] Error: ROM-session creation failed (ram_quota=6144,
  cap_quota=3, label="zybo_z720_rgb-bitstream.bit")
[init -> vfs] Error: Could not open ROM session for
  "zybo_z720_rgb-bitstream.bit"
[init -> vfs] Error: failed to create <rom> VFS node
[init -> vfs] Error: name="zybo_z720_rgb-bitstream.bit"

These errors occurred because I had not provided the bitstream files yet. For a brief test, I could simply copy the files into build/arm_v7a/bin and add them to the build_boot_image line. However, I had another solution in mind that makes use of Goa, a command-line-based workflow tool for the development of applications for the Genode OS Framework.

In order to provide bitstreams in the form of depot archives, I added Vivado support to Goa. Fortunately, Vivado provides a command to export a project as a Tcl script, which allows re-creating the project. In this section, I will focus on how to use Goa’s Vivado support.

First, I needed to export the Vivado project I want to package. Thus, in Vivado’s Tcl console, I entered the following command:

write_project_tcl -paths_relative_to /home/johannes/vivado_workspace /tmp/vivado.tcl

The -paths_relative_to argument is essential because it converts the paths of the source files into relative paths. In my goa-projects repository, I created the subdirectory zynq/zybo_z720_rgb-bitstream/ for a new Goa project and copied vivado.tcl into the src/ subdirectory of the new project. By inspecting the Tcl file, we can get a list of required source files. In my Tcl file, the following lines caught my attention:

proc checkRequiredFiles { origin_dir} {
  set status true
  set files [list \
 "[file normalize "$origin_dir/xilinx/project_2/project_2.srcs/constrs_1/imports/Downloads/Zybo-Z7-Master.xdc"]"\
  ]
  foreach ifile $files {
    if { ![file isfile $ifile] } {
      puts " Could not find local file $ifile "
      set status false
    }
  }

  set paths [list \
 "[file normalize "$origin_dir/../../[file normalize "$origin_dir/xilinx/ip_repo/rgbled_1.0"]"]"\
  ]
  foreach ipath $paths {
    if { ![file isdirectory $ipath] } {
      puts " Could not access $ipath "
      set status false
    }
  }

  return $status
}

The checkRequiredFiles procedure provides a first hint as to which source files I have to copy into my Goa project. In this case, it lists the constraints file as well as the path of my custom IP core.

Note that Goa will mirror all files from the src/ directory to the archive’s build directory. Furthermore, it will use the build directory as $origin_dir when re-creating the Vivado project. The required files must therefore be copied into the src/ directory with their corresponding relative paths.

By adding an artifacts file to my Goa project, I further tell Goa which build artifact(s) to include in the bin/<arch>/zybo_z720_rgb-bitstream archive. Since Goa writes the bitstream to a file named after the pattern <project_name>.bit, the artifacts file must have the following content:

zybo_z720_rgb-bitstream.bit

Moreover, as a bin archive is always tied to a particular CPU architecture, I set the target architecture to arm_v7a by adding a .goarc file with the following content:

set arch arm_v7a
set jobs 1

Note that I also restricted the number of jobs to 1 to reduce Vivado’s resource consumption. With these prerequisites in place, goa build produced the following error:

goa-projects/zynq/zybo_z720_rgb-bitstream$ goa build
Error: tool-chain prefix is not defined

This message indicates that Goa does not have built-in support for arm_v7a yet. I therefore defined the tool-chain prefix manually by adding the following line to the .goarc file:

set cross_dev_prefix "/usr/local/genode/tool/current/bin/genode-arm-"

Now, goa build complained about a missing vivado binary:

goa-projects/zynq/zybo_z720_rgb-bitstream$ goa build
...
Error: build-directory creation via vivado failed:
couldn't execute "vivado": no such file or directory

Of course, I had forgotten to source the Vivado environment. After fixing this as follows, Goa was able to build the bitstream within a couple of minutes:

goa-projects/zynq/zybo_z720_rgb-bitstream$ source /tools/Xilinx/Vivado/2021.1/settings64.sh
goa-projects/zynq/zybo_z720_rgb-bitstream$ goa build
...
[zybo_z720_rgb-bitstream:vivado] 10 Infos, 0 Warnings, 0 Critical Warnings and 0 Errors encountered.
[zybo_z720_rgb-bitstream:vivado] write_bitstream completed successfully
[zybo_z720_rgb-bitstream:vivado] write_bitstream: Time (s): cpu = 00:00:11 ; elapsed = 00:00:13 . Memory (MB): peak = 3202.781 ; gain = 451.625 ; free physical = 1133 ; free virtual = 2434
[zybo_z720_rgb-bitstream:vivado] INFO: [Common 17-206] Exiting Vivado at Thu Oct 6 15:35:31 2022...

With the successful build, I was ready to try goa export --depot-user jschlatow. The output reminded me to add a LICENSE and a version file. After adding these files, I was able to export and publish the depot archive.

The very last missing piece of the puzzle was then to add the following arguments to the import_from_depot procedure in run/zybo_gpio_demo.run.

import_from_depot ...
                  jschlatow/src/zybo_z720_rgb-bitstream/2022-09-29 \
                  jschlatow/src/zybo_z720_gpio-bitstream/2022-09-27 \
                  ...

Now, when running make run/zybo_gpio_demo, I can use buttons 4 and 5 to switch between the two bitstreams.

For reproducing the demo, you can find the complete run script and source files in the genode-zynq repository. I'm assuming you have already cloned the main genode repository and the genode-zynq repository (see Genode 101: Getting started with the Zybo Z7). This also implies that you have created a build directory for arm_v7a.

As a prerequisite, you need to download the bitstream archives. You can do this with the following two commands, which must be run from within the genode worktree:

genode$ ./tool/depot/download jschlatow/bin/arm_v7a/zybo_z720_rgb-bitstream/2022-09-29
genode$ ./tool/depot/download jschlatow/bin/arm_v7a/zybo_z720_gpio-bitstream/2022-09-27

Now, you are able to execute the run script as follows:

genode$ make -C build/arm_v7a run/zybo_gpio_demo BOARD=zynq_zybo_z7

If you have followed the instructions for TFTP boot, you only need to press the reset button on the Zybo board and wait for the system to boot the newly built image. Alternatively, you can replace the uImage file on your previously prepared micro SD card with build/arm_v7a/var/run/zybo_gpio_demo/uImage. When the system has booted, you are able to switch bitstreams with buttons 4 and 5. Both bitstreams allow you to use the four switches to control the LEDs next to them. However, the RGB LEDs can only be controlled when the zybo_z720_rgb-bitstream is loaded. Buttons 0 to 3 cycle through different colours and brightness settings.
