One of the most disturbing problems in fires is the human death toll, caused by people being trapped in debris, losing consciousness in the toxic environment, or receiving help too late. One of the most significant challenges faced by search and rescue teams during a fire is therefore the actual search for survivors and victims. As a direct result, the FIRST OBJECTIVE of this project is to develop a real-time drone system capable of detecting humans in disaster conditions such as fires.

To design and implement an autonomous drone system, a local representation of the external world, obtained from the drone's integrated network of sensors, is required. So, the SECOND OBJECTIVE of this project is to develop and test an ultrasound obstacle detection unit.

The HoverGames Drone Build

The primary build of the drone follows the kit instructions, which can be found here: https://nxp.gitbook.io/hovergames/userguide/getting-started.

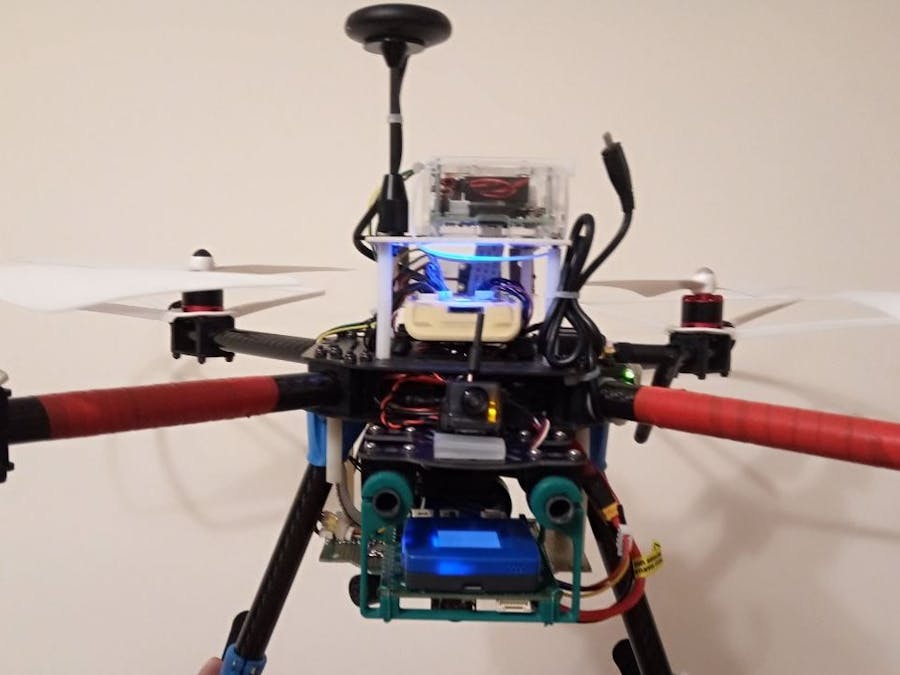

The final drone structure is based on the main configuration of the HoverGames drone, to which many other components were added: those that physically implement the goals mentioned above, along with several support components. A short description of the drone is presented in the following video:

Human detection system

All human bodies have the same basic structure, regardless of gender, race, or ethnicity. Even though we are structurally the same (all of us have a head, two arms, a torso, and two legs), physical appearance varies widely with body shape, skin color, skeletal shape, health, age, etc. This shared structure can be used to extract features that describe the human body and differentiate it from other objects in an image. Based on these features, a machine learning model can be trained to learn the variability of the human body in order to detect humans in video streams.

A basic program for human detection (github.com/dmdobrea/HoverGames/Human_Detection/01_OpenCV-PoC => humanDetection.cpp) can use the HOG descriptor provided by OpenCV together with a linear SVM classifier to perform human detection in video streams. Instantiating a HOGDescriptor object initializes the Histogram of Oriented Gradients (HOG) descriptor. Then, a call to setSVMDetector sets the Support Vector Machine to a pre-trained human detector, loaded via getDefaultPeopleDetector() (trained on the INRIA dataset) or via getDaimlerPeopleDetector() (trained on the Daimler dataset). At this point, the OpenCV human detector is fully loaded, and it just needs to be applied to some images.

In an infinite loop (implemented with a for statement), the program acquires a sequence of images from the camera connected to the Raspberry Pi through an OpenCV VideoCapture interface (line 44).

The detectMultiScale method (line 57 or 59) constructs an image pyramid with scale=1.05 and uses a sliding-window step of (4, 4) pixels in the x and y directions. For each window, the HOG descriptor is computed and sent to the classifier. The detectMultiScale function returns a vector of rectangles (in the found vector), where each rectangle encloses a detected human. The scale parameter of detectMultiScale controls the trade-off between detection performance and speed. A larger scale value evaluates fewer layers in the image pyramid, which makes the algorithm faster. However, a scale value that is too large (i.e., too few layers in the image pyramid) can lead to poor detection performance.
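The effect of the scale parameter on the number of pyramid layers can be illustrated with a small stand-alone sketch. This is not OpenCV's own code (its loop also applies rounding and an upper bound on the number of levels); the function name pyramidLevels and the 640x480 frame size used in the note below are assumptions made only for illustration.

```cpp
#include <cassert>

// Count how many pyramid layers a sliding-window detector would visit.
// Each layer shrinks the image by `scale`; counting stops once the
// detection window no longer fits. Hypothetical helper, not an OpenCV call.
int pyramidLevels(int imgW, int imgH, int winW, int winH, double scale)
{
    assert(scale > 1.0);  // a scale of 1.0 or less would never shrink the image
    int levels = 0;
    for (double s = 1.0; imgW / s >= winW && imgH / s >= winH; s *= scale) {
        ++levels;
    }
    return levels;
}
```

For an assumed 640x480 frame and the 64x128 window of the default people detector, pyramidLevels(640, 480, 64, 128, 1.05) gives 28 layers, while a scale of 1.5 gives only 4, which is why a larger scale runs faster but can miss people at intermediate sizes.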

The HOG detector returns rectangles slightly larger than the real objects, so the rectangles are shrunk slightly to get a tighter fit (lines 75 to 78). In the last step, in line 80, the bounding boxes are drawn on the image. In some frames, however, you'll notice multiple overlapping boxes detected for the same person (see the image below). This does not happen often, but it reports more humans than are actually present. There are several approaches to deal with this problem (e.g., Non-Maximum Suppression), but each of them requires some computational power. In my case, the reported number of detected people is the number that appears 2 or 3 times in three successive detections.
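The voting rule over three successive detections can be sketched as follows. The function name votedCount is hypothetical, and the text does not say what is reported when all three counts differ; falling back to the most recent count is an assumption of this sketch.

```cpp
#include <array>
#include <cassert>

// Returns the count that occurs at least twice in the last three
// detections (oldest first). When all three differ, the most recent
// value is returned; that fallback is an assumption, not from the source.
int votedCount(const std::array<int, 3>& last3)
{
    assert(last3[0] >= 0 && last3[1] >= 0 && last3[2] >= 0);
    if (last3[0] == last3[1] || last3[0] == last3[2]) return last3[0];
    if (last3[1] == last3[2]) return last3[1];
    return last3[2];  // no agreement: assumed fallback
}
```

With this rule, a single frame that reports an extra overlapping box (for example the sequence 1, 2, 1) still yields one person, at a far lower cost than Non-Maximum Suppression.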

This program (humanDetection.cpp) is only a proof of concept (PoC): it proves that the Raspberry Pi system that runs it (Raspberry Pi 3 Model B+, 1.4 GHz) is able to detect humans. This basic implementation of the human detection software module has at least two significant drawbacks:

- First issue: the feature extraction component and the classification system, both embedded in the detectMultiScale function, take a substantial amount of time to execute, between 2.4 and 2.9 seconds;

- Second issue: the delay between the moment a subject appears in front of the Raspberry Pi's camera and the moment this image is sent to the algorithm is huge, more than 10 seconds.

To solve the first issue, I used a multithreaded approach with three different recognition threads (working threads) running on three distinct cores of the Raspberry Pi.

Here, T denotes the entire time interval required to detect humans (acquire an image, extract the features, and run the classifier) in the worst case. The main idea is to start, based on a timer, three different detection processes, each delayed by T/3 relative to the previous one, on three different cores of the Raspberry Pi (see the above figure). As a result, every T/3 seconds we get the result of a human detection process. In the real situation, this approach makes it possible to acquire and process the input at around one frame per second.
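The arithmetic behind the staggered start can be written down as a minimal sketch. The helper names staggerOffsets and resultsPerSecond are hypothetical and only illustrate the schedule; they do not appear in the project code.

```cpp
#include <cassert>
#include <vector>

// Start offsets (in seconds) for `workers` detection runs that each
// take `cycleSeconds` (the T interval) and are spread evenly over one cycle.
std::vector<double> staggerOffsets(double cycleSeconds, int workers)
{
    assert(cycleSeconds > 0.0 && workers > 0);
    std::vector<double> offsets;
    for (int i = 0; i < workers; ++i) {
        offsets.push_back(i * cycleSeconds / workers);
    }
    return offsets;
}

// Steady-state rate of finished detections: one result every T/workers.
double resultsPerSecond(double cycleSeconds, int workers)
{
    assert(cycleSeconds > 0.0 && workers > 0);
    return workers / cycleSeconds;
}
```

Assuming T is close to the 2.9 s worst case measured for detectMultiScale, three workers give 3 / 2.9, or roughly 1.03 results per second, which matches the rate of about one frame per second mentioned above.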

The entire code for the human detection software unit (which runs on the Raspberry Pi) can be found at github.com/dmdobrea/HoverGames/Human_Detection/03_Final_Solution/RaspberryPi => humanDetectionI2C_fin.cpp. The T interval (named t_cycle in the program, see line 246) is measured in lines 216 to 246. Two measurements are made, mainly because the first measurement after the program starts takes longer than the subsequent ones. So, the first measurement (lines 216 to 226) is used only to eliminate this transient regime. Lines 267 to 312 are where the three threads (lightweight processes) are created and bound to cores CPU1, CPU2, and CPU3. Based on the T interval, a POSIX clock-based timer is created (line 352) and armed (line 369). The periodic timer has an initial delay of one second and a repeat interval of T/3 seconds (lines 362 to 366). This timer has an associated thread function (procesStart, lines 489 to 508), executed each time the timer expires. At each timer expiration, a semaphore starts a new processing stage (the AcqProcessCPU1, AcqProcessCPU2, or AcqProcessCPU3 function) on a different core (see the above figure). The result of each thread, the number of detected humans, is saved in a data vector (humanDetected) with five elements: each element is the result of a detection process from this moment, from a second ago, from two seconds ago, etc.
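The timer-plus-semaphore hand-off described above can be sketched with portable C++11 primitives. This is a simplified stand-in, not the code from humanDetectionI2C_fin.cpp: the Semaphore class and the runStaggered function are hypothetical names, a plain loop with sleep_for plays the role of the POSIX timer, the workers are not pinned to cores, and each worker runs a fixed number of rounds instead of looping forever.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

// Minimal counting semaphore built from a mutex and a condition variable
// (a stand-in for a POSIX sem_t used with sem_wait/sem_post).
class Semaphore {
public:
    void post() {
        { std::lock_guard<std::mutex> lock(m_); ++count_; }
        cv_.notify_one();
    }
    void wait() {
        std::unique_lock<std::mutex> lock(m_);
        cv_.wait(lock, [this] { return count_ > 0; });
        --count_;
    }
private:
    std::mutex m_;
    std::condition_variable cv_;
    int count_ = 0;
};

// Every `interval` (T/3 in the text) the dispatcher releases the next
// worker in round-robin order. Returns how many detection runs completed.
int runStaggered(int rounds, std::chrono::milliseconds interval)
{
    assert(rounds >= 0);
    constexpr int kWorkers = 3;
    Semaphore start[kWorkers];
    std::atomic<int> completed{0};
    std::vector<std::thread> workers;

    for (int w = 0; w < kWorkers; ++w) {
        workers.emplace_back([&start, &completed, w, rounds] {
            for (int r = 0; r < rounds; ++r) {
                start[w].wait();  // block until the "timer" releases this worker
                // ... acquire a frame and run the detector here ...
                ++completed;
            }
        });
    }
    for (int tick = 0; tick < rounds * kWorkers; ++tick) {
        start[tick % kWorkers].post();  // what the timer callback would do
        std::this_thread::sleep_for(interval);
    }
    for (auto& t : workers) t.join();
    return completed.load();
}
```

Calling runStaggered(2, std::chrono::milliseconds(5)) releases each of the three workers twice and returns 6. Using one semaphore per worker keeps the release order fixed, so no two workers ever start on the same tick.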

The human detection module results, the elements of the humanDetected vector, are sent to the FMUK66 flight management unit (FMU) on request. The Raspberry Pi has three types of serial interface: UART, SPI, and I2C. Because the FMU has no free UART ports, only the SPI and I2C buses remain as viable solutions for data transfer between the Raspberry Pi and the FMUK66. After an extensive search, I concluded that the RPi supports only master mode for SPI, and as a direct result, the I2C bus remained the only reasonable choice. For I2C communication, the pigpio library was used to configure the Raspberry Pi as an I2C slave device. In the pigpio library, the only function able to work with I2C in slave mode is bscXfer. This function provides a low-level interface to the I2C slave peripheral on the BCM chip. bscXfer configures the BSC (Broadcom Serial Controller), writes any data from the transmit buffer to the BSC transmit FIFO, and copies any data from the BSC receive FIFO to the program's receive buffer. The I2C slave management is done in another thread (slaveI2C), restricted to run only on CPU0 (line 325). The I2C slave address is set in code using the slaveAddress constant (address 0x4A).
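As I read the pigpio documentation, bscXfer takes a structure whose control field packs the 7-bit slave address into bits 16 to 22, next to flag bits that enable the peripheral, I2C mode, transmit, and receive. The sketch below only builds that control word, so it compiles without pigpio; the constant and function names are hypothetical, and the bit positions should be checked against the pigpio reference before use.

```cpp
#include <cassert>
#include <cstdint>

// Flag bits of the BSC control word, as listed in the pigpio bscXfer
// documentation (names here are illustrative, not pigpio identifiers).
constexpr std::uint32_t kBscEnable   = 1u << 0;  // EN: enable the BSC peripheral
constexpr std::uint32_t kBscI2cMode  = 1u << 2;  // I2: select I2C mode
constexpr std::uint32_t kBscTransmit = 1u << 8;  // TE: enable transmit
constexpr std::uint32_t kBscReceive  = 1u << 9;  // RE: enable receive

// Control word for an I2C slave at the given 7-bit address.
std::uint32_t bscI2cSlaveControl(std::uint32_t address7bit)
{
    assert(address7bit <= 0x7F);  // I2C slave addresses are 7 bits wide
    return (address7bit << 16) | kBscReceive | kBscTransmit
           | kBscI2cMode | kBscEnable;
}
```

For the 0x4A address used in this project, the result is 0x4A0305. In a real program this value would be stored in the control field of the structure passed to bscXfer, and the detection results would be copied into its transmit buffer.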

On the FMUK66 unit, a second application was developed (github.com/dmdobrea/HoverGames/Human_Detection/03_Final_Solution/FMU66_FMU/daiDrone_HD => daiDrone_HD.cpp). This application requests data from the Raspberry Pi over the I2C connection and displays whether one or more humans were detected. The application creates a background task that, in its first section, creates and initializes several objects (an I2C port, line 119 and lines 135 to 140; a semaphore, line 132; a timer, lines 144 to 181) and, in its second section, waits in an infinite loop for an event to occur (lines 185 to 225). At a timer expiration event, the background task is unblocked and new recognition information is obtained from the human detection unit. The synchronization between the timer and the background task is achieved through a semaphore.

A short movie that shows how the human identification algorithm works, and that also highlights its performance, is the following one:

Two other programs (which run on the Raspberry Pi and the FMUK66) are available at github.com/dmdobrea/HoverGames/Human_Detection/02_I2C-FMU_to_RPi-PoC. These programs (which configure the FMUK66 and the Raspberry Pi to behave as the I2C master and the I2C slave, respectively) demonstrate the feasibility and the main concepts of data communication on the I2C bus.

The second issue is caused by the VideoCapture buffer. The FIFO VideoCapture buffer introduces a lag mainly because there is not enough computational power to process all the frames. VideoCapture stores each available frame in an internal FIFO buffer. Each frame read from this buffer is therefore not the most recent one but the oldest one still in the buffer, and this is the main reason for the lag of about 10 seconds. If you add to this lag a variable delay of around 2.7 seconds caused by the human detection system, it becomes clear why such a system is impractical in a real situation. The solution was to create another thread (GrabThread, lines 511 to 535) that grabs frames continuously at high speed in order to keep the VideoCapture buffer empty. The grabbed frames are appended to a local FIFO queue (line 527), implemented using the std::queue class template. Each of the working threads (AcqProcessCPU1, AcqProcessCPU2, and AcqProcessCPU3) gets its data from the local FIFO queue (lines 555, 612 and 669) and, in a second step, removes all the elements from the queue (lines 557-558, 614-615 and 671-672). The data shared between the frame-grabbing thread and the working threads is protected by a mutex (std::mutex).
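The hand-off between the grabbing thread and the working threads can be sketched as a small mutex-protected queue. The class name LatestFrameQueue is hypothetical, the frame type is a template parameter so the sketch compiles without OpenCV (in the real program it would be cv::Mat), and handing the newest queued frame to the worker is an assumption of this sketch; the original code may read a different element before clearing the queue.

```cpp
#include <cassert>
#include <cstddef>
#include <mutex>
#include <queue>

// Thread-safe hand-off between a grabber thread and the worker threads.
template <typename Frame>
class LatestFrameQueue {
public:
    // Called by the grabber thread for every captured frame.
    void push(const Frame& frame) {
        std::lock_guard<std::mutex> lock(m_);
        q_.push(frame);
    }

    // Called by a worker: copies the newest frame into `out`, then drops
    // everything queued so stale frames never reach the detector.
    // Returns false when no frame is available.
    bool takeLatest(Frame& out) {
        std::lock_guard<std::mutex> lock(m_);
        if (q_.empty()) return false;
        out = q_.back();
        std::queue<Frame>().swap(q_);  // remove all queued elements
        assert(q_.empty());
        return true;
    }

    std::size_t size() const {
        std::lock_guard<std::mutex> lock(m_);
        return q_.size();
    }

private:
    mutable std::mutex m_;
    std::queue<Frame> q_;
};
```

Because the queue is emptied on every read, its length is bounded by the number of frames grabbed during one detection cycle, so the lag seen by a worker no longer grows over time.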

Results and conclusions for the human detection system:

- The human detection performance is excellent; however, a few classification errors can be observed (the following references are to the above movie): missed detections (time indexes 4.32 and 4.35), a false positive (an object incorrectly identified as a human at time index 4.47), and multiple detections of a single human (time index 4.50). There are other approaches that could improve the overall performance, but these methods are much slower than HOG.

- Using multiple working threads on separate cores was the only approach I found to obtain a recognition rate of 1 frame/sec. From the experience of this project, I think 2 frames/sec would be best. There are ways to meet this requirement, for example, replacing the Raspberry Pi with a more powerful system (more cores and a higher clock frequency).

- The human detection system uses at least seven threads (three working threads, each on a different core: CPU1, CPU2, and CPU3; a thread for I2C slave communication; a thread that grabs frames; a timer thread; and the main thread) and generates a load average of 3.05 (over the last 5 minutes) and a maximum load average of 3.39. Keep in mind that the Raspberry Pi has four CPU cores (see these results in the following figure). This load average is a high utilization for a single program, but the Raspberry Pi is not overloaded.

To obtain the data presented above, first find the process ID of the program:

$ ps -eaf | grep humanDetectionI2C_fin | grep -v grep

Next, use the top command to display all the threads of the humanDetectionI2C_fin process:

$ top -H -p PID

Now, with top running, press 'f' to enter field selection and select the option that displays the core associated with each thread. Press 'q' or 'Esc' to leave field selection and return to the display of what is happening in the system with the humanDetectionI2C_fin process.

- For the communication with the Raspberry Pi, I used I2C bus 1 of the FMUK66 FMU. The external IST8310 compass is connected to the same bus. From the moment I connected the Raspberry Pi, the drone began to behave strangely: it started to spin uncontrollably. The problem was not solved when I removed the Raspberry Pi entirely. A short movie showing this issue is presented below:

I mention here that (a) the flights were done one after another, and (b) I flew with version 1.10.0 of the drone software. When I tested the IST8310 sensor offline, it worked and responded correctly to all the requests from the ist8310 command line. Searching the Internet, I found that in version 1.10.0 the processing rate was increased to 1 kHz, and that in 1.10.1 it was decreased to 400 Hz because the 1 kHz rate was too much for some FMUs. I tested the HoverGames drone with all these PX4 autopilot versions (1.10.0, 1.10.1, and 1.9.0, the last being the one I flew with almost all the time), but saw no improvement.

I think that, given everything that happened with my drone as presented above, a significant improvement of the FMUK66 FMU could be achieved by moving the external magnetometer to I2C bus number 2.

Tips & Tricks:

- With a substantial load on at least three cores of the system, the temperature of the BCM2835 (the SoC on the Raspberry Pi) climbs to 58 … 60 degrees Celsius, so it can get pretty hot. It is straightforward to check the RPi temperature; please use:

$ vcgencmd measure_temp

To decrease the Raspberry Pi temperature, I decided to add a fan and several heat sinks. The results were as expected; the temperature of the main processor decreased to 37 … 40 degrees Celsius. As a side effect, the I2C communication between the FMUK66 FMU and the Raspberry Pi started to show errors.

Three capacitors (1 x tantalum 10 uF and 2 x ceramic 150 nF) connected across the fan power supply lines solved this problem. It appears that the Raspberry Pi decoupling network is under-designed.

- To compile the humanDetectionI2C_fin.cpp file into an executable, we must give the compiler at least two arguments: the source files that make up the program and the name of the executable. However, humanDetectionI2C_fin.cpp uses functions from several libraries that must be linked: OpenCV (several libraries), pigpio, POSIX threads (pthread), and the realtime library. In conclusion, to create the executable file please use the following command (I know that some of the OpenCV libraries are not necessary, but I made many attempts to find the optimal solution and, as a direct result, I used many OpenCV functions):

$ g++ humanDetectionI2C_fin.cpp -o humanDetectionI2C_fin -Wall -lopencv_core -lopencv_imgproc -lopencv_highgui -lopencv_ml -lopencv_video -lopencv_features2d -lopencv_calib3d -lopencv_objdetect -lopencv_contrib -lopencv_legacy -lopencv_stitching -lpthread -lrt -lpigpio

One proposed objective of this project was to develop an ultrasound obstacle detection system.

The most direct approach to meeting this requirement is to use the USONIC port already present on the FMUK66 FMU. So, I connected an HC-SR04 sensor, developed the code, compiled it, and got the following error:

../../src/drivers/distance_sensor/hc_sr04/hc_sr04.cpp:463:89: error: macro "px4_arch_gpiosetevent" requires 6 arguments, but only 5 given

px4_arch_gpiosetevent(_gpio_tab[_cycle_counter].echo_port, true, true, false, sonar_isr);

The error was in the driver code developed by the NXP team. A screenshot of this error is the following:

Searching for a solution to this problem, I found the following in the px4_micro_hal.h header file:

/* kinetis_gpiosetevent is not implemented and will need to be added */

# define px4_arch_gpiosetevent(pinset,r,f,e,fp,a) kinetis_gpiosetevent(pinset,r,f,e,fp,a)

A screenshot of this message is the following one:

So, from here, I concluded that it is impossible, for now at least, to use the ultrasound sensor port of the FMUK66 FMU, mainly because the code that supports the sensor is not fully implemented. Iain Galloway confirmed this issue.

As a result of these findings, I decided to develop a dedicated system based on a sonar array, to support the obstacle avoidance behavior of the HoverGames drone.

In the first approach, I used an HC-SR04 sensor. I soon understood that the HC-SR04 sensor is not very reliable. The most annoying behavior was the values it missed from time to time. It is also not very accurate. In conclusion, I chose a different type of ultrasonic sensor and decided to use four MaxSonar-EZ sensors, which have a resolution of 1 mm, internal temperature compensation, and a maximum range of 5000 mm.

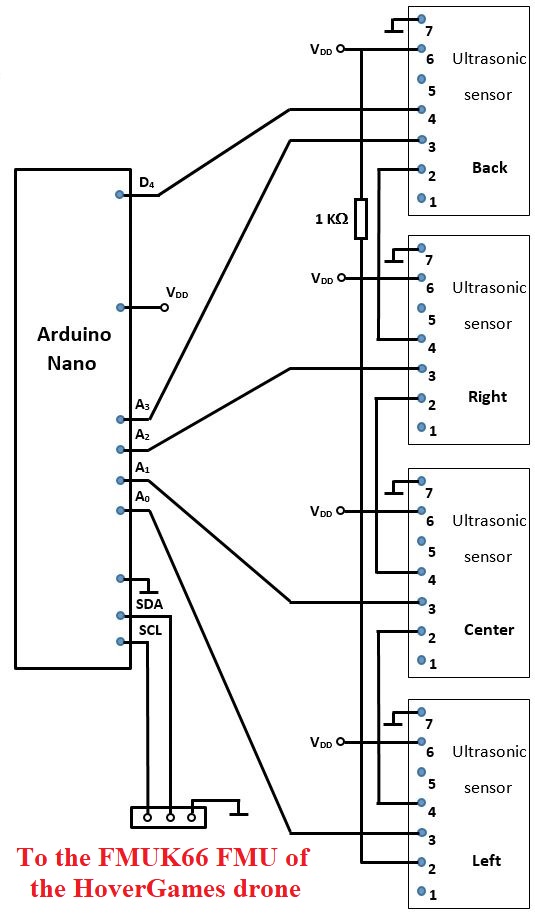

In the following figure, I present the schematic of the ultrasound obstacle detection unit. Three of the sensors (HRLV-MaxSonar-EZ0) were placed at the front of the drone and one (HRLV-MaxSonar-EZ4) at the back (see the above picture).

The MaxBotix sensors of the MaxSonar family have several outputs: analog voltage, pulse width, and RS232 serial. In this project I used only the analog voltage output: the output voltage of the sensor increases as the detected target moves further away from the sensor. An Arduino Nano reads this voltage and calculates the distance by multiplying the reading by a constant scaling factor.
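The conversion from an ADC reading to a distance can be sketched as below. The scaling factor is an assumption taken from my reading of the HRLV-MaxSonar-EZ datasheet (an analog output of Vcc/5120 per millimeter, which works out to 5 mm per count on a 10-bit ADC referenced to the same supply); the value actually used in the project code is not stated in the text, and the function name is hypothetical.

```cpp
#include <cassert>

// Assumed scaling: analog output of Vcc/5120 per mm, sampled by a 10-bit
// ADC referenced to the same Vcc, so one ADC count corresponds to 5 mm.
constexpr int kMillimetersPerCount = 5;

// Converts a raw 10-bit ADC reading into a distance in millimeters.
int adcToMillimeters(int adcCount)
{
    assert(adcCount >= 0 && adcCount <= 1023);  // 10-bit ADC range
    return adcCount * kMillimetersPerCount;
}
```

Under this assumption, a reading of 100 counts corresponds to 500 mm. Because the sensor output is ratiometric to its supply, the factor does not depend on the exact supply voltage as long as the ADC uses the same reference.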

To avoid interference between sensors (cross-talk), a chaining topology was used. In this mode, the first sensor (the one placed at the back of the drone) measures the distance and then triggers the next sensor, and so on for all the sensors in the array. Once the last sensor has ranged (the left one in the above figure), it triggers the first sensor (the one at the back) and this loop continues indefinitely. To start this process, the Arduino Nano microcontroller drives pin 4 of the first sensor in the chain high for a short period (at least 200 us and up to 96 ms); after that, it has to return its pin (D4) to a high-impedance state.

In the code repository on GitHub, there are two code sections for the ultrasonic obstacle avoidance system. The first section presents a proof of concept for the communication software modules. The first module (github.com/dmdobrea/HoverGames/Ultrasonic_Detection/01_Comm_FMUK66-ArduinoNano I2C-PoC) runs on the Arduino Nano and configures the system, through the Wire library, to become an I2C slave. The I2C lines A4 (SDA) and A5 (SCL) are used. The I2C slave has the I2C address 0x3d (line 8). In the receiveEvent function (a function registered to be called each time the slave receives data), the command sent from the FMUK66 FMU is read and saved in the command variable (line 45). At each specific request from the master (FMUK66 FMU), the requestEvent function responds in a particular way (lines 30 to 40). The second module runs on the FMUK66 FMU and sends (lines 71, 72 and 73) three different requests to the I2C slave device. For each request, the FMUK66 FMU waits for three bytes, which are displayed in line 57.

The second code section (github.com/dmdobrea/HoverGames/Ultrasonic_Detection/02_UltrasonicMeasurementSystem) contains the main software modules that drive the hardware component (see the above figure). The Arduino Nano software module first (a) configures the system as an I2C slave device (lines 29, 30, and 31) and (b) triggers the first sensor (lines 34 to 37), which starts the chained ultrasonic acquisition. From each ultrasonic sensor, nine values are acquired at a sampling interval of 10 ms (lines 57-61, 66-71 and 77-81). After the acquisition, a median filter (a non-linear filter) is used to remove noise from the data buffers. In the filtering algorithm, Quicksort is used as an efficient way to sort the data buffers (lines 150 to 198). The four filtered ultrasonic sensor values are sent over the I2C bus at a specific request from the FMUK66 FMU.
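The median filtering step can be illustrated with a short sketch. It uses std::sort from the standard library in place of the hand-written Quicksort of the Arduino code, and the function name medianFilter is hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Returns the median of an odd-sized sample buffer. The buffer is taken
// by value, so the caller's data is left untouched by the sort.
int medianFilter(std::vector<int> samples)
{
    assert(!samples.empty() && samples.size() % 2 == 1);
    std::sort(samples.begin(), samples.end());
    return samples[samples.size() / 2];
}
```

For nine readings such as 1200, 1205, 1195, 0, 1210, 1198, 5000, 1202, 1201 (in millimeters), the filter returns 1201: the dropout (0) and the spike (5000) end up at the two ends of the sorted buffer and never reach the output, which is the advantage of a median over a plain average.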

The daiDrone_Ultrasonic module (which runs on the FMUK66 FMU) creates a background task used to get the ultrasonic sensor values. In its first code section, the background task creates and initializes several objects (an I2C port, line 43; a semaphore, line 57; a timer, lines 61 to 98). The second code section is placed in a while loop (lines 102-120); it keeps the task alive in the system at all times and gets and displays data from the Arduino Nano (lines 117, 118 and 119). When a specific condition occurs, namely the user sends a stop command (lines 184-189), this loop ends and all the resources used are released, here only the semaphore (line 123). Right after the while statement is a sem_wait function call (line 102). This is a blocking call; if the semaphore has not been posted yet, the task blocks. With sem_wait, the task waits for some system event, such as an interrupt service routine, to post the semaphore (sem_post, line 141) that it is waiting on. As soon as the semaphore is posted, the background task is made ready to run. So, when a timer expiration event occurs, the background task is unblocked and new distance information is obtained from the ultrasonic unit (line 117).

A movie that presents the ultrasonic distance measurement system is the following one (the same movie also briefly presents the heat sensor and the human detection unit):

Tips & Tricks:

- In my opinion, the chained approach to driving the ultrasonic sensors shown in the above figure (the system schematic) does not bring any significant sensitivity improvement. I think this result is due to the mounting position of the sensors (one central sensor and two other sensors, left and right, placed at -45 and +45 degrees off-axis), which limits cross-talk interference. So, my recommendation is not to use the chained configuration for this placement of the ultrasonic sensors.

- The sensors need a clear line of sight to the detected object. Even a small extension of the PCB in the bottom-front part of the sensor can decrease sensor sensitivity. So, remove all extra PCB from the front part of the ultrasonic sensors (see the next figure).

The HoverGames drone is an amazing device from which I learned a lot, and I had a great time building the hardware and developing the software modules.

I also encountered some problems. In my case there were several small issues, at least one of which had completely unwanted effects. I present all of these problems here so that other people who work or will work with this drone can correct them. Let's learn from the experiences of others: it is less painful and less expensive.

JST-GH connector

The JST-GH connector on the cable from the RC receiver to the flight management unit (FMU) had a connection problem. As a result, my drone crashed from around 10 meters, maybe more (see the figure below), with a lot of damage: two arms and one leg broken, one "T" support, two connectors between the legs and the drone body, the GPS rod, etc., to name only a few of the mechanical damages.

The flight log file was uploaded and can be analyzed at the following address: https://review.px4.io/plot_app?log=01b3aa07-2645-4e52-95e3-353ea46487f8. Analyzing the flight log, I found that toward the end of the flight the radio connection was lost and regained several times (see the following figure).

After this incident, I tested the FS-I6S transmitter extensively over several days (on an airplane), and no problems of any type emerged. From this, I assumed that the JST-GH connector to the FMU was the problem. It was replaced, and since then I have had no other issues with the radio control link.

The ESC bullet connectors

Building the HoverGames drone or making various improvements (adding new components or moving them to other positions) requires connecting and reconnecting the motors to the ESCs several times. But the soldering quality of the female bullet connectors (attached to the ESC) is not very high; moreover, the soldering was done on only one layer of the PCB. I had this issue on two of the ESCs, where these connectors broke off (see the following images).

I know that these ESCs were acquired from a different provider and were not built by NXP. However, the poor soldering can cause significant problems during flight due to vibration and the high currents that pass through these bullet connectors.
