For my second skill, I thought it would be interesting to experiment with voice & vision. By adding vision recognition to the Mycroft.ai platform, we are giving sight to our favorite voice platform. Running Mycroft in its Picroft version allows us to take advantage of many of the popular features of the Raspberry Pi. One of the most popular is the PiCamera.

Currently, a community-contributed skill, mycroft_skill-take_picture, exists that puts the picamera under voice control. However, with the skill I wrote here, the picamera is not only under voice control, but the image is also sent to a vision recognition service called clarifai for processing. The resulting image tags are then read back to you by Mycroft.

In the future, voice user interfaces and vision recognition will be combined to provide very useful products:

- Smart histology assistant. In the biomedical area, imagine how a pathologist works today. They are provided with a stained tissue sample on a slide and examine multiple fields of view under a microscope. After analyzing the characteristics they see, they formulate a list of differential diagnoses. What if the pathologist could now talk to the microscope, telling it to move from place to place and use a vision recognition model to give an opinion? Maybe, "Hey Bones, what cell type is this?" Instead of "Hey Mycroft, ..."

- "Hey Mycroft, What the heck is this?" In this type of application, you walk into the hardware store (good luck finding a helpful person), show the item of interest and Mycroft tells you not only what it is, but more importantly where to find it.

- Voice controlled robot vision. Imagine using your Raspberry Pi 3, with Picroft installed, as the brain for your next mobile robot project. Not only will you be able to tell it where to go and what to do, it could take a look at things and let you know what it sees!

The skill I have created is a far cry from the ideas above, but it does show how two great AI platforms can be combined. In fact, it needs a lot more work to really be a skill, but it does serve as a prototype for the ideas above, and it certainly is a great and fun way to experiment with clarifai.

As you may be able to tell from the video examples, it is very interesting and fun to hear what clarifai and Mycroft will respond with! I see this more as an example of how to get two platforms to work together than as a mature or developed skill.

Mycroft.ai & clarifai

Mycroft.ai is an open source voice assistant software package, written predominantly in Python. It comes in 3 different varieties:

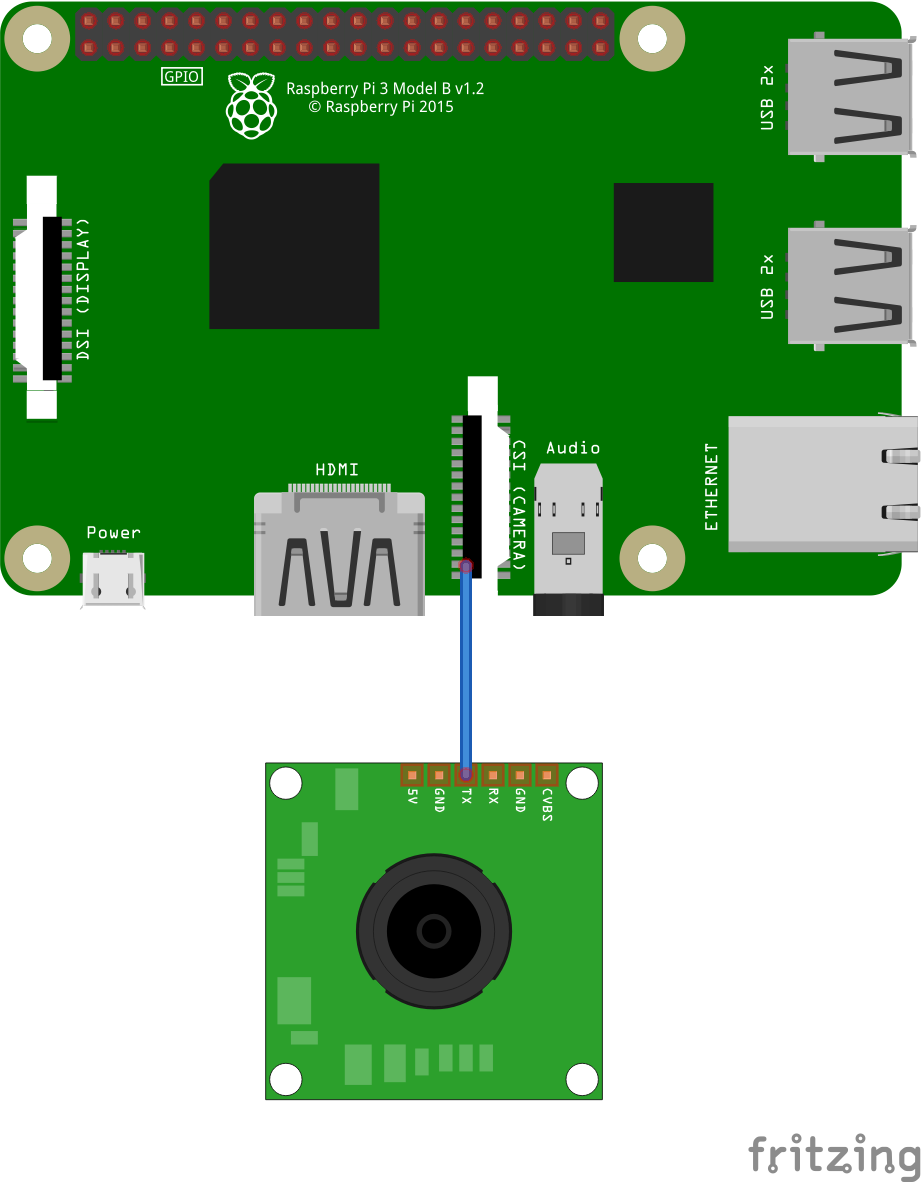

- A Raspberry Pi version called Picroft. This is the version I have chosen to use.

- Mycroft Mark 1, a very cool looking prototype physical enclosure based around the Raspberry Pi that can be purchased from Mycroft.ai

- An Ubuntu 16.04 Desktop version

Like Alexa, Siri or Google Assistant, Mycroft responds to voice commands to enact skills. These skills are written in Python and as I explore more, it seems that if you can write it in Python, you can have it work as a Mycroft skill.

What is clarifai? There is no better explanation than their own from their Developer Guide:

The Clarifai API offers image and video recognition as a service. Whether you have one image or billions, you are only steps away from using artificial intelligence to recognize your visual content.

The API is built around a simple idea. You send inputs (an image or video) to the service and it returns predictions.

The type of prediction is based on what model you run the input through. For example, if you run your input through the 'food' model, the predictions it returns will contain concepts that the 'food' model knows about. If you run your input through the 'color' model, it will return predictions about the dominant colors in your image.

What this means to me is that I can use a Python API to quickly and easily send image files to a clarifai "model" or even one of my own. In return, clarifai will send me back a list of tags or concepts it finds in the image.

In this simple test case "skill" we simply have Mycroft report back the tags or concepts associated with an image at or over a certain confidence threshold with clarifai's general model.

We could, however, create a custom model for the detection of neoplastic (cancerous) characteristics of cell types for our histology assistant. We could train up a model on our catalog of store items. We could also make a custom model of the inside of a nuclear reactor facility before it gets hit by an earthquake, tsunami and partial meltdown to aid in autonomous or semi-autonomous exploration of it afterwards.

So I hope that this "skill" helps you dream up and develop interesting combinations of voice and vision! And also gives a sense of some of the challenges you may encounter getting several platforms to work together.

Installing the clarifai Python API

To use clarifai you'll have to set up your account and get API keys. The free level of clarifai allows for:

- Search and predict: 5,000 free operations per month

- 10,000 free inputs

- Train your own model: 10 free custom concepts

I recommend reading the developer docs prior to starting your adventures in clarifai.

In order to use clarifai with Mycroft, we need to install the clarifai Python API. I started the python interpreter on picroft and determined that picroft is running Python 2.7.9 and luckily the clarifai API works with Python2.

After running

    sudo pip install clarifai

you aren't done! The install process gave some error messages, and when I read them carefully, I saw that clarifai required the Python Pillow library.

But it is not sufficient to just:

    sudo pip install Pillow

You will also have to:

    sudo apt-get install python-dev python-setuptools

This should allow you to install Pillow and then use the clarifai Python API.

However, I want to say that I recreated this string of instructions from memory and some sketchy notes! So my advice is that you do as I did: carefully read the error messages and brave the somewhat treacherous waters of Google searches and stackoverflow.com.

Actually the reverse order of these instructions should get it going for you! But the description above is closer to the way it happened for me. Good grief Charlie Brown! If I got this all to work, you can too!
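Once the installs finish, a quick way to confirm that both libraries can be imported is a small check like the one below. This is only a sketch: the helper name missing_modules is made up for illustration, and it relies on Pillow being imported under the name PIL and the clarifai package under the name clarifai.

```python
def missing_modules(names):
    """Return the module names from `names` that cannot be imported."""
    missing = []
    for name in names:
        try:
            __import__(name)
        except ImportError:
            missing.append(name)
    return missing


# Pillow is imported under the name PIL.
print(missing_modules(["PIL", "clarifai"]))
```

An empty list means both modules were found. Anything that is listed still needs to be installed for the Python interpreter you ran the check with.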

Skill Creation Strategy

The best way to learn how to create a skill for Mycroft is to read the documentation on skill creation, Creating a skill, and then try your hand at writing one! The Geeked Out Solutions YouTube channel has some excellent videos specifically devoted to Mycroft. I highly recommend looking at both prior to creating your own skill.

The Mycroft.ai community forum is an active and friendly place to learn more about Mycroft and skill creation.

A skill resides in its own folder, with an appropriate name, under the /opt/mycroft/skills/ directory. The bulk of the activity of the skill takes place in a file called __init__.py. I have found it a bit challenging to debug my Python code in this file when I start by editing the code in the template skill.

So what I did in developing this skill, as in writing my prior one, was to create a Python script file that has the essential functionality of the skill. By running this under Python on the CLI, I can debug in a more comfortable way prior to adding in the extra layers of complexity that come with interacting with Mycroft. I was happy to see that btotharye, who is a professional programmer, adopts a similar strategy in his video tutorial, Mycroft - Creating First Skill TSA Wait Times.

This skill has 2 components or intents. With the first, you can ask Mycroft to send an image file to clarifai. The image must be located in the /TestImages directory of the skill and must end with a .jpg file extension. Mycroft will respond with the concept tags generated by clarifai's general model with at least 98% confidence. The following phrases, from the LocalImageKeyword.voc file, allow this:

look at

let's take a look at

what do you see in

could you look at

It's really that simple. To understand how to connect these phrases with a file name to send to clarifai, see the section on Regular Expressions below.

The second intent in this skill will take a picture using the pi camera and send the image to clarifai, which will then return the concepts found at 90% confidence or higher. The following phrases trigger it:

look at this

take a look at this

what do you see

Perhaps we should add some phrases that are associated with taking pictures, such as "smile" or "say cheese". However, this skill is more about analyzing an image than about just taking a picture.

Regular Expressions

In my first Mycroft project I did not take advantage of regex, but I specifically wanted to try this feature out and this project gave me the opportunity to do so.

Regular expressions (regex) are a method of text pattern matching. Regular-expressions.info defines them this way:

A regular expression (regex or regexp for short) is a special text string for describing a search pattern. You can think of regular expressions as wildcards on steroids. You are probably familiar with wildcard notations such as *.txt to find all text files in a file manager.

The site is also a great place for learning about regex.

In the context of Mycroft, regex allow for adding a feeling of responsiveness, flexibility and customization to your voice interface experience. Regex allow us to provide variable data to our intents. For this project, we can have Mycroft take a look at any image in the /TestImages directory by a name we specify.

This is accomplished in the skill-smart-eye/regex/en-us/imagefile.rx file:

    (take a look at|what is in|look at|look at this|could you look at) (?P<FileName>.*)

The pipe operator, '|', acts as an OR statement and will seek a match to what you tell Mycroft when this skill is activated.

The (?P<FileName>.*) group captures whatever follows one of these phrases and assigns it the variable name FileName. In this case it allows Mycroft to pick the name of the file out of your utterance. The '.' matches any character and the asterisk, '*', repeats it, so together they capture everything spoken after the phrase. This is about as simple as a regex can get. You can imagine that much more powerful regex combinations can be made to further enhance the responsiveness, flexibility and customization of your voice interface experience.
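To get a feel for what the pattern captures, it can be tried outside of Mycroft with Python's own re module. The sketch below copies the pattern from imagefile.rx; the function name extract_file_name is made up for illustration, and the way Mycroft itself applies the pattern to an utterance may differ from a plain re.match call.

```python
import re

# Pattern copied from imagefile.rx.
PATTERN = re.compile(
    r"(take a look at|what is in|look at|look at this|could you look at) (?P<FileName>.*)"
)


def extract_file_name(utterance):
    """Return the text captured by the FileName group, or None if no match."""
    match = PATTERN.match(utterance)
    return match.group("FileName") if match else None


print(extract_file_name("look at cat"))
print(extract_file_name("could you look at butterfly"))
print(extract_file_name("look at this cat"))
```

The first two calls give "cat" and "butterfly". The third gives "this cat" rather than "cat": Python tries the alternatives from left to right, so "look at" wins before "look at this" is considered, and the rest of the utterance lands in FileName.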

You can see here how my intent uses the FileName variable in the __init__.py file:

    def handle_local_image_intent(self, message):
        fileName = message.data.get("FileName")
        general_model = self.clarifai_app.models.get("general-v1.3")
        image_file = '/opt/mycroft/skills/skill-smart-eye/TestImages/' + fileName + '.jpg'

You must ensure that the variable name is exactly the same as the one specified in the imagefile.rx file. It's that easy to get the information from the utterance into the site of action, __init__.py!
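One thing to watch for: if FileName is missing from the message, message.data.get returns None, and concatenating None with a string raises a TypeError. A small helper along the following lines could guard against that. It is only a sketch; the names IMAGE_DIR and image_path_for are hypothetical and not part of the skill, and the directory is the one used in the handler above.

```python
import posixpath

# Directory taken from the handler above.
IMAGE_DIR = "/opt/mycroft/skills/skill-smart-eye/TestImages"


def image_path_for(file_name):
    """Build the .jpg path for a spoken file name, or return None if unusable."""
    if not file_name or not file_name.strip():
        return None
    # Keep only the final path component so the result stays inside IMAGE_DIR.
    base = posixpath.basename(file_name.strip())
    if not base:
        return None
    return posixpath.join(IMAGE_DIR, base + ".jpg")


print(image_path_for("cat"))
print(image_path_for(None))
```

The handler could then check for None and have Mycroft ask for the file name again instead of failing.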

Experimenting with my design, however, I feel that saying:

Hey Mycroft, look at cat

or

Hey Mycroft, could you look at butterfly

is a bit clunky and unnatural. I really want to say something like, "Hey Mycroft, could you look at the file named cat.jpg in the TestImages folder". While this is verbose, it feels more natural than the stutter-step command above. But my intention in writing this part of the skill was to debug the connection between Mycroft and clarifai, not to fully form the interface.

The second intent of this skill captures an image with the picamera, sends it to clarifai, and reports back any tags or concepts detected with greater than 90% confidence.

Parsing the concepts returned caused me a bit of an issue. My first attempts to look at the returned JSON seemed to return a single large value for 1 key. After a lot of trial and error and a bit of looking at sample code, I came up with the following:

    response = general_model.predict_by_filename(image_location, min_value=0.90)
    j_dump = json.dumps(response['outputs'][0], separators=(',', ': '), indent=3)

We grab the JSON response from the clarifai general model in a variable called response. You can see these are the concepts returned at a minimum of 90% confidence. The clarifai API is that easy to use; it almost reads like a sentence in a natural language!

We take the JSON and grab the 0 index value from the outputs key. This is the large set of concepts returned. We then convert this dump into a load and iterate deep, ['data']['concepts'][index]['name'], into the JSON tree of key:value concept names by an index number.

    j_load = json.loads(j_dump)
    index = 0
    results = ""
    for each in j_load['data']['concepts']:
        results = results + j_load['data']['concepts'][index]['name'] + " "
        index = index + 1

The for each statement above allows for a simple and elegant approach to handling our inability to know how many concepts, at any confidence level, will be returned. We could specify a maximum with the clarifai API or limit our replies, but at this stage that would not be helpful. In future development, this might be useful due to the cognitive overload that hearing many concepts would cause.
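Since response['outputs'][0] is indexed directly in the code above, the response appears to already be a Python dictionary, so the names can probably be collected without the dump and load round trip. The sketch below shows that idea along with an optional cap on how many concepts get read out. The function name concept_names and the max_concepts parameter are hypothetical, and the sample data is hand-made in the shape described above (the "value" key is an assumption), not real clarifai output.

```python
def concept_names(response, max_concepts=None):
    """Collect concept names from a clarifai-style prediction response."""
    concepts = response["outputs"][0]["data"]["concepts"]
    names = [concept["name"] for concept in concepts]
    if max_concepts is not None:
        # Keep only the first few so Mycroft does not read a very long list.
        names = names[:max_concepts]
    return names


# Hand-made sample in the shape described above; not real clarifai output.
sample_response = {
    "outputs": [
        {"data": {"concepts": [
            {"name": "cat", "value": 0.99},
            {"name": "cute", "value": 0.97},
            {"name": "pet", "value": 0.93},
        ]}}
    ]
}

print(" ".join(concept_names(sample_response, max_concepts=2)))
```

Iterating over the concepts directly also removes the need for the separate index counter.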

Picamera Use Issues

The Python test script to access the picamera worked well from the start. When I integrated the picamera functionality into the skill's __init__.py file, at first it didn't work, then after some tweaking it did work, but only once!

The files /var/log/mycroft-speech-client.log and /var/log/mycroft-skill.log are very useful to look at if your skill is not working. After some looking, I found an error dealing with a "vchiq instance".

Having learned my lesson from past experience, I did a Google search and found that, in order to access the camera, the user executing the Python program (or Mycroft skill in this case) needs to have the right file permission. In Linux most everything is a file, even a device. To access the picamera, a user needs access to a file called /dev/vchiq. So the user executing the Python test script had access, but the user, called pi, executing the __init__.py did not. Hence the failure to take the picture at all. The post Raspberry Pi – failed to open vchiq instance [Solved] demonstrates how to change the user permissions for this file to allow access to the device. I've placed a PDF of this post in my GitHub repository for this project in case the link goes stale.
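A quick way to see whether a given user can reach the device is to run a check like the one below as that user. This is a minimal sketch: the function name can_use_device is made up for illustration, and testing for both read and write access is my assumption about what the camera needs.

```python
import os


def can_use_device(path="/dev/vchiq"):
    """Return True if the current user can read and write the device file."""
    return os.access(path, os.R_OK | os.W_OK)


print(can_use_device())
```

If this prints False for the user that runs the skill but True for the user that runs the test script, the permissions on /dev/vchiq are the likely culprit.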

The next problem followed as I was only able to take one picture, then I was not able to use the camera again. This was due to an error I made in the instantiation of the camera instance. Initially I was executing this statement

    self.camera = picamera.PiCamera()

every time the intent handler was called. However, this object was being preserved between calls, causing a conflict upon "reinstantiating" it (I wonder if I can use this persistence to useful effect with future skills?). By moving the above statement to the __init__ function:

    def __init__(self):
        super(SmartEyeSkill, self).__init__(name="SmartEyeSkill")
        self.clarifai_app = ClarifaiApp(api_key='***Your API Key Here')
        self.camera = picamera.PiCamera()
        ...

I was able to solve this issue and call the picamera multiple times successfully.
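The difference between the two approaches can be seen without a camera attached. In the sketch below, FakeCamera is a made-up stand-in for picamera.PiCamera that simply refuses to be opened twice; the two skill classes are also hypothetical and only mimic where the camera object gets created. The real picamera error will look different from the RuntimeError used here.

```python
class FakeCamera(object):
    """Stand-in for picamera.PiCamera: only one instance may be open at a time."""
    _in_use = False

    def __init__(self):
        if FakeCamera._in_use:
            raise RuntimeError("camera already in use")
        FakeCamera._in_use = True

    def capture(self, path):
        # A real camera would write an image file; here we just echo the path.
        return path

    def close(self):
        FakeCamera._in_use = False


class PerCallSkill(object):
    """Creates the camera inside the handler, as in my first attempt."""

    def handle(self):
        self.camera = FakeCamera()  # a new instance on every call
        return self.camera.capture("image.jpg")


class CreateOnceSkill(object):
    """Creates the camera a single time in __init__, as in the fix."""

    def __init__(self):
        self.camera = FakeCamera()

    def handle(self):
        return self.camera.capture("image.jpg")
```

Calling handle() twice on a PerCallSkill fails on the second call because the first camera object is still holding the device, while a CreateOnceSkill can be called as often as you like. Another option, which I have not tried in the skill, would be to call close() on the camera at the end of each handler call.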

The main lesson here is that it is good to have a decent handle on the Linux command line, to always be ready to work through error messages, and to understand the nature of the Mycroft "enclosure".

Experimenting

Why 90% for the picamera intent and why the black background?

In my initial experimentation with clarifai, the tags or concepts returned from the test images were excellent. When I initially placed some of the objects you see below in front of the picamera with a white background, the results were purely awful. Looking at the test images, I saw that many of them had dark backgrounds. So I added a black background and the results were better, but not great. Lowering the confidence level to 90% greatly improved things in most cases as you can see in the examples below.

I think some work is needed on the quality of the images I am sending and in working with the models available on clarifai. What I find most interesting is not the specific "noun" tags returned, but the more conceptual tags, such as "cute" for our cat, which is of course very true.

Comparing Apples and Oranges:

Conclusions

Once I had completed the project to this stage, I realized that what I had created was an easy-to-use platform for experimenting with voice and vision. The project allowed me to explore the use of regular expressions to provide some "generality" to the voice user interface. The project also allowed me to explore.

Combining voice and vision into a useful skill will clearly take some more work and perhaps a bit more focus on a specific voice controlled vision recognition task.

If you are interested in pursuing this, I encourage you to muscle through the Python module dependency issues and Linux issues to get this working, and please share your efforts here on Hackster.io! I can't wait to see what you do with the image concepts returned by clarifai!
