This project outlines how to build a smart vision system using a Xilinx ZYNQ UltraScale+ SoC. The idea is to obtain camera frames from a Wi-Fi camera module and pre-process them using a custom PYNQ overlay, which allows custom image processing filters to be applied in the programmable logic (PL).

First, we are going to use Vivado HLS to design a custom convolutional image processing IP core. Then we are going to design the hardware architecture around the Ultra96 development board. Finally, we are going to use the PYNQ framework to implement the complete image processing pipeline, using simple Python functions to control the hardware.

Vivado HLS allows one to generate HDL IP cores by coding the algorithm in C/C++. Needless to say, this speeds up development considerably. Applying a filter to an image is basically a 2D convolution. With that in mind, we are going to leverage the reVision framework to implement custom image processing in hardware.
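
To make the 2D convolution idea concrete, here is a minimal software sketch in NumPy of what a 3x3 filter computes for every pixel. It is only a reference model, not the code of the HLS core: the function name `filter2d_reference` is made up for illustration, and replicating the edge pixels at the borders is an assumption (the hardware core may handle borders differently).

```python
import numpy as np

def filter2d_reference(image, kernel):
    """Software reference for a small 2D filter.

    Like OpenCV's filter2D, this is a correlation: the kernel is slid over
    the image without being flipped. Border pixels are replicated, which is
    an assumption made here for simplicity.
    """
    image = np.asarray(image, dtype=np.int32)
    kernel = np.asarray(kernel, dtype=np.int32)
    kh, kw = kernel.shape
    pad_y, pad_x = kh // 2, kw // 2
    padded = np.pad(image, ((pad_y, pad_y), (pad_x, pad_x)), mode="edge")

    rows, cols = image.shape
    out = np.zeros_like(image)
    # Accumulate one shifted, weighted copy of the image per kernel tap
    for dy in range(kh):
        for dx in range(kw):
            out += kernel[dy, dx] * padded[dy:dy + rows, dx:dx + cols]
    return out
```

A model like this is handy later on for checking the output of the hardware filter against a known-good result on a small test image.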

XFOPENCV Library

We'll leverage the reVision framework from Xilinx to build the convolutional IP core using Vivado HLS. Download the Xilinx OpenCV library (xfOpenCV) from https://github.com/Xilinx/xfopencv by cloning the repository:

git clone https://github.com/Xilinx/xfopencv

Now switch to the /examples folder and make a copy of it. Then copy the Tcl file from the Standalone_HLS_Example folder under HLS_Use_Model. That example shows how to accelerate the dilate function from OpenCV. In this project, however, we are going to implement the Filter2D function, which implements 3x3 convolutions. Since the filter coefficients can be set via the AXI4 control bus, the resulting HDL IP is quite flexible.

The Ultra96 board is built around a ZYNQ UltraScale+ device (xczu3eg-sbva484-1-e), so we'll have to modify the script file so that the exported IP is built for this part. Note that one cannot use IP built for ZYNQ 7000 devices due to the differences in architecture.

From the Start menu, open the command-line version of Vivado HLS. Switch to the folder containing the script and issue:

vivado_hls -f script.tcl

This will simulate the testbench, synthesize the IP core and export it. Alternatively, you can use the Vivado HLS GUI as shown below. The only data needed are the timing requirement of the IP (the target clock period) and the clock uncertainty. After synthesis we can observe that the timing is met.

Once the core is synthesized, we have to export it.

Now, in the generated solution folder, go to /implementation/ip and copy the generated ZIP file into a folder that we'll name IP.

xilinx_com_hls_filter2D_hls_1_0

This is the IP core of the image processing function. At this stage the convolutional IP core is synthesized and ready to use, which concludes the generation of the image processing HDL IP core. We leveraged the Xilinx xfOpenCV library and exported the IP for the Ultra96 ZYNQ UltraScale+ SoC. The next step is to create a hardware system around the created IP.

Image processing hardware architecture

For this project I used Vivado 2018.3. Create a new project by selecting Ultra96 as the development platform.

Add the constraints file, which specifies the pin voltage levels. It is available on the Ultra96 board website (attached below).

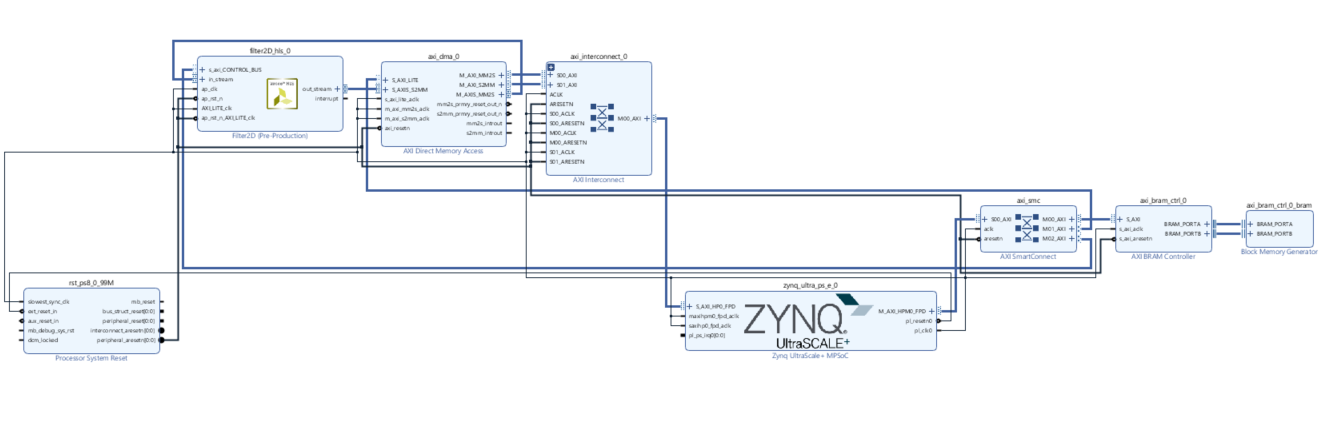

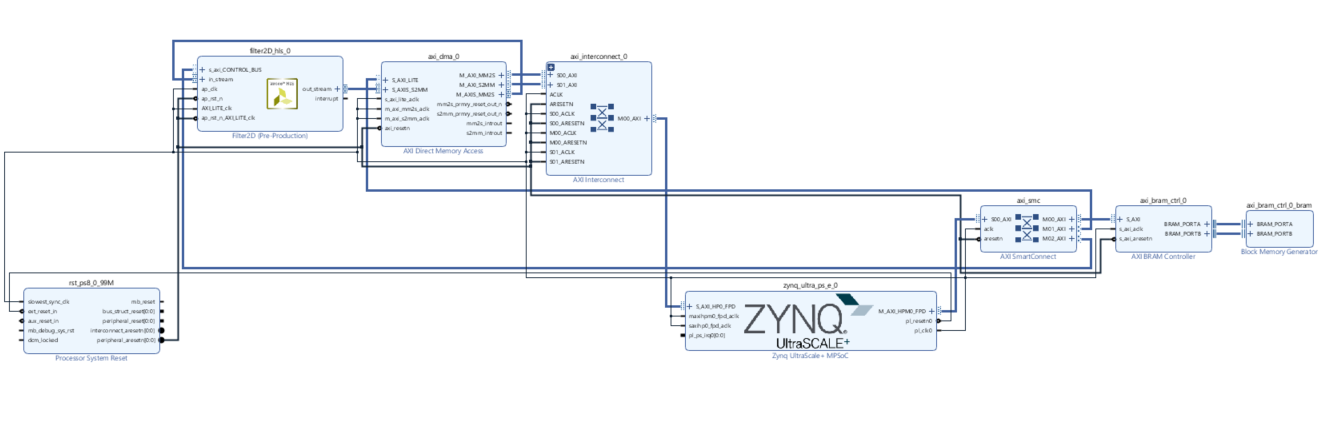

For this block diagram setup we'll be using:

- ZYNQ UltraScale+

- AXI Interconnect IP

- DMA IP

- Filter2D (This is the generated IP)

- AXI BRAM

On the ZYNQ UltraScale+ block we'll have to make some modifications after applying the preset in order to enable high-performance data transfer between the DMA and the SoC. To do so, one has to enable the Full Power Domain High Performance slave bus.

The AXI Interconnect is used to reach the AXI control buses of the DMA, the Filter2D core and the BRAM. It is connected to the HPM0 FPD master AXI port of the ZYNQ UltraScale+.

The Filter2D core is an AXI stream component with a control bus. The AXI control bus is used to configure the filter coefficients via a memory-mapped interface. The input of the filter is connected to the stream output of the DMA engine, and the output of the filter is connected to the stream input of the DMA. We need to disable the scatter-gather mode of the DMA so that it operates in simple mode.
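
From the Python side, a simple-mode DMA round trip through the filter can be sketched as below. This is a hedged sketch rather than the notebook's actual code: `run_stream` is a helper name invented here, and `dma` is assumed to be a PYNQ `DMA` object taken from the overlay (the instance name, for example `overlay.axi_dma_0`, depends on the block design). The buffers are assumed to be physically contiguous arrays, which on PYNQ v2.3 would typically come from `Xlnk().cma_array(...)`.

```python
def run_stream(dma, in_buffer, out_buffer):
    """Send one frame through the stream path and wait for the result.

    Assumes `dma` exposes the PYNQ DMA interface: a `sendchannel`
    (memory to stream) and a `recvchannel` (stream to memory), each with
    `transfer(buffer)` and `wait()` methods.
    """
    # Start both directions, then block until each one has completed
    dma.sendchannel.transfer(in_buffer)
    dma.recvchannel.transfer(out_buffer)
    dma.sendchannel.wait()
    dma.recvchannel.wait()
    return out_buffer
```

Keeping the DMA object as a parameter means the same helper can be reused if more filters with their own DMA engines are added to the design.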

Finally we'll also add an AXI BRAM which is memory mapped to the same interconnect as a slave.

The ZYNQ UltraScale+ is a heterogeneous platform. The device contains a quad-core 64-bit Cortex-A53, a 32-bit deterministic (real-time) Cortex-R5 and a PMU core. Since we have multiple address spaces, we have to make sure we assign the correct ones. The address space for the attached peripherals is shown below.

After building the hardware we have a block diagram as shown below:

After the synthesis and implementation stages we can generate the bitstream file used to program the PL. Once this is done, we first export the block diagram and then the bitstream file. Both files have to be placed in the same folder and share the same base name.

As one can see, we are barely scratching the surface when it comes to resource usage. Compared with ZYNQ 7000 series SoCs, UltraScale+ devices have ample resources.
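
Since a mismatch between the two file names is an easy mistake to make, a small check like the one below can be run before copying the folder to the board. It is a sketch that assumes the block diagram was exported as a `.tcl` file next to the `.bit` file; the helper name `check_overlay_files` and the file name in the usage comment are hypothetical.

```python
from pathlib import Path

def check_overlay_files(bitstream_path):
    """Return the list of missing files for an overlay.

    The bitstream and the exported block diagram are expected to sit in
    the same folder with the same base name, e.g. design.bit and design.tcl.
    """
    bit = Path(bitstream_path)
    tcl = bit.with_suffix(".tcl")
    return [str(p) for p in (bit, tcl) if not p.is_file()]

# Usage (hypothetical file name):
# missing = check_overlay_files("filter2d/filter2d.bit")
# if missing:
#     print("Missing overlay files:", missing)
```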

This concludes the hardware design of the project. The next step is to write the firmware that will control the developed IP core.

Software

The Ultra96 comes with PetaLinux installed by default. For this project we are going to chuck that away and use PYNQ, an Ubuntu-based Linux distribution tailored for ZYNQ and ZYNQ UltraScale+ devices.

PYNQ

To set up PYNQ, download version 2.3 of the image for the Ultra96 board and write it to the SD card using a disk imaging tool.

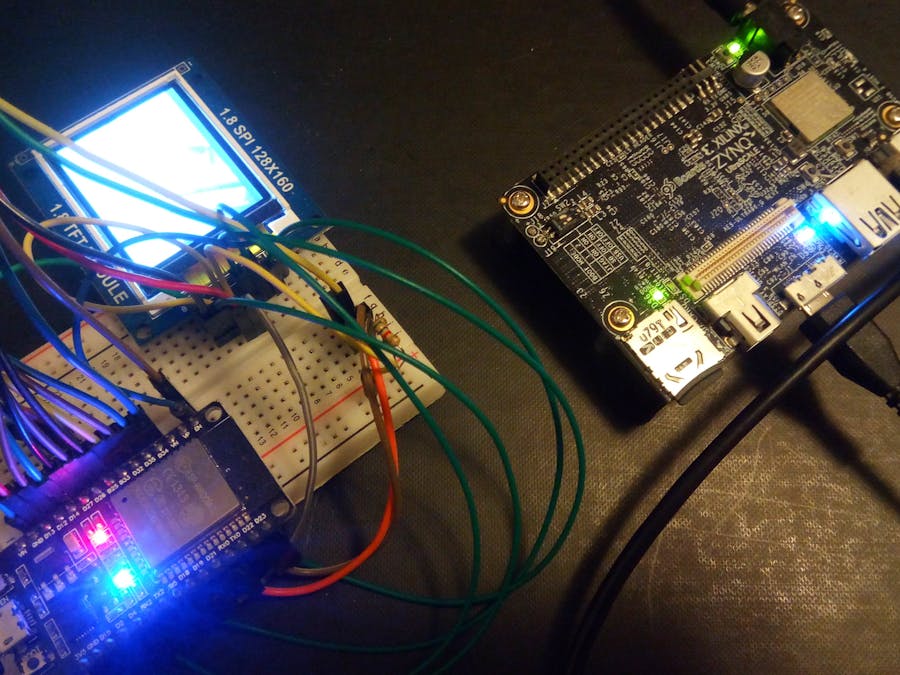

The simplest path is to plug in the power brick and connect via the USB micro port. The board is going to enumerate as an Ethernet device.

I found out that Firefox does not play nice with the Jupyter server, so I had to use the Microsoft Edge browser, but YMMV. Log in by entering the IP address 192.168.3.1 when connected via the USB port.

Now use WinSCP to transfer the complete PYNQ_FIlter folder. After the files are copied, we can create a new Jupyter notebook to read image files, either from the camera or from static files, and pre-process them on the PL side.

Once we have verified that PYNQ is working, the next step is to test the HDL IP core. Copy the folder containing the exported block diagram and bitstream to the home folder on PYNQ. Then create a Jupyter notebook, import the libraries and load the overlay. To make sure we have a working system, we can query the overlay.
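
Querying the overlay can look roughly like the sketch below. PYNQ's `Overlay` object exposes an `ip_dict` mapping, and the helper here (a made-up name, `summarize_ip`) just turns it into readable rows, relying on the `phys_addr` and `addr_range` entries of each IP. The overlay file name in the usage comment is hypothetical.

```python
def summarize_ip(ip_dict):
    """List (name, base address, size) for every IP in an overlay.

    `ip_dict` is expected to look like pynq.Overlay.ip_dict: a mapping
    from IP name to a dictionary holding 'phys_addr' and 'addr_range'.
    """
    rows = []
    for name, info in sorted(ip_dict.items()):
        rows.append((name, hex(info["phys_addr"]), hex(info["addr_range"])))
    return rows

# On the board (file name is an assumption):
# from pynq import Overlay
# overlay = Overlay("filter2d.bit")
# for row in summarize_ip(overlay.ip_dict):
#     print(row)
```

The printed addresses should match the ones assigned in the Vivado address editor; if an IP is missing from the list, the block diagram export and the bitstream are probably out of sync.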

The snapshot below shows that the hardware has been downloaded successfully on the PL (Programmable Logic) side. Now we have to develop a driver so that we can leverage the IP.

At this point we have verified the hardware architecture, so next we need to exercise the filter IP. To test it, we are going to configure the filter first as a SobelX and then as a SobelY filter.
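
For reference, the two Sobel kernels and one possible way of loading them over the control bus are sketched below. The kernels themselves are the standard 3x3 Sobel operators. Everything about the register layout is an assumption: `base_offset` and `stride` are placeholder values, and the real offsets have to be taken from the driver header that Vivado HLS generates for the core. `ip` is assumed to be any object with a `write(offset, value)` method, such as the IP handle PYNQ exposes on the overlay.

```python
import numpy as np

# Standard Sobel operators: SOBEL_X responds to horizontal intensity
# changes (vertical edges), SOBEL_Y to vertical changes (horizontal edges)
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.int32)
SOBEL_Y = SOBEL_X.T

def write_kernel(ip, kernel, base_offset=0x10, stride=0x8):
    """Write the nine coefficients row by row through ip.write().

    base_offset and stride are hypothetical; check the HLS-generated
    register map for the actual values.
    """
    for i, coeff in enumerate(np.asarray(kernel).flatten()):
        # Negative coefficients are sent as 32-bit two's complement words
        ip.write(base_offset + i * stride, int(coeff) & 0xFFFFFFFF)
```

Switching from SobelX to SobelY is then just a matter of calling `write_kernel` again with the other array before the next DMA transfer.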

Below you can observe the output when the SobelX hardware function is called.

I did the same thing with the Canny edge detector IP module. The result is shown below:

Using a 400x225 image size, one can get around 685 frames per second. To test the speedup, we can time the Python version of OpenCV and compare it with the hardware version.
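
A simple way to make that comparison is to time both paths with the same helper. The sketch below is generic and makes no claim about how the original measurement was taken: `frames_per_second` and `pixel_rate` are illustrative names, and `process_frame` stands for whichever function is being timed (for example a wrapper around the OpenCV filter, or one around the DMA transfer).

```python
import time

def frames_per_second(process_frame, frame, repeats=100):
    """Call process_frame(frame) `repeats` times and return the average rate."""
    start = time.perf_counter()
    for _ in range(repeats):
        process_frame(frame)
    elapsed = time.perf_counter() - start
    return repeats / elapsed

def pixel_rate(width, height, fps):
    """Pixels processed per second at a given frame size and frame rate."""
    return width * height * fps

# 685 frames/s at 400x225 corresponds to 61.65 Mpixel/s
print(pixel_rate(400, 225, 685) / 1e6)
```

The speedup is then the hardware frame rate divided by the software frame rate, both measured on the same image size.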

The Tcl file of the block diagram and the bitstream, together with the Jupyter notebook, have been uploaded to GitHub. You can use these to duplicate the system or extend it with additional filters.

The next installment will show how to expand this system with an imaging sensor taken from an IP camera.
