Project Motivation and Overview

The idea of developing WITRA was motivated by the concept of teleoperation of robots. Intended to be performed in a more intuitive manner that with the already existing products, the teleoperation of a robot arm is based of the movements of the human arm. Instead of using joysticks or smart devices, such as tablets, the user is able to operate the robot by simply moving his/her arm, in a very natural way. The goal here is that the robot arm act like an extension of the user's body, moving in the space with a trajectory that corresponds to the trajectory of the users hands. Furthermore, the wearable interface (WITRA) is intended to be fully wireless, providing the user with a more natural experience, without cables laying around.

Intuitiveness is here the keyword. A study performed at NYU Polytechnic School of Engineering by Vinicius Bazan, head of this project, showed that using a wearable interface for robot arm operation gives better performance than using other intuitive devices, such as videogame controllers, smartphones and tablets. WITRA is thus intended to enable any user - with or without technological background - to teleoperate a robot arm with the same grade of success of using his/her own arm to perform taks.

Teleoperation tasks include bomb defusing, operation in hazardous environments, such as ratioative rooms, deep-ocean-equipment maintainance, among many others.

One further use for WITRA is for situations where the user is not strong enough to lift an object an can perform this task using a robot arm, but doing this as if he/she himself/herself were picking the object.

The components used for this project are described in details as follows.

Components

Getting the user's arm movement

The human arm has basically 7 rotational degrees of freedom (DOF), namely: 3 at the shoulder, 1 at the elbow and 3 at the wrist. However, unless the robot arm is antropomorphical and built with the ecxat same DOFs of the human arm, the robot and the user's arm won't have the same geometry. An interessant approach to the implementation of WITRA is to make it possible to operate any type of robot, not only antropomorphical ones.

Moreover, to make it possible, an initial ideia would be to measure the angle of rotation of certain joints on the user's arm (different DOFs) and use this information as reference for the position of each rotational joint of the robot arm. However, as said before, this would actually make sense only for the case of an antropomorphical robot. For, say, a SCARA or cylindrical robot, it would be just as natural as using a tablet app for operating the robot, since it would consist of a simple correlation of actions (rotation of one joint at the user's arm or, for example, tilting the tablet) and results (the rotation of one single joint on the robot arm).

For this purpose we've implemented a spatial correlation between the hand of the user and the robot's end effector. This comes into play by measuring each DOF of the human arm and using this information to calculate its foward kinematics, resulting on a (x,y,z) position of the users hand. On the other side, we want to control the robot's trajectory with cartesian coordinates as reference. This will be explained soon.

In order to measure each rotation of the human arm, inertial sensors (IMUs) are used. In fact, three of them are used: one at the user's hand, one at his/her forearm and the thrid one at his/her arm. Each IMU is capable of calculating its yaw, pitch and roll. and thus we have 9 measurements of angle. With this (redundant) information, one can assess the value of each rotation of each of the 7DOF of the user's arm, in order to calculate the foward kinematics.

The IMUs used are the Razor 9DoF model.

Using Toradex module and data processing

The data from the three sensors is sent to a Toradex module over serial interface and processed using a real-time application. As previously mentioned, once we have the measurement of the 7DOFs of the user's arm, it is possible to calculate the forward kinematics of the human arm. This gives a (x,y,z) position, which is the input, after proper mapping, for the inverse kinematics of the robot arm. In other words, given a certain desired position for the end effector of the robot, it is possible to calculate a set of angles of rotation of its joints.

Finally, the calculated angles of rotation of each robot joint will be sent over ethernet to the controler of the actual robot, which can be miles away from the user, thus making the teleoperation possible.

Using the Toradex module will make the project totaly mobile and wireless. A previous version of the project used a PC instead of the Toradex module and thus many cables were necessary. The biggest problem was that the PC was not so mobile and added difficulties on taking the project to any place. With the implementation of a embedded computer everything will be easily at the user's hands.

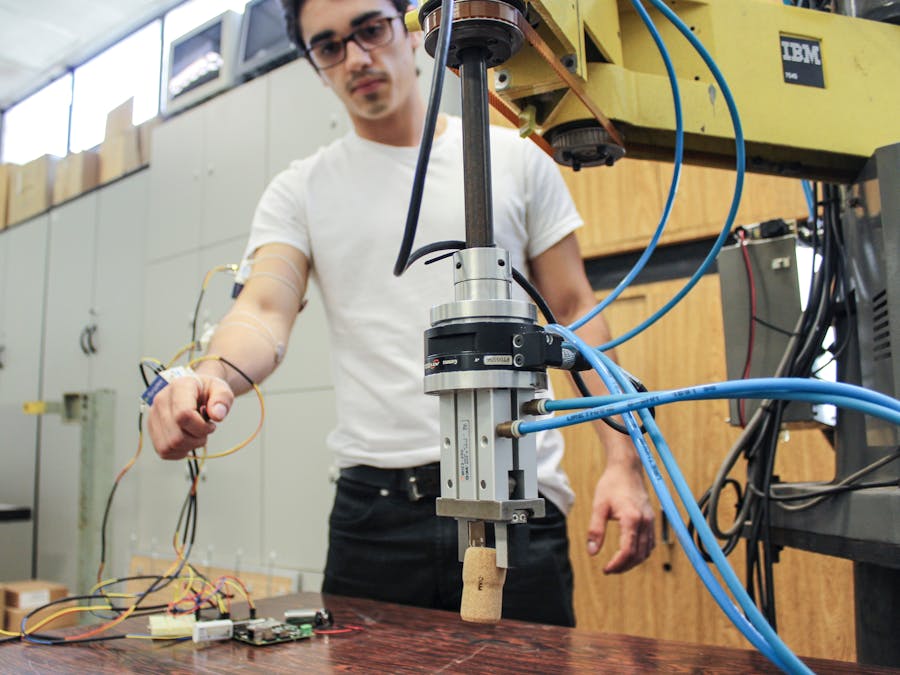

Robot Arm

The robot used in this project is a SCARA 7545 from IBM. This is a very practical robot, since it can reach a wide range of positions in space, in a better manner when compared to a cartesian robot.

This robot has 4 degrees of freedom (DoF): 3 rotational and 1 prismatic. This configuration allows the robot to reach a great variety of positions in space, creating a versatile work envelope and also being faster than a cartesian robot.

The wide range of positions that this robot can reach in space is due to a wide reach of its joints. The first rotational joint can rotate from 0 to 200 degrees; the second, from 0 to 135 degrees; the prismatic joint can move from 0 milimeters (upper position) to 200 milimeters (lower position); the last joint (tool rotation) can rotate from -180 degrees to 180 degrees.

System and Software

The software is responsible for acquiring data from the IMUs, processing (i.e, filtering and calculating the direct kinematics of the human arm and mapping to the robots workspace) and sending data over TCP/IP. The basic scheme is shown below:

This IMU presents an accelerometer, a gyroscope and a magnetometer. It is possible to get the raw data from these sensors. However, there is an embedded processor used to perform the filtering and fusion of the data, in a way to produce stable and reliable output. The output data can be read in terms of Euler Angles, Quaternions or Linear Acceleration.

The inertial sensor also has a magnetic field compensation software and thus the data is not influenced by variations of the magnetic field surrounding the sensor.

The data can either be viewed on LP-Reseach's software (and also recorded and played back) or it can be acquired using a C++ code, using the libraries provided by the manufacturing company.

Below is a video explaining and demonstrating the IMU in work:

Bluetooth USB AdapterBluetooth USB adapters are a bit difficult to find nowadays because almost every laptop, PC, smartphone or tablet already has built in bluetooth capability. Thus, we tested a few bluetooth dongles and ended up finding one that works well with the Toradex Colibri running Windows CE 7.

The problem with bluetooth dongles is that, although they are pretty much cheap, a lot of them have obsolete drivers or do not get recognized by a PC or by the Toradex module.

Basically we followed the information from the Toradex website. At this link, there is some information like "Any USB dongles based on the CSR BlueCore4 chipset (BC04) works with the MS WinCE USB Bluetooth Stack". We recommend that you use one of the indicated bluetooth USB adapters. We are using the DELOCK Bluetooth USB 2.0 Micro Cl2 10m V3.0 EDR. It was impossible to find it in Brazil, so we had to order from Germany. This may be a problem for people in countries like Brazil, since here we only find those obsolete, not-functioning dongles mentioned above.

The installation process was straight forward. Toradex provides the flash installers to add bluetooth support here. We are using Windows CE 7 and the Windows CE 6.0 flash installer works fine. One just has to download it, extract and paste the .cab file to the flash disk on the Toradex module. Then, simply double click it and it will be installed.

After that, the device should be found by your computer or smartphone. You can pair with it, like we did:

Finally, you will find the file "bthpnl", a Bluetooth Manager that was installed on your Toradex module after you've installed the Flash Installer. Double click it and you can scan nearby bluetooth devices and add to a trusted list the ones you want to be communicating with it.

Now you should be ready to go!

IMU Razor 9DoFThis IMU is sold, among other stores, by Sparkfun in the U.S.A. (https://www.sparkfun.com/products/10736). It features 3 sensors: an ITG-3200(MEMS triple-axis gyro), ADXL345 (triple-axis accelerometer), and HMC5883L (triple-axis magnetometer). Thus we have 9 Degrees of Freedom of measurement. The IMU has also a on-board ATmega328 for data processing. Thus, this is a very convenient IMU and is somehow a low-cost product. One can find a tutorial online on how to program and implement it on a project at https://github.com/ptrbrtz/razor-9dof-ahrs/wiki/Tutorial).

Besides, there is an available Firmware for the Razor 9DoF available at https://github.com/ptrbrtz/razor-9dof-ahrs through which is also possible to callibrate the sensors and select the way of outputting data. This firmware is very satisfactory and provides the user with a variety of programming and setup options such as callibrating sensor by sensor, choosing the data output between text and binary format. It also compensates the YAW reading in order to avoid a initial drifting, common in simpler IMUs. The Firmware is coded into Arduino language (since the onboard processor is an Arduino-like). However, there are also sample codes for Processing (animation on the screen), C++ (for data aquisition - only works on Linux, Unix and MacOSx operation systems) and Android (for communication wirelessly over Bluetooth with an Android device). Moreove, this IMU outputs data over a serial interface. Hence, it is also possible to hook up a Bluetooth modem to it in order to make the data transfer wireless. The modem we are using is a Bluetooth Mate Silver.

When not using the IMU wirelessly nor programming it, the board can be connected to the computer either using a FTDI cable (to connect to the computer’s USB port) or using a FTDI board. We decided to use a FTDI board. It’s important to say that there is a 5V and a 3.3V version of the FTDI cable/board. This IMU requires a 3.3V one.

Furthermore, a LiPo battery is also used in order to power the whole thing. To make things easier, we chose a rechargeable LiPo battery and a LiPo battery USB chager. The whole setup is show below.

A key factor in this project is the kinematics of the human arm. We use it in order to convert the angles measured by the IMUs to the position of the user's wrist. This position is mapped to the robot's working space and used as reference of position for the robots end-effector. In this manner, WITRA is applicable to any kind of robot (cartesian, cylindrical, spherical, SCARA, antropomorphic) for which we can calculate its inverse kinematics.

We deal with a simplified geometry, that is, considering only the main 7 degrees of freedom (DoF) and ignoring the movement of the scapula and forearm pronation. Thus, the DoFs considered are: 3 for the shoulder, which acts as a spherical joint; 1 for the elbow; and 3 for the wrist, also a spherical joint.

Therefore, in this text we calculate the direct kinematics of the human arm. Our goal is to, given the rotations of each joint of the human arm, calculate a trasnformation matrix that contains information about the position of the wrist in cartesian coordinates, as well as the spacial orientation of the hand. The transformation matrix we want to develop has the form of

Each rotation (DoF) at the human arm is represented by a 4x4 matrix Ai, with i = 1, ..., 7. Furthermore, our reference frame (x0,y0,z0) is placed at the shoulder. Hence, the 7 matrices are

The resulting matrix is given by

This matrix, Awrist has the same strucutre of the transformation matrix shown in (1), where

Therefore, it is possible to calculate the position of the human’s wrist in terms of cartesian coordinates, given the rotation in each joint. The rotation of each joint can be measured using inertial sensors (IMUs).

You can find more details regarding direct kinematics in N. I. Badler, D. Tolani. Real-Time Inverse Kinematics of the Human Arm. Unversity of Pennsylvania Scholarly Commons, 1999. and J. J. Craig. Introduction to Robotics - Mechanics and Control. Pearson Prentice Hall, 2005.

Toradex + MATLAB: IMU data acquisitionIn the video you can see the data from the IMU's spacial orientation being plotted in MATLAB. Previously the intention was to send data from the IMU to Colibri over bluetooth. However due to problems using the Windows CE bluetooth stack, the communication was switched to serial interface.

The IMU Razor 9DoF is connected to COM3 on X16 pin group. To read the data on this COM port, I followed the tutorial present at the developer section of Toradex website at http://developer.toradex.com/knowledge-base/how-to-use-uart

Basically one needs to configure the COM port and the use

ReadFile(portHandle, receiveBuffer, 23, &noOfBytesRead, NULL);

to read from it and store the read data in receiveBuffer.

After manipulation of the data contained in this buffer, a string is sent over TCP/IP and the module as client

sprintf(sendBuffer, "%.2f,%.2f,%.2f\n",angles[0],angles[1],angles[2]);

retVal_S = send(commSocket, sendBuffer, strlen(sendBuffer), 0);

On MATLAB side, a TCP/IP server is created and the received data is read and separated into the indivudal angle values. A code example is shown below:

t = tcpip('192.168.27.101', 23, 'NetworkRole', 'server');

fopen(t);

while ~KEY_IS_PRESSED

data = fscanf(t');

data = char(data);

[yaw,pitch,roll] = strread(data,'%f%f%f','delimiter',',');

flushinput(t);

pause(0.2);

end

fclose(t);

Along with the above code, the plot() function is used to plot the values of yaw, pitch and roll continuously on the screen.

Here we have a picture of the code working:

In order to control the position of the SCARA robot in our project, we need to develop the Inverse Kinematics of it so that it's possible to calculate each degree of freedom (DoF) in terms of the desired (x,y,z) position. The SCARA robot has four DoFs, namely, three rotational and one prismatic, as seen in the figure below:

The robot has rotational and prismatic constraints: theta1 can go from 0 to 200 degrees in anti-clockwise direction; theta2 goes from 0 to 135 degrees in clockwise direction; d3 is limited from 0 to 200 milimiters downwards and theta4 goes from -180 to 180 degrees.

We want, therefore, to calculate the values of theta1, theta2 and d3 based on the values of (x,y,z) at the end effector. The value of theta4 is not regarded here, since it will be dealt separately in the furute as pure orientation of the tool, not contributing for the position of the end-effector. It's quite simple to model the inverse kinematics of this robot. When seen from above, it is like a double-pendulum, as shown in the next figure:

Thus, one can calculate the values of theta1 and theta2 from the values of x and y using the law of cosines:

Furthermore:

Applying the law of cosines to OAB once more:

and, finally,

Note that there is also another solution for theta1 = beta - phi and theta2' = - theta2. However this solution does not agree with the robot rotational constraints.

Finally, the value of d3 is directly derived from the value of the z-coordinate. That is: d3 = z.

Hence we complete the inverse kinematics of the SCARA robot.

Creating a 3D Animation of the SCARA Robot in MATLABOn this video we show how we are using the Robotics Toolbox available here with MATLAB to generate a 3D animation of the SCARA 7545 robot from IBM. This is the robot used in this project. In the video Vinicius discusses how to use the toolbox, some of its functions and the running animation. The code uses the inverse kinematics described on the previous section.

Here is a snapshot of the program working:

A Big Difference Between Work Envelopes

This is nice challenge to WITRA because since the human arm and SCARA have different kinematics and work envelope geometries, it is not an easy task to accomplish. Generally speaking, the work envelope of the human arm is a section of a sphere. That is, we can reach, with our hand, a great variety of positions in space that are limited by a sphercial-like boundary. On the other hand, SCARA's work envelope, when seen from above is like shown on the next figures:

Thus, one can notice how different from our arm's work envelope this is.

Mapping From One to the Other

To procede with the geometrical mapping, a decision was made: make it as simpler as possible. Therefore the "trick" is to find sections of each work envelope that have similar geometries. Regarding the human arm, when seen from above, in a horizontal plane, the user can reach a space that resembles a section of a circular ring, as shown in the figure below:

Picture source: http://www.theergonomicscenter.com/portal/workstation.shtml

In this manner, we can consider a feasible work envelope for the human arm, when seen from above, a section of a circular ring with 140 degrees of amplitude. Similarly, there is a section of SCARA's work envelope that has the same geometry. This space is reached, basically, when theta1 is rotated from 20 degrees to 160 degrees. The next figure shows how each final work envelope looks like.

Now it is easy to map from one to the other by simply applying a linear relationship between (x,y) coordinates from the user arm's work envelope to SCARA's one. Finally, the z position is simpler to deal with and linearly mapped from the z-coordinate of the user's arm to d3 ou z-coordinate from SCARA.

In conclusion, we now have a way to translate the positions that the user can reach to goal positions on the robot's end-effector.

Teleoperation Test #1 - Using 1 IMU and Colibri with 3D AnimationAfter we showed, on the previous sections, how to acquire IMU data on Colibri and send information over TCP/IP to MATLAB, as well as how to create a 3D animation using the Robotics Toolbox, now WITRA performs its first Teleoperation test.

An IMU is positioned at the user's hand and its spatial orientation is converted to (x,y,z) position of the hand in terms of the coordinates of the wrist. Thus the hand acts like a rod fixed on a ball-joint. This position is our reference position.

Thus we can find:

x = r*cos(phi)*cos(theta)

y = r*cos(phi)*sin(theta)

z = -r*sin(theta)

These coordinates are mapped into (x,y,z) goal position for SCARA's end-effector. On the code side, as already shown updates 6 and 7, the inverse kinematics is performed for SCARA and the 3-dimensional representation of the robot can be animated.

Below is a video documenting the test:

Impedance Control Implementation on SCARAA Quick Video Showing SCARA with Impedance Control

The Purpose of an Interaction Control

There are numerous ways to control industrial robot manipulators. However, many of them fail in the sense that they do not provide surrounding objects and people with enough safety. Thus, although the goal of WITRA is to make it possible for the user to teleoperate a robot arm and, therefore, not share the same environment as the robot, we still want to guarantee that the objects on the robot’s surroundings do not get damaged by robot operation. Impedance control arises as a good option in this case, once it deals with controlling the interaction between the robot and its environment.

Impedance control consists of controlling the dynamic relationship between force and position, instead of controlling only force or position. This way, it is possible to enable the robot to behave as a second-order system: mass-spring-damper. These characteristics can be modified as needed.

Impedance control was first presented by Hogan in 1985 and according to his work, the robot manipulator and its environment can be modelled as a impedance-admittance relationship, with the robot being an impedance and the environment, an admittance.

| | Impedance | Admittance |

| Input | Displacement or velocity | Forces |

| Output | Forces | Displacement or velocities |

That is, we set a goal position for the robot and if it is off this position, it will produce on a force on what is preventing it to go to that position, namely, the environment.

Another advantage of Impedance Control is that it can be used to perform free movements as well as movements with contact between the robot and the environment. Unlike other control methods, it is not necessary to switch the controller when contact is performed and thus instabilities are avoided.

Hence, impedance control is capable of tolerating collisions without loosing stability. This is a very important characteristic in WITRA’s application field, since the environment is, in most cases, non-structured and unpredictable (e.g.: In deep-ocean maintenance).

ImplementationBasically, our goal is to have the robot follow a setpoint position, as well as have a desired velocity of the TCP (Tool Center Point). We model the TCP’s balance point equations with the environment. Given that we want to control the dynamic relationship between the manipulator and the environment, the equations for spring and damper forces, are, respectively:

Where K and B are the desired spring stiffness and damping. X0 and V0 are the desired position and desired velocity, respectively, and X and V are the actual position and velocity.

The position X is related to the joint angles through the forward kinematics and, thus, is a function of the joint variables:

X = L(Ө)

where Ө is a vector joint variables. Similarly, V can be related to the joint velocities thought the Jacobian Matrix:

V = J(Ө) Ө’

Where Ө’ is the first derivative of Ө. Finally, the joint torques are related to the force at the TCP though

There this Jacobian matrix for this last equation is the force-torque Jacobian.

Hence, it is possible to calculate the joint torques by knowing the values of K, B and the desired position and velocity at every instant.

Finally, the impedance behavior is shown on the next equation:

Where F_int are the interaction forces between the manipulator and the environment. These forces are measured using a six-axis force sensor at the robot’s end-effector.

Besides that, we can show the equation that models the manipulator dynamics in terms of torque, accounting for the interaction torque (tau_int):

On the right-hand side of the equation, the first term is the inertial term, the second, the torque that depends Coriolis forces, the third accounts for torques that depend on velocity, such as friction and the last term is the torque related exclusively to position, such as gravity.

The next figure shows a block-diagram of the implementation. With this control implementation, it is possible to calculate the desired torques at the joints of the manipulator.

There are many issues which can arise from the method previously shown. One of them is that torque control can lead to instabilities of the robot, due to a few factors, among them the mechanical transmission that inputs friction factors that cannot be neglected. Other ways of implementing Impedance Control can be explored, such as the position-based one.

On this implementation, we try to obtain an equation that calculates the new position of the manipulator based on the desired dynamic characteristics, performing a position control of the joints. The next equation deals with the displacement X_a that is used to correct the position setpoint. Using the interface force measured by the force sensor:

Furthermore, knowing the desired position X_v, the final position is calculated by

X_c = X_v – X_a

The final block-diagram is given by:

On this video you can see how we send information over TCP/IP from a PC to SCARA's server with the end-effector's cartesian position information.

On the robot side, the server expects a setpoint information continuously. This setpoint is set as the setpoint for the Impedance Controller.

The server expects a string of the form:

{"Command":"Impedance","X":0.2442,"Y":0.5075,"Z":-0.0785}

which contais four pieces of information: the mode of control, x-coordinate, y-coordinate and z-coordinate, on this order, for the robot's end-effector.

We'are using Hercules [1] to create a TCP Client and send strings with information to the robot's server.

This way we can wirelessly set the goal position for SCARA's end-effector continuously. The next step is to integrate this with WITRA and make the wearable interface send this piece of information and remotely operate the robot.

Previous Direct Kinematics Method

Earlier we showed in details how to perform the direct kinematics of the human arm. That method, however is very useful when we have the rotations in each joint calculated in a chain. That is, when, for example, the elbow rotation is calculated relatively to the upper-arm. This would be the case if a potentiometer or encoder were attached to the user's elbow. To illustrate this fact, recall the figure shown previously, that has all the seven rorational axes of the human arm:

How We Measure Angles with the IMUs

The final version of WITRA has 3 IMUs positioned on specific positions at the human arm: one at the upper-arm, one at the forearm and one at the hand, as shown below:

Thus, each IMU can measured the orientation its respective section of the arm. Nevertheless, a IMU measures absolute values of Yaw, Pitch and Roll, that is, in terms of a world reference.

Now, let's take the example of the angle measure at the elbow. In this sense, we would not have a direct measure for the elbow rotation, for example, as we would have when using a encoder/potentiometer. This issue is also valid for each DoF at the wrist. Therefore we have two options:

- Use geometric relations to compare the Yaw, Pitch and Roll values from the two IMUs (at the upper-arm and forearm) to develop an expression for the value of the elbow rotation;

- Use a simpler geometrical approach that takes as input for the direct kinematics the absolute orientation of each section of the arm.

A Simpler Way of Analisys

The second option is more accurate and less calculation-consuming for our application. Calculating the Direct Kinematics at each iteration of the program (around every 50 miliseconds) is crucial and plays a very important role in translating the user's arm movement to robot's trajectory. Hence, a geometrical approach is used. The basic and only-one concept used here is the representation of any position in terms of spherical coordinates.

Spherical coordinates are represented by a radius of sphere, r, and two angles: theta and phi. The first is measured on the horizontal x-y plane and the second, on a vertical plane formed by the z-axis and r.

Now, consider that the upper-arm is treated of r on a spherical coordinate system that has it's origin at the user's shoulder. Thus we can find its other end cartesian position by doing a quick calculation:

On the same manner, it is possible to now attach a coordinate frame to the users upper-arm end (or elbow, beginning of forearm) and procede with the same calculation method. This new frame is non-inertial. However it has only translation, no rotation. Thus the position of the wrist (end of forearm) in terms of the elbow can be calculated as

Finally, we attach a frame to the wirst and calculate the position of the center of the hand in terms of the wrist:

Now we have the coordinates of each section of the human arm in terms of a coordinate frame with origin at the base of each respective section. That is, we have the (x,y,z) coordinates of the hand in terms of the position of the wrist; the coordinates of the wrist in terms of the position of the elbow; and the coordinates of the elbow in terms of the position of the shoulder, taken as the reference. Finally, in order to find the position of the hand in terms of the reference at the shoulder, we can simply add the intermediate coordinates calculated above and thus have:

A graphical represetation of what as said above is shown next:

We thus complete the mathematical derivation of the direct kinematics of the human arm. This simple calculation is applied on our code to calculate the position of the human hand.

Teleoperation Test #2 - Using 2 IMUs and Colibri with 3D Animation in MATLABHere is a video to show our second teleoperation test. We want/need to test WITRA with a 3D animation before we move on to the actual SCARA robot due to safety. We want to make sure that the motion capture works properly and is reliable. On the video you can see the performance. This system works based on what we have already show:

- How we calculate the inverse kinematics of SCARA;

- How to create a 3D animation in MATLAB and show to continuously update it in real-time. The code and implementation is found there;

- How the mapping from the user's work envelope to the robot's one is made. It is quite simple and that enables us to have a simpler code, by using a linear mapping;

- The first teleoperation test performed. By that time we were using only one IMU to find the position of the user's hand and with that information reset, continuously, the goal position of the Tool Center Point (TCP) on SCARA

- The human arm direct kinematics is explained in details.

For this teleoperation test we have:

- Two IMUs positioned at the user's arm: one to measure the orientation of the upper-arm and another to measure the orientation of the forearm;

- These IMUs are connected to Colibri + Iris over the Serial interface;

- Data is received and the direct kinematics of the human arm is calculated;

- The workspace of the user is mapped into the workspace of the robot;

- The goal position of the robot's TCP is sent over TCP/IP in real-time to the PC running MATLAB. For this purpose we use a WiFi dongle connected to the USB port

- The goal position is received on MATLAB using the tcpip() and fread() functions

- The inverse kinematics of SCARA is calculated and the animation is plot on the screen

The schematic of the process is shown below:

Video: Teleoperating SCARA using WITRA with 2 IMUs

What we can show you now!

Now we can show you in action the purpose of developing WITRA: to create a simple and intuitive interface to teleoeperate ANY robot manipulator. We wanted to make it as simple as possible, so that no "rocket science" knowledge is required. Therefore, the interface we have now is quite easy to understand:

There are 2 IMUs positioned at the user's arm. One goes on the upper-arm to capture the movements of this section, and the other goes on the forearm as does the same job for this section. The data collected from these two sensors (Yaw, Pitch and Roll) is sent over UART to Colibri. On Colibri's code, this data is processed and the direct kinematics of the human arm is calculated. This enables us to find the position of the user's wrist every 40 miliseconds. This information is send to SCARA's server in the form of

{"Command":"Impedance","X":0.2442,"Y":0.5075,"Z":-0.0785}

The command "Impedance" sets the control mode to Impedance Control. On the robot's code, the inverse kinematics is calculated, finding then the values of position for each joint. Notice that SCARA has 4 DoFs, but only the first 3 are responsible for positioning the end-effector.

The visual feedback closes the loop and the user changes the the robot's position continuously.

How it looks likeAnd this is a picutre of WITRA with two IMUs. The next step is to add the third and last IMU at the user's hand, in order to get a finer movement. New videos and updates coming soon!

WITRA has come to its final step with the addition of the third (and last) IMU. Now we have 3 IMUs:

- One at the upper-arm to capture the shoulder rotations;

- One at the forearm to capture the elbow rotations;

- One at the hand to capture the wrist rotations;

So far, we had only 2 IMUs on WITRA, which gave us the kinematic calculation of the position of the wrist of the user. Now, with the third IMU at the hand, we can calculate the position of the center of the hand of the user. Since the wrist behaves as a ball-joint, a much finer teleoperation method is achieved.

Now that we showed you how the connections on WITRA are made, we hope to post soon a video showing the interface actually working. For now, you can watch below a brief video regarding 3rd IMU addition:

Validating WITRA motion capture with QualisysQualisys

As a motion capture system, or mocap, Qualisys consists of a set of motion capture cameras that can record information of spatial position of light-reflecting markers. Qualisys is a high-precision system and is widely known. We have used this system to validate the data acquisition system on WITRA.

On the video below, extracted from youtube, you can see the system working. On the video you can see 12 cameras. We've only used 5, which already gives us great motion data. Each camera, after proper calibration of its measurement volume, captures the image of each reflecting marker. The image of the markers is extracted from the rest of the image. Thus, identifying each marker, Qualisys records the motion data in three coordinates.

Double-system Motion Capture

WITRA uses three IMUs (Inertial Measurement Unit to capture the motion of the human arm. We've simultaneously captured the arm's movement with WITRA and Qualisys (using four markers: one at the shoulder, one at the elbow, one at the wrist and one at the hand). We wanted to guarantee that the motion capture system we've created on WITRA is realiable. For this purpose we compared the data from WITRA and from Qualisys in a graphical manner.

On WITRA's side, the information of each IMU is used to calculate the direct kinematics of the human arm. We can, thus, calculate the spatial position of each arm joint : shoulder, elbow, wrist plus the hand. On the side of Qualisys, each marker's motion is captured, giving us the (x,y,z) coordinates, over time, of each marker, as you can see below:

Comparison

With these two sets of data, we used MATLAB to create animations of the human arm over time. The red link shows the upper arm, the green link shows the forearm and the blue link shows the hand. On the left side you can see the animation created from WITRA's data and on the right side, from Qualisys's data. Below, you see the picture of Vinicius moving his arm.

WITRA QUALISYS

WITRA is now validated as a reliable motion capture system.

3D-printed IMU casesIn order to finalize the project, it was importante to give it a better look. So we've printed cases for the IMUs using a 3D printer. This helps protect the sensors, as well as makes the attachment to the user's arm better.

We, therefore, developed a very simple 3D model in SolidWorks, as you can see below. The model has mouting pins to place the IMUs and four holes to attach the flexible straps that hold the whole thing still on the arm of the user.

The model was then saved in .STL format. This is the format which is read by the 3D-printer software. After importing the .STL file on the software of the printer, three copies of the case were sent to print (one for each IMU). The final result is seen next.

Next you can see a few pictures of the IMUs mounted on the 3D printed cases. We also made tags for each case, so that we can easily identify each specific IMU (and show to everyone our supporting partners =) )

What WITRA is now

We got to our last version of WITRA. After several improvements and updates, WITRA has now a final form. It consists of:

- Three inertial sensors (IMUs)

- One on/off button

- One 9V battery

- Toradex Colibri + Iris

- One WiFi USB adapter

Each inertial sensor is positioned at one important section of the human arm + hand. Thus, each inertial sensor is used to measure the spatial orientation of one section: upper-arm, forearm and hand, since the inertial sensor outputs its value of YAW, PITCH and ROLL. That means that the angles of rotation of each joint of the human arm (shoulder, elbow, wrist) are calculated. The positioning of the sensors is shown below:

WITRA's schematic

The next figure shows how each of the componets is connected with one another. We use the X16 pin group to connect the three IMUs over serial interface. The on/off button is connected to GPIO SODIMM 133, which reads high or low. The power supply can be a wall adaptor or the 9V battery used here for practicity. On Iris's usb port, a WiFi USB Adapter is connected, so that data can be sent wirelessly to the robot's server and control unit. The latter consists of a PC in which SCARA's code runs, as well as a control unit, to which 4 EPOS [1] are connected, each one controling a SCARA motor (DoF) over a CAN network.

Finally, you can see in details next which pins are user from the X16 connector on Iris. Since there is only one 3.3V pin, all the IMUs are connected to this pin, as well as to the adjacent GND pin.

To sum up, WITRA has a very simple concept and, thus, consists of a quite simple interface. Feel free to ask further questions about the hardware, by contacting me.

How WITRA's code worksKey-points of WITRA's code

Developing WITRA required a multi-threading implementation, in order to meet the real-time constraints. For that purpose we had to learn and implement a program that would perform the following tasks:

- Read from 3 COM ports continuously;

- Check for interrupt on a GPIO pin;

- Parse incoming data;

- Calculate the direct kinematics of the human arm;

- Map the user's reachable space to the robot's one;

- Format an outgoing string to be sent to a TCP server to which the robot is connected;

- Send this data over TCP/IP;

- Save a datalog containing teleoperation information in order to be possible to repeat those movements again and again;

- Do all that in real-time.

The platform used was Windows Embedded Compact 7, and the programming environment, Visual Studio 2008. Before starting coding, a few steps were followed:

- Prepare the programming environment, following the instructions on [1];

- Create a new VC++ project in visual studio [2];

- Install WiFi drivers for Ambicom Wifi USB adapter.

Structure

With that done, we could start coding. The basic structure of the code is shown below:

That is, each of the three IMUs is connected to a COM port through which they send serial data to the Colibri module. There is also a interrupt event triggered by the gripper button. The workerThread(), called MainThread() in our program is responsible for doing all the calculations. It receives data from the three IMUs, parses the strings, filters noise and then calculates the direct kinematics of the human arm. After that, the user's reachable space is mapped to the robot's work space. With the four variables calculated (px, py, pz, gripper), a string is formatted and sent to the TCP server on the robot's side. A statechart of the code is shown next, in order to better illustrate how the data flow happens.

WITRA is versatile

Since WITRA is a motion capture system in its essence, we wouldn't develop it only for the purpose of working with robots. Therefore, we've implemented a message formatting way that can be read and interpreted by many different systems.

The string that is sent over TCP/IP to a TCP server is formatted using a Rapidjson standard [8]. The send string has the following form:

"{\"Command\":\"Impedance\",\"X\":%.4lf,\"Y\":%.4lf,\"Z\":%.4lf,\"Gripper\":%d}"

Or, in a text form (example values):

{"Command":"Impedance","X":0.6500,"Y":0.0000,"Z":0.0000,"Gripper":1}"

That means that the control mode is set to Impedance control, X, Y and Z position of the robot's end-effector is set to (0.6500,0,0) and the gripper is closed.

As one can notice, the string can be easily changed to send any parameter desired by the user/developer. Furthermore, at the other end, the code has only to have rapidjson implemented (you can get it from [8]) in order to interpret and parse the string and retrieve useful information.

Hence, WITRA could be not only used with robot arms, but also with other applications, such as:

- Drones - controlling their flight position by moving the arm

- Mouses - control the pointer on the screen

- PowerPoint presentations - have you ever thought that the movie "Minority Report" could become reality?

- Remote control cars

- 3D mouses for CAD

- Gaming

- Motion capture and analisys in physiotherapy sessions.

A whole range of possibilities can be achieved and it is only up to the developer to choose and implement them.

A guided explanation

The next video shows in details, line by line, how the final code works. It is quite long (32 minutes), but whatever you need to understand about the code is present there. I hope you enjoy it!

Where can I find the code?

The final code can be found at https://github.com/VBazan/WITRAv11_FINAL. This repository contains the include files provided by Toradex (they have to be downloaded for the projects to work), as well as two VS2008 projects:

- WITRAv11-FINAL

- REPEAT_TELEOPERATION

The first one is the actual code from WITRA. The second is an application that reads from the saved datalog files and repeats the last saved teleoperation movements.

References

[1] - How to setup development environment for WinCE and VS2008

[2] - Create a new VC++ project

[3] - How to include libraries to VC++ project in VS2008

[4] - How to use Interrupt library

[5] - How to use UART

[6] - Wifi

[7] - How to send and receive data over Ethernet

[8] - Rapidjson

Our final videoFirst of all, I would like to thank everyone who has supported, helped, followed the project on Toradex Challenge. Each one of you is part of it! You can see below what could be accomplished up to this point of the challenge. I hope you like the video. It is divided in two main parts: the first is a explanatory part giving details about the system and development; the second shows all the tests performed and the results reached.

By participating on the Toradex Challenge, a new horizon was created to WITRA. The initial idea of developing a wearable interface that would make human-robot interaction more natural arose in January of 2013 when I, Vinicius Bazan, was studying as a visitor student at the Polytechnic Institute of New York University. At that time, I was researching at the Mechatronics Lab and my professor, Dr. Vikram Kapila, came to me with the idea of a jacket that someone could wear and teleoperate a robot arm. We developed that idea further and created the first version of a wearable interface. However, at that point I was using Arduino microcontrollers to get data from the sensors and a PC with MATLAB to control a very small robot arm.

Back to Brazil in August 2013, I started thinking of my senior design project and talked to Prof. Dr. Glauco Caurin, who is an expert in Manipulation Robotics and Rehabilitation Robotics. He advised me to start using a Toradex computer in module and create a newer, better version of my wearable interface, but now with a dedicated, embedded system. So that new development began. With new and better sensors and Toradex Colibri + Iris in hands, a new range of possibilities became real.

It was quite of a journey to learn how to implement the system and to develop this wearable interface from scratch. It was, though, a breathtaking experience. I, personally, could not imagine that I would learn so much about embedded real-time systems by digging into this project.

At this point, I can say that WITRA has become a useful and feasible system that can be applied in many situations, not only in robot teleoperation. As stated on [Update 19] How WITRA's code works, the way WITRA sends data is very transparent and thus, it can be easily readapted to work with different other systems, such as gaming, controlling mouses and PowerPoint presentations, motion capture and analisys for physiotherapy sessions, drone remote control, among many others.

A few important resultsOur other 19 updates show in details every result and goal that was achieved. I'd just like to present two more important results achieved:

- WITRA has a positioning precision of the order of millimeters;

- The time elapsed, in one iteration, from getting new values from the IMUs until the positioning message being received by the robot's server is around 42 miliseconds. That means that around 24 times per second a new goal position is sent to the robot and thus the motion is very fluid and in real-time. This provides the user with a very natural and intuitive experience. By the way, just for comparison, 24 times per second is also a stablished speed for frame change in movies. That is, a lot of videos we see today are in 24 frames per second, and the frame transition is very fluid and not perceptible.

There are so many applications that can be further developed that it is impossible to say that WITRA's development came to an end. Therefore, together with Prof. Glauco Caurin, I plan to continue the development of WITRA, in order to make it even more realiable, robust and precise. We intend to create new applications, in special we intend to use WITRA in rehabilitation robotics and physiotherapy. Imagine a patient that has any kind of problem on his/her arm due to an accident or stroke. This patient has to undertake exams that assess his/her moving capabilities. WITRA can then be used to record data about arm movement, that can be later analysed and compared to other patients. We could even think of an application in which the same patient wears an exo-skeleton and the therapist wears WITRA. Then, the therapist moves his/her arm in a desired way to stimulate the patient's arm with the exo-skeleton, which will repeat the movements of the therapist's arm.

I truly believe that WITRA can become an interface that not only shows research results, but that can indeed be used in daily life to help people overcome difficulties in their lives. For that to happen, just a little bit of will power, support and imagination is needed.

Final acknowledgementsFinally, I would like to thank all the institutions and people that helped me throughout the project development and competition. My special thanks to:

- Toradex and Toradex Challenge's partners and sponsors for the opportunity, resources and space for developing something that could really improve our life experiences;

- The Mechatronics Laboratory at the University of São Paulo (EESC) for the facilities and support;

- All the faculty and members from the University of São Paulo;

- Professor Dr. Glauco Caurin, who helped me always think out of the box and create innovative solutions;

- My family and friends, specially my parents and Isabela Baldim, without whom I could never move forward;

- My coleagues at NYU-Poly in New York, for the initial idea of a wearable interface and for all the knowledge they provided me with, in special, Prof. Dr. Vikram Kapila, Jared Frank, David Lopez, Valentin Siderskiy and Giancarlo Gramazio;

- The Physiotherapy Laboratory NENEM (Núcleo de Estudos em Neuropediatria e Motricidade) at Universidade Federal de São Carlos for the help with using Qualisys motion capture system to validate WITRA's motion capture;

- All the people that at some point supported me.

Thank you all! As final statement, I would like to say what came to my mind since the beginning of my participation of Toradex Challenge, in June of 2014:

DREAM BIG

MAKE IT SIMPLE

GO WITRA

Vinícius Bazan.

_3u05Tpwasz.png?auto=compress%2Cformat&w=40&h=40&fit=fillmax&bg=fff&dpr=2)

Comments