I have a few Hackster.io projects that I want to improve by combining them.

Combining what I have learned on these projects greatly improves the mobility scooter project. The voice recognition capabilities from the Google AIY project open up many possibilities for improving mobility scooters. Likewise, the Walabot sensor is an improvement over the Infineon radar sensor used in the original mobility scooter project. These same technologies, voice recognition and radar sensors, also apply to various types of forklift trucks and other industrial equipment, but my initial focus will be on mobility scooters.

The robot assistant will use a WiFi hotspot attached to an LTE cellular network, with one RPi for the Google Assistant and another RPi to run Android Things. The combination of these two systems will provide a very good platform for testing robotic applications, eventually using vision processing to help direct an arm with a gripper to do useful tasks.

Why two computers in this project?

The Google Assistant portion of the project has such an important role that we never want the robot to be at a loss for words, or too busy with a mobility task to listen to the user of the robot assistant. So it seems best to separate the Google Assistant from the motion control portion of the project. The fully developed robot assistant will include a camera to guide a manipulator arm. This is a fairly compute-intensive process, which makes the dual-computer design a necessity rather than an option. The Walabot radar sensor API runs well on the Raspbian operating system. The project deadline did not leave time to consider porting the Walabot API to Android Things.

A link to the Cloud

My cell phone will be set up to create a WiFi hotspot for running Google Assistant and providing connections to the Cloud for all the creative robot behaviors I intend to add with the help of Android Things.

There is quite a bit of building to do, but thankfully there are some very good instructions at these two links.

Instructions for Getting Started with Google AIY projects

Instructions for Getting Started with Android Things on RPi

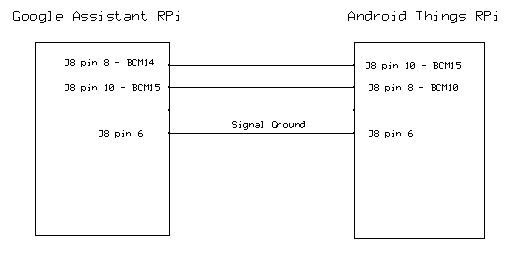

How to interface the two computer systems

I suppose I could use the WiFi network supplied through the cell phone WiFi hotspot to carry the data between the two computers, but two reasons argue against doing that in the short term.

- I don't have much experience writing that type of code and the project deadline is rapidly approaching. Using the WiFi network is definitely worth exploring in the long term, since both computers will be working with the Cloud.

- A UART-based interface between the systems keeps data delivery immediate and a bit more isolated from the rest of the world. It is good to have a backup communications interface in this multi-computer project.
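
As a rough illustration of what could travel over that UART link, here is a minimal sketch of a line-based message frame with a checksum. The frame layout (`$COMMAND,value*CS` followed by a newline), the XOR checksum, and the command name `STEER` are all assumptions made up for this example; they are not taken from the project code.

```python
def checksum(payload: bytes) -> int:
    """XOR of all payload bytes (a simple, hypothetical integrity check)."""
    cs = 0
    for b in payload:
        cs ^= b
    return cs


def encode_frame(command: str, value: int) -> bytes:
    """Build one frame: b'$COMMAND,value*CS\\n', with CS as two hex digits."""
    payload = f"{command},{value}".encode("ascii")
    cs_hex = f"{checksum(payload):02X}".encode("ascii")
    return b"$" + payload + b"*" + cs_hex + b"\n"


def decode_frame(frame: bytes):
    """Return (command, value), or None if the frame is malformed or corrupted."""
    line = frame.strip()
    if not line.startswith(b"$") or b"*" not in line:
        return None
    payload, _, cs_hex = line[1:].rpartition(b"*")
    try:
        # Reject the frame when the received checksum does not match.
        if int(cs_hex.decode("ascii"), 16) != checksum(payload):
            return None
        command, value = payload.decode("ascii").split(",")
        return command, int(value)
    except ValueError:
        # Covers bad hex digits, non-ASCII bytes, and a wrong field count.
        return None


if __name__ == "__main__":
    frame = encode_frame("STEER", -15)
    print(frame)
    print(decode_frame(frame))
```

On the Raspbian side, bytes like these could be sent and received with a serial library such as pyserial (`Serial.write` and `Serial.readline`). The Android Things side would need a matching encoder and decoder.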

Future Work - Robot Behaviors

- Remotely Controlled Security Camera with face recognition

- Keep Track of Object Locations in a Room (Where did I leave my car keys?)

- Voice Commanded motion of the Robot

- All the other cool Google Assistant behaviors

Searching For Good Example Code

To come up to speed quickly on Android Things, I have looked over many sample projects on GitHub. Some of the projects worked better than others. This is not the fault of the writers of the example code, since Android Things, TensorFlow and similar systems have been evolving rapidly. The following projects have been sources of inspiration for this project.

There is a page of sample project links covering a wide range of topics to help a person get started with Android Things.

Sample Projects for Android Things

The Basics of Android Things

I am so good at grabbing example code and messing with it that I tend to be bad at the finer points of setting up Android projects and using Android Studio the way it really should be used. I recommend a starter guide for people like me who at times need extremely basic information on Android Things.

Getting Started with Android Things on RPi 3

Walabot Radar Sensor Developer API

Time did not permit adding Walabot functions to the robot. From prior experience on my other Walabot project, adding Walabot functions is not very difficult.

Walabot Radar Sensor Developer API

Building the Robotic Assistant

One or Two Servos Are Not Enough

From following RPi projects for a little while, I have learned not to expect an RPi to control many servos without an additional servo controller board. So I am including a servo controller board in my project.

I have included a GitHub project showing how to initialize and use the PWM controller board.

GitHub PWM Controller board project

This will allow me to control the speed, steering, camera azimuth and camera elevation. That still leaves 12 channels for other functions, like PWM-controlled headlights, brake lights and turn signals.

Tech Details on a 16 channel PWM controller
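
To make the channel budget concrete, here is a small sketch of how the four functions could be mapped onto a 16-channel board and how a servo angle might be turned into a PWM count. It assumes a 12-bit controller (4096 counts per period), a 50 Hz servo frame, and a 1000 to 2000 microsecond pulse range. These numbers, the channel assignments, and all of the names below are illustrative assumptions, not values from the project or the linked GitHub code.

```python
from enum import IntEnum

TOTAL_CHANNELS = 16     # channels on the controller board
PWM_RESOLUTION = 4096   # assumption: 12-bit counter per PWM period


class Channel(IntEnum):
    """Hypothetical channel assignment for the four functions in use."""
    SPEED = 0
    STEERING = 1
    CAMERA_AZIMUTH = 2
    CAMERA_ELEVATION = 3


def angle_to_pulse_us(angle_deg, min_us=1000.0, max_us=2000.0, max_angle=180.0):
    """Map a servo angle onto a pulse width in microseconds (assumed range)."""
    angle = max(0.0, min(max_angle, angle_deg))
    return min_us + (max_us - min_us) * angle / max_angle


def pulse_to_ticks(pulse_us, freq_hz=50.0):
    """Convert a pulse width into counter ticks for one PWM period."""
    period_us = 1_000_000 / freq_hz
    ticks = round(pulse_us / period_us * PWM_RESOLUTION)
    return max(0, min(PWM_RESOLUTION - 1, ticks))


def free_channels():
    """List the channel numbers not yet assigned to a function."""
    used = {int(c) for c in Channel}
    return [n for n in range(TOTAL_CHANNELS) if n not in used]


if __name__ == "__main__":
    center = pulse_to_ticks(angle_to_pulse_us(90))
    print(f"Steering center on channel {int(Channel.STEERING)}: {center} ticks")
    print(f"Channels still free: {len(free_channels())}")
```

The tick value would then be written to the board over I2C by whatever driver is in use. Real servos vary, so the pulse range should be checked against the servo actually fitted.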

Wrapping things up for the Design Contest Deadline

I have demonstrated sample projects relating to:

- TensorFlow for image processing, and future servo control work.

- Walabot API for spatial awareness for the robotic assistant

- UART communication between RPi boards

- Google Assistant for future voice user interfaces for the robotic assistant

- I2C communications for controlling up to 16 servos

I have assembled a powerful combination of hardware and software systems. My only regret is not having more time to write enough code to demonstrate the possibilities of the system. More work lies ahead for this project as software is developed to add cool robotic behaviors.
