Introduction

On average, each American generates approximately 5 pounds of trash per day. Despite the many initiatives in place to teach good recycling habits, many recyclable items still end up as landfill waste. We set out to address this by developing an intelligent trash bin system that identifies recyclable items in a user’s hand before they are thrown away and directs the user to the correct bin for disposal.

The proposed IntelliBin prototype consists of three trash bins housed in a wooden enclosure; flexible red, white, and green LED strips adhered to the inner lining of the opening of each trash receptacle; a Raspberry Pi 3 Model B; and a Logitech C270 webcam. The Raspberry Pi and webcam perform the image processing required to identify waste objects placed in view of the camera. Once a waste object has been identified, the Raspberry Pi illuminates one of the three LED strips, directing the user to the appropriate trash bin.


Summary of Materials and Costs

| Item | Cost |
| --- | --- |
| Raspberry Pi 3 Model B | $43.25 |
| Logitech C270 Webcam | $25.00 |
| 16GB MicroSD Card | $15.00 |
| 3 x Flexible LED Strips | $15.00 |
| 12V Wall Wart Adapter | $10.00 |
| DC/DC Converter | $3.00 |
| MOSFETs | $3.00 |
| Total | $114.25 |

Background

The Raspbian Operating System

The operating system used to host our project was Raspbian Jessie, a derivative of the Debian Linux distribution. Knowledge of the Linux command shell is necessary to compile and run the C++ programs for this project. This is a modest hurdle, as many basic and advanced commands can be learned from a variety of online sources.

OpenCV

OpenCV is the open-source computer vision API (Application Programming Interface) used to accomplish all of the image processing requirements for this project. It contains all of the functions required to filter the images captured from our webcam, display text and cursors to visually demonstrate image tracking, and determine the onscreen area that each object takes up. This API can be installed on the Raspberry Pi using the instructions from this link:

wiringPi

Interacting with the GPIO pins of the Raspberry Pi without the aid of any pre-existing library requires writing custom drivers and using the appropriate system calls provided by the underlying Linux kernel, which would add another layer of complexity to this project. An alternative is to use a library called WiringPi, which is designed to significantly simplify the way a developer interacts with the GPIO pins on the Raspberry Pi. With this library, every GPIO operation reduces to setting the direction of a pin and, if the pin is designated as an output, the value it takes (HIGH or LOW).
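As a rough illustration of the "set a direction, then set a value" model described above, the following sketch configures three output pins and switches one of them. The pin numbers and the helper names setupLeds and setLed are hypothetical, since the write-up does not list them. The calls wiringPiSetup, pinMode, digitalWrite, and digitalRead are the WiringPi functions; the stand-ins in the #else branch are only there so the sketch also compiles on a machine without the library.

```cpp
#include <cassert>
#include <map>

#ifdef USE_WIRINGPI
#include <wiringPi.h>  // the real library, available on the Raspberry Pi
#else
// Host-side stand-ins (an assumption for illustration only). They mimic the
// WiringPi calls used below and simply record the pin state in a map.
enum { INPUT = 0, OUTPUT = 1 };
enum { LOW = 0, HIGH = 1 };
static std::map<int, int> g_pinMode;
static std::map<int, int> g_pinValue;
inline int wiringPiSetup() { return 0; }
inline void pinMode(int pin, int mode) { g_pinMode[pin] = mode; }
inline void digitalWrite(int pin, int value) { g_pinValue[pin] = value; }
inline int digitalRead(int pin) { return g_pinValue[pin]; }
#endif

// Hypothetical pin assignment in WiringPi numbering; the actual pins used
// by the project are not stated in the text.
const int kLedPins[3] = {0, 1, 2};

// Configure the three LED pins as outputs and drive them LOW.
void setupLeds() {
    wiringPiSetup();
    for (int pin : kLedPins) {
        pinMode(pin, OUTPUT);
        digitalWrite(pin, LOW);
    }
}

// Switch a single LED strip on or off by index (0, 1, or 2).
void setLed(int index, bool on) {
    assert(index >= 0 && index < 3);
    digitalWrite(kLedPins[index], on ? HIGH : LOW);
}
```

On the Raspberry Pi this would be built with -DUSE_WIRINGPI and linked with -lwiringPi; without that flag the stand-ins are used and no hardware is touched.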

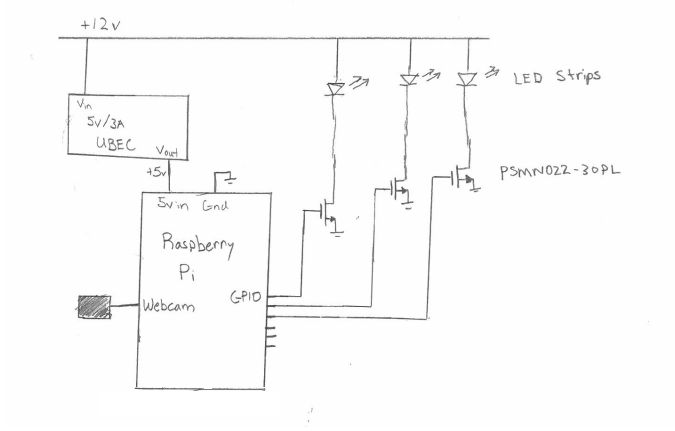

Providing System Power and Amplifying GPIO Signals Using MOSFETs

Another concern for this project was delivering power to the different components. The LED strips chosen for this project require 12 volts, whereas the Raspberry Pi requires only 5 volts. In addition, the maximum output voltage of any GPIO pin on the Raspberry Pi is 3.3 volts. To deliver adequate power to the Raspberry Pi, a DC/DC converter was used to step the 12 volt supply down to 5 volts. Then, to allow each of the 12 volt LED strips to be triggered from the 3.3 volt pins, three PSMN022-30PL NMOS transistors were used as amplifying switches.

IntelliBin is expected to identify certain colors pertaining to different categories of waste (such as landfill, paper, and recyclable containers) and flash the LED strip above the correct waste bin for the item. The two biggest goals were interacting with the Raspberry Pi’s onboard GPIO and implementing the object tracking.

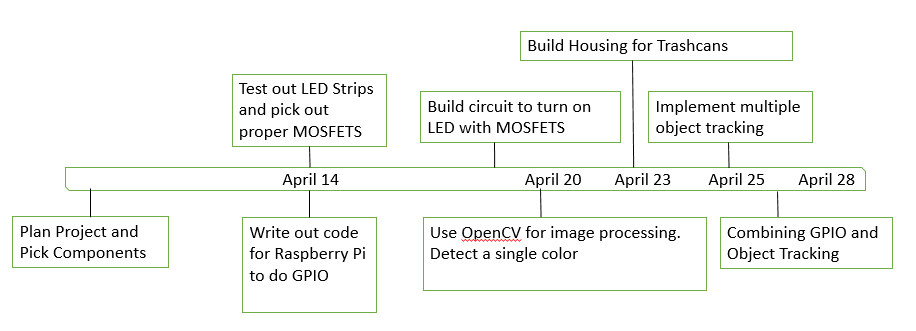

Design

Prior Plans

The initial plan was to use a barcode scanner to identify the objects to be thrown away and a mechanical system to sort the trash. However, this idea proved impractical: observing how individuals dispose of waste makes it apparent that the vast majority of people would prefer to avoid a system that adds an extra step to throwing away trash. Instead, we imagined that a system which automatically identifies waste objects brought near the bin would be more appealing, as it would involve minimal user interaction with the trash bin itself and would give the impression of effortlessness. This approach has multiple benefits over the former one, such as requiring less maintenance, since there are no mechanical systems, and limiting the spread of bacteria and germs, since the user is not required to physically touch the system for it to work.

Choosing the Appropriate Color Space for Image Processing

BGR (Blue, Green, Red) is one of the most commonly known color spaces, as it is used in nearly all modern devices to display images in their “true color” form. However, the BGR values of a given pixel depend heavily on the lighting of the captured image. Since the IntelliBin was meant to be placed in environments with many moving objects (i.e. people) and items of different colors, BGR was not a reliable color space for this project. For this reason, and to allow easy, accurate distinction between colors, the image processing in this project operated in the HSV (Hue, Saturation, Value) color space.
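To make the lighting argument concrete, here is a minimal sketch of a single-pixel BGR to HSV conversion written with the standard library only. It is not the project's code, which relies on OpenCV for this step. The struct Hsv and the function bgrToHsv are names invented for this example, and the output scaling (hue 0 to 179, saturation and value 0 to 255) follows the convention OpenCV documents for 8-bit images.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Illustrative type: h in 0..179, s and v in 0..255 (8-bit HSV scaling).
struct Hsv { int h; int s; int v; };

// Convert one 8-bit BGR pixel to HSV.
Hsv bgrToHsv(std::uint8_t b, std::uint8_t g, std::uint8_t r) {
    const double bf = b / 255.0, gf = g / 255.0, rf = r / 255.0;
    const double maxc = std::max({bf, gf, rf});
    const double minc = std::min({bf, gf, rf});
    const double delta = maxc - minc;

    double hue = 0.0;  // degrees in 0..360; stays 0 for grey pixels
    if (delta > 0.0) {
        if (maxc == rf)      hue = 60.0 * (gf - bf) / delta;
        else if (maxc == gf) hue = 120.0 + 60.0 * (bf - rf) / delta;
        else                 hue = 240.0 + 60.0 * (rf - gf) / delta;
        if (hue < 0.0) hue += 360.0;
    }
    const double sat = (maxc > 0.0) ? delta / maxc : 0.0;

    Hsv out;
    out.h = static_cast<int>(std::lround(hue / 2.0)) % 180;  // halve to fit 8 bits
    out.s = static_cast<int>(std::lround(sat * 255.0));
    out.v = static_cast<int>(std::lround(maxc * 255.0));
    assert(out.h >= 0 && out.h < 180);
    return out;
}
```

For example, a bright orange pixel (B=0, G=128, R=255) and the same orange at half brightness (B=0, G=64, R=128) have very different BGR values but map to the same hue; only the value channel changes. That is the property that makes a hue range a more stable test than a BGR range under varying light.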

Addressing the Issue of Background Noise

Background noise was one of the largest concerns surrounding this project. The environment surveyed by the onboard camera contained many moving objects (i.e. people) and objects of multiple colors. To ensure that the project operated correctly, the colors the IntelliBin was programmed to detect were chosen to be highly distinguishable from the background. We then chose appropriate minimum and maximum H, S, and V values for the color corresponding to each of our objects (orange for paper, purple for containers, and red for landfill), filtered each image by checking for values between the predetermined minimum and maximum HSV values, and output the result to a binary (threshold) matrix.
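The range check described above can be sketched without OpenCV as follows. The names HsvPixel, HsvRange, and thresholdMask are invented for this example, and the orange limits in kOrangeRange are placeholder values, not the thresholds the project actually used. The 255/0 output with inclusive bounds mirrors how OpenCV's inRange() fills its mask. Note that this simple form does not handle a hue range that wraps around zero, which a red filter may need.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct HsvPixel { int h; int s; int v; };
struct HsvRange { HsvPixel lo; HsvPixel hi; };

// Placeholder limits for "orange"; the project's real values are not given.
const HsvRange kOrangeRange = {{5, 100, 100}, {25, 255, 255}};

// True when every channel lies within the inclusive [lo, hi] bounds.
bool inHsvRange(const HsvPixel& p, const HsvRange& r) {
    return p.h >= r.lo.h && p.h <= r.hi.h &&
           p.s >= r.lo.s && p.s <= r.hi.s &&
           p.v >= r.lo.v && p.v <= r.hi.v;
}

// Build a binary (threshold) matrix: 255 where the pixel is in range, else 0.
std::vector<std::vector<int>> thresholdMask(
        const std::vector<std::vector<HsvPixel>>& image, const HsvRange& r) {
    assert(r.lo.h <= r.hi.h);  // hue wrap-around is not handled in this sketch
    std::vector<std::vector<int>> mask(image.size());
    for (std::size_t y = 0; y < image.size(); ++y) {
        mask[y].reserve(image[y].size());
        for (const HsvPixel& p : image[y]) {
            mask[y].push_back(inHsvRange(p, r) ? 255 : 0);
        }
    }
    return mask;
}
```

Running one such filter per color (paper, container, landfill) yields one binary matrix per waste category.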

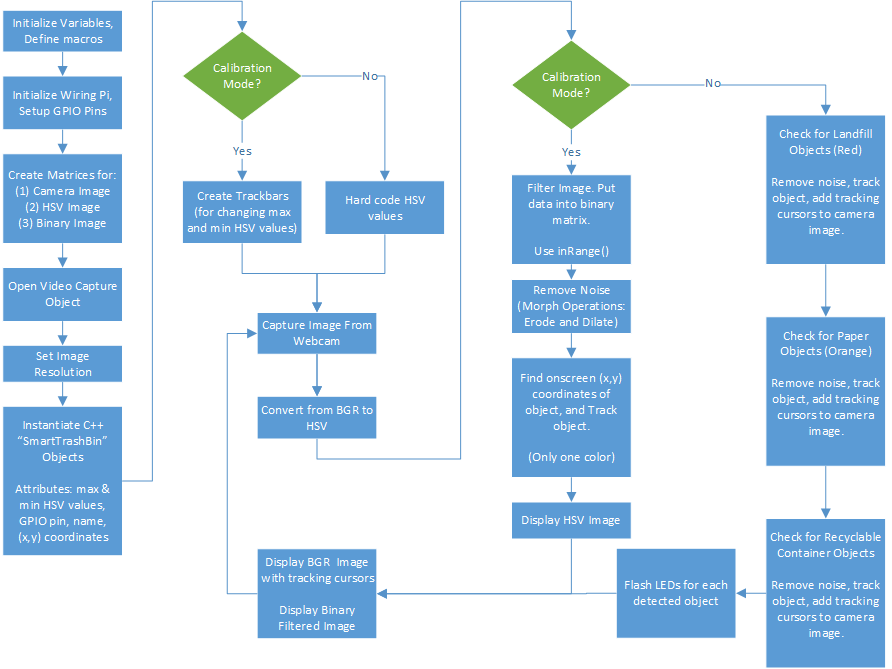

Software Architecture

From a high-level standpoint, the code for this project accomplished the required image processing by capturing individual image frames from the USB webcam, scanning the captured image for any of the three pre-programmed colors, tracking those objects on screen, and outputting a signal from the Raspberry Pi GPIO corresponding to the objects that it detects in the captured image.

First, an image is captured from the webcam and converted from BGR to HSV color space. Then the HSV image is checked for any values that fall within the range of our precoded HSV values using the OpenCV function inRange(). Each pixel that falls within this range is marked at its corresponding location in a binary matrix. Noise will be present in this binary matrix as a result of background objects of slightly similar color. To remove this noise, the binary image is eroded (i.e. regions of detected pixels are shrunk or eliminated entirely) and then dilated, which expands the regions that remain in the binary matrix.
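The erode-then-dilate step can be illustrated on a small binary grid. This is a sketch under stated assumptions rather than the project's code: it uses a fixed 3x3 neighbourhood, skips neighbours that fall outside the image, and the names Mask, morph3x3, and removeSpeckles are invented here. In the project itself this step would be done with OpenCV's erode and dilate functions.

```cpp
#include <cassert>
#include <vector>

using Mask = std::vector<std::vector<int>>;  // 1 = detected, 0 = background

// 3x3 minimum filter (erode) or maximum filter (dilate) over a binary mask.
// Neighbours outside the image are skipped.
Mask morph3x3(const Mask& in, bool erode) {
    const int rows = static_cast<int>(in.size());
    const int cols = rows ? static_cast<int>(in[0].size()) : 0;
    Mask out(rows, std::vector<int>(cols, 0));
    for (int y = 0; y < rows; ++y) {
        assert(static_cast<int>(in[y].size()) == cols);  // rectangular input
        for (int x = 0; x < cols; ++x) {
            int result = erode ? 1 : 0;
            for (int dy = -1; dy <= 1; ++dy) {
                for (int dx = -1; dx <= 1; ++dx) {
                    const int ny = y + dy, nx = x + dx;
                    if (ny < 0 || ny >= rows || nx < 0 || nx >= cols) continue;
                    if (erode) result = (result && in[ny][nx]) ? 1 : 0;
                    else       result = (result || in[ny][nx]) ? 1 : 0;
                }
            }
            out[y][x] = result;
        }
    }
    return out;
}

// Erode first to delete small specks, then dilate to restore what survived.
Mask removeSpeckles(const Mask& m) {
    return morph3x3(morph3x3(m, true), false);
}
```

With a 3x3 block of detected pixels plus one isolated pixel elsewhere, erosion shrinks the block to its center and deletes the isolated pixel, and dilation then grows the center back to the original 3x3 block.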

Once this is completed, the image is checked for contours using the findContours() function. If any contours are found, the x and y coordinates of the center of each object can be calculated using the moments method. This coordinate data is then written to a temporary object of class waste (which we have defined ourselves in the file SmartTrashBin.h), and the object is pushed onto a vector containing all of the objects that have been detected (according to our precoded HSV values) in view of the camera.
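The moments method mentioned above places the center at (m10/m00, m01/m00), where m00 counts the detected pixels and m10 and m01 sum their x and y coordinates. The sketch below computes these sums directly over a binary mask, which is a simplification: the project computes them per contour through OpenCV. The Waste struct here is a hypothetical stand-in for the project's own waste class, whose real members are not shown in the write-up.

```cpp
#include <cassert>
#include <string>
#include <vector>

using Mask = std::vector<std::vector<int>>;  // nonzero = detected pixel

// Hypothetical stand-in for the project's waste class.
struct Waste {
    std::string type;
    int x;
    int y;
};

// Compute the centroid from raw moments. Returns false for an empty mask.
bool centroidFromMoments(const Mask& mask, int& cx, int& cy) {
    double m00 = 0.0, m10 = 0.0, m01 = 0.0;
    for (int y = 0; y < static_cast<int>(mask.size()); ++y) {
        for (int x = 0; x < static_cast<int>(mask[y].size()); ++x) {
            if (mask[y][x]) {
                m00 += 1.0;  // zeroth moment: area in pixels
                m10 += x;    // first moment about the y axis
                m01 += y;    // first moment about the x axis
            }
        }
    }
    if (m00 == 0.0) return false;
    cx = static_cast<int>(m10 / m00);
    cy = static_cast<int>(m01 / m00);
    return true;
}

// Push a Waste entry onto the container if the mask holds an object.
void recordDetection(const Mask& mask, const std::string& type,
                     std::vector<Waste>& found) {
    int cx = 0, cy = 0;
    if (centroidFromMoments(mask, cx, cy)) {
        Waste w;
        w.type = type;
        w.x = cx;
        w.y = cy;
        found.push_back(w);
    }
}
```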

Finally, after the objects have been identified and pushed onto the waste object container (vector), they are then unpacked via a for-loop, the tracking cursors are placed over their respective locations on the captured BGR image, and the corresponding LEDs on the IntelliBin are flashed via the corresponding GPIO pin on the Raspberry Pi.
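The unpacking loop described above amounts to mapping each detected object to the LED of its bin. A minimal sketch of that mapping follows; the Category enum, the simplified Waste struct, and the function ledsToFlash are hypothetical names for this example, and the ordering of the three LEDs is an assumption. Drawing the tracking cursor and the actual GPIO write are left out.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// Assumed ordering of the three bins and LEDs (not stated in the text).
enum class Category { Paper = 0, Container = 1, Landfill = 2 };

// Simplified, hypothetical version of a detected waste object.
struct Waste {
    Category category;
    int x;
    int y;
};

// Walk the detected objects and mark which of the three LEDs should flash.
std::array<bool, 3> ledsToFlash(const std::vector<Waste>& detected) {
    std::array<bool, 3> leds = {{false, false, false}};
    for (const Waste& w : detected) {
        const std::size_t index = static_cast<std::size_t>(w.category);
        assert(index < leds.size());
        leds[index] = true;
    }
    return leds;
}
```

Because every detected object sets its own flag, showing several items at once results in several LEDs being flagged in the same frame.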

LED Control Circuitry

Three GPIO pins were used to drive the LED control circuitry. The LED strips require a 12 volt input and already contain current-limiting resistors. To switch the LED strips, three MOSFETs were used, specifically the PSMN022-30PL. This MOSFET handles up to 30 V and 30 A, leaving plenty of headroom. In addition, its maximum gate threshold voltage is 2.15 V, which allows the 3.3 V GPIO pins to turn the MOSFETs on.
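The part selection reasoning above can be written as two simple checks. The limits in kPsmn022 are the figures quoted in this section; the struct and function names are invented for this sketch, and the 2 A strip current in the usage note is a made-up figure, since the write-up does not state how much current the strips draw. Exceeding the maximum threshold voltage only shows that the transistor starts to conduct; how low its on-resistance is at 3.3 V would need to be read from the datasheet.

```cpp
#include <cassert>

// Limits for a logic-level N-channel MOSFET, as quoted in the text.
struct MosfetLimits {
    double vdsMax;    // maximum drain-source voltage, volts
    double idMax;     // maximum drain current, amps
    double vgsThMax;  // maximum gate threshold voltage, volts
};

const MosfetLimits kPsmn022 = {30.0, 30.0, 2.15};

// A gate drive above the maximum threshold should turn on any unit of the part.
bool gateDriveTurnsOn(const MosfetLimits& m, double gateVolts) {
    assert(gateVolts >= 0.0);
    return gateVolts > m.vgsThMax;
}

// The supply voltage and load current must both stay within the ratings.
bool loadWithinRatings(const MosfetLimits& m, double supplyVolts, double loadAmps) {
    return supplyVolts <= m.vdsMax && loadAmps <= m.idMax;
}
```

With these numbers, gateDriveTurnsOn(kPsmn022, 3.3) and loadWithinRatings(kPsmn022, 12.0, 2.0) both hold, while a 1.8 V logic level would not be enough to guarantee turn-on.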

The circuit diagram can be found in Figure 4. Each MOSFET has its source connected to ground and its drain connected to an LED strip. When a MOSFET is on, current flows through its LED strip, turning the strip on. For the DC/DC converter, a 5 V/3 A UBEC (universal battery eliminator circuit) was used to regulate the 12 volt input down to 5 volts and power the Raspberry Pi. As opposed to a plain regulator, a UBEC also provides low noise and is much more reliable.

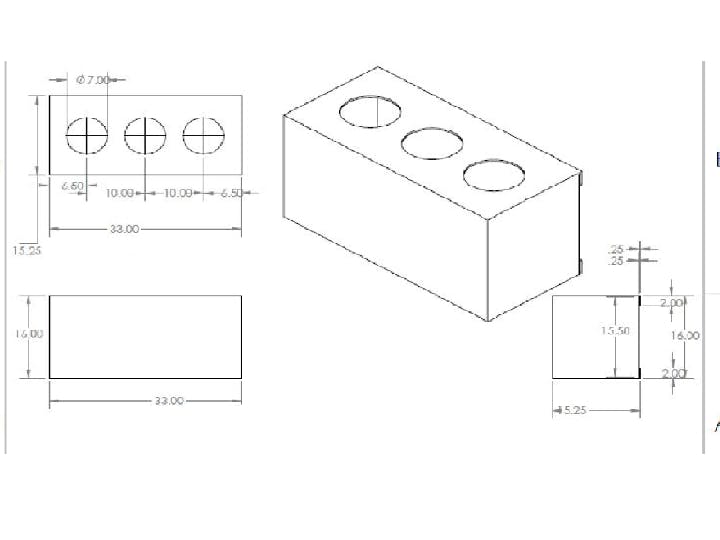

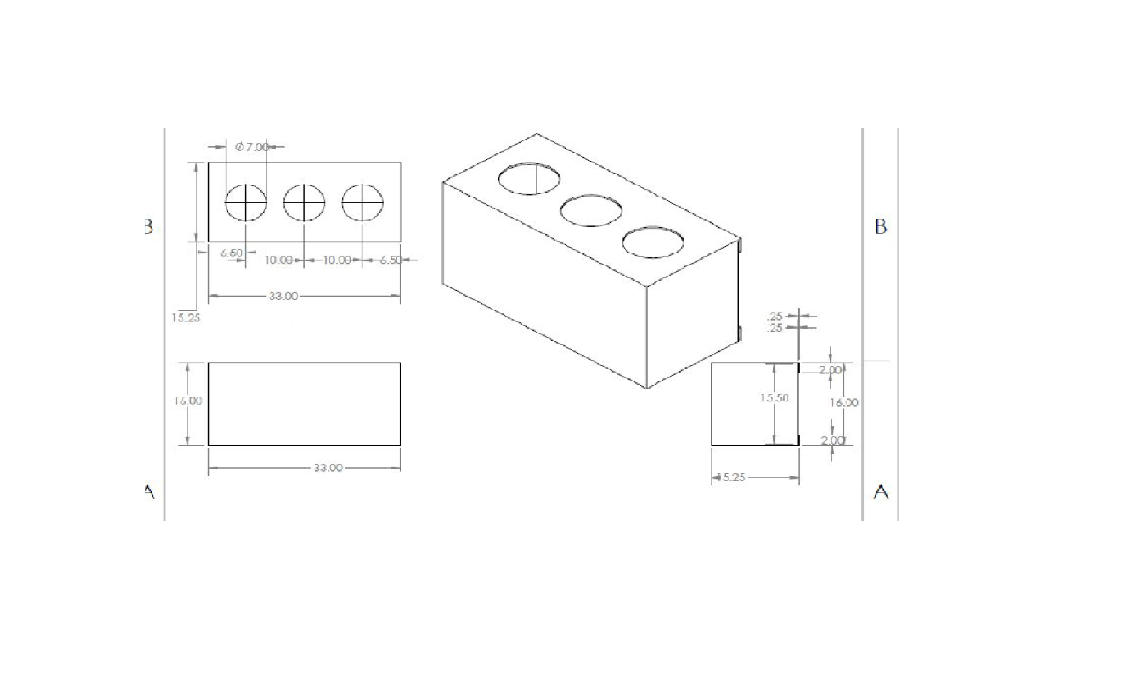

Enclosure

An enclosure made from medium-density fiberboard (MDF) was built for the final product, designed similarly to some waste bin enclosures seen around the CU campus. The measurements of the enclosure can be found in Figure 5. The enclosure has three holes in its top that allow the user to drop in trash from above. The Raspberry Pi and LED control circuitry were located directly under the top panel, and the webcam was mounted on top.

Discussion

The project was successful and capable of recognizing different colors. After adjusting the HSV filter values, all three colors were detected and IntelliBin was able to flash the correct LEDs. When multiple items were shown to the camera, each respective LED flashed as desired. The project was not particularly teaching oriented, but including a screen to inform users might have been helpful.

One issue we encountered was false positives. The system was able to isolate single colors very well, but other similarly colored objects placed in sight of the webcam would also be detected, causing some of the LEDs to flash unexpectedly. To resolve this, distinct colors were chosen, and minimum and maximum object areas were enforced.
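The area limits mentioned above act as a second filter after the color check. A minimal sketch follows; the Detection struct, the function filterByArea, and the two pixel thresholds are placeholders chosen for illustration, not the values used in the project.

```cpp
#include <cassert>
#include <vector>

// Hypothetical record for one color match: centroid and area in pixels.
struct Detection {
    int x;
    int y;
    double area;
};

// Placeholder limits; suitable values depend on camera resolution and distance.
const double kMinObjectArea = 400.0;
const double kMaxObjectArea = 50000.0;

// Keep only detections whose area lies within [minArea, maxArea].
std::vector<Detection> filterByArea(const std::vector<Detection>& in,
                                    double minArea, double maxArea) {
    assert(minArea <= maxArea);
    std::vector<Detection> kept;
    for (const Detection& d : in) {
        if (d.area >= minArea && d.area <= maxArea) {
            kept.push_back(d);
        }
    }
    return kept;
}
```

The lower bound discards small specks of matching color, and the upper bound discards matches that fill most of the frame, such as a similarly colored wall or shirt.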

Additionally, the speed of the camera made it difficult for the Raspberry Pi to keep up. There was a 2 to 4 second delay, and it sometimes took additional time for the Pi to register an object. A faster processor or a Raspberry Pi camera module would help speed up the update rate of the camera.

There are more features that could be introduced to this project. For instance, geometry detection and logo recognition would make it easier to detect specific objects. Aluminum cans and plastic bottles could be generalized by shape so that each object can be identified. The problem with this method is determining the actual material of each object, which would require additional sensors. A Hall sensor could be used to detect aluminum cans, other sensors could be used to detect plastics, and the integration of a machine learning system would certainly make this project more interesting. However, in the time we were allotted to create a final project, implementing and integrating all of those features would have been very difficult. All in all, truly detecting and distinguishing objects from one another can be a very difficult task.

Conclusion

A Raspberry Pi and a webcam allowed us to implement our design fairly quickly. The majority of our project was implemented in code. The most difficult portion of the project was ensuring that our MOSFET amplification circuit operated properly, as on multiple occasions we accidentally shorted the gate to the 12 V wall wart output and destroyed the transistor (our MOSFETs were designed to be biased at a gate-to-source threshold voltage of 1.7 V). This project has much potential for future development. Although only color detection was used to identify trash, a multitude of strategies could be combined to detect different types of trash. This tool may prove successful as a way to teach people what type of trash belongs where and could even be used to sort trash automatically.

References

Raspberry Pi 3 GPIO

https://www.element14.com/community/docs/DOC-73950/l/raspberry-pi-3-model-b-gpio-40-pin-block-pinout

WiringPI to Physical GPIO mapping

WiringPi Blink Example

http://wiringpi.com/examples/blink/

PSMN022-30PL MOSFET Datasheet

http://www.nxp.com/documents/data_sheet/PSMN022-30PL.pdf

OpenCV Color Detection Tutorial

http://opencv-srf.blogspot.com/2010/09/object-detection-using-color-seperation.html

Khounslow. “Tutorial: Real-Time Object Tracking Using OpenCV.” YouTube. YouTube, 11 Mar. 2013. Web. 29 Apr. 2016. <https://www.youtube.com/watch?v=bSeFrPrqZ2A&src_vid=RS_uQGOQIdg&feature=iv&annotation_id=annotation_307976421>.

Khounslow. “OpenCV Tutorial: Multiple Object Tracking in Real Time (1/3).” YouTube. YouTube, 07 Aug. 2013. Web. 29 Apr. 2016. <https://www.youtube.com/watch?v=RS_uQGOQIdg>.

Thisismyrobot. “Getting a Logitech C270 Webcam Working on a Raspberry Pi Running Debian.” N.p., n.d. Web. 29 Apr. 2016. <http://www.thisismyrobot.com/2012/08/getting-logitech-c270-webcam-working-on.html>.

Notes/Appendix

Command to build project (must have OpenCV installed):

g++ -ggdb `pkg-config --cflags opencv` -o `basename main.cpp .cpp` main.cpp SmartTrashBinObjects.cpp `pkg-config --libs opencv`

Command to run:

sudo ./main
