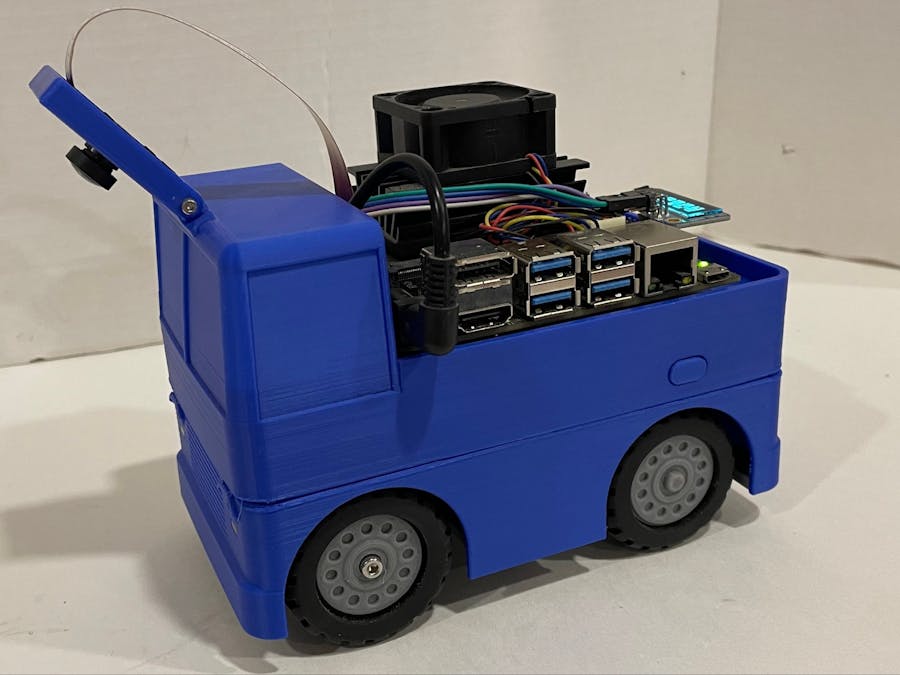

The JetCar is a 3D-printed car built around the NVIDIA Jetson Nano development board, with minimal additional electronics for driving. Using only the camera stream as input, it can follow street markings and also turn left or right automatically at intersections where this is allowed. Through machine learning it recognizes direction arrows, stop texts and stop lines on the street. The model architecture is a U-Net. It produces class images (segmentation masks) that are easy to inspect visually and that are processed by the firmware, which is written in Python and controlled from a Jupyter notebook. The user connects to the car from a host computer via WiFi and simply requests a direction change for the next intersection. The car only turns, however, if that direction is not restricted by a direction arrow on the street.

The project includes the mechanical design, electronics design, firmware and tools for data preparation, model training and street map generation. The documentation describes all parts in detail. All source code and binaries are available on GitHub (https://github.com/StefansAI/JetCar).

The documentation is meant to help anyone build this car at home, try it out and tinker with it.

Mechanical Parts and Assembly

https://github.com/StefansAI/JetCar/blob/main/docs/Assembly.md

The documentation on GitHub and Video 1 start with the mechanical design of the car in Fusion360, followed by the assembly of the 3D-printed parts (Video 2). The files for all parts are available for download here: https://github.com/StefansAI/JetCar/tree/main/mechanical

https://github.com/StefansAI/JetCar/blob/main/docs/SD%20Card%20Setup.md

GitHub and Video 3 describe how to download the NVIDIA JetPack SDK for the Jetson Nano and run the install script (https://github.com/StefansAI/JetCar/blob/main/firmware/install.sh) on the Nano to install everything needed.

https://github.com/StefansAI/JetCar/blob/main/docs/Data%20Preparation.md

The ImageSegmenter application for the PC was written to manually mark classes in recorded camera images and to create augmented datasets from them for training (Video 4).

The executable can be downloaded here: https://github.com/StefansAI/JetCar/tree/main/tools/bin/ImageSegmenter and the full source code can be found here: https://github.com/StefansAI/JetCar/tree/main/tools/source/ImageSegmenter

Model Training

https://github.com/StefansAI/JetCar/blob/main/docs/Model%20Training.md

GitHub and Video 5 describe the training in Colab. The training notebook can be downloaded from here: https://github.com/StefansAI/JetCar/blob/main/tools/pytorch_unet_mobilenetv3_colab_catalyst_20.ipynb

An example of a fully trained model file, ready to use, is here: https://github.com/StefansAI/JetCar/blob/main/firmware/jetcar/notebooks/JetCar_Model_MobileNetV3_Medium_Map_Traing_N19.pth

StreetMaker Application

https://github.com/StefansAI/JetCar/blob/main/docs/Model%20Training.md

GitHub and Video 6 describe the provided PC application called StreetMaker. It allows designing and printing a street map for the car to drive on.

The application also has features to create virtual camera images of the map together with the class code mask, and to generate augmented datasets for training. The predictions from the training notebook can be viewed together with the input.

The executable with 3 example maps can be downloaded here: https://github.com/StefansAI/JetCar/tree/main/tools/bin/StreetMaker and the full source code here: https://github.com/StefansAI/JetCar/tree/main/tools/source/StreetMaker

Firmware and Debugging

https://github.com/StefansAI/JetCar/blob/main/docs/Debugging.md and Video 7

The firmware is written in Python and runs under a Jupyter notebook on the Jetson Nano. It consists of only a few files, which can be found here: https://github.com/StefansAI/JetCar/tree/main/firmware/jetcar/notebooks

In short: the mask is passed on to the LaneTracker object, which first finds the lane limits and then the class codes in its own lane and in the lanes to the left and right. LaneCenter extracts run-length coded object lists to track arrows, texts and turns.
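To illustrate what a run-length coded object list can look like, here is a minimal sketch that collapses one column of class codes from a mask into runs. It is not the JetCar firmware code: the function name, the tuple layout and the class code values are assumptions chosen for the example.

```python
from itertools import groupby


def run_length_encode(codes):
    """Collapse a sequence of class codes into (code, start, length) runs."""
    runs = []
    start = 0
    for code, group in groupby(codes):
        length = sum(1 for _ in group)
        runs.append((code, start, length))
        start += length
    return runs


# Hypothetical class codes for this example: 0 = background, 1 = lane, 2 = arrow
column = [0, 0, 1, 1, 1, 2, 2, 1, 0]
runs = run_length_encode(column)

# Keep only the runs that belong to the (assumed) arrow class
arrow_runs = [run for run in runs if run[0] == 2]
```

Working on runs instead of individual pixels keeps the object lists short, so tracking an arrow or text from frame to frame only needs to compare a handful of start positions and lengths.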

Since debugging a system in real time is difficult, the driving notebook has a recording feature that stores all camera images together with the mask from inference and a log file in a special folder, which can be zipped up and downloaded to the PC. The documentation describes a Visual Studio Code offline debugging environment for going through each frame and mask while stepping through the firmware code, or for simply enabling the different print outputs to analyze the output log.

The offline debug files are located here: https://github.com/StefansAI/JetCar/tree/main/firmware/offline_debug
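Zipping up a recording folder for download could be done along these lines. This is only a sketch using the Python standard library, not the notebook's actual code: the function names, the folder name and the file names are placeholders invented for the example.

```python
import shutil
import tempfile
import zipfile
from pathlib import Path


def pack_recording(recording_dir, archive_base):
    """Zip the contents of a recording folder and return the archive path."""
    return shutil.make_archive(str(archive_base), "zip", root_dir=str(recording_dir))


def list_archive(archive_path):
    """Return the sorted file names stored in a zip archive."""
    with zipfile.ZipFile(archive_path) as zf:
        return sorted(zf.namelist())


# Demo with empty placeholder files in a temporary directory
with tempfile.TemporaryDirectory() as tmp:
    recording = Path(tmp) / "recording"  # hypothetical folder name
    recording.mkdir()
    (recording / "frame_0001.jpg").write_bytes(b"")  # placeholder camera image
    (recording / "mask_0001.png").write_bytes(b"")   # placeholder inference mask
    (recording / "log.txt").write_text("frame 1 recorded\n")

    # The archive is written next to the folder, not inside it
    archive = pack_recording(recording, Path(tmp) / "recording_download")
    names = list_archive(archive)
```

Keeping images, masks and the log in one archive means the offline environment can replay exactly the frames the car saw.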

Operation

Video 8 of the series below demonstrates how the JetCar navigates the printed street map, how it stops at any intersection with stop text and stop line, and how it obeys the arrows, ignoring turn requests that are not allowed.
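The rule that a turn request is ignored when the arrows do not permit it can be sketched as follows. This is a simplified illustration, not the firmware's logic: the function name and the direction strings are assumptions.

```python
def turn_permitted(requested, arrow_directions):
    """Return True if the requested turn is not restricted by detected arrows.

    requested:        direction asked for by the operator, e.g. "left"
    arrow_directions: set of directions shown by the arrows detected in the
                      lane; an empty set means no arrow was detected
    """
    if not arrow_directions:
        # No arrow on the street, so the direction is not restricted
        return True
    return requested in arrow_directions


# Example: the lane is marked with a straight/right arrow
allowed_here = {"straight", "right"}
left_ok = turn_permitted("left", allowed_here)
right_ok = turn_permitted("right", allowed_here)
```

In this sketch a request for "left" is ignored at that intersection, while a request for "right" is accepted.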

The mini-series of 8 videos is available on YouTube:

JetCar Part 1 - Introduction:

JetCar Part 2 - Assembly:

JetCar Part 3 - Firmware Setup:

JetCar Part 4 - Data Preparation:

JetCar Part 5 - Model Training:

JetCar Part 6 - StreetMaker:

JetCar Part 7 - Firmware:

JetCar Part 8 - Demonstration:
