According to the EPA, concentrations of some pollutants can be 2 to 5 times higher indoors than outdoors. With the current pandemic, people are spending even more time indoors, at home. Handling indoor pollutant loads well requires constant air exchange with clean air, yet increasingly frequent events such as forest fires can leave the surrounding outdoor air quality quite bad as well.

Office buildings often have better HVAC and air filtration systems, so spending more time at home may expose people with asthma and allergies to even more pollutants and irritants.

1 in 5 people in the US suffer from allergies and 1 in 12 from asthma, and the economic cost of exacerbations, doctor's office visits and ER visits is high. The good news is that research has shown that reducing indoor air pollutants improves the comfort, health and productivity of building occupants. Avoiding asthma triggers also helps reduce exacerbation rates and results in a better overall Quality of Life (QoL) for patients with severe and difficult-to-treat asthma.

Therefore visibility into air quality and exposure is more important than ever.

However, most air quality monitors track only a few general parameters, which makes it difficult to correlate the readings with how one feels or to get actionable insights. Allergies and irritant sensitivities are as individual as the range of possible irritants: some people are sensitive to humidity, some to dust, some to pet dander. Sensitivities can also be confounded by delayed reactions to the irritants.

By tracking how a person feels in addition to the air quality, we can better predict symptoms and recommend ways to improve air quality and/or reduce exposure, and therefore improve health.

Solution - Bird's Eye View

In this solution, we track particulate matter (PM1.0, PM2.5 and PM10 values, using the Plantower PMS7003), temperature, pressure and humidity (using a BME280 breakout).

[Note: The aim was also to track CO2 using an MH-Z19 sensor, but since the m5stack has only one UART port available, we leave it as an optional exercise for the reader. A UART multiplexer is needed to connect both the PMS7003 and the MH-Z19 to the m5stack Core2 unit.]

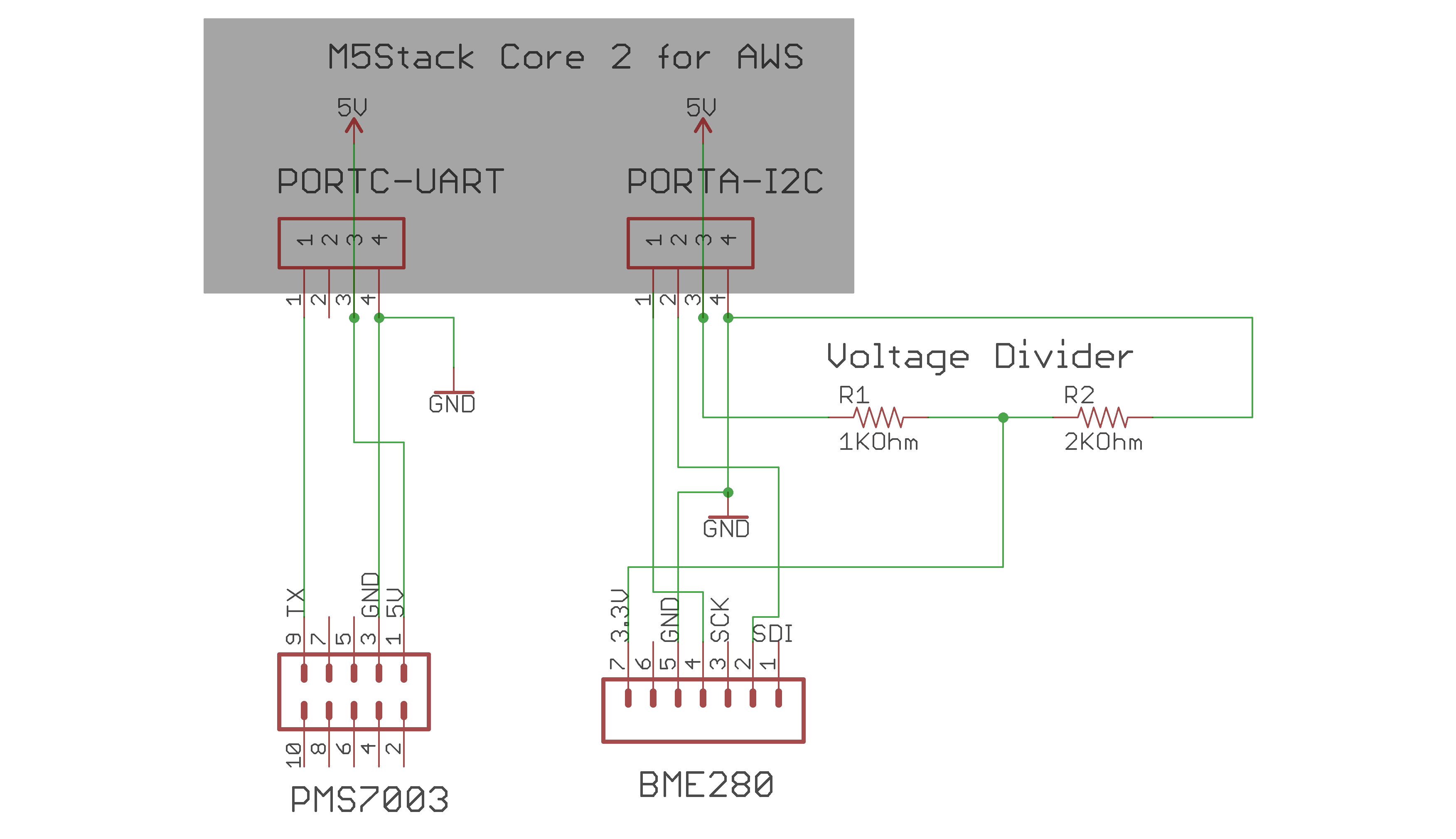

The Schematic is as shown:

The BME280 breakout (from Adafruit) is 3.3 V to 5 V tolerant, but expects its input voltage to match the MCU's signal levels. The m5stack uses 3.3 V logic, but the Grove ports (Port A, Port B and Port C) all provide 5 V. For this reason, a simple voltage divider was used to drop the supply to 3.3 V before feeding it to the BME280 breakout.
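As a quick sanity check on the divider, its unloaded output is V_out = V_in × R_bottom / (R_top + R_bottom). The C sketch below just evaluates that formula; the 1 kΩ / 2 kΩ pair mentioned in the comment is a hypothetical choice, since the resistor values actually used are not given here. It is the no-load figure: the current drawn by the breakout will pull the real output somewhat lower.

```c
/* Unloaded output of a two-resistor voltage divider.
 * r_top sits between the supply and the output node,
 * r_bottom between the output node and GND.
 * Example (hypothetical values): divider_out(5.0, 1000.0, 2000.0) is about 3.33 V. */
double divider_out(double v_in, double r_top, double r_bottom)
{
    return v_in * r_bottom / (r_top + r_bottom);
}
```

Checking the result against the 3.3 V target before soldering makes it easy to compare candidate resistor pairs.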

We also use the built-in microphone (the SPM1423) for cough and sneeze detection. The sampled audio is fed to an on-board TinyML classifier built with Edge Impulse to infer whether a cough or sneeze has occurred. Porting the Edge Impulse model to run on the ESP32-based m5stack proved to be a great challenge. We'll discuss this in greater detail below! 😊

We send this data to AWS IoT Core using the device shadow. An IoT Rule forwards the data to AWS IoT Analytics, which prepares it for consumption by Amazon SageMaker. The training data is used to train an ML model that predicts the likelihood of coughs or sneezes within the next hour. The prediction window will be adjusted based on the accuracy of the model.
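For reference, an AWS IoT device shadow update is a JSON document whose device-side values sit under `state.reported`, published on the thing's `$aws/things/<thingName>/shadow/update` topic. The C sketch below only builds such a document from one reporting interval; the `breathe_sample_t` struct and all field names are hypothetical, and the actual publish call (and whichever shadow/MQTT library the project used) is deliberately left out.

```c
#include <stdio.h>

/* Hypothetical snapshot of one reporting interval. The field names are
 * illustrative only; they are not taken from the project's firmware. */
typedef struct {
    float pm1_0, pm2_5, pm10;   /* ug/m3 */
    float temperature_c;
    float humidity_pct;
    float pressure_hpa;
    unsigned coughs, sneezes;   /* events counted during this interval */
    int aqi;
} breathe_sample_t;

/* Serialize a sample into the "reported" section of a shadow update:
 *   {"state":{"reported":{...}}}
 * Returns the number of characters written, or -1 if buf is too small. */
int build_shadow_report(const breathe_sample_t *s, char *buf, size_t len)
{
    int n = snprintf(buf, len,
        "{\"state\":{\"reported\":{"
        "\"pm1_0\":%.1f,\"pm2_5\":%.1f,\"pm10\":%.1f,"
        "\"temperature\":%.1f,\"humidity\":%.1f,\"pressure\":%.1f,"
        "\"coughs\":%u,\"sneezes\":%u,\"aqi\":%d}}}",
        s->pm1_0, s->pm2_5, s->pm10,
        s->temperature_c, s->humidity_pct, s->pressure_hpa,
        s->coughs, s->sneezes, s->aqi);
    if (n < 0 || (size_t)n >= len)
        return -1;  /* encoding error or output truncated */
    return n;
}
```

Keeping serialization in one function like this makes it easy to add fields later (for example CO2) without touching the task that publishes the update.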

We also compute and display an Air Quality Index (currently based on the PM2.5 data, though it could also be computed for each parameter, with the dominant one displayed). To make the device approachable and useful, care has been taken to design a user interface that shows the values at a quick glance, along with a color-coded Air Quality Index. The light bar on the bottom also animates in the AQI color, so the user can spot changes from afar.
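The exact AQI formula used here is not spelled out, so the following is a sketch assuming the standard US EPA piecewise-linear method, with the PM2.5 breakpoints that were in use before the EPA's 2024 revision. Strictly, the AQI is defined on 24-hour averages, so applying it to near-real-time readings (as many consumer monitors do) is an approximation. The `aqi_color` helper maps the index to the EPA category colors, the kind of value the background and light bar could be set to; both function names are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

/* One row of the EPA breakpoint table: concentrations [c_lo, c_hi]
 * (ug/m3) map linearly onto index values [i_lo, i_hi]. */
typedef struct { double c_lo, c_hi; int i_lo, i_hi; } aqi_bp_t;

/* PM2.5 breakpoints as used before the EPA's 2024 revision. */
static const aqi_bp_t PM25_BREAKPOINTS[] = {
    {   0.0,  12.0,   0,  50 },
    {  12.1,  35.4,  51, 100 },
    {  35.5,  55.4, 101, 150 },
    {  55.5, 150.4, 151, 200 },
    { 150.5, 250.4, 201, 300 },
    { 250.5, 350.4, 301, 400 },
    { 350.5, 500.4, 401, 500 },
};

/* I = (i_hi - i_lo) / (c_hi - c_lo) * (c - c_lo) + i_lo
 * Returns -1 for negative input; values above the table are capped at 500. */
int pm25_to_aqi(double pm25)
{
    if (pm25 < 0.0)
        return -1;
    /* The EPA method truncates PM2.5 to one decimal place before lookup. */
    double c = (double)(long)(pm25 * 10.0) / 10.0;
    size_t rows = sizeof PM25_BREAKPOINTS / sizeof PM25_BREAKPOINTS[0];
    for (size_t k = 0; k < rows; k++) {
        const aqi_bp_t *b = &PM25_BREAKPOINTS[k];
        if (c <= b->c_hi) {
            double i = (double)(b->i_hi - b->i_lo) / (b->c_hi - b->c_lo)
                       * (c - b->c_lo) + b->i_lo;
            return (int)(i + 0.5);  /* round to the nearest integer */
        }
    }
    return 500;
}

/* EPA category colors as 0xRRGGBB. */
uint32_t aqi_color(int aqi)
{
    if (aqi <= 50)  return 0x00E400;  /* Good: green */
    if (aqi <= 100) return 0xFFFF00;  /* Moderate: yellow */
    if (aqi <= 150) return 0xFF7E00;  /* Unhealthy for sensitive groups: orange */
    if (aqi <= 200) return 0xFF0000;  /* Unhealthy: red */
    if (aqi <= 300) return 0x8F3F97;  /* Very unhealthy: purple */
    return 0x7E0023;                  /* Hazardous: maroon */
}
```

Computing the same index per pollutant and displaying the maximum would give the "dominant element" variant mentioned above.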

We'll also compute a Health Quality Index (HQI) based on the sensor data and the cough/sneeze data. This is done in the AWS Cloud for flexibility, so the implementation can be refined and changed as needed without modifying the device firmware. The result is fed back to the device through the "desired" state of the IoT Core device shadow. The UI will need to be modified to display the HQI as well: the band at the top can be split in two, with the left side showing the AQI and the right side showing the HQI (current and predicted).

We can display the current HQI as well as the 1-hour forecast HQI. The goal is for this advance warning to leave enough time to act: either improving air quality (opening windows, running an air purifier or the HVAC system, etc.) or reducing exposure (moving to another room with better air quality or an in-room purifier). Subsequent development would generate recommendations based on multi-room sensor input and outdoor air quality data.

The following architecture diagram shows the different components and flows:

This solution differs from existing air quality monitors because it attempts to predict wellness by applying machine learning to user data in addition to environmental data.

Solution Discussion - Hardware

In this project, we use the FreeRTOS port and the PlatformIO IDE (in Visual Studio Code) for building, as described in the AWS samples. Elements from the Cloud Connected Blinky, Smart Thermostat, Smart Spaces and Factory Firmware samples have been reused in the Breathe Right project.

The following image shows the various tasks spawned. Mutexes are used to protect shared data where appropriate:

1. Clock update - shows the clock in the UI, so the user can see the current time when checking the screen for data.

2. In a similar vein, we also display the power status. Since the device has a battery, this will be useful for a portable deployment. [An event callback also displays the WiFi status.]

3. We also spawn a task to animate the light bar, which displays the current Air Quality Index color and is updated when the AQI changes. The animation shows the color snaking around the m5stack.

4. There are two tasks for the sensor data. One reads the PMS7003 UART stream and translates it into PM concentrations as per the datasheet. The other periodically updates the UI as well as the global data that the AWS task reads. This split lets us aggregate readings (for example, to compute rolling averages) while continuing to sample the air quality more frequently.

5. Cough/sneeze detection also spawns two tasks. The first is a microphone task that reads audio data from the microphone and writes it into a double buffer. The second is the inference task, which reads from the double buffer and runs continuous inference on the audio to classify coughs, sneezes and noise. It updates global variables (protected by a mutex) that record the number of coughs and sneezes in the interval. [When the AWS task reads this data to send to IoT Core, it also resets the counts for the next period.] This way, we can track the number of coughs or sneezes per minute (or over other intervals), which helps filter out the odd cough or sneeze.

6. Last but not least is the AWS task, which sends data to the device shadow once a minute. This interval can be increased for a real-life deployment as necessary. We could also use the device shadow "desired" state to tell the device how often to send updates, for example.
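The "read and reset" step in item 5 matters because a cough counted between the AWS task reading the total and clearing it would otherwise be lost. The firmware uses a mutex for this; with a FreeRTOS mutex the same effect comes from reading and zeroing both counters between `xSemaphoreTake` and `xSemaphoreGive`. The sketch below swaps in C11 `<stdatomic.h>` instead (assuming the toolchain supports it), where `atomic_exchange` reads and zeroes a counter in a single operation. The function names are hypothetical.

```c
#include <stdatomic.h>

/* Counters incremented by the inference task and drained by the AWS task.
 * Static atomics are zero-initialized, which is a valid initial state. */
static atomic_uint g_cough_count;
static atomic_uint g_sneeze_count;

/* Called by the inference task each time a cough or sneeze is detected. */
void record_cough(void)  { atomic_fetch_add(&g_cough_count, 1u); }
void record_sneeze(void) { atomic_fetch_add(&g_sneeze_count, 1u); }

/* Called by the AWS task once per reporting period: each exchange returns
 * the current count and sets it to 0 atomically, so no event recorded
 * concurrently can be lost between the read and the reset. */
void take_counts(unsigned *coughs, unsigned *sneezes)
{
    *coughs  = atomic_exchange(&g_cough_count, 0u);
    *sneezes = atomic_exchange(&g_sneeze_count, 0u);
}
```

The two counters are drained one after the other, so a sneeze detected in between simply lands in the current period rather than the next one, which is harmless for per-minute counts.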

Solution Discussion - User Interface

One of the goals was to make the device very user friendly. A common issue with existing air quality monitors is that you either need to pull out a smartphone app to view the data, or the display is quite cryptic and minimal.

The m5stack comes with a touch screen display, and the FreeRTOS port includes the LVGL library, whose many ready-made widgets make UI design and implementation much easier.

The UI itself has 2 tabs - the main tab displaying the air quality data and a message tab, showing debugging messages (this tab can be removed for final deployment but is useful for debugging).

The main screen has been laid out to show all the necessary data in a simple manner. The screen background is also color coded to match the computed Air Quality Index, and the light bar on the bottom is also updated to match that.

The UI also shows the time, power status, and WiFi strength as these would be useful to the user.

The video below shows the Air Quality monitor in action with a simulated "bad air" event (using an incense stick - I'm Indian after all! :-) ). You can see the colors changing as the computed Air Quality Index changes.

Solution Discussion - Edge Impulse

Edge Impulse is great for training TinyML models that can be deployed on the MCU. This is ideal where you need local classification without going over the network or to another edge device. In our case, we want to detect and classify coughs and sneezes: we collect audio samples continuously and run continuous inference on them to detect coughs or sneezes.
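In Edge Impulse's continuous mode (`run_classifier_continuous`), the audio window is split into slices and the classifier runs once per slice over a window that overlaps the previous one, so a single cough can score highly for several slices in a row. The C sketch below shows one simple way, not necessarily the one used in this project, to turn per-slice scores for one label into distinct event counts: a new event is counted only when the score rises above a threshold. The threshold value and all names are hypothetical.

```c
/* Hypothetical rising-edge event counter over per-slice classifier scores
 * for a single label (e.g. "cough"). */
typedef struct {
    float    threshold;  /* e.g. 0.8f; hypothetical, tune per model */
    int      active;     /* 1 while the score stays above threshold */
    unsigned events;     /* number of distinct events counted */
} event_detector_t;

void detector_init(event_detector_t *d, float threshold)
{
    d->threshold = threshold;
    d->active = 0;
    d->events = 0;
}

/* Feed one score (0..1) per slice. Returns 1 when a new event starts on
 * this slice, otherwise 0. A run of high scores counts only once. */
int detector_update(event_detector_t *d, float score)
{
    if (score >= d->threshold) {
        if (!d->active) {
            d->active = 1;
            d->events++;
            return 1;
        }
        return 0;
    }
    d->active = 0;  /* score dropped: the next high score is a new event */
    return 0;
}
```

One detector per label (cough, sneeze) would feed counters like the ones the inference task reports to the AWS task.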

There are many Edge Impulse tutorials on the web; I'll refer you to one on Hackster itself, Cough Detection with TinyML on Arduino. Note that we need cough AND sneeze detection, and will therefore need more training data covering both coughs and sneezes.

However, there is not much information available on deploying an Edge Impulse model to an ESP32, especially under FreeRTOS. I had to figure it out by getting very familiar with the ESP-IDF (ESP32 FreeRTOS) build system as well as the build requirements of the Edge Impulse library, which took much more than I had anticipated! 😊 The good news is that I got it working! The not-so-good news: ML models require a lot of tweaking and adjusting. It comes with the territory, I guess.

The first point to note is that Edge Impulse is a C++ library. It can be built with C linkage, but that precludes using continuous classification. I got around this by writing the glue code in C++ (calling the classifier and reading the mic data), but that code also calls into Core2ForAWS libraries, some of which don't expect to be invoked from C++. For example, I couldn't include the core2forAWS.h header for the microphone initialization, so I included microphone.h directly instead. Phew!

When you download an Edge Impulse deployment, you can choose an Arduino library or a C/C++ library. Please pick the Arduino one! We can't use it as-is, since we're not running in an Arduino environment, but we'll get past that, don't worry!

We need to add porting code for the ESP32 under main/edge-impulse/edge-impulse-sdk/porting, as well as modify the ei_classifier_porting.h file. We'll also need to define __ESP32__ in CMakeLists.txt for the build system.

I also ported the nano_ble33_sense_microphone_continuous example from Arduino to FreeRTOS, using the SPM1423 microphone and i2s_read.

However, I found that the model window I had defined was too large for the m5stack, given all the other processing it was already doing. The microphone sampling outpaced the inference, which resulted in dropped samples. The model will need to be fine-tuned to make it smaller and more efficient, and the rest of the code on the m5stack also needs to be optimized to reduce the processing load.
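The double buffer from item 5 and the dropped samples described here are two sides of the same mechanism. Below is a minimal, single-threaded C sketch of a ping-pong buffer that counts an overrun whenever the producer fills a buffer before the consumer has taken the previous one, which is the condition that signals inference cannot keep up. The names and the tiny slice size are hypothetical. It is not thread-safe as written: on the device the mic and inference tasks run concurrently, so the shared fields need the same protection as the other shared data, and the consumer must finish with a slice before the producer wraps back to it.

```c
#include <stddef.h>
#include <stdint.h>

#define SLICE_SAMPLES 4  /* tiny for illustration; real slices are far larger */

typedef struct {
    int16_t  buf[2][SLICE_SAMPLES];
    int      write_sel;  /* index of the buffer the producer is filling */
    size_t   write_pos;  /* next free slot in that buffer */
    int      ready;      /* 1 when the other buffer holds a full slice */
    unsigned overruns;   /* full slices dropped because inference was late */
} pingpong_t;

/* Producer (mic) side: append one sample; hand the buffer over when full. */
void pp_push(pingpong_t *pp, int16_t sample)
{
    pp->buf[pp->write_sel][pp->write_pos++] = sample;
    if (pp->write_pos == SLICE_SAMPLES) {
        if (pp->ready)
            pp->overruns++;   /* previous slice was never consumed */
        pp->write_sel ^= 1;   /* swap buffers */
        pp->write_pos = 0;
        pp->ready = 1;
    }
}

/* Consumer (inference) side: return the completed slice, or NULL if none. */
const int16_t *pp_take(pingpong_t *pp)
{
    if (!pp->ready)
        return NULL;
    pp->ready = 0;
    return pp->buf[pp->write_sel ^ 1];
}
```

Logging the overrun count periodically gives a direct measure of how far the inference falls behind as the model or the rest of the firmware is optimized.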

Solution Discussion - PMS7003 and UART

I also ran into some issues using the Core2ForAWS UART libraries. I fixed those issues and was finally able to get solid, reliable data from the PMS7003, but it definitely added a significant delay. The Core2ForAWS UART library on GitHub has since been updated based on my findings.
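For reference, the PMS7003 sends a fixed 32-byte frame (per the Plantower datasheet), and reading it reliably largely comes down to resynchronizing on the 0x42 0x4D start bytes and verifying the checksum before trusting the values. The C sketch below does that on a buffer of raw UART bytes. It is independent of the Core2ForAWS UART API, whose calls are not reproduced here, and it reports the "atmospheric environment" PM values, which is an assumption about which of the sensor's two sets of PM values the project uses.

```c
#include <stddef.h>
#include <stdint.h>

#define PMS7003_FRAME_LEN 32

typedef struct {
    uint16_t pm1_0, pm2_5, pm10;  /* atmospheric environment values, ug/m3 */
} pms7003_reading_t;

static uint16_t be16(const uint8_t *p) { return (uint16_t)((p[0] << 8) | p[1]); }

/* Validate and decode one 32-byte frame:
 *   bytes 0-1   start characters 0x42 0x4D
 *   bytes 2-3   frame length, 28 (13 data words + checksum)
 *   bytes 4-29  13 big-endian data words
 *   bytes 30-31 checksum = sum of bytes 0-29
 * Returns 0 on success, -1 on a malformed frame. */
int pms7003_parse(const uint8_t *f, pms7003_reading_t *out)
{
    if (f[0] != 0x42 || f[1] != 0x4D || be16(f + 2) != 28)
        return -1;
    uint16_t sum = 0;
    for (size_t k = 0; k < 30; k++)
        sum = (uint16_t)(sum + f[k]);
    if (sum != be16(f + 30))
        return -1;
    /* Data words 4-6 (bytes 10-15) hold the atmospheric PM values. */
    out->pm1_0 = be16(f + 10);
    out->pm2_5 = be16(f + 12);
    out->pm10  = be16(f + 14);
    return 0;
}

/* Resynchronize on a raw byte stream: return the offset of the first
 * complete, valid frame, or -1 if none is found. */
long pms7003_find_frame(const uint8_t *buf, size_t len, pms7003_reading_t *out)
{
    for (size_t i = 0; i + PMS7003_FRAME_LEN <= len; i++) {
        if (buf[i] == 0x42 && buf[i + 1] == 0x4D && pms7003_parse(buf + i, out) == 0)
            return (long)i;
    }
    return -1;
}
```

Scanning for a valid frame, rather than assuming each UART read starts on a frame boundary, is what keeps the readings stable when bytes are lost or reads split a frame.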

Solution Discussion - SageMaker

While the SageMaker/IoT Analytics pipeline has been plumbed, there has not been enough time to collect sufficient training data to create and deploy a model. All the basic plumbing to enable this is in place, however. As with the Edge Impulse model, this will also need work to find the best parameters and training for a good model.

Running the Code

The link to the GitHub repository for this project is included, and its READMEs have further information on how to deploy and run it.

Conclusion

This solution differs from existing air quality monitors because it attempts to predict wellness by applying machine learning to user data in addition to environmental data. It is hoped that this approach gives better visibility and actionable insights into air quality, and into how to manage both the air quality and one's exposure to it for a healthier outcome (or at least improved comfort and health compared to before!)

There are still many steps required before this project reaches a final deployable form, and it is hoped that this work can help others pursue this approach and build on it.
