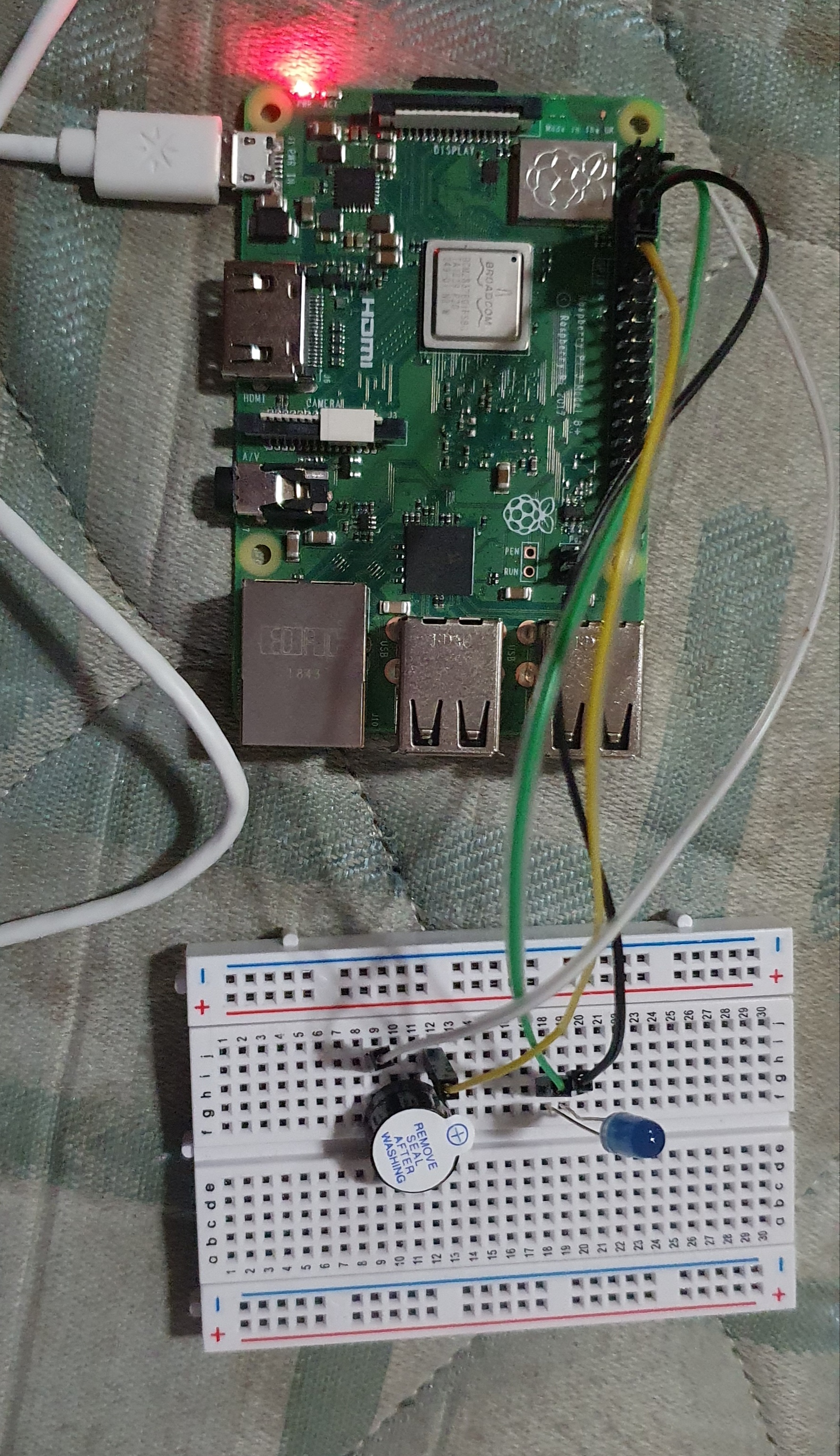

The basic idea behind this project, and the reason for working on it, is quite simple. The project uses the concepts of computer vision, real-time image detection and Convolutional Neural Networks (CNNs). Our everyday tasks are becoming more automated and require less manual work, and the era of Artificial Intelligence has made machines far more capable than before. Computer vision is currently one of the hottest topics in the AI industry: applications from self-driving cars to the Amazon Smart Store are built on its fundamental concepts. I therefore created a project that uses computer vision to detect a hand gesture and execute the command associated with that gesture, which makes the LED blink and turns on the buzzer.
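The gesture-to-command step can be sketched in Python as a lookup from a predicted class label to an action. This is a minimal sketch, not the project's code: the labels `gesture_1` and `gesture_2`, the BCM pin numbers 17 and 27, and the blink/beep timings are all hypothetical. On the Raspberry Pi the actions use gpiozero's `LED.blink` and `Buzzer.beep`; passing the actions in as plain functions keeps the dispatch logic testable on a machine without GPIO pins.

```python
"""Map a predicted gesture label to a hardware action (illustrative sketch)."""
from typing import Callable, Dict, Optional

Action = Callable[[], None]


class GestureController:
    """Runs the action registered for a gesture label and ignores unknown labels."""

    def __init__(self, actions: Dict[str, Action]) -> None:
        self._actions = dict(actions)

    def handle(self, label: str) -> Optional[str]:
        """Execute the action for `label`; return the label if one ran, else None."""
        action = self._actions.get(label)
        if action is None:
            return None  # unrecognised label or "no gesture": do nothing
        action()
        return label


def make_gpio_actions(led_pin: int = 17, buzzer_pin: int = 27) -> Dict[str, Action]:
    """Build gpiozero-backed actions; this only works on a Raspberry Pi.

    The pin numbers (BCM) and the gesture labels are hypothetical;
    adjust them to match your wiring and your trained classes.
    """
    from gpiozero import LED, Buzzer  # imported lazily so the rest runs anywhere

    led = LED(led_pin)
    buzzer = Buzzer(buzzer_pin)
    return {
        # Blink the LED three times without blocking the video loop.
        "gesture_1": lambda: led.blink(on_time=0.2, off_time=0.2, n=3),
        # Sound the buzzer once for half a second.
        "gesture_2": lambda: buzzer.beep(on_time=0.5, off_time=0.5, n=1),
    }
```

On the Pi, something like `GestureController(make_gpio_actions()).handle(label)` would be called once per prediction; labels without a registered action are simply ignored.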

I started with data collection. To minimize data cleaning, I created my own dataset by using my webcam to capture images of the different gestures, all at the same size so that no resizing was required, and stored them as grayscale images.

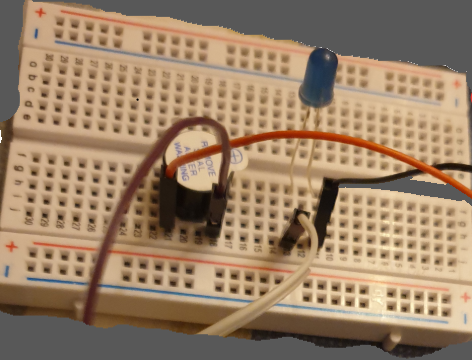

We then need to install some libraries, namely NumPy, OpenCV and TensorFlow, to build the training model, capture real-time data from the user and test the model. To train the model, I used three convolutional (ConvNet) layers with MaxPooling layers, which downsample the feature maps while keeping the strongest features extracted from the images. The model was then compiled and saved. The model was trained on my computer and the saved model was then transferred to the Raspberry Pi, because the Raspberry Pi's processing power is too limited for training.
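A model along the lines described, three convolutional layers with max pooling, can be sketched in Keras as follows. The filter counts, kernel size, dense layer width, input size (200x200 grayscale), number of classes, epochs and file name are all assumptions; the post does not give them. `train_and_save` is a hypothetical helper that assumes the images sit in one sub-folder per gesture.

```python
"""A small CNN for grayscale gesture images (illustrative sketch)."""
import tensorflow as tf

# Assumptions: 200x200 grayscale crops and 3 gesture classes.
IMG_SIZE = 200
NUM_CLASSES = 3


def build_model(img_size: int = IMG_SIZE, num_classes: int = NUM_CLASSES) -> tf.keras.Model:
    """Three Conv2D + MaxPooling2D blocks followed by a dense classifier."""
    layers = tf.keras.layers
    model = tf.keras.Sequential([
        layers.Input(shape=(img_size, img_size, 1)),
        layers.Rescaling(1.0 / 255),  # map pixel values 0..255 to 0..1
        layers.Conv2D(32, 3, activation="relu"),
        layers.MaxPooling2D(),  # halve the spatial size, keep the strongest responses
        layers.Conv2D(64, 3, activation="relu"),
        layers.MaxPooling2D(),
        layers.Conv2D(64, 3, activation="relu"),
        layers.MaxPooling2D(),
        layers.Flatten(),
        layers.Dense(128, activation="relu"),
        layers.Dense(num_classes, activation="softmax"),  # one probability per gesture
    ])
    model.compile(
        optimizer="adam",
        loss="sparse_categorical_crossentropy",  # labels are integer class indices
        metrics=["accuracy"],
    )
    return model


def train_and_save(data_dir: str = "data", out_path: str = "gesture_model.h5",
                   epochs: int = 10) -> tf.keras.Model:
    """Hypothetical helper: train on data_dir/<label>/*.png and save the model."""
    train_ds = tf.keras.utils.image_dataset_from_directory(
        data_dir, color_mode="grayscale", image_size=(IMG_SIZE, IMG_SIZE), batch_size=32
    )
    model = build_model(num_classes=len(train_ds.class_names))
    model.fit(train_ds, epochs=epochs)
    model.save(out_path)  # this file is what gets copied to the Raspberry Pi
    return model
```

On the Raspberry Pi, the saved file can be loaded with `tf.keras.models.load_model("gesture_model.h5")`, so only inference runs on the board while the heavier training stays on the computer.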

Execute the train.py script to train the model and save it to your computer. Then execute the Prediction.py script. Remember to change the path of the video to its location on your computer. The scripts will open three windows:

- Media Player, where the video will play.

- Your webcam window, where you can see yourself and the area where you should place your hand.

- An ROI (region of interest) window, which shows the lighting conditions of your background. Always make sure this window is completely black until you place your hand in it.
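One way to express the "completely black" check is to threshold the grayscale ROI and measure how many pixels are lit. This sketch assumes the ROI window shows a binary threshold of the grayscale crop (the same rule as OpenCV's `cv2.threshold` with `THRESH_BINARY`: a pixel becomes 255 if it is above the threshold, else 0); the threshold of 127 and the 1% tolerance are hypothetical values to tune for your lighting.

```python
"""Check that the ROI mask is (almost) black before a hand is placed (sketch)."""
import numpy as np

# Hypothetical values: pixels brighter than THRESHOLD count as "lit", and the
# ROI counts as clear while fewer than MAX_LIT_FRACTION of its pixels are lit.
THRESHOLD = 127
MAX_LIT_FRACTION = 0.01


def binarize(gray_roi: np.ndarray, threshold: int = THRESHOLD) -> np.ndarray:
    """Return a uint8 mask: 255 where the pixel exceeds `threshold`, else 0."""
    return np.where(gray_roi > threshold, 255, 0).astype(np.uint8)


def lit_fraction(mask: np.ndarray) -> float:
    """Fraction of non-zero (white) pixels in the mask."""
    return np.count_nonzero(mask) / mask.size


def roi_is_clear(gray_roi: np.ndarray, threshold: int = THRESHOLD,
                 max_lit_fraction: float = MAX_LIT_FRACTION) -> bool:
    """True when the thresholded ROI is essentially black (no hand, no glare)."""
    return lit_fraction(binarize(gray_roi, threshold)) < max_lit_fraction
```

A `False` result before the hand is in place corresponds to an ROI window that is not fully black, meaning the background or lighting needs adjusting before predictions are reliable.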

NOTE: This project is part of an assignment submitted to Deakin University, School of IT, Unit SIT210 - Embedded Systems Development
