The choice between a fun project and serious assistance. In fact, I found the contest of my dreams: a Lego robot controlled by voice. This is so cool! I wanted to build something really useful and combine it with the fun. I refreshed my knowledge of what Lego Mindstorms can do, remembered that I could use voice control, and began to think about various ideas: a window washer, a shoe shiner. The idea was born while I was cleaning the apartment, next to a recently assembled Lego Technic model.

What if I put a cordless vacuum cleaner on the robot chassis so that it drives and vacuums by itself? Two basic use cases appeared:

1) Cleaning a predefined area

2) Cleaning an indicated area

Any idea should be checked at an early stage.

To me, it was fundamentally important not to imitate the action, but to ensure that the robot cleans for real. This meant using the main brush from the kit (as in the picture above). The main brush copes with this job excellently. However, there are nuances:

1) It is very heavy (the vacuum cleaner weighs 1.2 kg, the main brush 0.7 kg, and the Turtle itself 1.2 kg).

2) In the working position, the brush presses quite tightly against the floor and rests on small rollers.

This means that:

1) A very robust chassis is needed to support this weight.

2) Turns should be performed with the brush raised so that there is no friction between it and the floor.

First of all, it was necessary to verify the feasibility of the idea. I was sure that I could provide a rigid structure; that was an understandable engineering task. Raising the brush is also a solvable task: if the center of gravity is identified correctly, relatively little effort is needed. The most important open question on the mechanical side was whether the motors would be able to carry such a heavy load. I found a paper on the Internet about the power of Lego Mindstorms motors, but I still wanted to make sure that everything works. To imagine how the system would work, I drove the prototype around the apartment by remote control and used the Wizard of Oz technique, inventing commands and simulating their execution. During prototype testing I found that plastic tracks slip on the floor, which would greatly complicate tracking the robot's position. Therefore, I urgently bought hemispherical protective pads for furniture and glued them to all the tracks. This helped a lot, and the ride became better. However, the motors could not cope with the weight of the vacuum cleaner with the brush. After iterative tests, I built a gearbox of my own design that could handle the load. The construction moved with difficulty, but it drove.

After that, a Lego Mindstorms set was bought. Tests showed that the large EV3 motors can handle the vacuum cleaner. It helps a lot that the EV3 has rubber tracks: the robot positions well thanks to the good friction between the tracks and the floor. As you can see in the following video, the design was substantially modified during development. Three places were mainly reinforced:

1) The junction of the vacuum cleaner platform with the base

2) The mounting of the motors and tracks to the base

3) The mechanism that changes the position of the vacuum cleaner

The final design contains parts from other Lego Technic sets.

Python on mindstorms

The installation manual (https://www.ev3dev.org/docs/getting-started/) is really very good. Everything is clear. The image was written to the card on the second attempt. Do not forget to do this as the administrator.

Alexa

I had a second-generation Echo from Germany, so after turning it on, it began to talk to me in German =) The connection went without problems, except that during initialization on the Wi-Fi network the Echo should be at least 2 meters from the router. I found this out by accident. After that, she began to speak English by herself.

Alexa-Mindstorms

This was a tricky part. In Russia, the Alexa application is not available in either the App Store or Google Play. I did not find a way to do the pairing through the web control panel (maybe it is possible, but I don't know how). At first I tried to install Amazon Underground, but that did not help either. In the end, I switched the App Store region to the US and installed the Alexa application, through which I successfully paired Alexa and Mindstorms. The step-by-step missions (https://www.hackster.io/alexagadgets/lego-mindstorms-voice-challenge-setup-17300f) are really good. Everything worked without problems. The only thing I would add is a general scheme of the interaction; it would speed up understanding.

Python for EV3

I rarely write code, and this is my first robot.

Here is a list of links that I found useful for Lego robot design:

- Basic things in the construction of the python language: https://www.learnpython.org/

- About Lego EV3 sensors and motors control:

- API description of python for ev3dev: https://ev3dev-lang.readthedocs.io/projects/python-ev3dev/en/ev3dev-stretch/spec.html

- Many useful code examples and explanation: https://sites.google.com/site/ev3devpython/

- Some videos of Dave Fisher in his youtube channel: https://www.youtube.com/watch?v=RljRPpXF96w

Development cycle of Turtle’s software was the following:

1) Scenario description (textual/graphical)

2) Sub-task breakdown (stages)

3) Python code writing

4) Local testing (on a table or next to a computer area)

5) Testing in a completely real environment (I walked with the computer connected by wire to the Turtle)

6) Placing a working script in Alexa's environment: writing a skill and handler implementation.

For now, there are three completed scenarios for Turtle mark II: two for the vacuum cleaner mode and one for the party mode.

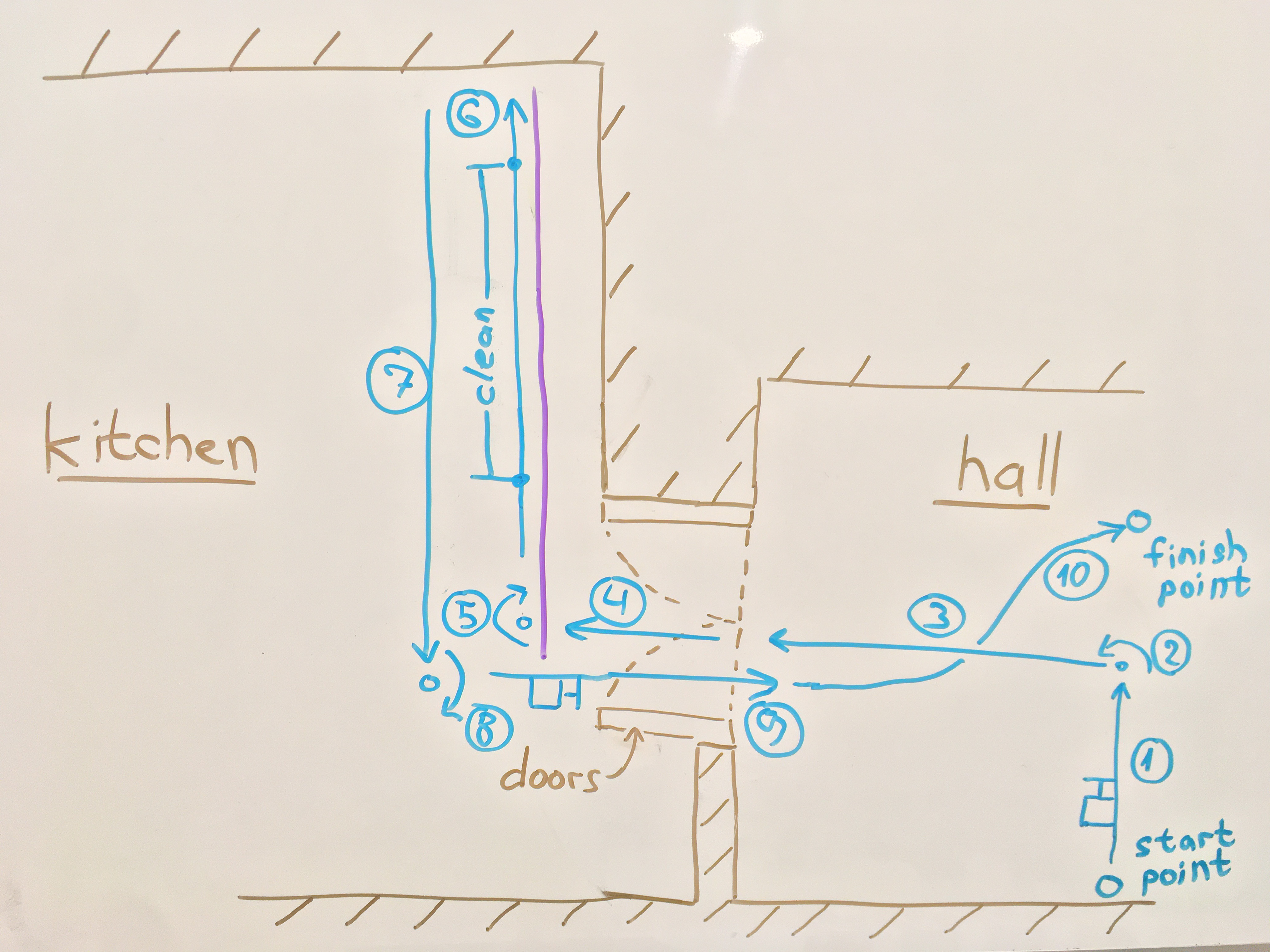

Scenario#1. Vacuum cleaner mode: predefined area cleaning

Part 1. Actions

Initial data

1. Some area in the kitchen is predefined for cleaning (between steps 5 and 6).

2. Start point in the hall is predefined.

3. Finish point in the hall is indicated by an active beacon.

4. A line is used for precise positioning of the Turtle.

You can see a demonstration of the script in the first video above.

Scenario#1 consists of 10 steps. Below, I describe the most interesting part: steps 3 to 6.

After the left turn (step 2) the Turtle goes from the hall to the kitchen. It drives until the black line is reached. During the movement the Turtle checks that the door to the kitchen is open. If it is not, the Turtle asks the user to open it and then continues its movement.
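The decision logic of this step can be tried away from the robot. The following is a minimal sketch, not the on-robot code: the function name `drive_to_line`, the injected reader callables, and the action strings are assumptions made for illustration, and the thresholds of 15 simply mirror the values used in this article. Unlike a loop that speaks on every pass, this sketch asks the user only once per blocked episode.

```python
from typing import Callable, List

LINE_THRESHOLD = 15  # reflected light at or below this counts as the black line
DOOR_THRESHOLD = 15  # IR proximity below this counts as a closed door


def drive_to_line(read_light: Callable[[], int],
                  read_proximity: Callable[[], int],
                  max_steps: int = 1000) -> List[str]:
    """Return the actions taken until the black line is seen (hypothetical helper)."""
    actions: List[str] = []
    blocked = False
    for _ in range(max_steps):
        # Stop as soon as the color sensor sees the line.
        if read_light() <= LINE_THRESHOLD:
            actions.append("line")
            break
        proximity = read_proximity()
        if not blocked and proximity < DOOR_THRESHOLD:
            # Door just detected: stop once and ask the user once.
            blocked = True
            actions.append("stop")
            actions.append("ask")
        elif blocked and proximity > DOOR_THRESHOLD:
            # Door has been opened: resume driving.
            blocked = False
        if not blocked:
            actions.append("drive")
    return actions
```

On the robot, the two callables would wrap the color and infrared sensor reads, and each action string would be replaced by the matching motor or speech call.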

    # hall -> kitchen
    obstacle = 0
    while cl.value() > 15:
        if obstacle == 0:
            self.drive.on(85, 85)  # was 85. TEST
        if ir.value() < 15:
            self.drive.off()
            obstacle = 1  # kitchen door is closed
            # TODO: Alexa speak
            sound.speak('It seems the door to the kitchen is closed, please open it')
        if ir.value() > 15:
            obstacle = 0
    # black line in the kitchen is reached

After the line is reached, the Turtle prepares its position for line following (step 5).

    # one body size forward (was 1.7)
    self.drive.on_for_rotations(50, 50, 1.3, brake=True)
    # turn right until black line is reached
    while cl.value() > 15:
        self.drive.on_for_degrees(100, -100, 20)

The robot then aligns its position for further line following (after step 5).

A good explanation of a PID line follower can be found here: https://medium.com/kidstronics/lego-pid-the-ultimate-line-follower-45d4e517572b
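For readers who have not met PID control before, here is a minimal textbook-style sketch of a single controller step. It is an illustration only and not how any particular library implements line following; the class name `PID` and its fields are assumptions. The three gains play the same roles as the `kp`, `ki` and `kd` arguments in the code in this article.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Discrete PID controller with a fixed time step (illustrative only)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    last_error: float = 0.0

    def step(self, target: float, measured: float) -> float:
        """Return the correction for one sensor reading."""
        error = target - measured
        self.integral += error                # accumulated past error
        derivative = error - self.last_error  # change since the last reading
        self.last_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative
```

In a line follower, `measured` would be the reflected light value, `target` the value at the edge of the line, and the returned correction would be added to one track's speed and subtracted from the other's.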

    # follow line Part_1: Turtle alignment (ms=2000, i.e. 2 seconds)
    self.drive.cs = ColorSensor()
    self.drive.follow_line(
        kp=11.3, ki=0.05, kd=3.2,
        speed=SpeedPercent(30),
        follow_for=follow_for_ms,
        ms=2000
    )

The Turtle turns the vacuum cleaner on by changing the angle of the platform, which is controlled by the medium motor.

    # down and on the vacuum cleaner
    self.updown.on_for_rotations(SpeedPercent(-50), 2)

The Turtle continues to follow the line for 12 seconds (cleaning the section between steps 5 and 6).

    # follow line Part_2: basic cleaning (12 seconds)
    self.drive.follow_line(
        kp=11.3, ki=0.05, kd=3.2,
        speed=SpeedPercent(30),
        follow_for=follow_for_ms,
        ms=12000
    )

The robot gets ready to reach the corner of the kitchen (end of step 6).

    # follow line Part_3: prepare to stop (reach kitchen corner)
    while ir.value() > 20:
        self.drive.follow_line(
            kp=10.0, ki=0.05, kd=3.2,
            speed=SpeedPercent(20),
            follow_for=follow_for_ms,
            ms=500
        )
    self.drive.off()

Part 2. Alexa

After the script has been tested in the real environment, it is time to fire it by telling Alexa to do so! First, a new command is added in the JSON editor of the Alexa developer console. For scenario#1 it is

    "name": {
        "value": "clean"
    }

in the section

    "name": "CommandType",
    "values": [

Second, a sample is added to the samples section:

    "samples": [
        "activate {Command} mode",
        "move in a {Command}",
        "fire {Command}",
        "activate {Command}",
        "platform {Command}",
        "{Command} the kitchen please",
        "{Command} me",
        "{Command} trash"
    ]

After the model is built, Alexa starts to understand the command “Clean the kitchen please”.
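The two manual edits described above (one new slot value, one new sample utterance) can also be expressed as small helpers that work on the same JSON fragments once they are loaded as Python dictionaries. This is only a sketch: the helper names `add_slot_value` and `add_sample`, and the intent name `SetCommandIntent` in the demo, are assumptions for illustration, and a real interaction model has more fields around these fragments.

```python
import json
from typing import Any, Dict


def add_slot_value(slot_type: Dict[str, Any], value: str) -> bool:
    """Append a value to a custom slot type unless it is already listed."""
    existing = {entry["name"]["value"] for entry in slot_type["values"]}
    if value in existing:
        return False
    slot_type["values"].append({"name": {"value": value}})
    return True


def add_sample(intent: Dict[str, Any], sample: str) -> bool:
    """Append a sample utterance to an intent unless it is already listed."""
    if sample in intent["samples"]:
        return False
    intent["samples"].append(sample)
    return True


if __name__ == "__main__":
    # Hypothetical fragments used only to demonstrate the helpers.
    command_type = {"name": "CommandType", "values": [{"name": {"value": "circle"}}]}
    intent = {"name": "SetCommandIntent", "samples": ["activate {Command}"]}
    add_slot_value(command_type, "clean")
    add_sample(intent, "{Command} the kitchen please")
    print(json.dumps({"types": [command_type], "intents": [intent]}, indent=2))
```

Returning a boolean makes it easy to see whether a command was already present, which helps avoid duplicate entries when several scenarios are added one after another.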

Finally, the handler in the Python code should be defined. A new command preset with its invocation variations is added to the related section.

    class Command(Enum):
        """
        The list of preset commands and their invocation variation.
        These variations correspond to the skill slot values.
        """
        MOVE_CIRCLE = ['circle', 'spin']
        MOVE_SQUARE = ['square']
        PATROL = ['patrol', 'guard mode', 'sentry mode']
        FIRE_ONE = ['up', 'lift', 'lyft']
        FIRE_ALL = ['all shot']
        CLEAN_KITCHEN = ['clean kitchen', 'kitchen', 'clean']
        FIND_ME = ['find', 'find me', 'found me', 'come to me', 'come']
        REMOVE_TRASH = ['remove', 'remove trash', 'trash']

All the code from part 1 is placed inside the handler branch for the related preset command.

    if command in Command.CLEAN_KITCHEN.value:
        ir.mode = 'IR-PROX'  # put the infrared sensor into proximity mode.
        #self._send_event(EventName.SPEECH, {'speechOut': "Turtle is coming. What a lovely day!"})
        # go straight (start -> hall)
        self.drive.on_for_rotations(100, 100, 10, brake=True)
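The `if command in Command.X.value` pattern grows by one branch per scenario. As a possible alternative, here is a sketch of a single lookup that maps a spoken slot value to its preset. The function `resolve_command` is an assumption for illustration and not part of the original handler; the enum below repeats only the three presets added in this article so that the example is self-contained.

```python
from enum import Enum
from typing import Optional


class Command(Enum):
    """Subset of the presets from this article, repeated for a runnable example."""
    CLEAN_KITCHEN = ['clean kitchen', 'kitchen', 'clean']
    FIND_ME = ['find', 'find me', 'found me', 'come to me', 'come']
    REMOVE_TRASH = ['remove', 'remove trash', 'trash']


def resolve_command(spoken: str) -> Optional[Command]:
    """Return the preset whose variations contain the spoken text, if any."""
    normalized = spoken.strip().lower()
    for command in Command:
        if normalized in command.value:
            return command
    return None
```

Normalizing the text first means that values with stray spaces or capital letters still match, and a `None` result gives the handler one place to report an unknown command.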

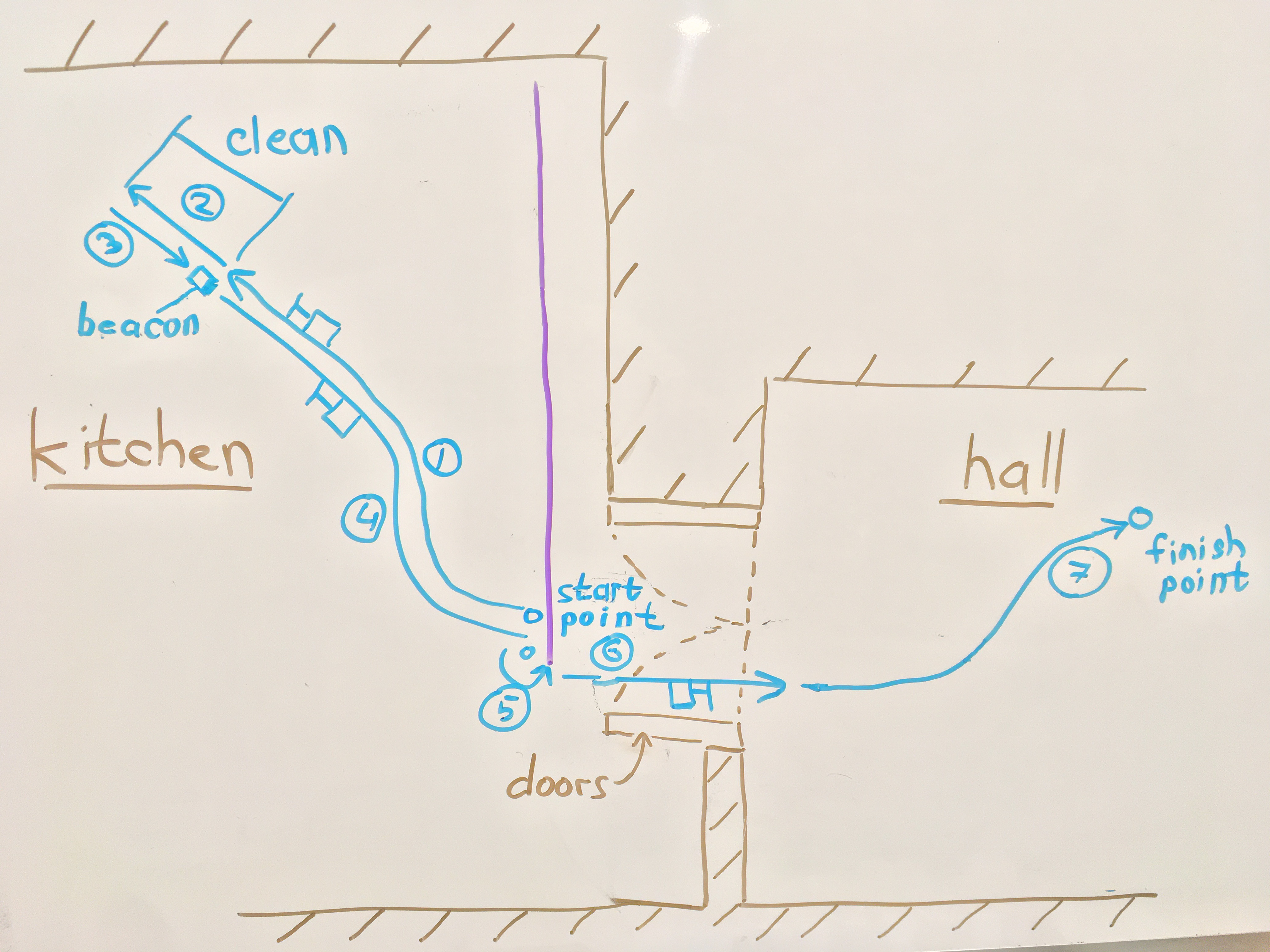

...

Scenario#2. Vacuum cleaner mode: cleaning indicated areas

Initial data

1. Some area in the kitchen is indicated by a beacon.

2. The start point in the kitchen (line) is predefined (path from the hall could be added from scenario#1).

3. The finish point in the hall is indicated by an active beacon.

You can see a demonstration of the script in the first video above.

Part 1. Actions

As in scenario#1, only the most interesting part of scenario#2 is given: steps 1 to 4. Until the desired distance to the beacon is reached (from -5 to 5), the Turtle reads data from the infrared sensor and moves according to the formula self.drive_smooth.on(distance[0]*4, 50), where self.drive_smooth = MoveSteering(OUTPUT_B, OUTPUT_C). That is step 1.
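The steering formula is a simple proportional rule: the further the beacon is off-center, the harder the Turtle steers. The sketch below isolates that rule as pure functions so it can be checked without hardware. The function names, the clamp to ±100, and the handling of a missing reading (`None`) are assumptions added for illustration; the gain of 4 and the ±5 tolerance are the values from this article.

```python
from typing import Optional


def steering_for_heading(heading: Optional[float], gain: float = 4.0,
                         limit: float = 100.0) -> float:
    """Proportional steering: scale the beacon heading and clamp the result."""
    if heading is None:
        # Assumption: with no beacon reading, drive straight ahead.
        return 0.0
    return max(-limit, min(limit, heading * gain))


def reached_beacon(distance: Optional[float], tolerance: float = 5.0) -> bool:
    """True when a valid distance reading lies strictly inside the tolerance band."""
    return distance is not None and -tolerance < distance < tolerance
```

Clamping keeps the steering value inside a fixed range even if the heading reading is unexpectedly large, and the explicit `None` checks avoid a `TypeError` if the sensor temporarily loses the beacon.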

    # go to beacon from black line
    distance = ir.heading_and_distance()
    sound.speak('Garbage spot detected!')  # TODO: Alexa talk
    flagTarget = 0
    while flagTarget != 1:
        self.drive_smooth.on(distance[0]*4, 50)
        movements.append(distance[0])
        index = index + 1
        distance = ir.heading_and_distance()
        if distance[1] > -5 and distance[1] < 5:
            flagTarget = 1
    self.drive_smooth.off()

Steps 2 and 3 are quite simple: when the Turtle reaches the beacon, it turns the vacuum cleaner on and cleans a small area near the beacon.

    # clean area
    # down and on the vacuum cleaner
    self.updown.on_for_rotations(SpeedPercent(-50), 2)
    # one body size forward
    self.drive.on_for_rotations(50, 50, 2, brake=True)
    self.motorB.wait_while('running')
    # go back to area start point
    self.drive.on_for_rotations(-50, -50, 2, brake=True)
    self.motorB.wait_while('running')
    # up and off the vacuum cleaner
    self.updown.on_for_rotations(SpeedPercent(100), 2)
    sound.speak('Garbage cleaned, no more litter please.')  # TODO: Alexa talk

Now comes the tricky part. In step 4 the Turtle should return to the start point. The problem is that during step 1 the trajectory of the Turtle could be complex (some curve). In step 4 the Turtle must repeat all the movements from step 1, but in reverse order. To do so, all movements during step 1 are saved in an array: movements.append(distance[0]). During step 4 the Turtle moves according to the values of the movements array, which are read from end to start, and drives backward (-50).
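The replay idea can be separated from the motors: given the recorded headings, build the list of (steering, speed) pairs to send on the way back. This is a sketch under assumptions: the function name `reverse_plan` is hypothetical, and the default gain of 3 and speed of -50 are taken from the return-trip code in this article.

```python
from typing import Iterable, List, Tuple


def reverse_plan(movements: Iterable[float], gain: float = 3.0,
                 speed: float = -50.0) -> List[Tuple[float, float]]:
    """Turn recorded headings into (steering, speed) commands, last one first."""
    return [(heading * gain, speed) for heading in reversed(list(movements))]
```

Because the replay is open-loop (nothing measures where the Turtle actually is on the way back), small errors add up over the path. That is why the gain and the delay between commands need tuning by hand, and why they differ with and without the vacuum cleaner mounted.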

    # go to kitchen start point
    i = len(movements)-1
    while i != -1:
        self.drive_smooth.on(movements[i]*3, -50)
        sleep(0.005)  # with cleaner 0.005, without cleaner 0.0055
        i = i - 1
    self.drive_smooth.off()

Part 2. Alexa

As for scenario#1, a new command (“remove”) is added in the JSON editor of the Alexa developer console.

    "name": "CommandType",
    "values": [
        {
            "name": {
                "value": "remove"
            }
        },

A sample is added ("{Command} trash").

    "samples": [
        "activate {Command} mode",
        "move in a {Command}",
        "fire {Command}",
        "activate {Command}",
        "platform {Command}",
        "{Command} the kitchen please",
        "{Command} me",
        "{Command} trash"
    ]

A new command preset with its invocation variations is added to the related section (REMOVE_TRASH).

    class Command(Enum):
        """
        The list of preset commands and their invocation variation.
        These variations correspond to the skill slot values.
        """
        MOVE_CIRCLE = ['circle', 'spin']
        MOVE_SQUARE = ['square']
        PATROL = ['patrol', 'guard mode', 'sentry mode']
        FIRE_ONE = ['up', 'lift', 'lyft']
        FIRE_ALL = ['all shot']
        CLEAN_KITCHEN = ['clean kitchen', 'kitchen', 'clean']
        FIND_ME = ['find', 'find me', 'found me', 'come to me', 'come']
        REMOVE_TRASH = ['remove', 'remove trash', 'trash']

The code from scenario#2 part 1 is placed inside the handler branch for the related preset command.

    if command in Command.REMOVE_TRASH.value:
        ir.mode = 'IR-SEEK'
        index = 0  # for movements
        movements = []  # history of all distance[0] during the journey to the beacon
        ...

Scenario#3. Party mode: delivery of treats to guests

In this mode the Turtle entertains guests by delivering treats (candies, cookies, pizza, etc.). Guest#1 takes something with an active beacon in it, for example a toy bear. Then Guest#1 asks the Turtle through Alexa to find them. After that, when Guest#2 wants some treats, Guest#1 throws the toy bear to Guest#2, and so on. Thus this scenario is an entertainment element of a party. See the second demo video above.

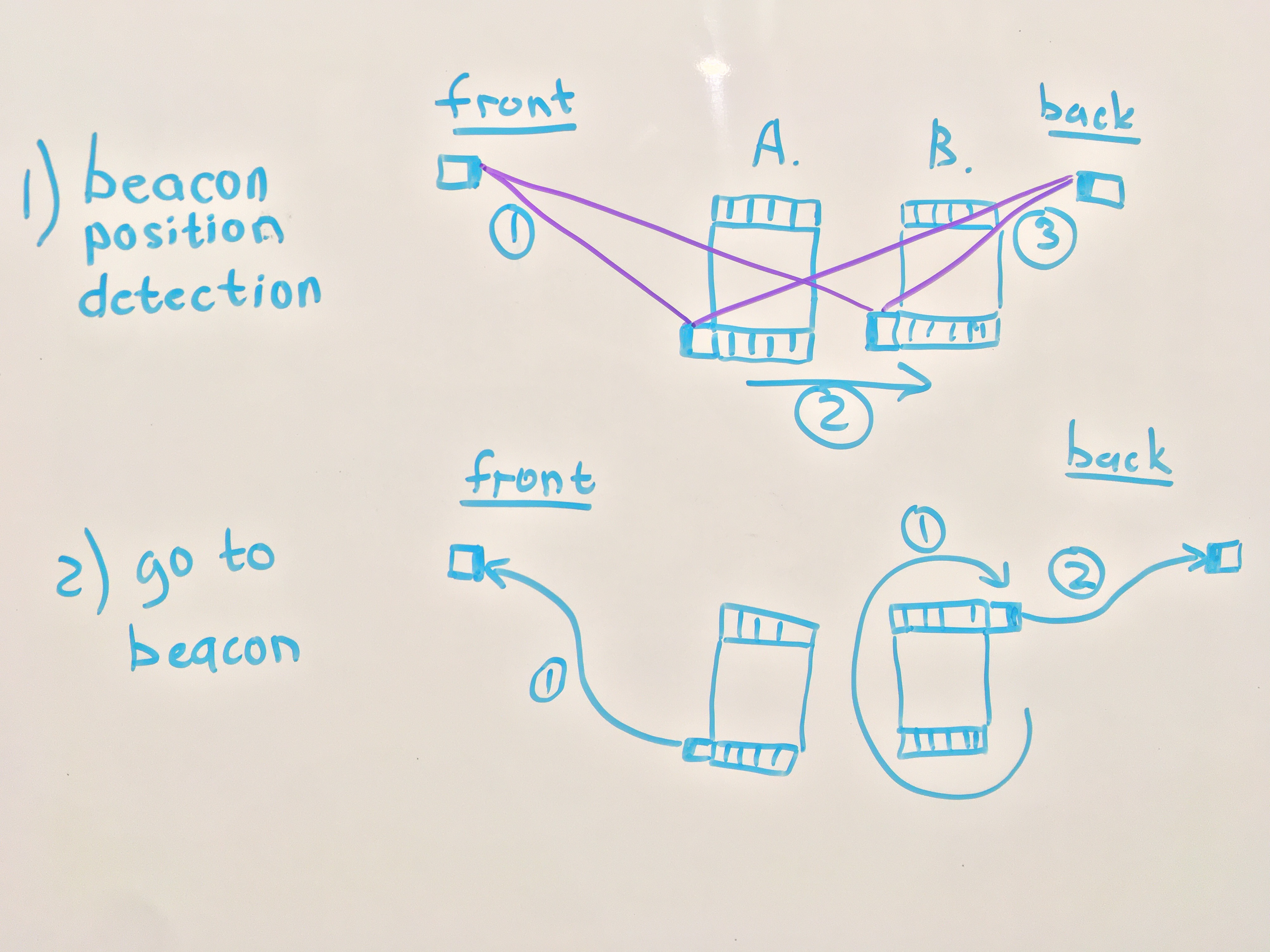

Part 1. Actions

The basis of this scenario is the Turtle's movement to the active beacon (steps 2.1 and 2.2), which was already described in scenario#2, step 1. Before the Turtle starts to move to the beacon, the direction must be determined; otherwise the Turtle may start moving in the wrong direction. This happens when the beacon is behind the Turtle. To solve this, the position of the beacon relative to the Turtle is determined before the movement starts. In addition, the values from the infrared sensor fluctuate from time to time, which also increases the probability of error.

The solution consists of 3 steps. At step 1.1 the average distance value at point A is collected.

    while count < 10:  # get average value of distance[1] in position 1 (initial)
        distance = ir.heading_and_distance()
        pos1_distance += distance[1]
        count += 1
    pos1_distance = pos1_distance / 10

At step 1.2 the position of the Turtle is changed.

    self.drive.on_for_rotations(-40, -40, 3)  # change Turtle location from position1 to position2
    self.motorB.wait_while('running')

At step 1.3 the average distance value at point B is collected.

    while count < 10:  # get average value of distance[1] in position 2
        distance = ir.heading_and_distance()
        pos2_distance += distance[1]
        count += 1
    pos2_distance = pos2_distance / 10

After that, the distance values at positions A and B are compared and, if necessary, the Turtle turns around.
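The three steps boil down to two small calculations: average several noisy readings at each position, then compare the two averages. The sketch below shows them as pure functions. The function names and the skipping of missing readings (`None`) are assumptions for illustration. The comparison relies on the fact that the Turtle backs up between the two positions: if the distance did not grow, the beacon is taken to be behind it.

```python
from statistics import mean
from typing import Iterable, Optional


def average_distance(samples: Iterable[Optional[float]]) -> Optional[float]:
    """Average the valid readings; return None if there were none."""
    valid = [s for s in samples if s is not None]
    return mean(valid) if valid else None


def beacon_is_behind(before: Optional[float],
                     after: Optional[float]) -> Optional[bool]:
    """Compare averages taken before and after backing up.

    Returns None when either average is missing, so the caller can retry.
    """
    if before is None or after is None:
        return None
    return after <= before
```

Averaging over the valid readings only, instead of dividing by a fixed count, keeps one dropped reading from skewing the result toward zero.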

    if pos2_distance <= pos1_distance:
        self.drive.on_for_rotations(40, -40, 3)  # turn around Turtle
        self.motorB.wait_while('running')

Part 2. Alexa

As for scenarios #1 and #2, a new command (“find”) is added in the JSON editor of the Alexa developer console.

    "name": "CommandType",
    "values": [
        {
            "name": {
                "value": "find"
            }
        },

A sample is added ("{Command} me").

    "samples": [
        "activate {Command} mode",
        "move in a {Command}",
        "fire {Command}",
        "activate {Command}",
        "platform {Command}",
        "{Command} the kitchen please",
        "{Command} me",
        "{Command} trash"
    ]

A new command preset with its invocation variations is added to the related section (FIND_ME).

    class Command(Enum):
        """
        The list of preset commands and their invocation variation.
        These variations correspond to the skill slot values.
        """
        MOVE_CIRCLE = ['circle', 'spin']
        MOVE_SQUARE = ['square']
        PATROL = ['patrol', 'guard mode', 'sentry mode']
        FIRE_ONE = ['up', 'lift', 'lyft']
        FIRE_ALL = ['all shot']
        CLEAN_KITCHEN = ['clean kitchen', 'kitchen', 'clean']
        FIND_ME = ['find', 'find me', 'found me', 'come to me', 'come']
        REMOVE_TRASH = ['remove', 'remove trash', 'trash']

The code from scenario#3 part 1 is placed inside the handler branch for the related preset command.

    if command in Command.FIND_ME.value:
        ir.mode = 'IR-SEEK'
        sound.speak('On my way!')
        # start -> kitchen
        ...

Vacuum cleaner mode

1) Increase Alexa's usage, including reporting the Turtle's status via Alexa.

2) Place the vacuum cleaner on its charging station when the Turtle returns to the finish point.

3) Eliminate the use of lines for precise movement by using more advanced algorithms.

Party mode: delivery of treats to guests

Make a lifting platform so that you do not have to lean down to the robot. Almost everything is ready: the Up command, which is controlled by the medium motor, is prepared and tested. A prototype lift has been built, but it does not hold the platform stably and needs to be improved.

R2D2 mode: Turtle controls external systems

The Turtle connects to a Lego railway so that you can control a Lego train by voice.

I am going to continue work on the project. Stay tuned! The project page will be updated, or links to updates will be posted.
