This project demonstrates how to build a battery-operated edge device that measures the pressure level of car tires using machine vision, and alerts users if the tires are unsafe to drive on.

As a car drives in front of the device, images of its tires are captured and classified as either "full" or "flat". An indicator light on top of the device changes color to notify users of the result. Any tire showing "red" is at very low pressure and should be fixed before driving further on it.

Edge Impluse was used to design and train an image classification model that accurately distinguishes between images of tires at normal pressure (full) and low pressure (flat) levels. The model is deployed to and runs on an OpenMV Cam within the device.

This prototype serves as a proof of concept that can be adopted by any industry where vehicles are regularly inspected as a way to reduce inspection times and increase safety.

BackgroundVehicle safety is paramount to anyone driving on the road. Every day drivers rely on properly maintained cars and trucks to get them from place to place without harm.

Multiple industries are tasked with making sure large quantities of vehicles are routinely vetted as being safe for the road:

- Truck Weigh Stations: Operated by the DOT, these stations verify that trucks on the highway comply with safety regulations. Inspectors physically check trucks to make sure they meet required standards.

- Autonomous Car Fleets: Large fleets of autonomous cars will be serviced at centralized depots. Regular maintenance ensures that car systems are calibrated accurately and operating as expected.

- Rental Car Returns: Rental companies need to make sure cars are in safe working condition before being lent out to the next customer. Multi-point safety checks must be conducted before cars are again serviceable.

While entire checklists must be covered to validate a vehicle as safe, one critically important check is tire pressure.

Having the correct amount of air pressure in each of a vehicle's tires is vital for safe driving. Underfilled or flat tires hurt the overall handling of the vehicle, making it less responsive and slower to stop, and can lead to extremely dangerous tire blowouts on the road.

Cars and trucks are typically outfitted with tire pressure monitoring systems (TPMS) that alert drivers about low tire pressure via a dashboard warning light.

However, the limits to TPMS are that its warning is only viewable to inspectors who can access the vehicle's interior, and it relies on a single sensor inside the tire to capture such a crucial safety measurement.

In environments where many vehicles are manually inspected, such as the ones listed above, there is a need for an automated, offboard way to accurately measure tire pressure.

With this, inspectors would no longer need to enter vehicles, and accurate readings would instead be taken remotely. Such a system would shorten inspection times while providing added safety validation.

This project aims to solve this problem with a prototype device that quickly determines a tire's pressure from outside the vehicle using machine vision, and notifies inspectors of the result.

How it WorksThe device consists of a camera and electronics, housed on an adjustable stand, that can be deployed anywhere cars drive by in order to measure tire pressure levels.

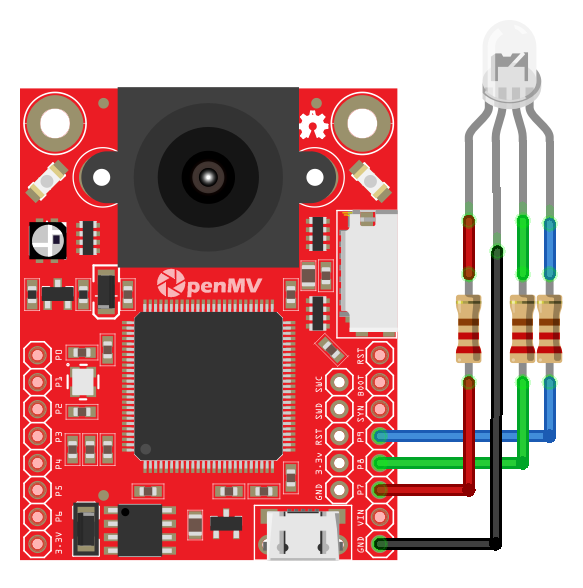

An OpenMV Cam H7 Plus microcontroller board provides the device's computing power and machine vision capabilities.

The OpenMV Cam includes an onboard camera, processor, and storage. Python scripts can be used to programmatically capture, save, and process images, as well as control the board's I/O pins. The board fits into the device's 3D printed housing, with its camera extending through a hole in the front face.

An RGB LED is connected to the OpenMV Cam's I/O pins with jumper wires and fits through a hole in the top of the housing. Power is provided to the board by a connected LiPo battery.

A backplate secures all the electronics and battery within the housing, which then couples into the 3D printed stand.

The assembled device is placed to the side of where target cars will be located, aimed so the camera's field of view will cover the position of a single tire with an additional 6-12 inch buffer area surrounding it.

This is approximately 3 ft. from the door side of the car, with the electronics housing tilted slightly upward.

Once the device is powered on, a Python script loaded onto the OpenMV Cam automatically begins to execute.

The script runs continuously, looping through the following processing steps:

- Capture an Image: A 240x240 pixel grayscale image is captured with the onboard camera and stored in the Cam's flash memory.

- Classify the Image: The image is processed through a trained TensorFlow image classification model that classifies it as either "full", "flat", or "no-tire".

- Notify the User: The RGB LED is illuminated green, red, or yellow depending on which target class the image was determined to be.

The TensorFlow model used to classify each image has been trained on a labeled dataset of example images from three classes:

"Full" images of properly inflated tires; "Flat" images of underfilled tires; and "No-Tire" images of non-tire objects like the side of a car or empty garage.

See the Build Instructions section below for more details on the creation of the labeled dataset that was used.

Through repeated exposure to hundreds of example images during training, the model learned which image shapes and features can be used to accurately differentiate between the three classes.

Images captured by the OpenMV Cam are processed by the trained model, which outputs prediction scores for each class based on how well the input image matches the shape and feature discriminators the model learned.

Whichever class - "full", "flat", or "no-tire" - has the highest prediction score is considered the singular classification of that image.

The notification light is programmed to change its color - to "green", "red", or "yellow" - based on the classification of the image.

The processing workflow runs at ~5 frames per second, which is fast enough to provide real-time results for slow-moving cars.

Beginning with a car's tire initially out of view of the device, captured images are correctly classified as "no-tire" and the indicator light shows yellow.

As a car drives in front of the device and its tire enters the camera's field of view, captured images are classified as either "full" or "flat" depending on the tire's pressure level, and the indicator light changes to green or red accordingly.

Users, or inspectors, simply need to monitor the color of the notification light as a car drives by to validate the state of its tires.

If the notification light changes to green as a tire crosses the device, inspectors know the tire is properly inflated and safe to drive on. A red notification light indicates the tire has low pressure and needs to be filled before driving further.

Build InstructionsBelow are end-to-end instructions on how to build, prototype, and deploy the flat tire classifier device.

Begin this project with an initial setup of the device's hardware. Next, use OpenMV IDE to create a labeled dataset of images from three target classes - "full", "flat", and "no-tire".

Edge Impulse is then used to design and train an image classification model using the dataset. The trained model is exported to the OpenMV Cam and tested.

Finish the project by connecting an RGB LED to the microcontroller and updating the Python code to make it function as a notification light.

Initial Hardware SetupThe image classification model employed by the device will perform best if it is trained on example images captured at the same perspective as the device when it is fully assembled and positioned at ground level.

Ensure the Open MV Cam H7 is positioned correctly during upcoming dataset creation steps by first placing it in the same 3D printed housing and stand used for the final deployment.

This initial setup does not require an LED or battery.

Step 1: Print Housing and Stand

Model files (.stl) for the housing and stand are provided in the project's attachments. 3D print each file to create the system's three parts - the housing, backplate, and stand.

Sanding may be required to fit the housing's arms into the holes of the stand. The backplate pressure fits into the back of the housing to enclose the electronics.

Step 2: Fit OpenMV Cam

The OpenMV Cam H7 Plus fits inside the housing with its camera extending through the hole in the front face.

The Cam H7 pressure fits into its position, and its pins can provide a tighter fit by bending them slightly outward towards the walls of the housing.

A hole in the bottom of the housing provides access to the Cam H7's USB socket.

Create a Labeled DatasetA custom dataset of labeled images is required to properly train the image classification model that the device will employ.

The aim is for the model to accurately distinguish images as one of three classes - "full", "flat", or "no-tire" - which can be achieved by exposing the model to many example images of each class during training.

The training relies on a dataset of example images that are each paired with a label of the class they represent. Each class is defined to include specific content:

- Full: Images near-centered on a single tire that is properly filled (~45 psi). The whole tire must be viewable and fill the majority of the frame.

- Flat: Images near-centered on a single tire that is underfilled (~10 psi). The whole tire must be viewable and fill the majority of the frame.

- No-tire: Images of non-tire objects like the side of a car or empty garage. Images of partial tires are included in this class.

These definitions support the device's intended application. As a car drives past the device, images of the garage background, car panels, and partial tires should be classified as "no-tire". "Full" and "flat" classes should only be categorized when the car's tires move entirely into the camera's frame.

Build the dataset by capturing a wide variety of images that fit the definitions of each class. Image diversity ensures the model gets exposed to the many differences that can exist between images of the same class.

OpenMV IDE and a connected OpenMV Cam H7 Plus are used to collect and organize images for the dataset.

These instructions assume you have access to a vehicle, a small area to drive, and the ability to alter the vehicle's tire pressures.

Step 1: Setup Capture Area

Begin the process by setting up a laptop and the initial hardware in an area where the subject vehicle is located.

The vehicle should be able to be repositioned slightly forward or backward by a few feet throughout the capture process.

Position the initial hardware setup ~3 ft. away from the side of the vehicle to match how the device will be deployed in its final setup. Connect the Cam H7 in the device to the laptop through a USB cable.

Step 2: Initialize a New Dataset

Open OpenMV IDE on the laptop. From the 'Tools' menu, click 'Dataset Editor' then 'New Dataset'.

When prompted, create a new folder called tire-dataset and select it. This folder will contain all of the dataset images and related files. The folder is opened within the Dataset Editor panel within the IDE.

Step 3: Edit Capture Script

A dataset_capture_script.py Python file is automatically created within the dataset folder and opened inside the IDE editor window. This script is used to initialize camera settings for the Cam H7 before capturing images.

Images collected for this dataset should be captured in square dimensions (240x240 px) and grayscale color. Both these settings will ensure images match the input formatting expected by the model.

Edit the camera configuration code block in the script by updating the sensor.RGB565 value to sensor.GRAYSCALE and adding a line defining the image size with sensor.set_windowing((240, 240)).

The updated code block should be as follows:

sensor.reset()

sensor.set_pixformat(sensor.GRAYSCALE) # Set pixel format to GRAYSCALE

sensor.set_framesize(sensor.QVGA) # Set frame size to QVGA (320x240)

sensor.set_windowing((240, 240)) # Set 240x240 window.

sensor.skip_frames(time = 2000)With the Cam H7 connected to the laptop via USB cable, click the "Connect" button in the lower-left corner of the IDE.

Click the "Start" button in the lower-left corner to begin executing the data_capture_script.py script. Once running, a live preview window of what the camera sees, with configuration settings applied, is viewable in the Frame Buffer panel of the IDE.

Step 4: Capture Images

Begin with the vehicle parked and its tires fully inflated (~45 PSI). Use the live preview window in the IDE to position the device so the camera is centered on a single tire, as defined by the "full" class above.

In the Dataset Editor panel of the IDE click the "New Class Folder" button and enter the class name "full" when prompted. This creates a new full.class subfolder within the tire-dataset folder to store images for this class.

Click on the full.class folder within the Dataset Editor menu to highlight it. Any new images captured for the dataset are placed into whichever class subfolder is currently highlighted.

With the camera in position, begin collecting "full" class images by clicking the "Capture Data" button. Each click captures and saves a JPEG image. Between each click, slightly vary the position and angle of the camera to produce small differences between successive images.

Collect "full" images at different tire rotations by periodically moving the vehicle a few feet forward or backward, repositioning the camera, and continuing the collection process. Varied rotations ensure that the model doesn't learn a specific hub cap rotation angle as part of the class features.

Repeat this process until an entire set of "full" images is collected. The total number of images used in this project was 300 per class.

Next, let the air out of the vehicle's tires until they are considered flat (~10 PSI). Create a new "flat" class and highlight the flat.class subfolder in the Dataset Editor menu.

Repeat the collection process, this time with images that meet the "flat" class definition. Vary distance, angles, and tire rotation during the process and continue until the entire set of 300 "flat" images are collected.

Finally, create a new "no-tire" class and add images to the no-tire.class folder using a similar process as above. These images should meet the "no-tire" class definition and include images of non-tire objects, the side of the car, and images with partial tires. Continue until the entire set of 300 "no-tire" images are collected.

When complete, the tire-dataset folder should include full.class, flat.class, and no-tire.class subfolders that contain 300 example images each, for a total of 900 images. A labels.txt file was also generated which includes the three class names.

A copy of dataset images collected for this project is hosted here.

Build and Train a Machine Learning ModelEdge Impulse is used to design, train, test, and build a TensorFlow image classification model that is used by the device. The platform provides an end-to-end, web-based workflow that guides users through each of these steps.

The labeled dataset created earlier is used by Edge Impulse to train the image classification model and test its performance. The performance accuracy achieved by the model gives insight into how well it will perform once deployed on the device and used to classify newly captured images.

These instructions assume you have an Edge Impulse account.

Step 1: Create a New Project

Edge Impulse organizes work into projects, where input data, workflow progress, and other project details are stored together. Create a new project to begin the image classification process.

From the main dashboard of Edge Impulse (or the user icon dropdown) click "Create new project". When prompted, enter the project name tire-classification and click "Create new project".

A new tire-classification project is created and the website redirects to the project's dashboard page, which shows an overview of the project's details.

Step 2: Upload the Dataset

Use OpenMV IDE to upload the labeled dataset to the newly created Edge Impulse project.

If the dataset is not still open in the Dataset Editor of OpenMV IDE, open it from the 'Tools' menu. Select 'Dataset Editor', then 'Open Dataset'. Select the dataset's top-level tire-dataset folder and click Open.

Link OpenMV IDE to your Edge Impulse account to enable the upload. In OpenMV IDE, from the 'Tools' menu, select 'Dataset Editor', then 'Export', then 'Login to Edge Impluse Account and Upload to Project'. Type in your Edge Impulse username and password when prompted and hit OK.

A dropdown dialog appears prompting you to select a project from your Edge Impulse account. Select the tire-classification project and hit OK.

Next, a Dataset Split dialog will prompt you to select how to split the data between training and testing sets. Leave the default 80/20% split and hit OK.

A progress bar appears showing the upload progress. After it is complete a dialog detailing how many images were uploaded to the project is shown.

In Edge Impluse, click Data Acquisition in the navigation menu to see an overview of the data uploaded to the project.

This page allows you to see which individual images were split between the training and test sets. Click any image name to see a preview of it.

Step 3: Impulse Design

Within Edge Impulse, an impulse is a chain of processing blocks that are arranged and configured to accomplish a certain machine learning task. Different blocks are used to collect, preprocess, and create outputs from project data.

Design an impulse for this project that formats input images, generates image features, and uses transfer learning to build an image classification model.

Begin by clicking Impulse Design in the navigation menu to see the project's default impulse layout.

The impulse includes an Image Data block that defines how input images will be resized during processing. Keep the default values for this block. When processing occurs, the 240x240 pixel input images will be downsampled to 96x96 pixels. This is a standard image size used by some of the transfer learning models available on Edge Impulse.

Click 'Add a processing block'. In the popup menu, click 'Add' on the Image block item. The new Image block appears in the impulse layout. Keep the default values of this block, which is used to define the input into the final learning block.

Click 'Add a learning block'. In the popup menu, click 'Add' on the Transfer Learning block item. The new Transfer Learning block appears in the impulse layout. Keep the default values of this block, which define the inputs (images) and outputs (class labels) that the block will use.

Finish designing the impulse by clicking 'Save Impulse'. Image and Transfer Learning items are added under Impulse Design in the navigation menu.

Step 4: Image Feature Generation

Images can be converted into a list of features to better work as inputs into machine learning models. Generating these features is required before training can take place.

Click on Image in the navigation menu. This opens the Parameters tab of the Image page, which allows users to view raw image data and alter processing parameters before feature extraction. Since the dataset images were collected in grayscale color, select 'Grayscale' for the color depth option. Click 'Save Parameters'.

The Generate Features tab is opened. Click the 'Generate Features' button to begin the process. Once complete, a reduced-dimension version of the dataset features is displayed in a 3D visualization.

Explore this chart to see the separation and clustering of images from each class in 3D space. Clear delineations between the classes can indicate that the model will perform well when learning to distinguish between the classes.

Step 5: Transfer Learning

With the dataset processed and converted into features, it can now be used to train an image classification model. Next, configure the model's learning parameters and initiate the training.

Begin by clicking Transfer Learning in the navigation menu, which opens the Transfer Learning page. The Neural Network Settings window allows users to configure both the training settings and the architecture of the neural network that will be used.

Training settings control how the process of training is performed, including the number of training cycles conducted and the learning rate. These parameters affect how many times the model is exposed to the training data and the size of adjustments made to the model's weights and biases as it learns. Both parameters can affect the overall accuracy that the model achieves.

Leave the training settings at their default values for this project.

Neural network architecture settings control the structure, size, and type of network that is used in the model. The input layer (generated features) and output layer (3 class features) remain constant, but architecture settings can adjust all the model layers in between. Different model types may result in large differences in performance.

Edge Impulse enables users to utilize transfer learning, where a model that has already been pre-trained on a large dataset of images is trained further for a specific task. Transfer learning saves on training time and benefits from learning that has already taken place. Use a well-established, pre-trained model for this project's image classification task.

Click 'Choose a different model' and then 'Add' for the MobileNet V2 96x96 0.35 option. The MobileNet V2 model is specially designed to work on embedded devices and achieve high accuracy in image classification tasks.

Click 'Start Training' to begin the training process. An output console will show the training progress, including its validation accuracy after each training epoch. This val_accuracy value should increase as the model gets exposed to more data and better learns how to correctly classify the images.

After training is complete the Model window shows statistics about the model's performance. Validation set accuracy is displayed, as well as a confusion matrix and feature explorer.

The confusion matrix shows how often images were correctly classified, as well as which classes they were incorrectly classified as.

The matrix above shows that 100% of the "no-tire" validation images were correctly classified as "no-tire". This is understandable because the feature explorer showed a clear separation between the "no-tire" images in the feature space.

The majority of "full" and "flat" images were correctly classified (97.8% and 94.7%), but a few percent of images were misclassified as the opposite. This makes sense because there was less separation between the "full" and "flat" classes, making it harder for the model to distinguish them with 100% accuracy.

Step 6: Model Testing

With the model fully trained it can now be applied to the test portion of the data that was initially split from the training data. Applying the model to the test data will validate its performance further.

Click on Model Testing in the navigation menu. The Model Testing page shows a list of all dataset images included in the test split. Click 'Classify All' to begin the classification process.

When the process is complete, results from the testing will be displayed, including an overall accuracy value and confusion matrix.

The testing validation shows 100% accuracy when classifying test data images. This is the best result possible and implies that the model will perform very well when deployed on new images.

If your model did not achieve a high accuracy during testing there are a few changes that can be made:

- Investigate the quality of images in the labeled dataset to ensure that they conform to the class definitions that distinguish them. More images may need to be captured to add to the training and testing sets.

- Tune the training and model architecture parameters to see if a better accuracy can be achieved. These parameters can have a large effect on the model's performance.

When the model reaches the desired accuracy it is ready to be deployed and used on the device.

Deploy the ModelThe trained model exists on Edge Impulse and must be deployed to the OpenMV Cam within the device. Once deployed, the model can be tested in real-time on images that are captured live from the device's camera.

Step 1: Export the Model

Begin by first exporting the trained model from Edge Impulse.

Click on Deployment in the navigation menu. On the following page click on 'OpenMV' then 'Build'. An ei-tire-classification-openmv-v1.zip zip file is downloaded.

Unzip the file to access a directory that contains three files: labels.txt, trained.tflite, and ei_image_classification.py.

Step 2: Copy Files to the OpenMV Cam

The labels.txt file contains a list of the three image class names. The trained.tflite file is the trained TensorFlow model. Both files need to be copied onto the OpenMV Cam's onboard storage to be utilized.

With the OpenMV Cam connected to the computer via USB cable, select and copy labels.txt and trained.tflite, then paste them into the top-level of the OpenMV Cam's file system that shows up as a connected storage device on the computer.

Step 3: Add Prediction Overlays

Next, open the ei_image_classification.py file in OpenMV IDE. This script includes all the Python code for configuring the OpenMV Cam, capturing images, and processing them through the TensorFlow model.

The content of the Python script is shown here:

import sensor, image, time, os, tf, pyb

sensor.reset()

sensor.set_pixformat(sensor.GRAYSCALE)

sensor.set_framesize(sensor.QVGA)

sensor.set_windowing((240, 240))

sensor.skip_frames(time=2000)

net = "trained.tflite"

labels = [line.rstrip('\n') for line in open("labels.txt")]

clock = time.clock()

while(True):

clock.tick()

img = sensor.snapshot()

# default settings just do one detection... change them to search the image...

for obj in tf.classify(net, img, min_scale=1.0, scale_mul=0.8, x_overlap=0.5, y_overlap=0.5):

print("**********\nPredictions at [x=%d,y=%d,w=%d,h=%d]" % obj.rect())

img.draw_rectangle(obj.rect())

# This combines the labels and confidence values into a list of tuples

predictions_list = list(zip(labels, obj.output()))

for i in range(len(predictions_list)):

print("%s = %f" % (predictions_list[i][0], predictions_list[i][1]))

print(clock.fps(), "fps")After the code does an initial configuration of the camera, it enters a while loop that continues to run as long as the microcontroller has power.

At each iteration of the loop, a new image is captured from the camera using sensor.snapshot(). The image img and the model path net are then passed into tf.classify(), which performs the image classification.

The classification results in a prediction value (a number between 0 and 1) being calculated for each of the three images classes. These values sum to one and correspond to the model's confidence that the image belongs to each class.

Labels and prediction values are stored in predictions_list.

As camera images are continuously captured and classified by the model, the script prints the model's prediction values to the serial terminal.

To make viewing these values easier, overlay them on the Frame Buffer camera preview by adding the following line inside the script's second for loop:

img.draw_string(5, 10*i+5,"%s = %f" % (predictions_list[i][0].upper(), predictions_list[i][1]))Step 4: Test on Live Images

Test how the model is performing on live images by running the script and watching the prediction values change as the camera views different objects.

With the OpenMV Cam connected to the OpenMV IDE, click the 'Start' button to begin running the ei_image_classification_script.py script.

While watching the Frame Buffer preview, point the camera at various objects in the capture area. As the camera moves, prediction values for "full", "flat", and "no-tire" update with every frame.

When the camera is pointed at a non-tire object, the "no-tire" value should be closest to 1. When pointed at a tire, either the "full" or "flat" prediction values should be the largest based on the pressure of the tire.

Add a Notification SystemAs is, the model's prediction results are only viewable when the OpenMV Cam is connected to OpenMV IDE through the serial terminal or Frame Buffer window.

Create the final version of the device by connecting an RGB LED to the microcontroller to use as a notification light. This will allow users to see the result of the highest predicted class while the device is battery-operated and disconnected from a computer.

Step 1: Connect the RGB LED

Begin by first disconnecting the OpenMV Cam from the computer and removing it from the 3D printed housing.

Connect an RGB LED to the microcontroller following the diagram below. The R, G, and B leads of the LED are connected to pins 7, 8, and 9, respectively, using 330-ohm resistors. The ground lead is connected to the GND pin.

Connections can be made with jumper wires. Electrical tape should be used to cover the resistor connections to prevent any short circuits between the exposed wires.

The position of the LED within the housing puts it in close contact with the back of the microcontroller board. Electrical tape can be placed on the back of the microcontroller to prevent any unwanted contact between LED wires and the board's components.

Step 2: Update Python Script

Next, the ei_image_classification_script.py is modified to add additional functionality for controlling the RGB LED.

With the script opened in the OpenMV IDE editor, add the following line under the initial Python imports to import the Pin object from the pyb library:

from pyb import PinUse Pin to initialize the three GPIO pins connected to the RGB LED as outputs by adding the following lines below where net and labels are defined:

# Define output pins for RGB LED

pin_r = Pin("P7", Pin.OUT_PP)

pin_g = Pin("P8", Pin.OUT_PP)

pin_b = Pin("P9", Pin.OUT_PP)As outputs, the pins can be programmatically turned on or off by setting them to high or low, respectively. Different LED colors can be created by controlling the combination of R, G, and B pins that are on or off.

The aim is to set the LED color based on the label of the largest prediction value from the model. Add the following color_by_label function under the previous code block:

# Function to set RGB LED color based on input label

def color_by_label(label):

if label == 'flat':

# Red

pin_r.high()

pin_g.low()

pin_b.low()

elif label == 'full':

# Green

pin_r.low()

pin_g.high()

pin_b.low()

elif label == 'no-tire':

# Yellow

pin_r.high()

pin_g.high()

pin_b.low()This function takes a label (ex. 'flat') as an input and sets the pin states to create a defined color (ex. red) for that label.

Prediction values exist for each of the labels, but the prediction with the highest value is most important. This maximum prediction value represents the class that the model has determined the image most likely belongs to. This is the singular result that is of interest to users.

Add the following lines of code inside the script's second for loop, under where prediction_list is defined:

# Update RGB LED color based on the highest predicted class

max_prediction = max(prediction_list, key=lambda item:item[1])

color_by_label(max_prediction[0])This code determines the maximum of all the prediction values and sends the corresponding class label into the color_by_label function.

Now, with each iteration of the script's while loop, an image is captured, classified, and the color of the RGB LED is updated based on the strongest predicted classification.

The ei_image_classification_script.py script is complete. A final version of it is included in this project's attachments.

Step 3: Save main.py Script

So far the classification script has only been run within the OpenMV IDE with the OpenMV Cam connected. However, the script needs to be run on the microcontroller while it is disconnected from the computer and power by the LiPo battery.

With ei_image_classification_script.py open in OpenMV IDE and the OpenMV Cam connected via USB, from the 'Tools' menu select 'Save open script to OpenMV Cam (as main.py)'. This will save the script as main.py on the OpenMV Cam's flash drive.

The OpenMV Cam is configured to automatically run the main.py script that's loaded on its flash drive whenever it is powered on and booted up. By saving the script in this manner, it will automatically start running once a LiPo battery is plugged into the disconnected board.

Step 4: Connect Battery and Deploy

The device is now ready to be completely assembled and deployed for use.

Begin by disconnecting the OpenMV Cam from the computer. Connect the LiPo battery to the board using the JST plug. The board will power on and boot up once the battery is plugged in.

With the RGB LED and battery connected to the board, carefully fit it inside the 3D printed housing, with its camera extended through the hole in the front face.

Fit the LED bulb through the hole in the top of the housing from the inside. Place the battery behind the LED and enclose the housing using the backplate.

The device can be placed anywhere cars are present. Position the device 3 ft. away from the sides of vehicles, with the camera aimed at the vehicle's tires.

As cars drive by the device, observe the notification light change in real-time depending on what is in view of the camera.

This project aims to solve the industry-level problem of manually inspecting tires for large quantities of vehicles.

The prototype device demonstrated how machine vision can be used to quickly classify images of tires based on their fill level. Inspectors can now be notified about the status of a tire without having to physically access it. This capability can save time and increase safety in the inspection process.

While this prototype device shows potential, there are improvements that would make it more applicable at an industrial scale:

- Different Tire Types: The labeled dataset of tire images created for this project were collected from a single vehicle, with a single type of tire. For the machine vision algorithm to successfully work on a broader scale, the dataset would need to include more examples of different tires, including different brands, sizes, hubcap styles, colors, and sidewall depths.

- Different Tire Pressures: The labeled dataset included only two classes of tire images, either "full" or "flat". In real applications, it would be beneficial to have a larger list of pressure categories that tires could be categorized as. For example "45-50 psi", "40-44 psi", "35-39 psi", etc. This could be accomplished by building out a new dataset with an adequate amount of example images from each of these classes.

- Notification Improvement: The prototype device uses a colored LED to notify inspectors of the result of the image classification. In real applications, it would be more useful if the notification was sent out in an email or text message, including a picture of the tire being evaluated. This would save the inspector from having to monitor the LED continuously and instead wait for messages to arrive.

Comments