I was supposed to showcase an RT-Thread Etherkit board at a school event, but we hit a wall immediately: the board required dedicated programmer hardware that we simply didn't have. During a lecture, my prof showed us some boards we could use instead, and one piqued my interest: the RT-Thread Vision Board. It had a camera. It looked interesting.

I pivoted immediately. Instead of a complex IoT demo, I decided to build something visual and tangible: A Smart Color Sorting Robot. In an industrial setting, this is the logic that sorts packages or separates plastic types for recycling. For me, it was a way to save the presentation.

The "AI" Trap (The "How")

Like everyone else in 2025, my first thought was "Let's use AI!"

I jumped into Edge Impulse to train a Machine Learning model. Ideally, it would learn to recognize "Red Object" vs "Blue Object." In reality? It was a disaster.

- Model 1: Detected everything as "Brown" or "Black."

- Model 2: I improved the dataset and got 70% accuracy on paper. In the real world, though, it was confidently wrong. It would look at a red ball and scream "Blue!"

I realized I was over-engineering it. I didn't need a neural network to tell me what color a pixel was. I scrapped the AI and switched to Hue Detection (Math-based color tracking). It was faster, didn't need training, and was incredibly sensitive to color changes. Sometimes, the old ways are better.
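The post doesn't include the Vision Board code, so here is a hardware-independent sketch of the hue idea in plain Python: convert a pixel's RGB value to HSV with the standard `colorsys` module, then check which hue band it falls into. The band edges, the saturation and brightness cut-offs, and the helper names are hypothetical and would need tuning under your own lighting; on the board you would apply the same check to camera pixels instead of hand-typed RGB tuples.

```python
import colorsys

# Hypothetical hue bands in degrees (0-360); tune for your camera and lighting.
RED_BANDS = [(0.0, 20.0), (340.0, 360.0)]  # red wraps around 0/360 degrees
BLUE_BANDS = [(200.0, 260.0)]
MIN_SATURATION = 0.4  # hypothetical: skip greyish, washed-out pixels
MIN_VALUE = 0.2       # hypothetical: skip very dark pixels


def to_hsv_degrees(r: int, g: int, b: int) -> tuple[float, float, float]:
    """Convert 8-bit RGB to (hue in degrees, saturation 0-1, value 0-1)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    return h * 360.0, s, v


def in_any_band(hue: float, bands: list[tuple[float, float]]) -> bool:
    return any(lo <= hue <= hi for lo, hi in bands)


def classify(r: int, g: int, b: int) -> str:
    """Label one RGB sample as 'RED', 'BLUE', or 'NONE'."""
    hue, sat, val = to_hsv_degrees(r, g, b)
    if sat < MIN_SATURATION or val < MIN_VALUE:
        return "NONE"  # no reliable hue on grey, white, or near-black pixels
    if in_any_band(hue, RED_BANDS):
        return "RED"
    if in_any_band(hue, BLUE_BANDS):
        return "BLUE"
    return "NONE"


if __name__ == "__main__":
    print(classify(220, 30, 40))    # strong red (hue about 357 degrees)
    print(classify(30, 60, 210))    # strong blue (hue about 230 degrees)
    print(classify(120, 120, 120))  # grey: zero saturation
```

Red needs two bands because hue wraps around at 0°/360°, and the saturation check keeps grey or white backgrounds from being read as a color. Nothing here needs training data, which is the whole appeal over the model.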

The Language Barrier & The Arduino Bridge

The Vision Board is powerful, but there was a catch: the documentation is almost entirely in Chinese, and English support is practically non-existent.

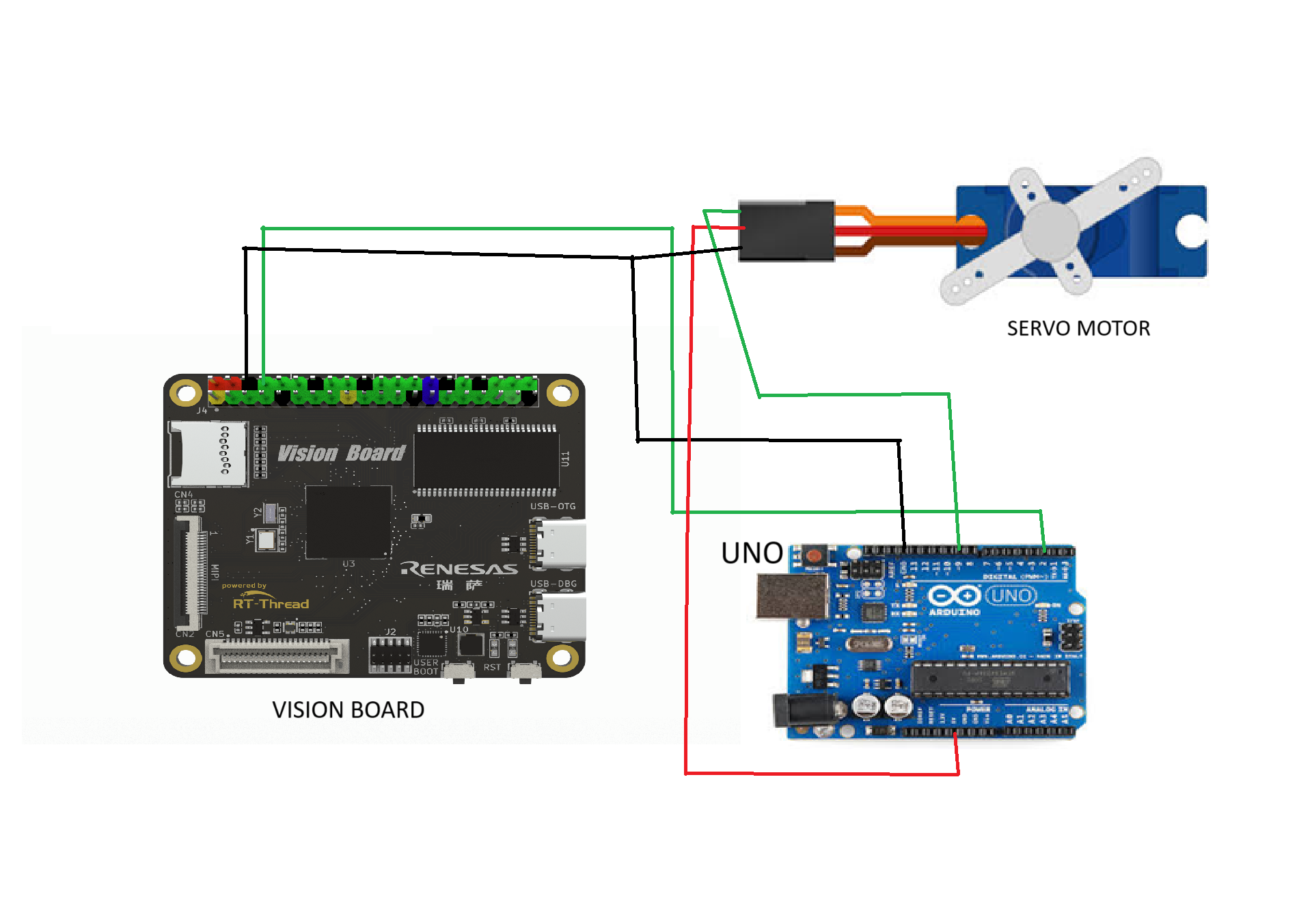

I needed to move a Servo motor. On the Vision Board, exposing the PWM pins required navigating a new IDE (RT-Thread Studio) and digging through translated datasheets. I didn't have time for that.

The Solution: I used an Arduino Nano as a "Translator." The Vision Board does the heavy lifting (vision processing) and sends simple text commands over UART to the Arduino. The Arduino, which has thousands of English tutorials, handles the Servo.
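Here is a minimal sketch of such a text protocol, simulated in Python so it runs without either board. The one-word, newline-terminated format (`RED\n`) and the `encode_command` / `CommandParser` names are assumptions for illustration; only the 40° angle for red comes from this build, while the blue and neutral angles are hypothetical. On the Nano, the same parser logic would live in `loop()`, reading one byte at a time from the serial port into a small char buffer.

```python
# Hypothetical command format: one upper-case word per line, e.g. b"RED\n".
SERVO_ANGLES = {
    "RED": 40,    # from this build: red snaps the servo to 40 degrees
    "BLUE": 140,  # hypothetical angle for the blue bin
    "NONE": 90,   # hypothetical neutral position
}


def encode_command(color: str) -> bytes:
    """Sender side (Vision Board): one ASCII line per command."""
    return (color + "\n").encode("ascii")


class CommandParser:
    """Receiver side (Arduino logic, simulated): buffer bytes until a newline."""

    def __init__(self, max_len: int = 16) -> None:
        self.buffer = bytearray()
        self.max_len = max_len  # drop runaway lines instead of growing forever

    def feed(self, data: bytes) -> list[int]:
        """Consume raw UART bytes; return a servo angle per complete known command."""
        angles = []
        for byte in data:
            if byte == ord("\n"):
                # strip() also removes a stray '\r' from CRLF senders
                word = self.buffer.decode("ascii", errors="ignore").strip()
                self.buffer.clear()
                if word in SERVO_ANGLES:
                    angles.append(SERVO_ANGLES[word])
            elif len(self.buffer) < self.max_len:
                self.buffer.append(byte)
        return angles


if __name__ == "__main__":
    parser = CommandParser()
    print(parser.feed(b"RE"))                                # partial line: nothing yet
    print(parser.feed(b"D\n" + encode_command("BLUE")))      # both commands complete
```

Buffering until a newline means a command split across two reads still arrives intact, and unknown words are simply ignored rather than moving the servo.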

The "Vibe Code" Moments (The Struggle)

Connecting the two boards was where the real headache began.

1. The MOSFET Mistake: The Vision Board sends 3.3V signals, while the Arduino Nano runs on 5V logic. I tried to use an IRF540N MOSFET as a logic level shifter. Bad idea. That MOSFET is a power transistor designed for driving motors, not high-speed data. It completely blocked the signal.

2. The Baud Rate Jitter: I set the communication speed to 115200 baud thinking "faster is better." Instead, the servo twitched and jittered like it was possessed. It turns out SoftwareSerial on the Arduino can't reliably handle those speeds. Dropping to 9600 fixed it instantly.

3. The "Ghost" Signals: Initially, the code sent a message for every frame. "Red, Red, Red, Red..." 30 times a second. The Arduino buffer got clogged, and the servo lagged behind reality. I had to write a "State Change" logic so the camera only speaks when the color changes.
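As a rough sanity check on the MOSFET mistake, the arithmetic below compares the voltage levels involved, using figures from the ATmega328P (the Nano's microcontroller) and IRF540N datasheets: the ATmega328P guarantees an input HIGH at 0.6 × VCC or more on its normal I/O pins, and the IRF540N's gate threshold is specified between 2.0 V and 4.0 V. The helper names are hypothetical, and the numbers should be confirmed against the datasheets for your exact parts. If they hold, a 3.3 V HIGH already clears the Nano's 3.0 V input threshold, while 3.3 V on the IRF540N's gate can sit below its worst-case threshold, which may be part of why the signal never got through.

```python
# Rough logic-level arithmetic. Figures are datasheet values as quoted above;
# verify them for your own parts before relying on this.
ATMEGA328P_VIH_FRACTION = 0.6  # input HIGH guaranteed at >= 0.6 * VCC (normal I/O pins)
IRF540N_VGS_TH_MAX = 4.0       # IRF540N gate threshold: 2.0 V min, 4.0 V max


def min_input_high(receiver_vcc: float) -> float:
    """Lowest voltage the ATmega328P is guaranteed to read as HIGH."""
    return ATMEGA328P_VIH_FRACTION * receiver_vcc


def direct_link_ok(driver_high: float, receiver_vcc: float) -> bool:
    """True if the driver's HIGH level meets the receiver's input-HIGH minimum."""
    return driver_high >= min_input_high(receiver_vcc)


def above_worst_case_threshold(gate_volts: float) -> bool:
    """True only if the gate drive exceeds the IRF540N's maximum threshold.

    Even then the FET is barely conducting (the threshold is specified at a
    tiny drain current), so this is a necessary check, not a sufficient one.
    """
    return gate_volts > IRF540N_VGS_TH_MAX


if __name__ == "__main__":
    vision_board_high = 3.3  # 3.3 V logic HIGH from the Vision Board
    nano_vcc = 5.0           # Arduino Nano supply
    print(f"Nano needs >= {min_input_high(nano_vcc):.1f} V for HIGH")
    print("3.3 V direct into Nano RX OK:", direct_link_ok(vision_board_high, nano_vcc))
    print("3.3 V above IRF540N worst-case threshold:",
          above_worst_case_threshold(vision_board_high))
```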
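The baud rate jitter comes down to timing. The sketch below works out UART bit periods and link load, assuming the common 8N1 framing (10 bits on the wire per byte), the Nano's 16 MHz clock, and a hypothetical 4-byte message like `RED\n` sent 30 times a second. At 115200 baud a bit lasts about 8.7 µs, roughly 139 CPU cycles, which leaves a software-timed receiver like SoftwareSerial very little slack; at 9600 baud each bit lasts about 104 µs, and even a 30-messages-per-second stream uses only 12.5% of the link.

```python
CPU_HZ = 16_000_000      # Arduino Nano (ATmega328P) clock
BITS_PER_BYTE = 10       # 8N1: 1 start bit + 8 data bits + 1 stop bit
MESSAGE = b"RED\n"       # hypothetical command format
MESSAGES_PER_SECOND = 30


def bit_time_us(baud: int) -> float:
    """Duration of one bit on the wire, in microseconds."""
    return 1_000_000 / baud


def cycles_per_bit(baud: int) -> float:
    """CPU clock cycles available per bit."""
    return CPU_HZ / baud


def bytes_per_second(baud: int) -> float:
    return baud / BITS_PER_BYTE


def link_load(baud: int, message: bytes, rate: float) -> float:
    """Fraction of the link's byte capacity used by a steady message stream."""
    return len(message) * rate / bytes_per_second(baud)


if __name__ == "__main__":
    for baud in (9600, 115200):
        load = link_load(baud, MESSAGE, MESSAGES_PER_SECOND)
        print(f"{baud:>6} baud: {bit_time_us(baud):6.2f} us/bit, "
              f"{cycles_per_bit(baud):6.0f} cycles/bit, "
              f"{bytes_per_second(baud):6.0f} bytes/s, load {load:.1%}")
```

Slower is not automatically better, but for a few short commands per second, 9600 baud has plenty of headroom while giving the software receiver twelve times more time per bit.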
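The "State Change" fix for the ghost signals is a small piece of state: remember the last color sent and stay quiet until it changes. Here is a minimal Python sketch of that idea, assuming the same kind of hypothetical newline-terminated text message; `ChangeNotifier` is an illustrative name, not the exact code used in the build.

```python
from typing import Optional


class ChangeNotifier:
    """Send a message only when the detected color differs from the last one sent."""

    def __init__(self) -> None:
        self.last_sent: Optional[str] = None

    def update(self, color: str) -> Optional[bytes]:
        """Call once per frame; returns bytes to send over UART, or None to stay quiet."""
        if color == self.last_sent:
            return None
        self.last_sent = color
        return (color + "\n").encode("ascii")


if __name__ == "__main__":
    notifier = ChangeNotifier()
    frames = ["RED"] * 30 + ["BLUE"] * 5
    sent = [msg for msg in map(notifier.update, frames) if msg is not None]
    print(sent)  # [b'RED\n', b'BLUE\n']
```

Thirty frames of red followed by five of blue produce two messages instead of thirty-five. One caveat: if detection flickers between two colors at the edge of a hue band, every flip still sends a message, so waiting for a few identical frames in a row before reporting is a common refinement.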

The Victory Lap (Sort of)

It’s 3:00 AM. The code finally compiles. I hold up a red object.

The Vision Board sees it.

The Arduino receives the signal.

The Servo snaps to 40 degrees.

Honestly? My reaction wasn't "Eureka!" It was "Meh... finally."

I don't have a 3D-printed chassis, and the wires are a mess, but the logic holds up. If I had another month, I’d add a conveyor belt and an LCD screen to make it a true industrial prototype. But for a last-minute pivot to save a school symposium? It works.

Author's Note: Generative AI was used.
