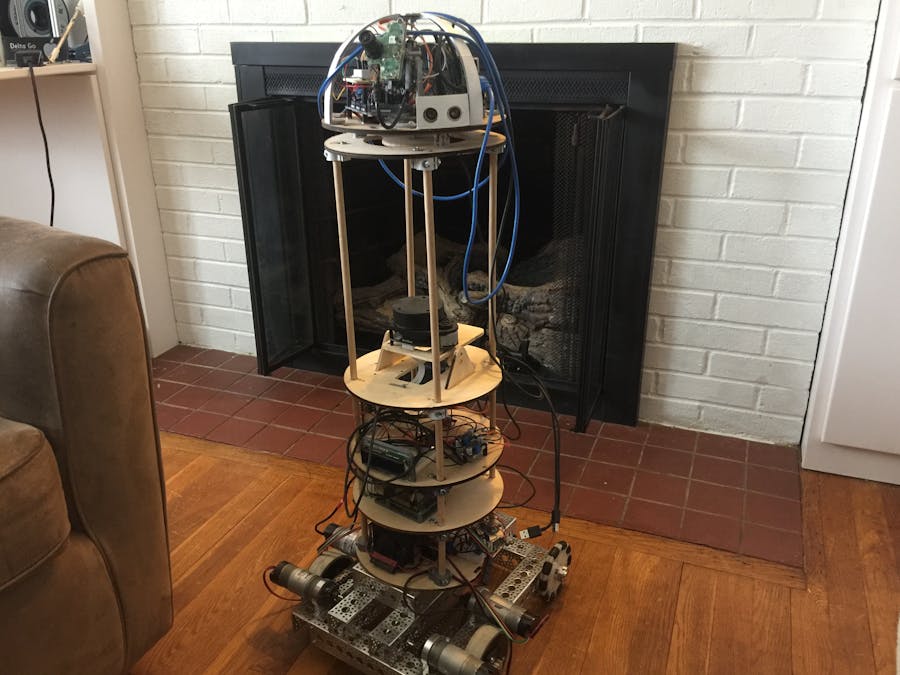

I have been developing an autonomous home-assistant robot. I designed and built it entirely from scratch, without a kit or instructions. Along the way, I taught myself ROS, Python, C, HTML, CSS, OpenCV, and other systems and languages.

The robot travels around the house independently, using Simultaneous Localization and Mapping (SLAM) with the Robot Operating System (ROS) for navigation. It knows where to go based on pre-set waypoints. This machine is a prototype of a low-cost robotic assistant that the average person can use at home. It combines facial recognition, navigation, and a web control page with other easy control options such as gesture control, a Wii remote, and even hand guiding. The robot uses several Proportional Integral Derivative (PID) control loops, which allow it to self-balance, making it lighter, more maneuverable, and more user-friendly.
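To illustrate the PID idea mentioned above, here is a minimal sketch of a textbook discrete PID loop. It is not the robot's actual controller: the `PID` class, the gains, and the toy plant in `simulate_tilt` (where the controller output is treated directly as a tilt rate) are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook discrete PID controller (gains are placeholders, not tuned values)."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        """Return the control output for one time step of length dt seconds."""
        error = setpoint - measurement
        self.integral += error * dt                   # accumulated error (I term)
        derivative = (error - self.prev_error) / dt   # rate of change (D term)
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_tilt(pid: PID, initial_tilt_deg: float, steps: int = 200, dt: float = 0.01) -> float:
    """Toy plant (an assumption): the controller output is used as tilt rate in deg/s."""
    tilt = initial_tilt_deg
    for _ in range(steps):
        tilt += pid.update(0.0, tilt, dt) * dt  # drive the tilt toward 0 degrees
    return tilt


if __name__ == "__main__":
    # Start 10 degrees off vertical and let a proportional-only loop correct it.
    print(simulate_tilt(PID(kp=5.0, ki=0.0, kd=0.0), initial_tilt_deg=10.0))
```

A real balancing robot is an unstable system with motor and sensor dynamics, so the gains would have to be tuned on the hardware, and the loop would run at a fixed rate on the microcontroller.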

Potential applications of this robot include seeking out specific individuals to remind them about calendar events, delivering items such as medications at specific times, and providing in-house mobile monitoring and companionship for home-bound individuals, such as elderly people with dementia. Its skills could be tailored to the specific needs of elderly or disabled individuals.

This is an ongoing project. The core hardware is in place and has proven to work, and the main software is already implemented. The next steps (I am currently limited by funding) are to acquire a battery to make the robot cordless and to upgrade several components to improve the robot’s functionality.

I originally designed this robot to be self-balancing, but because of funding constraints I had to remove that feature. I hope to add it back in, as I think it adds value to the robot.

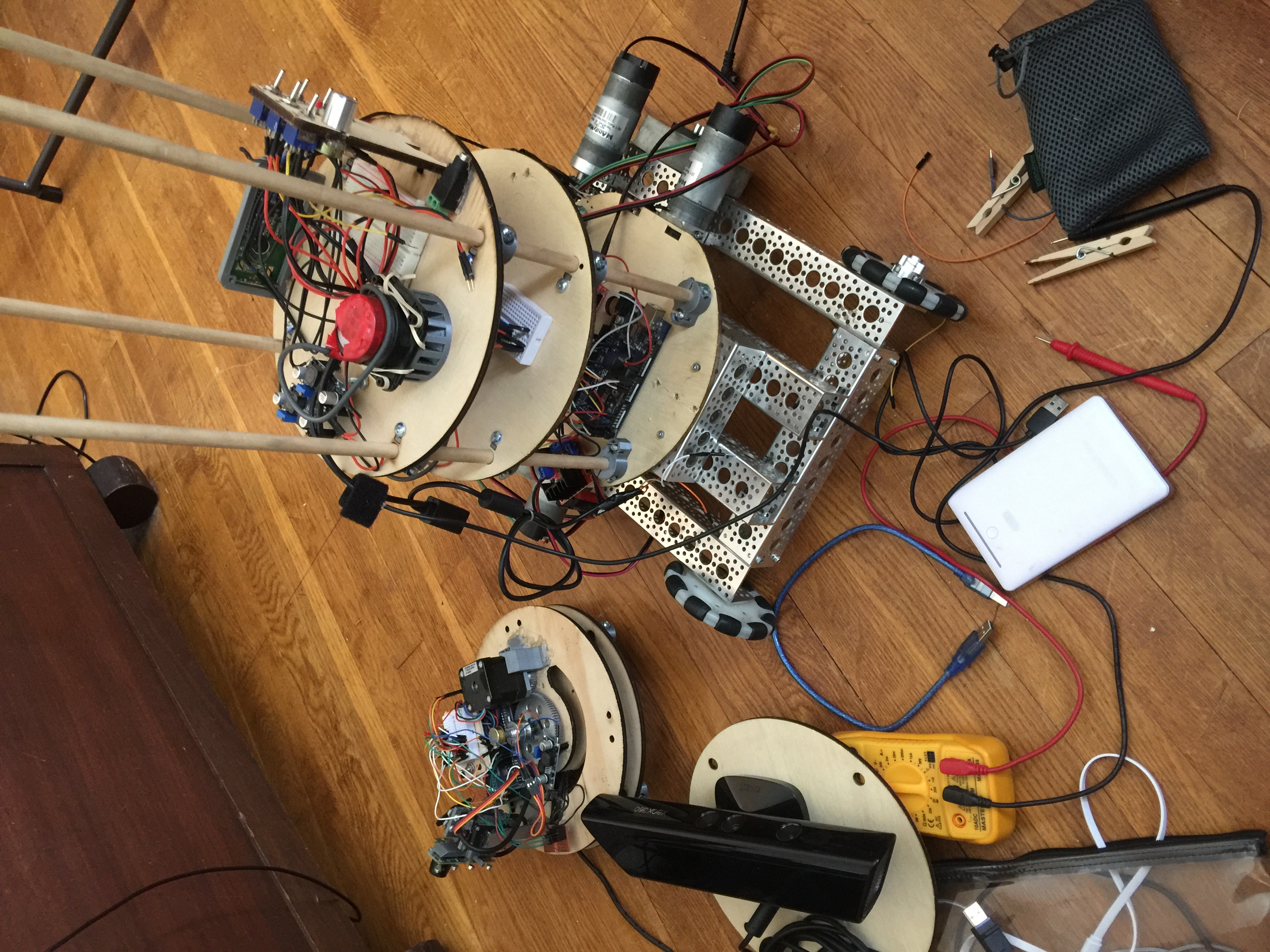

The robot is completely controlled by ROS, which makes navigation and general control of the robot simpler. The robot is split into two parts: the head and the main body. In the head, a Raspberry Pi is set up with ROS and is connected to an Arduino Mega and a webcam. This Pi streams the webcam data over ROS and talks to the Arduino to control the head's rotation and camera position. The base section of the robot consists of another Raspberry Pi, an RPLIDAR A1, and a Teensy 4.1 microcontroller. This Raspberry Pi tells the Teensy where to move the robot and streams the Lidar data.
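As a rough illustration of how the base Raspberry Pi might tell the Teensy where to move, the sketch below converts a velocity command (forward speed plus turn rate, as a ROS navigation stack typically produces) into left and right wheel speeds for a two-wheeled differential drive. The drive layout, the `wheel_separation_m` value, and the comma-separated text format in `encode_command` are assumptions for this example, not the robot's actual protocol.

```python
def twist_to_wheel_speeds(linear_mps: float, angular_radps: float,
                          wheel_separation_m: float) -> tuple[float, float]:
    """Standard differential-drive kinematics: returns (left, right) speeds in m/s."""
    half_track = wheel_separation_m / 2.0
    left = linear_mps - angular_radps * half_track
    right = linear_mps + angular_radps * half_track
    return left, right


def encode_command(left_mps: float, right_mps: float) -> bytes:
    """Hypothetical wire format: 'left,right' with three decimals, newline-terminated."""
    return f"{left_mps:.3f},{right_mps:.3f}\n".encode("ascii")


if __name__ == "__main__":
    # Drive forward at 0.2 m/s while turning left at 1 rad/s (wheel spacing assumed 0.3 m).
    left, right = twist_to_wheel_speeds(0.2, 1.0, wheel_separation_m=0.3)
    print(encode_command(left, right))
```

On the real robot, the encoded bytes would be written to whatever link connects the Pi and the Teensy (a serial port, for example), and the Teensy would run the wheel speed control.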

One problem that I encountered was that the Lidar sensor records data in a single 2D plane. When the robot is self-balancing, its body tilts as it drives around, so the Lidar's scanning plane would not always stay level. My solution was to make a swivel platform for the Lidar to sit on, which tilts to keep the Lidar flat.
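The leveling idea above can be sketched in a few lines. The example assumes the body pitch is available from some tilt sensor and that the platform has a limited range of travel. The function names, the 30 degree limit, and the 0.5 m mounting height are hypothetical. The second function shows why leveling matters: a scan plane tilted downward meets the floor at a finite distance, which would show up in the map as an obstacle that is not there.

```python
import math


def platform_angle_deg(body_pitch_deg: float, max_travel_deg: float = 30.0) -> float:
    """Counter-rotate the platform by the body pitch, clamped to its travel limits."""
    correction = -body_pitch_deg
    return max(-max_travel_deg, min(max_travel_deg, correction))


def floor_hit_distance_m(lidar_height_m: float, downward_tilt_deg: float) -> float:
    """Distance at which a beam tilted below horizontal reaches the floor."""
    if downward_tilt_deg <= 0.0:
        return math.inf  # a level or upward beam never reaches the floor
    return lidar_height_m / math.tan(math.radians(downward_tilt_deg))


if __name__ == "__main__":
    # Body leaning 12 degrees forward: command the platform the opposite way.
    print(platform_angle_deg(12.0))
    # Without correction, a Lidar 0.5 m up and tilted 10 degrees down sees the floor here.
    print(round(floor_hit_distance_m(0.5, 10.0), 2))
```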
