Have you ever wondered how difficult it is for deaf people to parent hearing, speaking children, from infancy through the teen years and beyond?

Parents face unique challenges in each phase of parenting.

Newborn babies do not speak. The only way they know to communicate is to cry. They cry when hungry, they cry when fussy, they cry when in pain. Now imagine a deaf parent a short distance from the baby's crib: they hear nothing! The baby might be in real pain or discomfort that needs immediate attention, but her cry goes unheard. How about a device that listens for the baby's cry and notifies deaf parents in real time via haptic feedback on a wearable device?

Pre-schoolers are different from newborns. They learn new ways of communicating and they learn to speak, but the problem for deaf parents remains the same: they cannot hear what their little ones are trying to communicate. On the positive side, kids can learn to use a smartphone or tablet and can distinguish between different images or icons. Yes, I am talking about Augmentative and Alternative Communication (AAC) for deaf parents, not for hearing-impaired children!

How about an app running on a tablet through which young children can communicate with their deaf parents? The app would send a haptic signal to a wearable device, and the deaf parent would be able to recognize the signal pattern.

Older children may prefer to just talk instead of tapping on icons. How about triggering the same actions by voice?

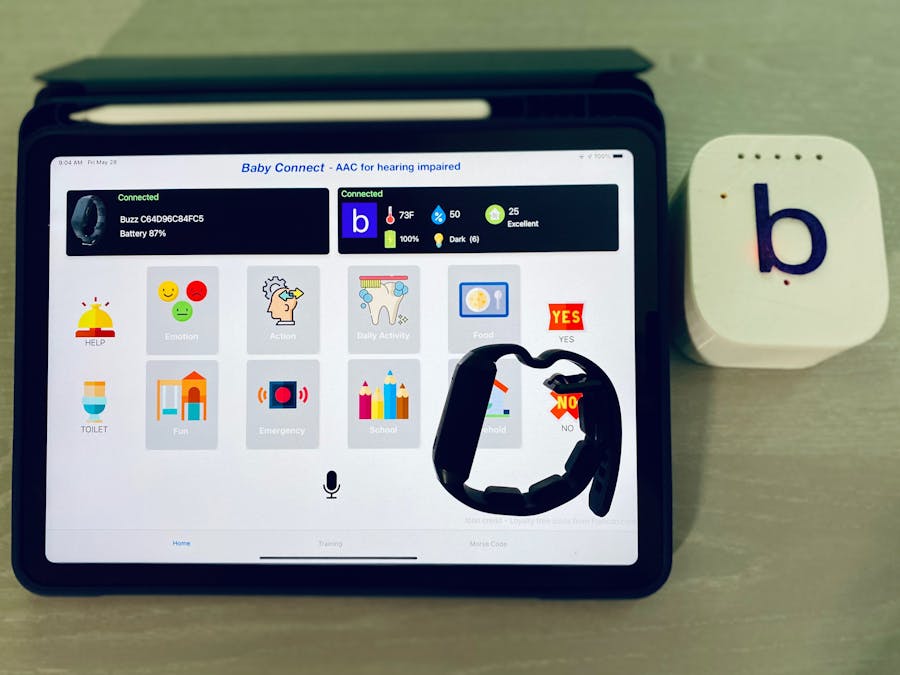

Baby Connect

Introducing "Baby Connect", an app that runs on a tablet. The app connects to a Neosensory Buzz over Bluetooth and sends haptic feedback as vibration when an icon is tapped on the screen or a word is spoken while holding down the on-screen record button.

The system also includes a device equipped with a microphone and an environment sensor to monitor IAQ (indoor air quality), temperature, humidity, and ambient light. The microphone records surrounding sounds, and a machine learning algorithm analyzes them to determine whether the baby is crying.

The Baby Connect device is powered by an 1100 mAh LiPo battery with a TP4056 charging module and a 5 V booster that powers the Nano BLE Sense. I tested the device connected to the app over BLE, and the battery lasted 32 hours, which is pretty good in my opinion.

Thinking behind the app

Augmentative and alternative communication (AAC) is a way for a child to communicate when the child cannot use speech as a primary means of communication. While researching AAC, I realized the same technique can be applied to hearing-impaired parents. But then I started thinking about how to translate AAC actions (icons or images) into vibration. I wanted to come up with a coded pattern that would represent different AAC actions.

Recently I was watching a movie in which a person stuck in an abandoned basement for months was trying to communicate in Morse code using a light bulb. Morse code has DOT and DASH. Using just one channel with different patterns, we can represent all the letters of the English alphabet. I decided to adopt a similar concept to represent AAC actions. With the Neosensory Buzz, we actually have 4 channels (4 motors) and can make hundreds of different patterns.

After combining the concepts of AAC and Morse code, I mapped each AAC action (such as Like, Thank You, Hungry, etc.) to a visual pattern of DOTs and DASHes. One difference from Morse code is that Morse signals are sequential, such as DOT DOT DOT (S) or DASH DASH DASH (O). Here, the Buzz has 4 motors that can vibrate independently, so we have 4 channels. "LIKE" is therefore represented by DOT, DOT, DASH (motors 1, 2, and 3 vibrating simultaneously).

DOT is represented by a low vibration intensity of 50 and DASH by a high intensity of 255. So the "LIKE" action above is represented by the frame [50, 50, 255, 0], repeated 40 times (40 frames).
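As a rough illustration, the DOT/DASH encoding described above could be sketched in Python as follows. This is not the app's actual code: the symbol strings, the `PATTERNS` table, and the function names are hypothetical, and only the values 50, 255, 0, the 4 motors, the 40 frames, and the "LIKE" pattern come from the text.

```python
from typing import Dict, List

DOT = 50      # low value, as stated in the text
DASH = 255    # high value, as stated in the text
OFF = 0       # motor not used by the pattern
NUM_MOTORS = 4
FRAMES_PER_PATTERN = 40

SYMBOL_VALUES: Dict[str, int] = {".": DOT, "-": DASH, "_": OFF}

# Hypothetical pattern table; only LIKE is taken from the article.
PATTERNS: Dict[str, str] = {
    "LIKE": ". . -",
}


def encode_frame(symbols: str) -> List[int]:
    """Turn a string such as '. . -' into one frame of four motor values."""
    values = []
    for symbol in symbols.split():
        if symbol not in SYMBOL_VALUES:
            raise ValueError(f"unknown symbol: {symbol!r}")
        values.append(SYMBOL_VALUES[symbol])
    if len(values) > NUM_MOTORS:
        raise ValueError("a pattern can use at most 4 motors")
    # Motors without a symbol stay off.
    return values + [OFF] * (NUM_MOTORS - len(values))


def encode_action(action: str, frames: int = FRAMES_PER_PATTERN) -> List[List[int]]:
    """Repeat the action's frame so the vibration lasts for `frames` frames."""
    frame = encode_frame(PATTERNS[action])
    return [list(frame) for _ in range(frames)]
```

Calling `encode_action("LIKE")` yields 40 copies of `[50, 50, 255, 0]`. Because all four values in a frame are applied at once, the symbols are "played" simultaneously across motors rather than one after another as in Morse code.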

Similarly, I have mapped several other AAC actions to vibration patterns, as shown in the image above.

Train Your Brain

All this mapping may sound complicated, and you must be wondering how your brain will know which vibration pattern means what! But you will be surprised how quickly your brain can learn these patterns.

In the past, it was believed that the human brain stops growing or changing after a certain age and acts like a static organ. Research has since shown that its neural networks change over time, creating new pathways and pruning old ones. This is called brain plasticity. Researchers who studied the brains of London taxi drivers before and after they trained for the city's taxi-driving test observed that new neural pathways had developed.

Similarly, haptic feedback can be used to send a visual or audio signal to the brain along the regular visual or auditory neural pathways. With time and practice, the brain accepts the haptic feedback and processes it as if it came from the retina or the ears, substituting for a lost sense.

Research also suggests that new senses can be developed. For example, we usually sense very little except smell while in deep sleep, but we can train our brain to respond to haptic feedback even while asleep, creating a new sense.

On the training page, the app presents different cards to the user and vibrates the Buzz. The user has to guess the correct card. If the selection is incorrect, the card is highlighted in red. If it is correct, the card is highlighted in green and the next set of cards is shown. With practice over time, the user will be able to map each vibration to its icon.
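The training flow above can be sketched as a small quiz loop. This is only a sketch under assumptions: the number of cards per round, the way distractor cards are picked, and the function names `build_round` and `grade` are hypothetical and not taken from the app.

```python
import random
from typing import List, Optional, Sequence


def build_round(
    actions: Sequence[str],
    correct: str,
    num_cards: int = 4,  # assumed; the article does not say how many cards are shown
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Return the correct card plus random distractors, in shuffled order."""
    rng = rng or random.Random()
    distractors = [a for a in actions if a != correct]
    cards = rng.sample(distractors, num_cards - 1) + [correct]
    rng.shuffle(cards)
    return cards


def grade(selected: str, correct: str) -> str:
    """Return the highlight colour for the tapped card."""
    return "green" if selected == correct else "red"
```

In a round, the app would vibrate the pattern for `correct`, show the cards from `build_round`, and move on to the next round only when `grade` returns `"green"`.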

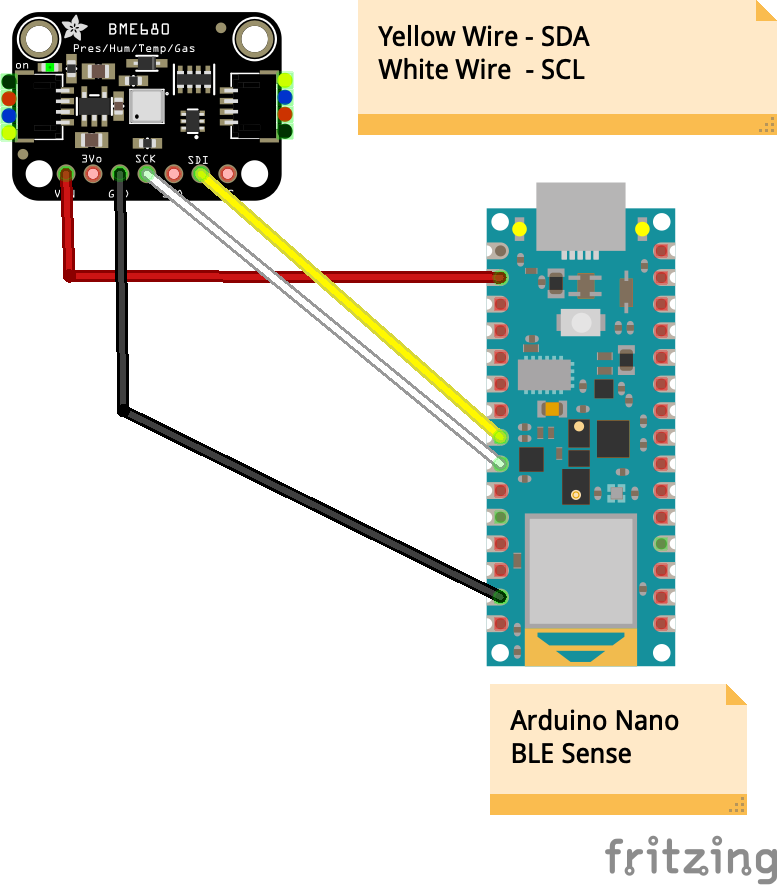

Baby's Activity Monitoring

The Baby Connect device runs Edge Impulse inference on an Arduino Nano BLE Sense microcontroller, which has a built-in microphone. The microphone records 3-second audio samples, and the model classifies each sample into one of 4 labels: noise, hungry, fussy, and pain.
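A classifier like this typically produces one score per label for each audio window. The sketch below shows one possible way to turn those scores into a "cry or not" decision plus a best-guess cry type. It is an illustration in Python, not the firmware running on the device; the 0.6 threshold and the idea of summing the three cry scores are assumptions.

```python
from typing import Dict, Tuple

CRY_LABELS = ("hungry", "fussy", "pain")


def interpret(scores: Dict[str, float], cry_threshold: float = 0.6) -> Tuple[bool, str]:
    """Decide whether a window contains a cry and which cry label scores highest.

    `scores` maps each of the four labels to the classifier's score.
    The threshold is an assumed value, not one from the article.
    """
    # Treat the three cry labels together as "baby is crying".
    cry_score = sum(scores.get(label, 0.0) for label in CRY_LABELS)
    if cry_score < cry_threshold:
        return False, "noise"
    best = max(CRY_LABELS, key=lambda label: scores.get(label, 0.0))
    return True, best
```

Pooling the cry labels first means a parent can still be alerted when the model is sure the baby is crying but unsure which kind of cry it is.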

I collected 5 minutes of noise data from different background sounds, such as people talking, a kitchen faucet, chopping vegetables, an AC, a fan, etc., and about 3 minutes of hungry, fussy, and pain cries.

For the baby cry dataset, I used the https://github.com/gveres/donateacry-corpus repo, which was part of a campaign. I also collected audio from YouTube. While analyzing different types of baby cries, I found a pattern that separates hungry from pain. When babies are in pain, they cry continuously, pausing only to breathe. When they are hungry, you will notice some gaps in between. It is very hard, however, to separate hungry from fussy. At present my model can classify noise vs. baby cry with around 85% accuracy, but it is not yet good at separating hungry vs. pain vs. fussy. I need a lot more data to make this work better. I will start a "donate" campaign pretty soon to collect hundreds of samples from all over the world and enhance the model. I will also open source the Edge Impulse project so that others can contribute.

When the Nano BLE Sense detects a cry, it sends data to the app over Bluetooth, and the app vibrates the Buzz. At the same time, all 3 LEDs turn red in case the parent misses the vibration. The LEDs stay lit until the user presses the "+" or "-" button on the Buzz.

Baby's Environment Monitoring

The Nano BLE Sense is connected to a BME680 sensor, which provides precise readings for temperature, humidity, and indoor air quality (IAQ); ambient light is monitored as well. The user can press the power button on the Buzz to feel the data through vibration. Each sensor reading is mapped to the 0-255 range and sent to the Buzz.
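Mapping a reading to the 0-255 range can be done with a simple linear scale. The sketch below is one way to do it, assuming a linear mapping with clamping; the sensor ranges in the usage note are hypothetical, since the article does not state which ranges are used.

```python
def to_motor_value(reading: float, low: float, high: float) -> int:
    """Linearly map `reading` from [low, high] to 0..255, clamping out-of-range values."""
    if high <= low:
        raise ValueError("high must be greater than low")
    fraction = (reading - low) / (high - low)
    fraction = min(1.0, max(0.0, fraction))  # keep the result inside 0..255
    return round(fraction * 255)
```

For example, with an assumed temperature range of 0 to 40 °C, `to_motor_value(10, 0, 40)` gives 64, and any reading above 40 °C saturates at 255.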

With the latest Neosensory firmware, you can now program all 3 buttons and the LEDs on the Buzz. Advanced users can communicate via Morse code using the Buzz buttons. Check out the demo below.

Voice/Speech Translation

For older kids or advanced users, tapping on icons may seem time-consuming. Instead, the user can tap and hold the microphone button in the app and speak the action. The app will transcribe the captured voice into text and map that text to the AAC action.
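Once the speech has been transcribed, mapping the text to an AAC action can be as simple as a phrase lookup. The sketch below assumes exact phrase matching on a normalized transcript; the `PHRASES` table and the function name are hypothetical, and only the action names Like, Thank You, and Hungry come from the article.

```python
import re
from typing import Dict, Optional, Tuple

# Hypothetical phrase table: action name -> phrases that trigger it.
PHRASES: Dict[str, Tuple[str, ...]] = {
    "LIKE": ("like",),
    "THANK_YOU": ("thank you", "thanks"),
    "HUNGRY": ("hungry",),
}


def match_action(transcript: str) -> Optional[str]:
    """Return the first AAC action whose phrase appears in the transcript, else None."""
    # Lowercase, replace punctuation with spaces, and collapse whitespace.
    normalized = re.sub(r"[^a-z\s]", " ", transcript.lower())
    padded = " " + " ".join(normalized.split()) + " "
    for action, phrases in PHRASES.items():
        for phrase in phrases:
            # Padding with spaces makes this a whole-word match.
            if f" {phrase} " in padded:
                return action
    return None
```

With this table, `match_action("Thank you, Mom!")` returns `"THANK_YOU"`, and a transcript with no known phrase returns `None`, so the app can ignore it or ask the child to try again.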

This is a work in progress. I am integrating with AWS Amplify to leverage its Predictions category.

Project Demo
