Poor sleep has been a constant struggle for me. I’ve tried sleep apps, wearables, and smart alarms, but nothing truly worked. Wearables are uncomfortable at night, my phone can’t track sleep accurately from across the room, and none of these tools understand how my bedroom’s environment affects my rest. I wanted a solution that simply lives in my room, silently working to improve my sleep without requiring me to do anything.

The Idea

That’s why I'd like to create SomnoSphere, a smart bedside lamp that uses advanced sensor fusion to understand my sleep patterns, respond to my environment, and gently wake me at the ideal moment. No straps, no apps running overnight, just a seamless device that blends into the bedroom while working smarter than any wearable.

SomnoSphere listens to your body through contactless mmWave radar, senses the air and light around you, and uses edge AI to learn how your environment shapes your sleep. Then it adapts by adjusting its light, providing insights, and helping you build better habits.

Theory of Operation

At the heart of SomnoSphere is a mmWave radar that tracks micro-movements, respiration rate, heart-rate patterns, and restlessness, all without touching the user. This data is fused with environmental metrics from temperature, humidity, CO₂/air-quality, and ambient light sensors.

Using Edge Impulse, these signals are processed and classified into sleep-related states such as restful sleep, light sleep, REM-like movement patterns, or arousal events. Environmental readings are correlated with disturbances to identify patterns like room temperature changes preceding wake-ups or CO₂ buildup affecting sleep depth.

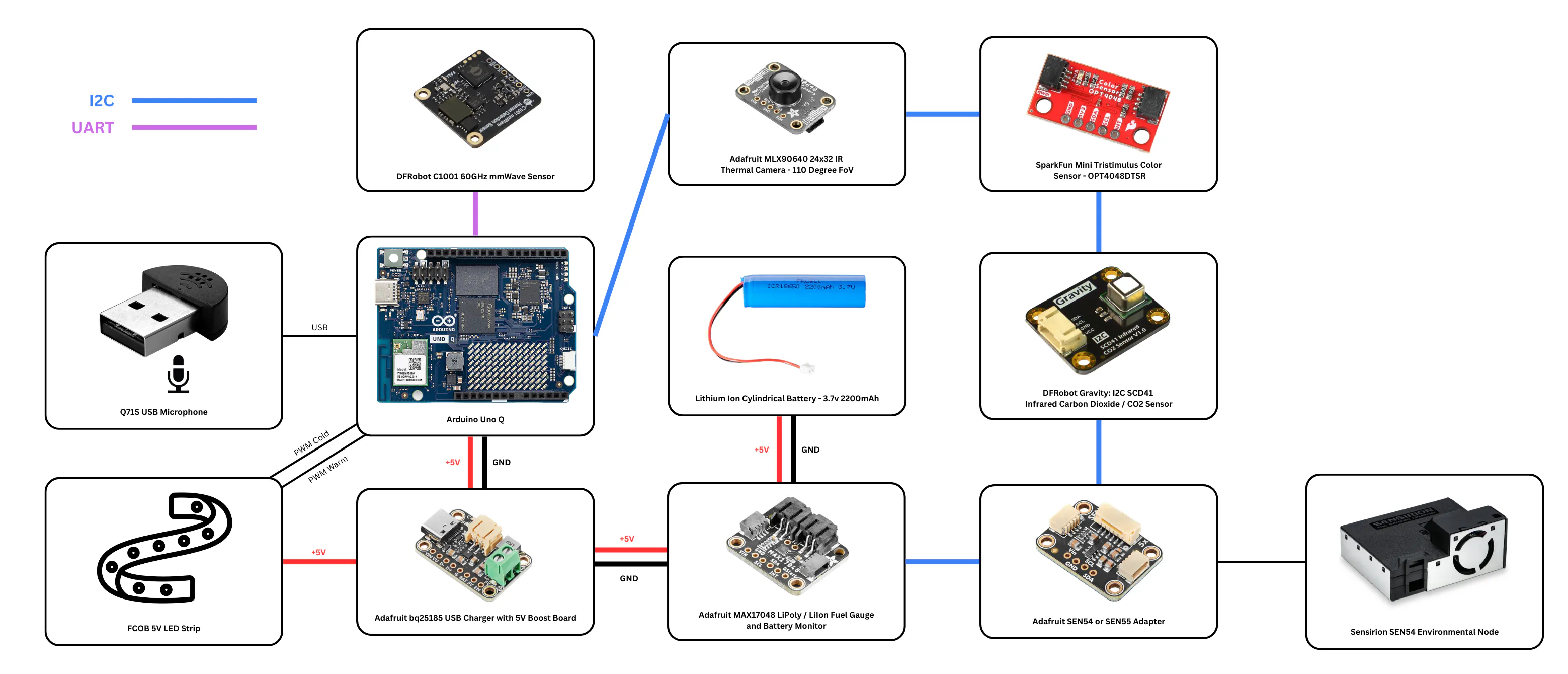

The Arduino Uno Q runs these models fully on-device, enabling private, real-time inference. Based on the analysis, the lamp adjusts brightness and color temperature to support melatonin production at night and create a gentle, biologically aligned wake-up light in the morning. Optional WiFi/BLE connectivity syncs nightly summaries to a companion app.

The result is a hands-off, privacy-first system that understands how you sleep and makes the bedroom an active partner in improving it.

The Data Pipeline

I needed a system that could handle the high-speed data of a radar and a thermal camera while simultaneously monitoring the slow-moving trends of air quality. To achieve this, I leveraged the unique dual-brain architecture of the Arduino Uno Q.

The STM32 microcontroller acts as the device's spine. It never sleeps. Its job is to relentlessly poll the hardware so the main processor doesn't have to wait. I implemented a "Mailbox" architecture here, continuously parsing data from the radar, thermal camera, and environmental sensors and sending it to the MPU using Remote Procedure Calls (RPCs).

Every 5 seconds, the MCU sends a serialized "Super-Packet" over this RPC link, containing synchronized data from every sensor. This decoupling ensures that my heavy AI processing never blocks the critical timing of the radar.
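
To make the Super-Packet idea concrete, here is a minimal sketch of how the Linux side might decode one packet. The wire format is not specified in this write-up, so the JSON encoding, the field names, and the `decode_super_packet` helper are all assumptions for illustration, not the project's actual code.

```python
import json
from dataclasses import dataclass
from typing import List

THERMAL_PIXELS = 24 * 32  # MLX90640 frame size (768 temperatures)


@dataclass
class SuperPacket:
    """One synchronized snapshot of every sensor (hypothetical field names)."""
    timestamp_s: float
    heart_rate_bpm: float
    respiration_rpm: float
    movement_energy: float
    co2_ppm: float
    temperature_c: float
    humidity_pct: float
    thermal_frame: List[float]  # row-major, 24 rows x 32 columns


def decode_super_packet(payload: str) -> SuperPacket:
    """Parse a JSON-encoded packet and sanity-check the thermal frame length."""
    packet = SuperPacket(**json.loads(payload))
    if len(packet.thermal_frame) != THERMAL_PIXELS:
        raise ValueError(
            f"expected {THERMAL_PIXELS} thermal pixels, got {len(packet.thermal_frame)}"
        )
    return packet
```

Validating the frame length at the boundary means a truncated packet is rejected before it reaches the models, rather than producing a silently wrong classification.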

Running on the Linux core, a Python application acts as the conductor. I trained a multi-modal model using Edge Impulse. By feeding it the thermal image (to see posture) and the radar motion data (to feel movement), the model classifies the context of the room in real time. The system then looks at the AI's classification and overlays the environmental data. For example, if the AI says "Restless" and the SCD41 sensor reports "CO₂ > 1200ppm," the system flags this timestamp. It infers that the restlessness is likely caused by poor air quality, not just random tossing and turning.

Finally, the system closes the loop. If I wake up in the middle of the night (detected by a sudden shift in thermal posture), the MPU commands the MCU to fade the LEDs to a dim, warm glow; enough to see, but not enough to break my melatonin cycle. Every morning, the system compiles a "Sleep Report" accessible via a web dashboard. It doesn't just tell me I slept poorly; it tells me why, showing me the exact correlation between my room's temperature, the air quality, and my deep sleep cycles.

Hardware Overview

The Digital Cortex (Compute):

The heart of the system is the Arduino Uno Q (4GB RAM variant) with a unique Dual-Brain Architecture, which is critical for real-time sensor fusion. The STM32 MCU handles the precise timing requirements of the C1001 radar UART stream and the I2C polling of environmental sensors, ensuring no data packet is ever missed. Leveraging the Linux environment and 4GB of RAM, the Qualcomm MPU core runs the Edge Impulse inference engine. It ingests the "Super-Vector" (fused data from all sensors) to perform heavy classification tasks, differentiating between a person reading a book vs. someone in deep sleep, without bogging down the real-time sensor acquisition.

The Perception Layer (Sensors):

All sensors except the radar (UART) and the microphone (USB) were wired through the Uno Q's I2C Qwiic connector, allowing for quick prototyping without soldering. This significantly reduced development time.

Adafruit MLX90640 IR Thermal Camera (110° FoV)

- The Hardware: A 24x32 pixel thermal array with a wide 110-degree Field of View, capable of seeing the entire bed and nightstand area.

- Why It Was Chosen: Unlike a standard optical camera, the MLX90640 respects user privacy (no faces are visible) and works in total darkness.

- The Fusion Role: Posture & Presence Verification. While radar detects movement, it can be fooled by a moving fan or curtain. The Thermal Camera validates that the moving object has a human heat signature. It also provides the "Visual Context" to the model, distinguishing between a vertical heat blob (sitting up/awake) and a horizontal one (lying down/asleep).

C1001 60GHz mmWave Sensor

- The Hardware: A high-frequency radar module capable of detecting micro-movements (breathing/heartbeat).

- Why It Was Chosen: It provides "X-Ray" like capabilities, detecting vital signs even through heavy duvets where thermal cameras might be blocked.

- The Fusion Role: Vitality & Depth. This sensor adds the temporal dimension to the fusion model. It confirms if the "Heat Blob" seen by the MLX90640 is actually breathing rhythmically (Deep Sleep) or irregularly (Apnea/Restlessness).

SCD41 CO₂ Sensor

- The Hardware: A photoacoustic NDIR sensor that measures Carbon Dioxide (400-5000 ppm) with high accuracy.

- Why It Was Chosen: High CO₂ levels (>1000ppm) directly correlate with grogginess and sleep fragmentation.

- The Fusion Role: Causality Analysis. The system correlates CO2 levels with the Radar's restlessness data. If the fusion model detects increased tossing and turning coincident with a CO₂ spike, it flags "Poor Ventilation" as the root cause of the bad sleep.

Sensirion SEN54 Environmental Node

(Interfaced via an Adafruit SEN54/55 Adapter)

- The Hardware: An all-in-one module measuring Particulate Matter (PM1.0, PM2.5, PM4, PM10), VOC Index, Humidity, and Temperature.

- Why It Was Chosen: It provides a complete "Respiratory Health" picture.

- The Fusion Role: Thermal Comfort Profiling. By fusing the Room Temperature (SEN54) with the Body Surface Temperature (MLX90640), the system can calculate a personalized "Thermal Comfort Envelope," learning exactly what room temperature produces the user's deepest sleep.

SparkFun Mini Tristimulus Color Sensor - OPT4048DTSR

- The Hardware: A high-precision sensor that measures not just brightness (Lux), but the Color Temperature (CCT) and Color Coordinates (CIE x,y) of ambient light.

- Why It Was Chosen: Standard Lux sensors are color-blind. The OPT4048 allows the system to detect "Melatonin Suppressing" blue light.

- The Fusion Role: Circadian Latency Tracking. The system tracks exposure to cool light (>4000K) in the hours before bed and correlates it with the "Sleep Onset Latency" (time to fall asleep) measured by the Radar, quantifying exactly how light pollution affects the user.

USB Microphone

- The Hardware: A compact, driver-free USB microphone with an omnidirectional pickup pattern, capable of capturing clear audio in a 360-degree radius.

- Why It Was Chosen: It connects directly to the USB Host interface of the Arduino Uno Q, allowing the Linux core to ingest high-bandwidth audio data directly without taxing the MCU or requiring complex I2S wiring. Its ultra-small form factor allows it to be discreetly embedded in the lamp's base without disrupting the aesthetic.

- The Fusion Role: Acoustic Anomaly Detection. While the radar detects motion, it cannot detect noise. This sensor feeds audio data into a dedicated Edge Impulse model to classify snoring (internal disturbance) vs. environmental noise (external disturbance), providing the missing context for why a "micro-wake" event occurred.

The Actuation and Power Layer:

FCOB CCT LED Strip

- The Hardware: A "Flip Chip on Board" (FCOB) LED strip capable of variable Color Temperature (Tunable White).

- Why It Was Chosen: High density (seamless light) and the ability to mimic natural daylight (Cool White) or sunset (Warm Amber).

- The Fusion Role: Adaptive Intervention. If the fusion model detects "Wakefulness" in the middle of the night, it doesn't just turn on; it emits a faint, non-stimulating red/amber glow. If it detects "Morning," it shifts to blue-enriched white to suppress melatonin.

Adafruit bq25185 USB / DC / Solar Charger

- The Hardware: A robust power management module.

- Why It Was Chosen: To ensure stable 5V delivery to the power-hungry sensors (Radar/Thermal) and manage the system's power rails efficiently, protecting the sensitive electronics from brownouts.

The design separates the device into two functional assemblies: the Logic Base (housing the computing core and sensors) and the Optical Diffuser (the lampshade).

The entire chassis is designed for standard FDM 3D printing, requiring no support structures for the primary surfaces. The modular architecture allows for easy access to the internal electronics. The sensor array is mounted on a dedicated sled within the base, ensuring the thermal camera and radar are strictly aligned with the bed level.

The upper lampshade is fabricated using "Vase Mode" (Spiral Vase) slicing settings, which produces a seam-free cylinder. The single-wall thickness provides good translucency, allowing the internal LED array to diffuse evenly without harsh hotspots and creating the soft, uniform glow essential for non-intrusive night lighting.

All components are printed in White PLA (Polylactic Acid).

SomnoSphere utilizes a multi-modal sensor fusion architecture. Rather than relying on a single monolithic model, the system employs a cascading classification pipeline. Processing is distributed across three distinct models (Visual, Audio, and Physiological) running on the Arduino Uno Q, the outputs of which are aggregated into a final Anomaly Detection block.

1. Visual Pose Estimation (Thermal)

This model is responsible for detecting human presence and determining posture to contextually enable or disable downstream sleep tracking.

- Sensor Hardware: Adafruit MLX90640 IR Thermal Camera (110° FoV).

- Input Data: 24x32 pixel temperature array.

- Preprocessing:

  - The raw temperature grid is normalized to a grayscale image representation (0–255 pixel intensity).

  - The image is resized to 32x32 pixels using a "fit longest axis" method (preserving aspect ratio with padding) to standardize input dimensions for the Convolutional Neural Network (CNN).

- Data Acquisition:

  - The dataset consists of 50 samples per class.

  - Data was captured in the target deployment environment (right next to my bed) to account for specific background thermal noise.

- Target Classes:

  - `idle` (Empty bed/room).

  - `sitting` (User detected in upright posture; implies reading or wakefulness).

  - `lying_down` (User detected in horizontal posture; triggers Sleep Staging model).
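
The preprocessing described above can be sketched in plain Python. This is an illustrative approximation only: the per-frame min/max normalization and the zero-valued padding are assumptions, and the actual Edge Impulse image block may scale and pad differently. Since the 24x32 frame is already 32 pixels along its longest axis, "fit longest axis" reduces to padding the height from 24 to 32 rows.

```python
from typing import List

ROWS, COLS, SIZE = 24, 32, 32  # MLX90640 frame and target square size


def thermal_to_image(frame: List[List[float]]) -> List[List[int]]:
    """Normalize a 24x32 temperature grid to 0-255 and pad it to 32x32."""
    flat = [t for row in frame for t in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero on a perfectly flat frame

    # Map the coldest pixel to 0 and the hottest pixel to 255.
    gray = [[round(255 * (t - lo) / span) for t in row] for row in frame]

    # Width is already 32, so only the height needs padding (4 rows top, 4 bottom).
    pad_top = (SIZE - ROWS) // 2
    pad_bottom = SIZE - ROWS - pad_top
    blank_row = [0] * SIZE
    return (
        [blank_row[:] for _ in range(pad_top)]
        + gray
        + [blank_row[:] for _ in range(pad_bottom)]
    )
```

One caveat of per-frame normalization is that an empty room still gets stretched to the full 0–255 range, which amplifies background noise; a fixed temperature range (for example 15–40 °C) would be a reasonable alternative.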

2. Audio Event Classification (Snore Detection)

This model monitors acoustic disturbances to correlate sleep fragmentation with respiratory noise or environmental sounds.

- Sensor Hardware: Integrated Microphone (via USB/Audio Adapter).

- Data Ingestion:

  - Data was collected directly from the device to the Edge Impulse ingestion service using the Linux CLI.

  - Command: `edge-impulse-linux --disable-camera`

- Sampling Strategy:

  - Window Size: 1,000ms (1 second).

  - Dataset Size: Approximately 1 minute of total audio per class.

- Target Classes:

  - `snore` (Rhythmic respiratory noise).

  - `noise` (Background ambient sounds, HVAC, street noise).
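
To illustrate the sampling strategy, here is a small sketch of how a recording could be cut into non-overlapping 1-second windows. The 16 kHz sample rate and the `split_windows` helper are assumptions for the example; Edge Impulse Studio performs its own windowing when the impulse is configured.

```python
from typing import List, Sequence


def split_windows(
    samples: Sequence[int], sample_rate_hz: int, window_ms: int = 1000
) -> List[Sequence[int]]:
    """Cut raw audio samples into non-overlapping windows, dropping any remainder."""
    window_len = sample_rate_hz * window_ms // 1000
    if window_len <= 0:
        raise ValueError("window must contain at least one sample")
    return [
        samples[start:start + window_len]
        for start in range(0, len(samples) - window_len + 1, window_len)
    ]
```

With these settings, one minute of audio per class yields 60 training windows per class, which is a small dataset and a likely first place to look if the snore classifier generalizes poorly.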

3. Physiological Sleep Staging (Radar)

This classifier determines the sleep phase based on vital signs extracted from the mmWave radar. Note: To conserve compute resources and reduce false positives, this model is conditionally activated only when the Visual Pose model classifies the state as `lying_down`.

- Sensor Hardware: C1001 60GHz mmWave Sensor.

- Input Features:

  - Heart Rate (BPM).

  - Respiration Rate (RPM).

  - Movement Energy Index.

- Algorithm: K-Nearest Neighbors (KNN) classification. KNN was selected for its efficiency in classifying low-dimensional scalar data (vital signs) into distinct sleep states.

- Target Classes:

  - `awake` (High movement energy, variable heart rate).

  - `light_sleep` (Low movement, stable respiration).

  - `deep_sleep` (Minimal movement, rhythmic/lowest respiration rate).
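
The KNN stage and its pose-based gating can be sketched as follows. Everything numeric here is made up for illustration: the training points, the per-feature scaling, and `k=3` are assumptions, and the deployed classifier is trained in Edge Impulse rather than hand-written like this.

```python
import math
from collections import Counter
from typing import Optional, Tuple

Features = Tuple[float, float, float]  # (heart_rate_bpm, respiration_rpm, movement_energy)

# Hypothetical labelled examples, not real recordings.
TRAINING = [
    ((75, 17, 0.80), "awake"), ((72, 16, 0.65), "awake"), ((78, 18, 0.90), "awake"),
    ((62, 14, 0.20), "light_sleep"), ((60, 14, 0.15), "light_sleep"), ((63, 15, 0.25), "light_sleep"),
    ((52, 11, 0.03), "deep_sleep"), ((54, 12, 0.05), "deep_sleep"), ((50, 11, 0.02), "deep_sleep"),
]

# Rough per-feature scale so that no single unit dominates the distance.
SCALE = (10.0, 2.0, 0.3)


def _distance(a: Features, b: Features) -> float:
    return math.sqrt(sum(((x - y) / s) ** 2 for x, y, s in zip(a, b, SCALE)))


def classify_sleep_stage(features: Features, k: int = 3) -> str:
    """Majority vote among the k nearest training examples."""
    nearest = sorted(TRAINING, key=lambda item: _distance(item[0], features))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def stage_if_lying_down(pose: str, features: Features) -> Optional[str]:
    """Run sleep staging only when the thermal pose model reports lying_down."""
    if pose != "lying_down":
        return None
    return classify_sleep_stage(features)
```

Scaling the features matters for KNN: without it, heart rate (tens of BPM) would swamp the movement index (fractions of 1) in the distance calculation.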

4. Sensor Fusion & Anomaly Detection

The final stage of the pipeline merges the outputs of the classifiers with high-resolution environmental telemetry to identify the root causes of sleep disturbances.

- Input Vector:

  - Environmental Scalars: CO₂, Temperature, Humidity, VOC Index, PM1.0, PM2.5, PM4.0, PM10.0, Brightness (Lux), Color Temperature (Kelvin).

  - Classifier Output: snore detection boolean.

- Logic: The system effectively creates a time-series correlation matrix. An anomaly is flagged when an environmental threshold is breached coincident with a negative sleep state.

- Example: If snore is detected AND PM2.5 > Threshold, the system logs a respiratory irritation event.

- Example: If awake is detected AND Color Temp > 4000K (Blue Light), the system logs a circadian disruption event.
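
The two example rules above can be expressed as a small rule function. This is a sketch under assumptions: the dictionary keys and event names are invented for illustration, and the PM2.5 threshold is a placeholder because the write-up does not state a value. Only the 4000K color-temperature limit comes from the text.

```python
from typing import Dict, List

PM25_THRESHOLD_UGM3 = 35.0     # placeholder value; the actual threshold is not specified
COLOR_TEMP_LIMIT_K = 4000.0    # "cool light" boundary taken from the text


def detect_anomalies(sleep_state: str, snore: bool, env: Dict[str, float]) -> List[str]:
    """Return the events whose environmental threshold is breached
    at the same moment as a negative sleep state."""
    events: List[str] = []
    if snore and env["pm2_5_ugm3"] > PM25_THRESHOLD_UGM3:
        events.append("respiratory_irritation")
    if sleep_state == "awake" and env["color_temp_k"] > COLOR_TEMP_LIMIT_K:
        events.append("circadian_disruption")
    return events
```

For instance, `detect_anomalies("awake", False, {"pm2_5_ugm3": 8.0, "color_temp_k": 5200.0})` returns `["circadian_disruption"]`. Note that coincidence in a single sample is a hint, not proof of causation; comparing many nights is what makes the correlation meaningful.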

SomnoSphere operates on a dedicated finite state machine (FSM) running on the Arduino Uno Q. This engine continuously evaluates the outputs from the machine learning classifiers against real-time environmental telemetry to execute actuation commands and structure data for post-sleep analysis.

1. Sleep State Logging

Upon the classification of a user in a light_sleep or deep_sleep state:

- Action: The system suppresses all illumination to ensure total darkness.

- Data Persistence: A timestamped entry is generated containing the specific sleep phase, current heart rate, respiration rate, and a snapshot of all environmental scalars (CO₂, Temp, etc.). Logging at 0.2Hz (one entry every 5 seconds) allows for high-resolution hypnogram generation.
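
One simple way to persist these entries is a JSON Lines file with one record per 5-second tick. The sketch below assumes that storage format and uses hypothetical field names; the write-up does not say how the log is actually stored.

```python
import json
import time
from typing import Dict, List, Optional

LOG_INTERVAL_S = 5                         # 0.2 Hz logging rate
ENTRIES_PER_HOUR = 3600 // LOG_INTERVAL_S  # 720 entries per hour


def make_log_entry(
    stage: str,
    heart_rate_bpm: float,
    respiration_rpm: float,
    env: Dict[str, float],
    timestamp: Optional[float] = None,
) -> dict:
    """Bundle the sleep phase, vitals, and environmental snapshot into one record."""
    return {
        "timestamp": time.time() if timestamp is None else timestamp,
        "stage": stage,
        "heart_rate_bpm": heart_rate_bpm,
        "respiration_rpm": respiration_rpm,
        "env": dict(env),
    }


def append_entry(path: str, entry: dict) -> None:
    """Append one record as a single JSON line."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(entry) + "\n")


def read_entries(path: str) -> List[dict]:
    """Load every record from the log, skipping blank lines."""
    with open(path, encoding="utf-8") as log_file:
        return [json.loads(line) for line in log_file if line.strip()]
```

At this rate an 8-hour night produces 5,760 entries, which is small enough to keep as a flat file and easy to replay when building the morning report.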

2. Wake Event & Circadian Actuation

If the physiological model classifies the user state as awake during defined sleeping hours (e.g., 22:00 – 07:00), or if the visual pose model detects a transition from lying_down to sitting:

- Actuation: The system activates the CCT LED array in "Night Navigation Mode."

- Brightness: Limited to <10% (Low Lux).

- Color Temperature: Fixed at ~2200K (Warm Amber).

- Rationale: This specific spectral output is designed to provide sufficient visibility for navigation (e.g., restroom visits) while minimizing blue light emission to prevent melatonin suppression and facilitate a rapid return to sleep.

- Data Persistence: The event is logged as a `wake_event` with an associated timestamp.
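
The wake-event rule can be sketched as a single decision function. The names (`LedCommand`, `next_led_command`) and the 8% brightness are assumptions chosen to satisfy the "<10%" limit; the 22:00 to 07:00 window and the ~2200K color temperature come from the description above.

```python
from dataclasses import dataclass
from datetime import time
from typing import Optional


@dataclass(frozen=True)
class LedCommand:
    brightness_pct: int
    color_temp_k: int


NIGHT_NAVIGATION = LedCommand(brightness_pct=8, color_temp_k=2200)  # dim warm amber
LIGHTS_OFF = LedCommand(brightness_pct=0, color_temp_k=2200)

SLEEP_START, SLEEP_END = time(22, 0), time(7, 0)


def in_sleep_hours(now: time) -> bool:
    """The sleep window wraps past midnight, so it is an OR of two ranges."""
    return now >= SLEEP_START or now < SLEEP_END


def next_led_command(
    now: time, stage: Optional[str], prev_pose: str, pose: str
) -> Optional[LedCommand]:
    """Return the LED command for this tick, or None to leave the lamp unchanged."""
    sat_up = prev_pose == "lying_down" and pose == "sitting"
    woke_at_night = stage == "awake" and in_sleep_hours(now)
    if woke_at_night or sat_up:
        return NIGHT_NAVIGATION
    if stage in ("light_sleep", "deep_sleep"):
        return LIGHTS_OFF
    return None
```

For example, `next_led_command(time(2, 30), "awake", "lying_down", "lying_down")` returns `NIGHT_NAVIGATION`, while the same `awake` reading at noon returns `None` and leaves the lamp alone.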

3. Disturbance & Anomaly Correlation

The system runs a parallel thread for event detection logic:

- Audio Triggers: If the Audio Classifier outputs `snore` with a confidence above the threshold.

- Environmental Triggers: If any environmental sensor reading records an anomaly.

- Action: These events are flagged as `disturbances`. The system captures the timestamp and effectively "tags" the current sleep session segment with the specific cause (e.g., `disturbance_snore` or `disturbance_air_quality`). These tags are critical for the root-cause analysis engine.

4. Post-Session Reporting & Visualization

At the conclusion of a sleep session (triggered manually or by a "morning" time threshold), the system generates a comprehensive visual report fusing the logged datasets.

- The Hypnogram Timeline: A linear time-series graph visualizing the progression of sleep cycles.

  - Y-Axis: Sleep Stages (`Awake`, `Light Sleep`, `Deep Sleep`).

  - X-Axis: Time (Session Duration).

- Disturbance Overlays:

  - Anomalies: Environmental spikes (Temperature, CO₂, PM2.5) are overlaid as color-coded vectors on the timeline to show correlation with `awake` states.

  - Snore Events: Indicated as discrete markers along the timeline, highlighting periods of respiratory fragmentation.

This visualization allows the user to instantly correlate environmental factors with sleep quality, transforming raw sensor data into actionable wellness insights.
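
Before plotting, the per-tick log has to be collapsed into stage segments. The sketch below shows one way to do that with run-length grouping; the `stage` key and the 5-second interval are assumptions that mirror the 0.2Hz logging described earlier, and the plotting itself is left out.

```python
from itertools import groupby
from typing import Dict, Iterable, List


def hypnogram_segments(entries: Iterable[Dict], interval_s: int = 5) -> List[Dict]:
    """Collapse consecutive log entries with the same stage into timeline segments."""
    segments: List[Dict] = []
    tick = 0  # index of the first entry in the current run
    for stage, run in groupby(entry["stage"] for entry in entries):
        count = len(list(run))
        segments.append(
            {
                "stage": stage,
                "start_s": tick * interval_s,
                "duration_s": count * interval_s,
            }
        )
        tick += count
    return segments
```

Each segment maps directly to one horizontal step of the hypnogram, and disturbance tags can be drawn on the same time axis using their timestamps.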

Next Steps

While the current version of SomnoSphere has transformed how I understand my sleep environment, I see several exciting opportunities to expand its capabilities using the Arduino Uno Q's dual-core architecture.

- Smart Wake-Up Synchronization: My goal is to move beyond simple time-based triggers. I plan to implement a feature where the system detects my phone's alarm schedule (or listens for the specific alarm frequency) to initiate a "Sunrise Simulation." By fading the LED strip from deep red to bright daylight 15 minutes before my alarm rings, I can wake up more naturally during a light sleep cycle.

- Home Assistant Integration: Since the SomnoSphere is already processing rich environmental data, I want to integrate it into my broader smart home ecosystem via MQTT. This would allow the lamp to synchronize with the circadian lighting logic of the other lights in my room, ensuring that even if I override the lamp manually, it automatically matches the color temperature of the rest of the house.

- Expanded Audio Classification: Currently, the Edge Impulse model focuses on snoring, but the microphone can do so much more. I plan to train a new audio model to classify:

- Alarm Ringing: By detecting when my alarm starts and stopping the timer when I actually get out of bed (verified by the thermal camera), I can calculate a "Sleep Inertia" metric: tracking exactly how long I procrastinate in the mornings.

- Loud Anomalies: I want to add an additional layer that detects loud sounds, flagging these as critical anomalies on the timeline.
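
As a starting point for the planned Sunrise Simulation, the fade could be a simple linear ramp over the 15 minutes before the alarm. This is only a sketch of a feature that does not exist yet: the 2200K starting point reuses the warm-amber setting from Night Navigation Mode, while the 6500K daylight target and the linear curve are assumptions.

```python
from typing import Tuple

SUNRISE_DURATION_S = 15 * 60  # fade starts 15 minutes before the alarm


def sunrise_step(
    elapsed_s: float,
    duration_s: float = SUNRISE_DURATION_S,
    start_k: int = 2200,
    end_k: int = 6500,
    max_brightness_pct: int = 100,
) -> Tuple[int, int]:
    """Return (brightness_pct, color_temp_k) for a point in the sunrise ramp."""
    progress = min(max(elapsed_s / duration_s, 0.0), 1.0)  # clamp to [0, 1]
    brightness = round(progress * max_brightness_pct)
    color_temp = round(start_k + progress * (end_k - start_k))
    return brightness, color_temp
```

Halfway through the ramp, `sunrise_step(450)` gives `(50, 4350)`. A perceptually smoother result would probably need a non-linear brightness curve, since the eye is far more sensitive to changes at low light levels.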
