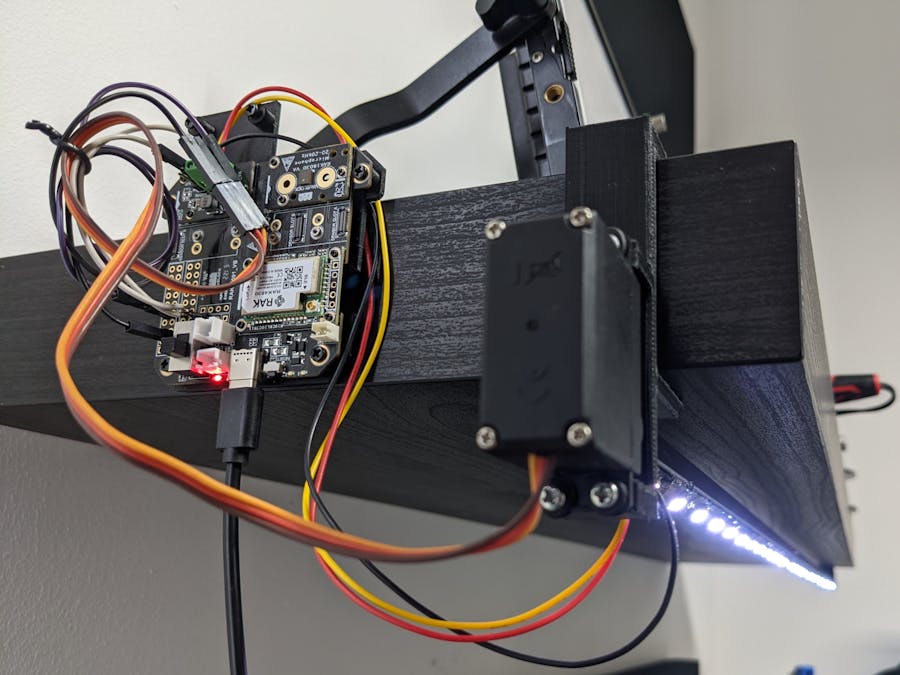

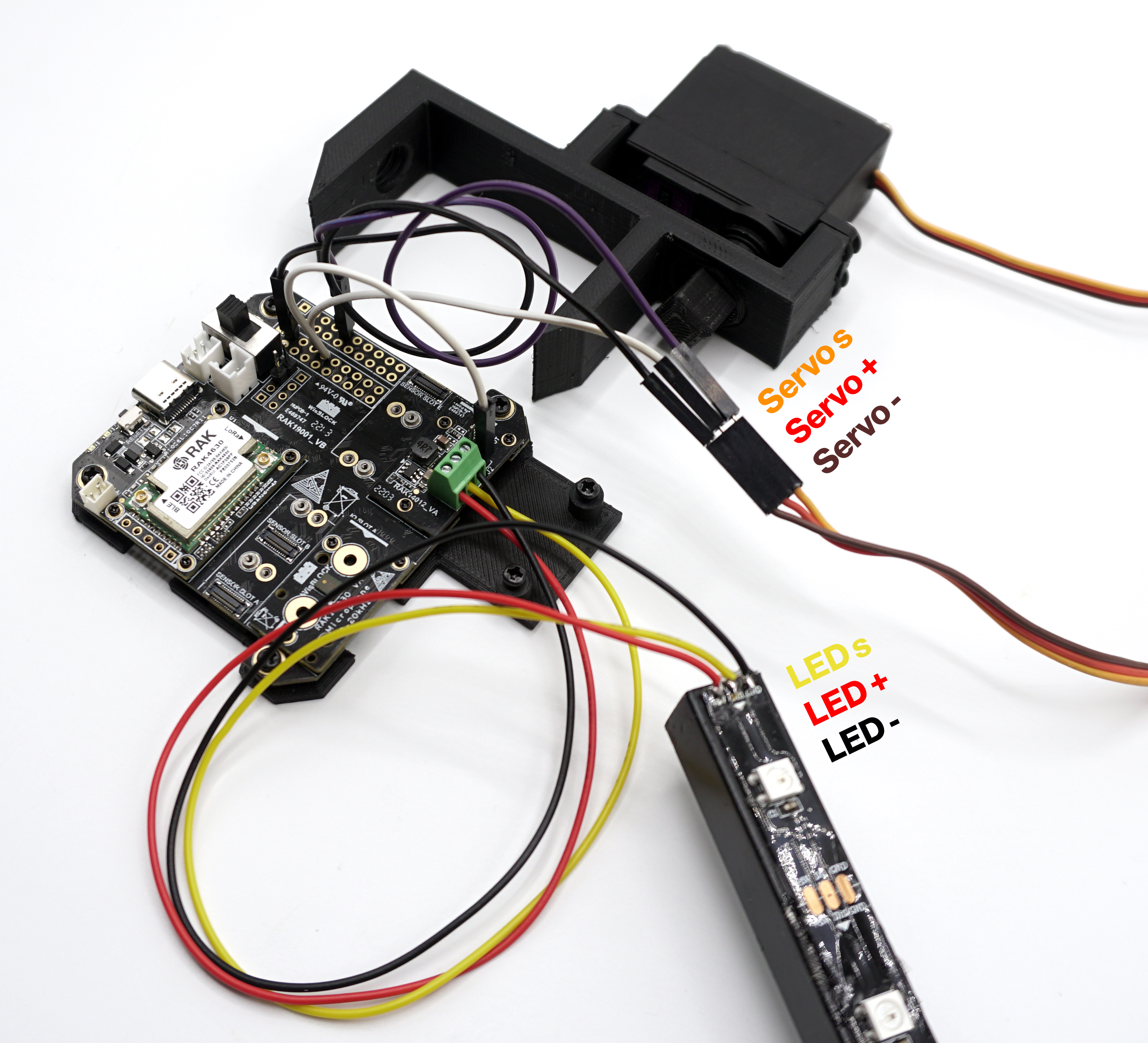

Normally, the smart devices in our homes depend on internet availability and third-party services to work correctly. Our data ends up scattered across the cloud, and we feel the latency while each command is processed and the requested action is performed. This project tries to solve that problem by making the most of the microcontrollers we commonly use: it implements a system that processes voice commands in real time and controls a motor and an addressable LED strip, all locally and without an internet connection. The result is an LED desk lamp that can be controlled by voice and adjusted to our mood.

The Hardware

Using a voice recognition model built in Edge Impulse, I can classify voice commands that set the lighting status of my LED desk lamp and also control the light direction for a better-lit workspace.

Training the Model

Edge Impulse lets you build your audio dataset from different sources: directly from the end device (if it supports the ingestion service), or from your smartphone or personal computer. I decided to use my PC and a headset for an easier workflow, and to demonstrate the model's ability to identify audio from a different source than the one used for training.

I recorded 10-second samples, repeating each keyword several times, and then split them into 1-second windows.
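As a rough illustration of that splitting step, here is a minimal Python sketch that cuts a recording into consecutive 1-second windows. It is not the tool used in this project (Edge Impulse Studio has its own split feature); the 16 kHz sample rate and the function name `split_into_windows` are assumptions made for the example.

```python
def split_into_windows(samples, sample_rate_hz, window_s=1.0):
    """Cut a recording into consecutive, non-overlapping windows.

    Trailing samples that do not fill a whole window are dropped.
    """
    window_len = int(round(sample_rate_hz * window_s))
    if window_len <= 0:
        raise ValueError("window length must be positive")
    n_windows = len(samples) // window_len
    return [samples[i * window_len:(i + 1) * window_len] for i in range(n_windows)]


# Assumption: 16 kHz mono audio, so a 10-second recording is 160,000 samples.
SAMPLE_RATE_HZ = 16_000
recording = [0] * (10 * SAMPLE_RATE_HZ)  # silent placeholder, not real data

windows = split_into_windows(recording, SAMPLE_RATE_HZ)
print(len(windows), len(windows[0]))  # 10 windows of 16000 samples each
```

A fixed-length cut like this can slice a keyword in half, which is why a split tool that centers each window on the detected speech is usually the better choice for real recordings.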

My dataset consists of more than 4 minutes of audio for each keyword:

- Hey Lamp.

- Work mode.

- Party mode.

- Study mode.

- Turn off.

- Noise.

- Unknown.

This project is public on Edge Impulse so you can clone it, modify it and test it!

Impulse design

My model consists of an Audio (MFCC) processing block and a Classification (Keras) learning block:

Important:

- Window size: 999 ms

- Window increase: 500 ms

- MFCC settings: left at their defaults.
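To make the two window settings concrete, the sketch below computes where each 999 ms window would start when it slides over a clip in 500 ms steps. It only illustrates the arithmetic and is not Edge Impulse's code; the 16 kHz sample rate and both function names are assumptions.

```python
def window_starts_ms(clip_ms, window_ms=999, increase_ms=500):
    """Start offsets (in ms) of every full window that fits inside the clip."""
    if clip_ms < window_ms:
        return []
    return list(range(0, clip_ms - window_ms + 1, increase_ms))


def ms_to_samples(duration_ms, sample_rate_hz=16_000):
    """Convert a duration to a sample count (sample rate is an assumption)."""
    return duration_ms * sample_rate_hz // 1000


# A 1-second training sample holds exactly one 999 ms window.
print(window_starts_ms(1000))

# A longer clip yields overlapping windows, each shifted by 500 ms.
print(len(window_starts_ms(10_000)))

# Window length and stride expressed in samples at the assumed 16 kHz.
print(ms_to_samples(999), ms_to_samples(500))
```

Because the window is slightly shorter than the 1-second samples, every sample contributes one full window instead of being discarded for falling a few milliseconds short.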

Model performance results

I achieved quite good accuracy. Validated against new data with the "Live classification" feature, the model performed very well.

Note: 100% accuracy is not always a good sign. It may mean that your model is overfitted and will struggle to classify new data. Check the real behavior of your model by running "Live classification" on data different from what was used for training, or go through a trial-and-error process of changing the model parameters and keeping the ones that give the best results.
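The check described in the note can be expressed as a comparison between accuracy on the training data and accuracy on new recordings. The following is a minimal sketch with made-up toy labels, not results from this project; the 0.10 gap threshold and the function names are arbitrary assumptions.

```python
def accuracy(true_labels, predicted_labels):
    """Fraction of predictions that match the true labels."""
    if not true_labels or len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must be non-empty and the same length")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def looks_overfitted(train_acc, new_data_acc, max_gap=0.10):
    """Flag a model whose accuracy drops sharply on unseen data.

    max_gap is an arbitrary illustrative threshold, not a standard value.
    """
    return (train_acc - new_data_acc) > max_gap


# Toy labels for illustration only (not measurements from this project).
train_true = ["Work mode", "Party mode", "Turn off", "Noise"]
train_pred = ["Work mode", "Party mode", "Turn off", "Noise"]
new_true = ["Work mode", "Party mode", "Turn off", "Noise"]
new_pred = ["Work mode", "Study mode", "Turn off", "Unknown"]

train_acc = accuracy(train_true, train_pred)
new_acc = accuracy(new_true, new_pred)
print(train_acc, new_acc, looks_overfitted(train_acc, new_acc))
```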

Deploy the model back to the microcontroller

- In the Deployment section, create an Arduino library, without enabling the EON compiler:

- Unzip the downloaded library.

- Set up the PlatformIO environment to program WisBlock boards by following this guide.

- Open the PlatformIO project attached.

- Copy the library to the /lib folder of the project:

- Build the code and flash the WisBlock core.

- Test the project!!
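Once the firmware is running, each inference produces a score per label. One possible way to turn those scores into lamp actions is sketched below in Python for readability; the actual firmware is an Arduino C++ project and may work differently. The wake-word flow, the 0.8 confidence threshold, and the names `top_label` and `LampController` are all assumptions, and the label strings simply mirror the keyword list above.

```python
WAKE_WORD = "Hey Lamp"
MODES = {"Work mode", "Party mode", "Study mode", "Turn off"}


def top_label(scores, threshold=0.8):
    """Return the highest-scoring label, or None if it is not confident enough."""
    label, score = max(scores.items(), key=lambda kv: kv[1])
    return label if score >= threshold else None


class LampController:
    """Hypothetical two-step flow: wake word first, then a mode command."""

    def __init__(self):
        self.awake = False
        self.mode = "Turn off"

    def on_inference(self, scores):
        label = top_label(scores)
        if label == WAKE_WORD:
            self.awake = True           # start listening for a command
        elif self.awake and label in MODES:
            self.mode = label           # apply the command
            self.awake = False          # require the wake word again
        # "Noise", "Unknown" and low-confidence results change nothing.
        return self.mode


lamp = LampController()
lamp.on_inference({"Party mode": 0.95, "Noise": 0.05})  # ignored: not awake
lamp.on_inference({"Hey Lamp": 0.90, "Unknown": 0.10})  # wakes the lamp
print(lamp.on_inference({"Party mode": 0.95, "Noise": 0.05}))
```

Requiring the wake word before every command is one way to keep background conversation from switching the lamp by accident.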

I am happy with the results of the project. I demonstrated to myself that we are underusing the resources of today's microcontrollers and that they can achieve more than we think. Edge computing is a very powerful approach: your project captures data from the environment, processes it, and acts on it, all in one place.

I have to thank RAKwireless for making the path from prototype to final product as easy as attaching some sensors with screws and writing code, knowing that I end up with an industrial-standard project without a custom PCB that would take a lot of waiting and debugging. Edge Impulse, in turn, let me create tinyML models without deep knowledge of the field, from dataset creation to deploying the model back to the microcontroller.

The project can be improved in different ways. One of them is deploying the model with the native SDK of a specific microcontroller, for example ESP-IDF for the ESP32 or the Pico SDK for the RP2040; both are also core options in the WisBlock ecosystem that I could have used. This would let me achieve lower inference and DSP times and better stability. Even so, I am very happy with the results, and this type of application has a lot of potential.
