I recently made an impulse purchase: the Intel Arduino 101. I have no idea why I bought it. Somewhere in my mind I probably just wanted to play with the accelerometer and gyro features. Whatever the idea was, it was a great one!

You see, the Intel 101 has lots of extra features above and beyond the amazing Arduino Uno it is attached to. First of all, it's your standard Arduino Uno. Looks like it, smells like it and walks like it.

Let's see the Intel 101's built-in extras:

- 6 axis Gyro

- Compass

- Thermometer

- Accelerometer

- BLE - Bluetooth low energy

- 128 Node Hardware Neural Network from General Vision

It has a 128 node hardware neural network. Yes, you heard me right, the 101 for less than $40 has all this in it. It is a total bargain. The chip is made by a company called General Vision, and I have spoken to them to clarify certain features, and also worked with Intel to fix a few bugs (which you can find fixes for in the head of their repo on GitHub).

So firstly, some URL reference points:

So without further ado, I got down to messing about with the Neural Network side of things.

Now before I continue I want to say that this is a work in progress. Coding-wise I have everything working and finished. But the effectiveness of the NN leaves a lot to be desired! Maybe as a community we can work together to see if there are better ways to solve the problem.

So consider this a starting point.

101 NN Features

The 101 has an Intel Curie chip on it, which has a 128 node hardware neural network. The NN has 2 modes: RBF or KNN. It also has 2 distance modes: L1 or LSUP (The 101 Intel Curie Chip Specification).

The NN has the following features:

- Train a neuron with a 128 byte vector

- Save the knowledge in the network

- Load the knowledge back into the network

In order to do all this from an Arduino sketch you must link into your sketch the 101 pattern matching library. Follow the Arduino IDE instructions to get this library downloaded to the IDE and linked into your sketch on the Arduino IDE. It works the same as any other library. Use the library manager to download it; once you include the header file from the library, the Arduino linker will pull in all the necessary source to resolve your symbols.

So from now on I presume you have downloaded the Intel 101 library into your Arduino IDE. The library open source code is here:

Repository and Instructions on installing the Intel 101 Arduino Pattern Matching Library

The General Vision Neural Network

The 128 node NN was made by a company called General Vision. You can see what they are up to here:

To understand how the NN works you should read the General Vision guide here:

- 101 Hardware NN Overview - How the NN works

If you are interested in neural networks and how they work, read up on Wikipedia: Neural Networks Overview

Some quick notes about the GV NN. There are two main modes: K nearest neighbor (KNN) and radial basis function (RBF). My 101 sketch supports both modes, and you can switch between them easily from the main menu. There are also 2 distance modes: L1 and LSUP. Note that when you switch between these 2 distance modes, you need to retrain the NN.
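To make the two distance modes concrete, here is a minimal host-side sketch, assuming the usual definitions: L1 is the sum of the per-byte absolute differences, and LSUP is the single largest per-byte difference. The names `Pattern`, `distanceL1` and `distanceLsup` are made up for illustration and are not part of the pattern matching library.

```cpp
#include <algorithm>
#include <array>
#include <cstddef>
#include <cstdint>
#include <cstdlib>

// Hypothetical names for illustration; not taken from the 101 library.
constexpr std::size_t kVectorSize = 128;  // bytes per neuron vector
using Pattern = std::array<std::uint8_t, kVectorSize>;

// L1 (Manhattan) distance: the sum of the per-byte absolute differences.
int distanceL1(const Pattern& a, const Pattern& b) {
    int total = 0;
    for (std::size_t i = 0; i < kVectorSize; ++i) {
        total += std::abs(static_cast<int>(a[i]) - static_cast<int>(b[i]));
    }
    return total;
}

// LSUP (Chebyshev) distance: the largest single per-byte absolute difference.
int distanceLsup(const Pattern& a, const Pattern& b) {
    int largest = 0;
    for (std::size_t i = 0; i < kVectorSize; ++i) {
        const int diff = std::abs(static_cast<int>(a[i]) - static_cast<int>(b[i]));
        largest = std::max(largest, diff);
    }
    return largest;
}
```

With these definitions an L1 distance can reach 128 x 255 while an LSUP distance never exceeds 255. The two live on very different scales, which is one plausible reason why knowledge learnt with one distance mode does not carry over to the other.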

When learning/teaching the NN, we want to use the RBF mode only. We can switch to KNN mode after we have trained the NN.
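The difference between the two classifier modes can be sketched on a PC with a simplified model. This is an assumption-laden illustration, not the hardware's actual logic: each committed neuron is modelled as a stored pattern, a category and an "influence" radius, and every name below is hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

using Pattern = std::vector<std::uint8_t>;

// Simplified, hypothetical model of one committed neuron.
struct Neuron {
    Pattern prototype;  // the pattern this neuron stored when it learnt
    int category;       // the label taught with that pattern
    int influence;      // radius inside which the neuron "fires"
};

constexpr int kUnknown = 0;  // assumed "no match" value for this sketch

int distanceL1(const Pattern& a, const Pattern& b) {
    int total = 0;
    for (std::size_t i = 0; i < a.size() && i < b.size(); ++i) {
        total += std::abs(static_cast<int>(a[i]) - static_cast<int>(b[i]));
    }
    return total;
}

// RBF: only neurons whose influence field contains the input may answer.
// The closest firing neuron wins; if none fires the result is "unknown".
int classifyRbf(const std::vector<Neuron>& net, const Pattern& input) {
    int bestCategory = kUnknown;
    int bestDistance = -1;
    for (const Neuron& n : net) {
        const int d = distanceL1(n.prototype, input);
        const bool fires = d < n.influence;
        if (fires && (bestDistance < 0 || d < bestDistance)) {
            bestCategory = n.category;
            bestDistance = d;
        }
    }
    return bestCategory;
}

// KNN (K = 1 here): influence fields are ignored, so as long as the
// network is not empty the closest neuron always answers.
int classifyKnn(const std::vector<Neuron>& net, const Pattern& input) {
    int bestCategory = kUnknown;
    int bestDistance = -1;
    for (const Neuron& n : net) {
        const int d = distanceL1(n.prototype, input);
        if (bestDistance < 0 || d < bestDistance) {
            bestCategory = n.category;
            bestDistance = d;
        }
    }
    return bestCategory;
}
```

In this model KNN never reports "unknown" once a single neuron is committed. That may be why training is done in RBF mode: the network needs "I don't recognise this" as a possible answer in order to know when to commit a new neuron.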

What is This Project?

I have put together a sketch that you can upload to your Intel 101. It lets you control the neural network on the board via a menu on the serial monitor.

In order to make the NN useful, I have set it up to read the MNIST training and test data sets from a local SD card.

Training Data and Test Data

I soon got the 101 NN working and decided to test out its capabilities using the MNIST OCR data. For those unfamiliar with this data, it is basically a set of single digits between 0 and 9 that have been handwritten and scanned in. There are 4 files we need. They come in pairs: a data file that contains all of the actual image data, and a corresponding label file that contains the label of each image.

MNIST is a set of data collected that contains thousands of hand drawn single digit characters from 0-9. There are 2 sets of data: training data to train the NN, and test data to see how well it has learned.

Each dataset is split into 2 files. The first holds the labels (the description of the data): the label 0 for a picture of a zero, 1 for a picture of a 1, and so on through to 9. The second holds the actual pictures of the characters from 0 through to 9. So basically there are 2 sets of data, training and test, and each set has a label file and a raw image file. All the images of a set are stored contiguously in a single file, and likewise all the labels, as we wouldn't want 30,000 files for 30,000 images! The file opens and reads would take eons!
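As a rough illustration of how records can be located inside these files, here is a sketch that assumes the standard MNIST (IDX) layout: big-endian 32-bit header fields, a 16-byte header on the image file (magic number 2051, image count, rows, columns) and an 8-byte header on the label file (magic number 2049, label count). The struct and function names are hypothetical.

```cpp
#include <cstddef>
#include <cstdint>

// Magic numbers and header sizes of the standard MNIST (IDX) files.
constexpr std::uint32_t kImageMagic = 2051;   // 0x00000803
constexpr std::uint32_t kLabelMagic = 2049;   // 0x00000801
constexpr std::size_t kImageHeaderBytes = 16; // magic, count, rows, cols
constexpr std::size_t kLabelHeaderBytes = 8;  // magic, count

// Read a 32-bit big-endian integer, the byte order used by the headers.
std::uint32_t readBigEndian32(const std::uint8_t* p) {
    return (static_cast<std::uint32_t>(p[0]) << 24) |
           (static_cast<std::uint32_t>(p[1]) << 16) |
           (static_cast<std::uint32_t>(p[2]) << 8) |
           static_cast<std::uint32_t>(p[3]);
}

// Hypothetical struct holding the four image-file header fields.
struct ImageHeader {
    std::uint32_t magic;
    std::uint32_t count;
    std::uint32_t rows;
    std::uint32_t cols;
};

// Decode the first 16 bytes of an image file.
ImageHeader parseImageHeader(const std::uint8_t* bytes) {
    return ImageHeader{readBigEndian32(bytes), readBigEndian32(bytes + 4),
                       readBigEndian32(bytes + 8), readBigEndian32(bytes + 12)};
}

// Byte offset of image number `index` (0-based) inside the image file.
std::size_t imageOffset(const ImageHeader& h, std::size_t index) {
    return kImageHeaderBytes + index * static_cast<std::size_t>(h.rows) * h.cols;
}

// Byte offset of label number `index` (0-based) inside the label file.
std::size_t labelOffset(std::size_t index) {
    return kLabelHeaderBytes + index;
}
```

The file sizes in the SD card listing later in this article agree with this layout: 16 + 10,000 x 784 = 7,840,016 bytes for the test images and 8 + 10,000 = 10,008 bytes for the test labels.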

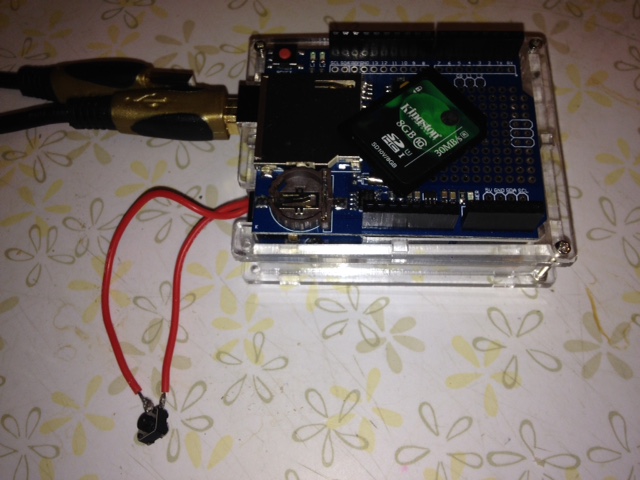

Setting Everything Up

First copy the data files from MNIST on to the SD card. The SD card I used is a 32GB one, and I formatted it to be a single FAT32 partition. You can use this tool: SD Card Formatter For Windows.

Just use a normal OS file copy to put the MNIST files on it, and make sure they are in the root folder (or change the code accordingly!).

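You should have 4 files in the root folder. In the SD card listing shown later in this article they appear as TRAINI.DAT, TRAINL.DAT, TESTI.DAT and TESTL.DAT.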

You can change the file names on the SD card, just make sure you also change them in the code!

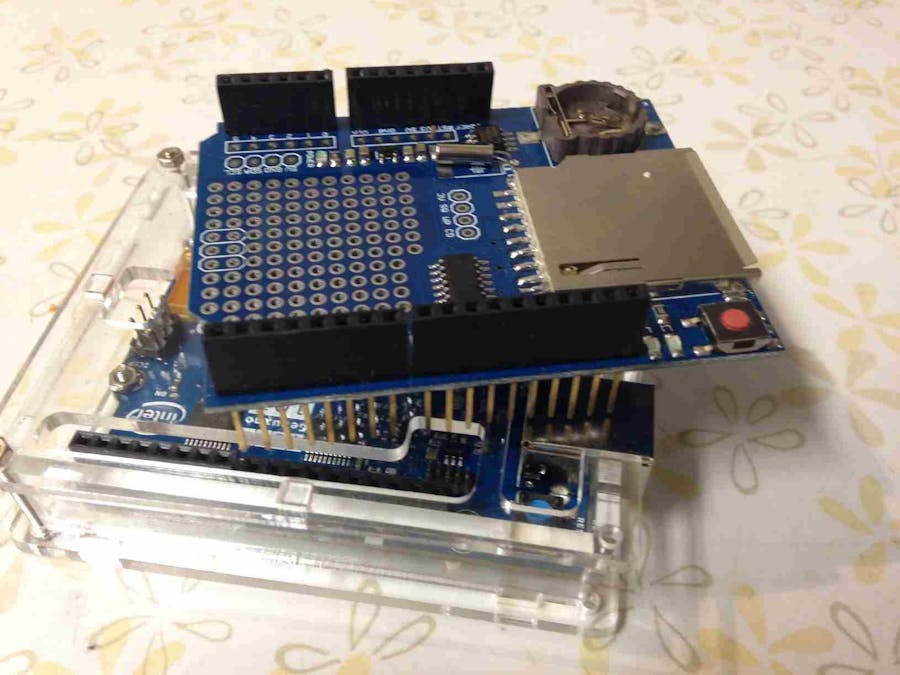

Put the SD card into the SD card holder on the data shield. Put the data shield on the 101.

Plug the 101 into your PC via the USB port and select the port number that the 101 is plugged into. Before you do anything else, perform a Get Board Info from the IDE (under the Tools menu). Mine returns this:

- BN: Arduino/Genuino 101

- VID: 8087

- PID: 0AB6

- SN: AE6774EK61101AA

Don't bother carrying on until you can get a good bi-directional command link between your IDE and the 101 board. This must be working before anything else!

Now we have the board connected, build and upload the source code.

Download the code from my public repository: My Repo.

Use the following folder: Intel101NMistNNEve

All the code is in here, the other folders are just experiments, etc.

This folder contains the sketch (ino file) and also, if you are using Visual Studio 2015, the solution and project file.

Note - To use Visual Studio 2015 with the Arduino you need the Visual Micro extension: Download Visual Micro extension for Visual Studio IDE.

So build and upload the code to the 101!

Setup the Arduino IDE for Intel 101

Make sure you install the PME library for the 101. Download it from the Intel 101 pattern matching GitHub page (also see bottom of this page): 101 Pattern Matching Repo.

Also make sure you download the Intel Curie board support from the Board Manager. Search for 101 and then you will be able to install it.

Now install the Curie Time library as well (why not install all the Curie libraries for the future, e.g., BLE, IMU, etc.).

This project also uses SD card support, so again install this in the IDE: Here is the Adafruit SD Card lib for the IDE.

Once you have the 101 libs and board and SD card support installed in the IDE, you are good to go.

Note - You do not need to include these libs from the IDE option, as the libs are already #include'd in the source code. You just need to make sure they are installed on your machine in the correct location.

Running the code

When you run the code on the 101, you can perform the following menu options via the serial monitor.

So after uploading the code to the 101 (with the correct USB port number set) and starting the Serial Monitor from the IDE, the serial monitor will display the menu when the 101 is not running an operation:

- 1. Clear NN - Clear the NN Data

- 2. Train NN - Train the NN using the training data on the SD card

- 3. Test NN with training data - Run this to see how many characters the NN can recognize from the TRAINING data on the SD card - not the test data. This should be 100% as the data being tested is the same as the data the NN used to learn

- 4. Test NN with test data - This time test the NN with the test data on the SD card. The NN has never seen this data before, so this is a real test of how good the NN is at classifying the images

- 5. View Neural Network State - See all the states the NN is in

- 6. View all neurons - Print out the values of all the 128 neurons

- 7. View committed neurons - Print out the states of only the committed neurons - the neurons that have learned something

- 8. SD Card Info - Show all the SD card info, use this to test the SD card is working with the 101

- 0. Toggle NN type : KNN or RBF - Change NN mode

- Z. Toggle Distance L1 or LSUP - Change the NN distance mode

- R. Toggle testing only known categories - Only test categories that are known about

- X. Toggle testing with only known images - Only test known images

- N. Toggle training until no new committed neurons - Keep running the training data until the network stops learning, i.e. iterate with the training data until no new neurons are committed

- G. Set Global context to 1 - The global context

- U. Super test - Train the NN with the training data, then test it straight away with the test data. A complete run - train and test together

- P. Print out user commands - See what commands you have used so far, a user command history

- E. Print previous results - See the last set of results for the NN

- D. Double number of test images - Increase the number of images to test

- L. Load the NN - Load the persisted NN from the SD card

- S. Save the NN - Persist the NN data to the SD card

Note that the menu options are case sensitive (so u is not the same as U).
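The idea behind menu option N (keep training until no new neurons are committed) can be sketched as a simple loop. This is only an illustration of the stopping rule, not the code from the repository: `runPass` is a hypothetical callback that performs one full pass over the training data and returns the number of committed neurons afterwards, and `maxPasses` is an assumed safety limit.

```cpp
#include <functional>

// Repeat full training passes until a pass leaves the committed-neuron
// count unchanged, or until maxPasses is reached. Returns the number of
// passes that were run. `runPass` is a hypothetical callback.
int trainUntilStable(const std::function<int()>& runPass, int maxPasses) {
    int previousCommitted = -1;  // no pass has run yet
    int passes = 0;
    while (passes < maxPasses) {
        const int committed = runPass();
        ++passes;
        if (committed == previousCommitted) {
            break;  // nothing new was learnt in this pass
        }
        previousCommitted = committed;
    }
    return passes;
}
```

Note that the last pass is always a "wasted" one: it is the pass that confirms nothing changed.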

First make sure your SD card looks okay and the board can read it. Note that I use the data logger board; you don't have to. There are other connections and boards you can use, the data logger though is super cool as it just bolts straight on top - no cables, no power, no ground to worry about.

So when I select option 8 (SD Card Info), I get this output:

- Check the SD

- Check SD

- SD card GOOD-wiring is correct and a card is present.

- Card type:SDHC

- Volume is FAT32

- Volume size (bytes): 3650519040

- Volume size (Kbytes): 3564960

- Volume size (Mbytes): 3481

- Files (name, date and size in bytes):

- SYSTEM~1/ 2016-10-10 18:40:32

- WPSETT~1.DAT 2016-10-10 18:40:32 12

- INDEXE~1 2016-10-10 18:40:34 76

- NEURDATA.DAT 2000-01-01 01:00:00 17276

- NEURDKNN.DAT 2000-01-01 01:00:00 1364

- TESTI.DAT 2016-10-09 10:31:46 7840016

- TESTL.DAT 2016-10-09 10:31:54 10008

- TRAINI.DAT 2016-10-09 10:32:06 47040016

- TRAINL.DAT 2016-10-09 10:32:20 60008

- Checking files

- Found the train data file.

- Found the train labels file.

- Found the test data file.

- Found the test labels file.

- The system is now set and ready to run.

The image format for each OCR character is 28x28 pixels, with a single byte for the colour of each pixel. That is too big for the 101's neuron data size, which is 128 bytes.

I decided to trim the data down from 28x28x8 bits (784 bytes) to 28x28x1 bit (784 bits, or 98 bytes). This means it fits into the data size. We convert the image to monochrome first, so a pixel is on (i.e. it has 1 for that bit) if its "colour value" > 5, or off otherwise. Obviously this is an arbitrary threshold; you can change it in the code.
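The reduction can be sketched as follows: 28 x 28 = 784 pixels, one bit each, gives 784 / 8 = 98 bytes, which fits inside the 128 byte vector. This is a minimal sketch of the thresholding and bit packing, not the repository's code. The bit order (most significant bit first) and the names are assumptions.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

constexpr std::size_t kPixels = 28 * 28;           // 784 grey-scale bytes per image
constexpr std::size_t kPackedBytes = kPixels / 8;  // 98 bytes at 1 bit per pixel
constexpr std::uint8_t kThreshold = 5;             // pixel is "on" if value > 5

using GreyImage = std::array<std::uint8_t, kPixels>;
using PackedImage = std::array<std::uint8_t, kPackedBytes>;

// Convert a grey-scale image to monochrome and pack 8 pixels into each byte.
// Assumption: the first pixel of each group goes into the most significant bit.
PackedImage packImage(const GreyImage& pixels) {
    PackedImage packed{};  // all bits start as 0 (off)
    for (std::size_t i = 0; i < kPixels; ++i) {
        if (pixels[i] > kThreshold) {
            packed[i / 8] |= static_cast<std::uint8_t>(0x80u >> (i % 8));
        }
    }
    return packed;
}
```

The remaining 30 bytes of the 128 byte vector are simply unused in this scheme.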

Results

So I trained the NN using the default settings from the repo, and these are the results of training and testing.

Training the NN

First when training in RBF mode we stop when we run out of nodes. These are the details when we have trained with LSUP mode:

Learnt image types

- NN Cat:1 Learnt:28 images. (This is the character 0)

- NN Cat:2 Learnt:33 images. (This is the character 1)

- NN Cat:3 Learnt:23 images.

- NN Cat:4 Learnt:27 images.

- NN Cat:5 Learnt:24 images.

- NN Cat:6 Learnt:17 images.

- NN Cat:7 Learnt:24 images.

- NN Cat:8 Learnt:26 images.

- NN Cat:9 Learnt:18 images.

- NN Cat:10 Learnt:25 images. (This is the character 9)

So we learn a total of 245 images before we run out of neurons to use.

Now let's try RBF mode using L1 distance mode.

- NN Cat:1 Learnt:48 images. (This is the character 0)

- NN Cat:2 Learnt:61 images. (This is the character 1)

- NN Cat:3 Learnt:48 images.

- NN Cat:4 Learnt:46 images.

- NN Cat:5 Learnt:51 images.

- NN Cat:6 Learnt:36 images.

- NN Cat:7 Learnt:42 images.

- NN Cat:8 Learnt:48 images.

- NN Cat:9 Learnt:36 images.

- NN Cat:10 Learnt:52 images. (This is the character 9)

We stopped learning when we got to 468 images. So this type of setup resulted in us using more image data.

Testing the NN

Now when I test the NN using the same data - the training data, these are the results. Note that this is the same data we used to teach the NN, so it has seen these characters before.

Using RBF mode with distance mode LSUP:

NN got:

- 229 correct out of 245 images tested

- Distance mode: LSUP

- Classification mode: RBF

The testing with training data operation took 11 seconds to perform.

Using RBF mode with distance mode L1:

NN got:

- 425 correct out of 468 images tested

- Distance mode: L1

- Classification mode: RBF

The testing with training data operation took 21 seconds to perform.

Testing the training data using KNN mode with distance mode LSUP (learnt in RBF mode):

NN got:

- 28 correct out of 245 images tested

- Distance mode: LSUP

- Classification mode: KNN

The testing with training data operation took 11 seconds to perform.

Testing the training data using KNN mode with distance mode L1 (learnt in RBF mode):

NN got:

- 48 correct out of 468 images tested

- Distance mode: L1

- Classification mode: KNN

The testing with training data operation took 22 seconds to perform.
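To compare the four runs it helps to turn the counts into percentages. The small sketch below does that with the figures copied from the results above. The struct and function names are made up for illustration.

```cpp
#include <cstdio>

// One test run; the figures are copied from the results listed above.
struct Run {
    const char* classifier;
    const char* distance;
    int correct;
    int tested;
};

const Run kRuns[] = {
    {"RBF", "LSUP", 229, 245},
    {"RBF", "L1", 425, 468},
    {"KNN", "LSUP", 28, 245},
    {"KNN", "L1", 48, 468},
};

// Percentage of images classified correctly; 0 if nothing was tested.
double accuracyPercent(const Run& run) {
    return run.tested > 0 ? 100.0 * run.correct / run.tested : 0.0;
}

// Print one summary line per run.
void printAccuracy() {
    for (const Run& run : kRuns) {
        std::printf("%s/%s: %d of %d = %.1f%%\n", run.classifier, run.distance,
                    run.correct, run.tested, accuracyPercent(run));
    }
}
```

That works out to roughly 93.5% and 90.8% for RBF (LSUP and L1), against roughly 11.4% and 10.3% for KNN. The KNN totals of 28 and 48 also happen to equal the number of category 1 images learnt in each training run. This may mean KNN mode is assigning every image to the same category, but that is only a guess from the numbers and would need checking.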

There are improvements needed in the way we make the data smaller. Trimming the image down to monochrome makes the data fit into our vector size, but it seems to lose valuable features.

Why not try out the NN with the test data? This is the data the NN has never seen before, so use menu option 4, not 3.

How can we look at the images first, rate them accordingly, then train with that data? Well, as a project for yourself, I will tell you that the way forward with this is to look for features. Why not look for horizontal lines and vertical lines, curves, etc.? Using these features, you can train the NN, and then convert the test images to the same feature set and test it.
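As one possible starting point for such features (an assumption for illustration, not something this project has tried), you could count the "on" pixels in every row and every column. A horizontal stroke shows up as a large row count and a vertical stroke as a large column count. The result is 28 + 28 = 56 bytes, which fits comfortably in the 128 byte vector. All names below are hypothetical.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

constexpr std::size_t kSide = 28;
using Image = std::array<std::uint8_t, kSide * kSide>;  // row-major grey-scale pixels
using Features = std::array<std::uint8_t, 2 * kSide>;   // 28 row counts, then 28 column counts

// Count the pixels above `threshold` in every row and every column.
// Each count is at most 28, so it fits in one byte.
Features projectionFeatures(const Image& img, std::uint8_t threshold) {
    Features features{};
    for (std::size_t row = 0; row < kSide; ++row) {
        for (std::size_t col = 0; col < kSide; ++col) {
            if (img[row * kSide + col] > threshold) {
                ++features[row];          // contributes to this row's count
                ++features[kSide + col];  // and to this column's count
            }
        }
    }
    return features;
}
```

The same function would be applied to both the training and the test images, so the NN always compares like with like.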

Experimenting

This project is just to get you started. You will notice there is a huge amount of code that does all kinds of things, ready for you to experiment with. You can change the network settings, learn your own data, save and restore the knowledge in the NN. So have fun, mess about with it and put some of your own ideas and changes below for the community.

I for one think this is an excellent way to get started with Machine Learning and a good introduction to Neural Networks and AI (fuzzy pattern recognition). The hardware NN is already set up, even if it is limited in its size and capability. I think Intel was hoping it would be used with the on-board sensors, like the gyro and accelerometer, especially in wearable tech, so maybe MNIST is a bad example to start with.

Conclusion

Have fun with the NN and come up with some novel or useful ideas for it. After all, for less than $50 you have a 128 node hardware NN with an Arduino, BLE, a gyro, etc.

Thanks for reading.

Marcus

Problems

1. If you build/compile the code and see this:

#include <CuriePME.h>

You have not installed the Intel 101 pattern matching lib files correctly. There are a few places they can be, but you are looking for the CuriePME.h file.

Go here: 101 Pattern Matching Repo

Download the code as a zip file: under clone or download on the GitHub page, click download zip.

There are instructions on installing libs for the Arduino IDE here: How to install libs in the Arduino IDE.

From the IDE, click Sketch->Include Library->Add .zip, then navigate to the zip you downloaded from the Intel 101 PM GitHub page above.

2. If you build/compile the code and see this:

#include <CurieTime.h>

You have not installed the Curie Time library. Install this as above.

Here is a full list of libraries if you are interested: Arduino IDE libs
