My mom gave a Darth Vader toy to my kids, and of course my first thought was: "wouldn't it be cool if he could hear commands and speak?". Well, that idea put me on the path to giving good ole Darth Vader ears and a mouth with the power of TinyML!

I went back and forth on which board to use, but ultimately settled on the Syntiant TinyML board. It's small, low-power, has a microphone and an accelerometer, is programmable with Arduino, and is supported by Edge Impulse. It also has a uSD card slot, so I could store the audio files and the TinyML model directly on the uSD card.

The Syntiant TinyML board had pretty much everything I needed, except I didn't know how to play audio files on it. I had previously played around with the TMRpcm library on an Arduino Uno, so I already had an LM386 amplifier module and a 3 W, 8 Ohm speaker. However, TMRpcm is not compatible with the SAMD architecture. After a bit of searching, I found the AudioZero library (specifically, a rewrite of it that works with SdFat), which is compatible with SAMD boards and, more importantly, with the Syntiant TinyML board.

Now I needed some .wav files of Darth Vader speaking. A quick Google search turned up a couple: one of him saying "What is your bidding, my master?" and one of him breathing. AudioZero has similar .wav file requirements to TMRpcm, so I followed the TMRpcm wiki's instructions for configuring the files in Audacity.

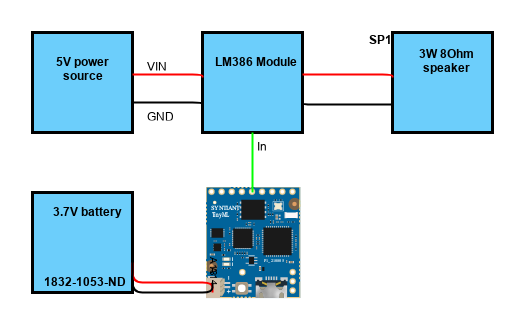
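Before copying converted files to the uSD card, it can help to confirm their headers on a PC. The code below is a minimal, hypothetical checker (`parse_wav_format` and `matches_target` are names made up for illustration, not part of AudioZero or TMRpcm). It assumes the target format is 8-bit PCM, mono, at 22050 Hz: the rate matches what the test sketch passes to `AudioZero.begin()`, while 8-bit mono is the format commonly used with TMRpcm and is an assumption here, not something verified against the AudioZero rewrite.

```cpp
#include <cstdint>
#include <cstring>
#include <optional>
#include <vector>

struct WavFormat {
  uint16_t audio_format;     // 1 = integer PCM
  uint16_t channels;
  uint32_t sample_rate;
  uint16_t bits_per_sample;
};

// WAV headers store integers little-endian.
static uint16_t read_u16(const std::vector<uint8_t>& b, size_t i) {
  return static_cast<uint16_t>(b[i] | (b[i + 1] << 8));
}
static uint32_t read_u32(const std::vector<uint8_t>& b, size_t i) {
  return static_cast<uint32_t>(b[i]) | (static_cast<uint32_t>(b[i + 1]) << 8) |
         (static_cast<uint32_t>(b[i + 2]) << 16) | (static_cast<uint32_t>(b[i + 3]) << 24);
}

// Walk the RIFF chunks and return the fields of the "fmt " chunk, if present.
std::optional<WavFormat> parse_wav_format(const std::vector<uint8_t>& b) {
  if (b.size() < 12 || std::memcmp(b.data(), "RIFF", 4) != 0 ||
      std::memcmp(b.data() + 8, "WAVE", 4) != 0) {
    return std::nullopt;  // not a RIFF/WAVE file
  }
  size_t pos = 12;
  while (pos + 8 <= b.size()) {
    uint32_t size = read_u32(b, pos + 4);
    if (std::memcmp(b.data() + pos, "fmt ", 4) == 0 && size >= 16 &&
        pos + 8 + 16 <= b.size()) {
      size_t d = pos + 8;  // start of the fmt chunk's data
      return WavFormat{read_u16(b, d), read_u16(b, d + 2), read_u32(b, d + 4),
                       read_u16(b, d + 14)};
    }
    pos += 8 + size + (size & 1);  // chunks are padded to an even length
  }
  return std::nullopt;
}

// Assumed target (hypothetical): 8-bit PCM, mono, 22050 Hz.
bool matches_target(const WavFormat& f, uint32_t rate = 22050) {
  return f.audio_format == 1 && f.channels == 1 && f.bits_per_sample == 8 &&
         f.sample_rate == rate;
}
```

In the WAV format, 8-bit PCM samples are always stored unsigned, so format code 1 with `bits_per_sample == 8` corresponds to Audacity's "Unsigned 8-bit PCM" encoding.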

I then connected the Syntiant board (via pin 5) to the LM386 amplifier module, which drives the speaker. The speaker requires 5 V at 600 mA, so I reworked a USB cable to provide the necessary power. The TinyML board can output 5 V, but only for low-current applications (i.e., < 200 mA). The circuit looks like this:

I mounted the breadboard to Darth Vader's back and routed the speaker wires to the front, where I attached the speaker.
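A quick power budget using the figures above shows why the board's own 5 V output was not enough. This treats 600 mA as the speaker circuit's draw and 200 mA as the board's output limit:

$$P_{\text{speaker}} = 5\,\text{V} \times 0.6\,\text{A} = 3\,\text{W}$$

$$P_{\text{board}} \le 5\,\text{V} \times 0.2\,\text{A} = 1\,\text{W}$$

The 3 W result lines up with the speaker's rating, and the required current is three times what the board's 5 V output is intended to supply.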

I then ran an example sketch to at least validate that the board could play .wav files and that the circuit was correct. Here is the sketch I ran:

```cpp
#include <Arduino.h>
#include "TinyML_init.h"
#include "SAMD21_init.h"
#include "SdFat.h"
#include "AudioZero.h"

extern SdFat SD;  // SdFat instance defined in another source file

File myFile;

void setup(void) {
  SAMD21_init(1);  // Setting up SAMD21: (0) will not wait for the serial port,
                   // (1) will wait and the RGB LED will be red

  if (SD.begin(SDCARD_SS_PIN)) {  // Check that an SD card is inserted
    // Play the breathing clip twice with a short pause in between
    myFile = SD.open("breathe2.wav", FILE_READ);
    AudioZero.begin(22050);  // Sample rate of the converted .wav files
    AudioZero.play(myFile);
    delay(100);
    myFile.seek(0);          // Rewind so the second play() starts from the beginning
    AudioZero.play(myFile);
    AudioZero.end();
    myFile.close();

    delay(2000);

    // Play the speech clip once
    myFile = SD.open("talk5.wav", FILE_READ);
    AudioZero.begin(22050);
    AudioZero.play(myFile);
    AudioZero.end();
    myFile.close();
  }
}

void loop() {
  // Nothing to do: the test runs once in setup()
}
```

With the AudioZero library, testing was fairly straightforward. In little time I had audio playing through the speaker from the .wav files on the Syntiant TinyML board's uSD card!
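Because `AudioZero.begin()` is given the sample rate, the length of each clip follows from the size of its audio data: bytes per second = sample rate × channels × bytes per sample. The helper below is a hypothetical sketch (not part of AudioZero) that does this arithmetic, which can be handy when choosing `delay()` values between clips. It expects the size of the WAV `data` chunk, not the whole file.

```cpp
#include <cstdint>

// Hypothetical helper: playback length of raw PCM data, in milliseconds.
// bytes_per_second = sample_rate * channels * (bits_per_sample / 8)
uint32_t clip_duration_ms(uint32_t data_bytes, uint32_t sample_rate,
                          uint16_t channels, uint16_t bits_per_sample) {
  const uint64_t bytes_per_second =
      static_cast<uint64_t>(sample_rate) * channels * (bits_per_sample / 8);
  if (bytes_per_second == 0) return 0;  // guard against a malformed format
  return static_cast<uint32_t>(static_cast<uint64_t>(data_bytes) * 1000 /
                               bytes_per_second);
}
```

For 8-bit mono audio at 22050 Hz, one second of sound is 22050 bytes, so a 44100-byte `data` chunk plays for about 2 seconds.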

The next step was setting up my keyword-spotting TinyML model so Lord Vader could recognize my commands. For this I turned to my go-to tool, Edge Impulse. Since I was working with the Syntiant TinyML board, I followed Edge Impulse's detailed tutorial on using their tools and parameters to create a model.bin file. I recorded data for three categories: "speak", "breathe", and "z_openset", collecting about 2.5 minutes of each with a window of about 1 second. Using the tutorial's default parameters, I got excellent results:

The link to my public project can be found here.
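Once the model is running, each result still has to be turned into an action. The code below is a host-side sketch of one way to do that: pick the highest-scoring class, ignore it if it falls below a confidence threshold, and map `speak` and `breathe` to the two clips from the test sketch while `z_openset` does nothing. The class order, the 0.8 threshold, the `clip_for` name, and the label-to-file mapping are all hypothetical choices for illustration, not values taken from the project or from the Edge Impulse or Syntiant APIs.

```cpp
#include <array>
#include <cstddef>
#include <string>

// Assumed class order; confirm it against the labels your model actually reports.
const std::array<const char*, 3> kLabels = {"breathe", "speak", "z_openset"};

// Hypothetical helper: return the clip to play, or "" to stay silent.
std::string clip_for(const std::array<float, 3>& scores, float threshold = 0.8f) {
  std::size_t best = 0;
  for (std::size_t i = 1; i < scores.size(); ++i) {
    if (scores[i] > scores[best]) best = i;  // argmax over class scores
  }
  if (scores[best] < threshold) return "";   // not confident enough to act
  const std::string label = kLabels[best];
  if (label == "speak") return "talk5.wav";      // assumed mapping: speech clip
  if (label == "breathe") return "breathe2.wav";  // assumed mapping: breathing clip
  return "";  // z_openset: background or other words, so do nothing
}
```

Keeping the open-set class as an explicit "do nothing" outcome means background chatter is treated the same as a low-confidence result.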

I then deployed the model as a firmware build, and this is where I ran into some issues. I figured I would just tailor the "on_classification_changed" method in the firmware source and add the AudioZero code from my standalone sketch. However, when that function was triggered, classification worked but the board would hang as soon as it tried to play the audio. I reached out on both the Syntiant and Edge Impulse forums. After digging further into the code, I found that the AudioZero library and the Edge Impulse Syntiant firmware had conflicting uses of the same interrupt registers.

This is where Atul from Syntiant REALLY helped me out. We had a brief chat, and he sent me some simplified Syntiant code that just runs inference with a model loaded from the SD card. With that simplified code, the interrupt conflicts went away, and I had a working end-to-end listening and talking Supreme Commander of the Imperial Army!

So far, the kids love speaking to him. Even though the model was trained on just my voice, it does a pretty good job of picking up my kids' voices as well. This was a fun project. Syntiant and Edge Impulse make it really easy to build an end-to-end solution that is highly accurate. I can't thank Atul enough for going above and beyond and providing me the sample code that made this solution possible.
