Initial Interviews

Older male cafegoer at Berkeley Espresso

This person said that the last time he wanted to use his smartphone was five minutes before the interview. In short, he wanted to make a call and did not have his phone. However, the long story is more interesting than the summary suggests. The cafegoer was reading the paper at the start of the interview, and was probably doing so five minutes before as well. He noticed a large crowd of people dressed in blue and gold entering the cafe, and suddenly he wanted to call his friend to ask if the game was over. When he reached into his pocket, the phone was not there. He explained that he had forgotten his phone because he left it charging when he left the house. Normally, he leaves the phone in a conspicuous location so he doesn’t forget it. He was shocked when he reached into his pocket and didn’t feel his phone.

When asked to reimagine making his call with a smartwatch, he imagined a completely voice-activated device. He even mentioned that he wouldn’t want any sort of touch interface. The interviewee noted that he is concerned about privacy, perhaps in part because of his generation. Rather than having to hold the watch up to his mouth like a secret service agent, he envisioned an earpiece so that all he would have to do to initiate a call would be to utter something like “call Phil” or “call 123-4567.” His greatest concern was being inconspicuous, not so much because he wanted to keep his conversations private, but more because he doesn’t want to bother others around him. He is willing to step out to make calls, but hopes that the smartwatch interface could remove the need.

Finally, and most interestingly, given that the device would have an earpiece, he wondered why the “watch” would need to be on the wrist at all. He mentioned that because most of the control would be through a voice interface, the device could go on other parts of the body, such as entirely on the ear, or in a wallet (someday).

Younger male grassroots fundraiser near Shattuck and Vine

When initially asked about difficulty accessing his smartphone, this interviewee spoke of issues with using the GPS while driving or with loading data over a poor network. When asked to clarify the latter, he began to talk about his smartphone usage while out in the field. His organization prefers that its fundraisers not have their phones out; however, the rule is not strict, and certain uses of the phone are de facto permissible.

He mentioned that once, he got a person to stop and listen to his spiel (he is advocating for an organization for LGBT marriage rights). At the end of his talk, the person asked to see the organization’s website, which the interviewee remarked is often a sign that the person is not comfortable donating money. In this instance, however, the interviewee felt driven to “call their bluff,” so to speak, and pull up the organization’s website on his smartphone. The website would not load, and the interviewee plummeted from feeling relieved to have his phone on him to feeling devastated.

Asked to reimagine the situation on a watch, he imagined a watch device that was tailored to his job. The watch would actively “listen” to whatever a client might be saying. For example, if the client mentioned some query to the effect of “see website,” the watch would automatically pull up the website, rather than the interviewee having to manually press a touch screen to do so. (Note that the interviewee did not mention the network issue again, so perhaps a way around it would need to be integrated into the automatic interface.) When asked why it was so important not to have to use a touch screen, he mentioned that body language was incredibly important in convincing somebody to donate. With a wrist-mounted device that operated on a voice trigger, he would still be able to talk with his hands and convey his enthusiasm while the client glanced at the website. Finally, he mentioned that there would somehow need to be a display wide enough for the client to view the website.

Younger female ice cream store worker in Epicurious Garden

This interview was the most difficult to conduct, but was perhaps the most insightful because the interviewee was in general not acquainted with the idea of smartwatch technology being used in a work scenario. When I asked her about a situation where she did not have her smartphone available but needed to use it, I was expecting to hear about a situation where she was working. However, like both other interviewees, she first mentioned how her phone’s battery lets her down and how calls sometimes do not work.

Asked to clarify about making calls, she began to speak of a time when the gate to the restaurant area was closed and needed to be opened, and she had to call her manager to open it. The phone was in her pocket at the time, although sometimes she keeps it in her backpack. Asked whether keeping her phone in her backpack makes it even harder for her to use it, to my surprise, she responded no. She mentioned that most of the time, her job wasn’t so busy that it prevented her from walking to her backpack and picking up her phone. This is important because it suggests that users could be selective about when they would want to have devices on them. Regardless, continuing with the story, when she tried to call her manager, the call did not even reach a dial tone before failing. She had obtained the number from a paper list (likely the issue), and in the end she relied on a co-worker to dial the manager. She felt inadequate.

It was difficult to ask the interviewee to imagine the situation with a smart device. Perhaps owing to the question’s vagueness, she mentioned how she would have a smartwatch capable of secret agent-like ability, including flipping buildings and master key capability. While I was initially dismissive of her responses, she mentioned that it would be strange to her to both be at her job and have a smartwatch, which she viewed as something extraordinary and not something quotidian. When prompted to imagine a non-superpower watch to deal with the gate situation, she envisioned still not calling her manager at all. Rather, her watch would come equipped with a key to unlock the gate. She imagined the key as a tiny physical device, as opposed to an electronic system like card keys. When asked why she herself didn’t have a key yet, she remarked that she was a newer worker and there was a delay in getting the key to her. Her last remark was that “if a watch does more than tell time and have alarms, it’s a secret agent watch.”

Commonalities

All interviewees spoke about the shortcomings of their phone’s functionality before any situational difficulty. Only after probing would they talk about how an environmental constraint (e.g. having no free hands) caused them trouble, as opposed to an inherent issue with their phone (e.g. the internet was too slow). This is significant because it suggests that a smartwatch, in addition to solving issues with use narratives, should also attempt to address or bypass issues that affect smartphones’ functionality (e.g. by using only bookmarks and caching them rather than relying on a network connection).

While the three interviewees come from different backgrounds, one common theme in how they addressed technology was speech and the social implications surrounding speaking. For the cafegoer, speech carried an element of privacy and felt personal, not something to be broadcast to others. The fundraiser depended not just on his speech, but on keeping his language engaging and up-to-date. The ice cream store worker noted that she made calls and spoke because her job expected it of her; she probably would not feel the need to speak to a watch.

I would like to explore this area of speaking and communicating with contextual awareness of one’s environment. Hopefully the watch can afford some novel forms of interaction if it can take advantage of the way its wearer is interacting with the world.

Brainstorm

Gesture-based IFTTT that changes the watch’s appearance based on body language. If the wearer is gesticulating wildly, the watch’s screen and body will yield expression in tandem.

IFTTT will listen after a missed call for details about whom else the watch could call as a replacement

Redesign clock app to be more invasive. The watch will only tell time and do nothing else when user “shouldn’t” be distracted by watch

Based on where you put the watch, calling procedure will be different (e.g. if watch is on wrist, very conspicuous protocol for making calls, such as calling out whom you want to call. If watch in pocket, you will have to slyly make phone calls)

Body language rather than gestures. Gestures used to control an interface, but the interface follows body language, rather than being directed by it. Example, program IFTTT to recognize “hot out.” Based on weather data, and whether the user is wiping sweat off their forehead or fanning themselves, the watch will display hydration info.

Similar vein: IFTTT for fundraisers, with voice interface. If watch hears “I want to see the website,” watch will pull up website. User pre-programs “want to see website.”

IFTTT might recognize sadness… If I am “sad” for the day, if I motion as if I’m about to cry, or if I’m frustrated, it will be motivating to me. Maybe call my friend or something.

Contacts app: on meeting somebody, will add them to contacts… Or do something.

Native networking platform: on network failure, pulls up a cached version of the page (using a large cache… or try to “scrapbook” it on whatever data the watch can scrounge for just for kicks)

Speech enhancer! When you are speaking and trying to convince somebody, the watch will talk and back you up. Sort of like a fact checker… If I make a claim in my argument, the watch will pull relevant sources and read a short blurb aloud. This could be redesigning the Wikipedia app….

Facial recognition Snapchat. When you make a strange face, the snapchat will snap it with AI-fueled caption. Caption example, given “angry” body language and current weather conditions, snaps my face in an argument and captions: “Jasper not pleased. 52 degrees Berkeley.” Point: humorous. Homage to Markov chain Facebook status generator. Actually, doesn’t have to snap your face--can just snap something random.

Redesign the native battery platform. Rather than just indicating battery level, predict places the user might be when the battery will die as incentive. Present places to charge.

Ring/draw attention if the user leaves the device behind by accident while it is on the charger.

Redesign the native settings/privacy interface to include just a few physical keys within the watch’s body itself.

Redesign the native call interface to use GPS or crowdsourced data to point to an alternative phone in the event that the phone fails to make a call

Favorite Idea

I am going to redesign IFTTT (If This Then That) to follow body language because it takes advantage of the smartwatch’s ability to be “led” as a device, rather than the user shaping their motions around it. This will be used in the context of speaking and persuasion.

More about IFTTT: https://ifttt.com/wtf

Prototype

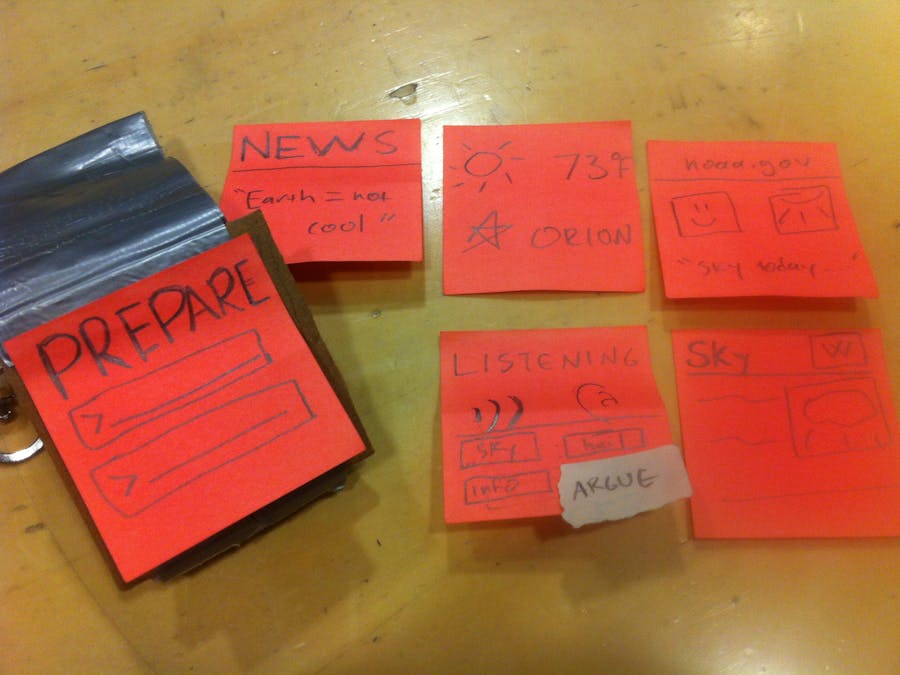

The prototype is constructed of a cardboard bezel and a duct tape strap held around the user’s wrist with a clip. Post-it notes simulate the screen, and can be added and removed to simulate screen transitions.

The app being redesigned is IFTTT, which is a platform that allows users to add functionality to their phone by creating recipes. Recipes are the fundamental unit of IFTTT, and consist of a trigger (e.g. posting a photo to Instagram) and an action (e.g. add said photo to my Dropbox): if <trigger> then <action>. One selects triggers from “channels,” which might be the Facebook app, the native Android call app, or the device itself. IFTTT has so far been largely confined to mapping one app’s action to another’s. I would like to explore the possibility of adding another channel: physicality and speech. Thus, body language and words would be triggers to actions on the phone. For example, repeated use of the word “why” and shaking one’s arms could be a trigger to an action to pull up snippets from informational articles on the watch.

Ideally, the user would hard-code some recipes, although the watch would learn and add, modify, and delete recipes over time. The idea is to make the watch follow the user, not the other way around.
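As a rough illustration of what a hard-coded recipe on the proposed physicality-and-speech channel might look like, here is a minimal Python sketch. It is not IFTTT’s actual API: the `Observation` and `Recipe` types, the gesture label `"arm_shake"`, and the threshold of two “why”s are all assumptions made up for this example, and real speech and gesture recognition is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    """One window of sensed context (hypothetical structure)."""
    words: List[str]     # words heard during the window
    gestures: List[str]  # gesture labels, e.g. "arm_shake" (assumed label)


@dataclass
class Recipe:
    """if <trigger> then <action>, with the trigger reading speech and body language."""
    name: str
    trigger: Callable[[Observation], bool]
    action: Callable[[], str]


def confused_speaker(obs: Observation) -> bool:
    # Assumed rule: "why" heard at least twice while the arms are shaking.
    return obs.words.count("why") >= 2 and "arm_shake" in obs.gestures


def show_snippets() -> str:
    # Stand-in for pulling up snippets from informational articles.
    return "show article snippets"


def run_recipes(recipes: List[Recipe], obs: Observation) -> List[str]:
    """Fire the action of every recipe whose trigger matches the observation."""
    return [r.action() for r in recipes if r.trigger(obs)]


recipes = [Recipe("why-and-shake", confused_speaker, show_snippets)]
obs = Observation(words="why is the sky blue but why".split(),
                  gestures=["arm_shake"])
print(run_recipes(recipes, obs))
```

Keeping triggers as plain functions over an observation means a learned recipe and a hand-written one would look the same to the rest of the system, which fits the idea of the watch adding and modifying recipes over time.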

User Evaluation

The goal I tested was for the user to give a speech on the sky and the weather to an audience. The (one-person) audience could give feedback, debate, and request info. The watch interface should support the user in catering to her audience’s demands, in providing visual aid, and in enhancing her body language.

Immediately upon testing the prototype on my friend Husna, I noticed something critical: she needed to think about programming the watch to align with her speech. She constantly structured her speech around ensuring that the watch was “up-to-date” with her speaking. Thus, the interface failed to be passive. In fact, it served as quite the opposite: an active element shaping the wearer’s language. The result was a sort of dressed-up PowerPoint, which is not at all what I had in mind.

Specifically, the “Prepare” screen, where the user might type IFTTT recipes, was completely foreign to the user. Its text boxes, meant for user-defined input about the upcoming speech, were intended to let the user customize what content might appear in response to the user’s body language or implicit verbal triggers. For example, if I input “sky,” then the watch may be more prone to pulling up the weather screen when talking about heat and cold. Instead, the user completely bypassed the Prepare screen. This highlights a possible discontinuity in the purpose of the app. The app should seamlessly complement the speaker’s case, but it is already demanding input and management from the user.

Next, it turns out that while I had assumed that pulling up pages to support the user’s case would receive heavy use, it received only marginal use. This does make sense, though, as the user does not need to be reminded about facts that they already discuss in their argument. Plus, using facts as a visual aid tends to be limited to less common circumstances (such as soliciting donations for a specific organization). I do not yet wish to scrap this idea, however. I would like to come up with a way to make the watch serve as a factual visual aid; what remains is to work out what would be most relevant to the user.

Tying in with the above, Husna made use of the info pages brought up by the watch when she needed to clarify parts of her argument, or to convince somebody of her point. I designed four info screens, which I thought may have been too few. However, it turned out to be informational overload, with Husna struggling to incorporate all of the information presented into her argument. This could be resolved by making only some actions conspicuous. For example, the watch might make a noise and show a Google Search result when triggered by neither discussant knowing the answer to a question, but only change quietly to the weather screen when weather is being discussed. Essentially, the interface demands stratification for the actions.
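One way to picture this stratification is to tag each action with how much attention it is allowed to demand. The sketch below is only an illustration under assumptions: the two levels, the trigger names `"unanswered_question"` and `"weather_discussed"`, and the lookup table are invented here to mirror the two examples above, not taken from the prototype.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Prominence(Enum):
    QUIET = "quiet"              # silent screen change only
    CONSPICUOUS = "conspicuous"  # sound plus screen change


@dataclass
class Action:
    description: str
    prominence: Prominence


# Hypothetical mapping from a detected trigger to a stratified action.
ACTIONS = {
    "unanswered_question": Action(
        "play a sound and show a search result", Prominence.CONSPICUOUS),
    "weather_discussed": Action(
        "switch to the weather screen", Prominence.QUIET),
}


def respond(trigger: str) -> Optional[Action]:
    """Return the action for a trigger, or None so the watch stays passive."""
    return ACTIONS.get(trigger)


print(respond("weather_discussed").prominence.value)
```

Returning `None` for unknown triggers keeps the default behavior passive, so the watch interrupts only when a recipe explicitly asks it to.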

Overall, the most important step in producing the next iteration is identifying the role that the watch should play in a conversation. Rather than just assuming that a flood of pertinent information will be useful to the wearer, I need to examine more closely which parts of a conversation the device can meaningfully augment. I am especially interested in quirky responses from a watch, so that the user treats the watch as a sort of pet as opposed to a dictionary. This is just another idea in establishing the niche that a piece of cold metal should occupy in a lively and uncertain human conversation.
