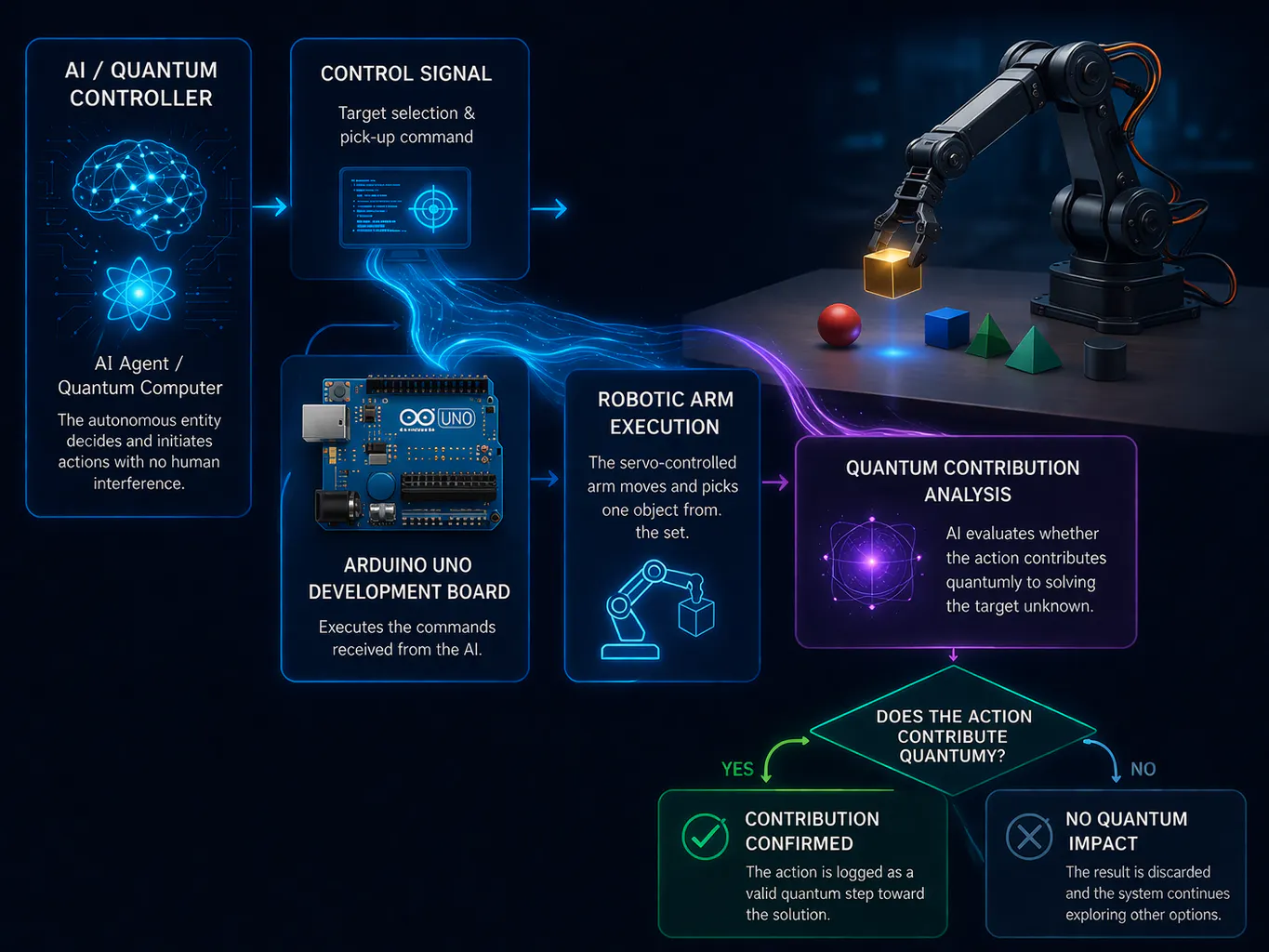

This project introduces a novel experimental architecture in which an AI or quantum backend directly controls a robotic arm through an Arduino UNO, enabling autonomous interaction with a set of physical objects.

The system operates without human intervention. The AI selects an object and sends commands via an API, and the Arduino executes the movement using servo motors. The robotic arm performs a physical action (pick or place), which the AI then evaluates to determine whether it contributes to reducing uncertainty in a quantum-inspired problem space.

Each interaction is treated as a probabilistic experiment. The AI continuously refines its strategy using feedback from previous actions, forming a closed-loop autonomous learning system.

The core idea is to bridge macro-scale physical interaction with quantum-level decision logic, creating a hybrid experimental platform for studying causality, entropy reduction, and emergent intelligent behavior.
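The closed loop described above (select an object, act, evaluate, update the strategy) can be sketched in simulation. This is a minimal illustration and not the project's actual code. It assumes that "uncertainty" is modeled as the Shannon entropy of a belief over which object is the target, and that each pick/place yields a noisy yes/no observation. The names `SimulatedArm`, `update`, and `run_loop` are hypothetical, and the arm is simulated in software, so no Arduino or API call is involved.

```python
import math
import random


def entropy(belief):
    """Shannon entropy (in bits) of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in belief if p > 0)


class SimulatedArm:
    """Hypothetical stand-in for the Arduino-driven arm (no hardware)."""

    def __init__(self, target, reliability=0.9, seed=0):
        self.target = target            # index of the object being searched for
        self.reliability = reliability  # probability an observation is correct
        self.rng = random.Random(seed)

    def pick_and_place(self, obj):
        """Act on one object and return a noisy yes/no observation."""
        truth = obj == self.target
        return truth if self.rng.random() < self.reliability else not truth


def update(belief, obj, observed, reliability):
    """Bayes update of the belief that each object is the target."""
    posterior = []
    for i, p in enumerate(belief):
        predicted = i == obj  # what a perfect sensor would report if i were the target
        likelihood = reliability if predicted == observed else 1 - reliability
        posterior.append(p * likelihood)
    total = sum(posterior)
    return [p / total for p in posterior]


def run_loop(n_objects=4, steps=20, reliability=0.9, seed=0):
    """Run the select -> act -> evaluate -> update cycle; return belief and entropy history."""
    arm = SimulatedArm(target=2, reliability=reliability, seed=seed)
    belief = [1 / n_objects] * n_objects
    history = [entropy(belief)]
    for _ in range(steps):
        # Select: act on the object currently believed most likely to be the target.
        obj = max(range(n_objects), key=lambda i: belief[i])
        # Act and evaluate: one probabilistic experiment.
        observed = arm.pick_and_place(obj)
        # Update: refine the belief using the feedback.
        belief = update(belief, obj, observed, reliability)
        history.append(entropy(belief))
    return belief, history


if __name__ == "__main__":
    final_belief, entropy_history = run_loop()
    print("final belief:", [round(p, 3) for p in final_belief])
    print("entropy (bits), first and last:", entropy_history[0], entropy_history[-1])
```

With four objects the initial entropy is 2 bits. In the noise-free case (`reliability=1.0`) the belief collapses onto the target after three actions and the entropy reaches zero. On real hardware, `pick_and_place` would instead send a command to the Arduino and read back a sensor result; the wire format for that exchange is not specified in this write-up.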

Macro-Quantum Interaction Node (MQIN) Module

MQIN Module: Interface unit for the analysis and stabilization of causal entropy in macro-quantum systems with localized resonance.



![evive Robotic Arm Kit [Add-on]](https://hackster.imgix.net/uploads/attachments/588967/evive_robotic_arm_1920x1280_kbfZsRxEoe.jpg?auto=compress%2Cformat&w=48&h=48&fit=fill&bg=ffffff)
