Growing food has always been an interest to me. I have always been perplexed how we have so much “advanced” technology yet people in the US are still going hungry every day. I am convinced that the future of farming in the US depends on technology. For my contribution, I plan to understand mushroom optimal mushroom growing conditions in an attempt to automate mushroom farming.

For a more detailed "story" of how the project progressed, please check out my blog post on Medium. I've also included some of the technical roadblocks I had along the way.

The Business Case for HeliumMost mushroom growing is located in a controlled, indoor environment that should certainly have access to WiFi. This is means that the Helium IoT platform is adding an unnecessary layer of complexity. However, the Helium IoT platform accomplished a few things:

1) Certificate renewal when communicating with Cloud Providers. With Google IoT Core, devices need to recreate JWT tokens when communicating via MQTT. All of this management is removed which gives the developer more time to develop the product.

2) Incentivized Network. While most mass produced mushroom growing happens in a controlled environment, there are many mushrooms that are difficult to cultivate outside of nature (True Morel, Truffle, Chanterelle). If we want want to understand how quickly these mushrooms can grow (especially using the same techniques as above), we will need to expand our network to forests, parks, and the like. By adding Helium early in the project, we can build a rock solid architecture that isn't likely to change later on in the project.

The Big PictureData about our mushrooms will flow from our IoT device to a public cloud where it can be stored and analyzed. We can use picture data to track the growth of the mushrooms. Among other things, tracking the physical grow of the mushrooms can be used to find the best growing medium. We can use temperature and humidity to track the optimal growing environment. Ideally, we could use other automation to control the environment, though that is not an immediate need of this project.

With mushroom-growers able to experiment with different growing environments, we can grow the highest quality mushroom with the least operational overhead.

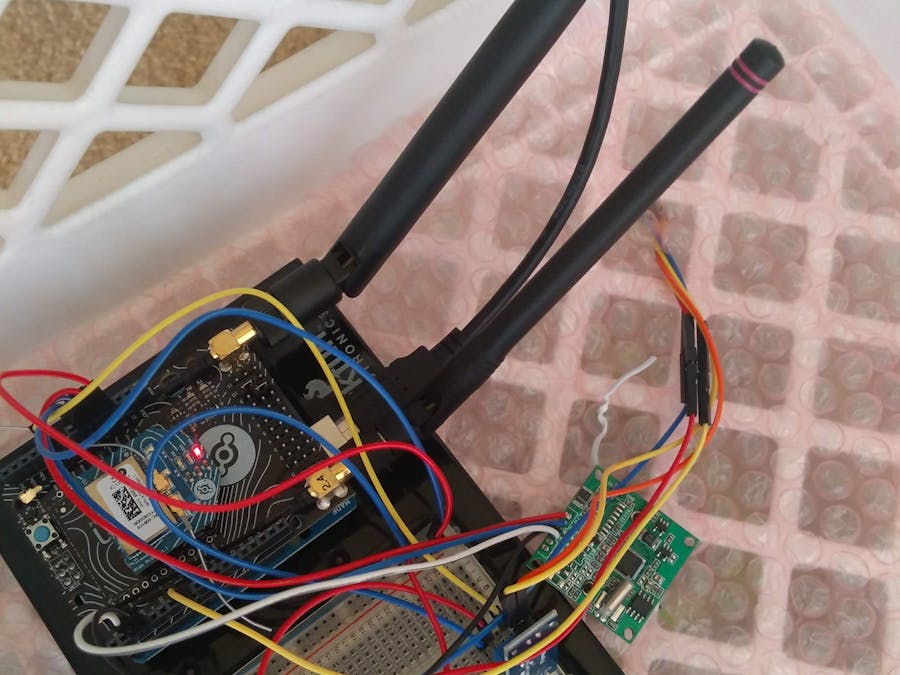

ArchitectureAfter setting up the Arduino and Helium Atom, the Arduino will begin uploading data to the Helium Network. If you set up the Google Cloud Integration, you'll receive the data on a Google Pub/Sub Topic. Data sent to this Pub/Sub topic trigger a Google Cloud Function to persist the data.

There are two processes that are trigger periodically: Device Reconfiguration and Image Assembly.

Device configuration is used to instruct the Arduino when to upload data. The Arduino is given a window of time where it may upload a collection of data. Typically, this is once every hour.

Because the Arduino can only send fragments of an image, a process must exist to reconstruct the image. This reconstruction process takes fragments out of a Google Datastore, concatenates them, and uploads the final image to Google Storage Bucket.

After the images have been uploaded to a Google Storage Bucket, the model training process begins. This involves manually creating bounding boxes around the mushrooms so that an image segmentation algorithm can later do this process for us. We can use a hosted labeling service so we have the options of using APIs in the future. After labeling our images, we can train our segmentation algorithm to recognize individual mushrooms. After our model is trained, we can deploy it using Google Cloud ML Engine. Following deployment, we can take assembled images and segment one image of many mushroom into many bounding boxes. These boxes and uploaded to a datastore and can be reported over to track the growth of an individual mushroom.

You should be able to use this guide to register your Atom and Element. You'll need to use the MAC addresses which are located on stickers. I had a MAC address that wrapped around to two lines which wasn't all that intuitive.

Before installing the MushroomBot Arduino program, you should read how Configuration works. You can use this example to explore how to change Configuration variables with your respective Cloud Provider. Despite the abstraction of message passing with Channels, there are differences in how your application code references configuration (Cloud Channels versus non-Cloud-Channel).

Setting Up the ArduinoAfter you are comfortable with Helium's data flow, you can run the code located on GitHub. You can use the default Arduino IDE but you'll need to install the "Helium" Library using this guide.

I have purchased the soil sensor and the DHT11 device on Ebay. While there might be cheaper options available, I bought the camera on Adafruit mainly for the well-documented client library. Sparkfun didn't have a similar sensor with an Arduino Library at the same price point.

Setting Up Google Cloud IoTBy this point, you've likely logged into Google Cloud and created a service account for Helium to use. You'll need to do a bit more work to make sure that data being sent is persisted.

First, create another service account with the following permissions.

After creating this service account and downloading the JSON credential file, you'll want to download the corresponding Google Cloud Functions and Google App Engine configuration from this Github Repository.

You'll want to follow the directions in the README but you'll likely have to rename your JSON credential file to service-account.json and place it in the root directory of the project. You'll also need to create a configuration file (configuration.json) that includes information about your device and Pub/Sub Queues.

You'll want to inspect the javascript files for constants and adjust accordingly.

When all is ready, you can deploy the whole application. All of the relevant scripts are located in the package.json and can be run with npm run <script-key>.

# deploy the Datastore

npm run production_index

# deploy the App Engine Instance

npm run production_gae

npm run production_cron

# deploy the Functions

npm run production_upload

npm run production_assemble

After deploying the application, you can trigger any of the workflows using the UI or the CLI.

To test either function, upload a message to the Pub/Sub queue. Please read through the source code and test cases to understand the message format for either functions

To run the Cron functions outside of their usual frequency, navigate to the App Engine Interface and select "Task Queues > Cron Jobs > Run Now."

If you have done everything correctly, you should see activity in your upload function.

You can also see photos in your bucket.

At this point, you are ready to grow your mushrooms! This is very exciting part as these things grow *fast*.

If you are looking for a mushroom growing kit, you can try the Back to the Roots kit. If you are looking to sell you mushrooms at your local farmer's market, you'll likely want to see what your local health department will allow you sell. Here is an excerpt from a brochure from the Illinois Department of Health:

Cultivated mushrooms, or commercially raised mushrooms (i.e., common button mushroom, portabella, shiitake, enoki, bavarian) must have documentation detailing their source.“Wild-type" mushroom species picked in the wild shall not be offered forsale or distribution.

As for growing tips, consult the guides offered from your source. You'll likely want to keep the mushrooms away from sunlight and want to keep the environment as humid/moist as possible. Note that you can usually grow multiple harvests from the same kit. I bought 3 kits at the start of my project because I figured I would mess up the first grow. When I got the second pack, I found the following surprise:

This is completely normal as the fungi is just trying to survive. As I stated earlier, this kit can be used several times so don't worry if something like this happens to you.

Setting Up DataturksThere are several options to create bounding boxes that our TensorFlow model will use. Labelimg is a great place to start if you don't want to pay for a hosted product. After Googling, I found a few two hosted solutions for uploading and labeling data: Dataturks and Labelbox. Hive offers a similar product but it looks like their product is for paying people to labeling your data. Given that Labelbox's tutorial had you outline images instead of using a surrounding rectangle, I decided to use Dataturks.

When you are ready to upload your photos to Dataturks, you can download this repository. I have included a Makefile that contains all relevant commands. You can use the following command to generate a list of publish URLs for your mushroom images:

make generate_dataturks_upload

You can use this file to upload your photos to a Dataturks project.

After uploading the photos, label them inside of the Web UI.

When you are all done labelling the images, you'll need to download the metadata-file associated with the images that you've labeled.

Save this file to dataturks/mushroombot.json or update the DATATURKS_JSON_FILE variable in the Makefile.

If you end up reading the blog article, you'll find out that I ended up using the model from the tensorflow/models repository. If I understand correctly, this model is based on Faster R-CNN. I don't have much background in object detection algorithms so feel free to bring your own.

After you have labeled all of you data in Dataturks, you are ready to train the object detection model locally. First, Next, you can build the Docker container that will be used to run the model locally.

m

Now generate PASCAL VOC formatted XML files and then convert the VOC files into a tfrecord example.

make generate_voc_from_dataturks

make generate_tfrecord_from_voc

You are now ready to train the model. You can edit the util/mushroombot.config file to update the learning rate and training steps.

make object_detection

make detect_image

All that is left is exporting the model to a TensorFlow SavedModel so it can be uploaded to Google Cloud ML Engine

make export_inference_graph

make upload_model_to_bucket

Finally, create the hosted model and test with some data

make create_model

make create_model_version

make create_gcp_json_input

make gcp_detect_image

# bounding boxes

[[0.24290436506271362, 0.34011751413345337, 0.3496599793434143, 0.4212002158164978], [0.3253544569015503, 0.20213723182678223, 0.4312695860862732, 0.2808675467967987], ..., [0.0, 0.0, 0.0, 0.0]]

# classes

[1.0, 1.0, ... , 1.0]

# probabilities

[0.5683782696723938, 0.5487096309661865, ..., 0.0]

# total predictions

100.0

After a picture is uploaded to a bucket, it will trigger a Google Cloud Function to analyze the image for mushrooms. We can record these observations and save them over time. This should give us a datastore to build reporting over. We will be using the helium-lft-gcf repo from before.

All that is left is to create our detection function and test uploading a photo! Deploy the detection function with the following command.

npm run production_detect

Then upload an image to your bucket and watch the function upload data about the mushrooms present in the picture.

I haven't figured out how to "localize" the mushrooms in the image. This would allow tracking the growth of a mushroom between pictures as well as compensate for movement in the camera between pictures (like rotations or adjustments).

Final SummaryOverall, I had a blast with this project. I would say I have done enough work to prove out the concept and most of the additional work isn't necessarily building on both Helium and Google Cloud. While I wasn't able to spend quite as much time outside of work on this, I think the end result is pretty neat. Please let me know here or on Twitter what you think of the project! Cheers!

_ztBMuBhMHo.jpg?auto=compress%2Cformat&w=48&h=48&fit=fill&bg=ffffff)

_3u05Tpwasz.png?auto=compress%2Cformat&w=40&h=40&fit=fillmax&bg=fff&dpr=2)

Comments