"Any Sufficiently Advanced Technology is Indistinguishable from Magic" - Arthur C. Clarke

A few months back my brother visited Japan and had a real wizarding experience at the Wizarding World of Harry Potter in Universal Studios, made possible through the technology of computer vision.

At the Wizarding World of Harry Potter in Universal Studios, tourists can perform "real magic" at certain locations (where a motion capture system is installed) using specially made wands with retro-reflective beads at the tip. The wands, which look exactly like the ones shown in the Harry Potter movies, can be bought from a real Ollivander's shop, but do remember: "It is the wand that chooses the wizard" :P

At those locations, if a person performs a particular gesture with the wand, the motion capture system recognizes it. Each gesture corresponds to a spell, which triggers some activity in the surrounding area, like turning on a fountain.

So, in this project I will show how you can create a cheap and effective motion capture system at home to perform "real magic" by opening a box with the flick of your wand :D All it takes is a normal night vision camera, a Raspberry Pi, some electronics, and some Python code using the OpenCV computer vision library and machine learning!

THE BASIC IDEA:

The wands bought from the Wizarding World of Harry Potter in Universal Studios have a retroreflective bead at their tip. These beads reflect a great amount of the infrared light given out by the camera in the motion capture system. So, what we humans perceive as a not-so-distinctive wand tip moving in the air, the motion capture system perceives as a bright blob which can be easily isolated in the video stream and tracked to recognize the pattern drawn by the person and execute the required action. All this processing takes place in real time and makes use of computer vision and machine learning.

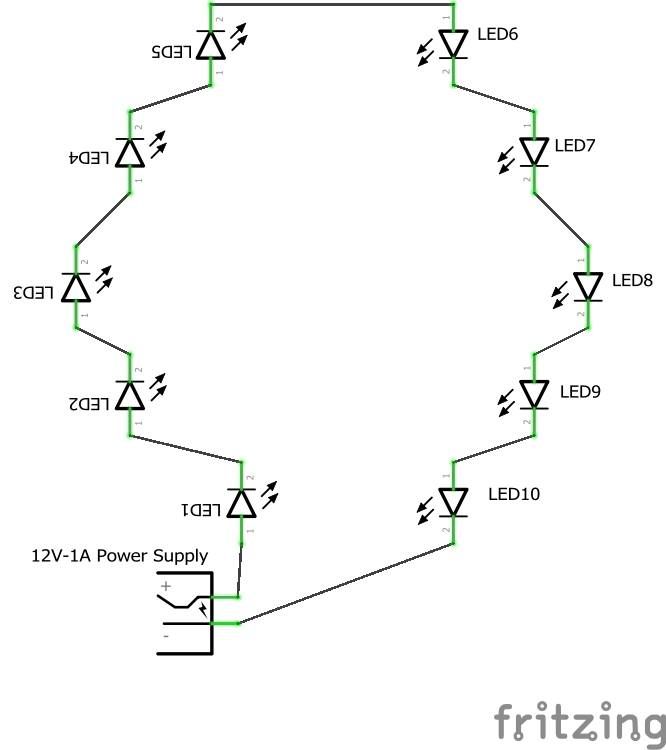
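To make the "bright blob" idea concrete, here is a minimal sketch of isolating the wand tip in a single grayscale frame. It uses plain NumPy as a stand-in for the OpenCV calls in the real program, and the function name `find_wand_tip` and the threshold of 200 are my assumptions for illustration, not values from the project.

```python
import numpy as np

def find_wand_tip(gray_frame, threshold=200):
    """Return the (x, y) centroid of all pixels at or above `threshold`, or None."""
    mask = gray_frame >= threshold          # True where the frame is very bright
    ys, xs = np.nonzero(mask)               # row and column indices of bright pixels
    if xs.size == 0:
        return None                         # no wand tip visible in this frame
    return float(xs.mean()), float(ys.mean())

# Fake night-vision frame: all dark except a 5 x 5 bright patch (the wand tip).
frame = np.zeros((120, 160), dtype=np.uint8)
frame[40:45, 100:105] = 255
tip = find_wand_tip(frame)
```

Taking the centroid of every bright pixel only works while the wand tip is the single bright object in view; a real scene with other reflections would need proper blob detection.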

A simple night vision camera can be used for motion capture, as it also blasts out infrared light, which is not visible to humans but can be clearly seen by a camera that has no infrared filter. The video stream from the camera is fed into a Raspberry Pi running a Python program that uses OpenCV to detect, isolate and track the wand tip. Then we use the SVM (Support Vector Machine) machine learning algorithm to recognize the pattern drawn and control the GPIOs of the Raspberry Pi accordingly. In this case, the GPIOs control a servo motor which opens or closes a Harry Potter themed box according to the letter drawn by the person with the wand.

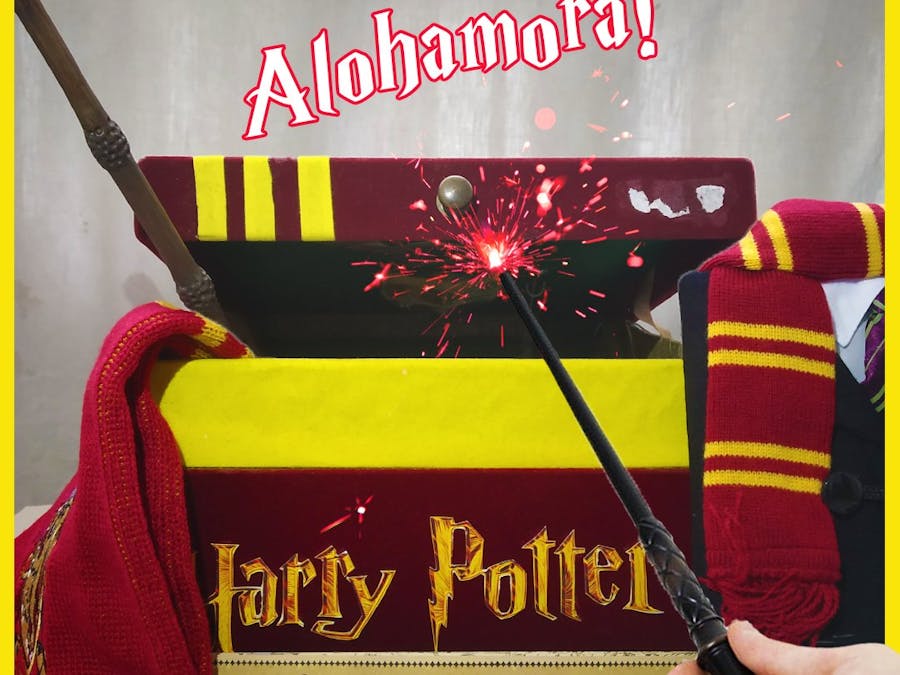

To make the Harry Potter themed box, I just took an old box, printed out some colored images (the Harry Potter logo, the Hogwarts crest, the crests of the four houses etc.) on glossy A4 sheets and then pasted them on the box at various places.

NOTE: Don't worry if you don't have the wand from the Wizarding World at Universal Studios. Anything with a retroreflector can be used. So, you can use any wand-like stick and apply retroreflector tape, paint or beads at the tip and it should work as shown in William Osman's video:

Training the SVM Classifier Using Scikit-Learn:

To recognize the letter drawn by a person, I trained a machine learning model based on the Support Vector Machine (SVM) algorithm using a dataset of handwritten English letters I found here. SVMs are very efficient machine learning algorithms which can give a high accuracy, around 99.2% in this case!!
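As a rough idea of what the training step looks like in scikit-learn, here is a minimal sketch that fits an `SVC` on synthetic stand-in data. The fake 28 x 28 "images", the linear kernel and the 75/25 split are all my assumptions for illustration; the real model was trained on the handwritten letter dataset, and its settings are not shown here.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

rng = np.random.default_rng(0)

def make_fake_images(n, bright_left):
    """Synthetic stand-in data: noisy 28 x 28 images with one bright half."""
    images = rng.uniform(0, 50, size=(n, 28, 28))
    if bright_left:
        images[:, :, :14] += 200
    else:
        images[:, :, 14:] += 200
    return images.reshape(n, 784) / 255.0   # flatten and scale to the 0..1 range

X = np.vstack([make_fake_images(100, True), make_fake_images(100, False)])
y = np.array(["A"] * 100 + ["C"] * 100)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

model = SVC(kernel="linear")    # kernel choice is an assumption
model.fit(X_train, y_train)
accuracy = model.score(X_test, y_test)
```

The fitted model can then be saved (for example with `joblib.dump`) and loaded on the Raspberry Pi, so that the Pi only has to run predictions.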

The dataset is a .csv file with 785 columns and more than 300,000 rows. Each row represents one 28 x 28 image: the first column contains the label, a number from 0 to 25 corresponding to an English letter, and the remaining 784 columns contain the pixel values of that image. With some simple Python code, I sliced the data to get the images for only the 2 letters I wanted (A and C) and trained a model on them.
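The slicing step can be sketched with just the standard `csv` module. This is a minimal sketch, assuming the labels follow alphabetical order (A = 0, C = 2) and that pixel values are stored as integers; the function name `select_letters` is hypothetical, and the tiny in-memory sample uses 4 pixels per row instead of 784 to stay readable.

```python
import csv
import io

# Assumption: labels 0..25 map to A..Z in order, so A = 0 and C = 2.
LETTER_LABELS = {"A": 0, "C": 2}

def select_letters(csv_file, wanted_labels):
    """Keep only the rows whose first column (the label) is in `wanted_labels`."""
    labels, images = [], []
    for row in csv.reader(csv_file):
        if not row:
            continue                                    # skip blank lines
        label = int(row[0])
        if label in wanted_labels:
            labels.append(label)
            images.append([int(v) for v in row[1:]])    # remaining columns are pixels
    return labels, images

# Tiny stand-in for the real file.
sample = io.StringIO("0,0,255,255,0\n1,9,9,9,9\n2,255,0,0,255\n0,1,2,3,4\n")
labels, images = select_letters(sample, set(LETTER_LABELS.values()))
```

For the real file you would pass an open file handle instead of the `io.StringIO` sample. Reading row by row keeps memory use low, which helps with a file of this size.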

The Code That Makes It All Happen!!

After creating the trained model, the final step is to write a python program for our Raspberry Pi that allows us to do the following:

- Access video from the picamera in real time

- Detect and track white blobs (in this case the tip of the wand, which lights up in night vision) in the video

- Start tracing the path of the moving blob in the video after some trigger event (explained below)

- Stop tracing after another trigger event (explained below)

- Return the last frame with the pattern drawn by the user

- Perform pre-processing on the frame like thresholding, noise removal, resizing etc.

- Use the processed last frame for prediction.

- Perform some kind of magic by controlling the GPIOs of the Raspberry Pi according to the prediction

The letter 'A' stands for 'Alohomora' (one of the most famous spells from the Harry Potter movies, which allows a wizard to open any lock!!), so if a person draws the letter 'A' with the wand, the Pi commands the servo to open the box. If the person draws the letter 'C', which stands for close (as I could not think of any appropriate spell for closing or locking :P), the Pi commands the servo to close the box.
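The mapping from predicted letter to servo movement can be sketched as below. The angles (90 degrees for open, 0 for closed) and the 2.5% to 12.5% duty cycle range at 50 Hz are assumptions typical of small hobby servos, not values measured on my box, and the function names are hypothetical. The actual PWM call is passed in as `set_duty_cycle` so the logic can be tried without a Raspberry Pi.

```python
OPEN_ANGLE = 90     # hypothetical servo angle for an open lid
CLOSED_ANGLE = 0    # hypothetical servo angle for a closed lid

def angle_for_letter(letter):
    """Map a predicted letter to a servo angle, or None for unknown letters."""
    if letter == "A":       # 'A' for Alohomora: open the box
        return OPEN_ANGLE
    if letter == "C":       # 'C' for close
        return CLOSED_ANGLE
    return None

def angle_to_duty_cycle(angle, min_duty=2.5, max_duty=12.5):
    """Linearly map 0..180 degrees to a PWM duty cycle in percent (assumed range)."""
    return min_duty + (max_duty - min_duty) * angle / 180.0

def cast_spell(letter, set_duty_cycle):
    """Move the servo for a known letter; return False if nothing was done."""
    angle = angle_for_letter(letter)
    if angle is None:
        return False
    set_duty_cycle(angle_to_duty_cycle(angle))
    return True

# Dry run without hardware: collect the duty cycles in a list.
sent = []
opened = cast_spell("A", sent.append)
closed = cast_spell("C", sent.append)
ignored = cast_spell("Z", sent.append)
```

On the Pi, `set_duty_cycle` could be the `ChangeDutyCycle` method of an `RPi.GPIO` PWM object created with `GPIO.PWM(pin, 50)`, where `pin` is whichever GPIO the servo signal wire is connected to.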

All the work related to image/video processing, like blob detection, tracing the path of the blob, pre-processing of the last frame etc., is done through the OpenCV module.

For the trigger events mentioned above, two circles are drawn on the real-time video: a green one and a red one. When the blob enters the green circle, the program starts tracing the path taken by the blob from that moment on, allowing the person to start drawing the letter. When the blob reaches the red circle, the video stops and the last frame is passed to a function which pre-processes the frame to make it ready for prediction.
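The two trigger circles boil down to a small state machine. Here is a minimal sketch, where the class name `WandTracer`, the circle positions and the radius are all assumptions for illustration:

```python
import math

def inside_circle(point, center, radius):
    """True if `point` lies within `radius` pixels of `center`."""
    return math.hypot(point[0] - center[0], point[1] - center[1]) <= radius

class WandTracer:
    """waiting -> tracing (blob enters green circle) -> done (blob enters red circle)."""

    def __init__(self, start_center, stop_center, radius):
        self.start_center = start_center    # green circle
        self.stop_center = stop_center      # red circle
        self.radius = radius
        self.state = "waiting"
        self.path = []

    def update(self, tip):
        """Feed one blob position; return True once tracing has finished."""
        if self.state == "waiting":
            if inside_circle(tip, self.start_center, self.radius):
                self.state = "tracing"
                self.path.append(tip)
        elif self.state == "tracing":
            self.path.append(tip)
            if inside_circle(tip, self.stop_center, self.radius):
                self.state = "done"
        return self.state == "done"

# Simulated blob positions, one per video frame.
tracer = WandTracer(start_center=(20, 20), stop_center=(140, 100), radius=10)
positions = [(80, 60), (22, 21), (60, 40), (100, 80), (138, 99), (150, 110)]
finished = [tracer.update(p) for p in positions]
```

Positions seen before the blob enters the green circle are ignored, and nothing is recorded after it reaches the red one, so `tracer.path` holds only the stroke that makes up the letter.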
