Autonomous cars are one of the most interesting research fields because they bring together so many artificial intelligence (AI) applications. One of the most widely used AI techniques at the moment is deep learning, which makes it possible to control a car or detect people on the street. However, most efforts focus on improving the autonomy of the car and pay little attention to what users (drivers) can do while the car is in motion. Since users can spend a long time inside the car as it travels from one point to another, it is worth developing applications that make their stay more comfortable. One possible application is emotion detection: giving the car the ability to recognise the user's emotions and to influence them by altering internal conditions such as temperature or music.

The aim of this project is therefore to introduce emotion detection through a deep learning application that identifies the user and recognises his or her emotion in order to personalize the environment. Several hardware and software technologies are integrated to classify human emotions and identify users, and this information is then used to control some of the car's parameters.

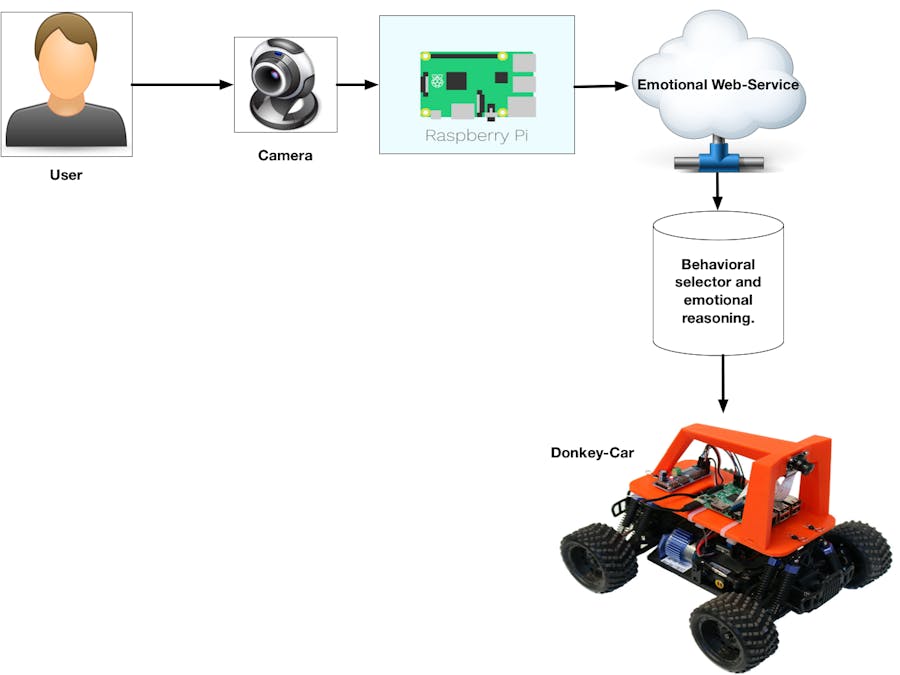

Due to the complexity of this project, we divided the system into two parts: one dedicated to controlling the car and the other focused on identifying emotions and people. Each part is explained in detail in the following sections.

Hardware Description

This section describes the hardware used in this project. The Donkey Car is an open-source platform for self-driving applications. The platform consists of an RC car, a PCA9685 PWM driver, a camera and a Raspberry Pi. The original API includes an application built with Keras, one of the most common tools for deep learning, which the Donkey Car uses to drive itself (Figures 1, 2, 3).

In this project we do not use these APIs for self-driving; instead, we control the car with classic image processing, which lets it drive autonomously by following lateral lines. The image processing system converts the captured image to grayscale and then applies a blur filter. The result of this conversion is used to detect the lateral lines with the Hough line transform.
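The three stages described above can be sketched without any external library. This is a minimal pure-Python illustration, not the project's actual code; in practice an image library such as OpenCV would normally do this work. The function names, the 3x3 mean filter and the 1-degree angular resolution are assumptions made for the example, and the Hough step takes a list of candidate line pixels because the text does not say how those pixels are selected after blurring.

```python
import math


def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) tuples to rows of luminance values."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb_image]


def box_blur(gray):
    """Apply a 3x3 mean filter, clamping coordinates at the image border."""
    height, width = len(gray), len(gray[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), height - 1)
                    xx = min(max(x + dx, 0), width - 1)
                    total += gray[yy][xx]
            blurred[y][x] = total / 9.0
    return blurred


def hough_strongest_line(points, theta_step_deg=1):
    """Vote in (rho, theta) space and return the best (rho, theta_deg, votes).

    Each point (x, y) votes for every line rho = x*cos(theta) + y*sin(theta)
    that passes through it; the line with the most votes wins.
    """
    votes = {}
    for x, y in points:
        for theta_deg in range(0, 180, theta_step_deg):
            theta = math.radians(theta_deg)
            rho = round(x * math.cos(theta) + y * math.sin(theta))
            key = (rho, theta_deg)
            votes[key] = votes.get(key, 0) + 1
    (rho, theta_deg), count = max(votes.items(), key=lambda item: item[1])
    return rho, theta_deg, count


# Hypothetical example: 40 candidate pixels on the vertical line x = 10.
line_pixels = [(10, y) for y in range(40)]
rho, theta_deg, count = hough_strongest_line(line_pixels)
```

In the (rho, theta) parameterization used here, theta is the angle of the line's normal, so a vertical line in the image is reported with theta equal to 0.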

Once the lateral lines are detected, we calculate the angle of the line. Based on this angle, we decide the angle at which to set the servomotor that controls the direction of the car. The angle obtained by image processing is shown in Figure 5, where its value oscillates between 35 and 55 degrees.
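As an illustration of the angle calculation, the sketch below derives the angle of a detected segment from its two endpoints. The function name is hypothetical, and measuring the angle against the horizontal image axis and folding it into the range 0 to 180 degrees are assumptions, since the text does not state which convention the project uses.

```python
import math


def line_angle_deg(x1, y1, x2, y2):
    """Angle of the segment (x1, y1)-(x2, y2) against the horizontal axis.

    The result is folded into [0, 180) so that the order of the two
    endpoints does not change the reported angle.
    """
    angle = math.degrees(math.atan2(y2 - y1, x2 - x1))
    return angle % 180.0


# Hypothetical segment: rises one pixel for every pixel it moves right.
angle = line_angle_deg(0, 0, 10, 10)
```

Folding the angle makes the value independent of which endpoint the line detector happens to report first.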

Figure 6 shows three lines. The red line represents the raw angle obtained after the line is detected, which oscillates between 0 and 56 degrees. These angles cannot be used directly to control the direction of the car, so the data must be normalized to the values the servo expects, following the steps shown on the Donkey Car website. To steer the car, the servo needs values between 290 and 490. However, when we tested these values we found that the servo moved very fast. For this reason, we reduced the limits to 300-400 to avoid damaging the servo.
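One simple way to perform this normalization is a linear mapping with clamping, sketched below. The function name, the linear form of the mapping and its direction (smallest angle to smallest servo value) are assumptions, as the text does not give the exact formula; the 0 to 56 degree input range and the 300 to 400 output range come from the description above.

```python
def angle_to_pwm(angle_deg, angle_min=0.0, angle_max=56.0,
                 pwm_min=300, pwm_max=400):
    """Map a line angle in degrees to a servo value in [pwm_min, pwm_max].

    Angles outside [angle_min, angle_max] are clamped first so the servo
    never receives a value beyond the reduced limits.
    """
    clamped = min(max(angle_deg, angle_min), angle_max)
    fraction = (clamped - angle_min) / (angle_max - angle_min)
    return round(pwm_min + fraction * (pwm_max - pwm_min))


# The midpoint of the angle range lands on the midpoint of the servo range.
centre = angle_to_pwm(28.0)
```

Clamping before scaling is what keeps an occasional outlier angle from driving the servo past the reduced limits.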

Acceleration is determined by the user's emotional state: the car starts at a standard speed, which then changes depending on the detected emotion. Recognizing human emotions requires a dataset to train the model; in this case we use the KDEF dataset. For copyright reasons we do not supply this dataset in the repository. To train the model we used TensorFlow as the deep learning tool. The dataset has 4900 images covering 7 possible emotions: Afraid, Angry, Disgusted, Happy, Neutral, Sad and Surprised. Before training the classifier, a pre-processing stage is required: each image is resized to 48x48 pixels and then used as input to the deep learning model.
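The pre-processing stage can be illustrated with a small pure-Python sketch. It assumes a grayscale image stored as a list of rows and uses nearest-neighbour sampling, which is an assumption because the text does not name the interpolation method; the function names and the one-hot label encoding are also hypothetical, and a real pipeline would normally rely on an image library.

```python
EMOTIONS = ["Afraid", "Angry", "Disgusted", "Happy",
            "Neutral", "Sad", "Surprised"]


def resize_nearest(gray, size=48):
    """Resize a grayscale image (list of rows) to size x size pixels.

    Each output pixel copies the source pixel at the proportionally
    scaled position (nearest-neighbour sampling, rounding down).
    """
    height, width = len(gray), len(gray[0])
    return [[gray[(y * height) // size][(x * width) // size]
             for x in range(size)]
            for y in range(size)]


def one_hot(emotion):
    """Encode an emotion label as a 7-element target vector."""
    index = EMOTIONS.index(emotion)
    return [1 if i == index else 0 for i in range(len(EMOTIONS))]


# Hypothetical 96x96 input whose pixel value encodes its own position.
source = [[y * 96 + x for x in range(96)] for y in range(96)]
model_input = resize_nearest(source)
target = one_hot("Happy")
```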

At the same time, the system can identify the user. This is important because it allows the system to customize the interior of the car (temperature, music, etc.) for each person. To identify the user, the car detects the face, extracts a vector of size 128 from the image and stores it as a .npy file. Once the user sits in front of the car, the system detects the face and calculates the distance between the stored vector and the new vector, as shown in Figures 7 and 8.
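The comparison step can be sketched as a nearest-match search over the stored vectors. The Euclidean distance, the 0.6 acceptance threshold and the function name are assumptions for illustration; the text only says that a distance between the stored vector and the new vector is calculated.

```python
import math


def identify_user(known_vectors, new_vector, threshold=0.6):
    """Return (name, distance) of the closest stored vector.

    known_vectors maps a user name to that user's stored face vector.
    If even the closest vector is farther away than the threshold,
    the name is None, meaning the face is treated as unknown.
    """
    best_name, best_distance = None, float("inf")
    for name, stored in known_vectors.items():
        if len(stored) != len(new_vector):
            raise ValueError("vectors must have the same length")
        distance = math.dist(stored, new_vector)
        if distance < best_distance:
            best_name, best_distance = name, distance
    if best_distance <= threshold:
        return best_name, best_distance
    return None, best_distance


# Hypothetical 128-element vectors standing in for real face vectors.
known = {"alice": [0.0] * 128, "bob": [1.0] * 128}
name, distance = identify_user(known, [0.01] * 128)
```

In the real system the stored vectors would be read back from the .npy files, for example with `numpy.load`, before being compared.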

To visualize the whole system, we developed a streaming server that can be used to start the car and watch the Donkey Car camera stream. The page can be accessed at donkeycar_IP:8000/index.html.

With the new embedded ARM systems, however, deep learning models can be run on the device by using uTensor, a tool designed to run deep learning on ARM processors. More information can be found at https://github.com/uTensor.

We ran a separate experiment using uTensor and the ARM DISCO-F413ZH board (Figure 11), but we ran into an error when trying to train the model and show the image on the screen. For this reason, we decided to use a laptop as the device that recognizes the emotion. We hope to finish this part by the end of this month.

Lundqvist, D., Flykt, A., & Öhman, A. (1998). The Karolinska Directed Emotional Faces - KDEF, CD ROM from Department of Clinical Neuroscience, Psychology section, Karolinska Institutet, ISBN 91-630-7164-9.
