A couple of days ago, a simple thought struck me 🤔: what if we had an actual pocket voice assistant—something that could answer our questions instantly, without pulling out a phone, unlocking it, opening an app, and typing or tapping around?

We all carry smartphones 📱, but interacting with a voice assistant on a dedicated device feels very different. It feels more direct, more natural—almost like talking to a tiny machine that actually listens 🎙️. That idea kept nagging me, so I decided to turn it into a real, working project.

The result is a compact voice assistant built on the Arduino Nano ESP32 ⚙️. You ask your question through a microphone, and the answer appears directly on a small OLED display 🖥️. No phone screen. No distractions. Just a button, your voice, and a response.

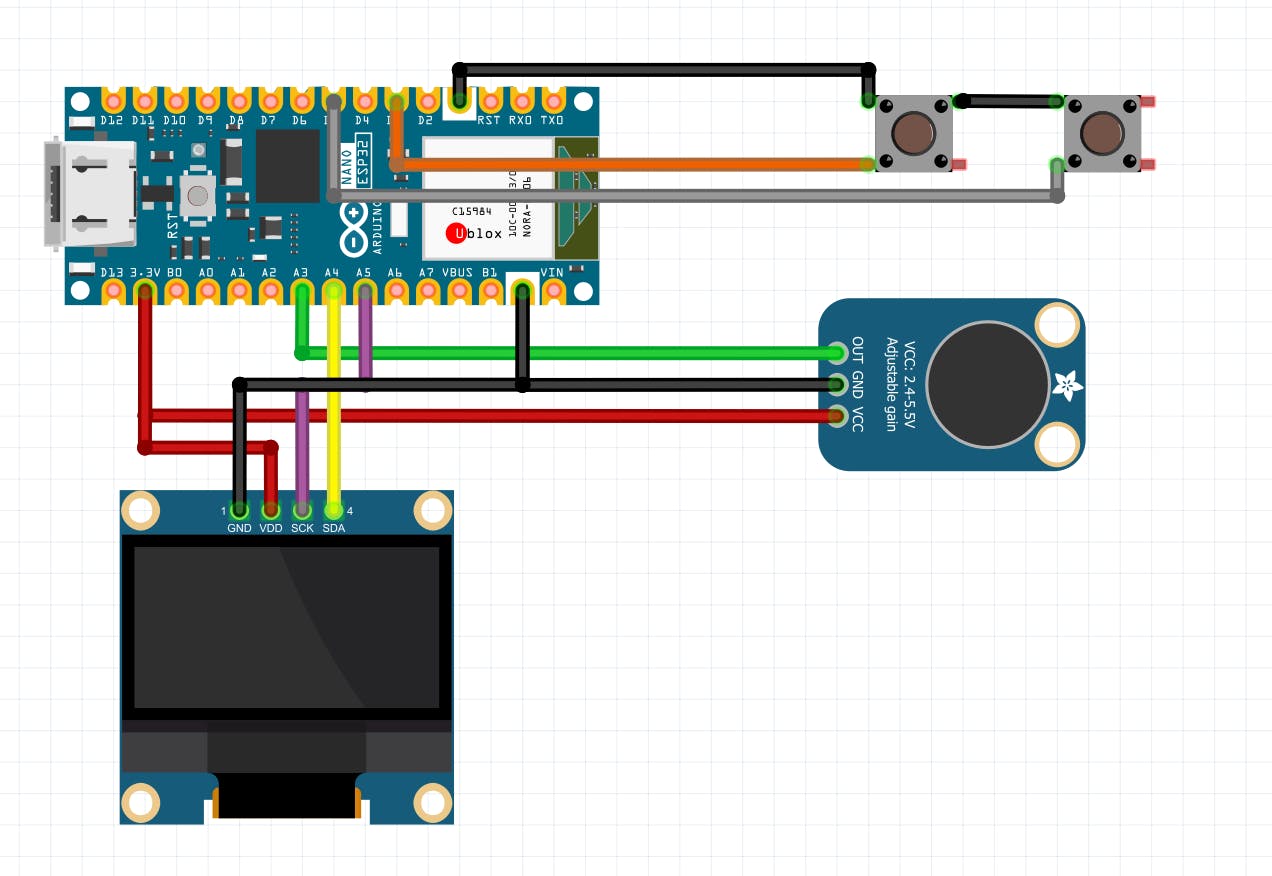

Components I used:

OLED Display 0.96" (I²C) x 1

Arduino Nano ESP32 x 1

Tactile Push Buttons x 2

MAX4466 Microphone x 1

How I built it:

Your spoken question is captured by a MAX4466 microphone module, converted to text by a speech-to-text model (Whisper), and then sent to an AI text-generation model that produces a concise answer 🤖. The ESP32 handles Wi-Fi, processing, and display control.
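The audio step of that pipeline can be tried on a desktop Python interpreter. This is a minimal sketch, assuming 12-bit unsigned ADC readings centred on 2048 and the 12 kHz sample rate used in the project listing; the helper names `adc_to_pcm16` and `build_wav` are hypothetical, and the standard `wave` module is used only to check that the header parses.

```python
import io
import struct
import wave

SAMPLE_RATE = 12000  # Hz, same value as in the project listing


def adc_to_pcm16(reading):
    """Map a 12-bit unsigned ADC reading (0..4095) to signed 16-bit PCM."""
    value = (reading - 2048) << 4
    return max(-32768, min(32767, value))


def build_wav(pcm, sample_rate=SAMPLE_RATE):
    """Prepend a 44-byte mono, 16-bit PCM WAV header to raw sample bytes."""
    header = struct.pack(
        "<4sI4s4sIHHIIHH4sI",
        b"RIFF", 36 + len(pcm), b"WAVE",
        b"fmt ", 16, 1, 1,             # PCM format tag, one channel
        sample_rate, sample_rate * 2,  # sample rate, bytes per second
        2, 16,                         # block align, bits per sample
        b"data", len(pcm),
    )
    return header + bytes(pcm)


# Three made-up readings: mid-scale (silence), lowest, highest.
readings = [2048, 0, 4095]
pcm = b"".join(struct.pack("<h", adc_to_pcm16(r)) for r in readings)
wav_bytes = build_wav(pcm)

# Parse the result with the standard library to confirm the header is valid.
with wave.open(io.BytesIO(wav_bytes), "rb") as wf:
    info = (wf.getnchannels(), wf.getsampwidth(), wf.getframerate(), wf.getnframes())
print(info)
```

The resulting bytes are what gets posted to the speech-to-text endpoint with a `Content-Type: audio/wav` header.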

Two push buttons make interaction straightforward 🔘—one to record your question and another to scroll through longer answers on the OLED. The display even handles long questions gracefully, so nothing feels cramped despite the small screen.
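Long questions are handled by scrolling them across the top row like a marquee. The idea can be sketched in plain Python as follows; `marquee_window` is a hypothetical helper name, and the 16-character row width assumes the display's 8-pixel-wide font on a 128-pixel screen.

```python
CHARS_PER_LINE = 16  # 128 px wide display / 8 px per character


def marquee_window(text, step, width=CHARS_PER_LINE):
    """Return the `width`-character slice visible at scroll position `step`."""
    base = text + " " * width            # blank gap before the text repeats
    start = step % len(base)
    window = base[start:start + width]
    if len(window) < width:              # ran off the end: wrap to the start
        window += base[:width - len(window)]
    return window


question = "What is the boiling point of water at sea level?"
for step in range(0, 12, 4):
    print(repr(marquee_window(question, step)))
```

Advancing `step` by one every few hundred milliseconds gives the scrolling effect without ever drawing more than one row of text.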

What makes this project exciting is its flexibility ✨. It uses Hugging Face APIs, which means you’re not locked into a single AI model. You can experiment, swap models, and choose whichever text-generation AI best fits your needs 🧠. The same idea can evolve into a study assistant, a technical helper, or even a portable AI companion for experiments and demos.
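Swapping models comes down to changing one string in the request body. Here is a minimal sketch of how such a request could be assembled; `build_chat_request` is a hypothetical helper, the token is a dummy value, and the second model id is a made-up placeholder rather than a real model.

```python
import json

LLM_URL = "https://router.huggingface.co/v1/chat/completions"


def build_chat_request(model_id, question, token, max_tokens=120):
    """Return (headers, body) for an OpenAI-style chat-completions POST."""
    headers = {
        "Authorization": "Bearer " + token,
        "Content-Type": "application/json",
    }
    payload = {
        "model": model_id,
        "messages": [
            {"role": "system", "content": "Answer very concisely in 2-4 short sentences."},
            {"role": "user", "content": question},
        ],
        "max_tokens": max_tokens,
        "temperature": 0.6,
    }
    return headers, json.dumps(payload)


# First id: the model used in this project. Second id: placeholder only.
for model_id in ("google/gemma-2-9b-it", "example-org/another-chat-model"):
    headers, body = build_chat_request(model_id, "Why is the sky blue?", "hf_dummy_token")
    print(json.loads(body)["model"])
```

Whether a given model is actually served through this endpoint depends on the provider, so check the model page before swapping it in.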

I also added a Pong 🏓 game. On the mode-selection screen you choose whether to play Pong with the two buttons or use the AI assistant. The game gets gradually harder as your score increases.
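The difficulty ramp is a capped linear function of the score. A small sketch, using the constants from the project listing (base speed 1.5, step 0.15, cap 2.8); the function name `ai_paddle_speed` is hypothetical.

```python
def ai_paddle_speed(score, base=1.5, step=0.15, cap=2.8):
    """Pixels per frame the computer paddle may move at a given score."""
    return min(cap, base + step * score)


for score in range(0, 10, 3):
    print(score, round(ai_paddle_speed(score), 2))
```

Since a game ends at 5 points, the score used here never exceeds 4 during play, so the cap acts mainly as a safety limit.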

The Kill Switch 🔴: Holding both buttons for 4 seconds triggers a kill switch that returns you to the mode-selection screen. Use it to exit AI or Pong mode.
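The hold detection behind the kill switch can be isolated into a small helper. This is only a sketch with a hypothetical class name, `HoldDetector`, driven by simulated timestamps so it runs on a desktop; on the board you would feed it `time.ticks_ms()` and compare with `time.ticks_diff()` instead of plain subtraction.

```python
class HoldDetector:
    """Reports when a condition has been held continuously for `hold_ms`."""

    def __init__(self, hold_ms=4000):
        self.hold_ms = hold_ms
        self.started_at = None

    def update(self, pressed, now_ms):
        """Feed the current state; return True once the hold time is reached."""
        if not pressed:
            self.started_at = None       # released: start over
            return False
        if self.started_at is None:
            self.started_at = now_ms     # first sample of a new hold
        return now_ms - self.started_at >= self.hold_ms


detector = HoldDetector()
# Simulated samples: (both buttons pressed?, timestamp in ms)
samples = [(True, 0), (True, 2000), (False, 2500), (True, 3000), (True, 7000)]
results = [detector.update(pressed, t) for pressed, t in samples]
print(results)
```

Releasing either button resets the timer, so a brief accidental press of both buttons never exits the current mode.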

The Information Page 📃: An information page appears after the IoT HUB startup animation. It summarizes the features and explains what each button does, so the controls are easy to pick up.

Pin Connections: Arduino Nano ESP32

Power & Ground

3.3V → OLED VDD

3.3V → MAX4466 VCC

GND → OLED GND

GND → MAX4466 GND

GND → One side of both push buttons (common ground line)


OLED Display (SSD1306 – I²C)

SDA → A4 (Nano ESP32)

SCK / SCL → A5 (Nano ESP32)

MAX4466 Microphone Module

OUT → A7 (Nano ESP32)

Push Buttons (2 Buttons)

Button 1 (Record Button)

One pin → D8 (Nano ESP32)

Other pin → GND

Button 2 (Scroll Button)

One pin → D6 (Nano ESP32)

Other pin → GND
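When moving from this wiring list to the code, keep in mind that MicroPython's `machine.Pin` takes GPIO numbers, not the D/A labels printed on the board. The sketch below cross-checks the two; the label-to-GPIO table reflects my understanding of the Nano ESP32 pinout and should be treated as an assumption to verify against the official pinout diagram. `check_pins` is a hypothetical helper.

```python
# Assumed label -> GPIO map for the Arduino Nano ESP32 (verify before relying on it).
NANO_ESP32_GPIO = {
    "D3": 6, "D5": 8, "D6": 9, "D8": 17,
    "A3": 4, "A4": 11, "A5": 12, "A7": 14,
}

# Wiring as described in the list above: signal -> board label.
WIRING = {"OLED SCL": "A5", "Mic OUT": "A7", "Record button": "D8", "Scroll button": "D6"}

# Pin constants as they appear in the code listing: signal -> number passed to Pin().
LISTING = {"OLED SCL": 12, "Mic OUT": 4, "Record button": 8, "Scroll button": 6}


def check_pins(wiring, listing, gpio_map):
    """Return the signals whose wired label and coded GPIO number disagree."""
    return [name for name, label in wiring.items() if gpio_map[label] != listing[name]]


print(check_pins(WIRING, LISTING, NANO_ESP32_GPIO))
```

If the assumed table is right, the microphone and button constants in the listing point at A3, D5 and D3 rather than A7, D8 and D6, so either the wiring or the constants may need adjusting for your build.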

How you can build it:

For simplicity, I can provide a pre-compiled, customized binary file that you can flash with any ESP32 flashing tool, such as esptool.

If you would like the complete binary or want to build this project yourself, email me at garageiot98@gmail.com 📩.

Alternatively, you can upload the attached code with Arduino Lab for MicroPython, which works the same way.

This project is not about replacing your phone 🚫📱. It’s about exploring a different way of interacting with intelligence—one that’s tactile, focused, and built with your own hands 🔧.

Sometimes the best ideas start small, small enough to fit in your pocket.

```python
# Forged with passion by IoT HUB
import network
import urequests as requests
import ujson
import time
from machine import Pin, ADC, I2C
import struct
import ssd1306
import random

# Replace the placeholders with your own credentials.
SSID = " *WiFi SSID* "
PASSWORD = " *WiFi Password* "
HF_TOKEN = " *Hugging Face API KEY* "

WHISPER_URL = "https://router.huggingface.co/hf-inference/models/openai/whisper-large-v3"
LLM_MODEL = "google/gemma-2-9b-it"
LLM_URL = "https://router.huggingface.co/v1/chat/completions"

# Faster, shorter answers
WORD_LIMIT = 60  # was 120

# Note: machine.Pin takes GPIO numbers, which are not the same as the D/A
# labels printed on the board. Check every pin constant against your wiring.
MIC_PIN = 4
SAMPLE_RATE = 12000
MAX_DURATION_SEC = 3  # was 6, shorter clip = faster STT
MAX_SAMPLES = SAMPLE_RATE * MAX_DURATION_SEC

I2C_SDA = 11
I2C_SCL = 12
OLED_WIDTH = 128
OLED_HEIGHT = 64
OLED_ADDR = 0x3C

BTN_REC_PIN = 8
BTN_SCROLL_PIN = 6

i2c = I2C(0, scl=Pin(I2C_SCL), sda=Pin(I2C_SDA))
oled = ssd1306.SSD1306_I2C(OLED_WIDTH, OLED_HEIGHT, i2c, addr=OLED_ADDR)
# Set the display orientation: COM scan direction (0xC8) and segment remap (0xA1).
oled.write_cmd(0xC8)
oled.write_cmd(0xA1)
oled.fill(0)
oled.show()

# Each button connects its pin to GND, so a pressed button reads 0.
btn_rec = Pin(BTN_REC_PIN, Pin.IN, Pin.PULL_UP)
btn_scroll = Pin(BTN_SCROLL_PIN, Pin.IN, Pin.PULL_UP)
```

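```python
CHARS_PER_LINE = 16
LINES_ON_SCREEN = 4

# Shared display and mode state
all_lines = []
answer_start_idx = 1
scroll_offset = 0
q_full = ""
q_marquee_idx = 0
current_mode = "HOME"
menu_sel = 0
kill_switch_cooldown = None  # ticks_ms() deadline while a cooldown is active
kill_switch_triggered = False


def check_kill_switch():
    # Both buttons pressed at the same time
    return btn_rec.value() == 0 and btn_scroll.value() == 0


def activate_kill_switch():
    global current_mode, kill_switch_cooldown, kill_switch_triggered
    print("Kill switch activated -> MENU")
    current_mode = "MENU"
    # ticks_add/ticks_diff stay correct when the millisecond counter wraps around
    kill_switch_cooldown = time.ticks_add(time.ticks_ms(), 2000)
    kill_switch_triggered = True
    # Wait until both buttons are released so the menu does not see a stale press
    while btn_rec.value() == 0 or btn_scroll.value() == 0:
        time.sleep_ms(50)
    time.sleep_ms(500)


def is_in_cooldown():
    global kill_switch_cooldown
    if kill_switch_cooldown is None:
        return False
    if time.ticks_diff(kill_switch_cooldown, time.ticks_ms()) > 0:
        return True
    kill_switch_cooldown = None  # deadline passed
    return False


def show_kill_timer(start_ms):
    elapsed = time.ticks_diff(time.ticks_ms(), start_ms)
    remaining = max(0, 4000 - elapsed)
    remaining_s = (remaining + 999) // 1000  # round up to whole seconds
    oled.fill(0)
    oled.text("Hold 4s...", 30, 20)
    oled.text("Exit in: %d" % remaining_s, 35, 40)
    oled.show()
```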

```python
def startup_splash():
    print("Startup splash: IoT HUB see-saw")
    duration_ms = 2500
    start = time.ticks_ms()
    x_center = OLED_WIDTH // 2
    x_iot = x_center - 40
    x_hub = x_center + 8
    dx = 3
    base_y = 30
    amp = 6
    while time.ticks_diff(time.ticks_ms(), start) < duration_ms:
        if check_kill_switch():
            # Both buttons pressed: skip the rest of the animation
            time.sleep_ms(500)
            return "INFO"
        oled.fill(0)
        x_iot += dx
        x_hub -= dx
        if x_iot <= 5 or x_hub >= OLED_WIDTH - 24:
            dx = -dx
        phase = (x_iot - (x_center - 40)) / 40
        offset = int(amp * phase)
        yi = base_y - offset
        yh = base_y + offset
        oled.text("IoT", int(x_iot), yi)
        oled.text("HUB", int(x_hub), yh)
        oled.show()
        time.sleep_ms(60)
    return "INFO"


def show_info_page():
    print("Showing info page")
    info_lines = [
        "BTN A: Scroll",
        " Move",
        "BTN B: Record",
        " Select",
        "Both 4s: Menu",
    ]
    start = time.ticks_ms()
    while time.ticks_diff(time.ticks_ms(), start) < 4000:
        if check_kill_switch():
            time.sleep_ms(500)
            print("Info exit via kill switch")
            return "MENU"
        oled.fill(0)
        # Double-line border around the page
        oled.rect(0, 0, 128, 64, 1)
        oled.rect(1, 1, 126, 62, 1)
        for i, line in enumerate(info_lines):
            oled.text(line[:16], 6, 6 + i*9)
        oled.show()
        time.sleep_ms(100)
    return "MENU"
```

```python
def show_menu():
    global menu_sel
    print("Menu: sel=", menu_sel)
    oled.fill(0)
    oled.text("Choose mode:", 0, 0)
    options = ["PONG", "AI "]
    for i in range(2):
        y = 20 + i * 12
        if i == menu_sel:
            oled.text("> " + options[i], 0, y)
        else:
            oled.text(" " + options[i], 0, y)
    oled.text("- IoT HUB", 54, 56)
    oled.show()


def menu_loop():
    global menu_sel, current_mode
    print("Entering menu loop")
    last_scroll = 1
    last_rec = 1
    both_pressed_start = None
    timer_shown = False
    while True:
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        rec_val = btn_rec.value()
        scroll_val = btn_scroll.value()
        # Kill-switch handling: both buttons held
        if rec_val == 0 and scroll_val == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
                print("Both buttons pressed in menu")
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
            elif elapsed >= 4000:
                activate_kill_switch()
                show_menu()
                both_pressed_start = None
                timer_shown = False
        else:
            both_pressed_start = None
            timer_shown = False
        # Scroll button toggles the highlighted option (falling edge)
        if scroll_val == 0 and last_scroll == 1:
            menu_sel = 1 - menu_sel
            print("Scroll: menu_sel now", menu_sel)
            show_menu()
        last_scroll = scroll_val
        # Record button confirms the selection (falling edge)
        if rec_val == 0 and last_rec == 1:
            if menu_sel == 0:
                current_mode = "PONG"
                print("PONG selected")
            else:
                current_mode = "AI"
                print("AI selected")
            return
        last_rec = rec_val
        time.sleep_ms(50)
```

```python
def pong_game():
    global current_mode
    print("Starting pong game")
    score1 = 0  # player (left paddle)
    score2 = 0  # computer (right paddle)
    paddle1_y = 24
    paddle2_y = 24
    ball_x = 64.0
    ball_y = 32.0
    ball_dx = random.choice([-3, 3])
    ball_dy = random.choice([-2, 2])
    paddle_size = 10
    paddle_speed = 4
    both_pressed_start = None
    timer_shown = False
    while True:
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        rec_val = btn_rec.value()
        scroll_val = btn_scroll.value()
        # Kill-switch handling: both buttons held
        if rec_val == 0 and scroll_val == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
                continue  # pause the game while the countdown is shown
            elif elapsed >= 4000:
                activate_kill_switch()
                return
        else:
            both_pressed_start = None
            timer_shown = False
        # Player paddle: scroll button moves up, record button moves down
        if scroll_val == 0:
            paddle1_y = max(4, min(60, paddle1_y - paddle_speed))
        if rec_val == 0:
            paddle1_y = max(4, min(60, paddle1_y + paddle_speed))
        # Computer paddle: gets faster with the player's score (as described in
        # the write-up) and occasionally aims slightly off the ball
        ai_speed = min(2.8, 1.5 + (score1 * 0.15))
        ai_error = random.randint(-3, 3) if random.random() < 0.4 else 0
        target_y = ball_y + ai_error
        if target_y < paddle2_y - 1:
            paddle2_y = max(4, min(60, paddle2_y - ai_speed))
        elif target_y > paddle2_y + 1:
            paddle2_y = max(4, min(60, paddle2_y + ai_speed))
        ball_x += ball_dx
        ball_y += ball_dy
        # Scoring: the ball left the field on the left or right side
        if ball_x <= 4:
            score2 += 1
            print("AI scores:", score2)
            if score2 >= 5:
                print("AI wins!")
                oled.fill(0)
                oled.text("AI WINS!", 40, 28)
                oled.show()
                time.sleep(2)
                current_mode = "MENU"
                return
            ball_x = 64.0
            ball_y = 32.0
            ball_dx = 3
            ball_dy = random.choice([-2, 2])
        elif ball_x >= OLED_WIDTH - 5:
            score1 += 1
            print("Player scores:", score1)
            if score1 >= 5:
                print("Player wins!")
                oled.fill(0)
                oled.text("YOU WIN!", 35, 28)
                oled.show()
                time.sleep(2)
                current_mode = "MENU"
                return
            ball_x = 64.0
            ball_y = 32.0
            ball_dx = -3
            ball_dy = random.choice([-2, 2])
        # Bounce off the top and bottom edges
        if ball_y <= 0 or ball_y >= OLED_HEIGHT - 1:
            ball_dy = -ball_dy
        # Bounce off the paddles, with a small random change in speed
        if (ball_x <= 8 and abs(ball_y - paddle1_y) <= paddle_size):
            ball_dx = -ball_dx + random.uniform(-0.5, 0.5)
            ball_x = 10
        elif (ball_x >= OLED_WIDTH - 8 and abs(ball_y - paddle2_y) <= paddle_size):
            ball_dx = -ball_dx + random.uniform(-0.5, 0.5)
            ball_x = OLED_WIDTH - 10
        # Draw score, paddles and ball
        oled.fill(0)
        oled.text("%d %d" % (score1, score2), 45, 0)
        for y in range(max(0, int(paddle1_y - paddle_size)), min(OLED_HEIGHT, int(paddle1_y + paddle_size + 1))):
            for x in range(1, 4):
                oled.pixel(x, y, 1)
        for y in range(max(0, int(paddle2_y - paddle_size)), min(OLED_HEIGHT, int(paddle2_y + paddle_size + 1))):
            for x in range(OLED_WIDTH - 4, OLED_WIDTH - 1):
                oled.pixel(x, y, 1)
        for bx in range(max(0, int(ball_x) - 1), min(OLED_WIDTH, int(ball_x) + 2)):
            for by in range(max(0, int(ball_y) - 1), min(OLED_HEIGHT, int(ball_y) + 2)):
                oled.pixel(bx, by, 1)
        oled.show()
        time.sleep_ms(30)
```

```python
def oled_show_current_view():
    global q_full, q_marquee_idx
    oled.fill(0)
    if all_lines:
        prefix = "You: "
        if q_full:
            # Top row: the question scrolls as a marquee
            pad = " " * CHARS_PER_LINE
            base = q_full + pad
            if q_marquee_idx >= len(base):
                q_marquee_idx = 0
            window = base[q_marquee_idx:q_marquee_idx + CHARS_PER_LINE]
            if len(window) < CHARS_PER_LINE:
                window = window + base[:CHARS_PER_LINE - len(window)]
            q_line = prefix + window
        else:
            q_line = all_lines[0]
        oled.text(q_line[:CHARS_PER_LINE], 0, 0)
        # Remaining rows: the visible part of the answer
        for i in range(1, LINES_ON_SCREEN):
            line_idx = answer_start_idx + scroll_offset + (i - 1)
            y = i * 16
            if 0 <= line_idx < len(all_lines):
                oled.text(all_lines[line_idx][:CHARS_PER_LINE], 0, y)
    oled.show()


def word_wrap_to_lines(text):
    text = text.replace("\r", " ").replace("\n", " ")
    words = text.split()
    lines = []
    cur = ""
    for w in words:
        if not cur:
            if len(w) <= CHARS_PER_LINE:
                cur = w
            else:
                # Word longer than a row: keep only what fits
                lines.append(w[:CHARS_PER_LINE])
                cur = ""
        elif len(cur) + 1 + len(w) <= CHARS_PER_LINE:
            cur += " " + w
        else:
            lines.append(cur)
            if len(w) <= CHARS_PER_LINE:
                cur = w
            else:
                lines.append(w[:CHARS_PER_LINE])
                cur = ""
    if cur:
        lines.append(cur)
    if not lines:
        lines = [""]
    return lines


def build_display_lines(question_text, answer_text):
    global all_lines, answer_start_idx, scroll_offset, q_full, q_marquee_idx
    print("Question transcript:", question_text)
    print("LLM answer:", answer_text)
    q_display = "You: " + question_text
    q_lines = word_wrap_to_lines(q_display)
    q0 = q_lines[0]
    prefix = "You: "
    if q_display.startswith(prefix):
        q_full = q_display[len(prefix):]
    else:
        q_full = q_display
    q_marquee_idx = 0
    a_lines = word_wrap_to_lines("AI: " + answer_text)
    all_lines = [q0] + a_lines
    answer_start_idx = 1
    scroll_offset = 0


def show_home():
    global all_lines, answer_start_idx, scroll_offset, q_full, q_marquee_idx
    all_lines = [
        "Gemma Voice",
        "Assistant"
    ]
    answer_start_idx = 1
    scroll_offset = 0
    q_full = ""
    q_marquee_idx = 0
    oled.fill(0)
    oled.text("Gemma Voice", 0, 0)
    oled.text("Assistant", 0, 16)
    oled.text("- IoT HUB", 54, 46)
    oled.show()
    print("Home screen shown")
```

```python
def wifi_connect():
    print("WiFi: connecting to", SSID)
    wlan = network.WLAN(network.STA_IF)
    wlan.active(True)
    if not wlan.isconnected():
        all_lines[:] = ["WiFi...", "", "", ""]
        oled_show_current_view()
        wlan.connect(SSID, PASSWORD)
        while not wlan.isconnected():
            time.sleep(0.25)  # was 0.5
            print(".", end="")
        print()
    cfg = wlan.ifconfig()
    print("WiFi connected:", cfg)
    all_lines[:] = ["WiFi OK", "", "", ""]
    oled_show_current_view()
    time.sleep(0.4)  # was 0.7
    show_home()


def record_while_button():
    print("Waiting for record button...")
    adc = ADC(Pin(MIC_PIN))
    adc.atten(ADC.ATTN_11DB)
    adc.width(ADC.WIDTH_12BIT)
    buf = bytearray(MAX_SAMPLES * 2)  # 16-bit samples
    idx = 0
    show_home()
    last = btn_rec.value()
    both_pressed_start = None
    timer_shown = False
    # Phase 1: wait for a fresh press of the record button
    while True:
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        rec_val = btn_rec.value()
        scroll_val = btn_scroll.value()
        if rec_val == 0 and scroll_val == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
                continue
            elif elapsed >= 4000:
                activate_kill_switch()
                return None
        else:
            both_pressed_start = None
            timer_shown = False
        v = btn_rec.value()
        if v == 0 and last == 1:
            print("Record button pressed, starting recording")
            break
        last = v
        time.sleep_ms(10)
    all_lines[:] = ["Recording...", "Release button", "to stop", ""]
    oled_show_current_view()
    start = time.ticks_ms()
    both_pressed_start = None
    timer_shown = False
    # Phase 2: sample the microphone while the button stays pressed
    while btn_rec.value() == 0 and idx < len(buf):
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        if btn_rec.value() == 0 and btn_scroll.value() == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
                continue
            elif elapsed >= 4000:
                activate_kill_switch()
                return None
        else:
            both_pressed_start = None
            timer_shown = False
        # Convert the 12-bit unsigned reading to a signed 16-bit sample
        v = adc.read()
        v16 = (v - 2048) << 4
        if v16 < -32768:
            v16 = -32768
        if v16 > 32767:
            v16 = 32767
        struct.pack_into("<h", buf, idx, v16)
        idx += 2
        time.sleep_us(1000000 // SAMPLE_RATE)
    dur = time.ticks_diff(time.ticks_ms(), start)
    print("Recording done, duration ms:", dur, "bytes:", idx)
    if idx == 0:
        print("No audio captured")
        return None
    all_lines[:] = ["Processing audio", "", "", ""]
    oled_show_current_view()
    return memoryview(buf)[:idx]
```

```python
def make_wav(pcm_bytes, sample_rate=SAMPLE_RATE, num_channels=1, bits_per_sample=16):
    # Build a standard 44-byte PCM WAV header and prepend it to the samples
    byte_rate = sample_rate * num_channels * bits_per_sample // 8
    block_align = num_channels * bits_per_sample // 8
    subchunk2_size = len(pcm_bytes)
    chunk_size = 36 + subchunk2_size
    header = struct.pack(
        "<4sI4s4sIHHIIHH4sI",
        b"RIFF", chunk_size, b"WAVE", b"fmt ", 16, 1, num_channels,
        sample_rate, byte_rate, block_align, bits_per_sample, b"data", subchunk2_size,
    )
    print("WAV built, size:", len(header) + len(pcm_bytes))
    return header + pcm_bytes


def whisper_stt(wav_bytes):
    # single quick attempt, no retries
    headers = {"Authorization": "Bearer " + HF_TOKEN, "Content-Type": "audio/wav"}
    print("STT: sending to Whisper, len:", len(wav_bytes))
    all_lines[:] = ["Sending to STT", "", "", ""]
    oled_show_current_view()
    try:
        r = requests.post(WHISPER_URL, headers=headers, data=wav_bytes)
        print("STT HTTP status:", r.status_code)
        txt = r.text
        print("STT raw response:", txt)
        transcript = None
        try:
            js = ujson.loads(txt)
            # Accept either a list of results or a single result object
            if isinstance(js, list) and js and isinstance(js[0], dict) and "text" in js[0]:
                transcript = js[0]["text"]
            elif isinstance(js, dict) and "text" in js:
                transcript = js["text"]
        except Exception as e:
            print("STT JSON error:", e)
        r.close()
        print("STT transcript:", transcript)
        return transcript
    except Exception as e:
        print("STT HTTP error:", e)
        return None


def limit_words(text, max_words):
    words = text.split()
    if len(words) > max_words:
        return " ".join(words[:max_words]) + "..."
    return text


def llm_answer(question_text):
    payload = {
        "model": LLM_MODEL,
        "messages": [
            {"role": "system", "content": "You are a helpful chatbot running on an ESP32 Nano voice assistant. Answer very concisely in 2-4 short sentences."},
            {"role": "user", "content": question_text}
        ],
        "max_tokens": 120,  # was 200
        "temperature": 0.6  # slightly lower
    }
    headers = {"Authorization": "Bearer " + HF_TOKEN, "Content-Type": "application/json"}
    print("LLM: sending question to Gemma:", question_text)
    all_lines[:] = ["Sending to LLM", "", "", ""]
    oled_show_current_view()
    try:
        r = requests.post(LLM_URL, headers=headers, data=ujson.dumps(payload))
        print("LLM HTTP status:", r.status_code)
        raw = r.text
        print("LLM raw response:", raw)
        answer = None
        try:
            js = ujson.loads(raw)
            if "choices" in js and js["choices"]:
                msg = js["choices"][0].get("message", {})
                content = msg.get("content", "")
                if content:
                    answer = limit_words(content, WORD_LIMIT)
        except Exception as e:
            print("LLM JSON error:", e)
        r.close()
        print("LLM final answer:", answer)
        return answer
    except Exception as e:
        print("LLM HTTP error:", e)
        return None
```

```python
def wait_scroll_mode():
    global scroll_offset, q_marquee_idx, current_mode
    last_scroll = btn_scroll.value()
    last_rec = btn_rec.value()
    max_offset = max(0, len(all_lines) - answer_start_idx - (LINES_ON_SCREEN - 1))
    last_anim = time.ticks_ms()
    last_marquee_step = time.ticks_ms()
    both_pressed_start = None
    timer_shown = False
    print("Entering scroll mode, max_offset:", max_offset)
    while True:
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        rec_val = btn_rec.value()
        scroll_val = btn_scroll.value()
        if rec_val == 0 and scroll_val == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
                continue
            elif elapsed >= 4000:
                activate_kill_switch()
                return
        else:
            # Reset the hold timer on release, as the other loops do
            both_pressed_start = None
            timer_shown = False
        # Redraw regularly and advance the question marquee
        if time.ticks_diff(now, last_anim) > 50:
            oled_show_current_view()
            last_anim = now
        if time.ticks_diff(now, last_marquee_step) > 250:
            if q_full:
                q_marquee_idx += 1
            last_marquee_step = now
        # Scroll button: move one line down, wrapping back to the top
        v_scroll = btn_scroll.value()
        if v_scroll == 0 and last_scroll == 1:
            scroll_offset += 1
            if scroll_offset > max_offset:
                scroll_offset = 0
            print("Scroll offset:", scroll_offset)
            oled_show_current_view()
        last_scroll = v_scroll
        # Record button: leave scroll mode once it is released
        v_rec = btn_rec.value()
        if v_rec == 0 and last_rec == 1:
            print("Exit scroll mode button pressed")
            while btn_rec.value() == 0:
                time.sleep_ms(10)
            break
        last_rec = v_rec
        time.sleep_ms(10)
```

```python
def ai_mode_loop():
    global current_mode
    print("AI mode started")
    wifi_connect()
    last_rec = 1
    last_scroll = 1
    both_pressed_start = None
    timer_shown = False
    while True:
        if is_in_cooldown():
            time.sleep_ms(50)
            continue
        now = time.ticks_ms()
        rec_val = btn_rec.value()
        scroll_val = btn_scroll.value()
        if rec_val == 0 and scroll_val == 0:
            if both_pressed_start is None:
                both_pressed_start = now
                timer_shown = False
            elapsed = time.ticks_diff(now, both_pressed_start)
            if elapsed > 2000 and elapsed < 4000:
                if not timer_shown or elapsed % 1000 < 100:
                    show_kill_timer(both_pressed_start)
                    timer_shown = True
                continue
            elif elapsed >= 4000:
                activate_kill_switch()
                return
        else:
            both_pressed_start = None
            timer_shown = False
        if rec_val == 0 and last_rec == 1:
            # Step 1: record audio
            pcm = record_while_button()
            if current_mode == "MENU":
                return
            if not pcm:
                all_lines[:] = ["No audio", "Hold BTN8", "to record", ""]
                oled_show_current_view()
                print("Loop: no audio, back to idle")
                time.sleep(0.6)  # shorter pause
                last_rec = rec_val
                continue
            # Step 2: speech to text
            wav = make_wav(pcm, sample_rate=SAMPLE_RATE)
            transcript = whisper_stt(wav)
            if current_mode == "MENU":
                return
            if not transcript:
                all_lines[:] = ["STT failed", "", "", ""]
                oled_show_current_view()
                print("Loop: STT failed, back to idle")
                time.sleep(0.6)
                last_rec = rec_val
                continue
            # Step 3: ask the language model
            answer = llm_answer(transcript)
            if current_mode == "MENU":
                return
            if not answer:
                all_lines[:] = ["LLM failed", "", "", ""]
                oled_show_current_view()
                print("Loop: LLM failed, back to idle")
                time.sleep(0.6)
                last_rec = rec_val
                continue
            # Step 4: show the answer and let the user scroll through it
            build_display_lines(transcript, answer)
            oled_show_current_view()
            wait_scroll_mode()
            if current_mode == "MENU":
                return
            show_home()
            print("Loop: finished one Q&A cycle\n")
        last_rec = rec_val
        last_scroll = scroll_val
        time.sleep_ms(40)  # was 50
```

```python
def main():
    global current_mode
    print("Boot: starting multi-mode assistant")
    mode = startup_splash()
    print("After splash, mode:", mode)
    mode = show_info_page()
    print("After info, mode:", mode)
    current_mode = "MENU"
    show_menu()
    # Dispatch to whichever mode is currently selected
    while True:
        if current_mode == "MENU":
            menu_loop()
        elif current_mode == "PONG":
            pong_game()
            if current_mode == "MENU":
                show_menu()
        elif current_mode == "AI":
            ai_mode_loop()
            if current_mode == "MENU":
                show_menu()
        elif current_mode == "HOME":
            show_home()
            time.sleep(2)
            current_mode = "MENU"
            show_menu()
        time.sleep_ms(100)


main()
```
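One thing to watch in `record_while_button`: each pass through the sampling loop sleeps for a full sample period and also spends time reading the ADC and the buttons, so the achieved sample rate is probably lower than `SAMPLE_RATE`. If transcripts come back garbled, a possible tweak is to estimate the real rate from the byte count and duration that the function already prints, and pass that value to `make_wav`. This is a sketch under that assumption; `effective_sample_rate` is a hypothetical helper and not part of the original code.

```python
def effective_sample_rate(num_bytes, duration_ms, bytes_per_sample=2):
    """Estimate the achieved sample rate in Hz from a finished recording."""
    if duration_ms <= 0:
        raise ValueError("duration must be positive")
    samples = num_bytes // bytes_per_sample
    return samples * 1000 // duration_ms


# Example: 48,000 bytes (24,000 samples) captured over 3 seconds
rate = effective_sample_rate(48000, 3000)
print(rate)
```

Writing the measured rate into the WAV header keeps playback speed and pitch closer to what was actually spoken.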
