Nearly a fifth of all active-duty police officers suffer from PTSD, but many aren’t getting the help they need.

The Problem

Some 216,000 officers suffer from PTSD or some other form of emotional stress, says Ron Clark, a retired Connecticut state trooper who now runs Badge of Life, a group that studies PTSD among police.

Early detection of mental health problems and anxiety can be tricky, as the signals are often buried. Many police officers with PTSD don’t even know they have a problem; they remain on the street, doing their job, often without help or support.

Our Product

We’ve built Captain, a system for automatically detecting mental health and anxiety challenges in first responders as early as possible. Our solution combines emotion detection on first responder video footage with audio signals to detect early signs of anxiety and PTSD.

In order to train our model to detect mental health signals, we need real-time video footage of officers. How do we get that? We built a video reporting tool, integrated with Alexa, that guides an officer through the process of reporting an incident.

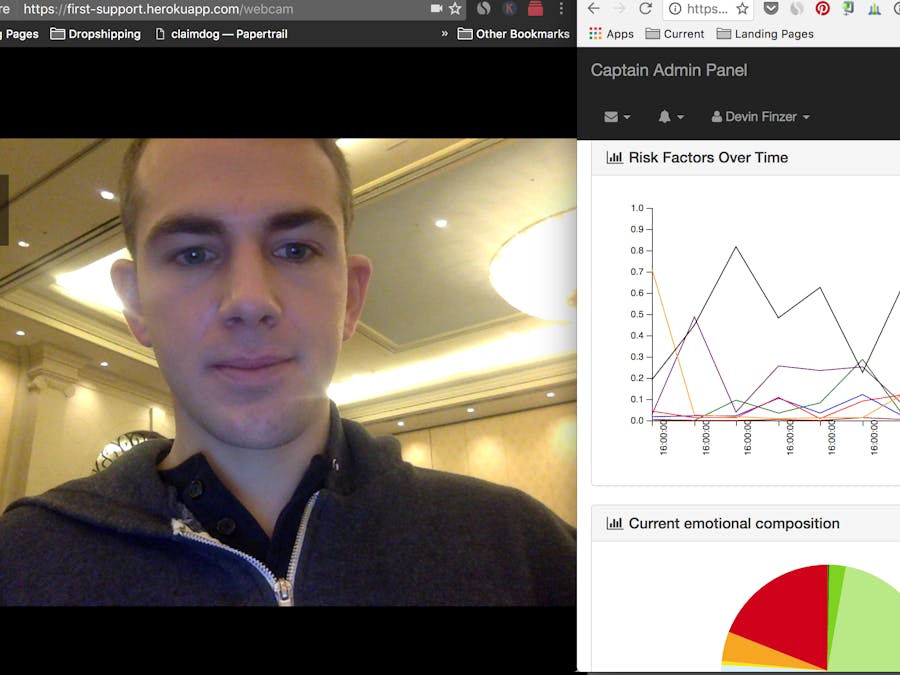

Let’s make that a little more concrete. In the video below, Devin, an officer with LVPD, is filing an incident report. He opens up our kiosk and is guided through the process of producing his report. Alexa then guides him through a follow-up questionnaire (stop by our table to see this in action!).

While Devin is reporting his incident, our servers are running an analysis in the background, leveraging the Eyeris emotion recognition web API. We combine these signals with textual data signals (using the Watson API) in order to build a model of stress or anxiety. We tested this model on a corpus of YouTube videos containing police report footage (such as this one).
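One way the per-frame emotion scores and the text signal could be combined is a simple weighted blend. The sketch below is illustrative only: the function name `stress_score`, the emotion labels, the 0 to 1 scales, and the 0.6 video weight are all assumptions rather than details of Captain's actual model, and it takes plain Python values as input instead of calling the Eyeris or Watson APIs.

```python
from statistics import mean

# Hypothetical label set: which emotion scores count as "negative" is an assumption.
NEGATIVE_EMOTIONS = ("anger", "fear", "sadness")


def stress_score(frame_emotions, text_negativity, video_weight=0.6):
    """Blend video and text signals into a single value between 0 and 1.

    frame_emotions: list of dicts mapping an emotion label to a score in [0, 1],
                    one dict per analysed video frame (assumed input shape).
    text_negativity: score in [0, 1] derived from the report text (assumed scale).
    video_weight: share of the final score taken from the video signal (assumed).
    """
    if not 0.0 <= text_negativity <= 1.0:
        raise ValueError("text_negativity must be in [0, 1]")
    if not 0.0 <= video_weight <= 1.0:
        raise ValueError("video_weight must be in [0, 1]")
    if not frame_emotions:
        # No usable video frames: fall back to the text signal alone.
        return text_negativity

    # For each frame, take the strongest negative emotion, then average over frames.
    per_frame = [
        max(frame.get(label, 0.0) for label in NEGATIVE_EMOTIONS)
        for frame in frame_emotions
    ]
    video_negativity = mean(per_frame)

    return video_weight * video_negativity + (1.0 - video_weight) * text_negativity


if __name__ == "__main__":
    frames = [{"anger": 0.2, "fear": 0.8}, {"sadness": 0.4}]
    print(round(stress_score(frames, text_negativity=0.5), 2))
```

A linear blend keeps the score easy to explain to a supervisor; a trained classifier over the same inputs would be a natural next step once labelled footage is available.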

By using computer vision to generate new signals and algorithms, we can both provide insights into the mental health of a first responder and follow up in real time, with Alexa using the appropriate verbal cues. When Devin is finished with the main part of his report, Alexa guides him through follow-ups, taking into account the sentiment analysis performed on the video. He completes his report.

Admin dashboard for police departments

But how is this data surfaced? An administrative dashboard allows appropriate supervisors and analysts to view data relating to the mental health and emotional stability of their officers. A single month of data is just a starting point: we need long-term data to provide appropriate support, so it is important that the police department can see reports on each officer’s status over time.
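As a rough illustration of how per-report scores could be rolled up for a view over time, the sketch below groups scores by officer and calendar month and averages them. The function name `monthly_averages`, the `(officer_id, date, score)` record shape, and the choice of a monthly mean are assumptions made for the example, not a description of the actual dashboard.

```python
from collections import defaultdict
from datetime import date


def monthly_averages(reports):
    """Average report scores per officer per calendar month.

    reports: iterable of (officer_id, report_date, score) tuples (assumed shape).
    Returns {officer_id: {(year, month): average_score}} with months in date order.
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for officer_id, report_date, score in reports:
        buckets[officer_id][(report_date.year, report_date.month)].append(score)

    return {
        officer_id: {
            month: sum(scores) / len(scores)
            for month, scores in sorted(months.items())
        }
        for officer_id, months in buckets.items()
    }


if __name__ == "__main__":
    # Hypothetical sample data for a single officer.
    sample = [
        ("officer-1", date(2017, 1, 3), 0.25),
        ("officer-1", date(2017, 1, 20), 0.75),
        ("officer-1", date(2017, 2, 1), 0.5),
    ]
    for officer, months in monthly_averages(sample).items():
        print(officer, months)
```

A supervisor-facing chart could plot these monthly values per officer, which makes a sustained upward trend easier to spot than any single report.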

To help us further visualize this data (and for the sake of a cool demo), we can also see this data in real time as the officer completes his report.

We expect to be able to sell this product as a platform to police departments, such as LVPD, and to municipalities, where it will enhance the safety and security of their officers and the citizens they serve. In addition, our solution has the potential to save a department the size of LVPD in excess of 1,200 man-hours per day.

We can talk all day about the features we can layer on top of this—getting data from body cameras, improvements to the stress algorithm using heart rate detection, smarter incident report auto-generation. But what made us most excited about this concept was a conversation with two officers who were on duty nearby this morning. They spoke passionately about how law enforcement is rapidly changing and becoming more aware of these issues. As they told us, “This is a solution that could save careers as well as lives.”
