Last year (2020) showed us that we are vulnerable to more things than we had thought: not just new diseases that can suddenly flourish, but also the social and mental consequences they carry. The clearest example, and the one that has affected us the most, is lockdown. According to many sources, mental health issues related to lockdown have skyrocketed during the Covid-19 pandemic, which is not surprising given that, since the beginning of the digital era, we had not been used to staying at home for more than a few days. Anxiety, stress and fear are normal responses in uncertain times, all the more so when we may lose our jobs or people we care about. A large number of people around the globe have suffered from these problems, depending on vulnerability factors such as age, educational status, special needs, previous mental health conditions, being economically underprivileged, and having relatives quarantined due to infection or fear of infection. The longer we spend locked down, the more our mental health is compromised.

Proposed Solution

Drones are incredible machines, and many of the solutions they can provide are still to be discovered. In this project I propose what may be a new one: using drones to track people so they have a safer way to go out and enjoy a hike or a run outdoors. The idea is to register, to the centimeter, where people have walked and for how long, and upload that information to a maps application so others can check it and decide where it would be safe to go out for a breath of fresh air. This would help lessen the stress and anxiety caused by lockdown at home.

You might be wondering why we do not just record our walks with the location on our phones. Sometimes you do not want to take your phone out of the house because you want to enjoy the view, the fresh air and so on without being distracted. In that case, you just tell the drone where you want to go and for how long, and that is it. The drone will use object detection and tracking algorithms, with the help of OpenCV, to follow you and upload your location to the maps application. The application has an interactive map layer that shows a 3D bubble of 4 meters around you, which helps others plan precise movements when they decide to go out. Afterwards, someone else can open the app, check which streets or hiking trails have the least activity or danger, and pick that place to go out and relax a little.

This solution might even help people infected with Covid-19 choose a safer place to go out for a hike or a breath of fresh air without compromising anyone else, because they would be advertising their movements. The point is that everyone deserves mental relief under any conditions. If they need fresh air to ease their mental health, they should have the chance to get it while taking care of everyone else. Of course, not all people infected with Covid-19 have the energy or the possibility to go out for a while, but the app could be used by those who have not become critical or who are close to overcoming the infection.

How is it integrated and built

Lessons Learned from previous HoverGames contest

One of the main problems I faced during the previous HoverGames contest was that the video from the camera was disturbed by the vibrations of the drone once it was flying. You can check the video here:

Secondly, the camera I used was a Logitech C920, which is pretty heavy for the drone. The latter problem is nicely resolved by the Coral Camera provided with the Navq board. For the former, I took a different approach: printing a base mechanism that supports dampers and holds both the Navq board and the Coral Camera, saving space for the flight controller at the center of the drone. All the pieces were designed by me and 3D printed with a Creality Ender 3. You can check them on Thingiverse. The link is provided on the attachments tab.

It is worth mentioning that I also designed and printed supports for other hardware parts, such as the telemetry radio and the Lidar, which we will see later.

At the beginning I planned to use two cameras attached to the base. However, the second camera would have needed another board to handle it, such as a Raspberry Pi or a Jetson Nano, increasing the drone's weight. In the end I decided that the Coral Camera would be enough for the application, reducing the number of camera supports to one.

The camera support is attached with four M3 screws.

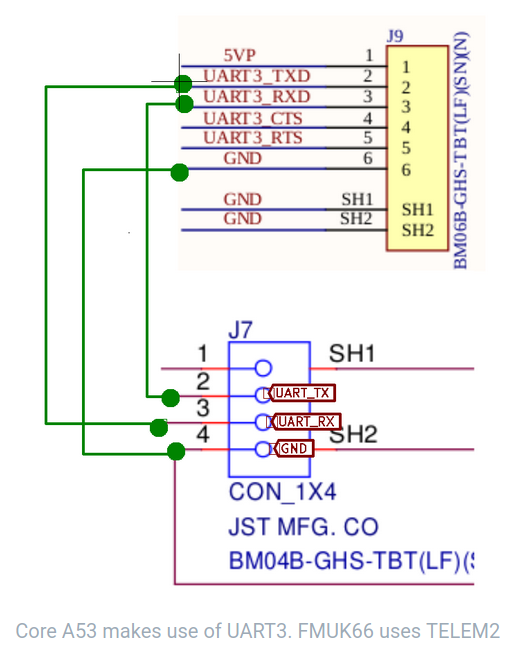

This approach gave me some advantages. One concerns weight balance: I installed a Lidar sensor on the other side of the tubes, and since the whole mechanism (base, Navq board and Coral Camera) is not too heavy, the drone does not tilt to one side as happened to me in the previous HoverGames contest. Another advantage is that the TELEM2 port of the FMUK66 is closer to the UART port of the Navq, making the connection pretty easy.

Why did I decide to use a state machine running on PX4? Well, the idea is this:

The drone battery powers the FMUK66, the Navq board, the motors and sensors such as the Lidar, the GPS module, the telemetry radio and, through the Navq, the Coral camera, not to mention the power needed to run object detection and tracking while powering the camera. That is a lot of power to supply. Hence I decided to relieve the Navq of camera handling, and the system in general, with a module running a state machine on PX4 that uses information from uORB topics to decide when the camera should be running, taking photos or recording video. That way the camera only works while the drone is flying, depending of course on how we set the variables for it. The new hovergames message carries the state of the internal state machines (running in the hovergames_sm module) and sends it through MAVLink so the Navq knows what to do with the Coral camera module. It would also be possible to run the same state machine on the Navq board and avoid creating a new module in PX4; however, reading all the topics inside the FMUK66 is more efficient from a communication point of view.

The first step was to decide how the state machine would work. We can check it out in the following UML diagram:

Then, add the enum values and the message information to the mavlink/v2.0/message_definitions/common.xml file. I had to use a message ID that was not already used by the protocol, and the same ID had to be used for both PX4 and MAVSDK message generation.

<enum name="STATE_MACHINE_MODE">

<description>Values defining the state of the main sm</description>

<entry value="0" name="STANDBY_STATE">

<description>first state at power up</description>

</entry>

<entry value="1" name="PREACTIVE_STATE">

<description>second state, before activation of the vision algorithm</description>

</entry>

<entry value="2" name="ACTIVE_STATE">

<description>state which activates the vision algorithms</description>

</entry>

<entry value="3" name="FAULTED_STATE">

<description>state when something goes wrong and camera stops working</description>

</entry>

</enum>

<enum name="ACTIVE_SUBSTATE">

<description>Inside active, camera can be recording or taking photos</description>

<entry value="0" name="RECORDING_STATE">

<description>Take and save video</description>

</entry>

<entry value="1" name="IMAGE_CAPTURE_STATE">

<description>Take photos</description>

</entry>

<entry value="2" name="PAUSED_STATE">

<description>Camera opened but not running algorithm</description>

</entry>

</enum>

The next entries go under the <messages> definitions:

<message id="12916" name="HOVER_GAMES_STATUS">

<description>Hovergames message for A53 camera handling</description>

<field type="uint32_t" name="time_boot_ms" units="ms">Timestamp (time since system boot).</field>

<field type="uint8_t" name="HoverGames_SM" enum="STATE_MACHINE_MODE" >Value of the main state machine</field>

<field type="uint8_t" name="HoverGames_ActiveSM" enum="ACTIVE_SUBSTATE" >Value of the Active state machine</field>

</message>

Once we have that, we can run mavgenerate to generate the headers and C message definitions:

Next, we will have some files generated. One of them contains the headers and struct definitions to use with MAVLink within Firmware/mavlink/include/mavlink/v2.0/common.

Hint: I set the output path outside the Firmware directory to avoid losing information already present. Then, just compare the two "common" folders (the generated one and the one from Firmware) and add what is pertinent to the new hovergames message.

Next, let's code the state machine within the Firmware directory. The state machine runs as a module because I want it to run periodically, handle some uORB subscriptions, and publish the new hovergames message through a uORB publisher.

- hovergames_sm.h:

Contains the class definition for the state machine module. It defines the struct typedefs that I use in the hovergames_sm.cpp file. Public functions include the constructor and destructor; task_spawn, which creates an instance of the module class; print_usage, which prints information about the module; Init, which sets the interval at which the module runs; and print_status, which prints whether the module is running and the current state of both state machines.

Private functions include parameters_update, which updates the information coming from the subscriptions to the distance sensor, battery status, vehicle status and actuator armed messages, and Run, which handles the state machine and which we will see in detail in the hovergames_sm.cpp section. In addition, I defined two private member variables of type failureValues and armingValues, which are used by the state machine.

- hovergames_sm.cpp:

The class constructor HoverGamesSM initializes both state machines: Standby for the global state machine and Paused for the active state machine. It also puts the module in the hp_default work queue configuration. In the Init function, we set the module to run every 1000 milliseconds. As mentioned earlier, the task_spawn function creates an instance of the module class and registers it to be executed.

The Run function executes the state machine. Let's explain the purpose of every state. The first global state is Standby: the drone is up and running, but we do not want to execute vision algorithms or initialize the camera system. Standby transitions to PreActive once the drone is armed. In PreActive, the camera starts running without taking photos or recording video. The transition from PreActive to Active happens if the drone is still armed and the battery is healthy (more than 12 volts and more than 20% remaining). Active is where the magic happens. It has three substates: Recording, ImageCapture and Paused. The substate is Paused whenever the global state is Standby, PreActive or Faulted, as we do not want to do anything with the camera in those states. Recording and ImageCapture depend on the distance from the drone to the ground. The substate is ImageCapture whenever we are between 20 and 34 meters above the ground. From 5 to 20 meters, inclusive, the substate is Recording, because from that height it is easier for the detection algorithm to recognize human pose. Tracking also takes place in this substate. Paused is the default value, and it applies whenever we are flying at less than 5 meters.
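The altitude thresholds above can be sketched as a small pure function. This is only an illustration, not the code from hovergames_sm.cpp: the name substate_for_altitude is hypothetical, and the handling of exactly 34 m and of anything above it is an assumption, since the text does not specify it.

```cpp
#include <cassert>
#include <cstdint>

// Values mirror the ACTIVE_SUBSTATE enum from the message definition.
enum class ActiveSubstate : std::uint8_t {
    Recording = 0,
    ImageCapture = 1,
    Paused = 2,
};

// Map the distance to ground (meters) to a camera substate.
// Assumption: 34 m is inclusive and anything above it falls back to Paused.
inline ActiveSubstate substate_for_altitude(float distance_m)
{
    if (distance_m >= 5.0f && distance_m <= 20.0f) {
        return ActiveSubstate::Recording;     // close enough for pose detection and tracking
    }
    if (distance_m > 20.0f && distance_m <= 34.0f) {
        return ActiveSubstate::ImageCapture;  // too high for tracking, take photos instead
    }
    return ActiveSubstate::Paused;            // below 5 m, above 34 m, or an invalid reading (NaN)
}
```

Keeping the mapping free of side effects makes it easy to check the boundaries (5 m and 20 m) without flying the drone.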

The Faulted state can be reached from any other global state. It tells us that the drone is running out of power or that something has gone wrong. In that case, the module should inform the Navq board that the camera must be shut down and that the Navq should go to sleep, in order to protect the data and prevent damage to both hardware parts.
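A minimal sketch of the global transitions described so far could look like the following. The Inputs struct, the failure flag and the function names are hypothetical stand-ins for the values read from the uORB subscriptions. The behavior on disarm (back to Standby) and the latching of Faulted are assumptions, because the text does not describe them.

```cpp
#include <cassert>
#include <cstdint>

// Values mirror the STATE_MACHINE_MODE enum from the message definition.
enum class MainState : std::uint8_t { Standby = 0, PreActive = 1, Active = 2, Faulted = 3 };

// Hypothetical snapshot of what the module reads from its subscriptions.
struct Inputs {
    bool armed;
    float battery_v;          // battery voltage in volts
    float battery_remaining;  // remaining charge, 0.0 to 1.0
    bool failure;             // assumed flag: low power or any detected fault
};

inline bool battery_healthy(const Inputs &in)
{
    return in.battery_v > 12.0f && in.battery_remaining > 0.20f;
}

// Compute the next global state from the current one and the latest inputs.
inline MainState next_state(MainState current, const Inputs &in)
{
    if (in.failure) {
        return MainState::Faulted;  // reachable from any other state
    }
    switch (current) {
    case MainState::Standby:
        return in.armed ? MainState::PreActive : MainState::Standby;
    case MainState::PreActive:
        if (!in.armed) {
            return MainState::Standby;  // assumption: disarming returns to Standby
        }
        return battery_healthy(in) ? MainState::Active : MainState::PreActive;
    case MainState::Active:
        return in.armed ? MainState::Active : MainState::Standby;  // assumption
    case MainState::Faulted:
        return MainState::Faulted;  // assumption: latched until reboot
    }
    return MainState::Faulted;
}
```

Calling next_state once per run (every 1000 ms in this project) and publishing the result keeps the decision logic in one place.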

At the end of every run, the hovergames advertiser is updated to send the latest values through MAVLink.

Before every run of the state machine, the parameters_update function is called to update the subscription values. The destructor safely deletes both counters used by the module. The print_usage function prints information about the module.

There are additional steps to perform before the module runs successfully on real hardware. The first is the CMake module file, which is pretty straightforward (depicted below): I just needed to add the dependencies and specify the source files, the module name and the main function name. The second is the boards/nxp/fmuk66-v3/default.cmake file, where I added the name of the module so it is built during compilation (the same step has to be performed in boards/px4/sitl/default.cmake to execute it on SITL). Finally, in ROMFS/px4fmu_common/init.d/rcS I added the hovergames_sm start command to run the module at startup (this step goes in ROMFS/px4fmu_common/init.d-posix/rcS for SITL).

The new message must also be handled by the mavlink module in the PX4 firmware so that other systems, such as an off-board computer, can read it.

First, I created a file called HOVERGAMES_STATUS.hpp under src/modules/mavlink/streams, which contains a class definition to handle the message stream. The most important function is the protected send function. It fills a mavlink_hover_games_status_t structure, defined in the message definition file generated earlier with mavgenerate, with the data coming from a hovergames subscription and then sends it through the MAVLink protocol.

Secondly, I had to add three lines to the mavlink_messages.cpp file, which add a hovergames stream to the mavlink module.

Finally, I just needed to add a line of code to configure the hovergames message stream in src/modules/mavlink/mavlink_main.cpp.

I also added the configure_stream_local line to the CONFIG mode.

Real Hardware (Navq board) using MAVSDK telemetry plugin:

Note: The configuration of MAVSDK telemetry plugin to read custom messages is shown in the 3rd step

Note: Both videos include a description that explains what is happening

SITL demo also using MAVSDK telemetry plugin:

One thing I realized could perturb the state machine is the reading from the distance sensor. Even though the physical connection of the sensor includes a capacitor to reduce noise, the signal is not completely free of outliers. Therefore, applying a digital filter is an appropriate way to handle it.

- Hardware connection

The connection is quite straightforward: Vcc to Vcc, ground to ground, FMU SCL (2) to Lidar SCL (4), FMU SDA (3) to Lidar SDA (3), and a 680 µF capacitor between Vcc and GND.

A video is provided at the end to show how it is physically connected

- Filter code

For the implementation, I first assessed two types of filters, floating point and integer. I used Qt Creator to assess the filters visually.

Mathematical details are out of the scope of this project guide. They are going to be added to my personal Gitbook for specific notes.

Floating point filter:

Built as a template class called filter. It has three public functions: the filter constructor, which initializes the values of an array; new_sample, which executes the filter; and get_result, which returns the filtered value. The FIR algorithm is pretty simple: each output is the sum of the products of an array of weights and a buffer of stored input values. The order of the filter shown below is 17, which means it has 17 weight values and the buffer is of size 17 as well.
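As a rough illustration of that multiply-and-accumulate step, here is a minimal sketch of such a class. Only the three member names (the constructor, new_sample and get_result) come from the description above. The template parameters, the member variables and the use of std::array are assumptions made for this example and may differ from the project's code.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Hypothetical re-creation of the described class, for illustration only.
template <typename T, std::size_t N>
class filter {
    static_assert(N > 0, "the filter needs at least one weight");

public:
    // Store the weights and start with a zeroed sample buffer.
    explicit filter(const std::array<T, N> &weights) : _b(weights), _x{}, _y{} {}

    // Shift the buffer, put the newest sample at index 0 and compute
    // y = b[0]*x[0] + b[1]*x[1] + ... + b[N-1]*x[N-1].
    void new_sample(T sample)
    {
        for (std::size_t k = N - 1; k > 0; --k) {
            _x[k] = _x[k - 1];
        }
        _x[0] = sample;

        T acc{};
        for (std::size_t k = 0; k < N; ++k) {
            acc += _b[k] * _x[k];
        }
        _y = acc;
    }

    // Return the most recent filtered value.
    T get_result() const { return _y; }

private:
    std::array<T, N> _b;  // weights (coefficients)
    std::array<T, N> _x;  // most recent N input samples, newest first
    T _y;                 // last output
};
```

With four equal weights of 0.25 the class behaves as a moving average: feeding the constant 4.0 four times makes get_result climb 1.0, 2.0, 3.0 and finally 4.0 as the buffer fills.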

Integer filter:

Managed through a template class called filter_order5 so it can handle different integer data types, from uint16 to uint64. It also has three public member functions, just like the previous class. The sample_t type name is the parent of the other type names. This time, the new_sample function takes five template parameters for the weight values (the number depends on the order of the filter to be implemented).
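One possible shape for such a class is sketched below. The names filter_order5, sample_t, new_sample and get_result come from the text. Everything else is an assumption for illustration, in particular the normalization by the sum of the weights, which is a common way to keep an integer filter at unity gain but is not confirmed by the write-up.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <type_traits>

// Hypothetical sketch of an integer FIR filter with five compile-time weights.
template <typename sample_t>
class filter_order5 {
    static_assert(std::is_unsigned<sample_t>::value, "sample_t must be an unsigned integer type");

public:
    filter_order5() : _x{}, _y{} {}

    // The five weights are template parameters, so they are fixed at compile time.
    template <sample_t b0, sample_t b1, sample_t b2, sample_t b3, sample_t b4>
    void new_sample(sample_t sample)
    {
        constexpr std::uint64_t sum = static_cast<std::uint64_t>(b0) + b1 + b2 + b3 + b4;
        static_assert(sum != 0, "at least one weight must be non-zero");

        // Shift the buffer and insert the newest sample at index 0.
        for (std::size_t k = 4; k > 0; --k) {
            _x[k] = _x[k - 1];
        }
        _x[0] = sample;

        // Accumulate in 64 bits; very large uint64 inputs could still overflow.
        const std::uint64_t acc = static_cast<std::uint64_t>(b0) * _x[0]
                                + static_cast<std::uint64_t>(b1) * _x[1]
                                + static_cast<std::uint64_t>(b2) * _x[2]
                                + static_cast<std::uint64_t>(b3) * _x[3]
                                + static_cast<std::uint64_t>(b4) * _x[4];

        // Assumption: divide by the weight sum so a constant input maps to itself.
        _y = static_cast<sample_t>(acc / sum);
    }

    sample_t get_result() const { return _y; }

private:
    std::array<sample_t, 5> _x;  // most recent five samples, newest first
    sample_t _y;                 // last output
};
```

A call then looks like `f.new_sample<1, 2, 4, 2, 1>(reading);`, where the weight values shown are arbitrary examples rather than the ones used in the project.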

Code to assess the filters:

It is worth mentioning that, from the test runs of the filters, it seemed appropriate to implement a cascade of two filters. A cascade filter simply feeds the result of the first filter as the input to the second filter.
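To make the cascade idea concrete, here is a small self-contained sketch. Fir3 and Cascade are hypothetical helper classes written for this example (a 3-tap stage instead of the 17 weights used in the project), so that the chaining is easy to follow.

```cpp
#include <array>
#include <cassert>

// Minimal 3-tap FIR stage, used only to illustrate cascading.
class Fir3 {
public:
    explicit Fir3(const std::array<float, 3> &weights) : _b(weights), _x{} {}

    // Insert the newest sample and return the weighted sum of the last three.
    float step(float input)
    {
        _x[2] = _x[1];
        _x[1] = _x[0];
        _x[0] = input;
        return _b[0] * _x[0] + _b[1] * _x[1] + _b[2] * _x[2];
    }

private:
    std::array<float, 3> _b;  // weights
    std::array<float, 3> _x;  // last three inputs, newest first
};

// Two stages in series: the output of the first is the input of the second.
class Cascade {
public:
    Cascade(const Fir3 &first, const Fir3 &second) : _first(first), _second(second) {}

    float step(float input) { return _second.step(_first.step(input)); }

private:
    Fir3 _first;
    Fir3 _second;
};
```

The second stage smooths what is left after the first one, at the cost of extra delay: with two 3-tap stages the output needs five samples to settle on a constant input.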

Normal Signal vs Floating point digital filter (one instance and cascade)

Normal vs Integer digital filter (one instance and cascade):

Normal signal vs Floating point cascade filter of order 17 vs Integer cascade filter order 5:

Digging into the PX4 distance sensor source code, I realized that the filter that best matches the LidarLite implementation is the floating point digital filter, mainly because the driver uses floating point values when the reading is converted to meters.

So, the digital filter was implemented in the PX4 distance sensor driver as follows. First, I created a filter.hpp file where the class fpfilter is implemented. It is worth mentioning that, instead of the standard template library from the C/C++ compilers, matrix/Matrix.hpp is used to handle vector and matrix types.

The class implementation follows the same structure as the Qt code explained previously.

In LidarLiteI2C.h the following lines were added. They declare the b (weight) vectors of the two filters (as I am using a cascade filter implementation) as member variables of the LidarLiteI2C class, along with the proper header include line (#include "filter.hpp"). The private function initialize_filters initializes the b and b1 vectors.

In LidarLiteI2C.cpp, the initialize_filters function is called from the constructor of the LidarLiteI2C class. It sets the data of the b and b1 vectors and gives the filters an initial sample to internally initialize the values vector.

Then, the magic occurs in the collect function. The new sample is read from the Lidar sensor with the lidar_transfer function and, after some validations, converted to meters. The first filter object is then applied with distance_m as its input, followed by the filter_cascade object, each having its own weight (b) values. Finally, the variable distance_filtered is the one passed to the _px4_rangefinder.update function.

I did not initialize the b and b1 vectors with arbitrary data because, for this filter, the newest sample should be multiplied by the highest coefficient.

Results:

The next video shows how the current distance value behaves before and after the digital filter:

Step 3. Edit MAVSDK telemetry source code so that we can read custom messages and vehicle GPS.

I am using the MAVSDK APIs to communicate between the Navq and the NXP FMU board, as I think it is a robust and stable SDK. First of all, the same steps done previously for PX4 must be repeated to generate the hovergames message files. But instead of using the mavlink folder from PX4, the new input should be the XML under /MAVSDK/src/third_party/mavlink/include/mavlink/v2.0/message_definitions/common.xml.

After I generated the header files, I edited 4 files:

- telemetry.h

Highlighted code shows my updates to the telemetry plugin: I added a structure that holds the same information as the MAVLink generated header, a callback of type std::function that runs every time HoverGamesStatus is called (it is called internally), and the declaration of the set_rate functions, which set the rate at which I want to listen to the hovergames topic.

- telemetry.cpp

Contains the implementations of the above declarations. Overloaded friend functions act as stream output operators for the plugin.

- telemetry_impl.h

Declarations of the functions and the mutex that handle the call to the process_HovergamesStatus function.

- telemetry_impl.cpp

This is the most important implementation. These definitions determine what happens every time a hovergames message is received. process_HoverGamesStatus is where the magic occurs: it manages the incoming data from the serial port, decodes the information if the message happens to be a hovergames MAVLink message, and puts it into hovergamesStatus_struct, which is of type Telemetry::HoverGamesStatus_s, defined previously. Then it uses a mutex to call HoverGamesStatus, which in turn calls the callback that we pass as a lambda function or any other callable from our executable.
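The callback-under-mutex pattern described here can be sketched in plain C++ without any MAVSDK types. StatusDispatcher, subscribe and process are hypothetical names for this example and are not part of the MAVSDK API. The struct fields reuse the names from the message definition.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <mutex>
#include <utility>

// Same fields as the HOVER_GAMES_STATUS message definition.
struct HoverGamesStatus {
    std::uint32_t time_boot_ms;
    std::uint8_t HoverGames_SM;
    std::uint8_t HoverGames_ActiveSM;
};

// Hypothetical stand-in for the plugin implementation class.
class StatusDispatcher {
public:
    using Callback = std::function<void(const HoverGamesStatus &)>;

    // Register the user callback (a lambda or any other callable).
    void subscribe(Callback callback)
    {
        std::lock_guard<std::mutex> lock(_mutex);
        _callback = std::move(callback);
    }

    // Called for every decoded message: store it and notify the subscriber.
    void process(const HoverGamesStatus &status)
    {
        Callback callback;
        {
            std::lock_guard<std::mutex> lock(_mutex);
            _last = status;
            callback = _callback;  // copy so the user code runs without the lock held
        }
        if (callback) {
            callback(status);
        }
    }

private:
    std::mutex _mutex;
    Callback _callback;
    HoverGamesStatus _last{};
};
```

Copying the callback before invoking it is a design choice of this sketch: it lets the user code call subscribe again from inside the callback without deadlocking.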

To be able to read GPS data from the MAVSDK telemetry plugin as well, I added a few lines of code in:

- telemetry.h

Previously, the structure only had definitions for the num_satellites and fix_type variables. I added the int32 variables Lat, Lon and Alt so we can also read them from the vehicle_gps_position topic. These variables will help us draw a line in the maps application that marks the path taken by a person.

- telemetry_impl.cpp

Here, we decode the information coming from the MAVLink message and assign it to the edited Telemetry::GpsInfo structure.
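Since the raw GPS fields are integers, they need scaling before they can be drawn on a map. The sketch below assumes the usual MAVLink GPS_RAW_INT convention (latitude and longitude in degrees multiplied by 1e7, altitude in millimeters). GpsFixDegrees and to_degrees are hypothetical names for this example.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical holder for the scaled values.
struct GpsFixDegrees {
    double latitude_deg;
    double longitude_deg;
    double altitude_m;
};

// Convert raw int32 GPS fields to degrees and meters.
// Assumption: lat/lon are in 1e-7 degrees and alt is in millimeters.
inline GpsFixDegrees to_degrees(std::int32_t lat_e7, std::int32_t lon_e7, std::int32_t alt_mm)
{
    return GpsFixDegrees{lat_e7 / 1e7, lon_e7 / 1e7, alt_mm / 1e3};
}
```

Doing the conversion in double precision matters here: a 32-bit float cannot resolve 1e-7 degrees, which corresponds to roughly one centimeter on the ground.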

A demo can be found in step 1, under the heading: "Demo for reading the new hovergames message, both in real hardware and SITL"

Step 5. Connect all sensors to the drone

Step 4. Set up Yocto Environment and OpenCV to code object detection and tracking algorithms

I have been working on a guide on how to perform this step and create a working cross-development setup for the Navq board.

You can find it under: https://hovergames.gitbook.io/hovergames2/

Integrating with the example: https://github.com/ARM-software/armnn/tree/branches/armnn_20_11/samples/ObjectDetection

Cross compilation was successful, but I still need to train a network to be able to run it on the target.

Step 5 MapBox SDK to handle applications with maps.

The Mapbox SDK will be used to consume the GPS position data coming from the FMUK66 and relayed by MAVSDK, so that Mapbox can update the GeoJSON line in the maps application and thereby track the real position of the person. We can obtain centimeter precision by integrating the videos recorded by the Coral Camera.

Following the example under: https://docs.mapbox.com/mapbox-gl-js/example/geojson-line/
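As a rough idea of the data the map needs, the following sketch turns a list of positions into a GeoJSON LineString feature. LatLon and to_geojson_line are hypothetical names for this example. The one detail taken from the GeoJSON specification is that each coordinate pair is written as longitude first, then latitude.

```cpp
#include <cassert>
#include <cstddef>
#include <iomanip>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical position type, in decimal degrees.
struct LatLon {
    double lat;
    double lon;
};

// Serialize a walked path as a GeoJSON Feature holding a LineString.
inline std::string to_geojson_line(const std::vector<LatLon> &path)
{
    std::ostringstream out;
    out << std::fixed << std::setprecision(7);  // 1e-7 degrees, matching the GPS resolution
    out << "{\"type\":\"Feature\",\"properties\":{},"
        << "\"geometry\":{\"type\":\"LineString\",\"coordinates\":[";
    for (std::size_t i = 0; i < path.size(); ++i) {
        if (i > 0) {
            out << ",";
        }
        // GeoJSON order is [longitude, latitude].
        out << "[" << path[i].lon << "," << path[i].lat << "]";
    }
    out << "]}}";
    return out.str();
}
```

A valid LineString needs at least two positions, so a caller would typically wait for the second GPS fix before sending the first line to the map.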

I chose this SDK because it is easy to integrate with Android Studio, given that I want to run the application on a mobile device.

Contributions and Collaboration

- NXP forum

One of my main ideas was to use the M4 core inside the Navq board to run another state machine, which could handle more things such as on-board diagnostics and inter-core communication between the M4 and the A53 to handle the MAVLink protocol under an RTOS. Running FreeRTOS programs on the M4 core was successful; however, I failed to interconnect the M4 core and the A53 running Linux. Here is a ticket I opened on that matter:

https://community.nxp.com/t5/NavQ-8MMNavQ-Discussion/Navq-RPMSG-for-M4-use/m-p/1149278

On the other hand, I also created two other tickets, which I was later able to resolve:

- HoverGames2 main discussion board:

Here are some posts where I have been actively helping others:

https://www.hackster.io/contests/hovergames2/discussion/posts/7680#challengeNav

https://www.hackster.io/contests/hovergames2/discussion/posts/7668#challengeNav

https://www.hackster.io/contests/hovergames2/discussion/posts/7641#challengeNav

https://www.hackster.io/contests/hovergames2/discussion/posts/7562#challengeNav

https://www.hackster.io/contests/hovergames2/discussion/posts/7490#challengeNav

https://www.hackster.io/contests/hovergames2/discussion/posts/7243#challengeNav
