Over the past decade, research and development of bionic prostheses has grown significantly because of their important role in improving the quality of life of post-stroke patients and amputees. According to Grand View Research [1], the global prosthetics and orthotics market was valued at US$6.11 billion in 2020 and is expected to grow at a compound annual growth rate of 4.2% from 2021 to 2028. For a person who has undergone an amputation, the control interface (i.e., the neural-machine interface) between the residual muscles and the prosthesis is critical to natural movement of the prosthesis. As shown in Fig. 1, electromyogram (EMG)-based control interfaces are widely used in medical applications and in some entertainment applications, such as prosthesis control, rehabilitation gloves, and alternative interaction methods for VR games. An EMG-based neural-machine interface measures the electrical activity produced when nerves stimulate a muscle, identifies the user's movement intention, and translates the recorded EMG signals into control signals that drive an external prosthesis.

Recently, deep learning has shown great potential to further improve the accuracy and robustness of EMG-based control interfaces, thanks to the large amount of EMG data collected from many users and to advances in learning algorithms and computing devices. However, a key impediment to the clinical deployment of deep learning is its high computational cost, since most control components are built on portable embedded systems with limited power and computation capability. In this project, we use a recent edge computing device, the Sony Spresense board, and develop real-time deep learning algorithms to improve the EMG-based neural-controlled bionic arm.

This project aims to deploy a deep neural network on the Sony Spresense microcontroller for real-time bionic arm control. We use a 2D Convolutional Neural Network (CNN) as our EMG pattern recognition algorithm, which is fine-tuned and compressed before deployment to the Sony Spresense. Our EMG data collection is based on the Myo Armband and an ESP32 board. The project has four major parts:

- EMG signals collection and preprocessing

- Offline CNN model training and fine-tuning

- On-device CNN model deployment and inference

- Real-time bionic arm control

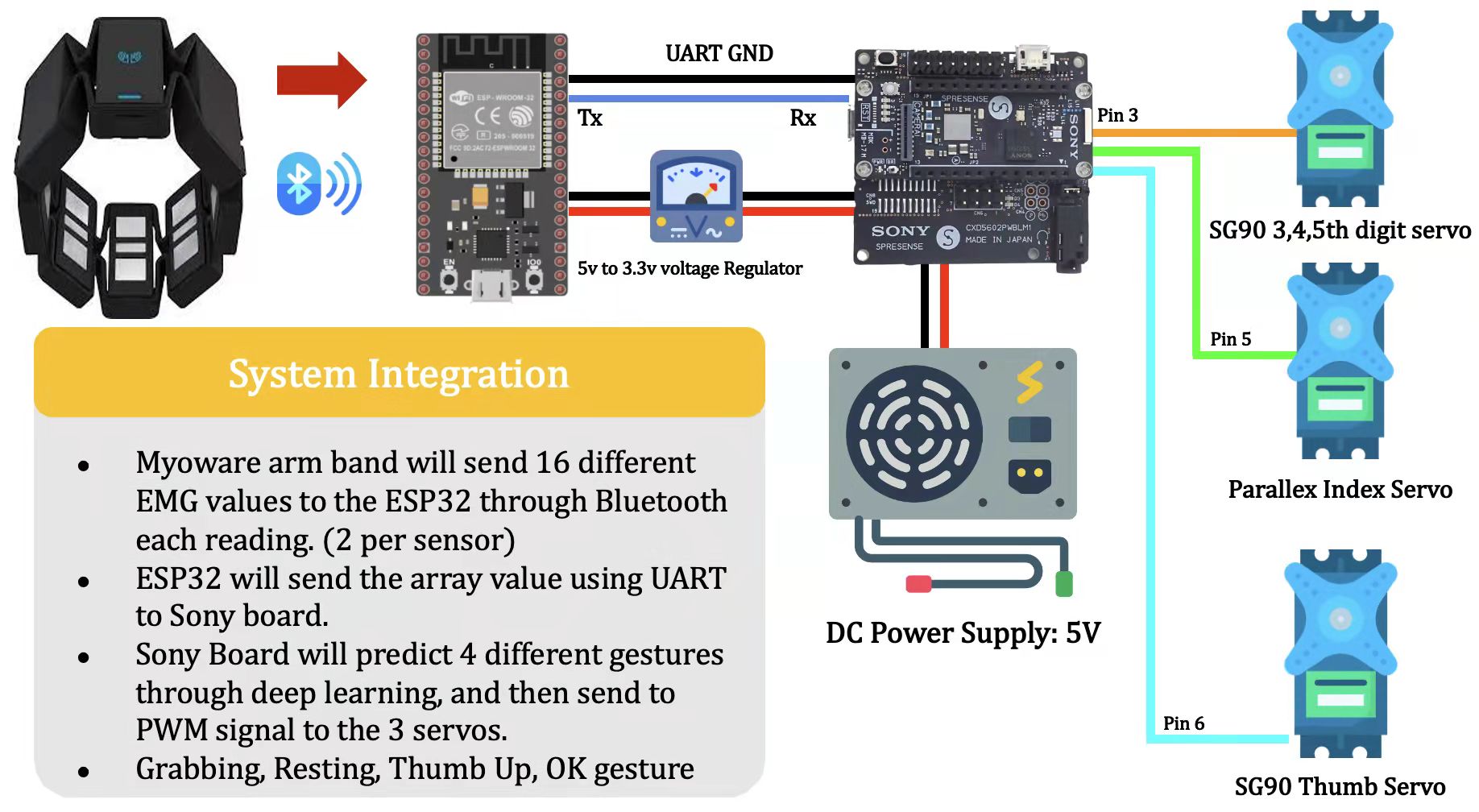

System Overview: Fig. 2 shows the system overview. First, the Myo Armband connects to the ESP32 over Bluetooth. Second, the Myo Armband acquires EMG data while the ESP32 simultaneously forwards the signals to the Sony Spresense over UART serial communication. Third, the Sony Spresense preprocesses the received EMG data and predicts gestures in real time. Finally, the predicted gestures are translated into PWM signals that control the bionic arm, whose three servos drive different fingers.

All the GitHub source code can be found at: https://github.com/MIC-Laboratory/Sony-Competition

The YouTube project demo video can be found at: https://youtu.be/r8Wh6ckIqSM

In the following sections, we describe each part in detail.

EMG Pattern Recognition: An EMG signal is a recording, taken at the surface of the skin, of the electrical signals generated during muscle contraction. EMG pattern recognition is the technical core of non-invasive neural-machine interface applications. As shown in Fig. 3, the 8-sensor Myo Armband is used to collect EMG signals from the skin. The collected signals can then be fed into either deep learning models (e.g., CNN) or traditional machine learning models (e.g., LDA, SVM) to perform pattern recognition, returning output probabilities over the different gestures. Deep learning models learn features directly from the data, whereas traditional machine learning requires feature engineering, such as computing the Mean Absolute Value (MAV) or Zero Crossings (ZC) of the EMG signals.
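To make the contrast with hand-engineered features concrete, here is a minimal sketch of the two features named above, computed for one channel of one window. The function names, the `threshold` parameter, and the sample values are illustrative assumptions and are not taken from the project's code.

```python
def mean_absolute_value(samples):
    """MAV: average of the absolute sample values in one channel window."""
    return sum(abs(s) for s in samples) / len(samples)


def zero_crossings(samples, threshold=0):
    """ZC: count sign changes between consecutive samples.

    `threshold` (hypothetical parameter) skips crossings whose amplitude
    step is too small, a common guard against counting noise. A sample
    that is exactly zero is not counted as a crossing here.
    """
    count = 0
    for prev, curr in zip(samples, samples[1:]):
        if prev * curr < 0 and abs(prev - curr) > threshold:
            count += 1
    return count


# Made-up signed EMG samples for a single channel.
window = [3, -2, -5, 4, 6, -1, 0, 2]
features = (mean_absolute_value(window), zero_crossings(window))
```

A traditional classifier such as LDA or SVM would receive a vector of features like these for every channel, while the CNN described here receives the raw window instead.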

In this project, we use a CNN model to learn features directly from raw EMG signals without any additional feature engineering. However, a deep learning model may introduce more computational overhead than traditional machine learning models. As shown in Fig. 3, the CNN model is usually pre-trained on high-performance GPUs, with heavy computation cost, large memory occupation, and high energy consumption, which makes it very challenging to deploy on low-power devices with limited computation resources. To achieve a real-time interface on the Sony Spresense, we use TensorFlow Lite to accelerate the CNN model.

EMG Data Preprocessing: As shown in Fig. 5, the Myo Armband has 8 sensors (channels), so each EMG array is 1 × 8 (one EMG sample per channel). To extract spatial-domain features from the raw EMG signal, we combine 32 EMG samples into an EMG window (8 × 32). During EMG signal collection, we overlap the windows with a step size of 16, so that each window begins with the final 16 samples of the previous window; this further incorporates time-domain features. In addition, each raw EMG value arrives as an 8-bit unsigned number in the range 0 to 255, so we convert it to a signed value by subtracting 256 whenever the value is greater than 127. Finally, we compute one mean and one standard deviation for each of the 8 channels from the EMG samples of the 7 gestures taken from the NinaPro DB5 dataset, and we standardize each EMG value by subtracting its channel mean and dividing by its channel standard deviation.
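The preprocessing steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the project's on-device code: the function names are hypothetical, the recording is synthetic, the standard deviation is the population form, and the statistics are computed from the synthetic data rather than from NinaPro DB5.

```python
WINDOW_SIZE = 32  # samples per window (from the text)
STEP_SIZE = 16    # hop between windows, so consecutive windows share 16 samples
NUM_CHANNELS = 8  # Myo Armband channels (from the text)


def to_signed(value):
    """Reinterpret an unsigned byte (0..255) as a signed value (-128..127)."""
    return value - 256 if value > 127 else value


def sliding_windows(samples, size=WINDOW_SIZE, step=STEP_SIZE):
    """Yield overlapping windows; each is a list of `size` 8-channel samples."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def channel_stats(samples):
    """Per-channel mean and population standard deviation (assumed form)."""
    n = len(samples)
    means = [sum(s[c] for s in samples) / n for c in range(NUM_CHANNELS)]
    stds = [
        (sum((s[c] - means[c]) ** 2 for s in samples) / n) ** 0.5
        for c in range(NUM_CHANNELS)
    ]
    return means, stds


def normalize(window, means, stds):
    """Standardize a window and lay it out as channels x samples (8 x 32)."""
    return [
        [(sample[c] - means[c]) / stds[c] for sample in window]
        for c in range(NUM_CHANNELS)
    ]


# Synthetic stand-in for a raw recording: 64 samples of 8 unsigned bytes each.
raw = [[(7 * t + 31 * c) % 256 for c in range(NUM_CHANNELS)] for t in range(64)]
signed = [[to_signed(v) for v in sample] for sample in raw]
# Assumption: stats come from this synthetic data; the text uses NinaPro DB5.
means, stds = channel_stats(signed)
windows = [normalize(w, means, stds) for w in sliding_windows(signed)]
```

With 64 samples, a window of 32, and a step of 16, the sketch produces three windows, and the second half of each window reappears as the first half of the next.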

Deep Neural Network Model and Training: As shown in Fig. 6, our CNN model consists of 2 convolutional layers followed by a fully-connected layer. The first convolutional layer has 32 filters and is followed by a PReLU activation function, batch normalization to accelerate model training, spatial 2D dropout to counter overfitting, and max pooling. The second convolutional layer is the same as the first except that it uses 64 filters instead of 32. The network ends with a fully-connected layer of N neurons, where N is the number of gestures to predict. We trained the model on 7 gestures from a large open-source EMG dataset, NinaPro DB5 [3]. We then fine-tuned it on real-time EMG signals collected from the Myo Armband, transferring what the model learned from the larger dataset to our more specific downstream data.
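To get a feel for how small this network is, the sketch below traces tensor shapes and counts convolution and dense weights through the two blocks. The text does not give kernel sizes, padding, or pooling sizes, so the 3 × 3 kernels, "same" padding, 2 × 2 pooling, and the 8 × 32 × 1 input layout are all assumptions; PReLU and batch-normalization parameters are left out of the count.

```python
def conv_block(shape, filters, kernel=3, pool=2):
    """Output shape and conv weight count for one block.

    Assumed block: conv ('same' padding) -> PReLU -> batch norm ->
    spatial dropout -> max pooling. Only the convolution changes the
    channel count and only the pooling changes the spatial size.
    """
    height, width, channels = shape
    weights = kernel * kernel * channels * filters + filters  # kernels + biases
    return (height // pool, width // pool, filters), weights


def trace(input_shape=(8, 32, 1), num_gestures=7):
    """Return the final feature-map shape, flattened size, and weight counts."""
    shape, w1 = conv_block(input_shape, filters=32)  # first block, 32 filters
    shape, w2 = conv_block(shape, filters=64)        # second block, 64 filters
    flat = shape[0] * shape[1] * shape[2]
    dense = flat * num_gestures + num_gestures       # N-neuron output layer
    return shape, flat, (w1, w2, dense)


final_shape, flat_size, weight_counts = trace()
```

Under these assumptions the two convolutions and the output layer together hold roughly 26,000 weights, which gives a rough sense of the memory budget before any compression.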

3D-Printed Bionic Arm: As shown in Fig. 7, our bionic arm is 3D-printed from an open-source community project called HACKberry, by the non-profit organization Mission ARM Japan [2].

The bionic arm uses three servos. One SG90 servo controls the middle, ring, and little fingers. The Parallax servo controls the index finger. A second SG90 servo controls the thumb. Within the scope of this project, we used the bionic arm to perform 4 gestures: Rest, Thumbs Up, Fist, and OK Sign.
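One way to picture the step from a predicted gesture to servo commands is a lookup table followed by an angle-to-pulse conversion. The sketch below is only illustrative: the open and closed angles, the finger poses chosen for each gesture, and the 1000 to 2000 microsecond pulse range are assumptions rather than values from the project, and the on-device code would be written for the Spresense rather than in Python.

```python
from enum import Enum


class Gesture(Enum):
    REST = 0
    THUMBS_UP = 1
    FIST = 2
    OK_SIGN = 3


OPEN, CLOSED = 0, 90  # hypothetical servo angles in degrees

# Tuple order: (middle/ring/little servo, index servo, thumb servo).
# The poses are an assumed reading of each gesture name.
SERVO_ANGLES = {
    Gesture.REST: (OPEN, OPEN, OPEN),
    Gesture.THUMBS_UP: (CLOSED, CLOSED, OPEN),
    Gesture.FIST: (CLOSED, CLOSED, CLOSED),
    Gesture.OK_SIGN: (OPEN, CLOSED, CLOSED),
}


def angle_to_pulse_us(angle, min_us=1000, max_us=2000):
    """Linearly map 0..180 degrees to a PWM pulse width in microseconds.

    The default pulse range is an assumed hobby-servo convention; real
    servos should be calibrated individually.
    """
    angle = max(0, min(180, angle))  # clamp out-of-range requests
    return min_us + (max_us - min_us) * angle / 180


def pulses_for(gesture):
    """Pulse widths for the three servos, in the SERVO_ANGLES tuple order."""
    return tuple(angle_to_pulse_us(a) for a in SERVO_ANGLES[gesture])
```

Keeping the poses in a table means a new gesture only needs one more entry, without touching the PWM conversion.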

Future Work: In this project, we successfully deployed the CNN model on the Sony Spresense board to achieve real-time bionic arm control. In the future, we plan to enhance the system in the following ways. First, the intermediary ESP32 adds delay; running Bluetooth directly on the Sony Spresense may reduce it. Second, we may improve the robustness of the model by using other architectures such as RNNs, and by augmenting noisy EMG data with GANs. Third, we can use higher-dimensional EMG sensors (for example, 192 channels instead of 8) to capture even more features. Finally, we may improve the user experience by supporting on-device training on the Sony Spresense.

References:

[1] Prosthetics & Orthotics Market Size Report, 2021-2028 (2022). Available at: https://www.grandviewresearch.com/industry-analysis/prosthetics-orthotics-market (Accessed: 7 August 2022).

[2] HACKberry | 3D-printable open-source bionic arm (2022). Available at: http://exiii-hackberry.com/ (Accessed: 7 August 2022).

[3] Atzori, M. and Müller, H., 2015, August. The Ninapro database: a resource for sEMG naturally controlled robotic hand prosthetics. In 2015 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC) (pp. 7151-7154). IEEE.
