In this tutorial we'll add a PiCamera to the AIY Voice Kit (v1.0). Then we'll set up the Vision API on Google Cloud and get a basic script working. In Part III, we'll enhance the user experience by editing the dialog and adding sound effects. There's a lot to do, so let's dive in!

Part I: Add the Camera

Add the Pi Camera

Thread the Pi Camera ribbon cable through the Voice HAT so that the pins are facing away from the USB ports as shown below. Gently lift both sides of the ribbon holder, insert the ribbon, and push the black plastic holder back down to hold the cable in place.

Reattach Voice HAT & Speaker

Reassemble the Box

Assembling the Voice Kit is easier if you set the Pi atop the cardboard insert with the header pins closest to the speaker. Fold the flap over the USB ports. If it fits easily, the orientation is correct. Set the speaker in its holder.

Position the Camera

As you can see, the camera module fits neatly between the two pieces of cardboard. (Note: the red LED is left over from the doorbell tutorial. Since we're not taking anyone's picture here, it's optional.)

Finish Assembly

Once you connect the arcade button and microphone, tuck the wires inside and close the lid. Your finished kit should look like this.

Finally, download the latest release of the AIY-Projects Kit and copy it to your SD card (I use Etcher for this task). Put the SD card back in the Pi and connect your kit following the AIY Project Site directions. Be sure to test the microphone and speaker by running any of the scripts (I use cloudspeech_demo.py).

Test the Camera

Take a picture and review it via the command line like this:

$ raspistill -rot 180 -o /home/pi/Pictures/testimg.jpg -w 640 -h 480

To view the picture from the command line, issue the following command:

$ DISPLAY=:0 gpicview /home/pi/Pictures/testimg.jpg

If the resulting image quality is sub-optimal, there are several things you can try. For example, turning off Auto White Balance (AWB) or increasing the brightness level can make a big difference. To learn all about the PiCamera, take a look at this: RaspiCam Reference. Congratulations, your Vision/Voice Kit is complete!
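If you'd rather drive the test capture from Python, here's a minimal sketch. It wraps the same raspistill command shown above and adds the white-balance (-awb) and brightness (-br) options as optional arguments. The function names are my own for illustration, and the capture step only works on a Pi with the camera enabled.

```python
import subprocess


def build_capture_command(output_path, width=640, height=480, rotation=180,
                          awb=None, brightness=None):
    """Return the raspistill argument list for a single still capture."""
    command = [
        "raspistill",
        "-rot", str(rotation),
        "-o", output_path,
        "-w", str(width),
        "-h", str(height),
    ]
    if awb is not None:
        command += ["-awb", awb]  # white balance mode, e.g. "off" or "auto"
    if brightness is not None:
        command += ["-br", str(brightness)]  # brightness from 0 to 100
    return command


def capture(output_path, **options):
    """Run raspistill with the given options; raises if the command fails."""
    subprocess.run(build_capture_command(output_path, **options), check=True)
```

For example, capture("/home/pi/Pictures/testimg.jpg", awb="off", brightness=60) takes the same test shot with AWB turned off and the brightness raised a little.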

Tuning for Speedier Capture

You may have noticed that it takes about 5 seconds to fire up the camera hardware and wait for the software to adjust to current light levels. To shorten the wait time, we'll edit the crontab file so the camera launches on startup and keeps running in the background. Issue this command:

$ crontab -e

Scroll to the bottom of the file and add the following:

@reboot raspistill -o /home/pi/AIY-projects-python/src/examples/voice/cloud-vision/aiyimage.jpg -s -t 0 -rot 180 -w 640 -h 480 --preview 500,90,640,480

This fires up the camera when the Pi boots and keeps it running along with the preview window. Each time we push the button on the AIY Kit, the camera takes a picture and saves it to the project folder as aiyimage.jpg. (IMPORTANT: the location of the image file is hard coded. Please edit the "file_name" variable, line 75 in whatisthat.py, as necessary.) As you can see in the video demo at the top of this tutorial, the crontab script positions a camera preview next to the terminal window. This really comes in handy for testing your project. Now that we have the box working, we need to set up cloud services.
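A note on how the background camera can be told to shoot: the -s flag puts raspistill in signal mode, where it waits for a SIGUSR1 signal before each capture. The sketch below shows one way to send that signal from Python. The function names are illustrative, and whether whatisthat.py triggers the camera in exactly this way is an assumption on my part.

```python
import os
import signal
import subprocess


def parse_pids(pgrep_output):
    """Turn the text printed by pgrep (one PID per line) into a list of ints."""
    return [int(token) for token in pgrep_output.split() if token.isdigit()]


def trigger_capture(process_name="raspistill"):
    """Send SIGUSR1 to every running process with the given name.

    Returns the number of processes signalled (0 if none are running).
    """
    result = subprocess.run(["pgrep", "-x", process_name],
                            stdout=subprocess.PIPE, universal_newlines=True)
    pids = parse_pids(result.stdout)
    for pid in pids:
        os.kill(pid, signal.SIGUSR1)  # raspistill -s captures on this signal
    return len(pids)
```

If trigger_capture() returns 0, the crontab entry probably did not start the camera, so check it before debugging anything else.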

Part II: Google Cloud Vision API

Turning on the Vision API is easy. Google have documented these 3 easy steps here: https://cloud.google.com/vision/docs/before-you-begin

1. Create a new project

2. Enable billing

3. Enable the Vision API within your account

From your cloud dashboard, make sure your new project name is listed in the top blue bar, and from the left-hand menu select: API & SERVICES > CREDENTIALS.

In the CREATE CREDENTIALS dropdown, select SERVICE ACCOUNT KEY, then select JSON as the key type. Download the JSON file and save it to your local machine. I renamed mine to "what-is-that.json" before moving it to the Pi at this path: "/home/pi/what-is-that.json"
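Before moving on, it can save time to confirm that the file you copied really is a service account key. Here's a minimal sketch that checks for a few fields such a key normally contains. The field list is a sanity check rather than a full validation, and the function names are my own.

```python
import json

# Fields a service account key downloaded as JSON normally contains.
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def missing_key_fields(key_data):
    """Return the required fields that are absent or empty in key_data."""
    return [field for field in REQUIRED_FIELDS if not key_data.get(field)]


def check_key_file(path):
    """Load a key file and return a list of problems (empty if it looks fine)."""
    with open(path) as handle:
        key_data = json.load(handle)
    problems = ["missing field: " + name for name in missing_key_fields(key_data)]
    if key_data.get("type") not in (None, "service_account"):
        problems.append("type is not 'service_account'")
    return problems
```

Calling check_key_file("/home/pi/what-is-that.json") should return an empty list if the download and copy went well.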

Install the Vision API Python Libraries

Leo White has done an excellent job of explaining how and why to do this important step. To quote his blog post from March 2018:

To utilize the Google Vision APIs we need to install the python libraries (as detailed at https://cloud.google.com/vision/docs/reference/libraries#client-libraries-install-python). However the Google application runs within a Python Virtual Environment to keep its selection of python libraries separate from any others installed on the Raspberry Pi. This means we have to take the extra step of entering the virtual environment before installation. This can easily be achieved by launching the 'Start dev terminal' shortcut from the Desktop, or by running '~/bin/AIY-projects-shell.sh' from a normal terminal (e.g. if connecting via SSH). Once inside the Virtual environment run the following to install the libraries.

pip3 install google-cloud-vision
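To confirm the install landed in the right place, you can run a quick check from the same dev terminal. This is a small sketch with illustrative function names. The virtual environment test is best-effort, since different tools mark an active environment in different ways.

```python
import importlib
import sys


def in_virtualenv():
    """Best-effort check for an active virtual environment."""
    # Older virtualenv releases set sys.real_prefix; venv changes sys.prefix.
    base_prefix = getattr(sys, "base_prefix", sys.prefix)
    return hasattr(sys, "real_prefix") or sys.prefix != base_prefix


def can_import(module_name):
    """Return True if module_name imports cleanly in this interpreter."""
    try:
        importlib.import_module(module_name)
    except ImportError:
        return False
    return True
```

If can_import("google.cloud.vision") returns False while in_virtualenv() is also False, the library was most likely installed outside the AIY environment.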

Get My Updated Scripts

I've updated Leo's scripts to work with the latest AIY Raspbian Image. You can get them both from my repo: https://github.com/LizMyers/AIY-projects > 02_AIY_VisionAPI. I've saved them to this directory: /home/pi/AIY-projects-python/src/examples/voice/cloud-vision/.

Before running cloudspeech_whatisthat.py, we need to stop any services that are already running. For example, each time my Pi boots, a service called assistant_grpc_demo starts up. To stop it, I issue the following command.

1. Stop any active services (assistant_grpc_demo)

$ sudo service assistant_grpc_demo stop

2. Export credentials - next we need to export the JSON credentials we created earlier so that we can talk to the Vision API.

export GOOGLE_APPLICATION_CREDENTIALS="/home/pi/what-is-that.json"

3. Run cloudspeech_whatisthat.py - navigate to the directory where you saved the scripts (in my case, "AIY-projects-python/src/examples/voice/cloud-vision/") and run the script.

$ ./cloudspeech_whatisthat.py

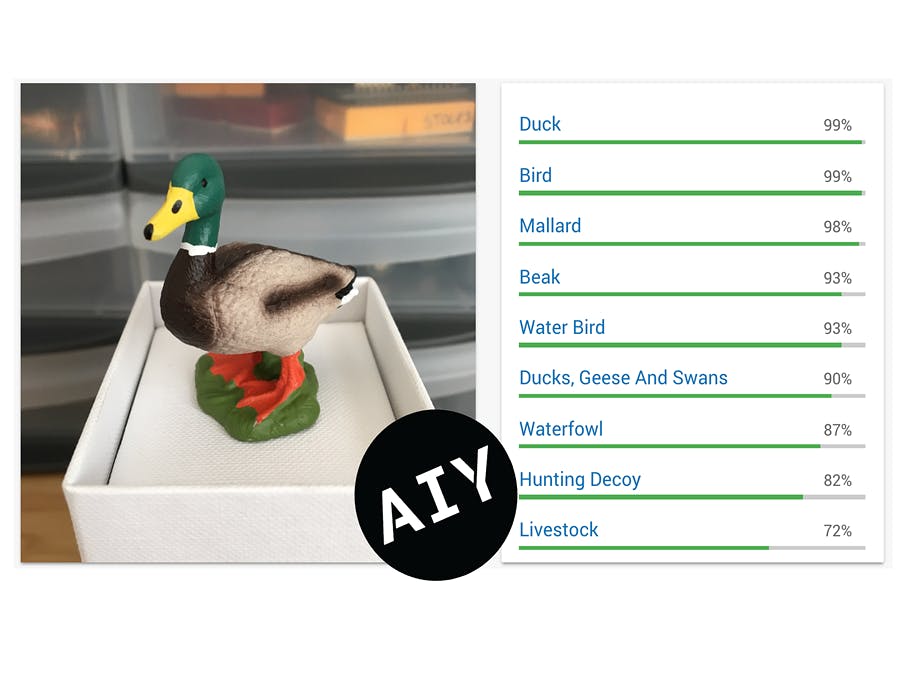
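If the script complains about credentials, keep in mind that export only lasts for the current shell session, so it has to be repeated in every new terminal. Here's a minimal pre-flight check, with an illustrative function name, that reports whether the variable is set and points at a real file.

```python
import os


def credentials_problem(environ=os.environ):
    """Return a message describing what is wrong, or None if all looks fine."""
    path = environ.get("GOOGLE_APPLICATION_CREDENTIALS")
    if not path:
        return "GOOGLE_APPLICATION_CREDENTIALS is not set"
    if not os.path.isfile(path):
        return "key file not found: " + path
    return None
```

Run credentials_problem() in the same terminal you use to launch the script. A result of None means the export from step 2 is in effect.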

Now point the camera at an everyday object, like a coffee cup, dinner fork, banana, or beverage. Push the button and say "what is that?". The system should come back with a list of words. I've included some sample_images on GitHub. You can also open these photos on your computer, point the Pi at the screen, and ask: "what is that?", "whose logo is that?", or "can you read that?".

Alternatively, you can drag the animal photos into this web page. If you're curious about using this data in your own applications, check out the JSON tab.
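To give a feel for what using this data in your own application looks like, here's a minimal sketch of a label request in Python. It assumes a recent google-cloud-vision release (2.x or later), where the image type is vision.Image. Older releases expose it as vision.types.Image instead. The function names are illustrative and this is not the code from cloudspeech_whatisthat.py.

```python
def format_labels(labels):
    """Format (description, score) pairs as a short summary string."""
    return ", ".join("{} ({:.0%})".format(name, score) for name, score in labels)


def detect_labels(image_path):
    """Send one image to the Vision API and return (description, score) pairs.

    Needs network access, GOOGLE_APPLICATION_CREDENTIALS, and the
    google-cloud-vision package (2.x or later is assumed here).
    """
    # Imported here so format_labels still works without the library installed.
    from google.cloud import vision

    client = vision.ImageAnnotatorClient()
    with open(image_path, "rb") as handle:
        image = vision.Image(content=handle.read())
    response = client.label_detection(image=image)
    return [(label.description, label.score)
            for label in response.label_annotations]
```

On the Pi, format_labels(detect_labels("aiyimage.jpg")) would turn the latest capture into a one-line list of labels with their confidence scores.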

Part III: Improve the User Experience

Now let's improve the UX by editing cloudspeech_whatisthat.py. As you can see, I've already spiced things up with random hello/goodbye phrases, plus help and error messages. To personalize the experience, scroll down to line 37 and replace "friend" with your first name.
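The random-phrase idea is simple enough to reuse in other projects. Here's a minimal sketch of the pattern. The phrase lists and names below are hypothetical stand-ins, so the wording in the actual script may differ.

```python
import random

USER_NAME = "friend"  # replace with your first name

# Hypothetical phrase lists for illustration only.
HELLO_PHRASES = [
    "Hello, {name}!",
    "Hi {name}, what can I identify for you?",
    "Good to see you, {name}.",
]
GOODBYE_PHRASES = [
    "Goodbye, {name}!",
    "See you later, {name}.",
    "Bye for now, {name}.",
]


def random_phrase(phrases, name=USER_NAME):
    """Pick one phrase at random and fill in the user's name."""
    return random.choice(phrases).format(name=name)
```

Keeping the name in one variable means a single edit personalizes every greeting and goodbye.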

Next, uncomment lines 186-194. This code looks for a specific word within the results set from the Vision API. For example, if "bird" is part of the results set, the system says: "that's a mallard duck" and then plays the corresponding sound. Admittedly, this is a bit of a party trick, but it shows how you can boost the impact by adding just a few sound files or custom phrases.
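The keyword lookup described above can be sketched as a small dictionary search. The mapping, the sound file path, and the function name are hypothetical, and the commented-out lines in the script may be structured differently.

```python
# Hypothetical mapping from a Vision API label to a phrase and a sound file.
CUSTOM_RESPONSES = {
    "bird": ("that's a mallard duck", "sounds/duck.wav"),
}


def find_custom_response(labels, responses=CUSTOM_RESPONSES):
    """Return (phrase, sound_file) for the first label with a custom response.

    Labels are compared in lower case; returns None if nothing matches.
    """
    for label in labels:
        match = responses.get(label.lower())
        if match:
            return match
    return None
```

Adding another party trick is then a one-line change: add a new key to the dictionary with its phrase and sound file.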

If you want to return a single result rather than a list of applicable labels, try uncommenting lines 92-96 in whatisthat.py. Another way to limit the number of results is to raise the minimum label score (on line 102). For example, if the system only returns labels it is at least 90% certain about, you'll get fewer of them.
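Both approaches come down to a few lines of list handling. Here's a minimal sketch that works on (description, score) pairs, with scores between 0 and 1. The function names are illustrative and this is not the code on those lines of whatisthat.py.

```python
def filter_labels(labels, min_score=0.9):
    """Keep only the labels whose score is at least min_score."""
    return [(name, score) for name, score in labels if score >= min_score]


def top_label(labels):
    """Return the description with the highest score, or None if empty."""
    if not labels:
        return None
    return max(labels, key=lambda pair: pair[1])[0]
```

Raising min_score trades coverage for confidence: you hear fewer labels, but the ones that remain are more likely to be right.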

Of course, if you want to really boost accuracy, you can train your own models. But that's a fascinating topic for another tutorial!
