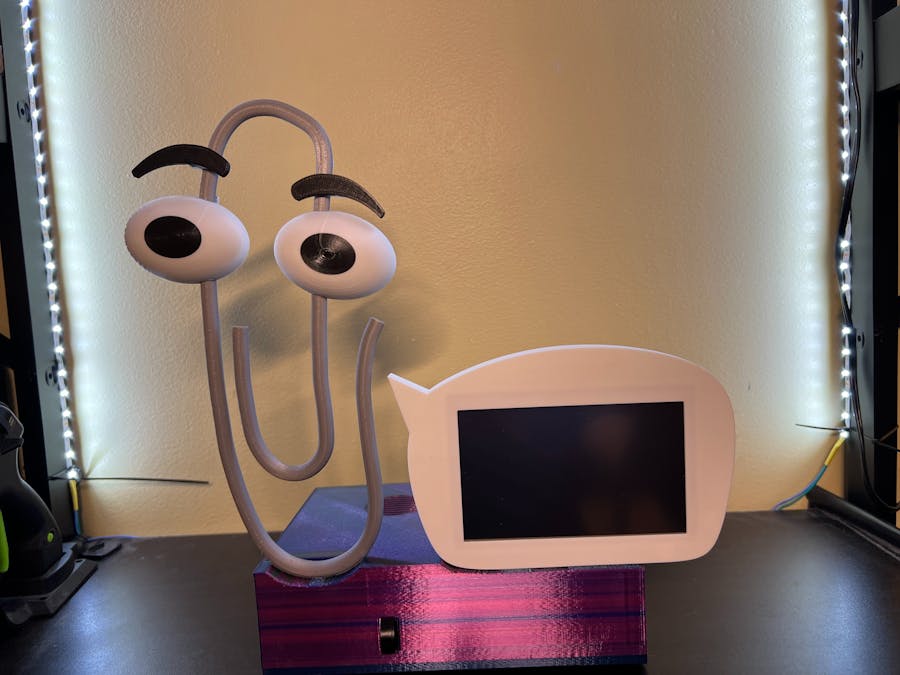

Did you ever want to make your robot friend more conversational? I sure have, so I built ClippyGPT as a proof of concept for integrating OpenAI and Azure Speech Services into robotics using Python to give my companion bots more personality and make them more engaging. Now you can build your own ClippyGPT or use the sample code to integrate ChatGPT into your next robot project!

*NOTE: See the 3D Printing Parts section for a link to the STL files!

*NOTE: Updated on May 1, 2023 to clean up code and add new ChatGPT 3.5 multi-turn "chat mode".

The BOM

So, this section is split up a bit, and there's a lot of room here to choose different devices based on your needs. First I'll cover a basic build with no moving parts, which gives you an Echo Dot-style smart speaker experience. Then I'll go over what else you need to build the full ClippyGPT experience.

Basic BOM

For the bare-minimum build (the talking ChatGPT experience with no moving parts), you'll need:

- A single-board computer (SBC), e.g. a Raspberry Pi, running a Debian-based distro such as Raspbian (ideally the full desktop version of Bullseye). NOTE: The board's USB ports should be positioned similarly to those on a Raspberry Pi 3B. Otherwise, you'll need a USB extension cable instead of a right-angle USB adapter to get the microphone into the right position.

- A power supply, ideally a 5V 5A switching power supply with a 5.5mm barrel jack. You can use a higher-voltage supply if you also add a step-down regulator like this one https://www.pololu.com/product/4091 (the model has mounting holes for it).

- A 5.5mm female barrel jack connector, like these https://www.amazon.com/gp/product/B01GPQZ4EE

- A through-hole protoboard or a small breadboard to distribute power to the components.

- A small speaker with a 1.25 inch (31.75mm) mounting hole pattern. I used a speaker recycled from an old Echo Dot.

- A 2.5W mono amp like this one https://www.adafruit.com/product/2130

- A right-angle USB adapter like this one https://www.amazon.com/gp/product/B073GTBQ8V

- A USB microphone, like this one https://www.amazon.com/gp/product/B07SVYVZ1H

If you want the full build, in addition to the above, also get:

- Two Tower Pro SG90 or Feetech FS90 servo motors.

- A servo motor controller. I used an Adafruit Crickit HAT for Raspberry Pi, but the model also has mounting holes for the Adafruit 16-channel PWM controller.

- Fishing line: this is what moves the eyebrows. I use 100 lb test braided line, but whatever you have should work as long as it doesn't stretch.

- An HDMI flat ribbon cable kit like this one https://www.amazon.com/dp/B07R9RXWM5

- A compact 5 inch HDMI display with the power and HDMI connectors at the top of the screen, like this one https://www.amazon.com/dp/B013JECYF2

- Some micro USB pigtail cables like these https://www.amazon.com/dp/B09DKYPCXK for powering the SBC, display, and so on. (You may need barrel jack and/or USB-C ones, depending on which SBC and servo driver you choose.)

- Screw-down audio jack adapters, like these https://www.amazon.com/dp/B06Y5YJRPD

- A spring-loaded retractable ink pen. (You'll use its compression spring for the eyebrow movement.)

3D Printing Parts

The STL files are available at https://www.printables.com/model/413897-clippygpt.

These parts were printed on various printers at a 0.2mm layer height using both PLA and PETG, so either should work fine. The top and bottom shells should be the only parts that really need supports, and brims may help if large prints curl up.

The only parts you may want to print at a finer resolution are the eyebrow-movement pieces, where a 0.1mm layer height may give them more strength.

The eyes were made with white filament and a manual filament change to black where the pupils start.

Assembly

Clippy

1. First, if you're building the version with moving eyebrows, thread one length of line for each side, one through the left slot in the back and the other through the right slot, until both come out the bottom with plenty of extra on each end. You can cut them to size after tying them to the moving parts.

2. Glue the eyes so they align with the horizontal slots. If glue alone isn't holding, lay some spare filament across the aligned gaps to reinforce them, either gluing it or plastic-welding it in place.

3. Tie the lines to the small holes in the brow clip pieces (applying superglue to the knots can prevent slipping; just make sure it's dry before handling), then insert the clips into the slots in the back above their respective eyes.

4. Get some short M2 heatset inserts and install one in the back of each eyebrow, then you can use 8mm M2 screws to attach the eyebrows to the clips.

5. Take the spring you liberated from the retractable pen and cut it in half. Slide one half onto each of the long posts on the eyebrow clips. Using each post as a guide, place a small U-shaped Brow Hoop at the very end of the post and hold it there while you use a soldering iron tip to weld it to Clippy, taking care not to melt it so much that it sticks to the post. The hoop should hold the spring and post in place while still letting the clip move up and down.

Bottom Base

1. Prep the bottom part of the case by installing the M2 and M2.5 hex nuts on the underside of the base's bottom part as shown here:

2. Now you should be able to install the bottom base electronics as shown below:

3. I find that 5 or 6mm M2 screws work fine for the parts that use M2 fasteners.

Note: For the SBC mount, you’ll want to put 1.8mm M2.5 standoffs in place first, then bolt the SBC onto those in order to provide the right height for the right-angle USB adapter to align with the slot for the microphone.

Note: The power jack cover fastens to the bottom and secures the jack without heatset nuts; just use a longer screw, such as a 12mm M2.

Top Base

1. Prep the top cover of the base by embedding heatset knurled nuts as described in this image:

2. While you’re at it, might as well do the M2 heatset inserts for these parts too:

3. Now we can mount the electronics inside the top part of the base:

4. The servo motor arm holes may need to be enlarged a little. I recommend using a small drill bit to enlarge the second hole from the end so the fishing line passes through easily. Mount each servo motor with a single screw (the one that comes with the servo should work) on the angled mounting points behind where Clippy will be mounted. Also, position each arm at the appropriate end of its motor's range, as shown in the image below, so it has enough travel to pull on its line:

5. Now mount the display by placing it inside the cavity of the BubbleFront piece and pushing it all the way in. It should fit snugly; if it doesn't, use a file to adjust the fit.

6. Now, use M2 screws to bolt the BubbleBack to the BubbleFront to secure the display between both pieces. If you’ve done it right the outer edge of both should be flush to each other.

7. Insert M3 square nuts in the three slots in the lower part of the BubbleBack, then bolt the bubble assembly to the top case with M3 screws. You should be able to connect the HDMI and power cables in through the gap in the case at this point.

8. Now to mount Clippy. First, pass the fishing line through the gap where Clippy goes, then push Clippy down into that gap and forward into the slot as shown below.

9. NOTE: If you have trouble sliding Clippy forward into that slot, clean out any leftover support material until Clippy fits snugly without needing too much force.

10. If you put Clippy in the slot correctly, there should be enough room to slide the ClippyClip piece down behind Clippy to secure Clippy into place. At this point, you can bolt that ClippyClip into position using M2 screws from the underside of the top cover.

11. With the servo arms in the right position as noted above in step 4, go ahead and tie the lines to the arms so that there is as little slack as possible without moving the brows. You may want to glue the knots here to keep them from slipping.

12. If you didn't use a breadboard or a regulator to distribute power to your parts, you can use a thru-hole protoboard to do so, like in this image:

13. Go ahead and wire up all the electronics before using M3 screws to fasten the bottom case to the top. NOTE: Make sure the SD card for your SBC is installed before shutting the case.

14. Once bolted together, go ahead and insert the USB microphone into the front of the case where the right-angle USB adapter should be aligned with the slot at the front of the case:

Now that everything is put together and your SBC’s operating system has been installed, let’s get the software ready. (NOTE: I recommend using the latest Debian or Raspbian Bullseye. Ubuntu won’t work because there’s a library dependency on OpenSSL1, which Ubuntu doesn’t support.)

1. Following the instructions for your single-board computer (SBC), install the operating system and set up the basics you need, like SSH, VNC, screen orientation, GPIO mapping, etc.

2. Optional: you can do all of this in a Python virtual environment. If you choose to, now's the time to set it up.
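If you do use a virtual environment, it's easy to lose track of whether it's active, and then pip3 installs land in the system Python instead. The quick check below is a minimal sketch that relies only on the standard library. It assumes the environment was created with Python's built-in venv module (`python3 -m venv`), which sets `sys.prefix` to the environment folder while `sys.base_prefix` keeps pointing at the system installation. The function names are hypothetical.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when running inside an environment made by the venv module."""
    # venv points sys.prefix at the environment folder, while
    # sys.base_prefix still points at the system-wide Python install.
    return sys.prefix != sys.base_prefix


def describe_environment() -> str:
    """Human-readable summary of where pip3 installs from this interpreter will go."""
    if in_virtualenv():
        return f"virtual environment at {sys.prefix}"
    return f"system Python at {sys.prefix}"


print(describe_environment())
```

Run it with the same python3 you'll use for the Clippy script. Remember to activate the environment (for example `source <env>/bin/activate`) in each new terminal before running pip3 or the script.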

3. In your SBC's configuration tool (raspi-config on a Raspberry Pi), make sure to enable I2C.
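On Linux, each enabled I2C bus shows up as a character device such as /dev/i2c-1, and bus 1 is the one that `i2cdetect -y 1` scans in step 5. The sketch below is a simple, hypothetical helper that checks for that device node from Python. It assumes the standard /dev naming and confirms only that the bus exists. It does not detect whether the Crickit itself is responding.

```python
from pathlib import Path


def i2c_bus_present(bus: int = 1, dev_dir: str = "/dev") -> bool:
    """Return True if the Linux I2C character device for `bus` exists.

    With I2C enabled on a Raspberry Pi, bus 1 appears as /dev/i2c-1.
    """
    return (Path(dev_dir) / f"i2c-{bus}").exists()


if i2c_bus_present():
    print("I2C bus 1 found; you can move on to the servo controller setup.")
else:
    print("/dev/i2c-1 not found; enable I2C (a reboot may be needed) and check again.")
```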

4. As always, run sudo apt-get update and sudo apt-get upgrade in the terminal.

5. Set up the libraries and settings for the servo motor controller you're using. If you're using the Crickit HAT, run the following in the terminal:

pip3 install Adafruit-blinka

i2cdetect -y 1

(If you see a matrix with 49 in it, the Crickit's I2C address, you're good so far.)

pip3 install Adafruit-circuitpython-crickit

If any of this gives you errors or doesn't work, refer to https://learn.adafruit.com/adafruit-crickit-hat-for-raspberry-pi-linux-computers/python-installation for help.

6. Set up Azure Speech Services

a. Sign up for a free Azure account at azure.microsoft.com (we’ll be using free tier speech services, so you shouldn’t expect any charges unless you use ClippyGPT A LOT!).

b. Create a speech resource in Azure portal using the following steps: https://portal.azure.com/#create/Microsoft.CognitiveServicesSpeechServices

c. Get the keys and region for your resource: https://learn.microsoft.com/azure/cognitive-services/cognitive-services-apis-create-account#get-the-keys-for-your-resource.
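Once you have a key and region (and have installed the SDK in step e), they are what the Speech SDK's `SpeechConfig` needs for both speech-to-text and text-to-speech. The sketch below is a hypothetical example, not the author's script. It reads the key and region from environment variables instead of hard-coding them. `SPEECH_KEY` and `SPEECH_REGION` are names chosen for this sketch, and the voice `en-US-JennyNeural` is just an example. The SDK is imported inside each function so the credential helper runs even where the SDK isn't installed.

```python
import os


def load_speech_credentials(env=os.environ) -> tuple[str, str]:
    """Read the Azure Speech key and region from (hypothetically named) env vars."""
    key = env.get("SPEECH_KEY", "").strip()
    region = env.get("SPEECH_REGION", "").strip()
    if not key or not region:
        raise RuntimeError("Set SPEECH_KEY and SPEECH_REGION before running the script.")
    return key, region


def make_speech_config(voice: str = "en-US-JennyNeural"):
    """Build a SpeechConfig used for both recognition and synthesis."""
    import azure.cognitiveservices.speech as speechsdk  # installed in step e

    key, region = load_speech_credentials()
    config = speechsdk.SpeechConfig(subscription=key, region=region)
    config.speech_recognition_language = "en-US"
    config.speech_synthesis_voice_name = voice  # example voice; pick any you like
    return config


def listen_once(config) -> str:
    """Capture one utterance from the default microphone and return its text."""
    import azure.cognitiveservices.speech as speechsdk

    audio_in = speechsdk.audio.AudioConfig(use_default_microphone=True)
    recognizer = speechsdk.SpeechRecognizer(speech_config=config, audio_config=audio_in)
    result = recognizer.recognize_once_async().get()
    if result.reason == speechsdk.ResultReason.RecognizedSpeech:
        return result.text
    return ""  # nothing recognized: silence, noise, or a canceled request


def speak(config, text: str) -> None:
    """Read `text` aloud through the default audio output (the audio jack)."""
    import azure.cognitiveservices.speech as speechsdk

    audio_out = speechsdk.audio.AudioOutputConfig(use_default_speaker=True)
    synthesizer = speechsdk.SpeechSynthesizer(speech_config=config, audio_config=audio_out)
    synthesizer.speak_text_async(text).get()
```

A quick round trip might look like `cfg = make_speech_config(); speak(cfg, "You said: " + listen_once(cfg))`.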

d. The files I share include the offline keyword model table for the "Hey Clippy" wake word, which requires no extra steps or code changes to use. If you want a different wake word, create one using the steps at https://learn.microsoft.com/azure/cognitive-services/speech-service/custom-keyword-basics?pivots=programming-language-python.
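The keyword table is used offline through the Speech SDK's `KeywordRecognitionModel` and `KeywordRecognizer`, which listen on the default microphone until the wake word is heard. The sketch below shows that flow under one assumption: the table file sits next to the script (as in step 9a). Because the exact filename isn't given here, the sketch looks for any `*.table` file instead of hard-coding a name. `find_keyword_table` and `wait_for_wake_word` are hypothetical helper names.

```python
from pathlib import Path


def find_keyword_table(folder: str = ".") -> Path:
    """Return the first *.table keyword model found in `folder`."""
    tables = sorted(Path(folder).glob("*.table"))
    if not tables:
        raise FileNotFoundError(f"No .table keyword model found in {Path(folder).resolve()}")
    return tables[0]


def wait_for_wake_word(folder: str = ".") -> bool:
    """Block until the offline keyword model fires; return True if it did."""
    import azure.cognitiveservices.speech as speechsdk  # installed in step e

    model = speechsdk.KeywordRecognitionModel(str(find_keyword_table(folder)))
    recognizer = speechsdk.KeywordRecognizer()  # no audio config: default microphone
    result = recognizer.recognize_once_async(model).get()
    return result.reason == speechsdk.ResultReason.RecognizedKeyword
```

If you keep more than one `.table` file in that folder (say, after training a custom wake word), pass the model path explicitly instead of relying on the first match.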

e. On the terminal, type the following commands:

sudo apt-get install build-essential libssl-dev libasound2 wget

pip3 install azure-cognitiveservices-speech

pip3 install --upgrade azure-cognitiveservices-speech

7. Set up OpenAI

a. Sign up for an account at https://openai.com/

b. Get an API key!

c. This part does cost a little bit of money, but you get an $18 grant to start with.
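After installing the package in step d, you can sanity-check your key with a short script like the one below. It is a sketch written against the current openai Python package (1.x), which is what `pip3 install openai` installs today. That interface differs from the pre-1.0 releases available when this guide was written, so it may not match ClippySampleCode.py. `OpenAI()` reads the key from the `OPENAI_API_KEY` environment variable by default. The persona text and function names are hypothetical, and `gpt-3.5-turbo` is just an example model name.

```python
SYSTEM_PROMPT = (
    "You are Clippy, a cheerful desktop assistant. "
    "Keep answers short because they will be read aloud."
)  # hypothetical persona text; write your own


def single_turn_messages(question: str, system_prompt: str = SYSTEM_PROMPT) -> list[dict]:
    """Build the message list for a one-off question (no memory of earlier turns)."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]


def ask_chatgpt(messages: list[dict], model: str = "gpt-3.5-turbo") -> str:
    """Send `messages` to the Chat Completions API and return the reply text."""
    from openai import OpenAI  # openai>=1.0; imported here so the helpers above run anywhere

    client = OpenAI()  # uses the OPENAI_API_KEY environment variable
    response = client.chat.completions.create(model=model, messages=messages)
    return response.choices[0].message.content or ""
```

For example, `print(ask_chatgpt(single_turn_messages("Tell me a fun fact about paper clips.")))` should print a short reply if your key and billing are set up.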

d. On the SBC terminal, type:

pip3 install openai

8. Then install OpenAI's tiktoken:

pip3 install tiktoken

9. Copy the files and update them:

a. Copy the offline keyword table to your device in the same location you plan to run the python script from.

b. Copy the python script to the same location.

c. Type sudo nano pythonscriptname to edit the script. For example, if you use my python script you'll type:

sudo nano ClippySampleCode.py

d. In the script, replace all the placeholders for the API keys and region with the information you got from Azure and OpenAI.

10. Double-check your configuration.

a. On the desktop, right-click the speaker icon to make sure the audio jack is your default audio output.

b. Right-click the microphone icon to make sure your audio input is set to the USB microphone.
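The desktop right-click check is the simplest route. If you're working headless over SSH, one alternative is to look at /proc/asound/cards, where Linux's ALSA layer lists the sound cards it detected. USB microphones and sound cards are listed with the `USB-Audio` driver name. The parser below is a hypothetical sketch that assumes the usual layout of that file: a numbered line per card, followed by an indented continuation line. It confirms only that the microphone was detected. It doesn't set it as the default input.

```python
import re
from pathlib import Path

# Matches lines like: " 1 [Device         ]: USB-Audio - USB PnP Sound Device"
CARD_LINE = re.compile(r"^\s*(\d+)\s+\[([^\]]+?)\s*\]:\s*(.+)$")


def parse_sound_cards(text: str) -> list[tuple[int, str, str]]:
    """Parse /proc/asound/cards text into (index, short id, description) tuples."""
    cards = []
    for line in text.splitlines():
        match = CARD_LINE.match(line)
        if match:  # indented continuation lines don't match and are skipped
            cards.append((int(match.group(1)), match.group(2), match.group(3).strip()))
    return cards


def usb_audio_cards(text: str) -> list[tuple[int, str, str]]:
    """Keep only cards handled by the USB audio driver, e.g. the USB microphone."""
    return [card for card in parse_sound_cards(text) if "USB-Audio" in card[2]]


def check_microphone(path: str = "/proc/asound/cards") -> None:
    """Print any USB audio devices ALSA currently knows about."""
    cards_file = Path(path)
    if not cards_file.exists():
        print(f"{path} not found; is ALSA available on this system?")
        return
    found = usb_audio_cards(cards_file.read_text())
    print("USB audio devices:", found or "none detected")
```

Calling `check_microphone()` on the SBC should list the USB microphone. If it reports none, try reseating the mic in the right-angle adapter before digging into software settings.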

11. Run the code:

python3 ClippySampleCode.py

To start a conversation, say "Hey Clippy". Once Clippy replies, you can either ask it a question for a single-turn answer, or say "Let's chat" to activate multi-turn chat mode, where you can ask follow-up questions and Clippy carries the context over to each turn. To end chat mode, just say "I'm done".
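The author's script isn't reproduced here, so the following is only a hypothetical sketch of how the "Let's chat" / "I'm done" behavior could be structured. Outside chat mode, each question is sent on its own. Inside chat mode, the conversation history is resent every turn so the model keeps context. To keep that growing history within a token budget, the sketch drops the oldest turns using a token counter. By default, that counter is tiktoken (installed in step 8), which is one common use for it. The 3000-token budget is an arbitrary placeholder; choose one below your model's context window. The count ignores per-message overhead, so treat it as approximate. `ask` can be any function that takes a message list and returns the reply text.

```python
from typing import Callable, Optional

START_PHRASE = "let's chat"
STOP_PHRASE = "i'm done"


def normalize(text: str) -> str:
    """Lower-case and drop trailing punctuation so "Let's chat." matches."""
    return text.strip().lower().rstrip(".!?")


def tiktoken_counter(model: str = "gpt-3.5-turbo") -> Callable[[str], int]:
    """Return a function that counts tokens in a string using tiktoken."""
    import tiktoken

    encoding = tiktoken.encoding_for_model(model)
    return lambda text: len(encoding.encode(text))


class Conversation:
    """Single-turn by default; multi-turn between "Let's chat" and "I'm done"."""

    def __init__(self, ask: Callable[[list], str], system_prompt: str,
                 max_tokens: int = 3000,
                 count_tokens: Optional[Callable[[str], int]] = None):
        self.ask = ask  # sends a message list to the chat model, returns reply text
        self.system = {"role": "system", "content": system_prompt}
        self.max_tokens = max_tokens  # placeholder prompt budget (approximate count)
        self.count_tokens = count_tokens or tiktoken_counter()
        self.chat_mode = False
        self.history: list[dict] = []

    def _trim(self) -> None:
        """Drop the oldest turns until the prompt fits, always keeping the newest one."""
        def total() -> int:
            return sum(self.count_tokens(m["content"]) for m in [self.system, *self.history])

        while len(self.history) > 1 and total() > self.max_tokens:
            self.history.pop(0)

    def handle(self, heard: str) -> str:
        """Process one recognized utterance and return what Clippy should say."""
        phrase = normalize(heard)
        if phrase == START_PHRASE:
            self.chat_mode, self.history = True, []
            return "Sure, let's chat!"
        if phrase == STOP_PHRASE and self.chat_mode:
            self.chat_mode, self.history = False, []
            return "Okay, chat mode is off."
        if not self.chat_mode:  # single-turn: no memory of earlier questions
            return self.ask([self.system, {"role": "user", "content": heard}])
        self.history.append({"role": "user", "content": heard})
        self._trim()
        reply = self.ask([self.system, *self.history])
        self.history.append({"role": "assistant", "content": reply})
        return reply
```

In a main loop, you would wait for the wake word, pass each recognized phrase to `handle()`, and speak the returned text. Injecting `ask` and `count_tokens` also makes it easy to test the chat-mode logic without network access.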

12. Have fun with it, try different things, and if you improve it, let me know what you did!
