Picture a Traxxas RC car drifting from asphalt onto packed gravel. Nothing changes in the motor output. Nothing changes in the control loop. The robot is driving the same way it did on pavement, and it has no idea.

That friction between how a robot drives and what it is driving on was the starting point for GRIP. Most wheeled robots today are terrain-blind. They apply the same control parameters across every surface they encounter, which means they either drive conservatively everywhere and sacrifice performance where caution isn't needed, or drive aggressively and fail when conditions don't cooperate. Adaptive traction, odometry correction, and path planning all benefit from knowing what the robot is driving on, but only if that information is available in real time, on device, and without external infrastructure.

GRIP was built to answer a simple question: can a low-cost IMU and microphone, fused together, give a wheeled robot continuous terrain awareness with no cameras, no GPS, and no cloud connection?

For build instructions please visit: https://github.com/DanVelarde00/GRIP/blob/main/BUILD_GUIDE.md

The sensor fusion argument

The answer required two sensors because neither is sufficient alone.

An IMU captures vibration and acceleration profiles well on high-contrast surfaces: gravel produces impulsive transients, while snow damps oscillations. But the IMU struggles on smooth hard surfaces, where vibration energy is uniformly low regardless of material. The robot could be on polished tile or wet asphalt and the IMU signal would look nearly identical.

A microphone captures contact energy from tire-surface interaction, but acoustic signatures collapse at low speeds. Below a certain velocity, the signal differences between hard surfaces become indistinguishable from noise.

Fused together, the two modalities resolve ambiguities neither can handle alone. Snow and grass both produce damped acoustics because soft material compression looks similar acoustically. The IMU separates them because snow compresses and rebounds with a distinct vertical vibration pattern that grass does not produce. Gravel is the easiest class to recognize from the raw signals: both sensors agree emphatically, with high-frequency acoustic transients from stone impacts arriving simultaneously with sharp spikes in VMAG, the vibration magnitude. Hard surfaces produce low VMAG and elevated low-band microphone energy from road rumble; soft terrain inverts that signature. The boundary is cleanest when both sensors contribute.

This wasn't a hypothesis. It was validated. Ablation studies with the trained Edge Impulse model show measurable degradation when either modality is removed, with the largest drops on the classes where each sensor has known blind spots. That is the core claim of the project, and the data supports it.

Building toward four classes, not five

The original design included five terrain classes: snow, smooth surface, gravel, asphalt, and grass. During data collection, two of them proved physically indistinguishable with this sensor suite. Asphalt and smooth indoor floor produced nearly identical MIC_LOW, MIC_HIGH, and VMAG statistics, with differences smaller than typical session-to-session variation from speed changes. This outcome is physically expected. The primary difference between asphalt and polished tile is friction, not vibration or acoustics. Rubber tires transmit contact energy proportional to surface roughness and hardness, and both surfaces are hard and flat.

Training a model to separate them would have produced a classifier learning noise rather than terrain. Rather than inflate class counts with a distinction the sensors cannot make, the two classes were merged into flat_surfaces. The result is a four-class system covering snow, flat surfaces, gravel, and grass that reflects what the sensors can actually resolve. That is a stronger scientific claim, not a weaker one.
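The merge decision can be sketched as a simple check: if the gap between two classes' per-session feature means is smaller than the session-to-session spread, the classes are relabeled as one. This is a minimal illustration, not the project's actual analysis. The raw label strings `asphalt` and `smooth_surface`, the helper names, and the "gap versus spread" rule are assumptions; only `flat_surfaces` comes from the text.

```python
from statistics import mean, stdev

# Hypothetical raw labels mapped onto the merged class named in the text.
LABEL_MERGE = {
    "asphalt": "flat_surfaces",
    "smooth_surface": "flat_surfaces",
}

def merge_label(label):
    """Return the training label after merging indistinguishable classes."""
    return LABEL_MERGE.get(label, label)

def separable(session_means_a, session_means_b):
    """Crude separability test for one feature (e.g. VMAG).

    Each argument is a list of per-session means for one class (at least
    two sessions each). The classes count as separable only if the gap
    between their overall means exceeds the larger session-to-session
    standard deviation.
    """
    gap = abs(mean(session_means_a) - mean(session_means_b))
    spread = max(stdev(session_means_a), stdev(session_means_b))
    return gap > spread
```

If a check like `separable(...)` comes back false for every feature, applying `merge_label` to the dataset before training avoids asking the classifier to learn a boundary the sensors do not support.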

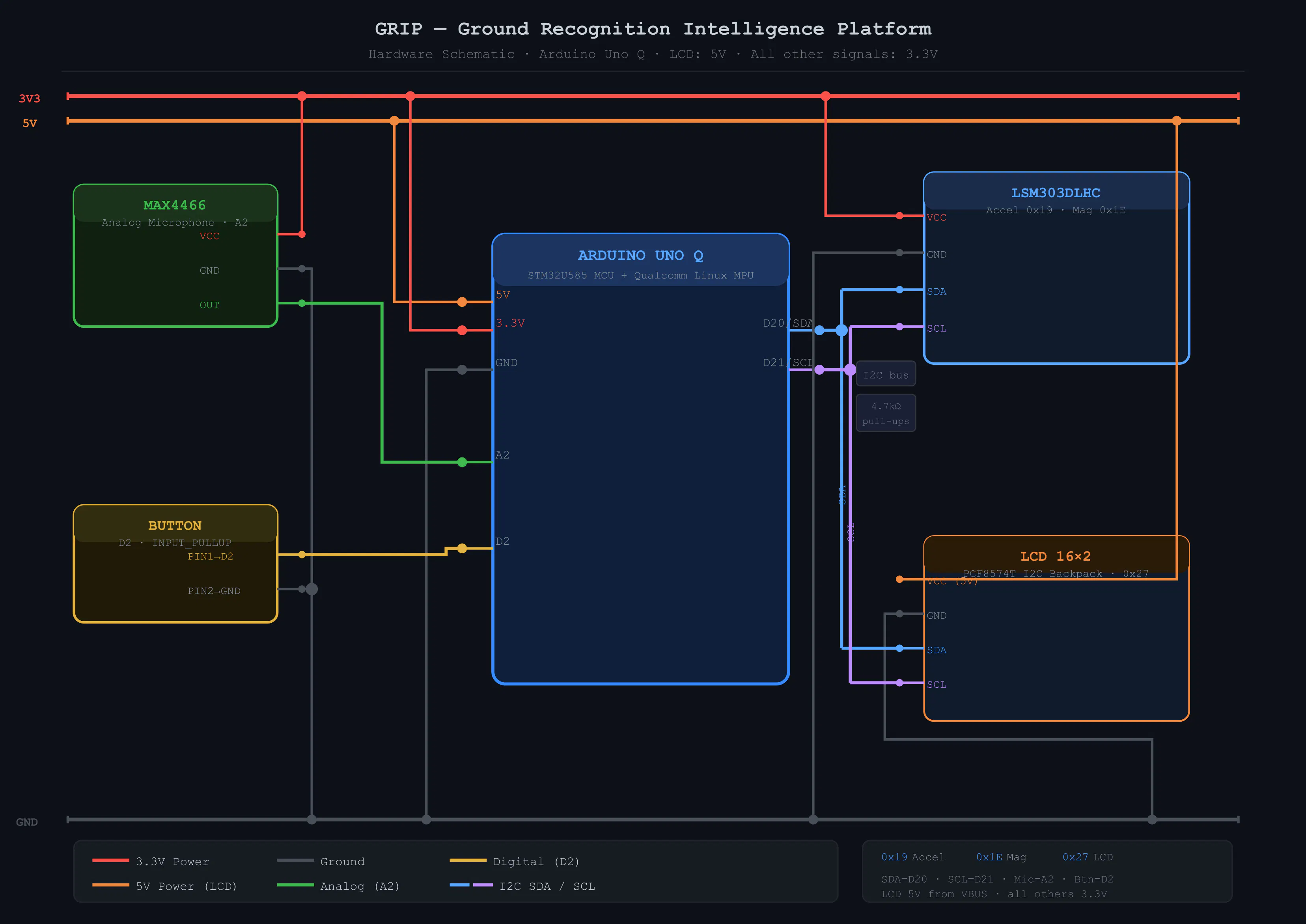

From RC car to real hardware

The GRIP module runs entirely on an Arduino Uno Q with STM32U585 MCU and Qualcomm Linux MPU. Data collection happens without a laptop: the MCU streams 14-field CSV at 100 Hz over RouterBridge to the Linux MPU, which buffers sessions, trims warmup and braking periods, flags flatlined microphone data, and serves clean sessions over HTTP for later retrieval. The sensor stack uses an LSM303DLHC IMU mounted upside down on the rear support frame to maximize wheel-ground vibration capture, paired with a MAX4466 analog microphone. That microphone was chosen after the originally planned I2S microphone proved incompatible with the STM32U585's SAI interface. That substitution took a day; the rest of the hardware integration went cleanly.
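The session cleanup on the Linux side can be sketched roughly as follows. The write-up does not say how warmup and braking are detected or what counts as a flatlined microphone, so the fixed trim durations, the `min_range` threshold, and both function names are assumptions for illustration; only the 100 Hz rate comes from the text.

```python
SAMPLE_RATE_HZ = 100  # streaming rate stated in the text

def trim_session(rows, warmup_s=2.0, braking_s=1.0, rate_hz=SAMPLE_RATE_HZ):
    """Drop a fixed number of seconds from the start and end of a session.

    `rows` is a list of parsed CSV rows (treated as opaque here). The trim
    durations are placeholder values, not the project's settings.
    """
    start = int(warmup_s * rate_hz)
    end = len(rows) - int(braking_s * rate_hz)
    if end <= start:
        return []  # session too short to keep anything
    return rows[start:end]

def mic_flatlined(mic_samples, min_range=2):
    """Flag a session whose microphone readings barely move.

    A healthy analog microphone shows some spread in raw ADC counts; a
    disconnected or stuck input sits near one value. `min_range` is a
    hypothetical threshold in ADC counts.
    """
    if not mic_samples:
        return True
    return (max(mic_samples) - min(mic_samples)) < min_range
```

With these placeholder values, a 10-second session of 1000 rows keeps 700 rows, and a session whose microphone column never changes is flagged rather than silently added to the training set.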

The feature vector was engineered to expose terrain-relevant information while remaining rotation-invariant as the robot turns. IIR high-pass filtered acceleration removes gravity and slow tilt to isolate terrain-induced vibration transients, VMAG captures vibration magnitude regardless of sensor orientation, and a two-band microphone split separates rolling contact energy from sharp impulsive events.
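A minimal sketch of those three features, written in Python for readability rather than as firmware. The filter order, the coefficients `alpha` and `beta`, and the function names are assumptions; the write-up does not give the actual cutoffs, and the two-band split here uses a simple one-pole low-pass plus its residual as a stand-in for whatever filters the firmware uses.

```python
import math

def high_pass(samples, alpha=0.9):
    """First-order IIR high-pass: y[n] = alpha * (y[n-1] + x[n] - x[n-1]).

    A constant input (gravity, steady tilt) decays to zero, leaving only
    the fast terrain-induced changes.
    """
    out = []
    prev_x = samples[0]
    prev_y = 0.0
    for x in samples:
        y = alpha * (prev_y + x - prev_x)
        out.append(y)
        prev_x, prev_y = x, y
    return out

def vmag(ax, ay, az):
    """Per-sample vector magnitude of three (filtered) acceleration axes.

    The magnitude does not depend on how the sensor frame is rotated.
    """
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(ax, ay, az)]

def band_energies(mic, beta=0.2):
    """Split a microphone window into low-band and high-band mean energy.

    The mean is removed first because an analog microphone sits on a DC
    bias. A one-pole low-pass gives the low band; the residual is treated
    as the high band.
    """
    offset = sum(mic) / len(mic)
    low = 0.0
    low_energy = 0.0
    high_energy = 0.0
    for raw in mic:
        s = raw - offset
        low += beta * (s - low)
        high = s - low
        low_energy += low * low
        high_energy += high * high
    n = len(mic)
    return low_energy / n, high_energy / n
```

In this sketch, slow rumble ends up mostly in the first returned value and sharp impacts mostly in the second, which corresponds to the rolling-contact versus impulsive-event split described above.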

What the model achieves

The Edge Impulse model uses Spectral Analysis with a 2000 ms window at 33 Hz and int8 quantization, achieving 84.9 percent validation accuracy with a ROC AUC of 0.97. Snow is the strongest class at 91.4 percent recall, consistent with its unique combined signature. Gravel is the hardest at 73.8 percent, bleeding into flat surfaces and grass when the vehicle travels slowly on packed gravel where impulsive transients are weaker. The quantized model runs in 46 ms using 24.1 KB RAM and 1.4 MB flash, well within the STM32U585's 2 MB budget, and classifies at approximately 2 Hz on live hardware.
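The window arithmetic works out to 66 samples per axis (2000 ms at 33 Hz). One way to get roughly two classifications per second from a 2-second window is to slide it forward by about half a second each time, so consecutive windows overlap. The sketch below assumes that overlapping scheme and a stride of 16 samples; neither is confirmed by the write-up, and `sliding_windows` is a hypothetical helper, not part of the Edge Impulse SDK.

```python
from collections import deque

WINDOW_MS = 2000   # window length stated in the text
SAMPLE_HZ = 33     # model input rate stated in the text
WINDOW_SAMPLES = WINDOW_MS * SAMPLE_HZ // 1000  # 66 samples per axis

def sliding_windows(samples, window=WINDOW_SAMPLES, stride=16):
    """Yield overlapping windows from a sample stream.

    A window is emitted once the buffer is full and at least `stride`
    new samples have arrived since the last one. A stride of 16 samples
    at 33 Hz is about 0.5 s, i.e. roughly 2 windows per second
    (assumed, not taken from the project).
    """
    buf = deque(maxlen=window)
    since_last = 0
    for s in samples:
        buf.append(s)
        since_last += 1
        if len(buf) == window and since_last >= stride:
            since_last = 0
            yield list(buf)
```

Each yielded window would then be handed to the classifier; with a 46 ms inference time there is ample headroom inside a half-second stride.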

Where it goes next

The flat_surfaces merge is a constraint of this specific hardware deployment, not of the overall approach. A robot with harder wheels, an encoder for slip detection, or a higher-frequency microphone could support a five- or six-class model on the same architecture. GRIP is structured for exactly that: add new sensor data to the feature vector, relabel training data, retrain, and redeploy. The session protocol, data pipeline, and firmware architecture remain unchanged.
