ARte is a project developed by Ivan Fardin, Claudiu Ivan and Francesco Ottaviani, as part of the Internet of Things 2020 course, at Sapienza University of Rome.

Why ARte?

The world is continuously evolving, and the way people visit museums is no exception. Just looking at artworks is no longer enough: visitors want to interact with them. Many people like to visit museums, but many also admit that they would like kinds of interaction with the artworks that differ from those available today. The need is real, supported not only by surveys but also by the investments made by several museums around the world, such as The National Museum of Singapore, The Art Gallery of Ontario (Toronto) and The Smithsonian Institution (Washington D.C.).

As a solution to this problem, we propose ARte (Augmented Reality to educate): a smartphone application, available as a web app based on the IoT paradigm and developed for the Sapienza University of Rome's Arte Classica Museum. It aims to improve the interaction between users and artworks and to support social distancing (COVID-19 era), while providing useful information to the museum’s managers.

Visitors will be able to perform various actions on their device (some based on IoT data) in order to toggle features based on the Augmented Reality technology. This makes use of the smartphone's camera and each framed artwork will appear as a 3D model in the scene.

Functionalities

The available functionalities, provided to offer a better user experience, are the following:

- Contextualization: the appearance of the room will be modified according to the artwork in order to reproduce its historical setting

- Dynamic Artwork Reimagination: a colored filter will be generated and applied to the artwork and the background based on the real-time visitors’ flow inside the room

- Interactive Music: the music will be generated and played based on the real-time visitors’ flow inside the room

- Storytelling: the artwork will tell a story, a fact or a curiosity about itself

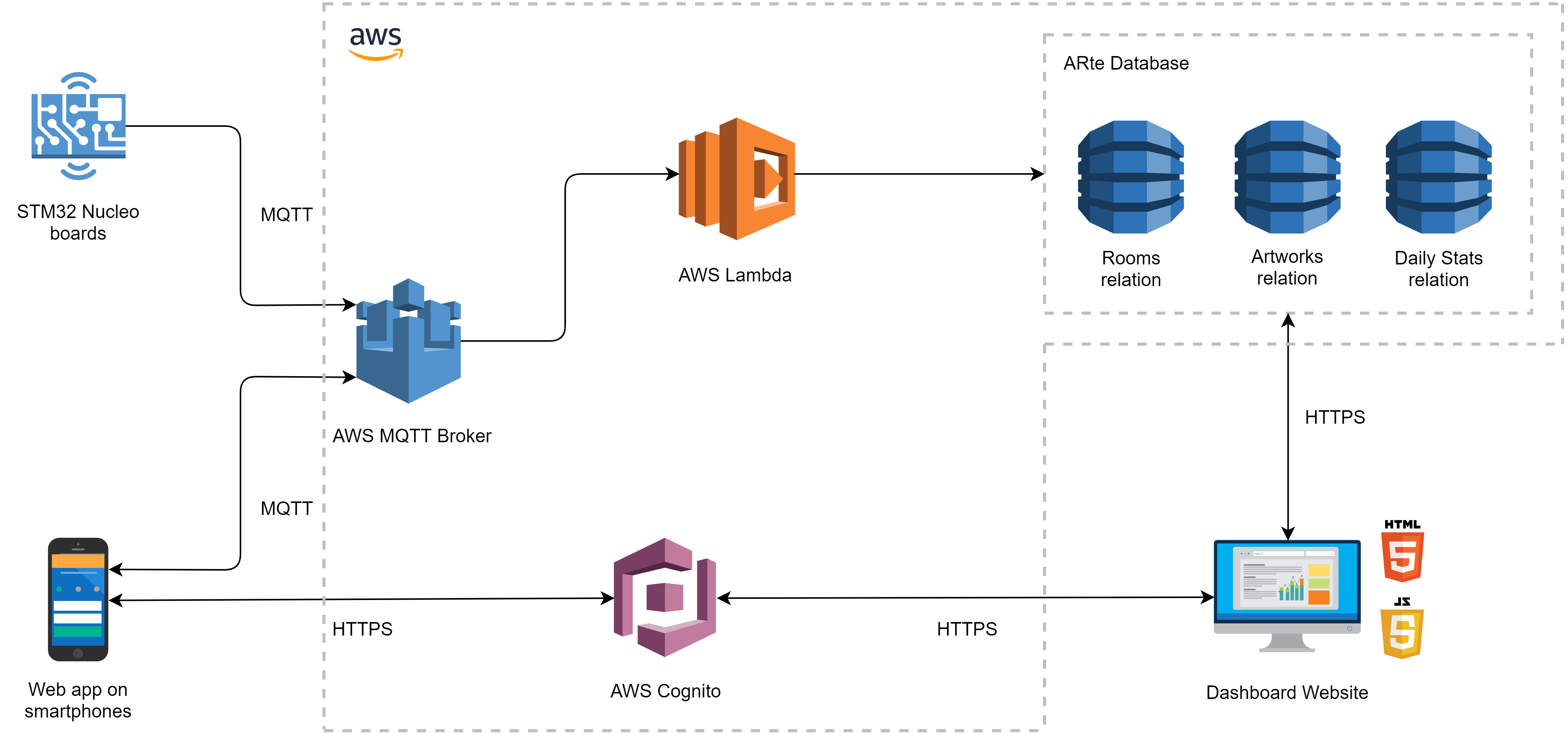

The architecture of ARte is mainly based on the MQTT communication protocol. The main purposes are enhancing visitors' experience in visiting the museum, maintaining social distance (COVID-19 era) and collecting data about the most liked artworks and areas of the museum, through the use of a web application and several STM32 Nucleo boards.

The web application runs on the users’ smartphones, collects information while they use the augmented reality features powered by IoT data (following the crowdsensing technique) and sends it to an MQTT cloud message broker. It also displays real-time data about the crowding of specific areas of the museum, so that visitors can avoid crowded areas.

The boards, on the other hand, are divided into two types, based on the sensor they implement:

- People counting boards use a specific sensor to count people entering a certain area of the museum

- Motion detection boards use PIR sensors to detect people's motion in some areas of interest

and both publish messages on the broker as well.

The message broker is linked to a cloud service, which stores all the information of interest for ARte in its database and feeds the mobile web app with the data needed for its IoT interactive features. The database is structured for performance into three relations: the first manages web app data and artworks’ information, the second deals with data sent by the people counting boards placed in the museum rooms and the third holds information on the daily visitors flow at the museum entrance.

A website (aimed at museum managers) extracts information from the ARte database, providing a detailed report, in the form of a readable dashboard.

STM32 Nucleo boards

The boards are divided into people counting boards and motion detection boards, based on their functionalities and the corresponding sensor they implement.

People counting boards make use of the X-NUCLEO-53L0A1 expansion board, which features the VL53L0X ranging and gesture detection sensor (based on ST’s FlightSense™ Time-of-Flight technology), to spot when new people enter certain areas. An LED installed near the board lights up in different colors based on the number of visitors in the room (provided by the board via PIN), to avoid overcrowding. Every board stores a local counter and sends real-time data, used by the web app's IoT interactive features and by the dashboard to provide detailed statistics over time.

Motion detection boards make use of the STEVAL-IDI009V1 evaluation board, which conditions the signal generated by a passive infrared (PIR) sensor, for human movement detection. In every museum room, a determined number of these boards are installed in order to detect the visitors' flow inside it.

Such data (from both types of boards) are published via MQTT on a topic identified by the room ID where the board is installed. The MQTT-SN bridge collects all data from a specific room in order to send a single message to the backend at fixed time intervals and to align the detected values of the PIR sensors with the actual number of visitors inside the room.

Due to the current COVID-19 situation, we had to simulate the IoT system with the native emulator of RIOT-OS, which supports most low-power IoT devices and microcontroller architectures. The software has been developed in C, and the simulated visitors’ flow takes into account plausible museum attendance times. The computed crowding level of the room is fed as input to the associated LED, placed above the room entrance and connected via PIN, so that it lights up in the appropriate color. The LED acts as a traffic light to limit access to rooms with a high number of visitors.

Crowding LEDs

At the entrance of each room, there is a colored LED light that signals how crowded the room is. It uses three colors (green, orange and red) to indicate, respectively, that the room can be entered without problems, that it is almost full, or that there is no more space for other people.
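The traffic-light logic can be sketched as follows. This is a minimal Python illustration, not the project's C firmware: the function names and the 70% and 100% thresholds are assumptions chosen for the example, since the article does not state the actual values.

```python
def crowding_percentage(people_current: int, room_capacity: int) -> float:
    """Return how full a room is, as a percentage of its capacity."""
    if room_capacity <= 0:
        raise ValueError("room_capacity must be positive")
    # A negative counter would be a sensor glitch, so clamp it to zero.
    return 100.0 * max(people_current, 0) / room_capacity


def led_color(percentage: float, warn_at: float = 70.0, full_at: float = 100.0) -> str:
    """Map a crowding percentage to a traffic-light color.

    warn_at and full_at are hypothetical thresholds, not taken from ARte.
    """
    if percentage >= full_at:
        return "red"
    if percentage >= warn_at:
        return "orange"
    return "green"


if __name__ == "__main__":
    for people in (3, 15, 20):
        pct = crowding_percentage(people, room_capacity=20)
        print(people, "visitors:", led_color(pct))
```

Under these assumed thresholds, a room with capacity 20 shows green with 3 visitors, orange with 15 and red with 20.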

Software components

Smartphone app

The Smartphone App is offered as a web app, available through a simple website. In this way, visitors don't need to install any OS-specific app, as only an Internet connection and a browser are needed. The app makes use of the Three.js and Three.ar.js APIs for its virtual and augmented reality components, which allow users to interact with the museum’s artworks while usage data is collected in the background.

The Dynamic Artwork Reimagination and Interactive Music features are powered by data sent from the motion detection boards scattered throughout the museum. Such data (referring to visitors’ flow inside every room of the museum) are received by subscribing to a specific topic at the MQTT broker and then are processed by simple Javascript algorithms, coded in the app according to the edge computing principle, to produce graphical effects on the artwork 3D model and the background and to generate unique music.
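The article does not describe the actual filter algorithm, so the following is only an illustrative sketch of how motion readings could be turned into a filter color. It is written in Python for brevity, although the app itself is written in JavaScript. The function name, the normalization by an assumed maximum sensor value and the blue-to-red color scale are all assumptions.

```python
import colorsys


def flow_to_rgb(infrared_values, max_value):
    """Map motion readings to an RGB filter color (hypothetical mapping).

    A quiet room gives blue, a busy room gives red, with green in between.
    """
    if not infrared_values or max_value <= 0:
        level = 0.0
    else:
        mean = sum(infrared_values) / len(infrared_values)
        # Normalize to [0, 1] so the hue stays in a valid range.
        level = min(max(mean / max_value, 0.0), 1.0)
    # Hue 240 degrees (blue) for no flow, 0 degrees (red) for maximum flow.
    hue = (1.0 - level) * 240.0 / 360.0
    red, green, blue = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(red * 255), round(green * 255), round(blue * 255))


if __name__ == "__main__":
    print(flow_to_rgb([0, 0, 0], max_value=100))
    print(flow_to_rgb([100, 100], max_value=100))
```

Running the mapping on the device, rather than on the server, matches the edge computing principle mentioned above: the broker only delivers raw values and each smartphone derives its own effects.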

Usage information (such as framed artwork, features enabled on it and view time) is sent to the broker, using Paho Javascript APIs, on a specific topic identified by a device ID via MQTT, a lightweight and widely adopted messaging protocol designed for constrained devices. The app pushes data in real-time using this unique randomly generated ID, therefore information is collected anonymously in order to guarantee users’ privacy. The device ID is also used to correctly count the number of views of artworks by associating a view with each artwork the visitor interacts with within a session.
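As a rough sketch of the idea, the snippet below builds an anonymous usage message with the fields listed for smartphone messages in this article (deviceID, feature, usageTime, artworkID, newArtwork). It is written in Python for brevity, while the real app uses JavaScript with the Paho client. The topic layout `arte/usage/<deviceID>`, the helper names and the sample values are assumptions, and the actual publishing step is omitted.

```python
import json
import uuid


def new_device_id() -> str:
    """Generate a random, session-scoped ID that carries no personal data."""
    return uuid.uuid4().hex


def usage_topic(device_id: str) -> str:
    """Return the topic for a device (hypothetical naming scheme)."""
    return f"arte/usage/{device_id}"


def usage_message(device_id, artwork_id, feature, usage_time, new_artwork) -> str:
    """Serialize one usage event as a JSON string."""
    return json.dumps({
        "deviceID": device_id,
        "feature": feature,
        "usageTime": usage_time,    # seconds the feature was active (assumed unit)
        "artworkID": artwork_id,
        "newArtwork": new_artwork,  # True the first time this artwork is framed in the session
    })


if __name__ == "__main__":
    device_id = new_device_id()
    print(usage_topic(device_id))
    print(usage_message(device_id, "artwork-1", "storytelling", 12.5, True))
```

Because the ID is random and regenerated per session, the backend can count views per device without learning who the visitor is.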

The application also receives data sent by people counting boards scattered throughout the museum by subscribing to a specific topic at the message broker in order to display real-time crowding situations of the rooms through a scrollable list. In this way, visitors can check the accessibility of the next rooms they want to visit and, therefore, decide to enter them later on if rooms are currently non-accessible or too crowded for them.

The website is implemented using the main web languages (HTML, CSS and JavaScript) and offers a Bootstrap-based frontend for the museum’s managers. Information is presented in a simple and comprehensible dashboard that makes extensive use of charts. The dashboard allows managers to add, modify and remove information about a specific work of art. For analytics purposes, it can display information about the visitors’ interaction with every artwork (e.g. to know the favorite artworks), the daily visitors flow of the museum (e.g. to monitor the museum trend and income) and the number of visitors of every room, both in real time and in absolute terms (e.g. to know the most visited room), by querying the database via the JavaScript APIs provided by Amazon AWS.

The broker runs on an online server, using Amazon AWS IoT services. It makes use of the MQTT protocol, which offers lightweight message exchange. The broker's role is to forward messages published by the visitors’ devices and the STM boards to the database (persistence layer) through rules that trigger the associated AWS Lambda functions. The database is then queried by the dashboard website to display information.

Users’ privacy is guaranteed by the random generation of a device ID which is used to properly count artworks’ views without repetition during a session.

Broker received messages are JSONs of the following form:

- { <deviceID>, <feature>, <usageTime>, <artworkID>, <newArtwork> }

- { <roomID>, <datetime>, <peopleCurrent>, <peopleTotal>, <crowdingPercentage>, <infraredValues> }

respectively submitted by the smartphones and the MQTT-SN bridge on different topics.

MQTT-SN bridge received messages are JSONs of the following form:

- { <roomID>, <datetime>, <peopleCurrent>, <peopleTotal> }

- { <roomID>, <datetime>, <infraredSensors>, <peopleCurrent>, <peopleTotal>, <crowdingPercentage> }

- { <roomID>, <datetime>, <value> }

respectively submitted by the people counting board at the museum entrance, the people counting boards at the rooms entrance and the motion detection boards on topics identified by the room ID.

AWS Cognito

Cognito is an Amazon web service for secure user sign-up, sign-in, and access control. In the ARte architecture, it is mainly used to let the client software (the ARte web app and the dashboard website) communicate with backend resources protected by server-side access control, by defining AWS IAM policies for Cognito roles.

Furthermore, we used Cognito to implement sign-in for museum managers in the dashboard website, but by design not sign-up, as we decided to provide them with server-side-created accounts when needed. In this way, arbitrary users cannot sign up and access museum information.

AWS Lambda

Lambda is a serverless computing service in which we defined functions triggered by the AWS IoT Message Broker: specific rules are executed when a message is received on a given MQTT topic. We implemented two Lambda functions, triggered respectively by messages sent by the STM boards and by messages sent by the smartphones. Both functions (available in the src/server directory) perform server-side input validation and add or update entries in the corresponding database tables.
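To give an idea of what such a function might look like, here is a minimal Python sketch of server-side validation for a room message. It is not the code from src/server: the expected field types, the error responses and the handler body are assumptions, and the database write is left as a comment. It also assumes the IoT rule passes the parsed JSON payload as the `event` argument.

```python
# Expected fields of a room message and their assumed Python types.
ROOM_FIELDS = {
    "roomID": int,
    "datetime": str,
    "peopleCurrent": int,
    "peopleTotal": int,
    "crowdingPercentage": (int, float),
    "infraredValues": list,
}


def validate_room_message(message):
    """Return a cleaned copy of the message or raise ValueError."""
    if not isinstance(message, dict):
        raise ValueError("message must be a JSON object")
    for field, expected in ROOM_FIELDS.items():
        if field not in message:
            raise ValueError(f"missing field: {field}")
        value = message[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"invalid type for field: {field}")
    if message["peopleCurrent"] < 0 or message["peopleTotal"] < 0:
        raise ValueError("people counters must not be negative")
    # Keep only the known fields, so unexpected data never reaches the table.
    return {field: message[field] for field in ROOM_FIELDS}


def lambda_handler(event, context=None):
    """Entry point with the standard Lambda handler signature."""
    try:
        item = validate_room_message(event)
    except ValueError as error:
        return {"statusCode": 400, "body": str(error)}
    # Here the real function would add or update the entry for item["roomID"].
    return {"statusCode": 200, "body": "ok"}
```

Dropping unknown fields and rejecting wrong types before writing is one simple way to keep malformed or malicious payloads out of the tables.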

ARte Database

The ARte database is implemented using Amazon DynamoDB, a fully managed NoSQL database service that provides fast and predictable performance with seamless scalability. It contains three relations: the first manages web app data and artworks’ information, the second deals with real-time data collected by the people counting boards placed inside the museum rooms and the third stores information about the daily visitors flow at the museum entrance. Here is a short description of how each table works:

- Daily Relation: it stores the daily flow of people inside the museum in order to track the museum's trend during opening hours. Its entries are periodically updated by a people counting board placed at the museum’s entrance and are as follows:

{ <date>, <peopleTotal>, <timesAndPeople> }

where date is the primary key and denotes the date on which data are collected, peopleTotal represents the total number of people who visited the museum on that date, and timesAndPeople is an array of (people, time) tuples denoting the number of visitors in the museum at that specific time.

- Rooms Relation: this relation stores information about the current and total number of people who visited each room of the museum. The information is periodically received by AWS Lambda functions triggered by the MQTT broker rules and is stored in the following format:

{ <roomID>, <crowding>, <datetime>, <infraredValues>, <peopleCurrent>, <peopleTotal> }

where the roomID attribute is the primary key, crowding is the percentage of crowding of the room, datetime refers to the datetime of the STM board when the message is sent, infraredValues is an array of values collected by the infrared sensors in the room, peopleCurrent represents the number of people in the room at the specified datetime and peopleTotal is the total number of visitors that entered the room.

- Artworks Relation: it holds information about each work of art, including statistics on the features that visitors activated on it. The table is of interest to the museum managers because they can learn about the most liked artworks and the preferred interactions with them. Other attributes make it possible to adapt to museum layout changes. Its entries are as follows:

{ <artworkID>, <author>, <available>, <datetime>, <description>, <keyword>, <model3D>, <photo>, <room>, <title>, <featuresStats> }

where the artworkID attribute is the primary key, author is the artwork’s author, available denotes whether the work of art is currently exhibited in the museum, datetime refers to the datetime at which museum managers inserted or updated the entry via the dashboard website, description provides historical details about the artwork, keyword is used for searching an item in the table, model3D and photo are URLs for the corresponding resources, room is the ID of the room in which the artwork is located, title is the artwork’s title and featuresStats is a JSON object used to store information about every feature activated on the work of art. The featuresStats object is as follows:

{ <views>, <viewTime>, <featureName1>, …, <featureNameN> }

where views denotes the number of times that the work of art has been displayed in the web app (one view for each session), viewTime is the total time that visitors have viewed the artwork via the web app and featureNameX is in turn a JSON object containing views and viewTime for the specified feature.
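The way a usage message could update the featuresStats object can be sketched in a few lines of Python. This is an illustration under assumptions, not the project's Lambda code: the function name is hypothetical, the newArtwork flag is assumed to mark the first view of an artwork in a session, and the total viewTime is assumed to accumulate the time spent on every feature.

```python
def record_usage(features_stats, feature, usage_time, new_artwork):
    """Update a featuresStats dictionary in place with one usage event."""
    features_stats.setdefault("views", 0)
    features_stats.setdefault("viewTime", 0)
    # Count the artwork view only once per session.
    if new_artwork:
        features_stats["views"] += 1
    features_stats["viewTime"] += usage_time
    # Each feature keeps its own nested views/viewTime counters.
    per_feature = features_stats.setdefault(feature, {"views": 0, "viewTime": 0})
    per_feature["views"] += 1
    per_feature["viewTime"] += usage_time
    return features_stats


if __name__ == "__main__":
    stats = {}
    record_usage(stats, "storytelling", 30, new_artwork=True)
    record_usage(stats, "contextualization", 20, new_artwork=False)
    print(stats)
```

With the two calls above, the artwork ends up with one view and 50 seconds of total view time, while each feature holds its own counters.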

The first thing to do, in order to make the system work, is to configure the MQTT-SN broker, that is, the Mosquitto Really Small Message Broker (RSMB), contained in the src/mosquitto.rsmb/rsmb/src folder. Since we have already set up the broker, you can simply run it by typing the following command in the Linux console once you are in the above folder:

> ./broker_mqtts config.conf

Now, the broker will wait for connections on port 1885 for MQTT-SN and 1886 for MQTT, with IPv6 enabled.

As you can see in the image above, we will communicate through the MQTT-SN channel using the specified port, so we just have to configure the application that will communicate with this object.

In order to implement an application that connects to the broker, we will use the RIOT-OS emulator. First, we must configure some global addresses, as the RSMB doesn't seem to be able to handle link-local addresses. For a single RIOT native instance, once we are in the src/RIOT folder, we must:

- Set up the interfaces and the bridge using the RIOT's tapsetup script:

> sudo ./RIOT/dist/tools/tapsetup/tapsetup -c <N_INTERFACES>

The number of interfaces to open depends on the number of boards you have to emulate.

- Assign a site-global prefix to the tapbr0 interface:

> sudo ip a a fec0:affe::1/64 dev tapbr0

- Start the RIOT-OS application for the type of STM32 board we are interested in, from the corresponding folder contained in ../Boards/, by typing the following command:

> make all term PORT=tap0

Note that there are several types of sensor boards (listed in the previous sections: counters and infrared sensors for each room, or for the museum entrance) and the value of the PORT attribute must be changed for each initialized sensor, be it a museum_counter, a room_counter or a room_infrared sensor.

- Assign a site-global address to every RIOT-OS instance:

> ifconfig 5 add fec0:affe::99

It is necessary to assign a different site-global address to each RIOT-OS instance, so that the instances do not interfere with each other.
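When several boards are emulated, the distinct addresses can be generated rather than typed by hand. The following Python helper is a small sketch of that idea: the function name and the choice of counting upwards from ::99 are assumptions, while the fec0:affe::/64 prefix and the interface number 5 come from the commands above.

```python
import ipaddress


def instance_addresses(count, prefix="fec0:affe::/64", first_host=0x99):
    """Return `count` distinct site-global addresses inside the prefix."""
    network = ipaddress.IPv6Network(prefix)
    # Adding an integer to an IPv6Address yields the address at that offset.
    return [str(network.network_address + first_host + i) for i in range(count)]


if __name__ == "__main__":
    # Print the command to type in each RIOT-OS instance's shell.
    for address in instance_addresses(3):
        print(f"ifconfig 5 add {address}")
```

For three instances this prints commands for fec0:affe::99, fec0:affe::9a and fec0:affe::9b, one per emulated board.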

At this point, the configuration of the applications is finished and we can start to communicate with the MQTT-SN broker.

To achieve it, we will enter the command name (start), the address of the broker, the port and specify different parameters, depending on the type of sensor chosen:

- Museum entrance: start <address> <port> <numRooms>

where numRooms indicates the current number of available rooms in the museum

- Room counter: start <address> <port> <roomID> <roomCapacity> <infraredSensors>

where roomID is the identification number, roomCapacity is the maximum capacity of people for that room, and infraredSensors represents all the active IR sensors in the room.

- Room infrared: start <address> <port> <roomID> <maxValue>

where roomID is the identification number of the room in which the IR sensor is placed, and maxValue is the maximum value generated by the sensor.

You can verify that all the sensors are correctly connected to the broker by checking that they start to send messages, like in the images below.

Now that the RIOT-OS devices and the MQTT-SN broker connected to the application are configured, there is a final step: setting up communication with AWS.

Since a direct connection between RSMB and our cloud service is not supported, we had to create it with a Python script named MQTTSNbridge.py. Its purpose is to receive messages from the STM32 boards and send them to AWS IoT via MQTT; then, thanks to a Lambda function, messages are processed and put into different tables of the DynamoDB service. Messages from boards with the same room ID (both counters and infrared sensors) are merged at the bridge level, so that the database contains one tuple for each room.
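The merge step can be illustrated with a short Python function. This is a sketch built from the message formats listed earlier in this article, not an excerpt from MQTTSNbridge.py: the function name is hypothetical, and the alignment of PIR values with the number of visitors is not reproduced here.

```python
def merge_room_messages(counter_message, infrared_messages):
    """Combine one room counter message with the room's infrared readings.

    counter_message follows the room counter format, infrared_messages is a
    list of { roomID, datetime, value } dictionaries.
    """
    room_id = counter_message["roomID"]
    # Keep only the readings that belong to the same room.
    values = [m["value"] for m in infrared_messages if m["roomID"] == room_id]
    return {
        "roomID": room_id,
        "datetime": counter_message["datetime"],
        "peopleCurrent": counter_message["peopleCurrent"],
        "peopleTotal": counter_message["peopleTotal"],
        "crowdingPercentage": counter_message["crowdingPercentage"],
        "infraredValues": values,
    }


if __name__ == "__main__":
    counter = {"roomID": 1, "datetime": "2020-06-01 10:00:00", "infraredSensors": 2,
               "peopleCurrent": 4, "peopleTotal": 12, "crowdingPercentage": 20}
    infrared = [{"roomID": 1, "datetime": "2020-06-01 10:00:00", "value": 7},
                {"roomID": 2, "datetime": "2020-06-01 10:00:00", "value": 3},
                {"roomID": 1, "datetime": "2020-06-01 10:00:01", "value": 5}]
    print(merge_room_messages(counter, infrared))
```

The result has the shape of the message the bridge sends to the broker, with a single entry per room.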

So, let's see how to run the bridge. You can move into the src/Boards/client_MQTTSN directory and simply run this command:

> python3 MQTTSNbridge.py

The script will start receiving data (from the STM32 boards emulated by RIOT-OS applications) and send it to the AWS MQTT broker.

Now, the dashboard and the web app are ready to be used, according to the functionalities and the sections described in this article.

Evaluation and results

In a system like ARte, performance needs to be evaluated using different metrics, related both to technical aspects and to user experience. ARte addresses two different types of users:

- museum visitors (who want to interact with artworks by using the smartphone web app) need a simple, intuitive and attractive interface

- museum managers (who wish to get museum statistics using the dashboard website) need data displayed in a clear and easily understandable way, as well as a feasible method to update artworks’ information to respond to museum layout changes

Another relevant aspect that we had to take into account is response time, since no user will use an application that isn’t sufficiently smooth and no manager will accept waiting too long for requested data.

In order to achieve these results we also had to take into account the hardware components and verify the correctness of their implementations.

More generally, Key Performance Indicators (KPIs) provide a good understanding of the four main groups of indicators a developer has to pay attention to when designing a new service:

- General indicators

- Quality indicators

- Cost indicators

- Service indicators

User experience is the first major aspect we considered for the ARte project and it concerns the way a user interacts and experiences our service, including the person's perceptions of utility, ease of use, and efficiency.

Since UX is subjective, our aim is for the service to improve the overall user experience in two ways: by offering the museum’s visitors something unique that cannot be experienced without the ARte web app, and by providing the museum's managers, through the dashboard website, with useful information to better organize the layout of the museum itself and/or the guided tours.

The results for a key question of a survey we conducted indicate that the goal was fully achieved, confirming the quality of our work.

Furthermore, during the development of the project we also considered the following aspects as UX evaluation metrics:

- Accessibility: every type of user must be able to interact with the application, even those with disabilities.

- GUI: looks matter, so users highly appreciate a clear, understandable and responsive user interface that is also captivating, with an inspired style compliant with material design guidelines.

- Privacy: users' personal information must remain hidden, so it must be properly protected. To have it at the highest level and given the functionalities of the app, no type of sensitive data has to be stored unless necessary or publicly disclosed.

- Simplicity: the app must be intuitive for any user. Understandable use of features and easily identifiable position on the screen are once again something that has to be taken into account.

- Usability: nobody wants to wait too much for a 3D model to appear, for an action to be performed or for a web page to load. Moreover, features must be bug-free as much as possible.

Therefore, we designed and implemented the ARte architecture and software components always keeping these key aspects in mind.

To evaluate the GUI, Simplicity and Usability we relied on two surveys in which we showed a short demo of the web app, based initially on mockups and then on the actual implementation. The answers to the survey question about the mobile web app confirmed that it is intuitive and easy to use.

As for Accessibility, once the implementation was complete we evaluated both the web app and the dashboard with the tools indicated in the last section of this document, in particular the Web Accessibility website and the a11y color contrast analyzer. Both services reported that all accessibility rules were observed.

As for Privacy, it is ensured by design: ARte does not store any sensitive data about users, only feature-related data. In addition, this data is collected anonymously, by generating a unique device ID for each visitor device using the mobile web app and, by their nature, by the STM boards, so that neither museum managers nor programmers can obtain any personal information about who is using the software. The mobile web app also explicitly states that the user accepts the ARte privacy policy and terms and conditions by starting to use the app. If you are interested in reading them, you can find references on the intro page of the mobile web app.

Quality of Service (QoS) is crucial for the ARte system, so several aspects had to be analyzed from a technical point of view:

- Compliance with standards: the code must adhere to the main good-programming guidelines (safety, security, reliability, testability, maintainability and portability). To accomplish this, we consistently developed code following the modular programming design technique, to ensure maintainability and portability, and tested it in order to avoid harmful behavior. Moreover, we checked the code of the websites with the W3C online tool for HTML correctness and it did not return any error on the main pages of the AR web app and dashboard. Thus, these pages are compliant with the W3C HTML standard. Security is covered in a dedicated item a few lines below.

- Cost: the whole system must not weigh too heavily on the expenses of the museum. The hardware components, which in our case are the STM boards and their sensors, have to be reasonably cheap, and the cloud services have to be chosen looking at the best price/quality ratio. We therefore conducted some market research on all hardware and software components. As for the hardware, the STM32 Nucleo board can be purchased for about €14.00, the STEVAL-IDI009V1 evaluation board for about €32.00 and the X-NUCLEO-53L0A1 expansion board for about €39.00. Cloud service costs are instead zero, because the AWS Educate service is free for students and educators. The hardware prices reported are for a single piece of each component; when buying multiple components the unit price would probably be lower. In any case, we consider this an affordable cost for a museum.

- Latency: the service needs to be efficient, so the values computed by the sensors of the boards and the actions performed by users (including museum managers) must be processed with no delay, or with a minimal tolerable one. Latency was evaluated together with Performance using the Pingdom online tool.

- Performance: it concerns computational complexity, as code execution needs to be fast. Algorithmic optimization and efficiency are the keys to a good service. This is a crucial point on a microprocessor like the one we want to use, as its hardware is not among the best performing. Performance was evaluated using the Pingdom online tool, which provides an overall score for the load times of all elements of the page, based on various criteria. It returned a score of 80 out of 100 for the web app, which is a good score for software that has to load several heavy components like 3D models, images and storytelling audio.

- Scalability: the whole system needs to remain performant as the number of connected visitors, connected museum managers or stored items increases. A new STM board, for example, must be able to be added to the museum without reworking the entire infrastructure. To evaluate it, we read the documentation of the chosen cloud service, and AWS services proved to be really powerful in terms of scalability. DynamoDB has a throughput of up to 40,000 read request units and 40,000 write request units. AWS Lambda can process up to 1,000 concurrent executions and the AWS MQTT Broker up to 500,000 concurrent connections. Finally, AWS Cognito can scale to millions of users.

- Security: the system must be robust against vulnerabilities in order to resist cyberattacks of any kind. To accomplish this, secure communication protocols and correct server-side handling of passwords and keys must be adopted, especially in an IoT service like this. AWS IoT Core connections use RSA keys and certificates. These, plus HTTPS everywhere, satisfy the CIA requirements for data in transit between the AR web app or dashboard and the server, so that no data is exposed. Server-side access control, implemented via Cognito authentication and IAM roles, prevents attackers from performing functionalities or accessing resources to which they are not entitled. Furthermore, server-side input validation, via the triggered Lambda functions, provides protection against injection and XSS attacks. Finally, the absence of known vulnerable libraries (as far as we know), AWS CloudWatch logging and monitoring, and the dashboard website's defense-in-depth for the most critical actions add another important layer of security. We assessed that a really good level of security is applied to the entire infrastructure.

In the current situation (COVID-19 era), it is really difficult to build the IoT environment of the ARte project with physical STM32 boards implementing the proposed features. That’s why we emulated some hardware elements and we did not implement some features.

In the future, the aim is to improve some of the already implemented features, like the framing of the artwork or the interactive music generation, with machine learning algorithms. As regards the framing of the work of art, our goal is to recognize every statue from any angle by training a machine learning algorithm on a collection of pictures taken by the users.

For the interactive music generation, we want again to improve the generation of the music through a machine learning algorithm that can give a more cohesive and complete experience.

The reason we haven't used these algorithms to improve functionalities is their lack of links with the principal topics of the course. Furthermore, they would have required some specific background knowledge.

Other possible improvements could be done in the crowding map section, with a museum map that makes use of the user’s real-time position, and also implementing the missing features. In fact, the surveys’ results showed us that the missing features are really appreciated by users.

If you are interested in the ARte project, check out our repository on GitHub.
