The aim of this project is to build an application that recognizes human activity through a smartphone, using the accelerometer that is now present in almost all devices. The sensor data is analyzed both on the device itself and in the cloud. Cloud processing is done by AWS Lambda Functions, which are invoked through purpose-built APIs. The processed data is then stored in the cloud using AWS's DynamoDB service.

- DynamoDB

First, I created the database tables in DynamoDB. I made two tables: one for the data processed on the device and one for the data processed in the cloud. Both tables have the same fields:

- date: containing the timestamp of the reading;

- activity: containing the detected activity.

To create a new table, click on Create Table. Each table must have a unique name and a primary key that uniquely identifies its items.

Once you click on Create, you're done. A key element that will be used later is the Amazon Resource Name (ARN), which uniquely identifies each AWS resource.
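The same table could also be described programmatically. The sketch below builds the parameters object that DynamoDB's CreateTable operation expects, together with the ARN of the resulting table. It assumes that `date` (a number, in epoch milliseconds) is the partition key, which the text does not state explicitly; the helper names, the account ID and the on-demand billing mode are illustrative only.

```javascript
// Hypothetical helper: parameters for DynamoDB's CreateTable operation.
// Assumption: `date` (epoch milliseconds, type N) is the partition key.
function buildCreateTableParams(tableName) {
  return {
    TableName: tableName,
    // Only key attributes are declared; `activity` needs no definition.
    AttributeDefinitions: [{ AttributeName: 'date', AttributeType: 'N' }],
    KeySchema: [{ AttributeName: 'date', KeyType: 'HASH' }],
    BillingMode: 'PAY_PER_REQUEST' // on-demand capacity; an assumption
  };
}

// DynamoDB table ARNs follow arn:aws:dynamodb:<region>:<account-id>:table/<name>.
function buildTableArn(region, accountId, tableName) {
  return `arn:aws:dynamodb:${region}:${accountId}:table/${tableName}`;
}

const params = buildCreateTableParams('activityEdge');
const arn = buildTableArn('eu-central-1', '123456789012', 'activityEdge'); // placeholder account ID
console.log(JSON.stringify(params, null, 2));
console.log(arn);
```

The ARN built here is the value that the IAM policies in the next section refer to.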

- IAM Role

To access AWS resources you need to create roles, which is done in the IAM (Identity and Access Management) section. Since I also used Lambda Functions in this project, I created three roles:

- policy_dynamodb_cloud: to access the table of data processed on the cloud;

- policy_dynamodb_edge: to access the table of data processed on the device;

- policy_lambda_function: to allow the Lambda Functions to use the data saved on DynamoDB.

We create a new role using the Create Role button; the following window will appear:

Select Lambda as the use case and move on. Now we need to attach policies to our role, because policies are what grant permissions. So let's name our role and add the two policies shown in the following image.

We can proceed with Create Role. The next step is to uniquely identify the resource to which the role refers. We proceed with Add inline policy.

At this point we must enter the following data:

- Service: the service we use, in this case DynamoDB;

- Action: the actions we can perform, in this case reading from and writing to the database;

- Resource: the unique identifier of the database table.

The resource is identified through its ARN, which we saw earlier. Once the ARN has been added we can proceed with Review Policy.

This operation is repeated, in almost the same way, for the other table and for the Lambda Functions.
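The three fields entered in the console end up in a JSON policy document. As a rough sketch, the inline policy for one table might look like the object built below; the helper name and the account ID are hypothetical, and the two actions are an assumption based on the 'scan' and 'put' calls used by the Lambda Functions later on.

```javascript
// Hypothetical helper: an inline policy allowing read/write on a single table.
function buildTablePolicy(tableArn) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Effect: 'Allow',
        // Assumed actions: Scan for the read functions, PutItem for the write ones.
        Action: ['dynamodb:Scan', 'dynamodb:PutItem'],
        Resource: tableArn
      }
    ]
  };
}

const policy = buildTablePolicy(
  'arn:aws:dynamodb:eu-central-1:123456789012:table/activityCloud' // placeholder account ID
);
console.log(JSON.stringify(policy, null, 2));
```

Restricting `Resource` to one table ARN, rather than using a wildcard, keeps each role limited to the table it is meant for.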

- Lambda Function

Once the permissions are in place, we can create the Lambda Functions we need. Lambda Functions run code in response to events and automatically manage the resources that the code requires. In this case I used Node.js to perform the activity recognition calculation. The data required to create a new Lambda Function are the name and the role.

Having previously created the role and its policy, we can tick 'use an existing role' and choose it from the drop-down menu. After that, there are two things left to do: implement the code that will process the data, and add the trigger that will activate the function. From 'add trigger' we select API Gateway, a service we will get to later. As for the code, I implemented four Lambda Functions:

- Two to read data from the previously created tables;

- Two to write data to the tables.

The functions for reading are similar to each other; only the resource they refer to changes. The code is very simple: through the functions made available by the AWS SDK, I connected to the database and accessed the saved data with the 'scan' function. To display only the data of the last hour I used a 'between' expression, which filters the results down to the items entered in the last hour.

```javascript
const AWS = require('aws-sdk');
const docClient = new AWS.DynamoDB.DocumentClient({region: 'eu-central-1'});

exports.handler = function(e, ctx, callback) {
  // time window: from one hour ago until now, in milliseconds
  let nowdate = Date.now();
  let millis = nowdate - 3600*1000;

  let scanningParameters = {
    TableName: 'activityEdge',
    // "date" is a DynamoDB reserved word, hence the #date placeholder
    FilterExpression: "#date between :limitdate and :nowdate",
    ExpressionAttributeNames: {
      "#date": "date"
    },
    ExpressionAttributeValues: {
      ":limitdate": millis,
      ":nowdate": nowdate
    },
    // ScanIndexForward is a Query parameter and has no effect on a Scan,
    // so it is omitted here.
    // Limit caps the items read per call, before the filter is applied.
    Limit: 100
  };

  docClient.scan(scanningParameters, function(err, data){
    if(err){
      callback(err, null);
    } else {
      callback(null, data);
    }
  });
};
```

The functions for writing differ conceptually: one simply saves the data passed to it in the database, while the other first processes the data and then saves the result. Their implementations are very similar.

The data is passed through a REST call with the POST method; in the function it can be retrieved as event.<nameresource>. Once I have the sensor data I can calculate the SMA (Signal Magnitude Area), which allows me to infer the user's activity. If the SMA is below 1.5, the user is still; if it is between 1.5 and 4, the user is walking; if it is higher, the user is running. The data sent by the application is already sampled, an operation carried out in the application that we will see shortly.

```javascript
const AWS = require('aws-sdk');
const docClient = new AWS.DynamoDB.DocumentClient({region: 'eu-central-1'});

exports.handler = function(event, ctx, callback) {
  // accelerometer values sent by the application
  let x = parseFloat(event.x);
  let y = parseFloat(event.y);
  let z = parseFloat(event.z);

  // Signal Magnitude Area of the sample
  let sma = Math.abs(x) + Math.abs(y) + Math.abs(z);

  // else-if chain so that the boundary values 1.5 and 4 are also classified
  let act;
  if (sma < 1.5) {
    act = "still";
  } else if (sma <= 4) {
    act = "walking";
  } else {
    act = "running";
  }

  var params = {
    Item: {
      date: Date.now(),
      activity: act
    },
    TableName: 'activityCloud'
  };

  docClient.put(params, function(err, data){
    if(err){
      callback(err, null);
    } else {
      callback(null, data);
    }
  });
};
```

Once the data has been received, the second function saves it directly in the database through the 'put' function.

- API Gateway

We have reached the last step: creating the trigger that activates the Lambda Function. The API Gateway service is used to create, publish and manage REST APIs capable of accessing AWS services. In this case I created two REST APIs: one to send and receive the data processed by the device and the other to send and receive the data processed in the cloud. Creating one is very simple: once you click on the 'create API' button, just enter a name and the first step is done.

From the 'Actions' button we add a new Resource. Once the name has been chosen, it is important to tick 'Enable CORS', otherwise we could not access our resource from an external domain.

Once our resource has been created, we can add the 'GET' and 'POST' methods, again from the 'Actions' menu.

We select Lambda Function as the integration type and, at the bottom, choose the function we need from the drop-down menu. We finish with the 'Save' button.

The 'GET' method needs no further configuration. For 'POST', in addition to repeating the previous step, we must specify how the request is passed on. After assigning the Lambda Function, the following window will appear:

By clicking on 'Integration Request' we can add the 'Body' of the request. From the 'Mapping Templates' section we click on 'Add mapping template' and add the content type 'application/json'. Once this is done, an editor appears in which to insert the body.
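With a non-proxy Lambda integration, whatever the mapping template produces is what the function receives as its `event` argument. The sketch below imitates that step locally for a template that passes the `x`, `y` and `z` fields through unchanged. The template contents and the helper names `applyTemplate` and `readAxes` are assumptions, since the body actually entered in the editor is not shown here.

```javascript
// Hypothetical stand-in for the API Gateway mapping step: it takes the raw
// JSON request body and returns the object handed to the Lambda as `event`.
function applyTemplate(rawBody) {
  const body = JSON.parse(rawBody);
  return { x: body.x, y: body.y, z: body.z };
}

// The handler can then read the values as event.x, event.y and event.z.
function readAxes(event) {
  return [event.x, event.y, event.z].map(parseFloat);
}

const event = applyTemplate('{"x": 0.2, "y": -0.1, "z": 9.8}');
console.log(readAxes(event));
```

This is why the cloud-side write function can use `event.x` directly instead of parsing a request body itself.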

Once this step is completed, all that remains is to enable CORS and deploy the APIs. Again from the 'Actions' menu, we enable CORS, leaving the default configuration unchanged.

At this point we can deploy the API: again from the 'Actions' menu we select 'Deploy API' and create a new stage. That's it.

From the 'Stages' section in the side menu, you can find the API endpoint.
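The endpoint shown there is composed of the API ID, the region, the stage name and the resource path. A small sketch of that structure follows; the API ID `abc123` is a placeholder, and the stage and resource names are taken from the URLs used later in the application code.

```javascript
// Invoke URLs for a deployed REST API have the form
// https://<api-id>.execute-api.<region>.amazonaws.com/<stage>/<resource>.
function buildInvokeUrl(apiId, region, stage, resource) {
  return `https://${apiId}.execute-api.${region}.amazonaws.com/${stage}/${resource}`;
}

// 'abc123' is a placeholder API ID; 'edge' is the stage, 'entries' the resource.
const url = buildInvokeUrl('abc123', 'eu-central-1', 'edge', 'entries');
console.log(url);
```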

- Mobile Application

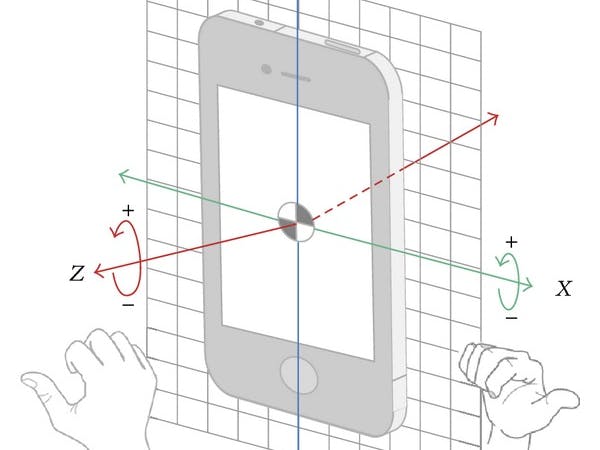

The application was developed with HTML5, Bootstrap and JavaScript, and deployed via GitHub Pages, a very convenient feature for developing projects because it can host a website directly from the repository. The Generic Sensor API makes it possible to access the device's sensors from the web platform. With a few JavaScript calls I was able to read the accelerometer data, from which the user's activity can be recognized.

Recognition is based on the value of the SMA (Signal Magnitude Area); for greater accuracy the data is sampled. The SMA is calculated every 5 seconds and, after 12 readings (60 seconds), the average is taken. Every 5 seconds the send_data_cloud() function is called and sends the raw data to the cloud through the APIs built previously. The send_data_edge() function, instead, is called every minute and sends the activity detected by the device.
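As an illustration of those calls, here is a minimal sketch of reading the accelerometer with the Generic Sensor API. The wrapper name `startAccelerometer`, the 10 Hz frequency and the `latest` variable are assumptions for this example and not necessarily what the application uses; the constructor is passed as a parameter so that the function simply returns null where the API is unavailable.

```javascript
// Starts reading the accelerometer via the Generic Sensor API.
// SensorCtor defaults to the browser's Accelerometer; returns null when absent.
function startAccelerometer(onReading, SensorCtor = globalThis.Accelerometer) {
  if (typeof SensorCtor !== 'function') {
    return null; // API not available (e.g. unsupported browser)
  }
  const sensor = new SensorCtor({ frequency: 10 }); // 10 Hz is an arbitrary choice
  sensor.addEventListener('reading', () => {
    // x, y and z hold the acceleration along the three device axes
    onReading({ x: sensor.x, y: sensor.y, z: sensor.z });
  });
  sensor.addEventListener('error', (event) => {
    console.error('Accelerometer error:', event.error);
  });
  sensor.start();
  return sensor;
}

// Usage in the page: keep the most recent reading in a variable.
let latest = null;
startAccelerometer((reading) => { latest = reading; });
```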
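The windowed averaging described above can be sketched as follows. The function names and the accumulator are hypothetical, and treating the exact boundary values 1.5 and 4 as "walking" is an assumption; the thresholds themselves are the ones given earlier for the cloud function.

```javascript
const WINDOW_SIZE = 12; // 12 readings x 5 s = 60 s

// SMA of a single sample, as used in this project: |x| + |y| + |z|.
function sma(x, y, z) {
  return Math.abs(x) + Math.abs(y) + Math.abs(z);
}

// Below 1.5 is still, up to 4 is walking, anything higher is running.
function classify(value) {
  if (value < 1.5) return 'still';
  if (value <= 4) return 'walking';
  return 'running';
}

// Hypothetical accumulator: collects SMA values and, once the window is full,
// returns the activity for the averaged window and starts over.
function createWindow(size = WINDOW_SIZE) {
  let values = [];
  return function push(x, y, z) {
    values.push(sma(x, y, z));
    if (values.length < size) return null; // window not complete yet
    const mean = values.reduce((a, b) => a + b, 0) / values.length;
    values = [];
    return classify(mean);
  };
}

const push = createWindow();
let activity = null;
for (let i = 0; i < WINDOW_SIZE; i++) {
  activity = push(0.5, 1.0, 0.7); // one reading every 5 seconds in the real app
}
console.log(activity);
```

Averaging over a full minute smooths out isolated spikes, so a single abrupt movement does not flip the detected activity.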

```javascript
var API_CLOUD = 'https://...amazonaws.com/cloud/cloud';
var API_EDGE = 'https://....amazonaws.com/edge/entries';

// x_sensor, y_sensor, z_sensor and user_state are set elsewhere in the app

// sending sensor data to the cloud
function send_data_cloud() {
  $.ajax({
    type: 'POST',
    url: API_CLOUD,
    data: JSON.stringify({
      "x": x_sensor,
      "y": y_sensor,
      "z": z_sensor
    }),
    contentType: "application/json",
    success: function (data) {
      console.log("sending sensor data successful");
    }
  });
  return false;
}

// send user activity to the cloud
function send_data_edge() {
  $.ajax({
    type: 'POST',
    url: API_EDGE,
    data: JSON.stringify({"activity": user_state}),
    contentType: "application/json",
    success: function (data) {
      console.log("sending user activity successful");
    }
  });
  return false;
}

// raw data every 5 seconds, detected activity every minute
setInterval(() => send_data_cloud(), 5000);
setInterval(() => send_data_edge(), 60000);
```

- Web Site

The last piece is the website that displays all the processed data. The site consists of a simple HTML5 page which, through the APIs created previously, accesses the data saved in the cloud and shows the history of the last hour. From this web page you can see both the data processed by the devices and the data processed directly in the cloud. Here too the graphics are very simple: there are two tables showing the detected activity with the respective detection time.

```javascript
// endpoint Lambda function AWS
var API_CLOUD = 'https://...amazonaws.com/cloud/cloud';
var API_EDGE = 'https://...amazonaws.com/edge/entries';

// last hour data calculated on the device
$(document).ready(function () {
  $.ajax({
    type: 'GET',
    url: API_EDGE,
    success: function (data) {
      $('#edge').html('');
      data.Items.forEach(function (activityItem) {
        let date_activity = new Date(activityItem.date).toLocaleString();
        $('#edge').append('<tr>' + '<td>' + date_activity + '</td>'
          + '<td>' + activityItem.activity + '</td>' + '</tr>');
      });
    }
  });
});

// last hour data calculated on Cloud
$(document).ready(function () {
  $.ajax({
    type: 'GET',
    url: API_CLOUD,
    success: function (data) {
      $('#cloud').html('');
      data.Items.forEach(function (activityItem) {
        let date_activity = new Date(activityItem.date).toLocaleString();
        $('#cloud').append('<tr>' + '<td>' + date_activity + '</td>'
          + '<td>' + activityItem.activity + '</td>' + '</tr>');
      });
    }
  });
});

// reload the page every 60 seconds
setInterval(() => location.reload(), 60000);
```

All the code can be found in my repository. The mobile application can be reached from here, and the dashboard here.

Have fun!
