As a lonely child growing up, I often played the simple rock-paper-scissors hand game by myself. Today I'm an Android developer, and I'm also a fan of machine learning technologies.

I decided to build a toy robot that can play the hand game with a human and can also detect the opponent's gesture to decide who wins the game.
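Deciding the winner is the simplest piece of logic in the robot. The sketch below is a minimal illustration, not the project's actual code; the class, enum, and method names are hypothetical. It relies on a cyclic ordering trick: each gesture beats the one listed just before it (paper beats rock, scissors beats paper, rock beats scissors).

```java
/** Minimal sketch of rock-paper-scissors outcome logic (hypothetical names, not the project's code). */
public class RockPaperScissors {

    /** The declaration order matters: each gesture beats the previous one, cyclically. */
    public enum Gesture { ROCK, PAPER, SCISSORS }

    public enum Outcome { ROBOT_WINS, PLAYER_WINS, DRAW }

    /** Compares the robot's gesture with the player's using modular arithmetic on the enum order. */
    public static Outcome judge(Gesture robot, Gesture player) {
        int n = Gesture.values().length;
        // floorMod keeps the difference in [0, n), even when it is negative
        int diff = Math.floorMod(robot.ordinal() - player.ordinal(), n);
        if (diff == 0) {
            return Outcome.DRAW;
        }
        // diff == 1 means the robot's gesture comes right after the player's, so it wins
        return diff == 1 ? Outcome.ROBOT_WINS : Outcome.PLAYER_WINS;
    }

    public static void main(String[] args) {
        System.out.println(judge(Gesture.PAPER, Gesture.ROCK));         // ROBOT_WINS
        System.out.println(judge(Gesture.ROCK, Gesture.PAPER));         // PLAYER_WINS
        System.out.println(judge(Gesture.SCISSORS, Gesture.SCISSORS));  // DRAW
    }
}
```

Encoding the rules as an ordering rather than a table of `if` statements keeps the logic to a single comparison.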

Here is my project explained in the xkcd way:

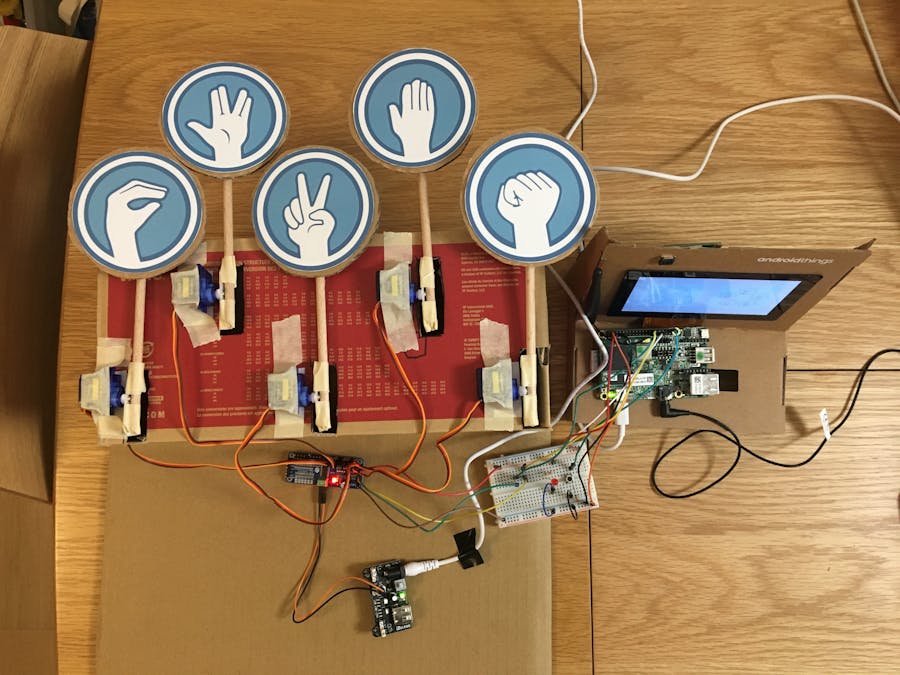

For the hardware, I mainly used the Android Things NXP i.MX7D starter kit, which contains a camera module (the robot's eyes) and a 16-channel PWM/servo driver that controls 5 servo motors. The robot's arms are made of wooden sticks and cardboard.
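To move a servo, the driver outputs a pulse whose width encodes the target angle. The sketch below assumes a PCA9685-style driver (12-bit counter, so 4096 ticks per PWM period) running at 50 Hz, and a servo whose pulse goes from 1.0 ms at 0 degrees to 2.0 ms at 180 degrees; the article names neither the chip nor the servo model, so check your own datasheets, since many servos use a wider range. The class and method names are hypothetical.

```java
/**
 * Hypothetical helper: converts a servo angle into the 12-bit tick count a
 * PCA9685-style PWM driver keeps the output high. All constants are assumptions.
 */
public class ServoMath {
    static final double PWM_FREQUENCY_HZ = 50.0; // assumed; typical for hobby servos
    static final int RESOLUTION = 4096;          // 12-bit counter per PWM period
    static final double MIN_PULSE_MS = 1.0;      // assumed pulse width at 0 degrees
    static final double MAX_PULSE_MS = 2.0;      // assumed pulse width at 180 degrees

    /** Linearly maps an angle, clamped to [0, 180], to a pulse width in milliseconds. */
    public static double angleToPulseMs(double angleDeg) {
        double clamped = Math.max(0.0, Math.min(180.0, angleDeg));
        return MIN_PULSE_MS + (MAX_PULSE_MS - MIN_PULSE_MS) * clamped / 180.0;
    }

    /** Converts a pulse width into the number of ticks (out of 4096) the output stays high. */
    public static int pulseMsToTicks(double pulseMs) {
        double periodMs = 1000.0 / PWM_FREQUENCY_HZ; // 20 ms at 50 Hz
        return (int) Math.round(pulseMs / periodMs * RESOLUTION);
    }

    public static void main(String[] args) {
        for (double angle : new double[] {0, 90, 180}) {
            System.out.printf("%.0f deg -> %d ticks%n", angle, pulseMsToTicks(angleToPulseMs(angle)));
        }
    }
}
```

Under these assumptions, 0, 90, and 180 degrees map to 205, 307, and 410 ticks respectively, which shows how small the usable slice of the 4096-tick period is for a standard servo.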

One important thing to remember: to reduce noise on the servo motors, power the servo driver from a separate supply rather than from your development board.

The final circuit looks like this:

For the software, the application deployed on the Pico board is built with the Android Things SDK and some common Android APIs (TextToSpeech, Camera).

For the machine learning part, the model is a TensorFlow Lite model. To train it, I needed to collect as many hand-gesture images as possible. To do so, I:

- crawled images from the internet

- took photos of my own hands

- asked friends and co-workers to send me photos through a mobile web application that I developed with Node.js/Express, Firebase Cloud Functions, and Firebase Storage, and deployed with Firebase Hosting
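Once trained, an image classifier like this one typically produces one score per gesture for each camera frame (with the TensorFlow Lite Java API, `Interpreter.run(input, output)` fills such an output array). The sketch below assumes the model outputs exactly three scores in a fixed order (rock, paper, scissors); that order and the confidence threshold are hypothetical and must match how the model was actually trained. It picks the best-scoring label and falls back to "unknown" when the model is not confident, for example when no hand is in the frame.

```java
import java.util.Arrays;
import java.util.List;

/** Sketch of post-processing a classifier's scores into a gesture label (hypothetical names). */
public class GesturePostProcessor {
    // Hypothetical label order; it must match the order used at training time.
    static final List<String> LABELS = Arrays.asList("rock", "paper", "scissors");

    /** Returns the highest-scoring label, or "unknown" if its score is below the threshold. */
    public static String topLabel(float[] scores, float threshold) {
        if (scores.length != LABELS.size()) {
            throw new IllegalArgumentException(
                "expected " + LABELS.size() + " scores, got " + scores.length);
        }
        int best = 0;
        for (int i = 1; i < scores.length; i++) {
            if (scores[i] > scores[best]) {
                best = i;
            }
        }
        return scores[best] >= threshold ? LABELS.get(best) : "unknown";
    }

    public static void main(String[] args) {
        // In the app, these scores would come from the TensorFlow Lite interpreter's output array.
        System.out.println(topLabel(new float[] {0.10f, 0.75f, 0.15f}, 0.5f)); // paper
        System.out.println(topLabel(new float[] {0.40f, 0.35f, 0.25f}, 0.5f)); // unknown
    }
}
```

The threshold is a design choice: it lets the robot ask the player to show the gesture again instead of confidently announcing a wrong winner.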

All the code and scripts can be found in the GitHub repository.

## In action

The first version looks like this:

## Next steps

In the future, I hope the robot will be able to learn from its mistakes progressively by collecting images with the player's consent, which means:

- building a feedback channel for the human player to indicate whether the recognition is correct

- building a training pipeline so the model can be retrained on the latest photos and redeployed to the application
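A feedback channel like this needs somewhere to record what the model predicted versus what the player says was correct. The sketch below is a hypothetical in-memory log, not part of the project: it stores a round only when the player consents, reports the model's accuracy on those rounds, and lists the misrecognized samples that a future training pipeline could use. The image path field and all names are assumptions; a real app would persist this data (for example in the Firebase backend already used for photo collection) rather than keep it in memory.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical in-memory feedback log for collecting consented, player-labelled samples. */
public class FeedbackLog {

    /** One round of feedback. */
    static final class Feedback {
        final String imagePath; // hypothetical: where the captured frame is stored
        final String predicted; // label produced by the model
        final String confirmed; // label the player says is correct

        Feedback(String imagePath, String predicted, String confirmed) {
            this.imagePath = imagePath;
            this.predicted = predicted;
            this.confirmed = confirmed;
        }

        boolean isCorrect() {
            return predicted.equals(confirmed);
        }
    }

    private final List<Feedback> entries = new ArrayList<>();

    /** Stores the round only when the player consented to sharing the photo. */
    public void record(String imagePath, String predicted, String confirmed, boolean consent) {
        if (consent) {
            entries.add(new Feedback(imagePath, predicted, confirmed));
        }
    }

    public int size() {
        return entries.size();
    }

    /** Fraction of stored rounds the model recognized correctly; NaN if nothing is stored. */
    public double accuracy() {
        if (entries.isEmpty()) {
            return Double.NaN;
        }
        long correct = entries.stream().filter(Feedback::isCorrect).count();
        return (double) correct / entries.size();
    }

    /** Misrecognized rounds, labelled with the player's answer, as candidate training data. */
    public List<Feedback> mistakes() {
        List<Feedback> result = new ArrayList<>();
        for (Feedback f : entries) {
            if (!f.isCorrect()) {
                result.add(f);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        FeedbackLog log = new FeedbackLog();
        log.record("round1.jpg", "rock", "rock", true);
        log.record("round2.jpg", "paper", "scissors", true);
        log.record("round3.jpg", "rock", "paper", false); // no consent: not stored
        System.out.println(log.size());            // 2
        System.out.println(log.accuracy());        // 0.5
        System.out.println(log.mistakes().size()); // 1
    }
}
```

Tracking accuracy over consented rounds also gives a simple signal for deciding when a retrained model is worth redeploying.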

You can find my slides explaining the whole project here:

https://speakerdeck.com/jinqian/play-rock-paper-scissors-spock-lizard-with-your-android-things

