The VirtualReflex is a motion-tracking system that aims to go beyond commercial VR, which typically engages only the user's hearing and sight. It adds fine-tuned hand and full-body controls to create a more appealing and realistic virtual reality.

Explanation

For the visual aspect of the VirtualReflex, the PC or laptop converts the tracking input into the game's visuals and sends them to the phone set in the headset. Using the Trinus or Gagagu software, the phone displays those streamed PC visuals to your eyes, with 3D effects produced through the lenses of the VR headset.

The BOSE QuietComfort 15 noise-cancelling headphones attach to the phone, PC, or laptop. Because they are binaural, meaning a different sound is produced in each ear, and use active noise cancellation, they provide isolated, binaural audio for the VR experience.

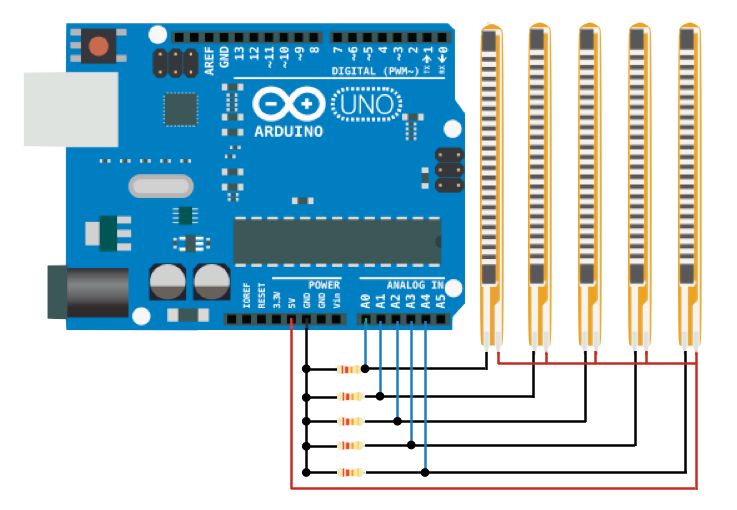

The tactile dimension of the VirtualReflex is built around the NXP Freedom Board, the centerpiece of a glove with 5 flex sensors, one wired to each finger. The flex sensors measure how far each finger is bent. The board's quick "wake-up" is essential here, because the glove will be used spontaneously but shut off when not in use to avoid wasting power. The readings are passed to the Freedom Board, which is suited to high-performance wearables and can handle the amount of information the glove produces. That data is then sent to the PC or laptop, which interprets the movements as in-game actions; these appear in the VR visuals once they are sent back to the phone.

Since the Freedom Board offers multiple options for additional connectivity, if possible I'll also integrate a temperature sensor, which would send the temperature to a heater and AC unit to give the user the dimension of heat and the lack of heat as well. That same connectivity would also allow me to install NXP's 6-axis sensor, a sensor far more advanced than the 4-axis sensors in iPhones. The exact tilt of the user's hand, sent to the PC or laptop, would let the user's in-game hands be drawn at the correct angles while improving the overall fluidity of in-game gesture visuals. All of this information would run through the Kinetis SDK.
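To make the glove data flow more concrete, here is a minimal Python sketch of what the PC side might do with one sample from the glove. It is not the project's actual code and does not use the Kinetis SDK. The calibration constants, the function names (`bend_fraction`, `tilt_degrees`, `hand_state`), and the output layout are all assumptions made for illustration. It maps each raw flex-sensor reading to a value between 0 (straight) and 1 (fully bent), and estimates hand tilt from a static accelerometer reading.

```python
import math

# Hypothetical calibration: raw ADC counts with a finger held straight and
# fully bent. Real values depend on the sensor, wiring, and ADC resolution.
FLAT_COUNTS = 12000
BENT_COUNTS = 40000


def bend_fraction(raw, flat=FLAT_COUNTS, bent=BENT_COUNTS):
    """Map a raw flex reading to 0.0 (straight) .. 1.0 (fully bent), clamped."""
    fraction = (raw - flat) / (bent - flat)
    return max(0.0, min(1.0, fraction))


def tilt_degrees(ax, ay, az):
    """Estimate (pitch, roll) in degrees from a static accelerometer reading.

    Assumes the hand is not accelerating, so the sensor measures gravity only.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


def hand_state(raw_fingers, accel):
    """Bundle five finger readings and one accelerometer sample (assumed layout)."""
    pitch, roll = tilt_degrees(*accel)
    return {
        "fingers": [bend_fraction(raw) for raw in raw_fingers],
        "pitch": pitch,
        "roll": roll,
    }


if __name__ == "__main__":
    sample = hand_state([12000, 26000, 40000, 45000, 5000], (0.0, 0.0, 1.0))
    print(sample)
```

Clamping keeps noisy or out-of-range readings from bending an in-game finger past its limits. In a real glove the flat and bent values would likely need to be calibrated per finger and per user.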

Finally, the entire body is tracked using the Kinect Xbox 360 Sensor's depth camera. With the Kinect SDK and connecting cable, the PC or laptop uses the FAAST software to track the user's skeletal movements, which are sent back to the phone to be integrated into the VR's visuals. This gives the user the so far unheard-of ability to control their avatar in VR through their movements in the real world. Although this technology could be used for hand tracking as well, the flex sensor system is far more accurate for individual fingers, which is essential for effective hand controls.
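As an illustration of how tracked skeleton joints could drive an avatar, the sketch below computes the angle at a joint (for example the elbow) from three 3D joint positions. It is a generic geometry example in Python, not FAAST or Kinect SDK code. The function name `joint_angle` and the sample coordinates are assumptions, and the positions are taken to be plain `(x, y, z)` tuples already obtained from the tracker.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at point b formed by the segments b-a and b-c.

    Each argument is an (x, y, z) tuple, e.g. shoulder, elbow, wrist.
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0:
        raise ValueError("joint positions must not coincide")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


if __name__ == "__main__":
    # Made-up sample positions in metres: shoulder, elbow, wrist.
    shoulder, elbow, wrist = (0.0, 0.3, 2.0), (0.0, 0.0, 2.0), (0.3, 0.0, 2.0)
    print(joint_angle(shoulder, elbow, wrist))
```

Working with joint angles rather than raw positions makes the avatar's pose independent of where the user stands in front of the sensor.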

Compatibility
