This project is by Matthew Hurley and is a submission for the IoT Into the Wild Contest for Sustainable Planet 2022 with Seeed Studio.

Motivation

Bees are critical to ecosystems and food security all across the globe. With considerable experience on our team both keeping and researching bees, we decided to take a closer look at creating an affordable hive monitoring system that can still hold its technological weight. The idea was to create something that helps and inspires amateur beekeepers to become more scientific with, and more invested in, their bees. We also hope that a device like this can encourage suburban households across the U.S. and beyond to take up beekeeping. Suburbia is a huge land area rich with food for pollinators. Communities can make a positive impact on the environment simply by taking ownership of their land and practicing sustainability. What better way to get involved than by keeping bees!

The Problem

While we are not addressing any one specific problem in the beekeeping world, we believe that bringing sensor technology and AI vision to the beehive can help identify symptoms of an unhealthy hive. There are a number of villains in the beekeeping world:

- Varroa mites

- Asian hornets

- Low food supply

- Colony Collapse Disorder

- Extreme weather events

- Pesticides and herbicides

Our Solution:

By collecting data on hives, we can begin to better recognize patterns and effects from these problems. Of course, technology like AI vision shows great promise for capturing and classifying data about bee activity, pollen supply, mites, and hornets. However, even simple, passive sensors can bring value to the equation. Does the hive noise level correlate with health in winter months? Does increased humidity affect hive activity? These are the kinds of questions that can be answered with small-scale research conducted in large volume, and exactly why we wanted to pursue the BeeO Terminal.

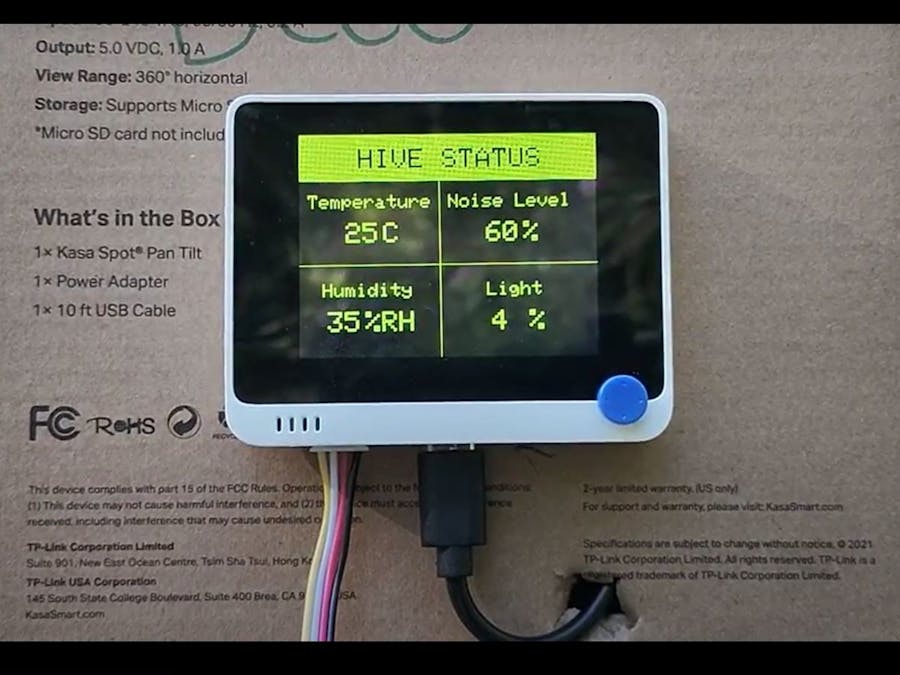

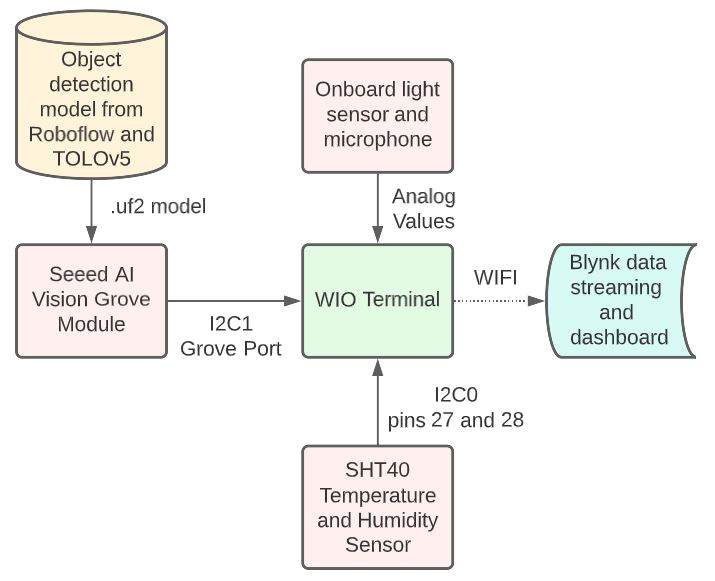

BeeO Terminal and Code

The BeeO Terminal is based on the SenseCAP K1100 Sensor Prototype Kit. We used the onboard light sensor and microphone, the SHT40 temperature and humidity sensor, and the Grove Vision AI module. There were three goals with the device:

- Monitor and display sensor data on the terminal, and provide some user input for beehive health checks

- Use the AI vision module to track bee activity at the hive entrance

- Transmit all sensor data to a Blynk web dashboard

*We decided to go with Wi-Fi simply because it does not require LoRa infrastructure (a gateway or Helium), and in a suburban environment Wi-Fi is generally accessible.

AI Vision Module

A dataset of bee images was pulled from Roboflow Universe (see attribution below) and used to train a model (included below). We followed this tutorial to do so. The dataset has 5 classes: bee, pollen, Asian hornet, and hornet (x2). The dataset was heavy with images in the bee and pollen classes, so the model is decent at identifying objects that are similar to those. However, it's still primitive, as showcased by the images below from testing the model on Google Images results for bees.

For a proof of concept, the model tests were promising, but the camera quality is probably not sufficient to get extremely meaningful data in practice.

BeeO Terminal Code

No WIFI

The code block titled "BeeO_code" (at the bottom of this document) is the final code with no Wi-Fi implementation included (explained a little later). The code is fairly self-explanatory; most of it was pulled from Seeed's examples and tutorials and then tweaked to fit the application. The sensor data is all shown on the first "page", while bee activity and hive health check status are shown on the second page. To try to make the AI vision data a little more meaningful, the confidence values for each class detected were averaged, and the classes detected were all just considered bees. Interrupts were attached to some of the terminal buttons to switch pages and update the hive check status. Several tutorials were used to aid in development.
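To illustrate the averaging step described in this section, here is a minimal sketch in plain C++. It is not the code from "BeeO_code": the `Detection` and `ActivitySummary` structs and the `summarize` function are hypothetical names, and the 0 to 100 confidence range is an assumption about how the vision module reports its scores.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical detection record. The real vision library exposes its own
// struct; only the two fields used here are assumed.
struct Detection {
    uint8_t classId;     // index into the model's class list (ignored below)
    uint8_t confidence;  // assumed to be a percentage, 0 to 100
};

// Per-frame summary shown on the "Bee Activity" page.
struct ActivitySummary {
    std::size_t beeCount;  // every detection is counted as a bee
    float meanConfidence;  // 0 when nothing was detected
};

// Collapse all classes into "bee" and average the confidence values.
ActivitySummary summarize(const std::vector<Detection>& detections) {
    ActivitySummary summary{detections.size(), 0.0f};
    if (detections.empty()) {
        return summary;  // avoid dividing by zero on an empty frame
    }
    unsigned long total = 0;
    for (const Detection& d : detections) {
        assert(d.confidence <= 100);  // guard the assumed range
        total += d.confidence;
    }
    summary.meanConfidence =
        static_cast<float>(total) / static_cast<float>(detections.size());
    return summary;
}
```

Counting every class as a bee throws away the hornet and pollen labels, which is a reasonable trade while the model is unreliable on the rarer classes; a later version could keep one counter per `classId` instead.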

WIFI

After experiencing some bugs associated with having the Wi-Fi libraries running while also creating a buffer for the LCD display, we broke the code into two separate files: one for displaying data on the terminal, and one for transmitting to the web dashboard (no LCD usage). It's a future goal to reconcile the files into one (our suspicion is that the problem has to do with the TFT_eSPI library), but for now they can be loaded individually to demonstrate each functionality. You can find the Wi-Fi-enabled code at the bottom of this document, titled "Wifi_BeeO_code". It is very similar to the terminal implementation, except that data acquisition is wrapped in a BlynkTimer to push results to Blynk. We followed this tutorial for getting data to the dashboard.

Results

The first thing worth mentioning is that the hive on which we planned to test the device unfortunately died. As a result, we didn't get to test on an intact hive of honeybees. We did try putting the device outside a solitary beehive, but there were no bees home. Nevertheless, we are still able to demonstrate the device's functionality, and there are certainly a few key takeaways from these results.

The base features of the device worked great. It is definitely feasible to use this as an onboard hive system for helping to keep track of beekeeping tasks and display some useful data.

AI Vision

While it would have been ideal to develop a dataset for model training using an active hive with a consistent camera angle and background, it may not have made much of a difference because of the camera quality. The bees themselves are pretty small and very clustered, and it seems like the module struggles to detect them distinctly unless the bee mostly fills the field of view. In general, though, the AI vision tests were successful as a proof of concept. It is certainly possible to have cutting-edge technology on an affordable system like this.

It's worth noting that the implementation used for invoking the vision module is a little buggy. Sometimes the module fails to initialize, and the terminal must be restarted to begin data acquisition. To remedy this, it may be appropriate to periodically check for the module address when it is not connected.
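One way the periodic check suggested above might look is sketched below in plain C++. This is not part of the project code: `ModuleWatchdog` is a hypothetical helper, and the `tryBegin` callback stands in for whatever call initializes the vision module in the actual sketch.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <utility>

// Hypothetical helper, not part of any Seeed library. It retries a setup
// callback at a fixed interval until the callback reports success.
class ModuleWatchdog {
public:
    ModuleWatchdog(std::function<bool()> tryBegin, uint32_t retryIntervalMs)
        : tryBegin_(std::move(tryBegin)), retryIntervalMs_(retryIntervalMs) {
        assert(tryBegin_ && retryIntervalMs_ > 0);
    }

    // Call once per loop() pass with the current millis() value.
    // Returns true once the module has been set up successfully.
    bool poll(uint32_t nowMs) {
        if (ready_) {
            return true;
        }
        // Unsigned subtraction stays correct when the millisecond counter wraps.
        if (!attempted_ || nowMs - lastAttemptMs_ >= retryIntervalMs_) {
            attempted_ = true;
            lastAttemptMs_ = nowMs;
            ready_ = tryBegin_();
        }
        return ready_;
    }

    // Call when a read fails so that setup is retried on a later poll.
    void markLost() { ready_ = false; }

private:
    std::function<bool()> tryBegin_;
    uint32_t retryIntervalMs_;
    uint32_t lastAttemptMs_ = 0;
    bool attempted_ = false;
    bool ready_ = false;
};
```

In the main loop, the sketch would only read detections while `poll(millis())` returns true, so a failed setup at boot no longer requires restarting the terminal.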

WIFI

The implementation for pushing the results to a Blynk dashboard definitely had some issues, but it was possible and periodically successful. There seems to be a bug, perhaps in a library, where the code runs through timer events but the data never gets pushed to the dashboard. It's possible that the Wi-Fi connection fails but doesn't restart if a connection was initially successful. The first image below shows successful data upload to the dashboard. The second image below shows data acquisition occurring with no upload to the dashboard, despite the absence of errors.
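If the suspicion about a dropped connection is right, one possible mitigation is to check the link before every push and report the outcome, so that a silent failure becomes visible. The sketch below is an assumption-laden illustration in plain C++, not the project's code: `Link`, `PushResult`, and `guardedPush` are hypothetical names, and the three callbacks stand in for the real connection check, reconnect, and upload calls.

```cpp
#include <cassert>
#include <functional>

// Outcome of one upload attempt, so the caller can log or display it.
enum class PushResult { Sent, Reconnected, Offline };

// Hypothetical wrapper around the network calls used by the sketch.
struct Link {
    std::function<bool()> isConnected;  // true when the link is up
    std::function<bool()> reconnect;    // returns true if the link came back
    std::function<void(float)> send;    // uploads one sensor value
};

// Check the link first, try one reconnect if it is down, then send.
PushResult guardedPush(const Link& link, float value) {
    assert(link.isConnected && link.reconnect && link.send);
    const bool wasDown = !link.isConnected();
    if (wasDown && !link.reconnect()) {
        return PushResult::Offline;  // nothing sent; caller can retry later
    }
    link.send(value);
    return wasDown ? PushResult::Reconnected : PushResult::Sent;
}
```

On the device, the callbacks might wrap calls such as `WiFi.status()` and `Blynk.virtualWrite()`; showing the returned `PushResult` on the serial monitor would make it clear whether a timer event actually reached the dashboard.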

Overall, the project was successful, and we were able to accomplish all three of our goals to some extent. Moving forward:

- Use a higher quality camera, such as the SenseCAP A1101.

- Use LoRa for data upload to the cloud. This allows for longer range and probably more reliability.

- Use on an actual hive to develop better AI vision models and results
