A long time ago (2006), in a kingdom far away (France), there lived a rabbit. It wasn't a normal rabbit: it had very special powers. It could wiggle its ears, had RGB LED lights in its belly, and it could talk! Reading out the weather forecast, announcing new emails, or just chit-chatting, it was the most famous rabbit of all. So it was given a special name: Nabaztag (Armenian for "hare").

But as with many fairy tales, the story took a twist and the rabbit died.

Nabaztag was one of the very first IoT devices that consumers could buy. But it was ahead of its time: the company behind it went bankrupt and had to shut down its servers. The rabbit wasn't open source and relied heavily on its maker's servers.

Since then, the rabbit sat in a corner of my room. It didn't talk, it didn't wiggle its ears, it didn't bring any joy. While playing with Raspberry Pis, I always had the idea of bringing it back to life one day, but it stayed on the long list of projects.

But today, using my Google AIY Voice HAT, I'm going to change that!

And the rabbit lived happily ever after!

This is what every hacker likes: taking stuff apart. This project starts that way too, to see whether there is enough room to fit a Raspberry Pi and the Google AIY Voice HAT inside. The goal is to leave the motors for the ears intact and see what else can survive. It turns out that the main PCB has all components soldered right onto it, including the LEDs. The only separate PCBs are the RFID reader and a small WiFi antenna. So unplug all wires (don't cut them!) and disassemble the rabbit.

Save all parts. We will use some of them, and others might come in handy for other projects later on.

Wiring it up for the basics

Your rabbit is stripped, so it is time to connect the components! Get your Google AIY kit; you will need these components: 1, 2, 3, 6, 7. Don't throw away the other parts, they might come in handy later on.

The Voice HAT seems to come in different versions: with and without soldered headers. Mine had headers at the Servo 0/1/2/3/4/5 and Driver 0/1/2/3 sections, others seem to have no headers at all. So probably you will need to solder your headers on. In my first build I did this quick and dirty by using straight headers, but using the default wire connectors, the rabbit shell cannot be closed. So I've ordered angled headers and will add pictures of this once I've received and soldered them on.

Take your Raspberry Pi, click the spacers (nr 3) on the 2 corners opposite the GPIO header, and click the Voice HAT (nr 1) on top of it.

Take the microphone board (nr 2), the 5-wire cable (nr 7) and connect these together and to the Voice HAT. There is only 1 way of connecting.

Take the speaker cable from the rabbit (yellow/orange), remove the connector (this time you can cut it) and insert the stripped wires in the screw-terminal. Yellow is + and Orange is -.

Take the 4-wire cable (nr 6) and eject the wires from the connector (pinch them with a small screwdriver). Take the button cable from the rabbit (gray/white) and remove the connector from this too. Now insert the 2 clips into the connector from the kit, where the button wires are the 2 at the bottom, replacing the black and white wires (order doesn't matter).

Booting your Raspberry Pi and making basic settings

Specifically for the AIY Voice Kit, Google prepared a Raspbian image (the latest version is based on Raspbian Stretch, so not yet on Buster or Raspberry Pi OS). Download the Voice Kit SD image from the Google website and write it to an SD card. I used Etcher for that.

WiFi networking

To set up your wireless network, we take a small step before inserting the SD card into the Pi. Open the SD card in Windows/Mac/whatever, and add a file named "wpa_supplicant.conf" with these contents (modify your country and network credentials):

    ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev
    update_config=1
    country=<Insert 2 letter ISO 3166-1 country code here>

    network={
        ssid="yourssid"
        psk="password"
    }

Now it's time to insert the SD card into your Raspberry Pi and boot it up for the first time.
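If you would rather generate the wpa_supplicant.conf file than type it by hand, a small Python helper can render it. This is only a sketch: the function name `render_wpa_supplicant` is my own invention, and it assumes the SSID and password contain no double quotes.

```python
def render_wpa_supplicant(country, ssid, psk):
    """Return the text of a minimal wpa_supplicant.conf (hypothetical helper)."""
    if len(country) != 2 or not country.isalpha():
        raise ValueError("country must be a 2-letter ISO 3166-1 code")
    return (
        "ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev\n"
        "update_config=1\n"
        "country={}\n"
        "\n"
        "network={{\n"
        '    ssid="{}"\n'
        '    psk="{}"\n'
        "}}\n"
    ).format(country.upper(), ssid, psk)


if __name__ == "__main__":
    # Print the file contents; redirect this to wpa_supplicant.conf on the SD card.
    print(render_wpa_supplicant("nl", "yourssid", "password"))
```

The country code is upper-cased because the file expects codes like NL or FR.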

If you have an HDMI-screen, you can proceed with the next steps from a Terminal window. Without a screen, use a tool like PuTTY to connect through SSH to your Raspberry Pi or use an extension in the Chrome browser.

*** Note to self (and others): don't run apt-get upgrade. In earlier versions it broke audio/microphone support, so I no longer do that. ***

Enabling VNC Server

As we will use the Raspberry Pi / Voice HAT without a screen (doesn't fit inside the rabbit), we use VNC to teleport it to another computer. You can do this on-screen or at the command line:

With a screen, select Menu > Preferences > Raspberry Pi Configuration > Interfaces. Ensure VNC is Enabled.

Or you can enable VNC Server through the (SSH)-terminal:

    sudo raspi-config

Enable VNC Server by doing the following: navigate to 5 Interfacing Options, then scroll down and select P3 VNC > Yes.

To use the VNC Server, download the VNC Viewer and open it on your Windows/Mac/whatever computer. Just enter the Raspberry Pi IP-address, you will now be connected to your very own Raspberry Pi.

To change the display resolution, click the Raspberry icon top-left, Preferences > Raspberry Pi Configuration. Click the Set Resolution button and make the change to what your screen fits. Reboot to apply the change.

Enable I2C

I2C (Inter-Integrated Circuit, pronounced "I squared C") is a very commonly used standard designed to allow one chip to talk to another. Since your Raspberry Pi (and Python) can talk I2C, we can connect it to a variety of I2C-capable chips and modules.

By default it is turned off, so we need to enable I2C.

    sudo raspi-config

Enable I2C by doing the following: navigate to 5 Interfacing Options, then scroll down and select P5 I2C > Yes.

The I2C bus allows multiple devices to be connected to your Raspberry Pi, each with a unique address, that can often be set by changing jumper settings on the module. It is very useful to be able to see which devices are connected to your Pi as a way of making sure everything is working.

In the terminal window, enter this to see if you have a correct connection:

    i2cdetect -y 1

The result should look like this:

    pi@raspberrypi:~ $ i2cdetect -y 1
         0  1  2  3  4  5  6  7  8  9  a  b  c  d  e  f
    00:          -- -- -- -- -- -- -- -- -- -- -- -- --
    10: -- -- -- -- -- -- -- -- -- -- -- -- -- -- -- --
    20: -- -- -- -- -- -- -- -- -- -- -- -- -- -- -- --
    30: -- -- -- -- -- -- -- -- -- 39 -- -- -- -- -- --
    40: -- -- -- -- -- -- -- -- 48 -- -- -- -- -- -- --
    50: -- -- -- -- -- -- -- -- -- -- -- -- -- -- -- --
    60: -- -- -- -- -- -- -- -- -- -- -- -- -- -- -- --
    70: -- -- -- -- -- -- -- 77

That means that I have some I2C devices connected already (at addresses 0x39, 0x48 and 0x77).
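To check the scan from a script instead of by eye, the i2cdetect text can be parsed into a list of addresses. The sketch below is a hypothetical helper (`parse_i2cdetect` is not part of any library) that works on captured output text; it treats `--` and `UU` cells as "nothing usable here".

```python
import re
from typing import List

# A data row starts with a two-digit hex row label followed by a colon.
ROW = re.compile(r"^\s*([0-9a-f]{2}):(.*)$")
# A detected device is printed as its two-digit hex address.
CELL = re.compile(r"^[0-9a-f]{2}$")

SAMPLE = """\
pi@raspberrypi:~ $ i2cdetect -y 1
     0  1  2  3  4  5  6  7  8  9  a  b  c  d  e  f
00:          -- -- -- -- -- -- -- -- -- -- -- -- --
30: -- -- -- -- -- -- -- -- -- 39 -- -- -- -- -- --
40: -- -- -- -- -- -- -- -- 48 -- -- -- -- -- -- --
70: -- -- -- -- -- -- -- 77
"""


def parse_i2cdetect(output: str) -> List[int]:
    """Return the I2C addresses that appear in i2cdetect output."""
    found = []
    for line in output.splitlines():
        match = ROW.match(line)
        if not match:
            continue  # prompt line or column header
        for cell in match.group(2).split():
            if CELL.match(cell):
                found.append(int(cell, 16))
    return found


if __name__ == "__main__":
    print([hex(address) for address in parse_i2cdetect(SAMPLE)])
```

On a real Pi you could feed it the text captured from `i2cdetect -y 1`, for example via `subprocess.check_output`.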

Configuration of the Google Voice tools

When you have completed all these steps, you can start on the Google Voice configuration. First test your setup and connections (also see the Google documentation).

On the desktop, click the "Check Audio" and "Check WiFi" links to see if everything is configured correctly.

The version of the Voice HAT I have (v1) needs an additional line in the configuration; the "Check Audio" script will tell you what to do. For me, I had to run this in a terminal window and then restart the Raspberry Pi:

    echo "dtoverlay=googlevoicehat-soundcard" | sudo tee -a /boot/config.txt

Now we will connect to the Google Cloud Platform. You can follow the detailed steps here, to get your credentials.

Some more steps: the Python version on the Raspberry Pi is 3.7.3. Since Python 3.7, webbrowser.register() takes its fourth parameter (preferred) as keyword-only, so the positional -1 in the AIY code no longer works and we need a small manual fix. Open the auth_helpers file:

    nano ~/AIY-projects-python/src/aiy/assistant/auth_helpers.py

Scroll down to line 75, and remove the ", -1" from the end of this line:

    webbrowser.register('chromium-browser', None, webbrowser.Chrome('chromium-browser'), -1)

It now looks like this:

    webbrowser.register('chromium-browser', None, webbrowser.Chrome('chromium-browser'))

Press Ctrl+X, then Y and Enter to save and close.

And some sad news, with a fix! When running the Google Assistant functionality, I got a "segmentation fault" error. This is due to the deprecated google-assistant-library. The Google Assistant Service no longer supports hotword detection like "Ok Google" and "Hey Google". But there is a simple trick to add it back in. Run these commands to downgrade the Google Assistant library from 1.0.1 back to 1.0.0, and then install the latest version again:

    pip3 install google-assistant-library==1.0.0
    pip3 install google-assistant-library

Once done, go ahead and start the Google Assistant for the first time!

On the desktop, click the "Start dev terminal" link to open a prepared terminal, and run the following:

    ~/AIY-projects-python/src/examples/voice/assistant_library_demo.py

This will prompt you for your Google login, but only the first time. Complete the login steps to grant your assistant access to your own Google data.

You are now ready to use all the basics of the voice recognition. Play around by asking silly questions (hint: ask for a song, beat-boxing, sounds that animals make, nicknames).

Add our own components!

This is what it is all about: adding your own code and components to the Google Assistant (otherwise you could just have bought one in a store).

Use this map of the Voice HAT for adding your own components to the GPIO pins:

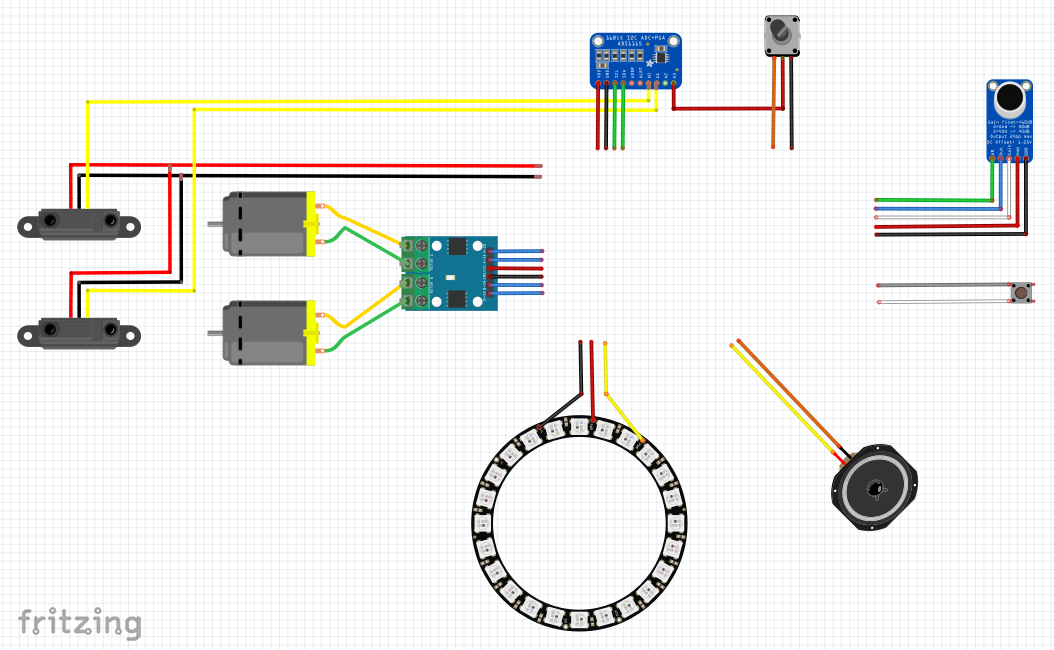

This video shows all hardware steps I describe in the next paragraphs:

LED lights

As said, the RGB LEDs were soldered to the main PCB, so they are gone. But what would a rabbit be without shiny lights... In the base there is not much room for adjustments, so I have to place them somewhere else. Also, regular NeoPixels don't work with a Raspberry Pi unless you jump through some hoops.

There are some newer types of LEDs with less strict timing constraints, and there is now a module called rpi_ws281x, with a Python wrapper, that makes them work with a Raspberry Pi. And since one of those hoops was disabling the on-board audio, you will be happy to hear that the Voice HAT has its own audio controller, meaning we can disable the on-board snd_bcm2835 controller!

So I've decided to add an LED ring of 32 lights, 11 cm wide. This fits the top of the base exactly. The ring contains SK6812 RGBW LEDs, so each pixel has three colors (red/green/blue) plus a white LED. This LED ring replaces the LED that is in the kit.

Wiring it up

The LED ring uses 3 wires: DATA-IN, PWR and GND. The ring can be used at multiple voltages, but if you send 3.3V on DATA, it expects 3.3V on PWR too (or 5V on both). As we need a hardware PWM pin for driving the LED ring, we can only use GPIO12 (Servo 4), whose signal is 3.3V, so take a matching 3.3V PWR from somewhere else, for example from the I2C or SPI pins (you might need to solder something in there). In my build I'll need more 3.3V sources, so I'm going to add another solution for that.

Installation of the module

Once the LED ring is wired up, you can start installing modules for that. I'll use the rpi_ws281x module, which is written in C and there is a Python library that can communicate with it. In the past you had to jump through hoops by compiling the C code and building/installing the Python library, but today there is a readily installable module using PIP that does all you need in 1 step. Just run:

    sudo pip3 install rpi_ws281x==4.2.4

Since this library and the onboard Raspberry Pi audio both use the PWM hardware, they cannot be used together. You will need to blacklist the Broadcom audio kernel module by running the following command:

    echo "blacklist snd_bcm2835" | sudo tee -a /etc/modprobe.d/snd-blacklist.conf

If the audio device is still loading after blacklisting, you may also need to comment it out in the /etc/modules file.

Hook the LED ring into Google AIY

The Google AIY code runs as a normal user. But the rpi_ws281x module needs to run as root (via sudo), because it writes directly to memory. That means the two cannot live in one program. So we will apply a little trickery here! We will make a simple communication system:

- The Google Assistant writes a command into a file (replacing any previous command that may still be there).

- The LED_ring program keeps looping: it reads the file to see whether a new LED command has appeared and, at the same time, keeps executing the latest command step by step. One pass through this loop is an iteration cycle. When a new command arrives, the iteration is reset and the new command is performed right away.
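The two roles in this list can be sketched in plain Python. This is not the code from Nabaztag_led_ring.py, just a minimal illustration under my own assumptions: the function names are hypothetical, the file holds a single command word, and the demo uses a temporary file so it runs without root.

```python
import os
import tempfile
import time


def write_command(path, command):
    """Assistant side: overwrite the file with the newest command."""
    with open(path, "w") as handle:
        handle.write(command + "\n")


def read_command(path, default="starting"):
    """LED side: return the command in the file, or a default if unreadable."""
    try:
        with open(path) as handle:
            words = handle.read().split()
    except OSError:
        return default
    return words[-1] if words else default


def run_led_loop(path, handle_frame, max_iterations, poll_s=0.05):
    """Poll the file; restart the animation step whenever the command changes."""
    current, step = None, 0
    for _ in range(max_iterations):
        command = read_command(path)
        if command != current:
            current, step = command, 0  # new command: reset the iteration
        handle_frame(current, step)     # draw one animation frame
        step += 1
        time.sleep(poll_s)


if __name__ == "__main__":
    demo_path = os.path.join(tempfile.mkdtemp(), "AIY_LED_ring.txt")
    write_command(demo_path, "listening")
    run_led_loop(demo_path, lambda cmd, step: print(cmd, step), 3, poll_s=0)
```

A real LED program would loop forever and draw on the ring inside `handle_frame`; the `max_iterations` argument is only there to keep the sketch testable.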

We will use a file called "AIY_LED_ring.txt" in the /etc/ folder to store and read the commands. Let's create the file and give access to both my local user (pi) and root:

    echo "starting" | sudo tee -a /etc/AIY_LED_ring.txt
    sudo chmod a+rwx /etc/AIY_LED_ring.txt

Each type of LED matrix or ring needs a different configuration. For my LED ring, this works correctly:

    # LED strip configuration:
    LED_COUNT = 32          # Number of LED pixels.
    LED_PIN = 12            # GPIO pin connected to the pixels (must support PWM!).
    LED_FREQ_HZ = 800000    # LED signal frequency in hertz (usually 800khz)
    LED_DMA = 10            # DMA channel to use for generating signal (try 10)
    LED_BRIGHTNESS = 255    # Set to 0 for darkest and 255 for brightest
    LED_INVERT = False      # True to invert the signal (when using NPN transistor level shift)
    LED_CHANNEL = 0
    LED_STRIP = ws.SK6812_STRIP_GRBW

Use the Nabaztag_led_ring.py file attached below and store it in your own folder; I created a folder Nabaztag in the AIY Projects src folder. Start it by running this command from the terminal:

    sudo python3 ~/AIY-projects-python/src/Nabaztag/Nabaztag_led_ring.py

The LED ring will turn on in white and slowly move a light around. This means my program is in "starting" mode and is waiting for the Google Assistant to wake up.

As a quick demonstration, take the file "Nabaztag_assistant_library_ledring.py" from the files and run it to see what happens when you ask your assistant questions:

    python3 ~/AIY-projects-python/src/Nabaztag/Nabaztag_assistant_library_ledring.py

Hint: it responds to the "EventType" values from the assistant library:

- Ready (waiting for you to wake the rabbit)

- Listening (you said "Hey Google" or the assistant asked you a question and is waiting for your answer)

- Thinking (your input is complete, the assistant is processing it and giving you the answer)

- Ready (dialog is complete, waiting for your next wake word)
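One way to picture the states above is as a lookup from assistant event names to LED commands. The event names below are the ones I believe google-assistant-library exposes in its EventType enum; the command words ("ready", "listening", "thinking") are my own placeholders and may differ from what the attached files use.

```python
# Event names as strings, so the sketch runs without the assistant library.
EVENT_TO_LED_COMMAND = {
    "ON_START_FINISHED": "ready",              # assistant is up, waiting for the wake word
    "ON_CONVERSATION_TURN_STARTED": "listening",
    "ON_END_OF_UTTERANCE": "thinking",
    "ON_CONVERSATION_TURN_FINISHED": "ready",  # dialog done, back to waiting
}


def led_command_for(event_name, current_command):
    """Return the LED command for an event; keep the current one if unknown."""
    return EVENT_TO_LED_COMMAND.get(event_name, current_command)


if __name__ == "__main__":
    command = "starting"
    for name in ("ON_START_FINISHED", "ON_CONVERSATION_TURN_STARTED",
                 "ON_END_OF_UTTERANCE", "ON_CONVERSATION_TURN_FINISHED"):
        command = led_command_for(name, command)
        print(name, "->", command)
```

Keeping the current command for unknown events means events you don't care about never blank the ring.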

Start by saying "Hey Google", and ask silly questions. You will see that the LED ring responds to your button presses and shows a rainbow in its resting state.
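The rainbow itself is easy to compute without any hardware. Below is a sketch of the classic "color wheel" used in many LED examples, spread over 32 pixels. The helper names are my own, and the 32-bit packing (white, red, green, blue from high byte to low byte) is what I understand rpi_ws281x's Color() helper to produce, so treat that as an assumption.

```python
def wheel(pos):
    """Map 0..255 to an (r, g, b) color moving around the color wheel."""
    pos &= 255
    if pos < 85:
        return (pos * 3, 255 - pos * 3, 0)
    if pos < 170:
        pos -= 85
        return (255 - pos * 3, 0, pos * 3)
    pos -= 170
    return (0, pos * 3, 255 - pos * 3)


def pack_rgbw(red, green, blue, white=0):
    """Pack four 8-bit channels into one 32-bit value (assumed WRGB layout)."""
    return (white << 24) | (red << 16) | (green << 8) | blue


def rainbow_frame(led_count, offset):
    """Return one (r, g, b) tuple per pixel; raise offset each frame to rotate."""
    return [wheel((i * 256 // led_count) + offset) for i in range(led_count)]


if __name__ == "__main__":
    frame = rainbow_frame(32, 0)
    print(frame[:4])
    print(hex(pack_rgbw(*frame[0])))
```

Calling `rainbow_frame(32, offset)` with an increasing offset gives the slowly rotating rainbow; each tuple would then be packed and sent to the pixel with that index.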

Motors

The ears are turned by 2 motors with 2 wires each. That means applying PWR/GND to turn one way, and GND/PWR to turn the other way.

The Voice HAT cannot drive this type of motor out of the box, so I'll need to add an H-bridge. The one I used can drive both ears. It has 4 signal pins that you connect to GPIO, plus a PWR and a GND pin. Beware of the confusing pin names, but the basic idea is that pins A-1A & A-1B control motor A, and pins B-1A & B-1B control motor B. If you set 1A to HIGH and 1B to LOW, the motor turns one way. If you set 1A to LOW and 1B to HIGH, the motor turns the other way. Both LOW means no movement; both HIGH is not recommended.
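The pin logic of the H-bridge boils down to a tiny truth table. As a sketch (the function name is hypothetical), a single function can turn a signed direction into the two pin levels, which makes the "both HIGH" state impossible to request:

```python
def bridge_levels(direction):
    """Return (level_1A, level_1B) for one motor.

    direction > 0 turns one way, direction < 0 the other way, 0 stops.
    The function never returns (True, True).
    """
    if direction > 0:
        return (True, False)
    if direction < 0:
        return (False, True)
    return (False, False)


if __name__ == "__main__":
    for direction in (1, -1, 0):
        print(direction, bridge_levels(direction))
```

With RPi.GPIO the two booleans would go straight into `GPIO.output()` calls for the 1A and 1B pins of that motor.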

Start by connecting the pins to the VoiceHAT: GPIO 26/06/13/05 or Servo 0/1/2/3, and connect PWR/GND to the corresponding pins right next to GPIO26/Servo0.

As we will drive GPIO pins, import the RPi.GPIO module and set the pin numbers for the ear motors:

    import RPi.GPIO as GPIO

    # Use BCM GPIO references instead of physical pin numbers
    GPIO.setmode(GPIO.BCM)

    # Define GPIO signals to use
    StepPinLeftForward=26
    StepPinLeftBackward=6
    StepPinRightForward=13
    StepPinRightBackward=5

I've added 2 threads (left/right) for rotating the ears. That way I avoid giving 2 opposite commands at once and ending up with both pins HIGH.

    def t_earLeft(StepForwardPin, StepBackwardPin, EncoderPin):
        global earLeft_millis, earLeft_run, earLeft_direction
        earLeft_run = 1
        earLeft_direction = 0
        earLeft_millis = int(round(time.time() * 1000)) - 1
        while earLeft_run == 1:
            if earLeft_direction == 1 and earLeft_millis >= int(round(time.time() * 1000)):
                GPIO.output(StepForwardPin, GPIO.LOW)
                GPIO.output(StepBackwardPin, GPIO.HIGH)
            elif earLeft_direction == -1 and earLeft_millis >= int(round(time.time() * 1000)):
                GPIO.output(StepBackwardPin, GPIO.LOW)
                GPIO.output(StepForwardPin, GPIO.HIGH)
            else:
                GPIO.output(StepForwardPin, GPIO.LOW)
                GPIO.output(StepBackwardPin, GPIO.LOW)
                earLeft_direction = 0
            # avoid overflow
            time.sleep(0.1)

The thread is started in the main() function when the main.py file loads:

    # Set ear-motor pins
    GPIO.setup(StepPinLeftForward, GPIO.OUT)
    GPIO.setup(StepPinLeftBackward, GPIO.OUT)
    GPIO.setup(StepPinRightForward, GPIO.OUT)
    GPIO.setup(StepPinRightBackward, GPIO.OUT)
    GPIO.output(StepPinLeftForward, GPIO.LOW)
    GPIO.output(StepPinLeftBackward, GPIO.LOW)
    GPIO.output(StepPinRightForward, GPIO.LOW)
    GPIO.output(StepPinRightBackward, GPIO.LOW)

    thread_earLeft = threading.Thread(target=t_earLeft, args=(StepPinLeftForward,StepPinLeftBackward,EncoderPinLeft))
    thread_earLeft.start()
    thread_earRight = threading.Thread(target=t_earRight, args=(StepPinRightForward,StepPinRightBackward,EncoderPinRight))
    thread_earRight.start()

The actual direction and duration of the turn are set from the actions:

    # Move Left Ear
    earLeft_millis = int(round(time.time() * 1000)) + 1600
    earLeft_direction = -1

    # Move Right Ear
    earRight_millis = earLeft_millis
    earRight_direction = -1

See the full main.py code at the bottom of this project page.
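The timing idea in the ear threads (a direction plus a deadline in milliseconds) can be tried without a Pi by moving the decision into a pure function. This is a sketch that mirrors the branches of the thread shown earlier, with hypothetical names; 1 and 0 stand for GPIO.HIGH and GPIO.LOW.

```python
def start_move(direction, duration_ms, now_ms):
    """Return (direction, deadline_ms) for a move that starts now."""
    return direction, now_ms + duration_ms


def ear_step(direction, deadline_ms, now_ms):
    """One pass of the ear loop: (forward_level, backward_level, new_direction)."""
    if direction == 1 and deadline_ms >= now_ms:
        return (0, 1, 1)    # same pin levels as the thread's first branch
    if direction == -1 and deadline_ms >= now_ms:
        return (1, 0, -1)   # same pin levels as the thread's second branch
    return (0, 0, 0)        # deadline passed or idle: stop and clear direction


if __name__ == "__main__":
    direction, deadline = start_move(-1, 1600, now_ms=1000)
    for now in (1000, 2000, 2600, 2700):
        print(now, ear_step(direction, deadline, now))
```

Because the loop only sleeps 0.1 s between passes, a move can overshoot its deadline by up to about 100 ms.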

Ear encoders

The movement of the ears is registered by 2 encoders. Each has a wheel with long teeth moving through an IR reader. Each tooth/gap triggers a HIGH/LOW on the reader, so we can see the wheel turn. To mark the point where the ears are UP, a tooth (or a set of teeth) is missing, which gives a longer LOW at that position. For reading we could use the normal GPIO pins with an interrupt, or read the signal as analog.
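Finding the missing tooth comes down to spotting an unusually long interval between two signal edges. Here is a simplified, hardware-free sketch of that idea; the function name is hypothetical, it ignores polarity (it flags any long interval), and the threshold of 2000 and the 140 ms limit are the values used in the encoder code further down.

```python
def find_long_gaps(samples, threshold=2000, min_gap_ms=140):
    """Return timestamps of edges that follow an interval longer than min_gap_ms.

    samples is an iterable of (time_ms, raw_value) pairs in time order.
    """
    gaps = []
    last_edge_ms = None
    previous_high = None
    for time_ms, raw in samples:
        high = raw > threshold
        if previous_high is not None and high != previous_high:
            # The signal crossed the threshold: this is an edge.
            if last_edge_ms is not None and time_ms - last_edge_ms > min_gap_ms:
                gaps.append(time_ms)
            last_edge_ms = time_ms
        previous_high = high
    return gaps


if __name__ == "__main__":
    recorded = [(0, 3000), (50, 100), (100, 3000), (150, 100),
                (350, 3000), (400, 100)]
    print(find_long_gaps(recorded))
```

In the made-up recording, edges normally arrive 50 ms apart, so the one that arrives after 200 ms stands out as the "UP" marker.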

For reading the ear encoders and the volume control (more on that later), I'll use an ADC (Analog-to-Digital Converter) to read the analog data. I've used an ADS1115 ADC for that; it can read up to 4 channels with 16-bit precision.
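To get a feel for the numbers the ADC returns, raw readings can be converted to volts. The sketch below assumes the usual ADS1115 full-scale ranges per gain setting (gain 1 is ±4.096 V) and a signed 16-bit result, so one step at gain 1 is 125 µV; the function name is my own.

```python
# Full-scale voltage for each programmable gain setting of the ADS1115.
FULL_SCALE_VOLTS = {2 / 3: 6.144, 1: 4.096, 2: 2.048, 4: 1.024, 8: 0.512, 16: 0.256}


def raw_to_volts(raw, gain=1):
    """Convert a signed 16-bit ADS1115 reading to volts for the given gain."""
    return raw * FULL_SCALE_VOLTS[gain] / 32768.0


if __name__ == "__main__":
    for raw in (2000, 8000, 26400):
        print(raw, round(raw_to_volts(raw), 3), "V")
```

At gain 1, a reading of 2000 is about 0.25 V and a 3.3 V input reads around 26400.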

It will be connected to the I2C header we soldered on in a previous step.

At startup, I want the ears to move to a basic upwards position. That way there is no doubt about where the ears are after a hiccup. The encoders help with that, as they tell us when the ears reach the top.

Adafruit made a module for talking to the ADC. Let's install it following their instructions:

    sudo pip3 install adafruit-ads1x15

Connect to these pins (see the I2C section on the Voice HAT): V = PWR (3.3V), G = GND, SCL = SCL, SDA = SDA.

Before wiring the encoders to the ADC, we have to add 2 resistors: 100 Ω and 10 kΩ. See the video for placement. Now connect the encoders to the ADC: Orange = to A0/A1 on the ADC, Yellow = PWR (3.3V), Green = GND.

Now that the module is installed, we can use it in our code (by default it talks to the device at I2C address 0x48):

    # Import the ADS1x15 module.
    import Adafruit_ADS1x15

    adc = Adafruit_ADS1x15.ADS1115()
    GAIN = 1
    DATA_RATE = 860

For example, I could run this function in a thread to constantly see what happens at the encoders:

    EncoderPinLeft=1
    EncoderPinRight=0
    millisLeft = int(round(time.time() * 1000))
    valuesLeft = 0
    millisRight = int(round(time.time() * 1000))
    valuesRight = 0

    def ReadEncoders():
        global millisLeft, millisRight, valuesLeft, valuesRight
        millisLeft = int(round(time.time() * 1000))
        millisRight = int(round(time.time() * 1000))
        while True:
            millisLeftTemp = int(round(time.time() * 1000))
            # Pass DATA_RATE explicitly; otherwise the library's slower default is used
            valuesLeftTemp = adc.read_adc(EncoderPinLeft, gain=GAIN, data_rate=DATA_RATE)
            print ("Left :", millisLeftTemp - millisLeft, valuesLeftTemp)
            # An edge: the reading crossed the 2000 threshold since the last sample
            if ((valuesLeftTemp > 2000 and valuesLeft < 2000) or (valuesLeftTemp < 2000 and valuesLeft > 2000)):
                # A long interval that ends in a low reading marks the UP position
                if (((millisLeftTemp - millisLeft) > 140) and (valuesLeftTemp < 2000)):
                    print ("Left UP:", millisLeftTemp - millisLeft, valuesLeftTemp)
                millisLeft = millisLeftTemp
            valuesLeft = valuesLeftTemp

            millisRightTemp = int(round(time.time() * 1000))
            valuesRightTemp = adc.read_adc(EncoderPinRight, gain=GAIN, data_rate=DATA_RATE)
            #print ("Right:", millisRightTemp - millisRight, valuesRightTemp)
            if ((valuesRightTemp > 2000 and valuesRight < 2000) or (valuesRightTemp < 2000 and valuesRight > 2000)):
                if (((millisRightTemp - millisRight) > 140) and (valuesRightTemp < 2000)):
                    print ("Right UP:", millisRightTemp - millisRight, valuesRightTemp)
                millisRight = millisRightTemp
            valuesRight = valuesRightTemp

The ADS1115 actually turned out to be the wrong choice... I would have been better off with an ADS1015: it has only 12-bit precision, but a faster sampling rate (a data rate of 3300 SPS instead of 860 SPS). As it is, I can only read the proper "UP" state when turning the ears backwards. Running forward there is too much noise.
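A bit of arithmetic shows what the two data rates mean in practice. This sketch (hypothetical helper names) computes the time per conversion and an upper bound on how many samples each ear gets within a time window when the channels are read in turn. It ignores I2C and Python overhead, so real numbers will be lower; the 140 ms window is simply the threshold from the code above.

```python
def conversion_period_ms(samples_per_second):
    """Time one conversion takes at the given data rate, in milliseconds."""
    return 1000.0 / samples_per_second


def samples_in_window(samples_per_second, window_ms, channels=1):
    """Upper bound on samples per channel within window_ms, channels read in turn."""
    return int(samples_per_second * window_ms / 1000.0 / channels)


if __name__ == "__main__":
    for name, sps in (("ADS1115", 860), ("ADS1015", 3300)):
        print(name,
              round(conversion_period_ms(sps), 3), "ms per conversion,",
              samples_in_window(sps, 140, channels=2), "samples per ear in 140 ms")
```

The faster chip leaves roughly four times as many samples per tooth, which is the headroom I would expect to help with the noise.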

Scroll wheel

At the back of the rabbit (its tail?) there is a scroll wheel. This used to be the volume control. We can connect it to the ADC to read the values: Brown = GND, Orange = PWR (3.3V), Red = A3 on the ADC.

As we use the same ADC module as for the ear encoders, there is nothing to install for this.
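The volume logic used for the scroll wheel can be checked on any computer before wiring things up. The sketch below separates the two ideas in it: scaling a raw ADC reading to a 0..100 volume (one volume step per 250 raw counts), and only reacting when the reading moved by more than 500 counts, so a jittery wheel does not keep changing the volume. The function names are hypothetical.

```python
def raw_to_volume(raw):
    """Scale a raw ADC reading to a volume percentage, clamped to 0..100."""
    return max(0, min(100, raw // 250))


def update_volume(raw, last_raw, dead_band=500):
    """Return (new_volume_or_None, remembered_raw).

    A new volume is only produced when raw differs from the last accepted
    reading by more than dead_band.
    """
    if abs(raw - last_raw) > dead_band:
        return raw_to_volume(raw), raw
    return None, last_raw


if __name__ == "__main__":
    last = 0
    for reading in (100, 600, 900, 12500, 26400):
        volume, last = update_volume(reading, last)
        print(reading, volume)
```

A result of `None` means "leave the volume alone"; only the other results would be passed on to amixer.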

In the header of main.py, import these 2 modules:

    import math
    import subprocess

And create a function that we will run as a thread:

    def _ReadScrollWheel():
        global valuesScrollWheel
        valuesScrollWheel = 0
        while True:
            valuesScrollWheelTemp = adc.read_adc(3, gain=GAIN)
            if ((valuesScrollWheelTemp - 500 > valuesScrollWheel) or (valuesScrollWheelTemp + 500 < valuesScrollWheel)):
                vol = math.floor(valuesScrollWheelTemp / 250)
                vol = max(0, min(100, vol))
                valuesScrollWheel = valuesScrollWheelTemp
                subprocess.call('amixer -q set Master %d%%' % vol, shell=True)
            # avoid overflow
            time.sleep(0.1)

Now start the thread in the main() function:

    thread_scrollWheel = threading.Thread(target=_ReadScrollWheel)
    thread_scrollWheel.start()

With all these steps in place, you can put the shell back over the base. You have a fully fledged Nabaztag Assistant that listens to you, shows you his colors and wiggles his ears. Oh, and it gives you answers to silly (and smart) questions, using the Google Assistant framework!

To Do: Proximity sensor

To trigger the Voice HAT, you can use a button (like the one from the kit, or the one on the rabbit's head that we wired up), or you can create your own triggers. It sounds cool to have a Proximity and Gesture Sensor (APDS-9960) for this trigger function. SparkFun sells one, and I ordered a clone of it.

But later on I found out that there is no out-of-the-box Python implementation for the APDS-9960 as of yet. There is a module for a slightly older/simpler version of the chip, so I'll need to extend that. For now I've parked this idea on the To Do list, but it will be added later on for sure!

To Do: RFID

When taking apart the Nabaztag, I saved all components and didn't throw anything away (yet). So I now have an RFID reader, and this project gives some examples of how to hook it up on I2C, so maybe I'll connect it back in later on!
