"I know now why you cry, but it is something I can never do."

- T-800/"Uncle Bob".

While it's true that robots cannot cry, in this project I will teach them how to understand emotions, recognize friends, and analyze a situation to detect threats.

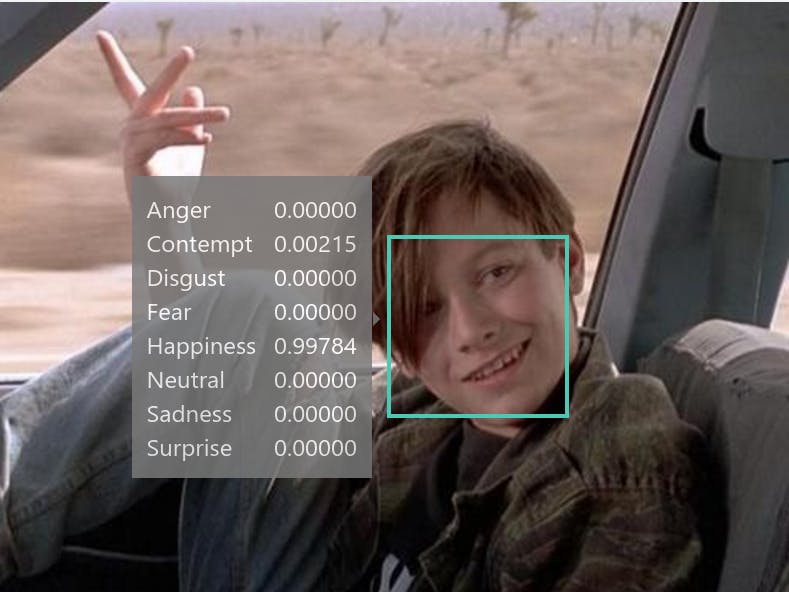

For the purpose of this demonstration, I will use the onboard LEDs to show when the Ci20 detects an emotional sentiment or a hostile situation.

The emotion detection is no doubt cool, but the situation analysis surprised me with the kind of details it returned. Scroll down to see what it guessed from a low-res webcam picture.

Background

Once an image has been acquired, it goes through the following steps:

The commonly used methods for Feature Extraction can be divided into two categories:

- geometrical methods

- appearance-based methods

Geometric features are derived from the positions of landmarks on prominent parts of the face, such as the mouth, eyes, and eyebrows. This technique is simple and fast, but its accuracy depends on the performance of the face recognition step.
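To make the geometric approach concrete, the sketch below turns six facial landmarks into two scale-invariant features: mouth width and mouth opening, each divided by the distance between the eyes so they do not depend on how large the face appears in the frame. It is a minimal illustration under assumed inputs: the class name, the landmark set, and the choice of ratios are hypothetical and are not taken from the project or from Microsoft's service.

```java
/** Hypothetical sketch of geometric feature extraction from a small, assumed set of facial landmarks. */
final class GeometricFeatures {

    /** A 2-D landmark position in pixel coordinates. */
    static final class Point {
        final double x, y;
        Point(double x, double y) { this.x = x; this.y = y; }
    }

    /** Euclidean distance between two landmarks. */
    static double distance(Point a, Point b) {
        return Math.hypot(a.x - b.x, a.y - b.y);
    }

    /**
     * Returns {mouthWidthRatio, mouthOpenRatio}: mouth width and mouth opening,
     * each normalised by the inter-ocular distance so the features are scale-invariant.
     */
    static double[] extract(Point leftEye, Point rightEye,
                            Point mouthLeft, Point mouthRight,
                            Point upperLip, Point lowerLip) {
        double eyeDistance = distance(leftEye, rightEye);
        if (eyeDistance == 0) {
            throw new IllegalArgumentException("eye landmarks must be distinct");
        }
        double mouthWidth = distance(mouthLeft, mouthRight);
        double mouthOpen = distance(upperLip, lowerLip);
        return new double[] { mouthWidth / eyeDistance, mouthOpen / eyeDistance };
    }
}
```

With eyes at (0, 0) and (10, 0), mouth corners at (0, 20) and (8, 20), and lips at (4, 18) and (4, 21), `extract` returns approximately `{0.8, 0.3}`. A classifier would then learn which ranges of such ratios go with which emotion.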

Appearance-based methods work on the image directly. Instead of relying on individual extracted points, they use descriptors such as Local Binary Patterns to analyze the skin texture and extract features relevant to emotion detection. They need to process a lot of data, which demands a lot of CPU time.
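The basic Local Binary Pattern operator is simple enough to sketch: each pixel is compared with its eight neighbours, every neighbour that is at least as bright as the centre contributes a 1 bit, and the resulting 8-bit codes are counted into a histogram that describes the local texture. This is a minimal sketch assuming an 8-bit grayscale image stored as a rectangular `int[][]`; the class and method names are hypothetical. Practical facial-expression systems usually split the face into a grid of cells and concatenate one histogram per cell, which multiplies the amount of data to process.

```java
/** Hypothetical sketch of the basic 3x3 Local Binary Pattern (LBP) operator on a grayscale image. */
final class Lbp {

    // Neighbour offsets (row, col), clockwise starting from the top-left pixel.
    private static final int[][] OFFSETS = {
        {-1, -1}, {-1, 0}, {-1, 1}, {0, 1},
        {1, 1}, {1, 0}, {1, -1}, {0, -1}
    };

    /** LBP code (0..255) for the interior pixel at (row, col). */
    static int code(int[][] gray, int row, int col) {
        int center = gray[row][col];
        int value = 0;
        for (int i = 0; i < OFFSETS.length; i++) {
            int neighbour = gray[row + OFFSETS[i][0]][col + OFFSETS[i][1]];
            // Set bit i when the neighbour is at least as bright as the centre.
            if (neighbour >= center) {
                value |= 1 << i;
            }
        }
        return value;
    }

    /** 256-bin histogram of LBP codes over all interior pixels: the texture feature vector. */
    static int[] histogram(int[][] gray) {
        int[] hist = new int[256];
        for (int r = 1; r < gray.length - 1; r++) {
            for (int c = 1; c < gray[r].length - 1; c++) {
                hist[code(gray, r, c)]++;
            }
        }
        return hist;
    }
}
```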

Some researchers combine geometric and appearance-based extraction to build successful hybrid techniques. These techniques still carry a high CPU demand and are probably not feasible to run natively on a device such as the Ci20.

The machine learning part, Feature Classification, requires a decent amount of training before it is usable. The cognitive service we are about to use was trained on millions of free images from the internet, so it's pretty robust.
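To illustrate what "training" means at its simplest, the sketch below is a nearest-centroid classifier: training averages the feature vectors collected for each emotion label, and classification picks the label whose average is closest to a new vector. It is a deliberately simple stand-in chosen for illustration, not the model behind Microsoft's service; the class name and labels are hypothetical.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical nearest-centroid classifier over fixed-length feature vectors (illustration only). */
final class NearestCentroid {
    private final Map<String, double[]> centroids = new HashMap<>();

    /** "Training": average the feature vectors collected for each label. */
    void train(Map<String, List<double[]>> samplesByLabel) {
        centroids.clear();
        for (Map.Entry<String, List<double[]>> e : samplesByLabel.entrySet()) {
            List<double[]> samples = e.getValue();
            if (samples.isEmpty()) {
                continue; // nothing to learn for this label
            }
            double[] mean = new double[samples.get(0).length];
            for (double[] s : samples) {
                for (int i = 0; i < mean.length; i++) {
                    mean[i] += s[i] / samples.size();
                }
            }
            centroids.put(e.getKey(), mean);
        }
    }

    /** Returns the label whose centroid is closest (squared Euclidean distance) to the features. */
    String classify(double[] features) {
        String best = null;
        double bestDist = Double.POSITIVE_INFINITY;
        for (Map.Entry<String, double[]> e : centroids.entrySet()) {
            double[] c = e.getValue();
            double d = 0;
            for (int i = 0; i < c.length; i++) {
                double diff = features[i] - c[i];
                d += diff * diff;
            }
            if (d < bestDist) {
                bestDist = d;
                best = e.getKey();
            }
        }
        return best;
    }
}
```

For example, after training with happiness samples {1.0, 0.2} and {1.2, 0.2} and neutral samples at {0.7, 0.1}, the vector {1.1, 0.25} is classified as "happiness". Production services replace the averaging step with far larger models, which is why they need so much training data.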

Microsoft Cognitive Services

Create a Microsoft account if you don't already have one, then head over to "Get Started for Free". On the subsequent page, which says "Request new trials", select the following:

- Emotions

- Computer Vision

- Face

- Speaker Recognition

- Linguistic Analysis

Select "agree" to license terms. You should get a page like the following:

The API keys will be used later to call the Microsoft services.

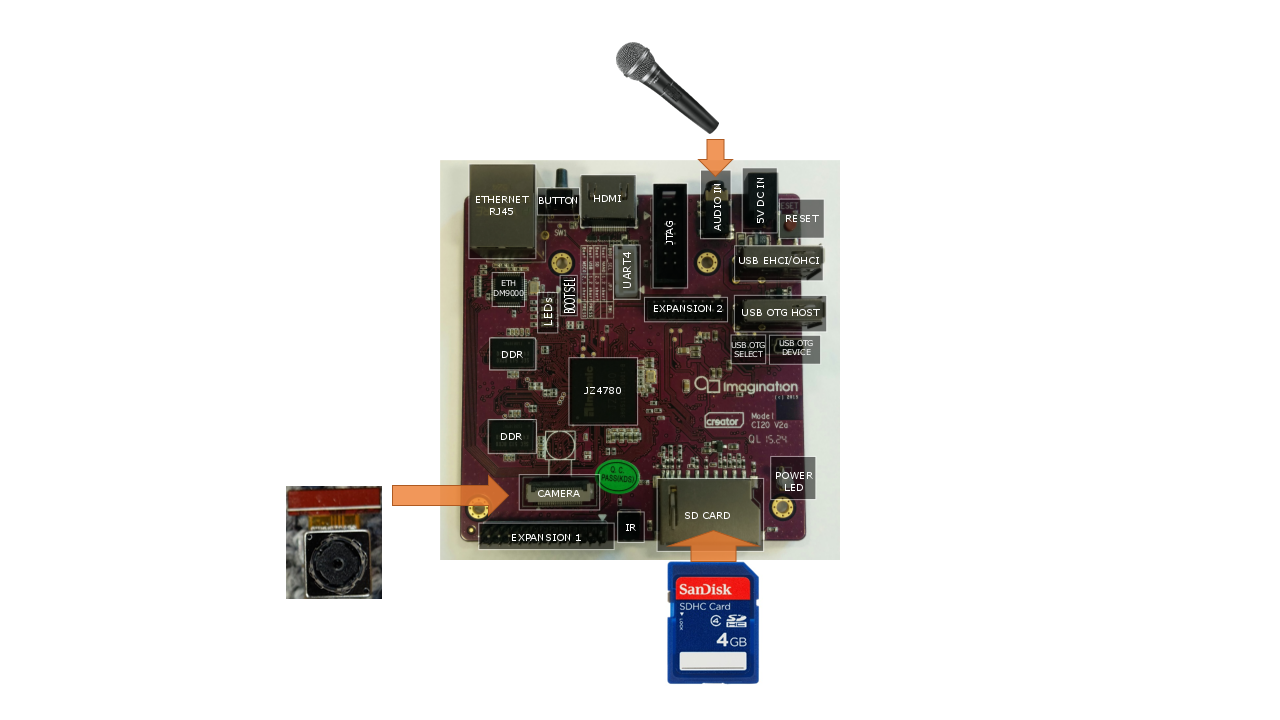

Hardware Setup

Hardware setup is easy. Connect the microphone to the audio port. If you have the OV5640 camera module, connect it to the camera port using the instructions. If you don't have the camera module, you can use a USB webcam instead.

You can connect a USB keyboard and an HDMI display and work directly on the console, or connect a network cable and ssh into the board.

Ci20 Software Setup

The factory-installed Debian on the Ci20 does not have the features we need. Download the latest Debian image and flash it by following the instructions from here.

Once Debian has booted, attach an Ethernet cable or set up WiFi, then use the following commands to install the required packages and bring them up to date:

$ echo 'deb http://httpredir.debian.org/debian jessie-backports main contrib non-free' | sudo tee -a /etc/apt/sources.list

$ sudo apt-get update

$ sudo apt-get upgrade

$ sudo apt-get install streamer openjdk-8-jdk
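The `streamer` package installed above is a command-line tool for grabbing frames from a video4linux device. One way a Java program could use it is to launch it through `ProcessBuilder` and wait for the JPEG to appear, as in the hypothetical helper below. The flags assumed here are `-c` (capture device), `-f` (output format) and `-o` (output file); check `man streamer` on your image before relying on them, and note that this is not necessarily how the project's own code captures images.

```java
import java.io.File;
import java.io.IOException;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper that grabs one JPEG frame with the `streamer` tool (flags are assumptions). */
final class FrameGrabber {

    /** Builds the streamer command line: capture device, JPEG format, output file. */
    static List<String> command(String device, File output) {
        return Arrays.asList("streamer", "-c", device, "-f", "jpeg", "-o", output.getPath());
    }

    /** Runs streamer and returns the captured file; throws if the tool fails or writes nothing. */
    static File capture(String device, File output) throws IOException, InterruptedException {
        Process p = new ProcessBuilder(command(device, output))
                .inheritIO()   // show streamer's own messages on the console
                .start();
        int exit = p.waitFor();
        if (exit != 0 || !output.isFile()) {
            throw new IOException("streamer failed with exit code " + exit);
        }
        return output;
    }
}
```

For example, `FrameGrabber.capture("/dev/video0", new File("frame.jpeg"))` would try to save one frame from the first video device; a USB webcam usually appears as `/dev/video0`.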

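To enable LED control, run the following as root. It disables each LED's default trigger and makes its brightness file writable by all users, so the application can switch the LEDs without root privileges: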

$ sudo -i

echo none > /sys/class/leds/led1/trigger

echo none > /sys/class/leds/led2/trigger

echo none > /sys/class/leds/led3/trigger

chmod a+w /sys/class/leds/led1/brightness

chmod a+w /sys/class/leds/led2/brightness

chmod a+w /sys/class/leds/led3/brightness

exit
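Once the triggers are disabled and the `brightness` files are writable, any program can switch an LED by writing `1` or `0` to `/sys/class/leds/ledN/brightness`. The sketch below shows that from Java; the class and method names are hypothetical, and the base directory is a constructor parameter only so the logic can be exercised outside the Ci20.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Hypothetical sketch of driving the Ci20 LEDs through the sysfs files made writable above. */
final class Leds {
    private final Path base;

    /** Normally "/sys/class/leds"; another directory can be passed for testing. */
    Leds(Path base) { this.base = base; }

    Leds() { this(Paths.get("/sys/class/leds")); }

    /** Writes "1" (on) or "0" (off) to <base>/led<n>/brightness. */
    void set(int n, boolean on) throws IOException {
        Path brightness = base.resolve("led" + n).resolve("brightness");
        Files.write(brightness, (on ? "1" : "0").getBytes(StandardCharsets.US_ASCII));
    }

    /** Turns on exactly one of LED1..LED3 and switches the other two off. */
    void showOnly(int n) throws IOException {
        for (int i = 1; i <= 3; i++) {
            set(i, i == n);
        }
    }
}
```

`new Leds().showOnly(1)` would light LED1 and switch off LED2 and LED3. Because sysfs is recreated at every boot, the trigger and `chmod` commands above have to be rerun after a reboot.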

Download and install IntelliJ IDEA Community Edition. It has a built-in Git client, so you can clone the repo from within the IDE. The project depends on the following libraries, which you'll need to download and then fix the references to.

Right-click the module and select "Open Module Settings", then "Libraries", to edit the library paths.

Once the build is successful, select Build->Artifacts as shown below to generate a jar file. The jar is written to the azure_cognitive/out/artifacts/azure_congnitive folder.

Copy azure_congnitive.jar from your host PC to the Ci20 using an SD card, a USB disk, or sftp, whichever is easiest.

Open a command prompt on the Ci20 and cd to the folder where you placed the .jar. Before running it, set environment variables for your API keys from the Microsoft Cognitive Services page seen earlier:

export EMOTIONDETECT_KEY=[paste Emotion key]

export SITUATIONANALYSIS_KEY=[paste Computer Vision key]
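The application picks the keys up from these environment variables. As a hedged sketch of that pattern (not the project's actual code), the helper below reads a required variable and prepares an HTTP POST that uploads raw image bytes with the key in the `Ocp-Apim-Subscription-Key` header, which is how Cognitive Services requests were authenticated. The class and method names are hypothetical, and the endpoint URL is left as a parameter because the service endpoints have changed over time.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical sketch: read an API key from the environment and prepare a Cognitive Services request. */
final class CognitiveRequest {

    /** Reads a required environment variable such as EMOTIONDETECT_KEY, failing loudly if unset. */
    static String requireEnv(String name) {
        String value = System.getenv(name);
        if (value == null || value.trim().isEmpty()) {
            throw new IllegalStateException(name + " is not set; export it before running the jar");
        }
        return value.trim();
    }

    /** Opens (but does not yet send) a POST that uploads raw image bytes with the subscription key. */
    static HttpURLConnection prepare(String endpoint, String key) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(endpoint).openConnection();
        conn.setRequestMethod("POST");
        conn.setDoOutput(true);
        conn.setRequestProperty("Content-Type", "application/octet-stream");
        conn.setRequestProperty("Ocp-Apim-Subscription-Key", key);
        return conn;
    }

    /** Sends the image and returns the HTTP status code; the JSON body is read separately. */
    static int send(HttpURLConnection conn, Path image) throws IOException {
        try (OutputStream out = conn.getOutputStream()) {
            out.write(Files.readAllBytes(image));
        }
        return conn.getResponseCode();
    }
}
```

A call might look like `CognitiveRequest.prepare(endpoint, CognitiveRequest.requireEnv("EMOTIONDETECT_KEY"))` followed by `send(conn, imagePath)`, where `endpoint` is the URL listed for your subscription. The standalone Emotion API has since been retired, so treat any endpoint from that era as an assumption to verify.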

Run the application:

java -jar azure_congnitive.jar

The output is shown on the console, and the LEDs near the Ethernet port turn on in response to the sentiment detected during processing. For example, LED1 turns on for a positive sentiment, i.e. when "happiness" or "surprise" is detected in the camera image. LED2 turns on for a negative emotion or a hostile situation, and LED3 indicates a neutral sentiment.
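The LED mapping can be sketched as a small function: pick the emotion with the highest score, treat "happiness" and "surprise" as positive (LED1), "neutral" as neutral (LED3), and anything else as negative (LED2), with a hostile situation forcing LED2. The score names are assumed to be the eight the Emotion API reported (anger, contempt, disgust, fear, happiness, neutral, sadness, surprise), and the `hostileSituation` flag is a hypothetical stand-in for however the situation-analysis result is interpreted; neither is taken from the project's code.

```java
import java.util.Map;

/** Hypothetical sketch of the LED mapping: LED1 positive, LED2 negative or hostile, LED3 neutral. */
final class SentimentLed {

    /** Returns the emotion name with the highest score, e.g. "happiness". */
    static String dominant(Map<String, Double> scores) {
        String best = null;
        double bestScore = Double.NEGATIVE_INFINITY;
        for (Map.Entry<String, Double> e : scores.entrySet()) {
            if (e.getValue() > bestScore) {
                bestScore = e.getValue();
                best = e.getKey();
            }
        }
        if (best == null) {
            throw new IllegalArgumentException("no emotion scores");
        }
        return best;
    }

    /** Chooses LED 1, 2 or 3; a hostile situation (hypothetical flag) overrides the emotion. */
    static int ledFor(Map<String, Double> scores, boolean hostileSituation) {
        if (hostileSituation) {
            return 2;
        }
        switch (dominant(scores)) {
            case "happiness":
            case "surprise":
                return 1;   // positive sentiment
            case "neutral":
                return 3;   // neutral sentiment
            default:
                return 2;   // anger, contempt, disgust, fear, sadness
        }
    }
}
```

For scores `{happiness=0.9, neutral=0.05, sadness=0.05}` with no hostile situation, `ledFor` returns 1.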

Here is a sample run:

If you look closely, there is a treasure trove of information returned by the situation analysis, even though the picture is out of focus and poorly lit. It correctly guessed my age and location, and it even inferred that my posture shows confidence, among other things.

You can do a lot more with the might of cognitive AI. I have provided a solid framework for easily interfacing Microsoft Cognitive Services with your Ci20 hardware. Feel free to fork the repo and extend it to other services.

Your robot can now understand emotions and analyze its environment. Have fun!

"I'm a machine. I can't be happy... but I understand more than you think."

- Cameron.
