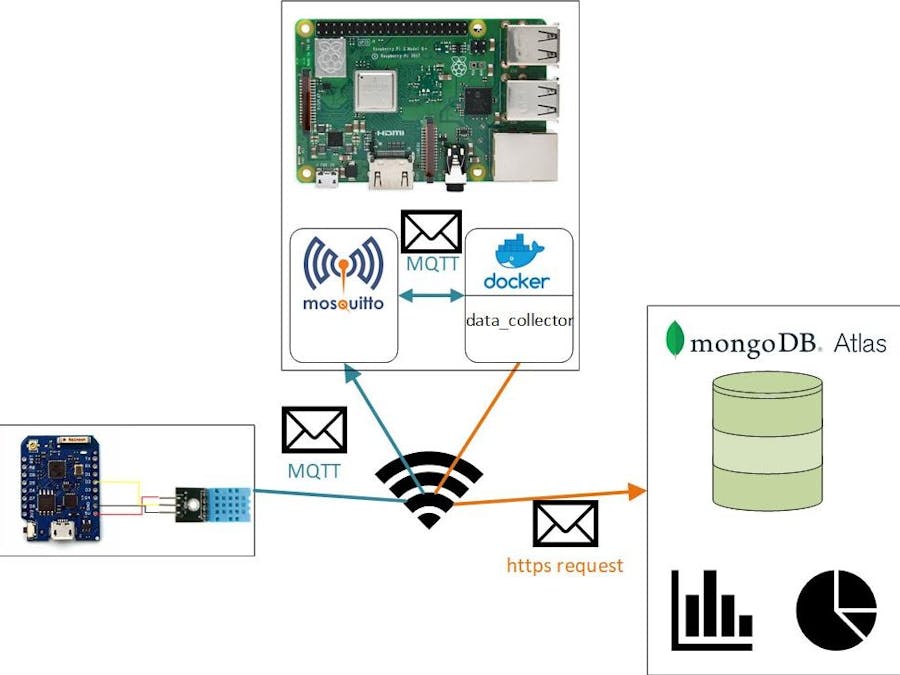

The goal of this project is to set up an infrastructure for further IoT projects at home. To verify that it works, I have set up an ESP8266 to measure the temperature and humidity in my bedroom. The ESP8266 sends the collected data over MQTT to a Raspberry Pi, which runs as the MQTT broker.

The Raspberry Pi also runs an application (data_collector) that subscribes to the topics from the ESP8266 and saves the collected data in a MongoDB database.

To display the data I use the chart function of MongoDB.

The Problem

I want to make my home smarter, and for this I need a way for microcontrollers in different rooms to communicate. The infrastructure should make it possible to collect and store data, so that I have a history of it, and also to send information to remote microcontrollers, so that I can set up a central controlling instance. I also need a convenient way to display different types of data.

The Idea

I want to implement the communication over MQTT. For that I need an MQTT broker, which can also serve as the central controlling unit. For this I use a Raspberry Pi. To store data for a long time and still have fast access to it, I need a database. I chose MongoDB for this project because it stores documents in a JSON-like format. For me this makes it a perfect match for MQTT. To make it easy to move to other systems later, I run my applications in Docker.

To collect data from different sensors in different rooms I need a microcontroller with a WiFi module. In this case I use the inexpensive ESP8266.

As the dashboard I use MongoDB Charts, so I can quickly display all kinds of data.

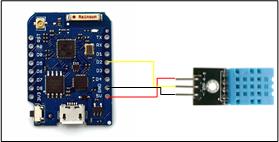

This is just a sample circuit to collect data and test the smart home infrastructure. For this I used an ESP8266 microcontroller and a DHT11 temperature and humidity sensor.

The MQTT-broker

First I set up a Raspberry Pi as the MQTT broker. For this I install the mosquitto broker. You can do this with the following commands:

$ sudo apt-get update

$ sudo apt-get install mosquitto mosquitto-clients

Then enable the mosquitto service so that it starts automatically at boot:

$ sudo systemctl enable mosquitto

If you want to check that everything runs correctly, type:

$ systemctl status mosquitto.service

To check that the broker works, subscribe to every topic in one terminal:

$ mosquitto_sub -t "#"

And in another terminal publish some data:

$ mosquitto_pub -t "test" -m "first check"

If everything runs correctly, you see the message "first check" in the first terminal.

Docker

To install Docker and all required packages on the Raspberry Pi, run the following command:

$ sudo curl -fsSL https://get.docker.com | sh

To use Docker more comfortably we also install docker-compose, a tool for configuring and running Docker containers. For more information, see the docker-compose documentation.

$ sudo apt-get install docker-compose

You will find the following code in my GitHub repository and can use it for your own projects.

data_collector

Let us implement the data_collector app. For this I will use Python and the PyCharm IDE. First we have to implement an MQTT subscriber to collect data from other devices. As the MQTT library we will use paho.

To make this MQTT access reusable for many applications we will create a new file named mqtt_client.py. In this file we will define a class named Subscriber. It has a constructor which initializes the class.

The constructor has two parameters. The first is the host, with the default "localhost", which is used if we do not override the host when creating an object of this class. localhost is the right hostname if we run the broker and the application on the same computer. The second parameter is the port, which also has a default value, namely the standard MQTT port 1883.

Next, a client from paho.mqtt.client is created. We need it to connect to the broker and to receive data.

After this we define some public variables which we need later to use the received data.

Now we will implement methods to start and stop the client.

Then we add a method to subscribe to a specific topic.

The last method we need is the callback function to receive data from the broker.

If the broker receives data for a topic we have subscribed to, the __on_message method is called. In this method we use the public variables defined in the constructor. We save the received payload and the topic of the message and also increment a counter, which later makes it easy to tell whether new data has arrived.
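A minimal sketch of what such a Subscriber class could look like, assuming the paho-mqtt package is installed. The attribute names topic, payload and counter, the helper _make_client, and the optional client parameter (there only so that a stand-in client can be passed in for testing) are assumptions of this sketch and may differ from the code in the repository.

```python
class Subscriber:
    """Thin wrapper around a paho MQTT client that remembers the latest message."""

    def __init__(self, host="localhost", port=1883, client=None):
        self.host = host
        self.port = port
        # `client` is a hypothetical hook for injecting a stand-in client in tests.
        self.client = client if client is not None else self._make_client()
        self.client.on_message = self.__on_message
        # Public variables holding the most recently received message.
        self.topic = None
        self.payload = None
        self.counter = 0

    @staticmethod
    def _make_client():
        import paho.mqtt.client as mqtt  # third-party: pip install paho-mqtt
        if hasattr(mqtt, "CallbackAPIVersion"):
            # paho-mqtt 2.x expects the callback API version as first argument.
            return mqtt.Client(mqtt.CallbackAPIVersion.VERSION2)
        return mqtt.Client()  # paho-mqtt 1.x

    def start(self):
        """Connect to the broker and start the background network loop."""
        self.client.connect(self.host, self.port)
        self.client.loop_start()

    def stop(self):
        """Stop the network loop and disconnect from the broker."""
        self.client.loop_stop()
        self.client.disconnect()

    def subscribe(self, topic):
        self.client.subscribe(topic)

    def __on_message(self, client, userdata, message):
        # Called by paho for every message on a subscribed topic.
        self.topic = message.topic
        self.payload = message.payload.decode("utf-8")
        self.counter += 1
```

In this sketch start() should be called before subscribe(), because paho can only send a subscription once a connection to the broker exists.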

We should also define a Publisher class, so that we can send information to our remote controllers.

This is almost the same as the Subscriber, but instead of the subscribe and __on_message methods we define a publish method.
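A Publisher along these lines might look as follows. This is again only a sketch that assumes the paho-mqtt package; the helper _make_client and the optional client parameter (for passing in a stand-in during tests) are hypothetical.

```python
class Publisher:
    """Thin wrapper around a paho MQTT client that only publishes messages."""

    def __init__(self, host="localhost", port=1883, client=None):
        self.host = host
        self.port = port
        # `client` is a hypothetical hook for injecting a stand-in client in tests.
        self.client = client if client is not None else self._make_client()

    @staticmethod
    def _make_client():
        import paho.mqtt.client as mqtt  # third-party: pip install paho-mqtt
        if hasattr(mqtt, "CallbackAPIVersion"):
            # paho-mqtt 2.x expects the callback API version as first argument.
            return mqtt.Client(mqtt.CallbackAPIVersion.VERSION2)
        return mqtt.Client()  # paho-mqtt 1.x

    def start(self):
        """Connect to the broker and start the background network loop."""
        self.client.connect(self.host, self.port)
        self.client.loop_start()

    def stop(self):
        """Stop the network loop and disconnect from the broker."""
        self.client.loop_stop()
        self.client.disconnect()

    def publish(self, topic, message):
        """Send `message` to `topic` on the broker."""
        self.client.publish(topic, message)
```

With a broker running, publishing to the topic "test" from such an object can be observed in a terminal running mosquitto_sub -t "#".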

If you like, you can test the communication with a short application.

db_service

If the MQTT broker and client run correctly we can implement a class that provides access to a MongoDB database. For this we need the pymongo library. The class should only be an abstraction layer between your application and the database, so if we later want to use another database we only have to change this class and not the whole application. In this case we don't have to implement much.

In the constructor I have only made the db_address configurable, but in most other cases you should make the database and collection configurable as well. For this you only have to extend __init__ with two further parameters.

The private method __create_doc converts the topic and payload to the desired format. Don't forget that MongoDB works with JSON-like documents.

The private method __insert_doc inserts the data into the database and returns the key of the document. We can use the key to access the data quickly.

The last method, save_data, is the only public method of the class; it calls the other two methods.
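The three methods described above could be sketched like this, assuming the pymongo package. The database and collection names, the document fields key, content and date, and the optional collection parameter (there so a stand-in can be used for testing) are all assumptions made for illustration, not the layout used in the repository.

```python
from datetime import datetime, timezone


class DatabaseService:
    """Abstraction layer between the application and a MongoDB collection."""

    def __init__(self, db_address="mongodb://localhost:27017", collection=None):
        if collection is None:
            from pymongo import MongoClient  # third-party: pip install pymongo
            # Hypothetical database and collection names.
            collection = MongoClient(db_address)["smarthome"]["sensor_data"]
        self.collection = collection

    def __create_doc(self, topic, payload):
        # Assumed document layout: topic as key, payload as content, plus a timestamp.
        return {
            "key": topic,
            "content": payload,
            "date": datetime.now(timezone.utc),
        }

    def __insert_doc(self, doc):
        # insert_one returns a result object whose inserted_id is the document key.
        return self.collection.insert_one(doc).inserted_id

    def save_data(self, topic, payload):
        """Convert topic and payload to a document, store it, return its key."""
        return self.__insert_doc(self.__create_doc(topic, payload))
```

Swapping the database later would then only mean replacing the body of this class, while callers keep using save_data.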

The database

To test the db_service we need a running MongoDB instance. There are two ways to get one.

-> The first way is to start a local database, as described here:

If you want to run MongoDB locally on a Raspberry Pi you can use an image from Docker Hub. In this case I have used the image from andresvidal. To start and stop the container with the right configuration, I write a docker-compose file. Open a terminal on your Raspberry Pi. Then create a folder in which to save the docker-compose.yml. For example:

$ mkdir ~/mongodb_compose

Then edit the compose file:

$ nano ~/mongodb_compose/docker-compose.yml

In the file we define a service named mongodb, with the image andresvidal/rpi3-mongodb3, a defined volume, and the standard MongoDB port 27017 forwarded to port 27017 on the localhost. If you want to check the volume later, you can do it with the command:

$ sudo docker volume ls

Now you can start the container.

$ cd ~/mongodb_compose

$ sudo docker-compose up

If you want to check whether the container is running, type:

$ sudo docker ps

If everything runs correctly you can test db_service.py.

-> The second way is to use the free Atlas service from MongoDB:

If you want to use the Atlas service, please create a free account.

- log in

- create a new cluster

- choose your provider and your region, cluster tier and name

While your cluster is being set up, you can configure the network and database access. Under database access you have to create a user.

When your cluster is ready, click on Collections and create a database and a collection.

Now go back and click on Connect. In the window that opens, choose "Connect your application".

This leads to a new window in which you can select your driver and the driver version. In this case we choose Python 3.6.

After choosing this you will get a connection string which you can include in your application code.

Now that we have finished all the preparations we can start writing the main program.

To use all the classes we defined before, we have to import them into the main program. We also need to import the time library, but we'll get to that later.

After this we have to create some objects from our classes.

We start by creating a publisher object, passing the hostname of the Raspberry Pi (if the program runs on the Raspberry Pi you can use localhost) and the MQTT port. We previously set the port as the default, but for readability I set it again in the main program.

The subscriber object is created in the same way as the publisher, but here we have to set some more values. First we create a helper variable old_counter, with which we can later check whether we have received a new message. Then we subscribe to a topic over which we will receive data from the microcontroller.

And last, don't forget to start the publisher and subscriber.

After initializing the MQTT communication we have to set up the database client. Here we can use the connection string we got from the MongoDB Atlas service.

The aim of our application is to filter the data, decode it and then save it into the database. Since we subscribed with the multi-level wildcard "/bedroom/corner/#" before, every time the microcontroller sends new data the callback function __on_message is called and saves the data into the public variables topic and payload of the Subscriber object.

First we need an infinite loop, because we want this application to run forever on our Raspberry Pi so that we lose no data. In it we check whether new data has arrived by testing whether the counter of the subscriber object is bigger than our helper variable old_counter. If this comparison is true, we only have to check which topic the new data belongs to. Then we print the meaning of the topic and the payload and save the decoded data into the database. As you may remember, the DatabaseService class converts the data into the JSON format and then sends it to MongoDB. At the end we let the program sleep for 250 milliseconds, and then everything starts all over again.
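The polling loop described above can be sketched as follows. The function names, the TOPIC_MEANINGS table, the temperature topic, the attribute name counter, and the assumption that every payload is a plain number are all illustrative choices, not taken from the repository. The subscriber and db arguments are expected to behave like the Subscriber and DatabaseService objects described earlier.

```python
import time

# Assumed mapping from topic to the meaning of its payload.
TOPIC_MEANINGS = {
    "/bedroom/corner/temperature": "temperature",
    "/bedroom/corner/humidity": "humidity",
    "/bedroom/corner/dew_point": "dew_point",
}


def handle_new_message(subscriber, db, old_counter):
    """Store the latest message if the counter has moved; return the new counter."""
    if subscriber.counter <= old_counter:
        return old_counter  # nothing new since the last check
    meaning = TOPIC_MEANINGS.get(subscriber.topic)
    if meaning is not None:
        value = float(subscriber.payload)  # assumes a numeric payload
        print(meaning, value)
        db.save_data(subscriber.topic, {meaning: value})
    return subscriber.counter


def run_forever(subscriber, db, poll_interval=0.25):
    """Check for new data every 250 ms until the process is stopped."""
    old_counter = subscriber.counter
    while True:
        old_counter = handle_new_message(subscriber, db, old_counter)
        time.sleep(poll_interval)
```

Because only the most recent message is kept between two checks, messages that arrive closer together than the polling interval could overwrite each other; collecting them in a queue inside the callback would be one way around that.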

Create MongoDB charts

To create some MongoDB charts, log in to the Atlas service again and click on the Charts tab.

Now add a new dashboard or use the default one.

You can give the dashboard a specific name and write a description.

After clicking the Create button, click on the dashboard name to add some charts.

In this drop-down list you can choose between several kinds of diagrams. For this project I use the line diagram.

To get some data for the chart you need to choose a data source.

Then you can select from all the data fields in your database and include them via drag and drop. You can give your graph specific colors in the Customize tab.

As an alternative you can create a dashboard with Losant

If you want to create a dashboard with Losant you have to create a connection between your database and your Losant device.

To do this, we need to create a device in Losant:

Click on the Devices button on the left side and then add a new device.

Give your device a name and describe it, if you want.

Then go to the Attributes tab.

In this tab we have to create the attributes of the device. In our case we have to set up a temperature, a humidity and a dew_point attribute. Through these attributes we will later link the data from MongoDB to the dashboard.

Now let us add a new workflow:

Click on the button Workflows on the left side and then on Add Workflow.

Here we can give the workflow a specific name and also describe it. As type we can choose the default option Application.

First we need a trigger to make a query to the database. In this case we can use a timer.

You can configure the Timer node as you like. In this case the microcontroller collects data every 10 minutes, so I configure the timer with the same interval.

The next node is the MongoDB node. Here we have to create a connection to the Atlas service using the connection string you used for the DatabaseService.

For the connection we also have to choose the collection name. Now we can set up a query. In our case we need a findOne in combination with a sort by date in descending order, because every 10 minutes we want to get the latest entry.

After this we have to define the path to the payload of the query.

Now copy the node and paste it twice. Change the key string in the findOne query to "/bedroom/corner/humidity" and "/bedroom/corner/dew_point", and the payload path to data.content.humidity and data.content.dew_point, respectively.
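To check from Python what such a query should return, the same findOne with a descending sort by date can be expressed with pymongo. This is only a sketch: the function name latest_entry is made up, and the field names key and date are assumptions about how the documents are laid out.

```python
def latest_entry(collection, key):
    """Return the newest document for `key`, or None if there is none."""
    # -1 requests descending order (the value of pymongo.DESCENDING),
    # so the most recent date comes first and find_one returns it.
    return collection.find_one({"key": key}, sort=[("date", -1)])
```

Here collection is expected to be a pymongo collection object, for example the one used by the database service.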

Now we set up a Device: State node to fill the defined attributes of the device.

After selecting the device, you can configure the data with which the attributes are to be linked in the node.

Now our workflow is ready. For debugging, and to make linking attributes to data easier, you can also add a Debug node.

Now let's add a new dashboard...

... and give a name and describe it.

Here you can add a new Block. In this case I added a gauge diagram of type Thermometer. Configure the blocks as you like and choose some diagram types to create a nice dashboard for your application.

*********************************************************************************************************

I hope this gives you an idea of how to implement a smart home project from the microcontroller up to the dashboard.

Please contact me if you have any questions about the project. If you have found any errors, I would be grateful if you would tell me.
