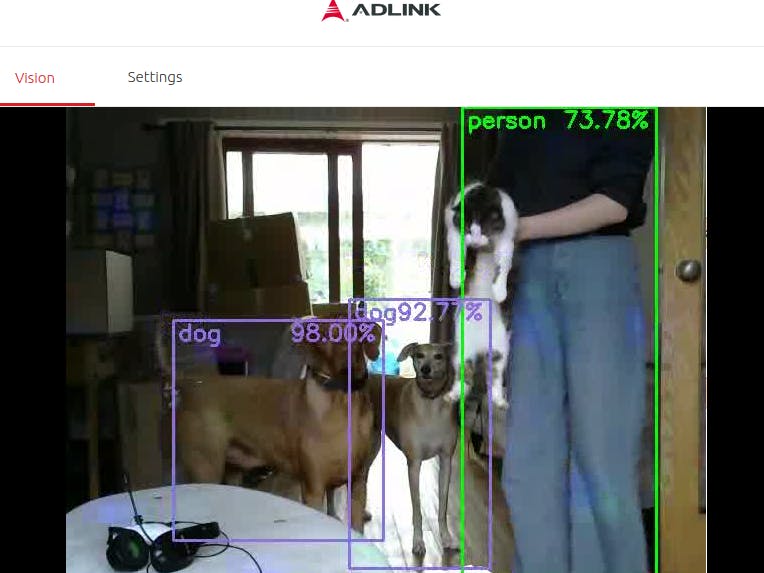

My goal was to trigger disco lights when a person or a dog is detected, playing "Stayin' Alive" for a person and "Who Let the Dogs Out?" for a dog.

This project is designed as a test application for trialling pre-trained models in an edge compute/edge AI environment. Intel's OpenVINO software can aggregate pre-trained models from a number of sources (https://docs.OpenVINOtoolkit.org/latest/omz_models_intel_index.html). I used ADLINK’s Vizi-AI, which has an Intel® Movidius™ X VPU chipset and an Intel Atom 3940 CPU and includes the ADLINK Edge software vision bundle. The model used was the default Vizi-AI model, Intel’s object detection model (frozen_inference_graph).

With appropriate models, future development for more industrial use would allow an edge compute smart camera to detect when a person is in a workspace where people should not be or, with some tuning, to act as a vision-based alarm for predators in an agricultural setting.

The ADLINK Edge platform is positioned as making this easy: it manages configured profiles for compatible hardware and includes tools such as frame streamer, AI Model manager, training streamer, AWS Model Streamer, OpenVINO and Node-RED as building blocks for machine vision solutions.

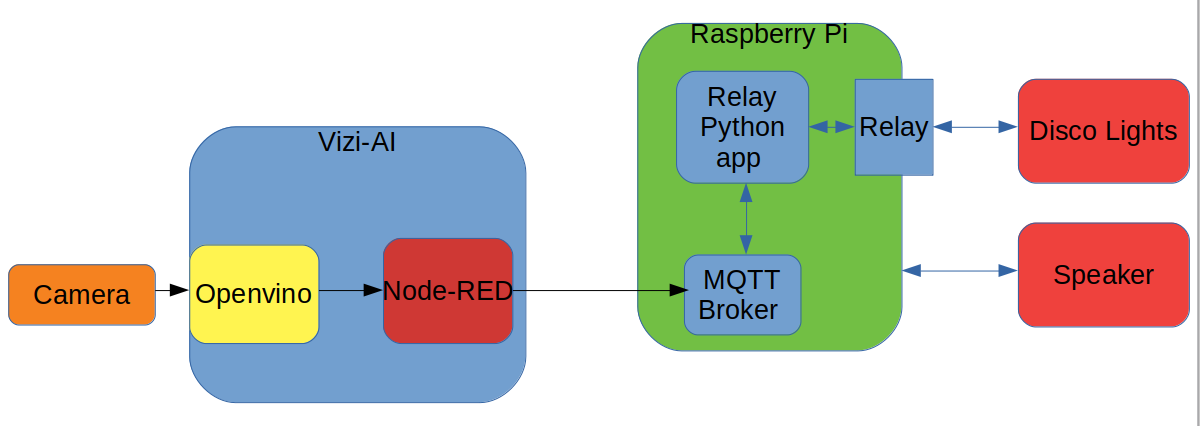

The system has two components:

1. Vizi-AI with webcam.

2. Raspberry Pi with relay board, speaker and disco lights.

The camera captures the scene and the data is sent to the inference engine (in this case OpenVINO) via ADLINK’s Data River, which is a publish/subscribe data bus. The results of the inference are then published to the Data River and received by a Node-RED instance running on the Vizi-AI (the Node-RED flow is described in more detail below). This flow processes the labels generated by the inference engine and, if a person is detected, sends an MQTT message to the broker (control topic, payload "Disco").

The relay Python application running on the Raspberry Pi subscribes to the control topic. When it receives a message on that topic, it examines the payload; if the payload is "Disco", it starts the disco lights and plays "Stayin' Alive". After 30 s of music and lights, the lights switch off and the music stops until a person or dog is detected again.
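The payload handling described above can be sketched roughly as follows. This is a minimal illustration and not the actual relay application: `DiscoController`, `start_show` and `stop_show` are hypothetical names, the relay and audio calls are left to the caller, and ignoring messages that arrive while a show is running is an assumption.

```python
import threading

DISCO_PAYLOAD = "Disco"  # payload named in the write-up
RUN_SECONDS = 30         # lights and music run for 30 s


class DiscoController:
    """Start a show on a "Disco" payload and stop it after a timeout."""

    def __init__(self, start_show, stop_show, run_seconds=RUN_SECONDS):
        # start_show / stop_show are hypothetical callables that would switch
        # the relay board and start or stop audio playback on the Pi.
        self._start_show = start_show
        self._stop_show = stop_show
        self._run_seconds = run_seconds
        self._timer = None
        self._lock = threading.Lock()

    def handle_payload(self, payload):
        """Handle one control-topic payload; return True if a show started."""
        if isinstance(payload, bytes):
            payload = payload.decode("utf-8", errors="replace")
        if payload.strip() != DISCO_PAYLOAD:
            return False
        with self._lock:
            if self._timer is not None:
                return False  # assumption: ignore triggers during a show
            self._start_show()
            self._timer = threading.Timer(self._run_seconds, self._finish)
            self._timer.daemon = True
            self._timer.start()
        return True

    def _finish(self):
        # Runs on the timer thread once the show time has elapsed.
        with self._lock:
            self._stop_show()
            self._timer = None
```

With an MQTT client library such as paho-mqtt (an assumption, since the write-up does not name the client used), the `on_message` callback would pass `msg.payload` to `handle_payload`.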

The Node-RED flow runs on the Vizi-AI (it is included in the attachments):

It has three debug taps along the flow to make debugging easier. From left to right, the functional nodes are as follows:

Detectionbox_reader : This is the listener on the ADLINK Data River. It listens for the inferred values broadcast by the inference engine (OpenVINO).

extract inferred objects : This extracts the labels from the inferred data (the inferred data contains a bounding box and a label for each object).

content switch : This forwards the data down a path based on the label (in this case the labels of interest are dog and person).

Msg Delay: The messages are generated for each frame of video. The delay box allows only one message per 30 seconds to be broadcast and discards the intermediate messages.

report a X : This box defines the value to be sent to the MQTT broker.

disco : This is the publisher that sends the message to the appropriate MQTT broker topic.
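The logic of these nodes can be approximated in Python as a rough sketch. It is not the Node-RED flow itself. The detection layout (a list of dicts with `label` and `box` keys), the dog payload `"DogDisco"`, and the use of one 30-second limiter per label path are all assumptions made for illustration; only the `"Disco"` payload for a person comes from the description above.

```python
import time

# Payload reported for each label of interest. "Disco" for a person comes
# from the write-up; the dog payload is a hypothetical placeholder.
PAYLOAD_BY_LABEL = {"person": "Disco", "dog": "DogDisco"}


def extract_labels(detections):
    """Return the label of every detected object (assumed dict layout)."""
    return [d["label"] for d in detections if "label" in d]


class Throttle:
    """Pass at most one message per interval and discard the rest."""

    def __init__(self, interval_s=30.0, clock=time.monotonic):
        self._interval_s = interval_s
        self._clock = clock
        self._last_sent = None

    def allow(self):
        now = self._clock()
        if self._last_sent is not None and now - self._last_sent < self._interval_s:
            return False  # still inside the window: drop this message
        self._last_sent = now
        return True


def process_frame(detections, throttles):
    """Return the payloads to publish for one frame of inference results."""
    payloads = []
    # dict.fromkeys de-duplicates labels while keeping their order.
    for label in dict.fromkeys(extract_labels(detections)):
        payload = PAYLOAD_BY_LABEL.get(label)  # content switch + report
        if payload is not None and throttles[label].allow():
            payloads.append(payload)
    return payloads
```

Each returned payload would then be published to the MQTT broker by the final node. Injecting the clock into `Throttle` makes the 30-second window easy to exercise without waiting in real time.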

In summary

The project was a success: I was able to detect the objects and trigger the intended outcome, playing a specific piece of music and turning on the lights. As mentioned earlier, the ADLINK Vizi-AI makes it fairly easy to deliver a quick solution by configuring its apps rather than writing large amounts of code. To put this into industrial practice, it would be easy to adapt it to recognise different objects and to replace the music with a recorded message or an alarm that alerts for safety or security. It could then be scaled out across numerous devices using ADLINK’s device management through profile builder, which I used on this project.
