Unlock the power of embedded AI with this hands-on project that turns an ESP32-S3 microcontroller into a smart voice assistant capable of natural interaction and hardware control using the Model Context Protocol (MCP). Unlike typical voice assistants that rely on proprietary cloud services, this DIY solution blends locally captured voice, real AI reasoning, and smart device control into a cohesive, customizable system for makers and developers.

This project walks you through creating a portable AI voice assistant based on the ESP32-S3-WROOM-1 module. Your assistant can:

- Listen for a wake word

- Capture your voice

- Stream audio to cloud AI models

- Generate natural language responses

- Control smart devices via MCP integrations

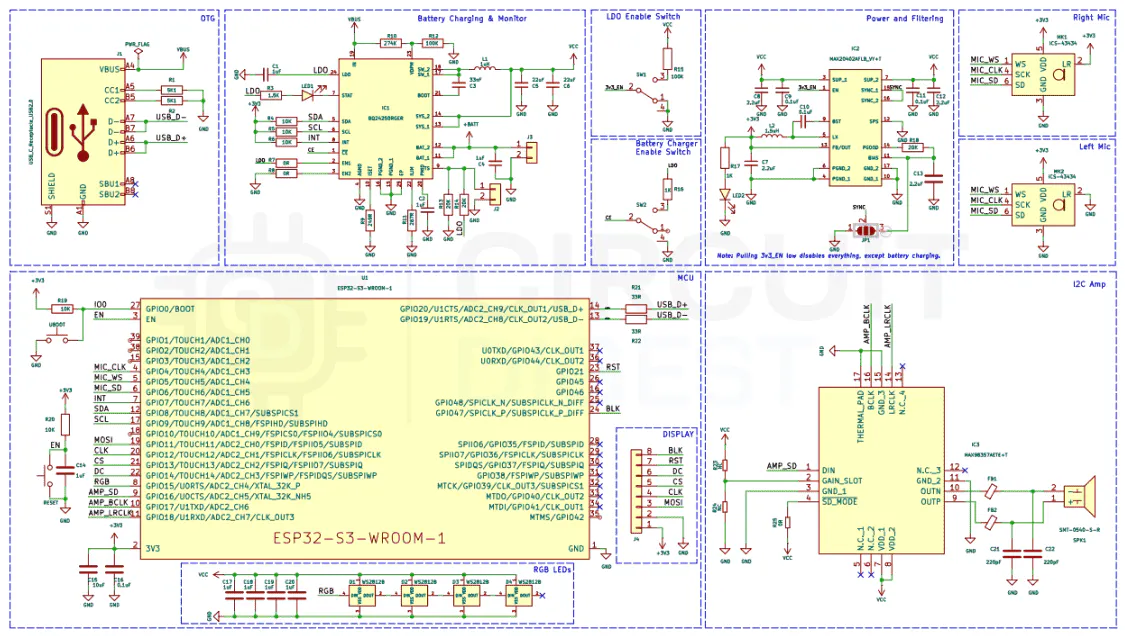

At its core, the design combines Espressif’s Audio Front End (AFE) framework for clean audio capture with real-time voice processing and a hybrid AI architecture that splits tasks between the ESP32 and cloud services.

- Efficient Voice Capture: Dual MEMS microphones and AFE enable echo cancellation, noise suppression, and accurate voice detection.

- Hybrid Intelligence: Wake-word detection runs on the device, while the heavy processing (speech-to-text, reasoning, text-to-speech) runs remotely. This keeps the assistant responsive without giving up conversational depth.

- MCP Integration: Using the Model Context Protocol, your assistant can discover, understand, and control connected hardware — such as lights, relays, sensors, and IoT devices — just by talking to it.

- Portable & Flexible: Runs on USB power or a Li-ion battery, with visual feedback via LEDs and manual controls through buttons.

Wake-Word & Voice Capture: The ESP32 stays in low-power listening mode. Once the wake word is detected, audio is captured using the onboard microphones and AFE suite.
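
The wake-then-capture behavior described above can be pictured as a two-state machine. The following is a minimal host-side sketch in Python, not the project's firmware. The state and event names are hypothetical, and the `TIMEOUT` event is an assumption added so the device cannot get stuck waiting for speech.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()       # low-power listening, only the wake-word detector runs
    CAPTURING = auto()  # wake word heard, microphone audio is being recorded


class Event(Enum):
    WAKE_WORD = auto()     # hypothetical: the wake-word detector fired
    SPEECH_ENDED = auto()  # hypothetical: voice detection reported silence
    TIMEOUT = auto()       # assumption: nobody spoke after the wake word


# Allowed transitions; any (state, event) pair not listed here is ignored.
TRANSITIONS = {
    (State.IDLE, Event.WAKE_WORD): State.CAPTURING,
    (State.CAPTURING, Event.SPEECH_ENDED): State.IDLE,
    (State.CAPTURING, Event.TIMEOUT): State.IDLE,
}


def next_state(state: State, event: Event) -> State:
    """Return the state that follows `event`, or stay put if it does not apply."""
    return TRANSITIONS.get((state, event), state)


def run(events):
    """Feed a sequence of events through the machine and return the visited states."""
    state = State.IDLE
    visited = [state]
    for event in events:
        state = next_state(state, event)
        visited.append(state)
    return visited
```

Keeping the transitions in a table makes it easy to add further states later (for example, one for playing back the spoken reply) without touching the control logic.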

Streaming & AI Processing: Captured audio is streamed over Wi-Fi, via WebSockets, to a cloud backend running the ASR, LLM, and TTS services.
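
Streaming audio usually means cutting the captured samples into small, fixed-size frames and sending each one as soon as it is ready. The sketch below shows only that framing step. The audio format (16 kHz, 16-bit mono PCM) and the 20 ms frame length are assumptions for illustration, since the source does not state the project's actual format.

```python
SAMPLE_RATE_HZ = 16_000  # assumed sample rate
BYTES_PER_SAMPLE = 2     # assumed 16-bit mono PCM
FRAME_MS = 20            # assumed frame duration

# 320 samples per frame at 2 bytes each = 640 bytes
FRAME_BYTES = SAMPLE_RATE_HZ * FRAME_MS // 1000 * BYTES_PER_SAMPLE


def split_into_frames(pcm: bytes, frame_bytes: int = FRAME_BYTES):
    """Yield fixed-size frames; a short final frame is zero-padded (silence)."""
    for start in range(0, len(pcm), frame_bytes):
        frame = pcm[start:start + frame_bytes]
        if len(frame) < frame_bytes:
            frame += b"\x00" * (frame_bytes - len(frame))
        yield frame
```

In a real client, each yielded frame would go out as one binary WebSocket message. The sending code is left out here because it depends on the WebSocket library and backend in use.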

Natural Language Understanding: The backend transcribes the speech and passes the text to a language model, which works out the intent and generates a response.

MCP Control & Feedback: Through MCP, the assistant can call hardware control functions — turning on devices, reading sensors, or performing actions — and then speak the result back to the user.
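
MCP messages are JSON-RPC 2.0: a client discovers the available tools with `tools/list` and invokes one with `tools/call`. The sketch below shows how a handler might answer those two requests. The `set_relay` tool, its schema, and the in-memory relay state are hypothetical stand-ins for real hardware, and the source does not say which side of the system hosts the tool registry.

```python
import json

# Hypothetical device state standing in for a real relay or GPIO pin.
relay_on = False


def set_relay(arguments: dict) -> str:
    """Hypothetical tool: switch the relay and describe the new state."""
    global relay_on
    relay_on = bool(arguments["on"])
    return f"Relay is now {'on' if relay_on else 'off'}"


# Tool registry: the name, description, and schema are illustrative only.
TOOLS = {
    "set_relay": {
        "handler": set_relay,
        "description": "Turn the relay on or off",
        "inputSchema": {
            "type": "object",
            "properties": {"on": {"type": "boolean"}},
            "required": ["on"],
        },
    },
}


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for tools/list or tools/call."""
    request = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": request.get("id")}
    method = request.get("method")
    params = request.get("params", {})

    if method == "tools/list":
        reply["result"] = {"tools": [
            {"name": name,
             "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            reply["error"] = {"code": -32602, "message": "Unknown tool"}
        else:
            text = tool["handler"](params.get("arguments", {}))
            reply["result"] = {"content": [{"type": "text", "text": text}],
                               "isError": False}
    else:
        reply["error"] = {"code": -32601, "message": "Method not found"}
    return json.dumps(reply)
```

The text returned in `content` is what the language model sees after the call, so it can be turned into the spoken confirmation for the user.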

Building this project gives you practice in:

- Designing and assembling embedded AI hardware

- Configuring Espressif AFE for voice processing

- Integrating MCP for bidirectional AI ↔ hardware interaction

- Streaming audio and handling real-time AI conversation flows

- Building a hybrid cloud + edge system that feels native and responsive

With this ESP32 AI Voice Assistant, you’ll go beyond basic voice activation and build a true conversational AI interface that can interact both verbally and physically with the world. It’s an open, hackable platform with no proprietary voice ecosystems or subscription fees, letting you own every layer: hardware, firmware, and cloud AI logic.
