In contemporary urban life, digital presence is mostly experienced as a form of absence. People inhabit public spaces while being perceptually elsewhere: scrolling, messaging. Non-places such as transit zones, corridors, and waiting areas amplify this condition; they are spaces designed for passing through rather than for being in.

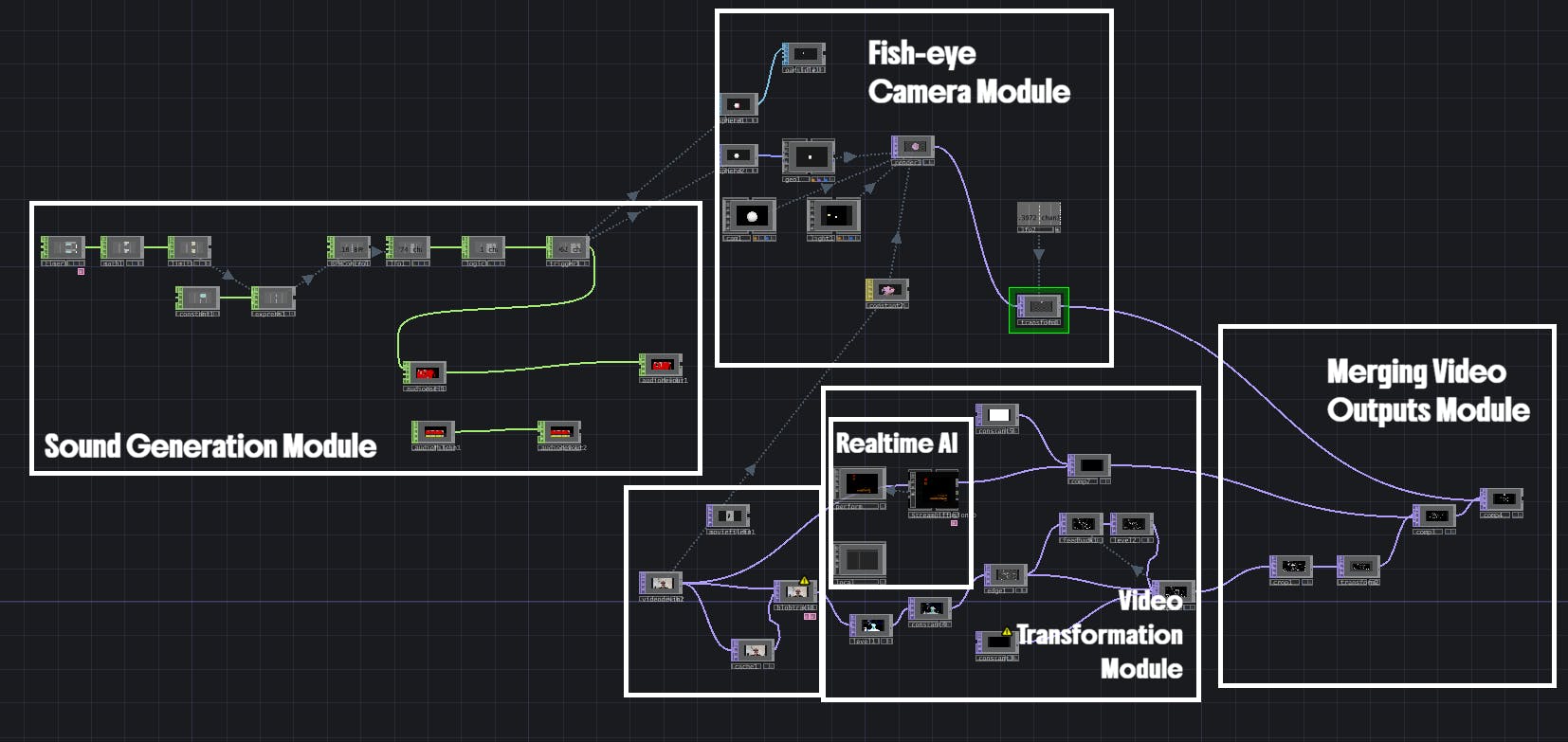

Rather than treating digital systems as tools for information delivery or efficiency, this project explores digital presence as a perceptual and affective mediator: a layer that can redistribute attention, perception, and bodily awareness. The installation proposes a speculative interface, a metronome-like holder in which participants are invited to place their phones. The real-time camera feed is processed through TouchDesigner and AI prompting. The environment becomes a jungle-like landscape, human figures remain visible but are rendered as outlines, and the central sphere presents a fisheye view of reality. The jungle functions not as an escape but as a speculative ecology, contrasting the anonymity of non-places with an imagined environment of density, attention, and co-presence.

Participants become aware not only of their own presence but also of others' presence, as moving bodies outlined within the same altered reality. Rather than asking users to disconnect from their devices, the project reframes the device itself as a choreographic apparatus. Digital presence here is not about representation or immersion but about re-imagination: using algorithmic transformation to make the act of being in space visible, strange, and shared. In that sense, the project does not try to solve or fix digital distraction. Instead, it performs a critical gesture, proposing that digital systems could function as infrastructures capable of producing new forms of relational presence between bodies, technologies, and environments.

Footage: https://vimeo.com/1162812965?share=copy&fl=sv&fe=ci

Key learnings & considerations:

- The current solution requires multiple hardware and software elements, so it should be considered a functional prototype. Further standardization and consolidation work is needed before it is ready for end users.

- Control through model prompting is limited. For example, attempts to keep the model from generating certain elements, or to restrict the scope of generation, were unsuccessful. As a trade-off, we added a separate visual module that shows the human bodies from the captured environment.
