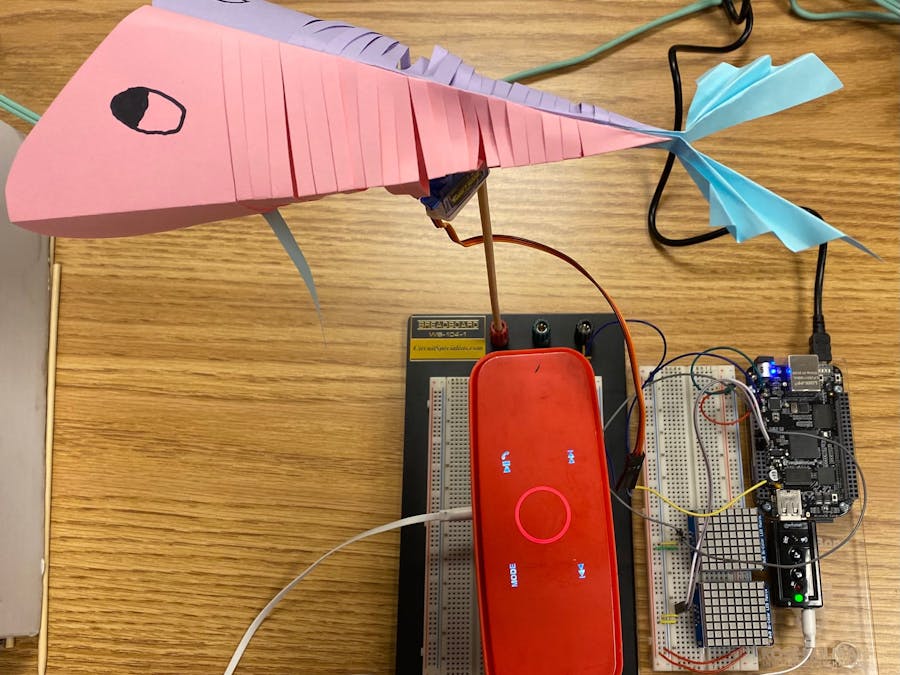

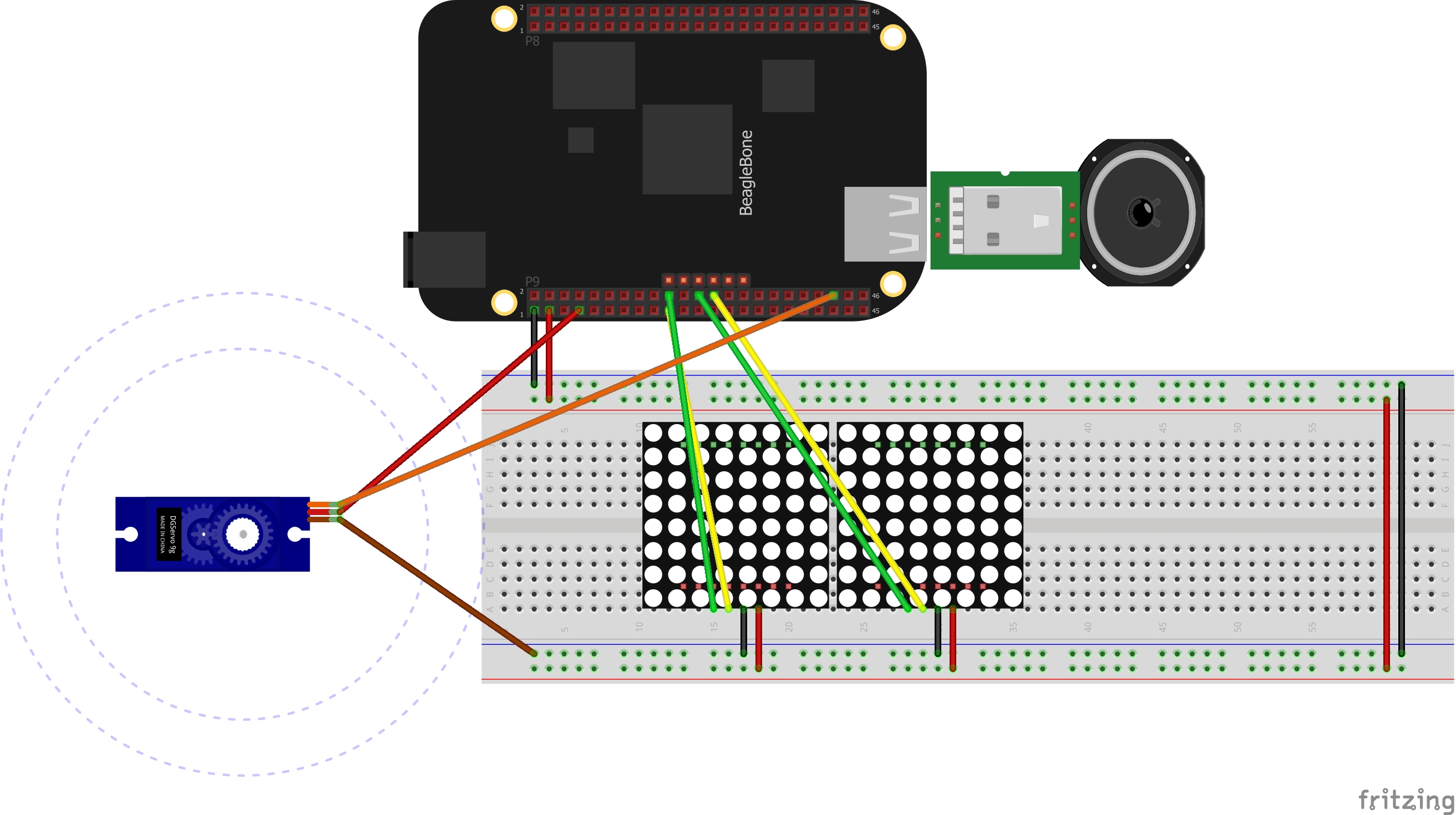

We were inspired by a viral video of an Alexa connected to a talking fish mounted on a wall. We didn't want to copy that idea exactly, and we wanted to apply what we had learned in some of our classes, but it is where the dancing fish began. This project is a BeagleBone-based device that can stream any chosen audio. The music comes from a specified YouTube video and is played on a wired speaker. To make the music more interactive, the device features a fish, driven by a servo, that moves on the beat. Additionally, a sound-activated LED music spectrum, displayed on two 8x8 LED matrices, reflects the different tones being played.

Currently, the user selects a song from YouTube through the computer terminal. A script handles the input and uses the YoutubeDL library to download a .wav file into the audio downloads folder. Once the file is downloaded, the script runs two processes in parallel: a Python file for the audio processing and a script to play the music. The Python file computes the Fourier transform of the audio at periodic intervals with the help of various libraries, then calls two different interfaces: one to handle the LED matrix output and the other for the servo motor output.
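To make the audio-processing step more concrete, here is a minimal sketch of how one window of samples could be turned into eight column heights for an 8x8 matrix and a servo angle for the fish. It is not our actual code: the function names (`fft`, `band_heights`, `fish_angle`), the 64-sample window, the equal-width bands, and the 0 to 90 degree servo range are all assumptions made for illustration, and a small pure-Python FFT stands in for the libraries the project uses so that the example runs on its own.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 FFT. len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def band_heights(window, n_bands=8, max_height=8):
    """Map one audio window to n_bands column heights in 0..max_height.

    Hypothetical helper: bands are equal-width groups of FFT bins,
    scaled relative to the loudest band in this window.
    """
    n = len(window)
    # Keep bins 1..n/2 (skip the DC term, drop the mirrored negative half).
    magnitudes = [abs(c) for c in fft(window)[1:n // 2 + 1]]
    per_band = len(magnitudes) // n_bands
    energies = [
        sum(magnitudes[b * per_band:(b + 1) * per_band])
        for b in range(n_bands)
    ]
    peak = max(energies)
    if peak == 0:
        return [0] * n_bands  # silence: leave every column dark
    return [int(round(max_height * e / peak)) for e in energies]


def fish_angle(heights, max_height=8, max_angle=90.0):
    """Hypothetical servo mapping: swing the fish with the lowest band."""
    return max_angle * heights[0] / max_height


if __name__ == "__main__":
    # A pure low-frequency tone: two full cycles in a 64-sample window.
    tone = [math.sin(2 * math.pi * 2 * i / 64) for i in range(64)]
    heights = band_heights(tone)
    print(heights, fish_angle(heights))
```

In a real run, each window would be a slice of the downloaded .wav file, and the resulting heights and angle would be passed to the LED matrix and servo interfaces once per interval.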

We'd like to add several features, or at least suggest some ideas for future additions. First, we'd like to look into making the visualizer code compatible with streaming services other than YouTube (e.g. Spotify, Apple Music, or Pandora). We would also like to add more playback capabilities, such as pausing the music and displaying the cover of the music video on an LCD.
