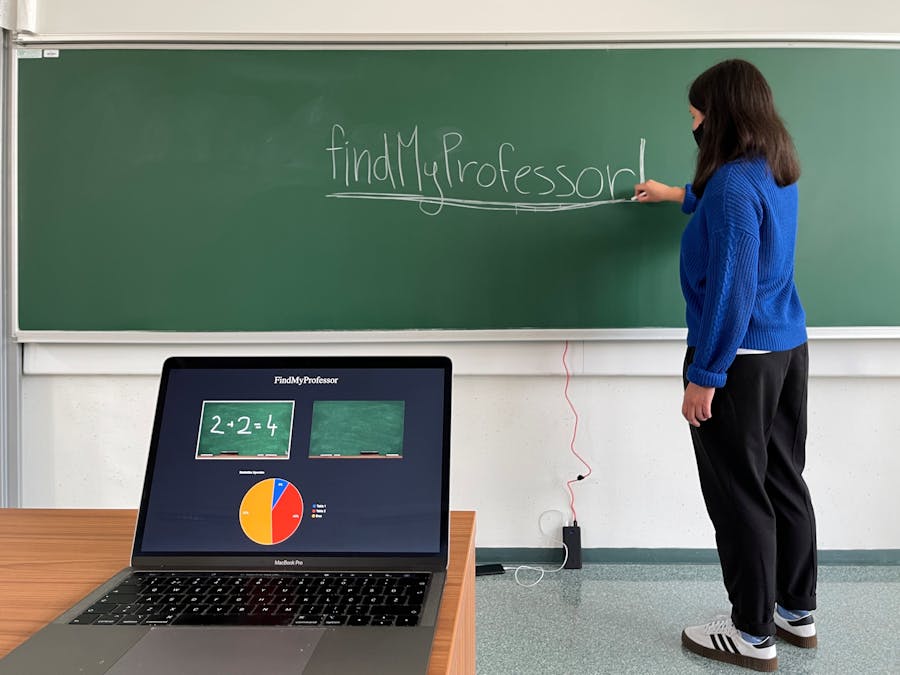

It was March 2020, and suddenly the whole world fell into a screen abyss: COVID devastated our lives and forced us to sit through lectures in long, frustrating Zoom meetings. The worst part? We couldn't see a darn thing. The writing on the board was too small to make out, and video compression only made it worse. We tried bargaining with professors to move the camera during lectures and tried a few other workarounds, but to no avail, so we decided to solve the problem ourselves.

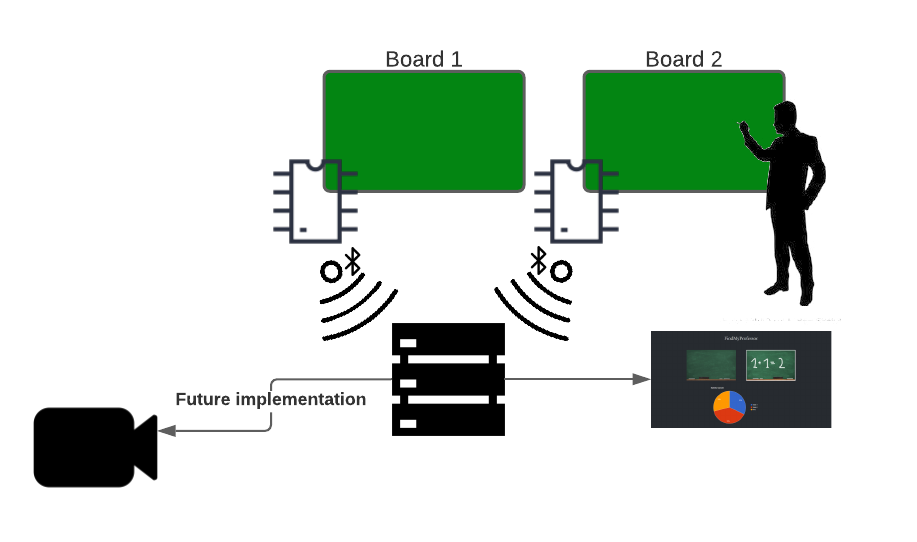

Using the Arduino Nano 33 BLE, ML models, and a central Python-based server, we designed a solution that detects which board the professor is using, so that the pre-installed PTZ cameras can be commanded to turn toward it and zoom in.

You might be wondering: isn't face tracking, or just plain image tracking, easier? It may be, but it has privacy and tracking implications in the academic world and requires orders of magnitude more expensive hardware. On top of that, the Arduino just looks cute.

How?

Our project works by attaching an Arduino to each board we want to get data from. Each Arduino needs a power source and the program we have written, which is available on our GitHub. The program incorporates a machine learning model, developed using the Edge Impulse platform and based on 140+ samples of several states (idle, multiple types of writing, and talking). The model takes the audio an Arduino records and, using MFCC analysis and an NN classifier, calculates the probability that its board is being written on.
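Edge Impulse's MFCC processing block does the feature extraction for you, but if you're curious what it roughly computes, here's a minimal, stdlib-only sketch of the last two steps of the textbook MFCC pipeline: spacing filters evenly on the mel scale, then turning filterbank energies into coefficients with a log and a DCT-II. Framing, windowing, the FFT and the triangular filter weighting are left out, and this is an illustration of the general technique, not Edge Impulse's exact implementation (its filter count, frequency range and normalization may differ).

```python
import math


def hz_to_mel(f_hz: float) -> float:
    """Convert a frequency in Hz to the (HTK-style) mel scale."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_filter_centers(n_filters: int, f_min: float, f_max: float) -> list[float]:
    """Center frequencies (Hz) of n_filters triangular filters, evenly spaced in mel."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_filters + 1)
    # Points 1..n_filters are the centers; points 0 and n_filters+1 are the outer edges.
    return [mel_to_hz(m_min + step * i) for i in range(1, n_filters + 1)]


def mfcc_from_filterbank(energies: list[float], n_coeffs: int) -> list[float]:
    """Log-compress mel filterbank energies, then decorrelate them with an (unscaled) DCT-II."""
    logs = [math.log(max(e, 1e-10)) for e in energies]  # floor avoids log(0)
    n = len(logs)
    return [
        sum(logs[j] * math.cos(math.pi * k * (j + 0.5) / n) for j in range(n))
        for k in range(n_coeffs)
    ]
```

For example, `mel_filter_centers(32, 300, 8000)` places 32 filters between 300 Hz and 8 kHz, bunched more tightly at low frequencies; those numbers are illustrative, not the settings our model uses. The resulting coefficients are what the NN classifier receives as input.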

We are aware that no two writing boards are the same: composition, mounting mechanism, and even the type of writing utensil contribute many small differences that add up. Even though our model is quite generic and works on most boards, it's not always perfect. If need be, you can always collect your own samples in your particular classroom and train a model with the same MFCC analysis and NN classifier, but be careful with the data labels. We found that too much segregation leads to not-so-stellar results and concluded that the data should be split into two simple groups: writing (writing on the board, talking while writing on the board, drawing on the board, writing while someone else is talking, ...) and idle (talking, walking around, someone else talking, complete silence, ...). This classification method yielded the best results, with confidence above 92% most of the time!
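If you record your own samples, one way to follow the two-group advice is to keep whatever descriptive labels help you stay organized while recording, then collapse them into the two training classes before uploading. A minimal sketch, where the fine-grained label names and the `(filename, label)` representation are hypothetical placeholders you'd rename to match your own recordings:

```python
from collections import Counter

# Hypothetical fine-grained labels used while recording; rename to match yours.
WRITING_LABELS = {"writing", "talking_while_writing", "drawing", "writing_while_other_talks"}
IDLE_LABELS = {"talking", "walking", "other_talking", "silence"}


def collapse_label(label: str) -> str:
    """Map a fine-grained recording label onto one of the two training classes."""
    if label in WRITING_LABELS:
        return "writing"
    if label in IDLE_LABELS:
        return "idle"
    raise ValueError(f"unknown label: {label!r}")


def collapse_dataset(samples: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Relabel (filename, label) pairs, leaving filenames untouched."""
    return [(name, collapse_label(label)) for name, label in samples]


def class_counts(samples: list[tuple[str, str]]) -> Counter:
    """Count samples per class, handy for spotting an unbalanced dataset."""
    return Counter(label for _, label in samples)
```

For instance, `collapse_dataset([("rec01.wav", "drawing"), ("rec02.wav", "silence")])` returns `[("rec01.wav", "writing"), ("rec02.wav", "idle")]`. Raising on unknown labels is deliberate: a typo in a label then fails loudly instead of silently landing in the wrong class.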

This data is then sent to a server over BLE, where a Python program uses the probabilities from both Arduinos to make a final decision on whether the professor is writing on a blackboard and, if so, on which one. In short, we check whether either probability is higher than 60% and then pick the higher value. Easy as pie :). We have also put together a simple web application, served by Flask on the backend, that dynamically receives data through SocketIO and displays the activity on the blackboards. For added value, a pie chart shows statistics for the whole recorded session.
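The decision rule boils down to a few lines. Here's a minimal sketch of it, plus a small counter of the kind a pie chart could be fed from. The board names `"left"` and `"right"` are hypothetical, and the statistics assume decisions are made at a regular interval, so each board's share of decisions approximates its share of session time.

```python
from collections import Counter
from typing import Optional

THRESHOLD = 0.60  # a board must exceed 60% "writing" probability to count


def pick_board(probs: dict[str, float], threshold: float = THRESHOLD) -> Optional[str]:
    """Return the board with the highest writing probability, or None if none exceeds the threshold."""
    if not probs:
        return None
    board, p = max(probs.items(), key=lambda item: item[1])
    # "Is either above 60%?" is the same as "is the highest one above 60%?"
    return board if p > threshold else None


class SessionStats:
    """Counts decisions so a pie chart can show each board's share of the session."""

    def __init__(self) -> None:
        self.counts = Counter()

    def record(self, decision: Optional[str]) -> None:
        # No board chosen means nobody is writing.
        self.counts[decision or "idle"] += 1

    def shares(self) -> dict[str, float]:
        total = sum(self.counts.values())
        return {k: v / total for k, v in self.counts.items()} if total else {}
```

For example, `pick_board({"left": 0.72, "right": 0.41})` returns `"left"`, while `pick_board({"left": 0.55, "right": 0.58})` returns `None` because neither board clears the threshold. Keeping the rule in one small function makes it easy to tweak the 60% value or add a third board later.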

Don't forget! For this project to work, you need to change the hard-coded MAC addresses/UUIDs in the Python server code. You can find your Arduino's address with "scanner.py", an example script from the Bleak library, available on the developer's GitHub: https://github.com/hbldh/bleak/blob/develop/examples/scanner.py
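Since a mistyped address only shows up as a failed connection, it can help to sanity-check the hard-coded values before starting the server. A minimal sketch: the board names, addresses and characteristic UUID below are hypothetical placeholders (the UUID is just a well-formed example and must match whatever your Arduino sketch defines). Addresses may be MAC addresses or UUIDs because on macOS, Bleak reports a CoreBluetooth UUID instead of a MAC address.

```python
import re
import uuid

# Hypothetical values: replace with the addresses scanner.py prints for your boards.
# On macOS, Bleak reports a CoreBluetooth UUID here instead of a MAC address.
BOARD_ADDRESSES = {
    "left": "AA:BB:CC:DD:EE:01",
    "right": "AA:BB:CC:DD:EE:02",
}
# Hypothetical characteristic UUID; it must match the one in the Arduino sketch.
CHARACTERISTIC_UUID = "19b10001-e8f2-537e-4f6c-d104768a1214"

MAC_RE = re.compile(r"([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}")


def is_valid_address(address: str) -> bool:
    """Accept either a colon-separated MAC address or a UUID string."""
    if MAC_RE.fullmatch(address):
        return True
    try:
        uuid.UUID(address)
    except ValueError:
        return False
    return True


def check_config() -> list[str]:
    """Return a list of problems with the hard-coded values (empty if all look valid)."""
    problems = [
        f"{board}: {addr!r} is neither a MAC address nor a UUID"
        for board, addr in BOARD_ADDRESSES.items()
        if not is_valid_address(addr)
    ]
    if len(set(BOARD_ADDRESSES.values())) != len(BOARD_ADDRESSES):
        problems.append("two boards share the same address")
    try:
        uuid.UUID(CHARACTERISTIC_UUID)
    except ValueError:
        problems.append(f"{CHARACTERISTIC_UUID!r} is not a valid UUID")
    return problems
```

Calling `check_config()` at startup and refusing to run if it returns anything catches copy-paste mistakes, such as pasting the same address for both boards, before any BLE connection is attempted.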

What next?

Because of the time and scope limitations of our project, this is as far as we set out to go, but we have a lot of ideas for improvements and future development. The next obvious step is to adapt the server code so that it can send commands to the PTZ camera via HTTP GET requests over IP, and maybe even integrate vision-based tracking.

P.S. Most modern PTZ cameras are operated with simple HTTP GET or POST requests, so a simple curl command from the server would suffice.
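As that step isn't implemented yet, here's only a rough sketch of what it could look like from Python, assuming the camera can recall positions saved beforehand as presets through an HTTP GET request. The camera address, the `/ptz` path, the `cmd`/`id` query parameters and the preset numbers are all hypothetical; every vendor's HTTP/CGI API is different, so check your camera's manual.

```python
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen

# Hypothetical camera address and endpoint; consult your camera's HTTP API manual.
CAMERA_BASE_URL = "http://192.168.1.50/ptz"
# Hypothetical mapping from board to a preset position saved on the camera beforehand.
BOARD_PRESETS = {"left": 1, "right": 2}
WIDE_PRESET = 0  # hypothetical "whole room" preset used when nobody is writing


def preset_url(board: Optional[str]) -> str:
    """Build the GET URL that recalls the preset for a board (or the wide shot)."""
    preset = BOARD_PRESETS.get(board, WIDE_PRESET)
    return f"{CAMERA_BASE_URL}?{urlencode({'cmd': 'preset', 'id': preset})}"


def move_camera(board: Optional[str], timeout: float = 2.0) -> int:
    """Send the GET request to the camera and return the HTTP status code."""
    with urlopen(preset_url(board), timeout=timeout) as response:
        return response.status
```

`preset_url("left")` gives `http://192.168.1.50/ptz?cmd=preset&id=1`, the same request as `curl "http://192.168.1.50/ptz?cmd=preset&id=1"`. In practice you'd probably call `move_camera` only when the decided board changes, so the camera isn't flooded with identical requests.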
