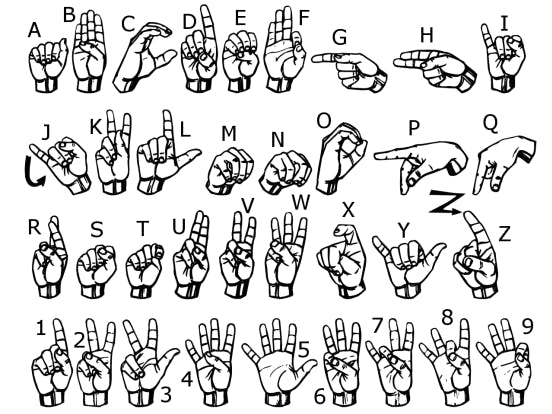

This open-source project was created for one purpose: to help the deaf community communicate and interact with the people around them. The aim is to recognize the hand signs for the 26 letters of the English alphabet, as defined in ASL (American Sign Language), and display them on a smartphone screen.

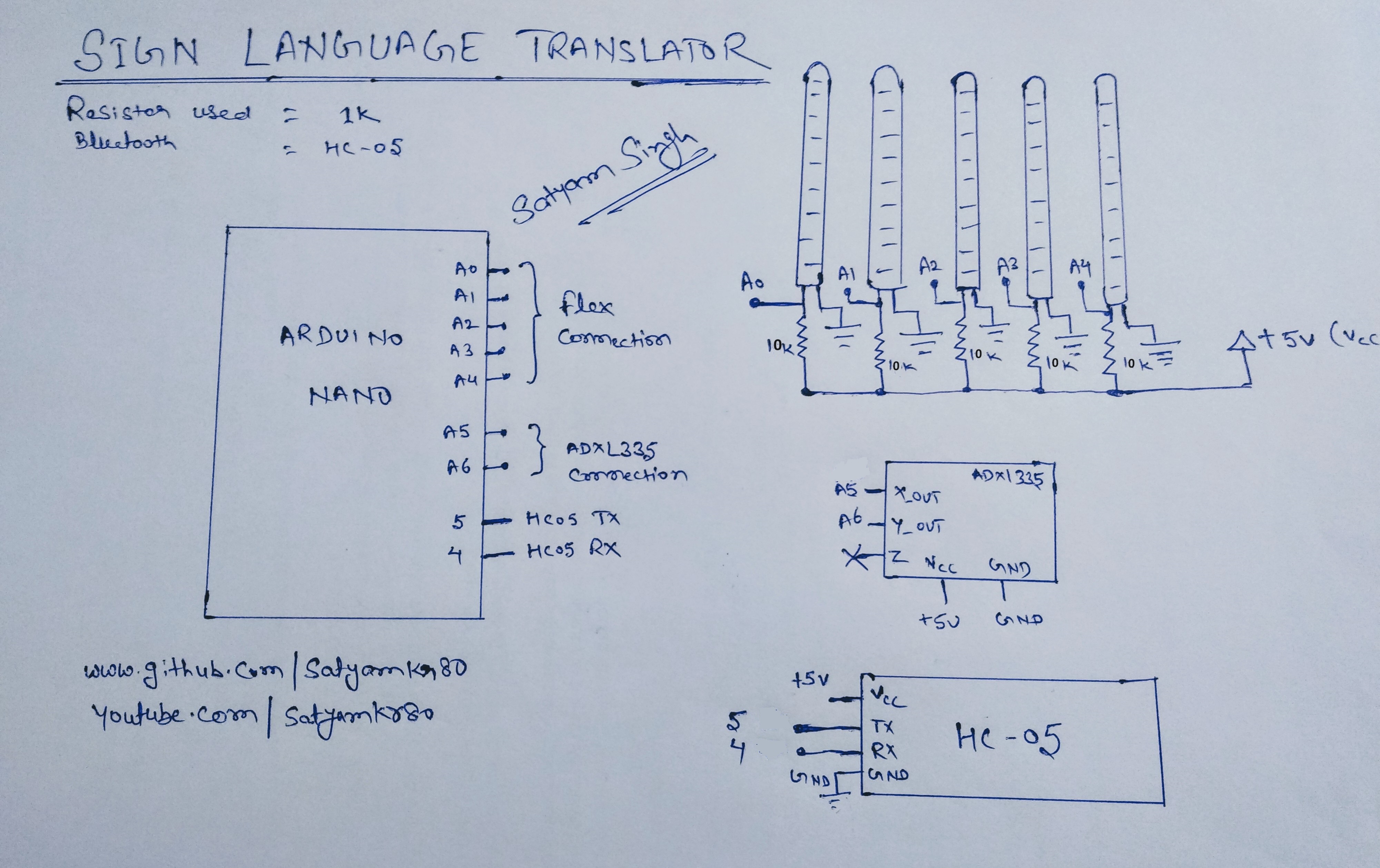

The project was inspired by the idea of controlling a robotic arm with hand movements. Most of the working principle is the same, but implementing the remaining part is a rather complex task. An accelerometer measures the tilt of the palm. Five bend sensors are placed on a glove: four for the fingers and one for the thumb. These sensors measure how far the fingers and thumb are bent. From the bend and tilt values, the Arduino Nano microcontroller determines which set of values represents which symbol and sends the result via Bluetooth to the Android app, which displays and speaks the symbol.
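The matching step described above can be sketched as a nearest-template lookup. This is only an illustration, not the project's actual code: the names `Bends`, `Template`, `classify` and `exampleTemplates`, the 0 to 100 bend scale, the distance threshold and all template values are assumptions, and the accelerometer tilt is left out for brevity.

```cpp
#include <array>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Hypothetical reading: five normalized bend values (0 = straight, 100 = fully bent),
// ordered thumb, index, middle, ring, little finger.
using Bends = std::array<int, 5>;

struct Template {
    char symbol;  // letter this template stands for
    Bends bends;  // reference values (illustrative, not measured data)
};

// Return the symbol whose template has the smallest sum of absolute differences
// to the reading, or '?' if even the best match is further away than maxDistance.
char classify(const Bends& reading, const std::vector<Template>& templates, int maxDistance) {
    char best = '?';
    int bestDistance = maxDistance + 1;
    for (const Template& t : templates) {
        int distance = 0;
        for (std::size_t i = 0; i < reading.size(); ++i) {
            distance += std::abs(reading[i] - t.bends[i]);
        }
        if (distance < bestDistance) {
            bestDistance = distance;
            best = t.symbol;
        }
    }
    return best;
}

// Made-up templates for three letters, only to exercise classify().
std::vector<Template> exampleTemplates() {
    return {
        {'A', {10, 90, 90, 90, 90}},  // fingers curled, thumb alongside
        {'B', {80, 5, 5, 5, 5}},      // fingers straight, thumb folded
        {'L', {5, 5, 90, 90, 90}},    // thumb and index extended
    };
}
```

On the glove, a function like `classify` would run on each fresh set of sensor readings, and the returned character would be written to the Bluetooth serial link. Returning '?' for poor matches avoids sending a letter while the hand is moving between signs.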

Representing the first few symbols was quite easy and fun, but a few symbols were hard to distinguish, such as “U” and “V”, which differ only slightly from each other and gave almost the same values. The earlier prototype could not tell them apart, but the problem was solved by placing a metallic strip between the fingers to detect whether they are in contact.
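A minimal sketch of how the contact strip could break the tie, assuming the strip is read as a simple true/false signal between the index and middle fingers. The helper name `resolveUV` is hypothetical and not taken from the project's code.

```cpp
// Hypothetical helper: the bend values alone cannot separate 'U' from 'V',
// so the contact strip between the index and middle finger decides.
// In ASL the two fingers are held together for 'U' and spread apart for 'V'.
char resolveUV(char candidate, bool fingersTouching) {
    if (candidate == 'U' || candidate == 'V') {
        return fingersTouching ? 'U' : 'V';
    }
    return candidate;  // every other symbol passes through unchanged
}
```

On the Arduino, `fingersTouching` would typically come from a digital input wired to the strip; which pin that is depends on the build and is not stated in the write-up.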

Accuracy was improved by periodically updating the data set for each symbol.

I used Android Studio to create the app. The app shows the recognized symbol and speaks it aloud. For testing purposes, you can use the app below from the Google Play Store for Android.

https://play.google.com/store/apps/details?id=com.zaptha.bluetooth.bluetoothsensor&hl=en_IN&gl=US

Before starting, you must calibrate the sensors for their minimum and maximum values. Connect digital pin 7 to 5V, then close and open your hand to auto-calibrate the minimum and maximum value of each sensor. The check if(digitalRead(7)==HIGH) is used for calibration only. If you don't want to calibrate the sensors, you can remove lines 64-119 of the Arduino sketch.
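The calibration idea can be sketched as tracking the smallest and largest raw reading seen per sensor, then scaling later readings onto a fixed range. This is an assumed approach, not the code in lines 64-119: the `Calibration` struct and the 0 to 100 output scale are hypothetical, while the 0 to 1023 raw range matches the Arduino Nano's 10-bit ADC.

```cpp
#include <algorithm>

// Running calibration range for one bend sensor (raw ADC counts, 0..1023).
struct Calibration {
    int minRaw = 1023;  // start inverted so the first sample sets both bounds
    int maxRaw = 0;

    // Widen the range with a new raw sample; meant to be called repeatedly
    // while the calibration pin reads HIGH and the hand is opened and closed.
    void update(int raw) {
        minRaw = std::min(minRaw, raw);
        maxRaw = std::max(maxRaw, raw);
    }

    // Map a raw sample onto 0..100 using the calibrated range, clamping outliers.
    int normalize(int raw) const {
        if (maxRaw <= minRaw) {
            return 0;  // not calibrated yet
        }
        int clamped = std::max(minRaw, std::min(raw, maxRaw));
        return (clamped - minRaw) * 100 / (maxRaw - minRaw);
    }
};
```

One `Calibration` instance per sensor keeps the gloves of different wearers comparable, because every finger is reported on the same scale regardless of how far that sensor actually bends.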

Future Scope

This tool can be:

1) Further integrated with various services to help generate employment for deaf and speech-impaired people.

2) Paired with a controller to provide home automation at your fingertips.

3) Paired with a fitness sensor to monitor the wearer's health.
