My wife likes to go mushrooming, and I like it when she continues living as a result of not eating dangerous mushrooms! She's also highly allergic to poison ivy, so if we're already identifying plants on the go with a wearable, we may as well kill the poison ivy.

What We're Aiming For

In a literal sense, we're aiming at poison ivy in an effort to destroy it and all it holds dear. But, in terms of what we're going for with the project, the idea is fundamentally pretty simple. It's a wearable you have on your hand/arm so that you can walk around and it'll tell you what stuff is. If it's a plant, it'll tell you what it is and light up green if it's edible, yellow if it might be edible but more info is needed (like mushrooms with poisonous look-alikes), or red if it's inedible/poisonous. And, for brownie points (and because it's somewhat neat), it'll automatically spray poison ivy.

The crazy contraption is worn on your arm just below a glove, because that makes it a lot easier to control where you're spraying for poison ivy and to grab whatever edible mushroom it's pointing at.

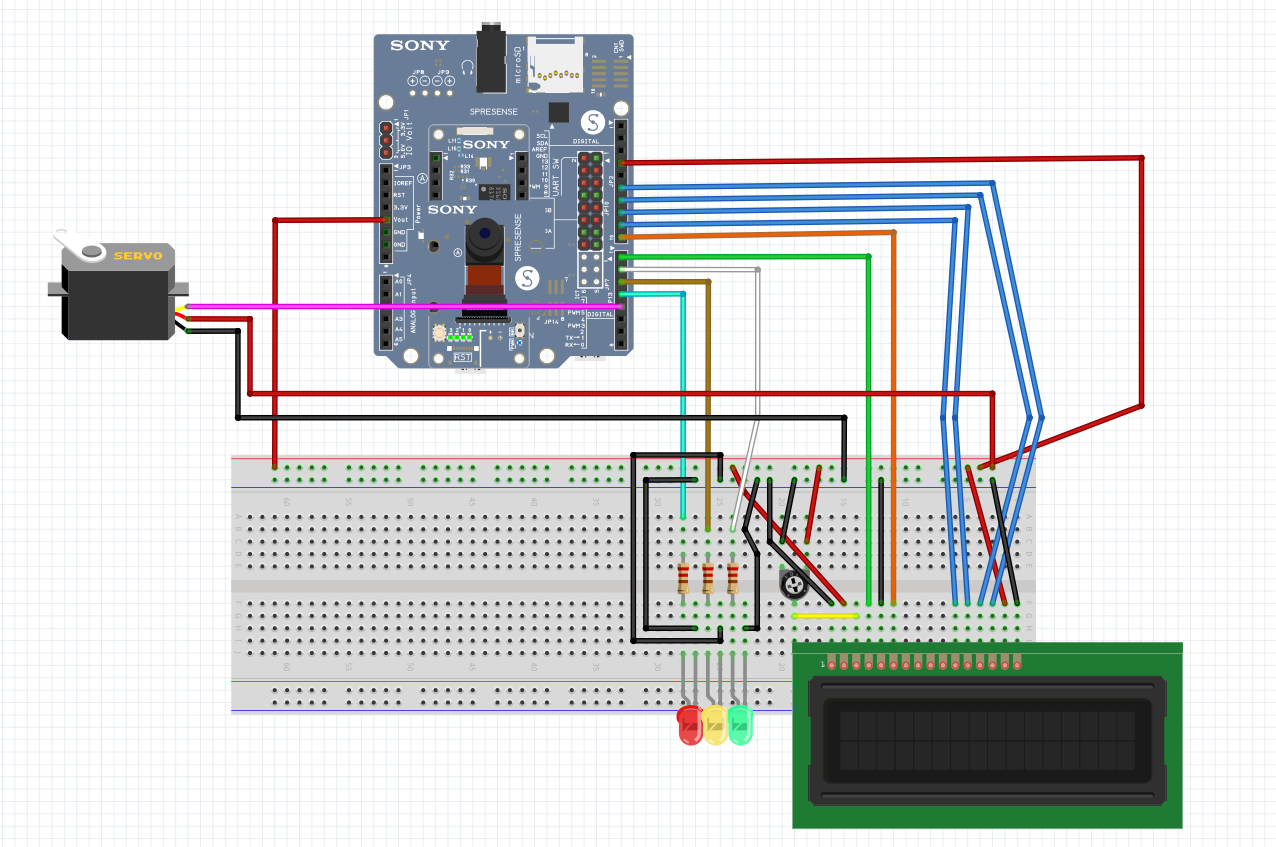

To do this, I used the Spresense with the extension board and camera as a starting point. Beyond that, I needed an LCD screen to display the result of any given identification, three different-colored LEDs, a potentiometer for the LCD, a servo for spraying the poison ivy killer, and somewhere in the ballpark of a hundred million wires (I didn't count but I think that's right). Needless to say, the breadboard got a little crowded, but there was enough space for everything and, since everything cooperated, this was by far the easy part! I did my best with the schematic to make it readable despite how much is going on.

Step 2 - Neural Networking

Sony developed the Neural Network Console, which you can download to your computer from https://dl.sony.com/. A lot of the resources are in Japanese, but there are some in English, as well as many sample projects. Especially considering Sony also built the Spresense I was using, it felt like an ideal fit.

Step 3 - The Onset of Despair and Screaming at The Darkness

Mostly kidding, but for a while there the Neural Networking results were not where they needed to be at all. They looked a little something like this:

And, honestly, for a while there this was one of the better ones.

But then something amazing happened. I tried a model that was not only NOT hot garbage, but was about as perfect as I could possibly hope for with 28x28 resolution. Check out this confusion matrix:

See all that confusion? Me neither! It's working PERFECTLY! At first I thought I had screwed up and used the same data for training and evaluation or something, but no, it was actually just cooperating incredibly well, at long last. Looking at the output results, the model was assigning a likelihood to each possible class for every image, and the correct class was legitimately coming out with the highest likelihood.

Yes, you're seeing a dog and an airplane in there. I wanted this to be able to recognize more than just plants, and figured it might help to have things that looked different than just a bunch of different leaves and mushrooms.
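For anyone who hasn't stared at one before, a confusion matrix is just a tally of (actual class, predicted class) pairs, and a perfect one has everything sitting on the diagonal. Here's a minimal sketch of that tally in plain C++. The function names are hypothetical and the numbers in the note afterward are made up for illustration; they are not the project's real data.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Rows are the actual class, columns are the predicted class.
using ConfusionMatrix = std::vector<std::vector<int>>;

// Tally (actual, predicted) index pairs into a numClasses x numClasses grid.
// Pairs with an out-of-range index are skipped.
ConfusionMatrix buildConfusionMatrix(
    const std::vector<std::pair<int, int>>& results, int numClasses) {
  ConfusionMatrix m(numClasses, std::vector<int>(numClasses, 0));
  for (const auto& r : results) {
    if (r.first < 0 || r.first >= numClasses) continue;
    if (r.second < 0 || r.second >= numClasses) continue;
    m[r.first][r.second] += 1;
  }
  return m;
}

// Fraction of samples on the diagonal; 1.0 means no confusion at all.
double accuracy(const ConfusionMatrix& m) {
  int correct = 0;
  int total = 0;
  for (std::size_t i = 0; i < m.size(); ++i) {
    for (std::size_t j = 0; j < m[i].size(); ++j) {
      total += m[i][j];
      if (i == j) correct += m[i][j];
    }
  }
  return total == 0 ? 0.0 : static_cast<double>(correct) / total;
}
```

With four made-up samples where one class-2 image gets mistaken for class 1, `accuracy` comes out to 0.75 and the miss shows up at row 2, column 1.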

This is what the setup that cooperated for me looked like:

To use the results of the Neural Networking project, you right-click the evaluation and select Export --> NNB.

It'll bring up the exported folder in Explorer. From there you just copy and paste the model.nnb file onto a microSD card. Important side note - the SD card has to be formatted as FAT32 to be recognized by the Spresense. Once your SD card is nice and FAT(32) and plugged into the Spresense with your model.nnb file on it, you're ready to move on to the code.

Step 5 - Code

Code, glorious code. Now that it's all functional, it's boiled down to a pretty simple setup.

We initialize the camera and DNNRT (the neural networking part), and tell the camera to start streaming. The logic all happens within the code that handles each streamed frame.
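Since the model works at 28x28, each streamed frame has to be shrunk down to that size before the network sees it. Here's a rough sketch of that step in plain C++, assuming an 8-bit grayscale frame and a model that wants floats scaled to 0..1 (both are assumptions, as are the hypothetical names `downsampleToModelInput` and `kModelSize`). It's meant to show the idea, not the exact code running on the board.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Assumption: the model input is 28x28, matching the trained network.
constexpr int kModelSize = 28;

// Nearest-neighbor style downsample of an 8-bit grayscale frame (row-major,
// srcWidth x srcHeight) to 28x28 floats scaled to the range 0..1.
// Returns an empty vector if the dimensions don't match the buffer size.
std::vector<float> downsampleToModelInput(const std::vector<uint8_t>& frame,
                                          int srcWidth, int srcHeight) {
  if (srcWidth <= 0 || srcHeight <= 0 ||
      frame.size() != static_cast<std::size_t>(srcWidth) * srcHeight) {
    return {};
  }
  std::vector<float> input(kModelSize * kModelSize);
  for (int y = 0; y < kModelSize; ++y) {
    int srcY = y * srcHeight / kModelSize;  // source row to sample
    for (int x = 0; x < kModelSize; ++x) {
      int srcX = x * srcWidth / kModelSize;  // source column to sample
      input[y * kModelSize + x] = frame[srcY * srcWidth + srcX] / 255.0f;
    }
  }
  return input;
}
```

Sampling single pixels like this is about as cheap as a resize gets, which suits a small board, though averaging blocks of pixels would likely be a bit less noisy.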

Important note: if your model.nnb file isn't super tiny, you may get a memory error. In my case, whichever of the two I initialized first worked and the other failed: camera first, and the DNNRT wouldn't initialize; DNNRT first, and the camera wouldn't. The fix is super easy. In the Arduino IDE, just go to Tools --> Memory and increase it to 1024KB. I initially tried 1536KB and it gave a different error, but 1024KB makes it happy and it works correctly.

Back to the code!

With each image from the camera's stream, we run it through the DNNRT and loop through the output to see which of the results has the highest likelihood. If the highest likelihood result is, say, an Oyster Mushroom, we can handle the logic accordingly.
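Picking the winner is just an argmax over the likelihoods the network hands back. Here's a minimal sketch in plain C++, with the scores passed in as an ordinary vector rather than pulled from DNNRT. The function names and the label list are hypothetical; in practice the labels have to be in the same order as the classes the model was trained with.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Index of the highest score, or -1 if there are no scores.
int highestLikelihoodIndex(const std::vector<float>& scores) {
  if (scores.empty()) return -1;
  std::size_t best = 0;
  for (std::size_t i = 1; i < scores.size(); ++i) {
    if (scores[i] > scores[best]) best = i;
  }
  return static_cast<int>(best);
}

// Look up the label for the winning index. The labels are hypothetical and
// must be listed in the same order as the model's output classes.
std::string topLabel(const std::vector<float>& scores,
                     const std::vector<std::string>& labels) {
  int best = highestLikelihoodIndex(scores);
  if (best < 0 || static_cast<std::size_t>(best) >= labels.size()) {
    return "Unknown";
  }
  return labels[best];
}
```

So with labels `{"Oyster Mushroom", "Poison Ivy", "Dog"}` and scores `{0.8, 0.15, 0.05}`, `topLabel` returns "Oyster Mushroom".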

For all results, we display what it is. For any plant, we turn on a light to indicate whether we should eat it (green), get more info to determine if it's edible (yellow), or not eat it (red). And, of course, if it's poison ivy we spray it with poison ivy killer.
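That decision boils down to a small lookup from label to LED color and whether to spray. Here's a sketch of it in plain C++ with the hardware left out. The `Led` and `Action` types and `actionFor` are hypothetical names, and which label lands in which color bucket is an assumption purely for illustration.

```cpp
#include <string>

enum class Led { None, Green, Yellow, Red };

struct Action {
  Led led;     // which LED to light, if any
  bool spray;  // whether to trigger the spray servo
};

// Map a label to what the wearable should do. The bucket chosen for each
// label below is an assumption for illustration only.
Action actionFor(const std::string& label) {
  if (label == "Poison Ivy") return {Led::Red, true};
  if (label == "Oyster Mushroom") return {Led::Green, false};
  if (label == "Morel Mushroom") return {Led::Yellow, false};
  return {Led::None, false};  // dogs, airplanes, anything that isn't a plant
}
```

Keeping this as one function that returns a plain struct means the LED and servo code only has to act on the result, and the mapping itself can be checked on a desktop without any hardware attached.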

Step 6 - The Crazy Contraption

While I can't see this ending up in any Walmarts anytime soon, it turned out pretty fun.

To get the poison ivy spraying aspect to work, I had to use a weak servo because the powerful one I bought apparently wants to spin indefinitely, which really defeats the purpose. So, I held the spray button down such that it just barely wasn't spraying, so that all that the servo needed to do was provide just a tiny amount of additional pressure. This worked about as perfectly as I could have ever hoped something engineered together with duct tape and rubber bands could work.
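The servo side of this is tiny: push a little for a moment when poison ivy is seen, then back off. Here's a sketch of that timing in plain C++. The angles and the spray duration are made-up placeholder values (the real ones depend entirely on how the servo is mounted), and `servoAngle` is a hypothetical helper whose result would be handed to whatever servo library is in use.

```cpp
// Hypothetical values; tune them for the actual servo and mounting.
constexpr int kRestAngle = 0;            // degrees, trigger held just shy of spraying
constexpr int kPressAngle = 15;          // degrees, small extra push that sprays
constexpr unsigned long kSprayMs = 500;  // how long to hold the push

// Angle the servo should be at, given how many milliseconds have passed
// since poison ivy was last detected.
int servoAngle(unsigned long msSinceDetection) {
  return msSinceDetection < kSprayMs ? kPressAngle : kRestAngle;
}
```

Computing the angle from elapsed time, instead of pausing with a delay, would let the camera keep streaming while the spray is going.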

For power, I found an old on-the-go phone charger from the dawn of time. Amazingly, it still worked.

I found some random metal piece in the garage and attached a cardboard base to it as a place for all the electronics to sit. To attach that to my arm without the involvement of duct tape and a horrifying sacrifice of arm hair, I found one of those old iPod holders that attaches to your arm so you can listen to music while you run. This worked great.

Time to Identify and Destroy!

There were admittedly multiple occasions on which the contraption identified my lawn as an airplane, which I'm fairly confident is untrue, but who am I to make that kind of ruling? Regardless, for 28x28 resolution I was very pleasantly surprised at how well this worked. It was reliably confused about whether morel mushrooms were dogs and vice versa, but the thing is it consistently thought it was one of the two (with a couple of exceptions). The area in which it performed best was, thankfully, identifying poison ivy! It would very consistently see poison ivy leaves and activate and, by the power of God and anime, the servo actually had just the right amount of force to turn the spray on when it saw it and then back off.

So there you have it, the crazy glove that is surprisingly good at finding and killing poison ivy. It's also convinced half the time that dogs are oyster mushrooms and vice versa. Either way, it was a good time and an entertaining way to get started with the Spresense hardware and the Neural Network Console, and to learn more about the world of Arduino. Hope you enjoyed the ride!
