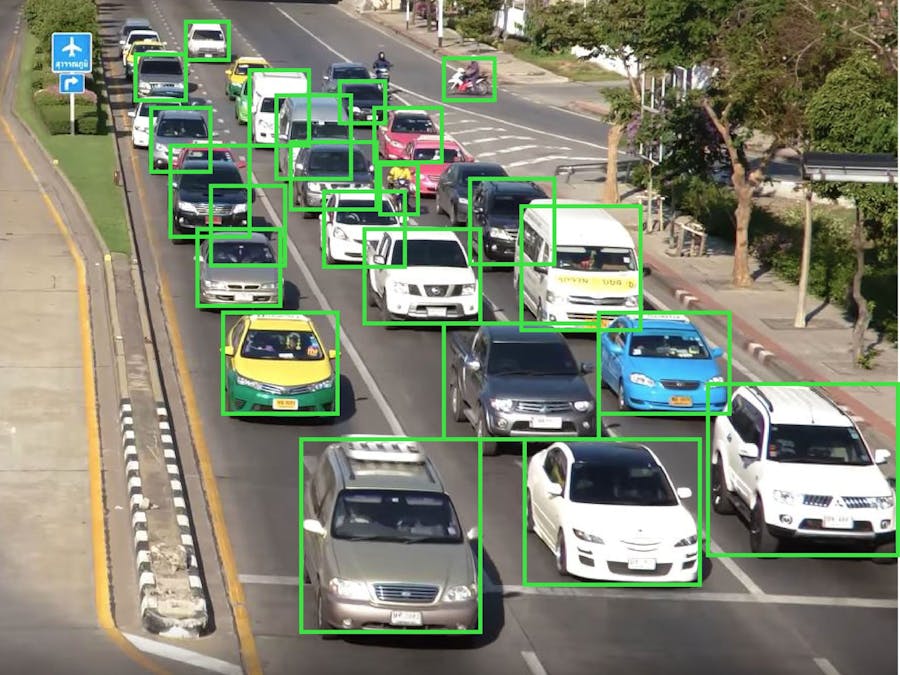

Automated vehicle detection and counting on streets opens the door to several applications, including alternative route assignment at peak times, optimized traffic light timing, vehicle flow rate analysis for a particular road, and other data analytics needed for urban planning. The implemented method detects various types of vehicles in footage from CCTV cameras at cross junctions. The vision processing system runs on a Jetson Nano board and uses YOLO and Darknet.
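As a rough illustration of the counting step, the sketch below tallies vehicles per class from one frame's detections. It assumes the detector output has already been converted into `(label, confidence, bounding_box)` tuples; that tuple layout, the `VEHICLE_CLASSES` set, the `count_vehicles` name, and the 0.5 confidence threshold are all assumptions made for this example, not details taken from the project.

```python
from collections import Counter

# Hypothetical set of class labels treated as vehicles (an assumption).
VEHICLE_CLASSES = {"car", "bus", "truck", "motorbike", "bicycle"}


def count_vehicles(detections, min_confidence=0.5):
    """Count detected vehicles per class in a single frame.

    `detections` is assumed to be an iterable of
    (label, confidence, bounding_box) tuples.
    """
    counts = Counter()
    for label, confidence, _bbox in detections:
        # Skip non-vehicle classes and low-confidence detections.
        if label in VEHICLE_CLASSES and confidence >= min_confidence:
            counts[label] += 1
    return counts


# Made-up detections for one frame, used only to show the call.
frame_detections = [
    ("car", 0.91, (120, 80, 60, 40)),
    ("car", 0.42, (300, 95, 55, 38)),      # below threshold, ignored
    ("bus", 0.77, (210, 60, 120, 90)),
    ("person", 0.88, (50, 100, 20, 50)),   # not a vehicle, ignored
]
frame_counts = count_vehicles(frame_detections)
total_vehicles = sum(frame_counts.values())
```

Counting each frame independently would count the same vehicle many times across a video, so a real deployment would likely need tracking or a virtual counting line on top of this.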

Congestion level detection and adaptive route planning

Detect the congestion level at junctions and adjust traffic light timing to minimize it.
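One simple way to turn vehicle counts into signal timing is to split a fixed cycle among the approaches in proportion to their queues. The sketch below shows that idea only; the `allocate_green_times` name, the 120-second cycle, and the 10-second minimum green are illustrative assumptions, and the project's actual timing policy is not described here.

```python
def allocate_green_times(vehicle_counts, cycle_seconds=120, min_green=10):
    """Split one signal cycle among approaches in proportion to vehicle counts.

    `vehicle_counts` maps an approach name to its current vehicle count.
    Every approach gets at least `min_green` seconds; the remaining time is
    shared proportionally. All parameter values are assumptions.
    """
    approaches = list(vehicle_counts)
    if not approaches:
        return {}

    spare = cycle_seconds - min_green * len(approaches)
    if spare < 0:
        raise ValueError("cycle too short for the requested minimum green time")

    total = sum(vehicle_counts.values())
    if total == 0:
        # No traffic observed: share the spare time equally.
        return {a: min_green + spare / len(approaches) for a in approaches}

    return {a: min_green + spare * vehicle_counts[a] / total for a in approaches}


# Made-up counts for a four-way junction.
timings = allocate_green_times({"north": 30, "south": 10, "east": 0, "west": 0})
```

With these inputs the busier northern approach receives most of the spare time, while empty approaches keep only the minimum green.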

