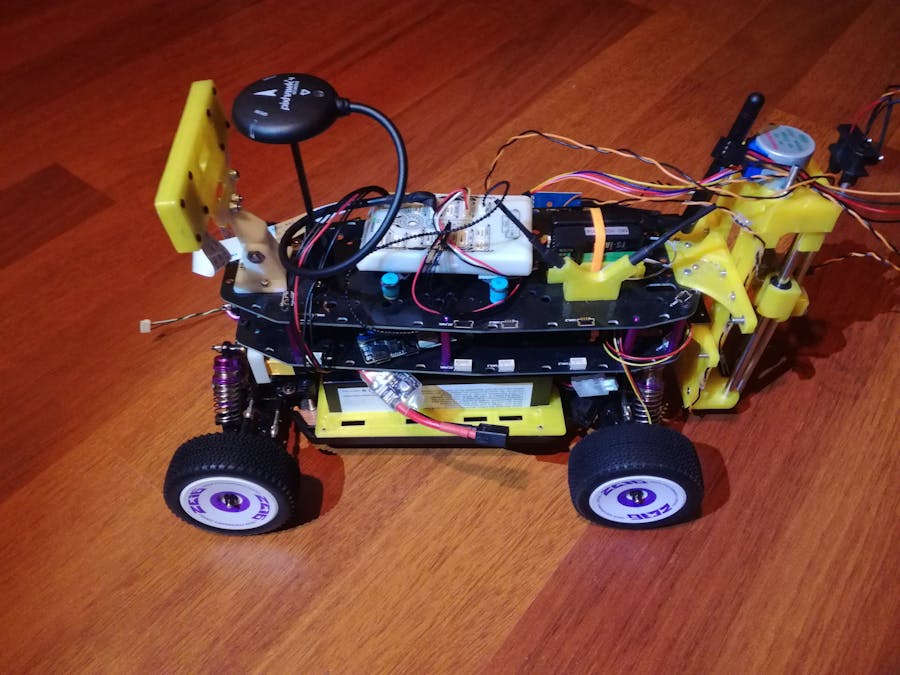

This is a write-up, containing instructions, demos, experiments and lessons learned, on the AutoSQA project I developed for the NXP HoverGames3. The AutoSQA project, which can be seen as a system, is an autonomous platform for sampling soils in agricultural fields. It uses the NXP MR-Buggy3 as the robotics platform, with added sensors to accomplish this task. The autonomous part is handled by the NXP NavQPlus running ROS 2 nodes. The NXP MR-Buggy3 kit, together with the new NXP NavQPlus and the BOSCH BME688, were part of the hardware awarded (as discounts) by NXP, as part of the NXP HoverGames3, to accomplish this project.

Soil Monitoring: Importance, what and how

Agriculture all over the world is facing a difficult and critical challenge: the degradation of soil fertility and, with it, lower crop yields, even with higher fertilization. Soil fertility is decreasing due to a variety of factors: increase in soil salinity, shift of pH levels, topsoil erosion, etc. This is happening in part due to climate change and in part due to overfertilization, use of high mineral content water for irrigation and plain overuse of soils. To prevent loss of fertility, as well as to recover fertility, it is crucial to monitor the soil so that adequate actions can be taken.

(As a small side note here, I played around with ChatGPT to look into soil monitoring, the relevant properties and the sensors used, and it almost always highlighted at the end of each answer the importance of monitoring soil properties.)

This project aims to aid in the soil monitoring step by developing an inexpensive and autonomous rover that can scan the soil with a sensor platform that can identify different relevant properties like pH, VOC content, humidity, salinity and porosity/"stiffness". Keeping the monitoring solution inexpensive, in relation to normal agricultural probing solutions, and autonomous will allow more farmers, especially small farms and farmers in developing nations, to adequately monitor their soils and prevent, or even mend, soil fertility degradation.

Project Overview/Outline

The AutoSQA system can be separated into four different parts, which together form the whole AutoSQA system:

- The robotics platform: The robotics platform is the physical platform of the AutoSQA system. All the sensors are mounted to it, and it allows them to be moved around. The basis for this is the Mobile Robotics Buggy3 (MR-Buggy3) kit from NXP, which itself uses the WLToys 124019 RC car chassis as the basis. It adds two plates (PCBs) on top as a support structure for fixing diverse sensors and controllers, like the RDDRONE-FMUK66, which is the controller of the MR-Buggy3 and runs the PX4 autopilot.

- The sensor platform: The sensor platform is a couple of sensors, together with their signal conditioning and conversion, and actuators used to sample the soil/fields. Two types of sensors are used: Direct contact sensors (in contact with the soil), and indirect contact sensors (like air sensors).

- The brains: The brains, the onboard computer, is what controls the whole autonomous acquisition platform. It gathers the sensor data and packages it, and enables the Buggy to traverse the field safely and on a defined trajectory. The onboard computer used is the NavQPlus from NXP.

- The GUI: This is the part interfacing with the user, allowing them to set up missions, monitor progress and errors, and display the acquired data.

Over the next sections I will go into more detail, explaining how each of these parts works, how they were developed and how they can be replicated. This is then followed by a section with the performed experiments (and the results), a section with some demo videos and a section with my plans for future work.

Part 1: The robotics platform (MR-Buggy3)

This project, AutoSQA, uses the Mobile Robotics Buggy3 (MR-Buggy3) kit from NXP as the robotics base. This kit itself uses the WLToys 124019 RC Buggy chassis as its basis and contains, besides this buggy, a Flight (Drive in this case?) Management Unit, the FMUK66 kit with GPS and Debugger, a better RC remote (FlySky FS-i6s), ESC and servo, as well as mechanical parts (two PCBs with standoffs) to create the robotics base. The picture below shows the full kit with the parts that I received:

The MR-Buggy3 was built following the build instructions from the NXP Cup Car Gitbook that can be found here: https://nxp.gitbook.io/nxp-cup/mr-buggy3-developer-guide/mr-buggy3-build-guide. The instructions are very clear and I had no issues following them, so I will not repeat them here. Instead I will only show and write about the changes I made to my MR-Buggy3 platform, specifically to the WLToys 124019 chassis, which are the following:

- New battery holder: Designed a battery holder for larger batteries (in this case a 2S 5200 mAh from OVONIC, https://www.amazon.es/-/pt/gp/product/B08NJKB456).

- New drive gear: Changed the motor mount and pinion gear (stock 27T to 17T) for greater gear reduction (from the stock 4.074:1 to 6.47:1), reducing the top speed (stock 55 km/h) to a more reasonable 35 km/h.

- RC and Telemetry holder: Designed a holder for the RC and Telemetry Receiver as well as a V-Style holder for the RC antennas.

- Back side mounting points: Designed a mounting holder for the PCB mid-plate for the back shock absorber with vertical mounting points for additional parts, replacing the spoiler as holder, as well as a complementary "L-Holder" with vertical mounting points for the top-plate.

- Wheel Encoder: Developed and added a wheel encoder with simple PX4 integration.

In the following sub-sections I will go into more detail about each of these, and give instructions on how to use and/or replicate them.

Battery Holder

The battery holder of the MR-Buggy3 can only fit batteries of up to 100x10x10mm, which I didn't know when I selected and bought a battery for it (the kit had not yet arrived). So I ended up buying one that is too large for this holder. Specifically I bought the OVONIC 2S 50C Hardcase LiPo with 5200 mAh (138x46x24 mm), which looked perfect for the application with ample capacity and a hardcase for added protection. I was therefore left with two choices: either replace this battery with a smaller one, or come up with a new mounting solution. I took the latter approach and designed and tested two different mounting options. The first option I came up with was to mount the battery horizontally, with riser brackets to lift it over the plastic skirts of the buggy:

And the second one where the battery is mounted vertically, with a bracket that holds the battery against the MR-Buggy3 mid-plate/drive shaft cover:

I ended up using the latter one, as it keeps the battery better protected and lower, keeping the center of gravity (CG) of the buggy lower and closer to the center line, improving stability. The holder is 3D printed, with the STL file available for download in the attachments section. It is screwed into place through 3 holes in the side skirt of the buggy (the skirt already has some unused holes), with 25mm long M2 screws and nuts.

DriveGear

The stock driving gear ratio of the MR-Buggy3, that is, of the WLToys 124019 chassis, is very tall. It is designed for high top speed (55 km/h) and is not really appropriate for a robotics application, where much lower driving speeds are often used and required. The problem with this is that it limits the lowest achievable driving speed (the point at which the motor and wheels start spinning) and reduces the speed control resolution of the Buggy. This is why I decided to change the gearing ratio.

After some research I found a great post on replacing the stock motor mount and pinion gear: https://www.quadifyrc.com/rccarreviews/the-ultimate-pinion-gear-guide-for-wl-toys-144001-144002-144010-124016-124017-124018-124019. Although this post focuses mainly on changing the gearing when replacing the stock brushed motor with a brushless one (also a great upgrade to be performed in the future!), it pointed me in the right direction. I then ordered an adjustable motor mount kit (https://pt.aliexpress.com/item/1005004690257050.html), which comes with two different pinion gears, and in addition ordered an even smaller pinion gear, a T17 one (https://pt.aliexpress.com/item/1005004692034775.html).

To replace the motor mount, the WLToys mid-plate has to be removed. Next the stock motor mount can be unscrewed from the bottom plate by removing these two screws (the screws may look different):

These screws are locked in place with a very strong thread locker, and I was unable to remove mine with a screwdriver and resorted to drilling them out... I later read that a better solution is to use heat to soften the thread locker; there is always something to learn! With that, the motor mount together with the motor can be removed. The next step is to remove the stock pinion from the motor. Again this is done by removing a screw (in the pinion gear) secured with a very strong thread locker, which again can probably be softened by heat but which I drilled out. This story is repeated for the two screws holding the motor to the motor mount, and by drilling them out I also damaged the motor and had to buy a replacement. So, long story short, removing the stock motor mount and motor is quite a challenge, and you should count on breaking both! After the motor, pinion and mount are removed, we can replace them with the new adjustable mount, the smaller pinion gear and, in my case, a new motor.

Now, because the motor will be closer to the drive gear and drive shaft, some adjustment cuts have to be made to both the drive train cover (as seen in the figure below) and to the top mid-plate. Luckily both parts are made from a very soft plastic:

Next we can mount the motor to the new motor mount. The motor should at first not be tightly screwed to the motor mount, so that its position can be adjusted. At this stage also add the new pinion gear to the motor and screw it in with a bit of thread locker. The assembly can then be put in place, and the motor position adjusted so that the new pinion makes good contact with the drive gear. Remove the assembly again, screw the motor tightly to the motor mount (adding some thread locker to these screws as well), and finally screw the motor mount to the bottom plate. The result of this can be seen in the figure below:

With the T17 it is a very tight fit, with almost no clearance between the motor and drive shaft, so this is the smallest pinion that can be used. After that, the mid-plate is screwed back in place (don't forget to add the top part of the motor mount, which holds the drive shaft bearing), with the necessary adjustments/cuts to make space for the now closer motor. The picture shows everything fully assembled again, with the cuts in the mid-plate:

This adventure raises the overall gearing ratio from 4.074:1 to 6.47:1, an increase of about 59%, and brings the top speed down by about 37%, from over 55 km/h to a more reasonable 35 km/h, with the added benefit of increased torque!
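The figures in this section follow directly from the pinion tooth counts. Below is a quick arithmetic check, assuming that only the pinion changes (the rest of the drivetrain stays stock) and that top speed scales inversely with the overall ratio; the function name is just for illustration.

```python
# Values quoted in the text above.
STOCK_PINION_TEETH = 27
NEW_PINION_TEETH = 17
STOCK_RATIO = 4.074          # overall stock gearing ratio
STOCK_TOP_SPEED_KMH = 55.0


def regear(stock_ratio, stock_pinion, new_pinion, stock_speed_kmh):
    """Return (new overall ratio, new top speed) after a pinion swap.

    Assumes the spur gear and differential are unchanged and that the
    motor reaches the same maximum RPM.
    """
    scale = stock_pinion / new_pinion        # a smaller pinion gives more reduction
    return stock_ratio * scale, stock_speed_kmh / scale


new_ratio, new_speed = regear(
    STOCK_RATIO, STOCK_PINION_TEETH, NEW_PINION_TEETH, STOCK_TOP_SPEED_KMH
)
ratio_increase_pct = (new_ratio / STOCK_RATIO - 1) * 100
speed_reduction_pct = (1 - new_speed / STOCK_TOP_SPEED_KMH) * 100

print(f"ratio {new_ratio:.2f}:1 (+{ratio_increase_pct:.0f}%), "
      f"top speed {new_speed:.1f} km/h (-{speed_reduction_pct:.0f}%)")
```

The same function can be used to estimate the effect of the other pinions that come with the adjustable motor mount kit.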

I also had the idea of replacing the motor with one with encoders, and even ordered one (a smaller RS-385 one: https://www.ebay.com/itm/191880076979), but it turned out that its shaft was too short to pass through the motor mount and take a pinion gear.

Back Mounting Points

As outlined before, the objective of this project is to sample field soils, and one of the ways this can be achieved is with a sensor that makes direct contact with the soil by penetrating it. To be able to use this type of sensor on the Buggy platform, a linear actuator was designed that pushes such soil sensors into the ground (and lifts them out again). This will be explained further in section "Part 2: The sensor platform". This actuator has to be mounted to the MR-Buggy3, and the best location for that is on the backside of the Buggy, with custom mounting hardware that had to be designed.

Two mounting holders were developed, to create two rows of vertical mounting points and so increase mounting stability. The first holder mounts onto the rear shock absorber and takes over from the rear spoiler as the mounting holder of the mid-plate; the spoiler therefore has to be removed.

To mount this holder, after 3D printing it, the first step is to remove the spoiler holder and slide the new holder onto the rear shock absorber. It is then fixed in place by four self-tapping screws, similar to the holder on the front shock absorber. The result is shown in the figure below:

Next, 4 M2 nuts are added to this holder, two inserted from below and two into a slot from the back. These nuts, in combination with M2 screws, are used to screw the mid-plate to the holder. Finally, 4 M2.5 nuts are inserted into the back of the holder, which create the vertical mounting points. The assembly can be seen in the figure below:

The second mounting holder is for the top-plate, and it is fixed to it with 4 M2.5 screws and nuts. This mounting holder has space for 6 M2.5 nuts on the back, adding 6 more vertical mounting points. The inner 4 are in line with the mounting points of the lower holder. The full assembly, with both holders, is shown in the figure below:

These mounting points are later used to mount the soil sensor actuator to the Buggy, but they can also be used for other hardware/sensors, like rear-mounted distance sensors, e.g. an ultrasound sensor array. The CAD (.STL) files for both holders are available for download in the attachments section.

RC/Telemetry Holder

The standard placement for the RC receiver and telemetry unit on the MR-Buggy3, following the build guide, is on the end of the top-plate, stacked on top of each other and fixed in place with double sided tape. This placement option interferes with mounting anything vertically to the backside of the Buggy, like the added holders from above, and therefore the placement of the RC receiver and telemetry unit had to be changed.

For this I designed a holder for both the telemetry unit and RC receiver, to mount them further away from the end of the top-plate, closer to the FMUK66. At the same time I designed an antenna holder for the RC receiver antennas, holding them in a V-shape (the recommended orientation of the antennas). The holder and antenna holder are screwed, from the bottom, to the top-plate with M2x7mm screws. The antennas are secured by friction in the antenna holder, while the receiver and telemetry unit are fixed in place by a zip-tie. The picture below shows the full assembly:

The CAD files for these holders are available on my Thingiverse, and can easily be 3D printed: https://www.thingiverse.com/thing:5890392

RC Receiver RSSI

The stock firmware of the included RC receiver, the FS-iA6B, does not provide any RSSI signal feedback even though the hardware is capable of it. It is possible to enable this feature by flashing a new firmware, developed by the community: https://github.com/povlhp/FlySkyRxFirmware

This GitHub repository has all the instructions necessary on how to access the programming interface of the RC receiver and how to flash the new firmware. Important to note here is that the stock firmware is read protected and can't be backed up before flashing this new firmware...

With the new firmware flashed, we now have the receiver RSSI signal output on SBUS Channel 14, which can then be read by the FMUK66 by setting the RC_RSSI_PWM_CHAN parameter in the PX4 firmware (can be done through QGroundControl) as shown in the figure below:

The RSSI value is then also displayed in QGroundControl, as can be seen in the figure below:

Wheel Encoder

As mentioned at the end of the "DriveGear" section, the initial idea was to use a motor with encoder to add odometry to the MR-Buggy3. This didn't work out, and so an alternative, and now in retrospect better, solution had to be found. The alternative is a wheel encoder, instead of a motor encoder. The addition of odometry allows for more precise localization, by fusing it with IMU and GPS data. It also provides a way to localize when no GPS is available, like indoors, or outdoors under cover, such as in fields with a lot of trees. In addition it also enabled the development of a velocity controller (PID loop) on the Buggy.
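To make the velocity controller idea concrete, here is a minimal sketch of a PID loop that turns a speed error into a throttle command. It is not the controller running on the Buggy: the class, gains and output limits are hypothetical, and it uses a simple output clamp without anti-windup.

```python
class VelocityPID:
    """Textbook PID controller; gains and limits are illustrative only."""

    def __init__(self, kp, ki, kd, out_min=-1.0, out_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, target_speed, measured_speed, dt):
        """Return a throttle command for one control step of length dt seconds."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        error = target_speed - measured_speed
        self.integral += error * dt
        # No derivative term on the first call, since there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp to the assumed normalized throttle range.
        return max(self.out_min, min(self.out_max, output))


pid = VelocityPID(kp=0.5, ki=0.1, kd=0.0)
throttle = pid.update(target_speed=1.0, measured_speed=0.0, dt=0.1)
print(throttle)
```

Here `measured_speed` would come from the wheel encoder, which is why odometry is a prerequisite for closed-loop speed control.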

The developed wheel encoder uses simple 1-axis Hall sensors (S49E) and magnets glued to the inside of the wheel. The Hall sensor is mounted to the rear wheel cups (I have not yet developed a mounting holder for the front wheels) with the help of a 3D printed piece and a single screw (the Hall sensor is glued to this piece first):

Next, we have to add magnets to the wheel, which can then be sensed by the Hall sensor when they pass over it. Small 5x5mm square Neodymium magnets are used for this, glued to the inside of the rear wheels (two on opposite sides). The magnets have to be glued in place with the same pole facing inwards, so that the same pole passes over the encoder. Which pole is used is not relevant as long as it is the same for all magnets added to a wheel (there can be more than 2 magnets for increased encoder resolution).
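With two magnets per wheel, each detected magnet pass corresponds to half a wheel revolution. The sketch below shows how encoder ticks could be turned into distance and speed; the wheel diameter is an assumed placeholder (measure your own wheel), and the function names are only for illustration.

```python
import math

MAGNETS_PER_WHEEL = 2        # from the text: two magnets on opposite sides
WHEEL_DIAMETER_M = 0.075     # ASSUMPTION: placeholder value, measure your wheel


def distance_per_tick(diameter_m=WHEEL_DIAMETER_M, magnets=MAGNETS_PER_WHEEL):
    """Distance travelled between two consecutive magnet passes, in meters."""
    return math.pi * diameter_m / magnets


def speed_from_ticks(ticks, dt_s, diameter_m=WHEEL_DIAMETER_M,
                     magnets=MAGNETS_PER_WHEEL):
    """Average wheel speed in m/s given the ticks counted during dt_s seconds."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    return ticks * distance_per_tick(diameter_m, magnets) / dt_s


print(f"{distance_per_tick():.4f} m per tick")
print(f"{speed_from_ticks(ticks=1, dt_s=0.1):.3f} m/s for 1 tick in 0.1 s")
```

This also shows why adding more magnets helps: the distance per tick shrinks, so the speed estimate becomes finer for the same sampling interval.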

A first prototype of the Wheel Encoder acquisition was developed using an Arduino Pro Mini, with the encoders connected to analog input ports (A0 and A1) and powered from its 3V3 rail. The Arduino is connected to the FMUK66 over I2C and is powered with 5V from the FMUK66 on its Vraw input. This prototype can easily be built by anyone with only an Arduino Pro Mini. The firmware is available on the same GitHub page as the more advanced Wheel Encoder, and it still works with the PX4 firmware of my fork.
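When the Hall sensor is read as an analog value, a magnet pass has to be detected in software. One common approach, sketched here and not taken from the actual firmware, is a threshold with hysteresis so that noise around the trigger level does not produce double counts. The threshold values (in ADC counts) are hypothetical.

```python
def count_magnet_passes(samples, high_threshold, low_threshold):
    """Count magnet passes in a sequence of analog Hall sensor readings.

    A pass is counted when the signal rises to high_threshold; the detector
    re-arms only after the signal has dropped to low_threshold again.
    """
    if low_threshold >= high_threshold:
        raise ValueError("low_threshold must be below high_threshold")
    count = 0
    magnet_present = False
    for value in samples:
        if not magnet_present and value >= high_threshold:
            magnet_present = True
            count += 1
        elif magnet_present and value <= low_threshold:
            magnet_present = False
    return count


# Two clean magnet passes (made-up ADC readings).
readings = [512, 520, 700, 710, 690, 530, 510, 705, 515]
print(count_magnet_passes(readings, high_threshold=650, low_threshold=560))

# Noise around the upper threshold is counted only once.
noisy = [640, 660, 645, 655, 640]
print(count_magnet_passes(noisy, high_threshold=650, low_threshold=560))
```

If the magnets are mounted with the other pole facing the sensor, the signal dips instead of rising, and the comparison directions would have to be inverted.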

The second version uses a custom developed board, the Wheel Encoder board (using mostly NXP parts to keep the NXP spirit of the HoverGames). The Hall sensors are connected to this board via a 3-pin JST-GH connector. The board has 4 inputs for Hall sensors, and each input is connected to a comparator (NXP part NCX2220) with a trigger level that is adjustable through a potentiometer. The comparator outputs are acquired by an MCU (NXP part MKE16Z64VLD4) using pin interrupts, which increment an internal counter that can then be read over a digital interface (I2C/CAN/UART). Big thanks here to NXP for providing me with free samples of the MKE16Z64VLD4 MCU, as all major distributors were out of stock on this part...

At this time the only interface implemented on both the Wheel Encoder board firmware and the PX4 firmware is the I2C one. The hardware is ready to be switched to the preferred CAN interface, having an NXP TJA1463 CAN transceiver on it (inspired by the NavQPlus, which uses the same transceiver chip), but this is not implemented yet. Below is a picture of the Wheel Encoder board, mounted to the mid-plate and connected to each Hall sensor on the back wheels:

The Wheel Encoder board is connected to the FMUK66 through I2C, specifically to the I2C/NFC port of the FMUK66. After that, and with the FMUK66 powered so that it supplies the Wheel Encoder board, we can adjust the trigger level for the Hall sensors with the potentiometers. Depending on the magnet polarity used, the respective LED should turn OFF (or ON) when the magnet in the wheel passes over the encoder.

The FMUK66 has to be flashed with my fork of the PX4 firmware so that the encoder data is read from the Wheel Encoder board and published over MAVLink. This PX4 firmware samples the encoder data over I2C at a rate of 10 Hz and publishes the data as a uORB message (the Encoder message that is already present in the PX4 firmware). The wheel encoder driver is not enabled by default, and the following commands must be added to the SD Card file at /etc/extras.txt so that the driver is started on startup:

set +e

encoder start -X 1

set -e

The PX4 firmware with this driver is available on my fork of the PX4 firmware (Beta V1.14) (https://github.com/NotBlackMagic/PX4-Autopilot). This fork also adds this uORB message to the MAVLink links. Right now the PX4 firmware only forwards the encoder data to the MAVLink interface and does not use it itself. Velocity and position control is done on the NavQPlus, but I have the idea to move that code over to PX4.

All files, firmware and hardware, are available on my GitHub (https://github.com/NotBlackMagic/WheelEncoder). I also made a small video explaining and demonstrating the Wheel Encoder: https://youtu.be/qfiv8_sycEg

Stereo Camera Holder

The MR-Buggy3 kit comes with a camera holder for the Pixy Camera, which can also be used to mount the Google Coral Camera that comes with the NavQPlus by using the enclosure that is also included. But, as one of the big improvements over the original NavQ, the NavQPlus has two camera ISPs! This invites the use of a stereo camera setup for depth perception and other applications. For this a new camera enclosure had to be developed, one that can hold two cameras (two Google Coral cameras in this case) with a certain distance between them. This is what I developed here.

The new stereo camera enclosure takes inspiration from the original single camera enclosure, using similar styling and assembly, and keeping the possibility to mount it directly to the camera holder that comes with the MR-Buggy3. This enclosure has space for two Google Coral Camera modules, with a distance of 70mm between them, the often recommended distance for a stereo camera setup. It also has a space/opening in the center for an ultrasound range sensor, like the GY-US42 for which the opening was designed, or any other additional hardware, e.g. another camera module, an IR dot projector (I'm eyeing the ams OSRAM APDE-00), an illumination light, etc...
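The camera spacing (the stereo baseline) directly sets how disparity maps to depth. For a rectified stereo pair the standard pinhole relation is depth = focal length × baseline / disparity. A small sketch with the 70 mm baseline of this enclosure follows; the focal length in pixels is an assumed placeholder and would normally come from camera calibration.

```python
BASELINE_M = 0.070  # 70 mm camera spacing of the enclosure described above


def depth_from_disparity(disparity_px, focal_length_px, baseline_m=BASELINE_M):
    """Depth in meters of a point seen with the given disparity (in pixels).

    Assumes a rectified stereo pair and the pinhole camera model.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_length_px * baseline_m / disparity_px


# ASSUMPTION: focal length of 500 px, purely illustrative.
for disparity in (70, 35, 7):
    depth = depth_from_disparity(disparity, focal_length_px=500)
    print(f"disparity {disparity:3d} px gives depth {depth:.2f} m")
```

Because depth is inversely proportional to disparity, depth resolution degrades quickly for far objects; a wider baseline improves this at the cost of a larger minimum distance.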

The enclosure is composed of two parts, the backside and the cover, with the camera modules sandwiched in between them, just like the original enclosure. The assembly is also similar, with the cameras placed in between the two halves and then everything screwed together with 8 M2.5 screws.

The assembled stereo camera enclosure, with two Google Coral Cameras, mounted to the MR-Buggy3 is shown in the figure below.

I also bought some longer FPC cables (24-pin, reverse direction, 0.5mm pitch, 30cm long) (https://www.aliexpress.com/item/1005002259855390.html) to have some more slack, especially as the separately bought Google Coral camera came with a very short one (I think 15cm).

The CAD files for the enclosure are available on my Thingiverse, and can easily be 3D printed: https://www.thingiverse.com/thing:5935267

Shock Absorber Spring

The stock shock absorber springs of the MR-Buggy3, the WLToys 124019, are quite soft, and especially with the added weight of the soil sensor actuator on the backside, they are not able to handle the weight of the buggy. The good news is that the springs in the shock absorbers can be replaced with harder ones. I ordered a set of 40mm long, 16mm in diameter and 1.4mm thick coils (the stock coils are 1mm) from AliExpress (https://www.aliexpress.com/item/1005002511575059.html). From measuring, these seem to be the right dimensions, but as the springs have not yet arrived I have not been able to test them.

Part 2: The sensor platform

The sensor platform is responsible for gathering information from the environment, which in this application is the field soil. It can be split into 4 functional parts:

- Sensor Acquisition Board: The acquisition board, consisting simply of a STM32F103 blue pill board (what I had at hand), is responsible for acquiring data from the sensors (soil and air) and doing the necessary conversions, so that the data passed to the NavQPlus is already processed.

- Soil Actuator: The soil actuator is a linear actuator, with force sensing, developed for this project. It is responsible for bringing the soil sensor into contact with the soil. Different types of soil sensors can be mounted to it, but the one used in this project comes from a 3 in 1 soil sensor.

- Soil Sensor: There are a large variety of different soil sensors, ones that require direct contact and some that don't. Here a direct contact one is used, specifically from a 3 in 1 soil sensor.

- Air sensor: The air sensor is the BME688 gas sensor from BOSCH, provided for free for this challenge. It is a great compact sensor that gives temperature, humidity and pressure values and integrates a novel gas sensor that, with the help of AI models, can detect, and in some cases differentiate between, different VOCs and VSCs.

These 4 parts together form the sensor platform, which is connected to the NavQPlus. The NavQPlus is what controls the acquisition steps, gathers the sensor data and publishes it. Below is a block diagram showing an overview of these 4 parts and how they are connected together, and to the NavQPlus:

And the figure below shows all components of the sensor platform:

Sensor Acquisition Board

As mentioned above, the acquisition board is responsible for acquiring the signals from both the soil sensors (analog signals) and the air sensor (I2C). It consists simply of a STM32F103 blue pill board, with the soil sensor connected to its analog inputs (PA0 and PA1) through a buffer amplifier (LM358). The BOSCH BME688 sensor is connected through I2C (PB6 and PB7). The acquisition board itself is then connected over a serial interface (UART on PA9 and PA10) to the NavQPlus, which requests and receives already processed sensor information.
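As an illustration of the kind of conversion done on the acquisition board, the sketch below turns a raw 12-bit ADC reading (the STM32F103 ADC resolution) into a voltage and then into a percentage between two calibration points. The actual firmware is written for the STM32 and is not reproduced here; the 3.3 V reference and the two-point linear calibration are assumptions, and the function names are hypothetical.

```python
ADC_MAX_COUNT = 4095   # 12-bit ADC
VREF_VOLTS = 3.3       # ASSUMPTION: ADC reference equals the 3.3 V supply


def adc_to_volts(raw_count):
    """Convert a raw ADC count to a voltage at the ADC pin."""
    if not 0 <= raw_count <= ADC_MAX_COUNT:
        raise ValueError("raw_count outside the 12-bit ADC range")
    return raw_count * VREF_VOLTS / ADC_MAX_COUNT


def to_percent(volts, volts_at_0, volts_at_100):
    """Map a voltage linearly onto 0..100 % between two calibration voltages.

    The calibration voltages are hypothetical; they would be measured with
    the probe in reference conditions (e.g. dry and saturated soil).
    """
    percent = (volts - volts_at_0) / (volts_at_100 - volts_at_0) * 100.0
    return max(0.0, min(100.0, percent))


volts = adc_to_volts(2048)
print(f"{volts:.3f} V, {to_percent(volts, 0.0, 3.3):.1f} %")
```

Doing this conversion on the acquisition board keeps the serial protocol towards the NavQPlus simple, since only processed values have to be transferred.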

Control of the BME688 is done on the acquisition board, using the BME68x library from BOSCH. Conversion from the soil sensor's analog signal to relevant soil parameters is also done on the acquisition board. The software running on the acquisition board is available for download in the attachments (in a .zip file).

Soil Actuator

To get the direct contact soil sensor to make contact with the soil, that is, to insert it into the soil, I had to design an actuator. I chose to design a linear actuator that can be 3D printed easily and can be used for other applications in the future. One special feature I added to this actuator is the capability to sense the actuation force, specifically the force used to push the carriage "down". This was added because for this application it can be used to measure the hardness of the soil, as well as to stop/abort the actuation if the sensor hits a hard surface like a rock, preventing the actuator from lifting the whole Buggy.

To build this actuator the following parts are required:

- 3D Printed Parts (8 parts) (https://www.thingiverse.com/thing:5936797)

- 2x Smooth Rods, 150mm by 6mm

- 1x T8 Lead Screw Rod and Nut, 150mm

- 2x LM6UU Linear Bearing

- 1x Coupler, 8mm to 6mm

- 1x 28BYJ-48 Stepper

- 2x Lever Switches (end stops)

- 1x Membrane Pressure Sensor (load cell), 4mm 50g-2kg + Amplifier Board (https://www.aliexpress.com/item/1005001324635941.html)

- Assortment of M2.5 screws and nuts

The assembly is designed in a way that simplifies 3D printing, favoring smaller parts that require assembly over larger single pieces. The basis of the actuator is composed of 4 parts: the base, 2 sides and the motor mount. All of these screw together either with M2.5 screws directly into the plastic (motor mount to top side piece) or with M2.5 nuts and screws (side pieces to base plate). The full assembly instructions can be found on the Thingiverse page. The fully assembled actuator, with the stepper, end stops and force sensor, is shown in the figure below:

The force sensing is achieved by using a free moving carriage and a pusher mounted to the lead screw nut. The pusher is connected to the carriage by two screws, but with play, so that it can move slightly up and down without moving the carriage. This pusher pushes the carriage, with a membrane force sensor added at the contact point. The screws are required to keep the pusher from rotating, and to be able to pull the carriage back up. This assembly is shown in the figure below:

The stage is controlled by a simple board, built from an Arduino Pro Mini and the DRV8825 stepper driver (see: https://microdigisoft.com/drv8825-motor-driver-and-28byj-48-stepper-motor-with-arduino/). A small detail that I overlooked here is that this stepper driver only works with supply voltages above 8V, so it only works with an almost fully charged battery (a fully charged 2S LiPo has 8.4V). Also, the stepper motor is a 5V part, so the stepper driver overdrives the motor a bit. From my testing this doesn't seem to be a problem: even with no current limiting, the motor does not get warm in the relatively short time it takes for one full actuation. I also disable the stepper driver when no movement happens, to avoid overdriving the motor more than needed. The Arduino also samples the force sensor and aborts the actuation if the force is above a threshold (the force value that started to lift the Buggy). Control of this board, that is, of the Arduino, is done over a serial (UART) interface with simple ASCII style commands, and it is connected to the NavQPlus.
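The force-limited actuation described above can be summarized in a few lines. This is a simplified sketch in Python rather than the actual Arduino code; the function and callback names, the step count and the force limit are all hypothetical.

```python
def push_down(read_force, step_once, max_steps, force_limit):
    """Drive the carriage down one step at a time, aborting on excessive force.

    read_force: callable returning the current force sensor reading
    step_once:  callable that advances the stepper by one step
    Returns (steps_taken, status) where status is "done" or "aborted".
    """
    for steps_taken in range(max_steps):
        # Check the force before every step so the actuator stops
        # before it starts lifting the Buggy.
        if read_force() > force_limit:
            return steps_taken, "aborted"
        step_once()
    return max_steps, "done"


# Simulated run: the probe hits something hard on the fourth reading.
simulated_forces = iter([0.1, 0.2, 0.3, 2.5, 0.1])
executed_steps = []
result = push_down(
    read_force=lambda: next(simulated_forces),
    step_once=lambda: executed_steps.append(1),
    max_steps=5,
    force_limit=2.0,
)
print(result, len(executed_steps))
```

The force readings collected on the way down could also be logged as a rough indication of soil hardness, which is the second use of the force sensor mentioned earlier.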

The hardware (3D printed parts) is available on my Thingiverse (https://www.thingiverse.com/thing:5936797), while the software (Arduino code) is added to the attachments section.

Air Sensor

The air sensor used is the BME688, a 4 in 1 compact sensor that includes a temperature, pressure, humidity and gas sensor in the same package. Temperature, humidity and pressure can be read out directly (over I2C), while the gas sensor can be trained with the BME AI-Studio to customize its sensitivity to the target application. Sadly, due to time limits I was unable to fully utilize the BME688 sensor: the training of the gas sensor for my application was not done, but the software and tools are in place on the acquisition board.

Soil Sensor

As mentioned before, there is a large variety of different soil sensors. They can be separated into two groups, depending on how they interact with/sample the soil: direct contact and indirect contact sensors. This project uses the first category of soil sensors, although initially I had planned on developing a low cost indirect contact soil sensor using the EM sensor principle of inducing a magnetic field in the soil and then reading out the reflected and attenuated secondary field. But, again, the limited time made me choose the simpler solution of using an off-the-shelf direct contact sensor.

I actually acquired a few (4) of those off-the-shelf sensors, all very popular and easy to find online. Two of them measure the EC (electrical conductivity) of the soil, using a PCB with two exposed probes for that purpose. Another measures the dielectric property (capacitance) of the soil, again using a PCB with two probes, but this time they are isolated from the soil. And finally a so-called 3-in-1 soil sensor, which is sold for home use, uses the galvanic principle and is therefore self-powered. The figure below shows all 4 of these sensors:

I, with the help of my plant-loving girlfriend, tested all four of these sensors in different test conditions. More about this in the "Experiments and Results" section. In the end, although the most promising one was the capacitive sensor, the 3-in-1 sensor is used, as it is the most robust one for inserting into the soil and the easiest one to integrate with the soil actuation platform (very long probes!).

To end this section, and with that the hardware part, a picture of the soil sensor mounted to the actuator that is mounted to the MR-Buggy3:

So, as can be seen from the figure above, with the relatively heavy actuator mounted to the back of the MR-Buggy3, the poor Buggy bottoms out. The springs on the buggy are just too soft, especially with the added weight. That's why the springs in the shock absorbers have to be changed, but as I only became aware of this problem late in the project, the new springs have not yet arrived (also long shipping time on those...).

Part 3: The brains (NavQPlus)

The NXP NavQPlus, mounted on and integrated with the MR-Buggy3, forms the center of the autonomous sampling Buggy. It is responsible for managing all the actions occurring on the Buggy: moving around in the environment, gathering sensor data, getting missions and following them, and reporting back sampled data (not all of these are implemented...). The code responsible for achieving these tasks is developed as ROS 2 nodes that run on the NavQPlus. A global overview of the ROS 2 nodes, and in general of the software system architecture, is presented in the block diagram below:

From this we can see that the tasks of the NavQPlus, the ROS 2 nodes, can be split into 4 groups:

- Locomotion: These are the nodes responsible for getting the Buggy from point A to point B while keeping track of its location [mostly implemented, but not all tested and some parameters are not tuned].

- Data Acquisition: This node is responsible for moving sensors into position (the soil actuator), gathering data from the sensors through the Acquisition Board, and publishing them to the acquisition server (Hub) [mostly implemented]. Code for it can be found here: https://github.com/NotBlackMagic/AutoSQA-Acquistion

- Mission Planning (and path following): These nodes are responsible for requesting a new Mission from the mission server (Hub) and making the Buggy follow it: following the mission path and sampling at the defined points, as well as avoiding obstacles and other dangers [not implemented].

- Image Processing: These nodes are responsible for locating obstacles on the Buggy's path, through a depth-sensing stereo camera setup, and for finding interesting features in the environment, through image recognition [only a basic test with the stereo camera is implemented].

Some of these parts, like the locomotion and image processing, can be used independently for other applications as well. All the ROS 2 nodes were developed with ROS 2 Humble, and are tested to run on the NavQPlus running the Ubuntu 22.04 image with ROS 2 preinstalled (https://github.com/rudislabs/navqplus-create3-images/releases/tag/v22.04.2).

The following sub-sections describe some of these parts in more detail and give instructions for them.

Locomotion

The current version of the PX4 autopilot, Beta V1.14, only supports two flight (drive?) modes for the rover and boat frame types: Manual Mode and Mission Mode, and the latter only with GPS (https://docs.px4.io/main/en/getting_started/flight_modes.html#rover-boat). Due to this, and because my project needed position control of the Buggy, I decided to implement a full offboard movement and odometry system for the MR-Buggy3 in ROS 2, running on the NavQPlus. I call this the Locomotion system, and I tried to design it in a way that the basics of it could be moved over to the PX4 autopilot in the future. It is also here that the developed Wheel Encoder is integrated into the full system.

The Locomotion system, as a ROS 2 package, is composed of 7 nodes that each do a small part in the whole system. The interaction between these nodes, with the message types and interfaces used, as well as the names of the Python files for each node, is shown in the block diagram below:

Before I explain each of these nodes in more detail, we will take a look at how to connect the PX4, here running on the FMUK66, to the NavQPlus and how to integrate it into ROS 2. There are, as of the Beta V1.14 PX4 version, two main ways to connect the PX4 to an offboard PC: using MAVLink or using a uROS link (using microDDS). The uROS link is new to the V1.14 version of PX4, and I only briefly tested it with the SITL simulation, but it simplifies the integration with ROS a lot compared to previous versions of PX4. Let's take a quick look at how to set it up.

PX4 to ROS over uROS (microDDS)

On the PX4 side, it is as simple as starting up the microDDS service, which can be done by adding the following startup command to the SD Card at /etc/extras.txt, for when the microDDS service is to be run over Ethernet (recommended). Also see: https://docs.px4.io/main/en/modules/modules_system.html#microdds-client and https://docs.px4.io/main/en/ros/ros2_comm.html.

set +e

microdds_client start -t udp -p 14551 -h 10.0.0.3

set -e

Or this one, if it is to be run over a serial link (not recommended and can cause issues due to the low bandwidth of a serial link):

set +e

microdds_client start -t serial -d /dev/ttyS3 -b 921600

set -e

These commands start the microDDS service on the PX4, which publishes the defined uORB messages over the selected interface; these can then be accessed through uROS on the offboard computer side (here the NavQPlus). The uORB messages that are sent and received over this interface are defined in the file: src/modules/microdds_client/dds_topics.yaml. Depending on the application, some additional uORB messages should be added. For example, to be able to directly control the Buggy, the /fmu/in/manual_control_setpoint message must be enabled, which can be done by adding these lines under the subscriptions:

- topic: /fmu/in/manual_control_setpoint

  type: px4_msgs::msg::ManualControlSetpoint

With this, the setup on the PX4 (FMUK66) side is finished and we can move on to the offboard computer side, here the NavQPlus.

Here it is as simple as following any uROS tutorial, like the official one that can be found here: https://micro.ros.org/docs/tutorials/core/first_application_linux/ and also here https://github.com/Jaeyoung-Lim/px4-offboard/blob/2d784532fd323505ac8a6e53bb70145600d367c4/doc/ROS2_PX4_Offboard_Tutorial.md, for a more specific tutorial for the PX4 (SITL) case. In essence, the uROS tools have to be cloned and built (with colcon), then the agent has to be created, built and sourced, and finally the agent is run as a ROS 2 node. With this, the PX4 messages are available as ROS 2 topics. To use them, don't forget to clone the PX4 message definitions for ROS 2 into your ROS 2 workspace, found here: https://github.com/PX4/px4_msgs.

I was only able to test this interface with the SITL simulation because, before I was able to move my MAVLink implementation over to ROS 2, I burned my FMUK66 MCU by wrongly connecting the USB-to-serial adapter:

The adapter PCB is normally heat-shrunk to the USB-to-UART adapter, but I removed it so that I could swap the RX and TX pins. When plugging the PCB back onto the adapter cable, I used the wrong orientation. In a hurry, I didn't check too closely and just guided myself by the pin 1 indication and the "Black Wire 1" label, which, it turns out, is the opposite of the correct orientation... I would have caught it if I had looked closer, as it is clear that the 5V supply line (red cable) connects to a data line (RX or TX) when wrongly connected, and the ground line (black cable) is connected to nothing... Anyway, I confirmed that the MCU is what got burned and will try to get a new one (I already de-soldered the burned one), but it is quite a challenge as it is still in short supply and not in stock anywhere other than "scalper" websites ( > 100$)...

PX4 to ROS over MAVLink

The second way, and the one that I used most, to connect the PX4 to an offboard computer is through MAVLink. This interface is already used for the telemetry link as well as when connecting the FMUK66 with the USB cable. Any of these could also be used to connect it to the NavQPlus, but the recommended way is to use the new T1 Ethernet interface of both the NavQPlus and FMUK66. To enable a new MAVLink link over this interface the following guide can be used: https://github.com/NXPHoverGames/GitBook-NavQPlus/blob/main/navqplus-user-guide/running-mavlink-via-t1-ethernet.md

The above guide starts a new MAVLink link over the T1 Ethernet, that publishes the "onboard" MAVLink message set. The message set to be used can be changed, and what messages each set includes is controlled by the following file in the PX4 firmware: src/modules/mavlink/mavlink_main.cpp. For my project, and to add the Encoder messages to a set, I modified the "onboard" message set to include this message by adding the following line under case MAVLINK_MODE_ONBOARD:

configure_stream_local("ENCODER", 10.0f);

This finishes the setup on the PX4 (FMUK66) side and we can move over to the offboard computer, the NavQPlus. To connect to a MAVLink link provided by the PX4, there is a tool to build libraries for the major programming languages; the step-by-step guide for this can be found here: https://mavlink.io/en/getting_started/installation.html. For Python this is not even necessary, as there is a prebuilt library, pymavlink, that can be installed and used directly, and it is what I used here. With it, the following Python line is used to connect to the established Ethernet MAVLink link:

master = mavutil.mavlink_connection("udpin:10.0.0.3:14551")

An issue that I was not able to resolve before I burned my FMUK66 is that, when I enable MAVLink over Ethernet, the Wheel Encoder stops working: no messages from it are published on any of the MAVLink interfaces, while when I don't enable the Ethernet link they are, on both the Telemetry and USB links... It was actually this problem that caused me to burn the FMUK66, while trying to switch over from Ethernet to the TELEM2 port as the MAVLink interface to the NavQPlus.

Observations on ROS 2 running on NavQPlus

Here is a collection of details that I ran into when running ROS 2 on the NavQPlus. First, the Ubuntu 22.04 image uses the non-default CycloneDDS for ROS 2. It works great, but before the first use its configuration file (found at user/CycloneDDSConfig.xml) should be changed to omit interfaces that don't exist or are not used (otherwise errors are thrown when launching a ROS 2 node!). Instructions and some examples can be found here: https://iroboteducation.github.io/create3_docs/setup/xml-config/

Second, building a ROS 2 node, especially with the PX4 message definitions, can take a long time and froze my NavQPlus on more than one occasion. I came across the following post about a similar problem on the RPI: https://answers.ros.org/question/407554/colcon-build-freeze-a-raspberry-pi/. It suggests using a single-threaded build command, which mostly fixed my issues:

colcon build --executor sequential

Lastly, almost every time a network connection that was used for ROS is dropped (e.g. WiFi or the T1 Ethernet), I had to restart the ROS daemon, or Nodes and Topics would not be visible. This can be done with the two commands below:

ros2 daemon stop

ros2 daemon start

With this, the basic setup and connection between the FMUK66 and the NavQPlus is finished, and ROS 2 nodes that interact with the Buggy through the FMUK66 can now be used, just like the nodes I developed and will now explain. Because of the issues I had with the Wheel Encoder messages and the Ethernet MAVLink connection, which seem to be mutually exclusive, all the Locomotion tests were only done on my PC (Ubuntu 22.04 with ROS 2 Humble) and not on the NavQPlus. This is also why there are not many results and no demo videos from this part...

As seen in the start of this section, the Locomotion system can be split into the 7 ROS 2 nodes that make it up:

MAVLink Bridge

This node holds the MAVLink connection to the PX4 autopilot, here over the T1 Ethernet interface (or serial), and translates these messages back to PX4 ROS Topics. This node was added so that all nodes after it could interact with the PX4 directly when the switch to uROS (and microDDS) connection is performed.

Message Conversion

This node takes PX4 ROS Topics and translates them to their ROS 2 standard message equivalents. In this case it takes the "Sensor GPS", "Sensor Combined" and "Attitude" PX4 messages and translates them into "NavSatFix.msg" and "Imu.msg" messages, which are then used by the Pose Estimation node (the robot_localization package).

Odometry

This node takes the "Wheel Encoder" PX4 message and translates it into "Odometry.msg" using an Ackermann odometry model (inspired by: https://medium.com/@waleedmansoor/how-i-built-ros-odometry-for-differential-drive-vehicle-without-encoder-c9f73fe63d87). Ackermann is the type of steering used by the MR-Buggy3, and the model gives the change in position of the Buggy for a given steering angle and forward velocity. This is also where the developed Wheel Encoder is used, to get a position estimate of the Buggy without GPS.

Right now the Odometry node uses the wheel encoder information only for the forward velocity, and gets the steering angle from the steering command sent to the Buggy (scaled appropriately from tests that are shown in the "Experiments and Results" section). In the future, especially once all 4 wheels of the Buggy have an encoder, the encoder values can also be used to calculate the actual steering angle of the Buggy, from the difference in speed between the inner and outer wheels due to the differential gear. They can also be used to predict tire slip and correct the position estimate for it in the Odometry node.
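To make the Ackermann update a bit more concrete, here is a minimal sketch of one odometry step using the kinematic bicycle model (a common simplification of Ackermann steering). It is not the code from the repository: the `Pose2D` class, the `ackermann_step` function and the 0.3 m wheelbase are placeholders I made up for illustration, and the real wheelbase has to be measured on the Buggy.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    x: float = 0.0    # position in metres
    y: float = 0.0
    yaw: float = 0.0  # heading in radians


def ackermann_step(pose, speed, steering_angle, wheelbase, dt):
    """Advance the pose by dt seconds using the kinematic bicycle model."""
    # Yaw rate follows from the turning radius R = wheelbase / tan(steering_angle).
    yaw_rate = speed * math.tan(steering_angle) / wheelbase
    # Integrate along the heading at the middle of the time step.
    mid_yaw = pose.yaw + 0.5 * yaw_rate * dt
    x = pose.x + speed * math.cos(mid_yaw) * dt
    y = pose.y + speed * math.sin(mid_yaw) * dt
    yaw = pose.yaw + yaw_rate * dt
    # Keep the heading inside (-pi, pi].
    yaw = math.atan2(math.sin(yaw), math.cos(yaw))
    return Pose2D(x, y, yaw)


WHEELBASE_M = 0.3  # placeholder value, not the measured MR-Buggy3 wheelbase

pose = Pose2D()
for _ in range(100):  # 1 s of driving at 1 m/s with the wheels slightly turned
    pose = ackermann_step(pose, speed=1.0, steering_angle=0.1,
                          wheelbase=WHEELBASE_M, dt=0.01)
print(pose)
```

Here `speed` would come from the wheel encoder and `steering_angle` from the scaled steering command, as described above.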

Velocity Controller

The velocity controller node, as the name suggests, controls the velocity of the Buggy. It takes a Twist message topic, which sets the target linear and angular velocity of the Buggy, and an Odometry message topic, from the Odometry node, as feedback. For the forward velocity a PID controller is implemented: it compares the target forward velocity, from the Twist topic, to the current forward velocity, from the Odometry topic, and computes the required Manual Control command for the Buggy, which is passed through the PX4 to the motor ESC.

This PID controller was tuned with the Buggy not in contact with the soil (free-spinning wheels), and will probably need adjusting for real-world applications outside in the field. Also, because the velocity controller was developed with the wheels not in contact with the soil, only one of the wheel encoder values is actually used (the one that gives the higher RPM). This is because, with the wheels spinning freely and due to the differential gear, one wheel will often not spin at all or spin much slower (the same applies to the Odometry node).
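As an illustration of the forward velocity loop described above, here is a minimal PID sketch. It is not the controller from the repository: the class and function names, the gains and the assumption that the Manual Control throttle is a normalised value in [-1, 1] are all mine.

```python
class PID:
    """Basic PID controller with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd, out_min=-1.0, out_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, target, measured, dt):
        error = target - measured
        # No derivative term on the very first call (no previous error yet).
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        integral = self.integral + error * dt
        output = self.kp * error + self.ki * integral + self.kd * derivative
        clamped = max(self.out_min, min(self.out_max, output))
        # Anti-windup: only keep the new integral while the output is not saturated.
        if clamped == output:
            self.integral = integral
        return clamped


def forward_speed(left_wheel_mps, right_wheel_mps):
    """Use the faster wheel, as done for the free-spinning bench setup."""
    return max(left_wheel_mps, right_wheel_mps, key=abs)


pid = PID(kp=0.2, ki=0.1, kd=0.0)  # made-up gains, not the tuned values
throttle = pid.update(target=3.0, measured=forward_speed(0.0, 2.5), dt=0.02)
print(throttle)
```

The clamping matters on the real Buggy as well, since the command range accepted by the PX4 is limited.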

The figure below shows the forward velocity of the Buggy (orange), controlled by the PID loop, when a target velocity of 3 m/s is set (and removed):

The large spikes in the measured Buggy speed are due in part to the low encoder resolution, which is problematic at low speeds, and in part to the fact that this is almost the lower limit of the Buggy speed: when the PID loop lowers the output too much, the ESC stops spinning the motor completely instead of just spinning it slower. But all in all it worked, at least on the bench.

The steering control does not have a PID loop. It just takes the desired angular velocity and, by use of the Ackermann model, transforms it into a steering angle and that into a Manual Control command.
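A minimal sketch of that open-loop steering conversion, again using the bicycle-model simplification of Ackermann steering. The function names, the minimum speed threshold and the linear angle-to-command mapping are assumptions for illustration; the real mapping is the measured one shown in the "Experiments and Results" section.

```python
import math


def steering_angle_from_twist(linear_v, angular_w, wheelbase, min_speed=0.05):
    """Steering angle (rad) that produces angular_w at the given forward speed."""
    if abs(linear_v) < min_speed:
        # An Ackermann vehicle cannot turn on the spot, so keep the wheels straight.
        return 0.0
    return math.atan(wheelbase * angular_w / linear_v)


def steering_command(angle, max_angle):
    """Map a steering angle to a normalised command in [-1, 1] (assumed linear)."""
    return max(-1.0, min(1.0, angle / max_angle))


angle = steering_angle_from_twist(linear_v=1.0, angular_w=0.5, wheelbase=0.3)
print(angle, steering_command(angle, max_angle=0.5))
```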

Position Controller

The position controller node, as the name suggests, controls the position of the Buggy. It takes a Stamped Pose message topic, which sets the target position of the Buggy (the orientation is ignored), and an Odometry message topic, from the Odometry node, as feedback. It then computes the necessary linear and angular velocities, which are published as a Twist message for the Velocity Controller.

The implemented position controller always moves from the current position to the target position via an arc, where both the start and end pose of the Buggy are tangent to this arc. This means that both the linear and angular velocities are constant over the full movement, except for the ramp up and down of the linear velocity at the start and end. See the diagram below for an example of the trajectory calculated by the position controller for moving the Buggy from its start pose to the target position (only the target position is used, the final orientation is enforced by the position controller):

For testing, the target position was given through RVIZ, by setting a goal pose. Also, during testing, the Odometry message Topic used is the one published by the Odometry node and not the ones published by the Pose Estimation nodes, as the latter don't work well with the Buggy stationary, as should be the case.
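The arc used by the position controller can be sketched with a bit of geometry: an arc that is tangent to the current heading and passes through the target is fully defined by the target position expressed in the Buggy frame. The code below is my illustration of that idea, not the repository code, and the function names are made up.

```python
import math


def arc_to_target(x, y, yaw, target_x, target_y):
    """Return (curvature, arc_length, final_yaw) of the arc that starts at the
    current pose, is tangent to the current heading and ends at the target."""
    dx, dy = target_x - x, target_y - y
    # Target expressed in the Buggy frame (x forward, y to the left).
    local_x = math.cos(yaw) * dx + math.sin(yaw) * dy
    local_y = -math.sin(yaw) * dx + math.cos(yaw) * dy
    dist_sq = local_x ** 2 + local_y ** 2
    if dist_sq == 0.0:
        return 0.0, 0.0, yaw  # already at the target
    curvature = 2.0 * local_y / dist_sq  # signed, positive means a left turn
    if abs(curvature) < 1e-9:
        # Target straight ahead (a negative length means straight behind).
        return 0.0, local_x, yaw
    # The heading change over the arc is twice the angle to the target.
    turn = 2.0 * math.atan2(local_y, local_x)
    return curvature, turn / curvature, yaw + turn


def twist_for_arc(curvature, linear_speed):
    """Constant linear and angular velocity that follow the arc."""
    return linear_speed, linear_speed * curvature


curvature, length, final_yaw = arc_to_target(0.0, 0.0, 0.0, 1.0, 1.0)
print(curvature, length, final_yaw, twist_for_arc(curvature, 0.5))
```

For a target at (1, 1) with the Buggy at the origin facing along x, this gives a quarter circle of radius 1 m, and the Buggy arrives facing along y.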

Sadly, due to burning the FMUK66 and not taking any results/pictures beforehand, I have no results to show for the position controller actually working...

Pose Estimation (robot_localization package)

For a more accurate position estimate of the Buggy, the odometry information should be fused with IMU and GPS information. This is what this node does. The Pose Estimation node runs two EKF filter instances: one fuses the odometry data with the IMU data and publishes the result as the Local Odometry topic, and one then fuses that with the GPS data and publishes the result as the Global Odometry topic. These nodes are from the robot_localization package, and I followed the instructions and setup provided in the documentation of the package: http://docs.ros.org/en/melodic/api/robot_localization/html/index.html

It is important to note that I did not tune any of the parameters yet. Especially the covariance matrices must be modified to reflect experimental results from the Buggy, to get accurate position estimates from these nodes.

Keyboard Controller

This node is used for testing. It takes keyboard inputs and translates them into linear and angular velocities, which it publishes as Twist messages for the Velocity Controller.

All these nodes are available on my GitHub, ready to be cloned and used as a ROS 2 package: https://github.com/NotBlackMagic/MR-Buggy3-Offboard

Data Acquisition

As already mentioned at the start of this section, this node is responsible for moving sensors into position (the soil actuator), gathering data from the sensors through the Acquisition Board, and publishing them to the acquisition server (Hub) (mostly implemented). The data acquisition node has two serial connections, one to the actuator controller board and one to the acquisition board. This means that I had to use two UART interfaces of the NavQPlus, out of the total of 4 available. Now, there are not actually 4 easily accessible UART interfaces available; some are hidden and some are already used:

- UART1 ("/dev/ttymxc0"): This one is on a special header used for Bluetooth modules and is not easily accessible.

- UART2 ("/dev/ttymxc1"): This one is easily accessible on a JST-GH header but is used for the serial terminal. To use it that service must be stopped first with the following command

systemctl stop serial-getty@ttymxc1.service

and then we have to give ourselves permission to actually use it with the command

sudo chmod 666 /dev/ttymxc1

This is the one used to connect to the actuator controller board.

- UART3 ("/dev/ttymxc2"): This one is both easily accessible on a JST-GH header and free to be used. No additional configurations or commands required. This is the one used to connect to the acquisition board.

- UART4 ("/dev/ttymxc3"): Also easily accessible on a JST-GH header but it is by default connected to the Cortex-M7, and is not easily made available to the Cortex-A53 cores, and with that to Linux. See: https://community.nxp.com/t5/i-MX-Processors/IMX8-mini-UART4/td-p/935322

Very simple ASCII commands, and data, are exchanged over these interfaces. For the air sensor acquisition, this node simply sends a request command, "A;", and the acquisition hub then samples the BME688 and returns a data string, e.g. "T: 25.65, H: 49.45, P: 1015.65, R: 80526.12". For sampling the soil there are a few more steps. First a homing command, "H;", is sent to the actuator, and this node then waits until the movement is complete by polling the actuator with "I;". After that, it sends a move down command, "D50;", to move the actuator 50mm down and with that the soil sensor into the soil. Again the node waits for completion by polling the actuator. When the position is reached, this node requests data from the acquisition hub with "S;", which then samples the soil sensor and returns the sampled, and processed, data. Finally, this node sends another homing command to the actuator to move the soil sensor back up to its resting position. All the gathered data is then packed together with the current position of the Buggy, from an Odometry message Topic, and sent over UDP to a server.
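The command sequence above can be sketched as follows. This is an illustration and not the code from the repository: `actuator` and `hub` are assumed to be objects with `write()` and `readline()` methods (as a pyserial `Serial` object provides), and the "IDLE" reply to the "I;" poll is a made-up placeholder, since the actual reply format is not described here.

```python
import time


def parse_reading(line):
    """Parse a reply such as 'T: 25.65, H: 49.45' into a dict of floats."""
    fields = {}
    for part in line.strip().split(","):
        key, _, value = part.partition(":")
        fields[key.strip()] = float(value)
    return fields


def wait_until_idle(actuator, idle_reply="IDLE", poll_s=0.1, timeout_s=10.0):
    """Poll the actuator with 'I;' until it reports the (assumed) idle reply."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        actuator.write(b"I;")
        if actuator.readline().decode("ascii").strip() == idle_reply:
            return
        time.sleep(poll_s)
    raise TimeoutError("actuator did not finish its movement in time")


def sample_air(hub):
    """Request a BME688 sample from the acquisition hub."""
    hub.write(b"A;")
    return parse_reading(hub.readline().decode("ascii"))


def sample_soil(actuator, hub, depth_mm=50):
    """Home, move down, sample the soil sensor and home again."""
    actuator.write(b"H;")
    wait_until_idle(actuator)
    actuator.write(f"D{depth_mm};".encode("ascii"))
    wait_until_idle(actuator)
    hub.write(b"S;")
    reading = hub.readline().decode("ascii").strip()  # returned unparsed
    actuator.write(b"H;")
    wait_until_idle(actuator)
    return reading


print(parse_reading("T: 25.65, H: 49.45, P: 1015.65, R: 80526.12"))
```

With pyserial, the two ports would be opened on "/dev/ttymxc1" and "/dev/ttymxc2" and passed in as `actuator` and `hub`.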

Code for it can be found here: https://github.com/NotBlackMagic/AutoSQA-Acquistion

Image Processing

As seen in section "Part 1: The robotics platform (MR-Buggy3)", one of the big improvements/additions to the NavQPlus is a second ISP (camera interface), allowing the implementation of a stereo camera setup for depth perception. In the same section I also showed the stereo camera setup created, based on two Google Coral cameras with a distance of 70mm between them. Ideally the stereo camera pair would use lower resolution BW cameras with global shutter and fixed focus (e.g. the OV7251 from the ArduCam RPI Global Shutter Camera or the IMX296 from the new RPI Global Shutter Camera Module), but the Google Coral cameras also gave good results at this stage.

The software used for interfacing with the cameras is OpenCV in Python. The first test code developed was to create 3D Anaglyph, red-cyan, pictures from the stereo camera. The code and tutorial followed for this was: https://learnopencv.com/making-a-low-cost-stereo-camera-using-opencv/

See below a 3D Anaglyph picture of the MR-Buggy3. The 3D effect is not the best; it would require quite a bit of tuning to move the two frames closer to each other to improve the effect.

The real objective of using a stereo camera setup was to get depth perception on the Buggy, and with that obstacle detection and avoidance. Due to time constraints I was only able to start this part, getting the basic software up and running and playing around with camera resolutions (see "Experiments and Results" section) and disparity parameters calibration. Again, the tutorial and code used is from here: https://learnopencv.com/depth-perception-using-stereo-camera-python-c/

See below an example screenshot of the disparity calibration GUI, with the resulting disparity at the bottom and the original left and right camera images on the right as well as frame timings and frame rates in the console at the right (using a resolution of 320x240).

I have to add here that this was my first introduction to OpenCV, and the almost flawless workflow of using OpenCV with Python on the NavQPlus and the two Coral cameras made it a great experience. I will continue to explore this avenue! I also have to give a HUGE shout-out to the author of the https://learnopencv.com website, the guides and code examples are phenomenal!

Although all the code used is mostly just the code from https://learnopencv.com (https://github.com/spmallick/learnopencv), I still added it to my GitHub, so that it is plug-and-play on the NavQPlus: https://github.com/NotBlackMagic/NavQPlus-OpenCV

Part 4: The GUI

To control the Buggy on a higher level, plan missions and monitor sampled sensor data, a GUI was developed using the Unity game engine. This Unity project has two main tasks: to generate a mission plan from user input (generating paths and sample locations), and to show the sampled data to the user in a simple way. Right now only part of this is implemented.

Starting with displaying the sampled sensor data, the Unity project hosts a UDP server to which the Buggy can publish sensor data messages. The sensor data messages contain the sampled sensor values together with the sampling location. This is then used in the Unity project to generate a texture that shows a color heat-map of the sensor values. Each sensor value is extended over a configurable radius around its sampling location. This can be seen in the figure below, where sampled air temperature data is displayed (overlaid on a top-down image of a terrain):

This system is not limited to receiving data from the Buggy, in fact a number of different sensor stations, mobile or stationary, could send sensor data with location and all of that can be merged into one simple heat-map representation in Unity.
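To illustrate the heat-map idea, here is a small Python sketch (the actual Hub is a Unity project, so this is not its implementation). It assumes that each grid cell takes the average of all samples whose location lies within the configured radius of the cell center; how the Unity project actually blends overlapping samples may differ. The function name and all values are made up.

```python
import math


def heatmap(samples, width, height, cell_size, radius):
    """Build a grid of averaged sensor values.

    samples: list of (x, y, value) with x and y in metres.
    Returns grid[row][col], where a cell is None if no sample is in range.
    """
    grid = []
    for row in range(height):
        line = []
        for col in range(width):
            # Center of this cell in world coordinates.
            cx = (col + 0.5) * cell_size
            cy = (row + 0.5) * cell_size
            near = [v for x, y, v in samples
                    if math.hypot(x - cx, y - cy) <= radius]
            line.append(sum(near) / len(near) if near else None)
        grid.append(line)
    return grid


# Two made-up air temperature samples from different stations.
samples = [(0.5, 0.5, 20.0), (1.5, 0.5, 30.0)]
print(heatmap(samples, width=3, height=1, cell_size=1.0, radius=1.0))
```

Because the function only needs a location and a value per sample, data from several mobile or stationary stations can simply be appended to the same list.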

The second task of this Unity project is to control the Buggy at a high level. The idea is that we can Arm the Buggy, over the Telemetry MAVLink connection, and then the Buggy will request a mission to execute from the Unity server. Only part of this was implemented: the MAVLink connection is working and the Buggy can be Armed from the Unity project (it also sets the Buggy into Manual Control Mode, necessary for the Locomotion part).

A side note here about setting the Flight Mode through MAVLink, I could not find any information in the official documentation on what parameters, values, to use in the "command_long" MAVLink command to switch to a certain mode... Luckily someone in the community figured it out and they seem to be correct from my testing: https://discuss.px4.io/t/mav-cmd-do-set-mode-all-possible-modes/8495

A simple click and drop path creation is also implemented, together with path smoothing: sharp corners are converted into arcs, which are motions that can actually be performed by the Buggy and which match how the Position Controller works. This is shown in the figure below (in blue the original path, from the click and drop, and in red the smoothed path with the arcs; the full circles are shown as a debugging step):

Sadly this is where the implemented features stop, there is no mission creation from the drawn paths nor transferring the path to the Buggy (yet), which would also be done over a UDP connection (the stub code is already there).
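The corner-to-arc conversion used for the path smoothing can be sketched with standard fillet geometry. The Python code below is only an illustration (the Hub itself is a Unity project), with a made-up function name and a fixed arc radius as an assumption. It does not check that the tangent points still lie on the path segments, which a full implementation would need to do.

```python
import math


def fillet_corner(a, b, c, radius):
    """Replace the sharp corner at b (path a -> b -> c) by a tangent arc.

    Returns (tangent_in, tangent_out, center) as (x, y) tuples, or None if
    the three points are collinear or coincide.
    """
    ux, uy = a[0] - b[0], a[1] - b[1]
    wx, wy = c[0] - b[0], c[1] - b[1]
    len_u, len_w = math.hypot(ux, uy), math.hypot(wx, wy)
    if len_u == 0.0 or len_w == 0.0:
        return None
    ux, uy, wx, wy = ux / len_u, uy / len_u, wx / len_w, wy / len_w
    # Interior angle between the two path segments at the corner.
    cos_angle = max(-1.0, min(1.0, ux * wx + uy * wy))
    angle = math.acos(cos_angle)
    if angle < 1e-6 or math.pi - angle < 1e-6:
        return None
    tangent_dist = radius / math.tan(angle / 2.0)  # corner to tangent points
    center_dist = radius / math.sin(angle / 2.0)   # corner to arc center
    # The arc center lies on the bisector of the corner.
    bx, by = ux + wx, uy + wy
    len_b = math.hypot(bx, by)
    bx, by = bx / len_b, by / len_b
    tangent_in = (b[0] + ux * tangent_dist, b[1] + uy * tangent_dist)
    tangent_out = (b[0] + wx * tangent_dist, b[1] + wy * tangent_dist)
    center = (b[0] + bx * center_dist, b[1] + by * center_dist)
    return tangent_in, tangent_out, center


print(fillet_corner((0.0, 0.0), (2.0, 0.0), (2.0, 2.0), radius=1.0))
```

The smoothed path then runs from a to the first tangent point, along the arc around the center, and from the second tangent point on to c.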

The Unity project is available on my GitHub: https://github.com/NotBlackMagic/AutoSQA-Hub

Experiments and Results

This section contains some of the experiments done for this project (the recorded ones), as well as the obtained results.

Buggy Tests

To properly implement the locomotion software that controls the Buggy, I first had to do some experiments to gather its basic driving characteristics: how PX4 Manual Control command values translate to real-world driving. Two tests were performed for this. The first was to get the relationship between the forward thrust manual control command and the actual Buggy speed. The Buggy speed was obtained on the bench with the wheels in the air, using the developed Wheel Encoder. The result is shown in the figure below:

Because these results were obtained with the wheels spinning freely, the real-world speeds will be lower and should be corrected in the future with in-field tests. Also, the results were obtained with the reduced motor gearing, so an out-of-the-box MR-Buggy3 will have higher speeds. Finally, the used PX4 firmware was V1.14 Beta, which added the ability to drive backwards.

The next test was to obtain the relationship between the steering manual control command and the actual steering angle. The setup used to measure the steering angle can be seen in the figure below:

As can be seen, a stick was tied to the wheel and its distance from the resting position was measured. With some basic trigonometry we then get the actual steering angle, which is shown in the plot below:

This result is applicable to an out-of-the-box MR-Buggy3, as no changes to the steering hardware or parameters were made. The obtained relationship is then used in the Ackermann calculations in both the Odometry and Velocity Controller ROS 2 nodes.

Acquisition and Sensor Tests

As already mentioned, and pictured, in section "Part 2: The Sensor Platform", I bought 4 different soil sensors. I then tested them together with my girlfriend, who was excited to test some soil sensors as she loves plants and gardening. We set up two different tests, one to evaluate the sensor output vs soil humidity and one to see the immunity of the sensors to pH.

For the first test, soil humidity, we used a cheap potting soil mixture and filled 5 pots with the same amount of soil. One was then left to dry to get the dry weight, while different amounts of water were added to the other 4 (one with no water added). This gave us 5 different and known water-to-soil mixtures. Each soil sensor was then used to sample the soil: for each pot, 5 points were sampled with each sensor and the results averaged. The graph below shows the obtained results (see section "Part 2: The Sensor Platform" for the sensors used):

We can see that the best results were obtained with the capacitive sensor. This is also what was expected, as it is generally considered to have a lower dependency on other variables, like the mineralization of the soil, than the other tested sensors.
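For reference, one way to turn the pot weights of the humidity test into a known water-to-soil ratio is the gravimetric water content, i.e. the mass of water divided by the mass of dry soil. The sketch below assumes that this ratio is what is wanted and uses made-up numbers, not the measured ones; the function names are also mine.

```python
from statistics import mean


def gravimetric_water_content(pot_soil_mass_g, dry_soil_mass_g):
    """Mass of water per mass of dry soil (0.5 means 50 g water per 100 g soil)."""
    return (pot_soil_mass_g - dry_soil_mass_g) / dry_soil_mass_g


def averaged_reading(readings):
    """Average the sensor readings taken at the sample points of one pot."""
    return mean(readings)


# Made-up example: 100 g of dried soil, and a pot weighing 150 g after watering.
print(gravimetric_water_content(150.0, 100.0))
print(averaged_reading([410, 415, 402, 420, 408]))
```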

The second test performed with the soil sensors was to evaluate their dependency on pH levels, and to test the pH output of the 3-in-1 soil sensor. This was done using 2 different bottled waters with different pH levels, one alkaline and one acidic, and a neutral sample (distilled water). The results of this experiment are shown in the graph below:

We can see that only the 3-in-1 soil sensor has a monotonic response to the pH level, but sadly for both of its measuring outputs, moisture and pH. All other sensors gave very different values, especially with the distilled water. My guess is that this is due to different mineralization and not pH, as the bottled waters used differ in mineralization between them and both have a higher mineralization than the distilled water (which should be close to 0). Another test, where only the mineralization is changed while keeping the pH close to neutral, should be performed to confirm this.

Finally, the actuator force sensor was tested/calibrated. This was done with a scale placed under the soil sensor probes, which are held by the soil actuator, and then adding different weights on top and recording both the scale output and the force sensor output. See the picture below for the setup used:

The result of this test is shown in the graph below. The pressure is calculated assuming a flat circular cross-section for the soil probes.
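The pressure calculation mentioned above can be written down in a few lines. This is a sketch under the stated flat circular cross-section assumption; the probe diameter and the number of probes sharing the load are placeholder values, not measurements from the actual sensor.

```python
import math

G = 9.80665  # standard gravity in m/s^2


def force_from_scale(mass_kg):
    """Force in newtons corresponding to a scale reading in kilograms."""
    return mass_kg * G


def probe_pressure(force_n, probe_diameter_m, probe_count=1):
    """Pressure in pascals on the probe tips, assuming flat circular tips."""
    tip_area = math.pi * (probe_diameter_m / 2.0) ** 2
    return force_n / (probe_count * tip_area)


# Placeholder example: 1 kg on the scale, two probes with a 5 mm diameter.
print(probe_pressure(force_from_scale(1.0), probe_diameter_m=0.005, probe_count=2))
```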

OpenCV Depth Tests

I did a few tests with the depth-sensing software (the disparity calibration) at different resolutions and recorded the frame time and frame rate achieved with each:

Below is also a comparison of the disparity maps generated by 3 of those resolutions. The scene is the same as shown in the "Image Processing" section, with the anaglyph 3D picture. The differences are in part due to the different resolutions used, but also due to the different parameters used for each.

Based on the results I obtained, it looks like the 320x240 resolution is a sweet spot, and it should be used to now develop the full depth perception: the disparity map to distance calibration, as well as the object detection and the mapping of detected objects to the calculated distances.
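For the disparity-to-distance step, the usual pinhole stereo relation is depth = focal length x baseline / disparity. The sketch below assumes rectified images and a disparity already converted to pixels (OpenCV's block matchers return fixed-point values scaled by 16). The 70mm baseline is the one from my stereo setup, while the focal length in pixels is a made-up placeholder that has to come from the camera calibration.

```python
def disparity_to_depth(disparity_px, focal_length_px, baseline_m=0.07):
    """Distance in metres for a disparity in pixels, or None if it is invalid."""
    if disparity_px <= 0:
        return None  # no match found or point at infinity
    return focal_length_px * baseline_m / disparity_px


# Placeholder focal length of 300 px: a disparity of 21 px is then about 1 m away.
print(disparity_to_depth(21.0, focal_length_px=300.0))
```

Note that halving the resolution also halves both the focal length in pixels and the disparity, so the depth resolution gets coarser at lower resolutions.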

Demos

This section contains a few short demo videos for parts of this project.

Wheel Encoder demo

Acquisition System demo video

Future Work

As can be seen throughout this project write-up, there are a lot of unfinished parts. For future work I will mainly focus on improving the developed Wheel Encoder, adding a CAN interface and support for all 4 wheels of the Buggy. I will also keep working on the locomotion part, and on moving part of it into the PX4 autopilot firmware (I already got some contact and interest in collaboration on this on Twitter). I will also continue to explore the stereo camera setup, with OpenCV, on the NavQPlus. I would still like to develop an EM-based soil sensor, but we will see.

Contributions and community involvement

I made two small contributions to the NXP GitBooks. The first one was to the MR-Buggy3 Build Guide, where I added a part about mounting conflicts when using an ESC with a T-style power connector (PR: https://github.com/NXPHoverGames/GitBook-NXPCup/pull/2). The second was to the NavQPlus GitBook, where I added the chapter about using MAVLink over Ethernet from the NavQ GitBook, with changes to reflect the Ubuntu 22.04 image used (PR: https://github.com/NXPHoverGames/GitBook-NavQPlus/pull/7).

I'm also working on better integrating the Wheel Encoder into the PX4 firmware. At the moment I have not made any PR yet, as the driver is very simple and at an early stage, and I want to flesh it out and test it better first.

As far as community involvement goes, I'm very active in the discussion section of the HoverGames3 contest, providing help and sharing some discoveries there (see some examples below):

https://www.hackster.io/contests/nxp-hovergames-challenge-3/discussion/posts/10225#challengeNav

https://www.hackster.io/contests/nxp-hovergames-challenge-3/discussion/posts/10138#challengeNav

https://www.hackster.io/contests/nxp-hovergames-challenge-3/discussion/posts/10117#challengeNav

https://www.hackster.io/contests/nxp-hovergames-challenge-3/discussion/posts/10107#challengeNav

I was also very active in the HoverGames thread of the Hackster Discord, helping quite a few contest members with their problems and interacting with members in general (unsure how to show these interactions here...). All in all, that Discord channel has been (and I hope continues to be, keep it open please) very active and a joy to interact with!

And finally, I also participated in the quite informative workshop: “Technology Stewardship, exploring how you can design responsibly”.
