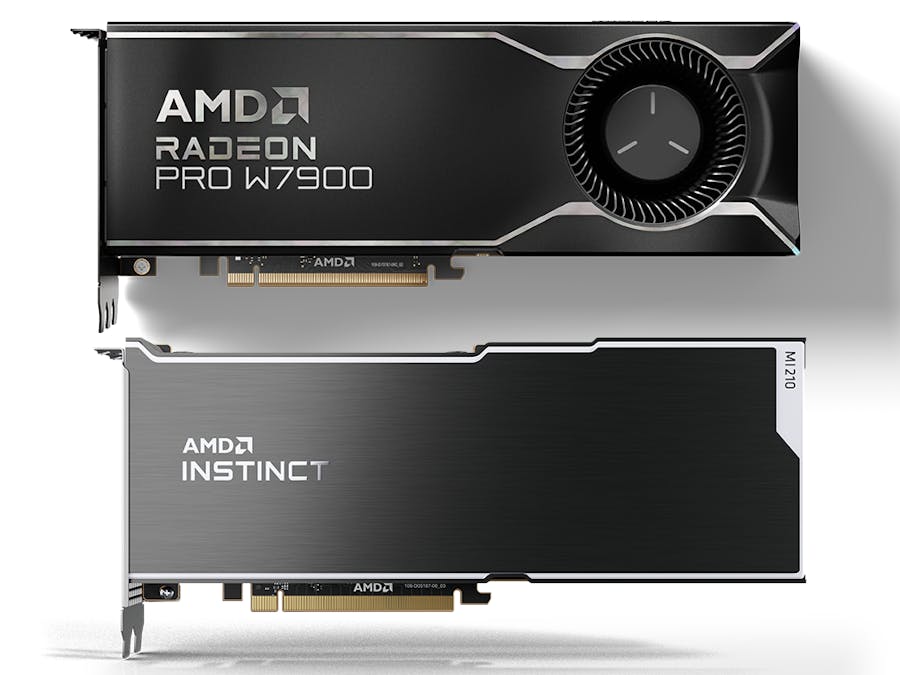

The AMD Instinct™ family of accelerators delivers leadership performance for the data center, from single-server solutions up to the world’s largest supercomputers. With new innovations in the AMD CDNA™ 2 architecture, AMD Infinity Fabric™ technology, and packaging technology, AMD Instinct MI210 accelerators are designed to power discoveries and innovation at exascale, enabling developers to tackle the most pressing AI challenges. The AMD Instinct™ MI210 accelerator extends AMD’s industry performance leadership in accelerated compute for double precision (FP64) in the PCIe® form factor for mainstream AI workloads. MI210 delivers exceptional single- and double-precision performance, with up to 45.3 TFLOPS peak theoretical single-precision (FP32) performance and 22.6 TFLOPS peak theoretical double-precision (FP64) performance, and offers optimized INT4, INT8, BF16, FP16, and FP32 matrix capabilities.

Ultra-Fast HBM2e Memory

- AMD Instinct MI210 accelerators provide up to 64GB of high-bandwidth HBM2e memory with ECC support at a clock rate of 1.6 GHz.

- Delivers an ultra-high 1.6 TB/s of memory bandwidth to help eliminate bottlenecks when moving data into and out of memory.
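As a rough illustration using only the figures above (peak numbers, decimal units, no real-world efficiency losses), one full pass over the 64GB of HBM2e at 1.6 TB/s takes about 40 ms:

$$
t = \frac{64\ \text{GB}}{1.6\ \text{TB/s}} = \frac{64\ \text{GB}}{1600\ \text{GB/s}} = 0.04\ \text{s} = 40\ \text{ms}
$$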

AMD Infinity Fabric Link Technology

- Delivers 64 GB/s of CPU-to-GPU bandwidth without the need for PCIe switches.

- Provides up to 300 GB/s of peer-to-peer (P2P) bandwidth through three Infinity Fabric links.
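Assuming the aggregate 300 GB/s P2P figure is shared evenly across the three links (the text states only the total), each Infinity Fabric link carries roughly:

$$
\frac{300\ \text{GB/s}}{3\ \text{links}} = 100\ \text{GB/s per link}
$$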

AMD Accelerator Cloud (AAC)

The MI210 instances are hosted on AMD Accelerator Cloud (AAC) and made available to developers through a remote login account. Each MI210 instance is provisioned with 64 GB of GPU memory, and users can schedule training and inference jobs, which are serviced based on available GPU resources.

AMD Radeon™ GPU family

With today’s ML models easily exceeding the capabilities of standard hardware that was not designed for ML workloads, ML engineers are looking for cost-effective ways to develop and train their ML-powered applications. A local PC or workstation with AMD Radeon GPUs offers a capable yet affordable solution to these growing workload challenges, thanks to large GPU memory sizes of 24GB and even 48GB.

As the industry moves towards an ecosystem that supports a broad set of systems, frameworks and accelerators, AMD is determined to continue making AI more accessible to developers and researchers who benefit from a local client-based setup for ML development using AMD RDNA™ 3 architecture-based desktop GPUs.

Radeon 7900 series GPUs, built on the RDNA 3 GPU architecture, come with up to 96 Compute Units and feature new AI instructions and more than 2x higher AI performance per Compute Unit compared to the previous generation[1], while second-generation ray tracing technology delivers significantly higher performance than the previous generation[2]. With 48GB of GDDR6 VRAM, the Radeon PRO W7900 allows professionals and creators to work with large neural network models and data for training and inference.
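As a back-of-the-envelope reading of footnote [1] (an interpretation of its figures, not a statement of AMD's methodology), the per-CU gain combines twice the BF16 operations per clock with the higher boost clock, and scaling by CU count gives the whole-GPU peak ratio:

$$
\frac{\text{BF16 ops/s per CU (RX 7900 XTX)}}{\text{BF16 ops/s per CU (RX 6900 XT)}} = 2 \times \frac{2.505\ \text{GHz}}{2.25\ \text{GHz}} \approx 2.23
$$

$$
\frac{\text{BF16 ops/s (RX 7900 XTX)}}{\text{BF16 ops/s (RX 6900 XT)}} = 2 \times \frac{2.505}{2.25} \times \frac{96}{80} \approx 2.67
$$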

Researchers and developers working with Machine Learning (ML) models and algorithms using PyTorch can use AMD ROCm™ 5.7 software on Ubuntu® Linux® to tap into the parallel computing power of Radeon PRO W7900 graphics cards.

Product Overview

Technical requirements and prerequisites for using Instinct MI210 in AMD Accelerator Cloud (AAC) or Radeon GPUs on your PC:

- Linux, Python, and Docker basics

- PyTorch framework for model training and inference

- Understanding of CPU/GPU heterogeneous programming and performance tuning

- Tools and libraries in ROCm ecosystem

In addition, depending on the complexity of the user’s application, additional libraries may need to be installed, e.g., transformers, diffusers, accelerate, NumPy, protobuf. Because these dependencies may conflict with one another, developers may need to resolve version conflicts manually before continuing development.
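Before resolving conflicts, it helps to see which versions are actually installed. The minimal sketch below uses only the standard library (`importlib.metadata`); the package list is taken from the examples above, and `installed_versions` is a hypothetical helper name.

```python
from importlib import metadata

# Example distributions named in the text; replace with your project's dependencies.
PACKAGES = ["transformers", "diffusers", "accelerate", "numpy", "protobuf"]


def installed_versions(packages):
    """Return {distribution name: installed version, or None if missing}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in the current environment
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(PACKAGES).items():
        print(f"{name:<15} {version or 'not installed'}")
```

Running `pip check` afterwards reports installed packages whose declared requirements are not satisfied, which narrows down the conflicts to fix.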

ROCm Libraries

- HIP runtime APIs

- Math Libraries

- C++ Primitive Libraries

- AI Libraries

- Computer Vision Libraries

- Communication Libraries

- AAC docker pre-installed packages: Ubuntu 22.04.3 LTS, ROCm 5.7.1, Python 3.10.12

- User post-installed packages: PyTorch with ROCm 5.7. Install using the following command.

```shell
pip3 install --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/rocm5.7
```

AMD Instinct MI200 series: Instruction Set Architecture, Whitepaper, Tuning Guide.
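After installing, a quick sanity check can confirm that the PyTorch build targets ROCm and can see a GPU. The sketch below assumes a ROCm build of PyTorch, on which `torch.version.hip` holds the HIP version string (it is `None` on non-ROCm builds) and AMD GPUs are reported through the `torch.cuda` API; `rocm_torch_status` is a hypothetical helper name.

```python
def rocm_torch_status():
    """Summarize whether PyTorch is installed, built for ROCm, and able to see a GPU."""
    try:
        import torch
    except ImportError:
        return {"torch_installed": False}

    status = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # A version string on ROCm (HIP) builds; None on CUDA or CPU-only builds.
        "hip_version": getattr(torch.version, "hip", None),
        # ROCm builds of PyTorch report AMD GPUs through the torch.cuda API.
        "gpu_available": torch.cuda.is_available(),
    }
    if status["gpu_available"]:
        status["device_count"] = torch.cuda.device_count()
        status["device_name"] = torch.cuda.get_device_name(0)
    return status


if __name__ == "__main__":
    for key, value in rocm_torch_status().items():
        print(f"{key}: {value}")
```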

MODEL DOMAINS

- VISION: torchvision contains definitions of models for addressing different tasks, including image classification, object detection, and semantic segmentation.

- AUDIO: torchaudio is a library for audio and signal processing with PyTorch.

- TRANSFORMERS LLMS: Hugging Face Transformers library with 200+ pretrained generative AI models, e.g., BERT, BLOOM, OPT, Llama2, GPT.

- STABLE DIFFUSION: Hugging Face Diffusers library with pretrained diffusion models for generating images and audio.

ROCM TOOLS

- AMD ROCm Debugger (ROCgdb): Enables heterogeneous debugging on the AMD ROCm platform, which comprises an x86-based host architecture along with commercially available AMD GPU architectures. [Documentation: ROCgdb]

- ROCProfiler: A powerful tool for profiling HIP applications on AMD ROCm platforms. It can be used to identify performance bottlenecks in applications and to optimize their performance. [Documentation: ROCProfiler]

- HIPIFY: HIPIFY is a tool for translating CUDA sources into HIP sources. [Documentation: HIPIFY]

- AMD SMI, ROCm SMI and ROCm Data Center Tool: System Management Tools. [Documentation: Management Tools]
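To try the VISION domain listed under MODEL DOMAINS, the sketch below runs a single forward pass of an untrained torchvision ResNet-18 on random input. It assumes PyTorch and torchvision are installed (torchvision 0.13 or newer, for the `weights=` argument) and that, as on ROCm builds of PyTorch, the AMD GPU is addressed through the `"cuda"` device type; `run_resnet18_smoke_test` is a hypothetical name.

```python
def run_resnet18_smoke_test(batch_size=1):
    """Run one forward pass of an untrained ResNet-18; return the output shape.

    Returns None if PyTorch or torchvision is not installed.
    """
    try:
        import torch
        import torchvision
    except ImportError:
        return None

    # ROCm builds of PyTorch expose AMD GPUs through the "cuda" device type;
    # fall back to the CPU if no GPU is visible.
    device = "cuda" if torch.cuda.is_available() else "cpu"

    # weights=None builds the architecture with random weights (no download),
    # which is enough to check that the device executes the model.
    model = torchvision.models.resnet18(weights=None).to(device).eval()
    images = torch.randn(batch_size, 3, 224, 224, device=device)

    with torch.no_grad():
        logits = model(images)
    return tuple(logits.shape)


if __name__ == "__main__":
    print(run_resnet18_smoke_test())
```

The output values are meaningless because the weights are random; the point is only that the model runs end to end on the selected device.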

Personal Computer or Bare Metal Server

If you are working on a personal computer or bare metal server with AMD Radeon/Instinct GPUs, you can quickly set up your implementation environment by running a PyTorch Docker image.
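Before running the steps below, it may help to see what each flag of the `docker run` command in step 2 does. The sketch below rebuilds that same command as a Python argument list with one comment per flag; `build_rocm_docker_cmd` is a hypothetical helper, and the comments summarize standard Docker and ROCm device behavior rather than an official specification.

```python
import shlex
from pathlib import Path


def build_rocm_docker_cmd(image="rocm/pytorch:latest", host_dir=None, mount_point="/ROCM_APP"):
    """Return the `sudo docker run ...` command from step 2 as an argument list."""
    if host_dir is None:
        host_dir = str(Path.home() / "ROCM_APP")  # same as $HOME/ROCM_APP
    return [
        "sudo", "docker", "run",
        "--device=/dev/kfd",                     # ROCm kernel driver (compute) interface
        "--device=/dev/dri",                     # GPU render nodes
        "--group-add", "video",                  # supplementary group for GPU device access
        "--cap-add=SYS_PTRACE",                  # allow ptrace, used by debuggers such as ROCgdb
        "--security-opt", "seccomp=unconfined",  # lift the default seccomp syscall filter
        "--ipc=host",                            # share the host IPC namespace (shared memory)
        "-it",                                   # keep STDIN open and allocate a TTY
        "-v", f"{host_dir}:{mount_point}",       # bind-mount a host working directory
        "-d",                                    # run the container in the background
        image,
    ]


if __name__ == "__main__":
    # Print the shell-quoted command; pass the list to subprocess.run(...) to execute it.
    print(shlex.join(build_rocm_docker_cmd()))
```

Because the command uses `-d`, the container starts in the background and `docker run` prints its ID; one common way to get a shell inside it is `docker exec -it <container-id> bash` (prefixed with `sudo` if the container was started with `sudo`).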

1. Pull the latest public PyTorch Docker image

```shell
docker pull rocm/pytorch:latest
```

2. Start a Docker container using the downloaded image

```shell
sudo docker run --device=/dev/kfd --device=/dev/dri --group-add video --cap-add=SYS_PTRACE --security-opt seccomp=unconfined --ipc=host -it -v $HOME/ROCM_APP:/ROCM_APP -d rocm/pytorch:latest
```

AMD Accelerator Cloud

AMD Accelerator Cloud (AAC) is a cloud environment that enables developers to remotely access and use AMD Instinct GPUs and ROCm technologies to experience the power of AMD hardware and software. Developers can follow this guide to quickly access and run applications on the platform.

If you are using AMD Instinct GPUs in an AMD Accelerator Cloud instance, you can directly access the running Docker container, which comes with a pre-installed ROCm environment, once your workload is launched. To run a workload on AAC, follow the steps below.

Running a Workload

Select Application:

1. In the top menu bar, navigate to the “Applications” page.

2. Select “Ubuntu” from the list of “Featured Applications”, then select the prompted version “Jammy(SSH) 5_7_1”.

3. In the top right corner, click the “New Workload” button.

Select Input Files:

4. Click the button “Upload files” and then, “Browse Files”. Once you’ve selected your files, click “Upload”.

5. When you’ve uploaded all the required files, in the top right corner, click the “NEXT” button.

Select Resources:

This step lets you enter the desired parameters for your job, such as the number of GPUs and the maximum allowed runtime.

After making your selection, in the top right corner, click the “NEXT” button to proceed.

Select Compute:

Based on the resources selected in the previous step, a list of available queues is displayed. One of the nodes in the selected queues is assigned to your workload based on availability. If the nodes are occupied, your workload enters pending status and starts running once a node becomes available. Click the “NEXT” button to proceed.

Review Workload Submission

- You can view or change the configurations in this step before the final submission of your workload.

- Scroll down and click the “RUN WORKLOAD” button to proceed. The workload will start running once the node is available (as shown below). Please refer to HowTo_Monitor_Workloads to understand the workload status.

- Developers can click the “STSLOG” or “STDOUT” tab to check whether the container is ready; this depends on resource availability and may take some time.

- Once the container is ready, the interactive endpoints appear and offer two ways to access the Docker container: SSH or Service Terminal.

- You can get root access by using the password displayed on the “STDOUT” dashboard.

SSH Access

1. Click the “Connect” button under INTERACTIVE ENDPOINTS to display the SSH connection command.

2. Replace <USER> in the command with the USERNAME displayed on the STDOUT tab, as shown below.

Service Terminal Access

If you selected “Service Terminal” for the Interactive Endpoint, a bash terminal opens directly in your web browser.

Hardware Verification with ROCm

The AMD ROCm™ platform ships with tools to query the system structure. To query the GPU hardware, use the `rocm-smi` command. Running `rocm-smi --showhw` lists the available GPUs in the system along with their device IDs and their respective firmware (or VBIOS) versions. To query the compute capabilities of the GPU devices, use the `rocminfo` command. It lists specific details about the GPU devices, including but not limited to the number of compute units, the width of the SIMD pipelines, memory information, and the instruction set architecture.
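For scripted checks, the `rocminfo` output can be parsed to list GPU agents and their compute-unit counts. The sketch below assumes the usual `rocminfo` layout (an `Agent N` header per agent, followed by two-space-indented `Key: value` lines such as `Device Type:` and `Compute Unit:`); the helper names and the abridged `SAMPLE` text are illustrative, not captured output.

```python
import re
import shutil
import subprocess

# "Agent N" header lines that start each agent block in rocminfo output.
AGENT_HEADER = re.compile(r"^Agent \d+[ \t]*$", re.MULTILINE)
# Top-level "  Key:   value" lines (exactly two leading spaces); deeper-indented
# nested fields, such as the ISA names, are skipped.
FIELD = re.compile(r"^  (\S[^:\n]*):[ \t]*(.*?)[ \t]*$", re.MULTILINE)

# Abridged, illustrative sample in the assumed layout (not real captured output).
SAMPLE = """\
*******
Agent 1
*******
  Name:                    example-cpu
  Device Type:             CPU
  Compute Unit:            64
*******
Agent 2
*******
  Name:                    gfx90a
  Marketing Name:          AMD Instinct MI210
  Device Type:             GPU
  Compute Unit:            104
  ISA Info:
    ISA 1
      Name:                    amdgcn-amd-amdhsa--gfx90a:sramecc+:xnack-
"""


def parse_rocminfo(text):
    """Return one dict per GPU agent: ISA name, marketing name, compute units."""
    gpus = []
    for block in AGENT_HEADER.split(text)[1:]:  # skip text before the first agent
        fields = {}
        for key, value in FIELD.findall(block):
            fields.setdefault(key, value)  # keep the first, top-level occurrence
        if fields.get("Device Type") != "GPU":
            continue
        cu = fields.get("Compute Unit", "")
        gpus.append({
            "name": fields.get("Name"),
            "marketing_name": fields.get("Marketing Name"),
            "compute_units": int(cu) if cu.isdigit() else None,
        })
    return gpus


def query_gpus():
    """Run rocminfo if it is on PATH and parse its output; return [] otherwise."""
    exe = shutil.which("rocminfo")
    if exe is None:
        return []
    result = subprocess.run([exe], capture_output=True, text=True, check=False)
    return parse_rocminfo(result.stdout)


if __name__ == "__main__":
    gpus = query_gpus()
    if not gpus:
        print("rocminfo not found, or no GPU agents reported")
    for gpu in gpus:
        print(gpu)
```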

Once your workload is finished, remember to click “Finish Workload”.

Tutorials / Examples

The following tutorials and examples are coming soon.

- Finetuning Large Language Model walkthrough: This step-by-step tutorial demonstrates how to use ROCm to perform a base finetuning and a fast finetuning with bitsandbytes quantization on an LLM, and then deploy the model on an AMD MI210 GPU.

- Finetuning Stable Diffusion walkthrough: This step-by-step tutorial demonstrates how to use ROCm to finetune a Stable Diffusion model with a custom dataset and deploy the model on an AMD MI210 GPU.

- HIP C++ design, Python binding and Hugging Face transformers integration guide: This step-by-step tutorial demonstrates how to write a HIP application to explore AMD GPU compute capability and integrate it into a Python application as a PyTorch HIP extension for improving the computation performance of Hugging Face transformer models.

- Learn more in the AMD Accelerator Cloud How-To Guide.

- Learn more about AMD Instinct™ MI200 series accelerators.

- Learn more about AMD ROCm open software platform.

[1] Based on AMD internal measurements, November 2022, comparing the Radeon RX 7900 XTX at 2.505 GHz boost clock with 96 CUs issuing 2X the Bfloat16 math operations per clock vs. the RX 6900 XT GPU at 2.25 GHz boost clock with 80 CUs issuing 1X the Bfloat16 math operations per clock. RX-821.

[2] Based on a November 2022 AMD internal performance lab measurement of rays with indirect calls on RX 7900 XTX GPU vs. RX 6900 XT GPU. RX-808.
