This robot (called Robley) is able to retrace a route by following a prerecorded video of the route. It can be used in three simple steps:

1. Record a training video by remote controlling the robot with the custom-made Android app.

2. Run the video through some pre-processing scripts to extract feature points from it.

3. Run the main driving script to have Robley retrace the route followed during training.

Unlike many other route-following robots, Robley does not require any fiducial markers or other modifications to the environment; anywhere with some mildly distinctive visual features will do. This means you can quickly teach Robley to follow a new route without having to meticulously place thick black lines or QR codes all over the place.

GitHub: https://github.com/SiliconSloth/RobleyVision

How It Works

Robley follows a video using OpenCV as follows:

1. A frame is captured from the camera, and the FAST feature detector is used to extract distinctive key points from it.

2. The key points from the camera are matched with the key points of the current frame of the training video, to see how they have moved between the two images.

3. The coordinates of each key point in the camera frame are subtracted from their coordinates in the target frame, to yield the vectors through which each point has moved between the two frames, called optical flow vectors.

4. The median optical flow vector is found, which summarises how the scene in front of the robot has moved between the camera and target frames. The median is used rather than the mean to reduce the impact of outliers caused by incorrect key point matches between the frames.

5. The median flow vector is used to decide what forward/backward velocity and left/right rotational velocity the robot should have in order to more closely match the target frame, as described below. These velocities are then used to set the powers of the left and right motors.

6. As the robot orients itself to match the target frame, the magnitude of the median flow vector decreases because the camera and target frames look more similar. Once it is sufficiently small, Robley moves on to the next frame of the training video, and in this way makes its way through the entire video.
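Steps 3, 4 and 6 can be sketched in plain Python, assuming the key point matching of steps 1 and 2 has already produced two equal-length lists of matched (x, y) positions. The function names, the 5-pixel `threshold`, and the choice of a component-wise median (taking the median of the x and y components separately) are assumptions for illustration, not necessarily what the RobleyVision code does.

```python
import math
from statistics import median
from typing import List, Tuple

Point = Tuple[float, float]


def flow_vectors(camera_pts: List[Point], target_pts: List[Point]) -> List[Point]:
    """Step 3: target position minus camera position for each matched key point."""
    return [(tx - cx, ty - cy) for (cx, cy), (tx, ty) in zip(camera_pts, target_pts)]


def median_flow(vectors: List[Point]) -> Point:
    """Step 4: component-wise median (an assumption), robust to a few bad matches."""
    return (median(dx for dx, _ in vectors), median(dy for _, dy in vectors))


def should_advance(flow: Point, threshold: float = 5.0) -> bool:
    """Step 6: move on to the next training frame once the median flow is small.

    The 5-pixel threshold is a hypothetical value.
    """
    return math.hypot(*flow) < threshold


# Four good matches shifted 4 px to the left, plus one wildly wrong match.
camera = [(10, 10), (20, 20), (30, 30), (40, 40), (50, 50)]
target = [(6, 10), (16, 20), (26, 30), (36, 40), (250, 200)]
flow = median_flow(flow_vectors(camera, target))
print(flow)                  # (-4, 0)
print(should_advance(flow))  # True
```

For the same five vectors the mean would be (36.8, 30.0), dragged far off by the single bad match, while the median still reports the true 4-pixel shift to the left.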

Calculating Desired Velocity from the Median Optical Flow Vector

As mentioned above, Robley decides which direction to drive in based on the median optical flow vector between the current view from the camera and the current target frame. I'll explain how this is done using the following example:

This image shows the results of calculating the flow vectors of key points between two frames, with the arrows pointing from the positions in the camera frame to the positions in the target frame. In this example the vectors point to the left, showing that the scene must move left relative to the robot in order for the camera to more closely match the target, so the robot must turn right relative to the scene. If the vectors had been pointing to the right, the robot would need to turn left. This makes it simple to decide whether to turn left or right based on the x component of the median optical flow vector.
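A minimal sketch of turning the x component into a turn rate, assuming OpenCV's image convention (x grows to the right) and a simple proportional controller. The function name, the sign convention (positive means turn right), and the `gain` and `max_turn` values are all hypothetical choices for illustration.

```python
def rotational_velocity(median_dx: float, gain: float = 0.01, max_turn: float = 0.5) -> float:
    """Map the x component of the median flow vector to a turn rate.

    Hypothetical sign convention: positive = turn right, negative = turn left.
    Image x grows to the right, so left-pointing flow (dx < 0) means the scene
    must shift left in the image, i.e. the robot must turn right.
    """
    turn = -gain * median_dx
    # Clamp so a very large flow vector cannot demand an unreasonably fast spin.
    return max(-max_turn, min(max_turn, turn))


print(rotational_velocity(-20))  # positive, so turn right
print(rotational_velocity(20))   # negative, so turn left
```

A proportional rule like this also slows the turn as the flow shrinks, which fits the way the robot homes in on each target frame.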

When a robot (or human) moves forwards, objects appear to grow larger, emerging from a point in the distance known as the vanishing point. Likewise, if a robot (or human) moves backwards, things appear to shrink into the vanishing point. This means it is possible to work out whether Robley has moved forwards or backwards between a given pair of frames by checking whether the flow vectors point towards or away from the vanishing point. This would usually be fairly difficult; however, Robley simplifies it by using a camera angled slightly upwards so that the vanishing point is at the bottom of the image, as shown below:

This pattern of flow vectors would be typical of a situation where the robot must move forwards to match the target frame, as the vectors are pointing away from the vanishing point. Since the vanishing point is at the bottom of the image, it is possible to work out whether the flow vectors are pointing away from or towards it based on whether they are pointing predominantly upwards or downwards. In this case the vectors are pointing upwards and therefore away from the vanishing point. This means that the y component of the median flow vector can be used to decide whether the robot should drive forwards or backwards.
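The forward/backward decision can be sketched the same way. This assumes OpenCV's image convention, where y grows downwards, so a vector pointing up (away from a vanishing point at the bottom of the image) has a negative y component. The function name, sign convention (positive means forwards), and the `gain` and `max_speed` values are hypothetical.

```python
def forward_velocity(median_dy: float, gain: float = 0.01, max_speed: float = 0.5) -> float:
    """Map the y component of the median flow vector to a forward/backward speed.

    Hypothetical sign convention: positive = forwards, negative = backwards.
    Image y grows downwards, so upward flow (dy < 0) points away from a
    vanishing point at the bottom of the image, meaning: drive forwards.
    """
    speed = -gain * median_dy
    # Clamp to a safe maximum speed in either direction.
    return max(-max_speed, min(max_speed, speed))


print(forward_velocity(-10))  # positive, so drive forwards
print(forward_velocity(10))   # negative, so drive backwards
```

This only works because the camera tilt puts the vanishing point at the bottom of the frame; with the vanishing point in the middle, upward and downward vectors would both appear when driving forwards and the sign of dy would be meaningless.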

The x component of the median optical flow vector determines the left/right rotational velocity of the robot, and the y component determines the forwards/backwards velocity it should have. Once Robley has decided on these velocities it can use them to calculate the correct powers for the left and right motors.
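One common way to turn the two velocities into left and right motor powers is standard differential-drive (tank) mixing, sketched below. The write-up does not say exactly how Robley does this, so the mixing rule, the function name, and the normalized power range of -1.0 to 1.0 are assumptions; the actual Prizm controller may expect a different power scale.

```python
from typing import Tuple


def motor_powers(forward: float, turn: float, max_power: float = 1.0) -> Tuple[float, float]:
    """Mix forward speed and turn rate into (left, right) motor powers.

    turn > 0 means turn right, so the left side speeds up and the right side
    slows down. If either power exceeds max_power, both are scaled by the same
    factor so their ratio (and therefore the curve being driven) is preserved.
    """
    left = forward + turn
    right = forward - turn
    largest = max(abs(left), abs(right))
    if largest > max_power:
        scale = max_power / largest
        left, right = left * scale, right * scale
    return left, right


print(motor_powers(0.5, 0.0))  # (0.5, 0.5): straight ahead
print(motor_powers(1.0, 1.0))  # (1.0, 0.0): scaled down, pivoting on the right wheels
```

Scaling both sides together, rather than clipping each one independently, keeps the robot on the intended arc when the requested velocities are too large.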

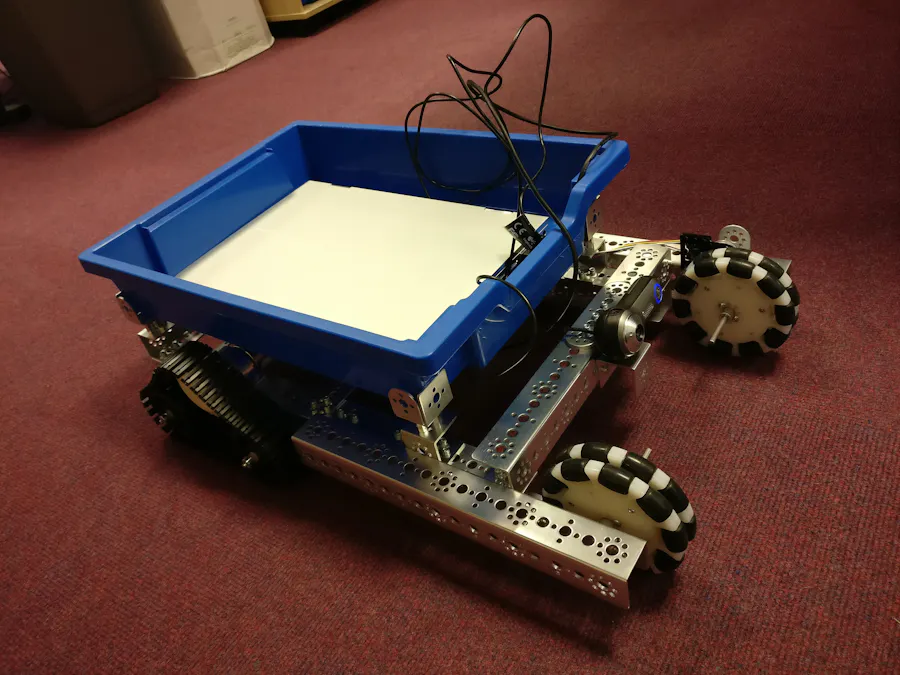

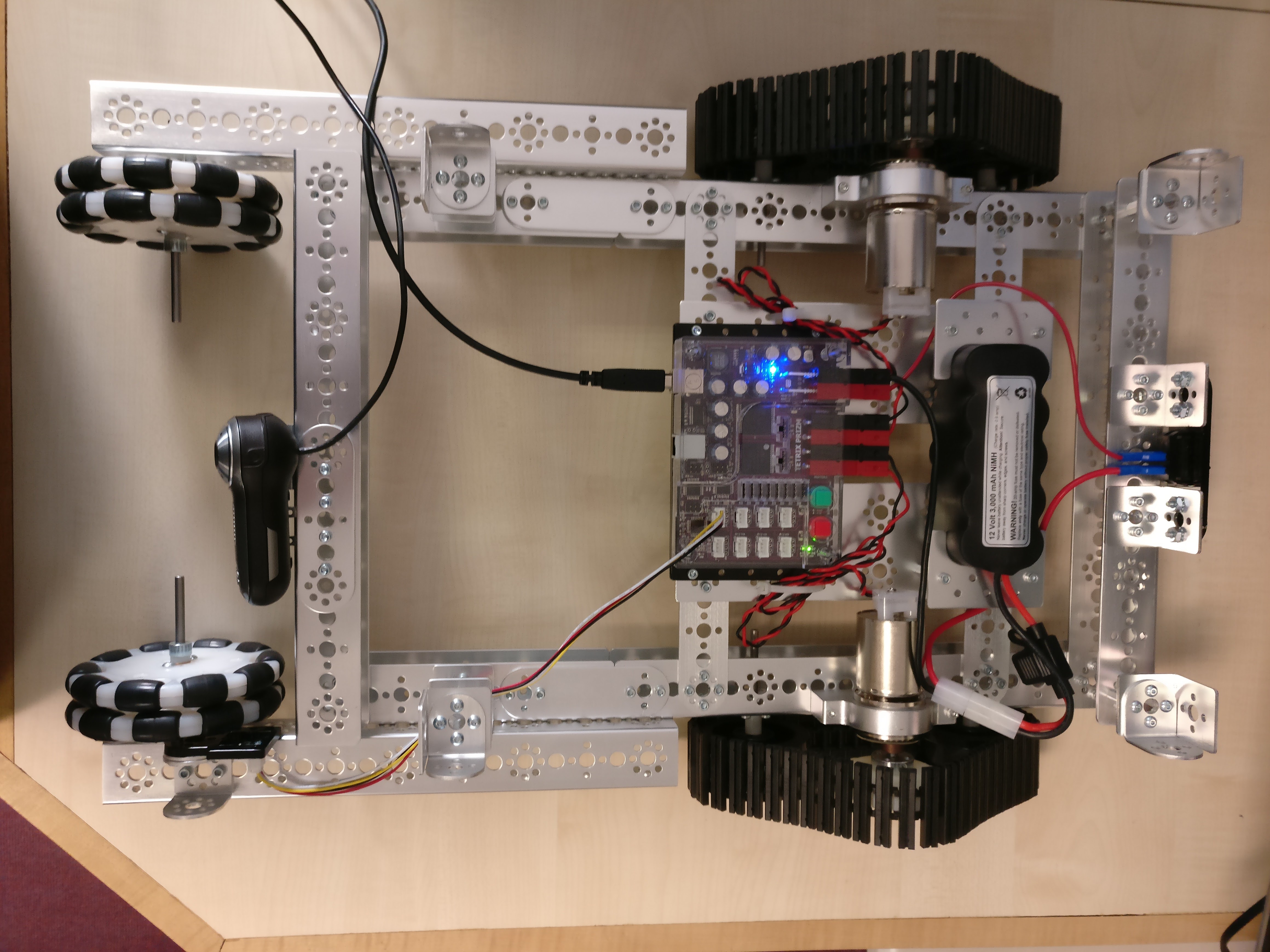

Hardware

Robley is built from Tetrix and carries a laptop that runs the main vision software. The motors are controlled by a Tetrix Prizm controller, which relays commands sent over USB from the laptop to the motors. Robley uses a USB webcam screwed to the front of the robot, angled upwards so that the vanishing point (horizon) is near the bottom of the image. The camera must be angled upwards, or else the software will not work.

During training the robot can be steered using a custom-made Android app, which communicates with the laptop via Bluetooth.

The software has only been tested on a laptop; however, it uses OpenCV's fast FAST feature detector and binary feature descriptors, so it should hopefully be capable of running on less powerful hardware such as a Raspberry Pi 2 or 3. Using Tetrix was probably overkill for this project, as any generic four-wheeled robot kit that can have a camera attached to the front would probably have been enough.
